DeepSeek VL2: Practitioner’s Guide to the MoE Vision-Language Family

Need a vision-language model that reads receipts, parses charts and points at the giraffe at the back of the photo — without paying per-image API fees? DeepSeek VL2 is the open-weight answer most teams reach for after they hit the wall on cost or latency with closed multimodal APIs. Released in December 2024, the DeepSeek VL2 family ships three Mixture-of-Experts (MoE) checkpoints on Hugging Face, with weights you can run on a single GPU at the small end and a workstation rig at the top end. This guide covers what’s inside the model, how the three variants compare, the benchmark numbers worth trusting, where it falls short next to current frontier multimodal models in 2026, and the most pragmatic ways to deploy it today.

What DeepSeek VL2 actually is

DeepSeek VL2 is a series of open-weight vision-language models from DeepSeek-AI built on a Mixture-of-Experts backbone. It significantly improves on its predecessor, DeepSeek-VL, with two key upgrades: a dynamic tiling vision encoder for high-resolution images with different aspect ratios, and DeepSeekMoE language models with Multi-head Latent Attention (MLA), which compresses the Key-Value cache into latent vectors to enable efficient inference and high throughput. The result is a model family that handles document OCR, chart and table parsing, multi-image reasoning, and visual grounding while activating only a fraction of its total parameters per token.

If you’ve used the earlier DeepSeek VL release, VL2 is the same lineage with a sparse backbone and a much stronger OCR pipeline. It is not part of the V4 generation — it pre-dates it — and it remains the only multimodal release DeepSeek has published in this format. For text-only work you should be looking at DeepSeek V4 instead.

Architecture and lineage

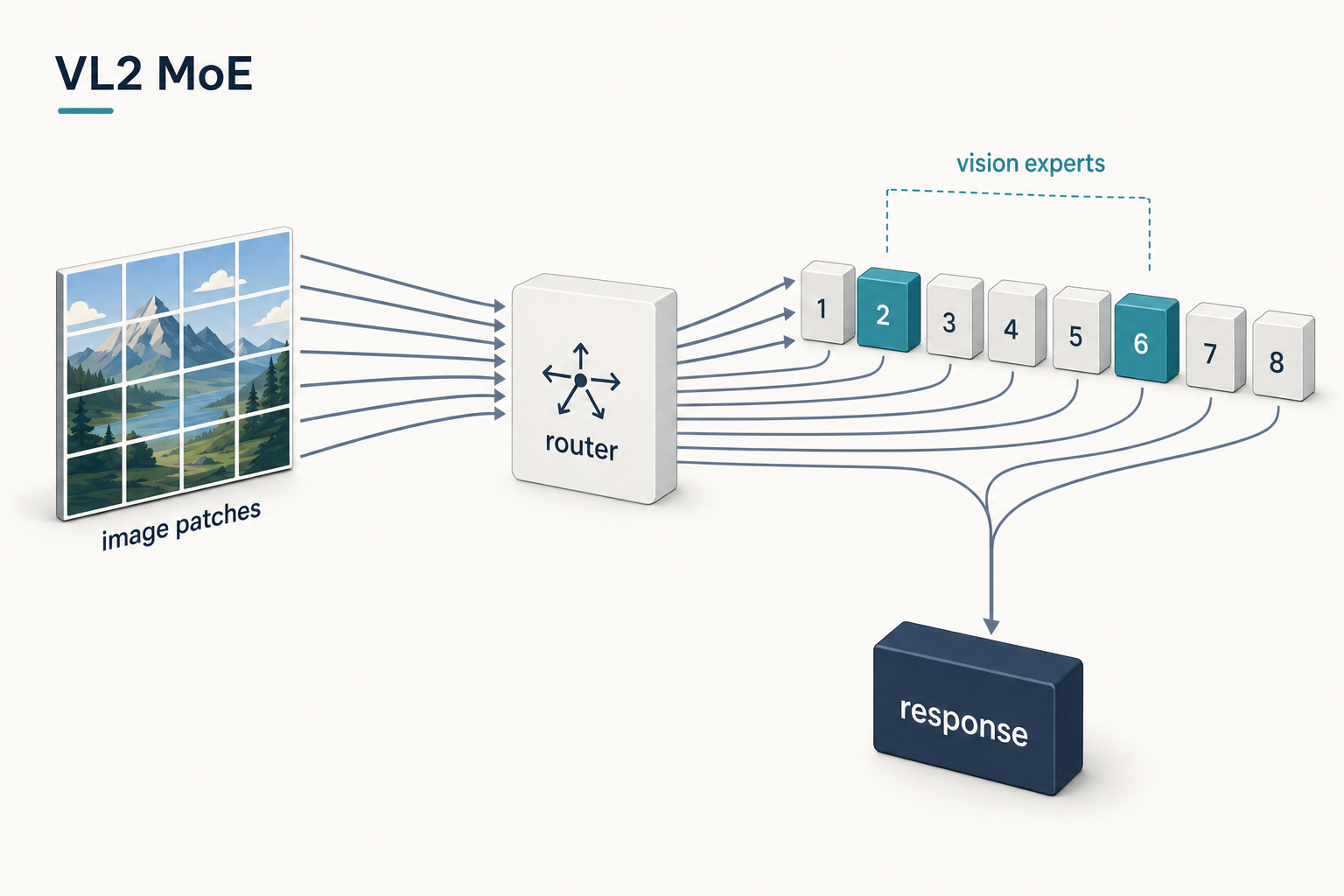

The VL2 stack is deliberately modular. It adopts a LLaVA-style decoder-only structure integrating a vision encoder, a vision-language adaptor, and an MoE language model. The vision encoder uses a single SigLIP-SO400M-384 model that processes images via a dynamic tiling strategy, dividing high-resolution images into local 384×384 tiles to handle diverse aspect ratios while keeping computation in check. A two-layer MLP then compresses and projects the visual tokens into the language model’s embedding space.
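To make the dynamic tiling idea concrete, here is a minimal sketch of how a tile grid could be chosen for an image. The 384-pixel tile size comes from the description above and the cap of 9 local tiles is the default quoted later in this guide. The selection rule itself (keep as much of the original resolution as possible, then minimise padding) is an assumption borrowed from common LLaVA-style preprocessing, and `candidate_grids` and `pick_grid` are hypothetical helper names, not DeepSeek's code.

```python
from itertools import product
from typing import List, Tuple

TILE = 384  # tile side in pixels, as described in the text


def candidate_grids(max_tiles: int = 9) -> List[Tuple[int, int]]:
    """All (cols, rows) grids that use at most max_tiles local tiles."""
    sides = range(1, max_tiles + 1)
    return [(c, r) for c, r in product(sides, repeat=2) if c * r <= max_tiles]


def pick_grid(width: int, height: int, max_tiles: int = 9) -> Tuple[int, int]:
    """Pick the grid that keeps the most resolution, then wastes the least canvas.

    Assumed rule (not confirmed as DeepSeek's implementation): resize the image
    to fit the canvas while preserving aspect ratio, then score the canvas.
    """
    best, best_key = None, None
    for cols, rows in candidate_grids(max_tiles):
        canvas_w, canvas_h = cols * TILE, rows * TILE
        scale = min(canvas_w / width, canvas_h / height)
        resized_area = round(width * scale) * round(height * scale)
        effective = min(resized_area, width * height)  # upscaling adds no detail
        wasted = canvas_w * canvas_h - effective       # padding or empty canvas
        key = (-effective, wasted)
        if best_key is None or key < best_key:
            best, best_key = (cols, rows), key
    return best


if __name__ == "__main__":
    cols, rows = pick_grid(384, 1536)  # a tall, receipt-shaped image
    print(f"grid: {cols} x {rows}, local tiles: {cols * rows}")
```

Under this rule a tall receipt gets a narrow column of tiles and a square image gets a square grid. The model also sees one global thumbnail in addition to the local tiles.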

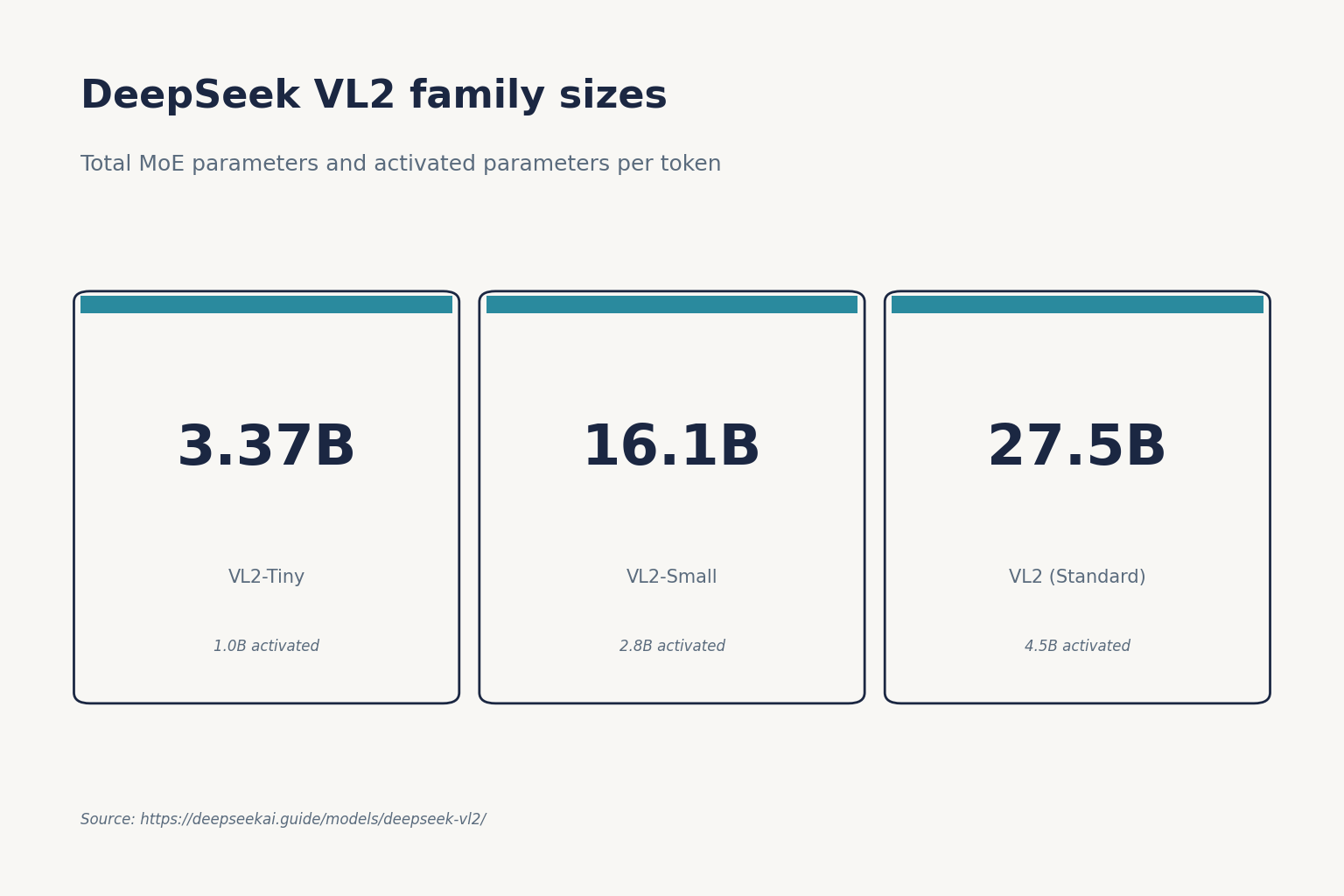

On the language side, all three variants share the DeepSeekMoE design but differ in scale. The model series adopts three MoE variants — 3B, 16B and 27B total parameters — with 0.57B, 2.4B and 4.1B activated parameters respectively in the language backbone. The often-quoted “1.0B / 2.8B / 4.5B activated” figures in the abstract include the vision encoder and adaptor in the count.

Variant breakdown

| Variant | Total params (MoE) | Activated params | Base LLM | Practical GPU floor |
|---|---|---|---|---|
| DeepSeek-VL2-Tiny | 3.37B | 1.0B | DeepSeekMoE-3B | Single GPU < 40 GB |
| DeepSeek-VL2-Small | 16.1B | 2.8B | DeepSeekMoE-16B | 40 GB (with chunked prefill) |
| DeepSeek-VL2 | 27.5B | 4.5B | DeepSeekMoE-27B | 80 GB+ |

Variant sizing and base-LLM mapping are confirmed against DeepSeek’s GitHub README and Hugging Face cards. The activated figures in the table count the whole model (vision encoder, adaptor and language model); the language backbone alone activates 0.57B, 2.4B and 4.1B for Tiny, Small and Standard respectively. vl2-tiny (3.37B total, ~1B activated) runs on a single GPU under 40 GB. The 40 GB figure for vl2-small assumes chunked prefill: without it, you may need 80 GB of GPU memory to run the demo script with deepseek-vl2-small, and more for deepseek-vl2.
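If you want the activation ratios behind those figures, the arithmetic is short. The sketch below uses the total and activated counts from the variant table; `VARIANTS` and `activation_ratio` are illustrative names only.

```python
# (total, activated) parameters in billions, copied from the variant table
VARIANTS = {
    "DeepSeek-VL2-Tiny": (3.37, 1.0),
    "DeepSeek-VL2-Small": (16.1, 2.8),
    "DeepSeek-VL2": (27.5, 4.5),
}


def activation_ratio(total_b: float, activated_b: float) -> float:
    """Fraction of the model's parameters that are active for each token."""
    return activated_b / total_b


if __name__ == "__main__":
    for name, (total_b, activated_b) in VARIANTS.items():
        ratio = activation_ratio(total_b, activated_b)
        print(f"{name}: {ratio:.1%} of parameters active per token")
```

That works out to roughly 30 %, 17 % and 16 %. The ratio describes compute per token, not memory: the GPU floors in the table reflect that the full set of experts still has to be loaded.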

One quirk worth knowing before you push it into a pipeline: to keep tokens manageable, dynamic tiling is applied only when there are two or fewer images per turn. With three or more images, inputs are padded to 384×384 without tiling. Plan your batching accordingly — high-resolution multi-document prompts should be split across turns.
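One way to respect that limit is to group pages before you build prompts. This is a minimal sketch; `split_into_turns` is a hypothetical helper, and the cap of two images per turn is taken from the tiling rule described above.

```python
from typing import List, Sequence

MAX_TILED_IMAGES_PER_TURN = 2  # tiling is only applied at or below this count


def split_into_turns(
    image_paths: Sequence[str],
    per_turn: int = MAX_TILED_IMAGES_PER_TURN,
) -> List[List[str]]:
    """Group images so that every turn stays within the dynamic-tiling limit."""
    if per_turn < 1:
        raise ValueError("per_turn must be at least 1")
    return [
        list(image_paths[i:i + per_turn])
        for i in range(0, len(image_paths), per_turn)
    ]


if __name__ == "__main__":
    pages = ["page1.png", "page2.png", "page3.png", "page4.png", "page5.png"]
    for turn_no, group in enumerate(split_into_turns(pages), start=1):
        print(turn_no, group)
```

Each group can then be sent as its own turn, so every page keeps its high-resolution tiles.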

Benchmarks worth trusting

The numbers below come from the VL2 technical report and DeepSeek-published comparisons. Always read them as “DeepSeek-VL2-Standard (4.5B activated) vs. competitors at their reported sizes” — and remember these were taken at release in December 2024, against the multimodal field as it stood then.

| Benchmark | DeepSeek-VL2 | Reference point | Why it matters |
|---|---|---|---|
| OCRBench | 834 | GPT-4o: 736 | Dense-text reading |
| DocVQA | 93.3 % | GPT-4o: 92.8 % | Document QA |
| ChartQA | 86.0 % | n/a | Chart reasoning |
| MMBench | 83.1 | Qwen2-VL-7B: 85.0 | General multimodal |
| MMStar | 61.3 | GPT-4o: 63.9 | Reasoning |
| TextVQA | 84.2 | Qwen2-VL-7B: 84.3 | Scene-text reading |

On OCRBench, DeepSeek-VL2 scores 834 against GPT-4o’s 736, and on DocVQA it edges past GPT-4o (93.3 % vs. 92.8 %); it also reaches 86.0 % on ChartQA. On general multimodal benchmarks it trails rather than leads. It lands slightly below larger dense models such as Qwen2-VL-7B (8.3B) and InternVL2-8B (8.0B) on MMBench (83.1 vs. 85.0 for Qwen2-VL-7B) and MME (2,253 vs. 2,327), and approaches GPT-4o’s MMStar score (61.3 vs. 63.9), all with a much smaller activated parameter count.

Where DeepSeek VL2 specifically wins

- Document OCR and dense text. The OCRBench and DocVQA numbers above are the reason most teams pick it. If your workload is invoices, contracts, lab forms, or scanned PDFs, this is the open-weight default.

- Visual grounding. The architecture supports visual grounding where the model localises objects within images by category, description, or even abstract concept; a special <|grounding|> token returns grounded responses with object locations. Useful for embodied AI and UI-agent work.

- High-resolution flexibility. VL2 uses 384×384 tiling plus a global thumbnail, with up to 9 local tiles by default and up to 18 in InfoVQA evaluation for extreme aspect ratios. That handles tall receipts and wide spreadsheets without a custom preprocessing layer.

- Activation efficiency. Sparse MoE means a 27.5B-total model runs at the inference cost of a ~4–5B dense model.

- Commercial use allowed. The code repository is licensed under MIT; the use of DeepSeek-VL2 models is subject to the DeepSeek Model License, and the series supports commercial use.

Where it falls short

Direct, no spin: VL2 is a December-2024 model. The multimodal field has moved. If you compare it against current 2026 frontier multimodal systems on reasoning-heavy benchmarks, it loses. Specifically:

- No first-party API. DeepSeek’s hosted API exposes the V4 generation (deepseek-v4-pro and deepseek-v4-flash), not VL2. Multimodal calls are not part of the official API’s current product surface; VL2 is open weights only.

- No video. InternVL3 and the latest closed VLMs handle native video; VL2 is single-frame plus multi-image, no temporal modelling.

- Reasoning ceiling. InternVL3 scores 72.2 on MMMU vs GPT-4o’s 70.7, with a wider gap on MathVista (79.0 vs 63.8) and 79.5 on MLVU for video. VL2 was not designed to compete on multimodal step-by-step reasoning.

- Hardware floor. DeepSeek-VL2-Small needs 40 GB. Tiny is the only variant that runs comfortably on consumer hardware.

- Tiling limit on multi-image. The two-image dynamic tiling cap (above) is a real ceiling for batch document workflows.

How to access DeepSeek VL2

There is no DeepSeek VL2 endpoint on the official chat-completions API, so you either self-host the weights or use a third-party inference provider that hosts them. Three practical self-hosting paths:

- Hugging Face Transformers. Pull deepseek-ai/deepseek-vl2-tiny, -small, or deepseek-vl2 and load with trust_remote_code=True. Suitable for prototyping and small-batch jobs.

- Optimised serving stacks. For production environments, consider optimised deployment solutions such as vllm, sglang, or lmdeploy. Throughput and memory both improve substantially.

- Cloud GPU rental. The Small variant fits on a single A100 40 GB with chunked prefill; the Standard variant wants 80 GB. See our DeepSeek hardware calculator to size it before you book.

Minimal Transformers load (Python)

The snippet below loads VL2-Tiny in bfloat16. DeepSeek suggests using a temperature T ≤ 0.7 when sampling; a larger temperature decreases generation quality.

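```python
import torch
from transformers import AutoModelForCausalLM

# Requires the deepseek_vl2 package from DeepSeek's GitHub repository.
from deepseek_vl2.models import DeepseekVLV2Processor, DeepseekVLV2ForCausalLM

model_path = "deepseek-ai/deepseek-vl2-tiny"

# The processor bundles the tokenizer with the image preprocessing.
processor = DeepseekVLV2Processor.from_pretrained(model_path)
tokenizer = processor.tokenizer

# Load the weights, cast to bfloat16, move to the GPU, switch to inference mode.
model: DeepseekVLV2ForCausalLM = AutoModelForCausalLM.from_pretrained(model_path, trust_remote_code=True)
model = model.to(torch.bfloat16).cuda().eval()

# pass `conversation` (with role + image refs) through processor, then model.generate()
```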
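For the `conversation` object mentioned in that last comment, here is a hedged sketch of the shape it usually takes. The role strings, the `<image>` placeholder and the `images` key are assumptions based on the usage pattern in DeepSeek's repository; check them against the README for the version you install. `build_conversation` and `GENERATION_KWARGS` are hypothetical names, and the sampling values are only examples that respect the T ≤ 0.7 guidance.

```python
from typing import Any, Dict, List

# Example sampling settings; temperature stays at or below the suggested 0.7.
GENERATION_KWARGS: Dict[str, Any] = {
    "do_sample": True,
    "temperature": 0.4,
    "top_p": 0.9,
    "max_new_tokens": 512,
}


def build_conversation(prompt: str, image_paths: List[str]) -> List[Dict[str, Any]]:
    """Build a single-turn conversation with one <image> placeholder per image.

    Assumed format: role tokens and the "images" key are not confirmed here.
    """
    placeholders = "\n".join("<image>" for _ in image_paths)
    content = f"{placeholders}\n{prompt}" if image_paths else prompt
    return [
        {"role": "<|User|>", "content": content, "images": list(image_paths)},
        {"role": "<|Assistant|>", "content": ""},  # left empty for the model to fill
    ]


if __name__ == "__main__":
    for message in build_conversation("Read the invoice total.", ["invoice.png"]):
        print(message)
```

The empty assistant turn marks where generation starts. Keeping the builder separate from the model call makes the prompt format easy to unit-test without a GPU.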

How VL2 fits next to V4 (the text API you’ll probably also need)

Most teams that deploy VL2 also need a text-only model behind the same product surface — to summarise extracted documents, answer follow-up questions, or run agent loops. That side of the workload now belongs to V4.

Worth stating cleanly because it confuses people: DeepSeek V4 is a family of two MoE tiers, both open-weight under MIT. deepseek-v4-pro is the frontier tier (1.6T total / 49B active); deepseek-v4-flash is the cost-efficient tier (284B total / 13B active). Both ship a 1,000,000-token default context with output up to 384,000 tokens. Thinking mode is a request parameter on either model — set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} for a thinking response, or reasoning_effort="max" for max-effort reasoning. The API returns reasoning_content alongside the final content when thinking is enabled.

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. The API is stateless — clients must resend conversation history with every request, in contrast to the web chat which keeps session history. Legacy IDs deepseek-chat and deepseek-reasoner still route to deepseek-v4-flash until they retire on 2026-07-24 at 15:59 UTC; migration is a one-line model= swap.

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this VL2 OCR output: ..."}],
)
print(resp.choices[0].message.content)
```
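Because the API is stateless, follow-up questions only work if you resend the earlier turns yourself. The sketch below shows one way to keep that history on the client side. `ChatSession` is a hypothetical wrapper, and the `send` callable stands in for whatever function actually calls the chat-completions endpoint, so the example runs without network access.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ChatSession:
    """Keeps the running message list and resends all of it on every call."""

    def __init__(self, send: Callable[[List[Message]], str], system_prompt: str = ""):
        self._send = send  # hypothetical: wraps the real API call
        self.messages: List[Message] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))  # full history, every time
        self.messages.append({"role": "assistant", "content": reply})
        return reply


if __name__ == "__main__":
    def echo_send(messages: List[Message]) -> str:
        # Stand-in for the network call; reports how much history it received.
        return f"received {len(messages)} messages"

    session = ChatSession(echo_send, system_prompt="You summarise OCR output.")
    print(session.ask("Summarise page one."))
    print(session.ask("Now list the line items."))
```

In a real pipeline, `send` would pass `messages` to `client.chat.completions.create(...)` and return the reply text. History grows with every turn, so long sessions cost more input tokens per call.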

For the full developer surface — temperature presets, JSON mode, tool calling, streaming, FIM, context caching — start with the DeepSeek API documentation.

Cost snapshot — V4-Flash for the text leg of a VL2 pipeline

If you pipe VL2 OCR output into deepseek-v4-flash for downstream summarisation, the V4-Flash rates apply: $0.0028 per 1M tokens for cached input, $0.14 per 1M for cache-miss input, $0.28 per 1M for output. Worked example for one million calls with a 2,000-token cached system prompt, 200-token user message, and 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Pricing as of April 2026; verify on the DeepSeek API pricing page before committing. V4-Pro rates are roughly an order of magnitude higher and rarely justified for OCR cleanup.
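To rerun the worked example with your own volumes, a small calculator is enough. This sketch hard-codes the V4-Flash rates quoted above; `RATES_PER_MILLION` and `pipeline_cost` are illustrative names, and the rates should be replaced with whatever the pricing page shows when you check it.

```python
from decimal import Decimal
from typing import Dict

# USD per 1M tokens, as quoted in this section (verify before relying on them)
RATES_PER_MILLION: Dict[str, Decimal] = {
    "cached_input": Decimal("0.0028"),
    "uncached_input": Decimal("0.14"),
    "output": Decimal("0.28"),
}


def pipeline_cost(
    calls: int,
    cached_tokens: int,
    uncached_tokens: int,
    output_tokens: int,
) -> Dict[str, Decimal]:
    """Cost breakdown in USD for `calls` requests with fixed per-call token counts."""
    per_call = {
        "cached_input": cached_tokens,
        "uncached_input": uncached_tokens,
        "output": output_tokens,
    }
    million = Decimal(1_000_000)
    breakdown = {
        kind: Decimal(calls) * Decimal(tokens) / million * RATES_PER_MILLION[kind]
        for kind, tokens in per_call.items()
    }
    breakdown["total"] = sum(breakdown.values(), Decimal(0))
    return breakdown


if __name__ == "__main__":
    costs = pipeline_cost(1_000_000, cached_tokens=2_000, uncached_tokens=200, output_tokens=300)
    for kind, usd in costs.items():
        print(f"{kind}: ${usd:.2f}")
```

Using `Decimal` avoids floating-point drift in the totals. With the inputs from the worked example it reproduces the $117.60 figure.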

Best use cases

- Document automation and back-office OCR — invoices, claims, KYC forms. See DeepSeek for business.

- Data extraction at scale — tables, charts, scanned reports. Pair with DeepSeek for data analysis.

- Developer tooling — UI screenshot understanding, GUI agents, visual grounding for web automation.

- Education and accessibility — describing images, parsing handwritten or printed material.

Comparable alternatives

If VL2’s reasoning ceiling or hardware floor is a problem, the honest alternatives in 2026 are InternVL3 (better reasoning and video), Qwen2-VL family (broader ecosystem support), and the closed multimodal endpoints from OpenAI, Anthropic and Google. For text-only DeepSeek work paired with VL2, see DeepSeek vs Claude and DeepSeek vs ChatGPT. The full list of open-weight options sits in the open-source AI like DeepSeek roundup, and you can browse the wider DeepSeek models hub for siblings like the text-only V4 tiers.

Verdict

DeepSeek VL2 remains the strongest open-weight choice for OCR-heavy and document-understanding workloads when you need control, predictable cost, and commercial-use licensing. It is not the model to pick for video, deep multimodal reasoning, or zero-ops deployment: it is a self-hosted model with a December-2024 ceiling. For document-shaped multimodal work in 2026, though, it still earns its slot in production.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

DeepSeek VL2 FAQ

Is DeepSeek VL2 free to use commercially?

Yes, with conditions. The code is MIT-licensed, and the model weights are released under the DeepSeek Model License, which permits commercial use. That is different from the V4-generation text models, whose weights are released under MIT directly. If licensing is decision-critical, read the model card on Hugging Face and confirm against your legal team. For a broader licensing primer see is DeepSeek open source.

How does DeepSeek VL2 compare with GPT-4o on documents?

On OCR-leaning benchmarks DeepSeek VL2 wins. It posted 834 on OCRBench against GPT-4o’s 736, and 93.3% on DocVQA against GPT-4o’s 92.8% in DeepSeek’s reported numbers. On general multimodal reasoning (MMStar, MMMU) GPT-4o still edges ahead. Pick VL2 for dense-text and document workflows, GPT-4o or current frontier APIs for free-form reasoning. More context: DeepSeek V3 vs GPT-4o.

What hardware do I need to run DeepSeek VL2?

The Tiny variant (1.0B activated) runs on a single GPU under 40 GB. The Small variant (2.8B activated, 2.4B of that in the language backbone) needs 40 GB with chunked prefill enabled, or 80 GB without. The full Standard variant wants 80 GB or more. For self-hosted deployments, vllm, sglang or lmdeploy are the production-grade serving stacks. See install DeepSeek locally for setup steps.

Can I call DeepSeek VL2 through the official DeepSeek API?

No. The official DeepSeek API serves the V4 generation — deepseek-v4-pro and deepseek-v4-flash — at POST /chat/completions, plus legacy IDs that retire on 2026-07-24. VL2 is open weights only and must be self-hosted, or accessed through a third-party inference provider that hosts it. Start with the DeepSeek API documentation for the text models.

What is visual grounding in DeepSeek VL2?

Visual grounding is the ability to localise objects inside an image based on a textual description, category, or abstract concept. VL2 uses a special <|grounding|> token to return responses that include object coordinates, which makes it useful for UI agents, robotics simulation, and accessibility tooling. The capability is particularly notable because it works on natural-language descriptions, not just predefined object classes. Browse more applications in DeepSeek for developers.
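If you want to turn grounded responses into pixel boxes, a parser along these lines is a reasonable starting point. The output format is an assumption: it presumes the model wraps labels in <|ref|>…<|/ref|> and boxes in <|det|>[[x1, y1, x2, y2]]<|/det|>, with coordinates normalised to a 0–999 grid. Verify both against real model output before relying on it. `parse_grounding` is a hypothetical helper.

```python
import json
import re
from typing import List, Tuple

Box = Tuple[str, int, int, int, int]  # (label, x1, y1, x2, y2) in pixels

# Assumed tag layout; adjust if your model output differs.
_PATTERN = re.compile(
    r"<\|ref\|>(.*?)<\|/ref\|><\|det\|>(.*?)<\|/det\|>", re.DOTALL
)


def parse_grounding(text: str, width: int, height: int, grid: int = 999) -> List[Box]:
    """Extract labelled boxes and rescale them from the assumed grid to pixels."""
    boxes: List[Box] = []
    for label, raw in _PATTERN.findall(text):
        for x1, y1, x2, y2 in json.loads(raw):
            boxes.append((
                label.strip(),
                round(x1 / grid * width),
                round(y1 / grid * height),
                round(x2 / grid * width),
                round(y2 / grid * height),
            ))
    return boxes


if __name__ == "__main__":
    sample = "<|ref|>the giraffe<|/ref|><|det|>[[0, 0, 999, 999]]<|/det|>"
    print(parse_grounding(sample, width=640, height=480))
```

Passing the original image size keeps the rescaling explicit, which matters when the image was tiled or padded before it reached the model.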

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

- Model card: DeepSeek-VL2 model card hub. VL2 Standard weights, dynamic tiling settings. Last checked: April 30, 2026.

- Model card: DeepSeek-VL2-Tiny model card. Tiny variant for single-GPU inference. Last checked: April 30, 2026.

- Model card: DeepSeek-VL2-Small model card. Small variant requiring 40GB GPU with chunked prefill. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-VL2 technical report. OCRBench 834, DocVQA 93.3, ChartQA 86.0 figures. Last checked: April 30, 2026.

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.