A Practitioner’s Guide to the DeepSeek API Documentation (V4)

If you have just been handed a DeepSeek API key and told to ship something by Friday, the first question is usually the same: where in the DeepSeek API documentation do I actually start, and what has changed since V3? The honest answer as of April 24, 2026 is that a lot has changed. V4 reshaped the model line-up into two tiers, turned thinking mode into a request parameter, and kept the OpenAI-compatible wire format you already know. This guide walks through the reference you need on day one — the endpoint, the parameters, pricing math for both tiers, and the migration window for legacy IDs — with working code you can paste into a shell.

What the DeepSeek API actually is

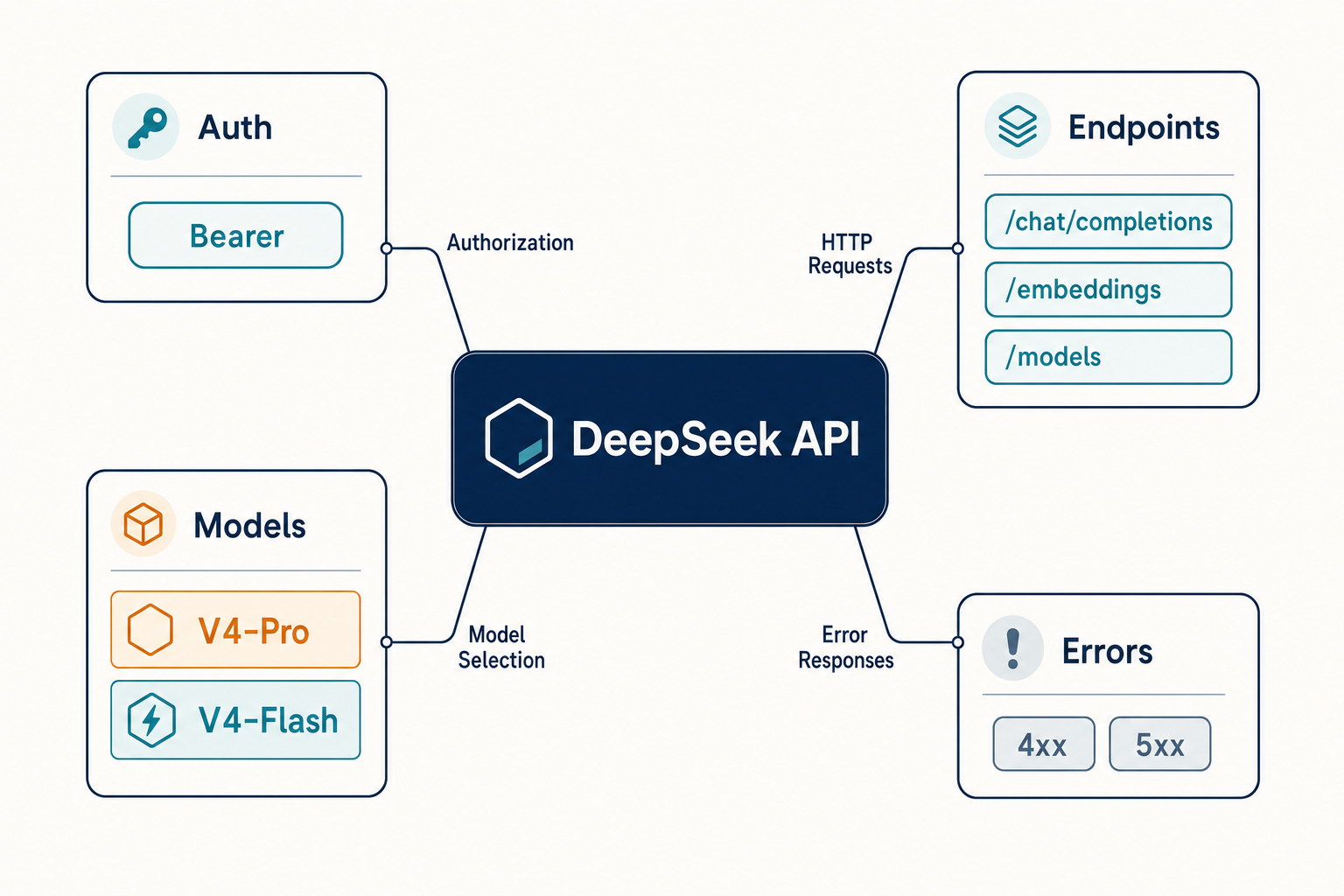

The DeepSeek API is an OpenAI-compatible HTTP service that exposes DeepSeek’s open-weight Mixture-of-Experts models through a single chat-completions endpoint. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com, and authentication is a standard bearer token. If your codebase already talks to OpenAI, you change two lines — base_url and api_key — and you are talking to DeepSeek. DeepSeek also ships an Anthropic-compatible surface against the same base URL for teams with existing Claude-shaped code.

Two points that trip up newcomers on day one:

- The API is stateless. The DeepSeek /chat/completions API is a “stateless” API, meaning the server does not record the context of the user’s requests, so the user must concatenate all previous conversation history and pass it to the chat API with each request. That is the opposite of how the web chat at chat.deepseek.com behaves — the web/app surface keeps session history for you; the API does not.

- V4 is a family, not a single model. You pick a tier with the model field and you pick reasoning effort with a separate parameter. There is no longer a dedicated “reasoner” model ID.
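Because the API is stateless, the client has to carry the conversation itself. The sketch below shows one way to do that; the `Conversation` class and its `send` hook are hypothetical names invented for illustration, and `send` stands in for whatever function actually posts the message list to /chat/completions and returns the reply text.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps the full message history on the client, since the API keeps none."""

    def __init__(self, send: Callable[[List[Message]], str], system_prompt: str = "") -> None:
        # `send` is a hypothetical hook: it receives the whole history and
        # returns the assistant's reply text.
        self._send = send
        self.messages: List[Message] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text: str) -> str:
        # Append the new user turn, then resend everything accumulated so far.
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))
        # Store the assistant turn so the next request carries it as context.
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

Every call to `ask` resends the entire history, so input token counts grow with each turn; that growth is what the cache-hit pricing discussed later is meant to soften.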

Current model IDs (as of April 24, 2026)

V4 shipped today as a preview, and it ships in two sizes. DeepSeek-V4-Pro has 1.6T parameters (49B activated) and DeepSeek-V4-Flash has 284B parameters (13B activated) — both supporting a context length of one million tokens. Both are open-weight MoE checkpoints on Hugging Face.

| Model ID | Total / Active params | Context | Best for |
|---|---|---|---|
| deepseek-v4-pro | 1.6T / 49B | 1,000,000 tokens | Frontier coding, long-horizon agents |
| deepseek-v4-flash | 284B / 13B | 1,000,000 tokens | Default chat, high-volume workloads |
| deepseek-chat (legacy) | routes to V4-Flash | 1,000,000 tokens | Backward compatibility only |
| deepseek-reasoner (legacy) | routes to V4-Flash thinking | 1,000,000 tokens | Backward compatibility only |

The legacy IDs still work, but they are on a clock. For compatibility, they correspond to the non-thinking mode and thinking mode of deepseek-v4-flash, respectively. Both retire at 2026-07-24 15:59 UTC. Migrating is a one-line change: the base_url does not move, you only update model. If you maintain an older integration, see our notes on DeepSeek OpenAI SDK compatibility before the cut-off.
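If you have many call sites to migrate, the mapping described above can be applied mechanically. This is a minimal sketch, assuming you build raw JSON request bodies with the thinking flag at the top level (as in a curl call); `LEGACY_MODELS` and `migrate_request` are names invented for this example.

```python
from typing import Any, Dict

# Mapping taken from the text above: both legacy IDs correspond to V4-Flash,
# differing only in whether thinking is on.
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate_request(body: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of a request body with a legacy model ID replaced."""
    migrated = dict(body)
    target = LEGACY_MODELS.get(body.get("model"))
    if target is None:
        return migrated  # already a V4 ID, or one this sketch does not know
    model, thinking = target
    migrated["model"] = model
    # Keep an explicit thinking setting if the caller already sent one.
    migrated.setdefault("thinking", {"type": thinking})
    return migrated
```

Setting the thinking flag explicitly keeps behaviour the same as before the switch, because a bare deepseek-v4-flash request would otherwise fall back to the V4 default.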

Output length also scales up in V4. Maximum output is 384,000 tokens, and thinking-max mode explicitly needs headroom — for the Think Max reasoning mode, DeepSeek recommends setting the context window to at least 384K tokens.

Quickstart: a working request in curl and Python

The simplest thing that works is a non-streaming call to V4-Flash. Thinking is enabled by default on V4; to opt into the cheaper non-thinking path, pass extra_body={"thinking": {"type": "disabled"}} from Python or "thinking": {"type": "disabled"} in the JSON body. Here it is in curl:

    curl https://api.deepseek.com/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $DEEPSEEK_API_KEY" \
      -d '{
        "model": "deepseek-v4-flash",
        "messages": [
          {"role": "system", "content": "You are a helpful assistant."},
          {"role": "user", "content": "Summarise the DeepSeek V4 release in one sentence."}
        ],
        "thinking": {"type": "disabled"}
      }'

The same call in Python using the OpenAI SDK. Only base_url and api_key differ from an OpenAI integration:

    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_DEEPSEEK_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[
            {"role": "system", "content": "You are a helpful assistant."},
            {"role": "user", "content": "Summarise the DeepSeek V4 release in one sentence."},
        ],
        # V4 enables thinking by default; opt out for the cheapest path
        extra_body={"thinking": {"type": "disabled"}},
    )

    print(resp.choices[0].message.content)

If you have not created a key yet, start with our walk-through on how to get a DeepSeek API key, then use this snippet as your smoke test. For more variations — streaming, tool calls, retries — see our collection of DeepSeek API code examples.

Thinking mode: a parameter, not a model

V4 collapses the old “chat vs reasoner” split into a single request parameter. Both V4 tiers accept three reasoning-effort settings:

- Non-thinking. Pass extra_body={"thinking": {"type": "disabled"}} to opt out of the V4 default. No reasoning trace. Fastest and cheapest per request. Supports FIM (Beta).

- Thinking (high), the V4 default. reasoning_effort="high" with thinking enabled is what V4 does if you send neither flag. Pass it explicitly when you want to be clear; the API returns reasoning_content alongside the final content.

- Thinking-max. Set reasoning_effort="max". Maximum effort; requires the longer output window mentioned above.

Minimal Python for V4-Pro in thinking mode:

    # Reuses the client created in the quickstart above.
    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[{"role": "user", "content": "Plan a safe schema migration for a 2 TB Postgres table."}],
        reasoning_effort="high",
        extra_body={"thinking": {"type": "enabled"}},
    )

    print(resp.choices[0].message.reasoning_content)  # reasoning content emitted before the final answer
    print(resp.choices[0].message.content)            # the final answer

The shape of that response — a separate reasoning_content field plus the normal content — is the same shape the legacy deepseek-reasoner ID produced, so existing parsing code keeps working. For a deeper treatment of when thinking mode earns its extra tokens, see our DeepSeek API review.
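To keep the three effort settings in one place, a small helper can build the keyword arguments for the SDK call. This is a sketch under stated assumptions: the mode labels "off", "high" and "max" and the function name `thinking_kwargs` are invented here, and the parameter shapes simply mirror the ones described in this section.

```python
from typing import Any, Dict


def thinking_kwargs(mode: str) -> Dict[str, Any]:
    """Build keyword arguments for one of the three effort settings.

    `mode` is a label invented for this sketch: "off", "high" or "max".
    """
    if mode == "off":
        # Non-thinking: opt out of the V4 default.
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    if mode in ("high", "max"):
        return {
            "reasoning_effort": mode,
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    raise ValueError(f"unknown thinking mode: {mode!r}")
```

The result can be splatted into the call, for example `client.chat.completions.create(model="deepseek-v4-pro", messages=msgs, **thinking_kwargs("max"))`. Rejecting unknown labels early is a deliberate choice so that a typo does not silently fall back to the default effort.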

Core request parameters worth knowing

The reference page for /chat/completions is long; in practice you will touch a handful of fields. DeepSeek publishes temperature guidance directly in its docs, which is worth matching rather than reinventing.

| Parameter | What it does | Typical value |
|---|---|---|
| temperature | Randomness of sampling | 0.0 code/math · 1.0 data analysis · 1.3 chat/translation · 1.5 creative |
| top_p | Nucleus sampling cut-off | 1.0 (DeepSeek’s recommended default) |
| max_tokens | Cap on output length | Up to 384,000 on V4; set high for JSON mode |
| reasoning_effort | V4-only; "high" or "max" | Default "high"; pass extra_body={"thinking": {"type": "disabled"}} for non-thinking |
| response_format | Enables JSON mode | {"type": "json_object"} |
| tools | Function/tool declarations | OpenAI-compatible schema |
| stream | SSE streaming | true for UIs |

For local deployment, DeepSeek recommends setting the sampling parameters to temperature = 1.0, top_p = 1.0. For the hosted API you can deviate from that for specific tasks (code at 0.0, creative at 1.5), but do not copy OpenAI or Claude defaults into DeepSeek without checking.
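One way to avoid scattering magic numbers through a codebase is to look the temperature up by task. The task labels and the `sampling_params` helper below are invented for this sketch; the values are the ones listed in the table above, and falling back to 1.0 for unknown tasks is an assumption, not documented behaviour.

```python
from typing import Dict

# Values from the parameter table above; the task labels are hypothetical.
TEMPERATURE_BY_TASK: Dict[str, float] = {
    "code": 0.0,
    "math": 0.0,
    "data_analysis": 1.0,
    "chat": 1.3,
    "translation": 1.3,
    "creative": 1.5,
}


def sampling_params(task: str) -> Dict[str, float]:
    """Return temperature and top_p for a task label."""
    # Assumption: unknown tasks fall back to temperature 1.0.
    return {"temperature": TEMPERATURE_BY_TASK.get(task, 1.0), "top_p": 1.0}
```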

JSON mode: designed, not guaranteed

JSON mode is one of the most useful features for agent work and also the one most often misused. Three rules:

- Include the word “json” in the prompt plus a small example schema.

- Set max_tokens high enough that the output cannot be truncated mid-object.

- Handle the occasional empty content response on the client side.

JSON mode is designed to return valid JSON, but validity is not guaranteed. If you need the details, we cover validation patterns in DeepSeek API JSON mode.
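The client-side handling can be as small as the sketch below. It assumes a hypothetical zero-argument `call` that performs one JSON-mode request and returns the raw content string; both function names are invented for illustration.

```python
import json
from typing import Any, Callable, Optional


def parse_json_reply(content: Optional[str]) -> Optional[Any]:
    """Return the parsed value, or None if the reply is empty or not valid JSON."""
    if not content or not content.strip():
        return None  # the occasional empty content response
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None  # e.g. output truncated mid-object


def request_json(call: Callable[[], Optional[str]], max_attempts: int = 3) -> Any:
    """Call the model up to max_attempts times until a reply parses."""
    for _ in range(max_attempts):
        parsed = parse_json_reply(call())
        # A literal JSON null is also treated as a failure here, which is fine
        # for json_object mode where an object is expected.
        if parsed is not None:
            return parsed
    raise ValueError(f"no valid JSON after {max_attempts} attempts")
```

Bounding the attempts matters because each retry is billed like any other call.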

Beta features

DeepSeek API supports FIM (Fill-In-the-Middle) Completion, compatible with OpenAI’s FIM Completion API. It lets users provide custom prefixes and suffixes for the model to complete, is commonly used for story completion and code completion, and is charged the same as the Chat Completion API. FIM runs in non-thinking mode only, and you must switch the base URL to https://api.deepseek.com/beta. Chat Prefix Completion requires the role of the last message to be assistant with the prefix parameter set to True, and also needs base_url="https://api.deepseek.com/beta" to enable the Beta feature.
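As an illustration of the Chat Prefix Completion shape described above, the sketch below only builds the messages list; it makes no network call. The helper name `prefix_completion_messages` is invented for this example, and the exact request options beyond the prefix flag should be checked against the official reference.

```python
from typing import Any, Dict, List

# Beta features need this base URL instead of the default one.
BETA_BASE_URL = "https://api.deepseek.com/beta"


def prefix_completion_messages(user_prompt: str, assistant_prefix: str) -> List[Dict[str, Any]]:
    """Build messages whose last entry is an assistant turn flagged as a prefix."""
    return [
        {"role": "user", "content": user_prompt},
        # The model is asked to continue from this partial assistant message.
        {"role": "assistant", "content": assistant_prefix, "prefix": True},
    ]
```

The returned list would be passed as messages to a client constructed with base_url=BETA_BASE_URL.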

Pricing and a worked cost calculation

DeepSeek charges separately for cache-hit input tokens, cache-miss input tokens, and output tokens. The rate card differs sharply between tiers. As of April 2026, per 1M tokens:

| Tier | Cache hit (input) | Cache miss (input) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro (75% promo through 2026-05-31) | $0.003625 (list $0.0145) | $0.435 (list $1.74) | $0.87 (list $3.48) |

Every realistic cost estimate enumerates all three buckets. Here is a worked example for deepseek-v4-flash: 1,000,000 calls, each with a 2,000-token system prompt (cached across calls), a 200-token user message (uncached on arrival), and a 300-token response.

    Cached input   : 2,000 × 1,000,000 = 2,000,000,000 tok × $0.0028/M = $  5.60
    Uncached input :   200 × 1,000,000 =   200,000,000 tok × $0.14/M   = $ 28.00
    Output         :   300 × 1,000,000 =   300,000,000 tok × $0.28/M   = $ 84.00
                                                                         -------
    Total                                                                $117.60

The same workload on deepseek-v4-pro at its rate card:

    Cached input   : 2,000,000,000 tok × $0.003625/M = $  7.25  (promo; list $29.00)
    Uncached input :   200,000,000 tok × $0.435/M    = $ 87.00  (promo; list $348.00)
    Output         :   300,000,000 tok × $0.87/M     = $261.00  (promo; list $1,044.00)
                                                       -------
    Total                                              $355.25  (V4-Pro 75% promo through 2026-05-31; list $1,421.00)

At list rates V4-Pro is roughly twelve times the spend of V4-Flash on that workload ($1,421.00 vs $117.60), and about three times during the 75% V4-Pro promo through 2026-05-31. Total input tokens equal prompt_cache_hit_tokens + prompt_cache_miss_tokens, both of which are reported back in the usage field on every response, so you can observe the cache-hit ratio in production rather than guess at it. Off-peak discounts ended on 2025-09-05 and have not returned. For a calculator you can drop your own numbers into, try our DeepSeek pricing calculator, and for the full rate-card context see DeepSeek API pricing.
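The three-bucket arithmetic above is easy to wrap in a function. This is a minimal sketch using the April 2026 rates quoted in this section; the rate-table keys ending in "-promo" and "-list" are labels invented here to distinguish the two V4-Pro price points, not model IDs.

```python
from typing import Dict

# USD per 1M tokens, copied from the rate table above (April 2026).
RATES: Dict[str, Dict[str, float]] = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14, "output": 0.28},
    "deepseek-v4-pro-promo": {"hit": 0.003625, "miss": 0.435, "output": 0.87},
    "deepseek-v4-pro-list": {"hit": 0.0145, "miss": 1.74, "output": 3.48},
}


def workload_cost(rate_key: str, calls: int, cached_in: int, uncached_in: int, out_tokens: int) -> float:
    """Total USD for `calls` identical requests, rounded to cents."""
    rate = RATES[rate_key]
    # Token totals per bucket, expressed in millions of tokens.
    hit_m = cached_in * calls / 1_000_000
    miss_m = uncached_in * calls / 1_000_000
    out_m = out_tokens * calls / 1_000_000
    total = hit_m * rate["hit"] + miss_m * rate["miss"] + out_m * rate["output"]
    return round(total, 2)
```

Calling `workload_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)` reproduces the worked example; swapping in your own token counts and an observed cache-hit split gives a first-order estimate for other workloads.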

Rate limits and quotas

DeepSeek does not publish per-minute token ceilings the way some providers do. The platform holds requests open while inference is scheduled rather than rejecting them with 429s in most cases, which means your retry logic needs explicit timeouts rather than raw exponential backoff. The useful operational signals are HTTP status codes (402 for insufficient balance, 401 for a bad key, 429 when genuine throttling happens) and two lightweight endpoints, GET /models (lists the available models) and GET /user/balance (reports the remaining balance), which are worth wiring into your deploy sanity checks. We keep a current write-up of throughput behaviour at DeepSeek API rate limits.
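A bounded retry loop keyed on those status codes might look like the sketch below. It assumes a hypothetical `send` callable that performs one request with its own client-side timeout and returns a (status, body) pair; the action labels and function names are invented here, and treating 429 as retryable is an assumption rather than documented guidance.

```python
import time
from typing import Callable, Tuple


def classify_status(status: int) -> str:
    """Map an HTTP status code to a coarse action label."""
    if 200 <= status < 300:
        return "ok"
    if status == 401:
        return "fix_key"   # bad key: retrying will not help
    if status == 402:
        return "top_up"    # insufficient balance: not retryable until topped up
    if status == 429 or 500 <= status < 600:
        return "retry"     # assumption: throttling and server errors are transient
    return "fail"


def call_with_retry(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Call `send` until it returns a non-retryable status or attempts run out."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        status, body = send()
        if classify_status(status) != "retry":
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)  # 1 s, 2 s, 4 s, ...
    return status, body  # last retryable response, for the caller to inspect
```

The `sleep` parameter is injectable so the loop can be exercised in tests without waiting.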

Error handling patterns

The error envelope matches OpenAI’s, which means any existing handler from the OpenAI SDK catches DeepSeek errors without modification. The patterns worth baking in from day one:

- Always validate tool-call arguments. The function arguments are generated by the model in JSON format; the model does not always generate valid JSON and may hallucinate parameters not defined by your function schema, so validate the arguments in your code before calling your function.

- Retry only idempotent calls. A 402 is not retryable until you top up the balance; a 5xx usually is.

- Use explicit timeouts. Because the platform can hold a connection during scheduling, a client-side 60–120 s timeout plus a bounded retry is saner than waiting indefinitely.

- Log usage on every call. The cache-hit split is how you debug sudden cost spikes.
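For the first pattern in the list above, a validation step before dispatching a tool call could be sketched as follows. The function name and the allowed/required sets are hypothetical; a production system might prefer a full JSON Schema validator instead of this hand-rolled check.

```python
import json
from typing import Any, Dict, Set


def validate_tool_arguments(raw_arguments: str, allowed: Set[str], required: Set[str]) -> Dict[str, Any]:
    """Parse model-generated tool arguments and reject malformed or unexpected ones."""
    try:
        args = json.loads(raw_arguments)
    except json.JSONDecodeError as exc:
        raise ValueError(f"tool arguments are not valid JSON: {exc}") from exc
    if not isinstance(args, dict):
        raise ValueError("tool arguments must be a JSON object")
    unknown = set(args) - allowed
    if unknown:
        # Parameters the model hallucinated that the schema does not define.
        raise ValueError(f"unexpected parameters: {sorted(unknown)}")
    missing = required - set(args)
    if missing:
        raise ValueError(f"missing required parameters: {sorted(missing)}")
    return args
```

Raising instead of silently dropping unknown keys makes hallucinated parameters visible in logs, where they can be fed back to the model as an error message.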

The full error-code reference lives at DeepSeek API error codes.

Context caching — the cheapest optimisation

Cache-hit pricing is automatic: when the provider detects that your messages prefix has been seen before, the repeated tokens bill at the hit rate (on V4-Flash, $0.0028 vs $0.14 cache-miss — a 98% reduction since the 2026-04-26 rate drop). Two things to remember. First, only the prefix hits; each new user message is still a miss against that prefix until the model has seen it. Second, the cache is opportunistic, not guaranteed — you cannot assume a hit rate, only measure it. The practical implication for agent work is to put your stable system prompt and few-shot examples at the start of messages and let the variable user content trail at the end. More detail lives at DeepSeek context caching.
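Measuring the hit rate needs only the two usage counters mentioned in the pricing section. The sketch below assumes the usage data has already been converted to a plain mapping; `cache_hit_ratio` is a name invented for this example.

```python
from typing import Mapping


def cache_hit_ratio(usage: Mapping[str, int]) -> float:
    """Fraction of input tokens billed at the cache-hit rate (0.0 if no input)."""
    hit = usage.get("prompt_cache_hit_tokens", 0)
    miss = usage.get("prompt_cache_miss_tokens", 0)
    total = hit + miss
    return hit / total if total else 0.0
```

Logging this ratio per request, or aggregating it per deployment, shows whether a prompt reordering actually moved tokens from the miss bucket to the hit bucket.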

Where to go next

If you want to read the full official reference alongside this page, it is at api-docs.deepseek.com. The V4 release blog from the Hugging Face team also gives a good overview of the architecture changes that matter to API users — two MoE checkpoints are on the Hub, DeepSeek-V4-Pro at 1.6T total parameters with 49B active and DeepSeek-V4-Flash at 284B total with 13B active, both with a 1M-token context window. For everything else on this site, the DeepSeek API docs and guides hub is the starting point.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I start using the DeepSeek API?

Create an account at platform.deepseek.com, generate a key, and point an OpenAI SDK at https://api.deepseek.com. Your first call is a POST /chat/completions with a model and messages array — no extra setup. The DeepSeek API getting started walk-through covers account creation, key storage and a first smoke-test script in more detail.

What is the current DeepSeek model and what happened to deepseek-chat?

As of April 24, 2026 the current generation is V4, shipped as deepseek-v4-pro and deepseek-v4-flash. The legacy IDs deepseek-chat and deepseek-reasoner still route to V4-Flash but retire on 2026-07-24 at 15:59 UTC. For the full history, see our DeepSeek V4 overview.

Is the DeepSeek API really OpenAI-compatible?

Yes. The wire format matches OpenAI Chat Completions, so changing base_url to https://api.deepseek.com and swapping the key is enough to redirect most existing OpenAI code. The path /v1 is also accepted but is not a version indicator. Our notes on DeepSeek OpenAI SDK compatibility cover the small edge cases.

Does the DeepSeek API remember my conversation?

No. The DeepSeek /chat/completions API is stateless, meaning the server does not record the context of the user’s requests, so the user must concatenate all previous conversation history and pass it to the chat API with each request. The web chat keeps session history; the API does not. The DeepSeek browser vs app page explains the wider difference between surfaces.

How much does a typical DeepSeek API call cost?

On V4-Flash, a chat call with a 2,000-token cached system prompt, 200-token user message and 300-token response costs about $117.60 per million calls (after the 2026-04-26 cache-hit rate drop). The same workload on V4-Pro costs about $355.25 per million during the 75% promo through 2026-05-31 (and about $1,421.00 at list). Use our DeepSeek cost estimator to model your own traffic.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Official: DeepSeek API documentation (api-docs.deepseek.com). Canonical reference for /chat/completions and parameter docs. Last checked: April 30, 2026

- Official: Hugging Face V4 release blog. Architecture overview of the V4-Pro / V4-Flash MoE checkpoints. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.