DeepSeek V3 vs GPT-4o: Benchmarks, Price and Real-World Verdict

You’re picking between a December 2024 open-weight model from a Chinese lab and OpenAI’s flagship multimodal model from May 2024, and you want to know which one to wire into your product. The DeepSeek V3 vs GPT-4o question is genuinely close on text benchmarks, and the price gap is enormous. But “close on benchmarks” doesn’t translate cleanly to “interchangeable in production.” Multimodality, ecosystem, latency under load, and licensing all push the decision in different directions depending on what you’re building. This comparison runs the head-to-head numbers from primary sources, costs out a realistic workload, and tells you which model to pick for which job, including when neither is the right answer in 2026.

Verdict up front: which model wins, and for whom

Pick DeepSeek V3 if you are running text-heavy workloads at scale — RAG pipelines, code assistants, batch summarisation, internal tooling — and the cost line on your invoice matters. V3 is open-weight, posts competitive numbers on knowledge and code benchmarks, and is roughly an order of magnitude cheaper per million tokens than GPT-4o.

Pick GPT-4o if you need native multimodality (vision and audio in one model), tight integration with the OpenAI ecosystem, or you are a US/EU enterprise where the procurement team will not approve a Chinese-hosted endpoint. GPT-4o still has the edge on real-time voice and image understanding, and the developer ecosystem around it is denser.

Important context for 2026 readers: both models are now a generation behind. DeepSeek shipped V3.1, V3.2, and on April 24, 2026 the V4 family (deepseek-v4-pro and deepseek-v4-flash); OpenAI has shipped the GPT-5 generation. If you are starting a new integration today, read this comparison for the architectural and pricing lessons, then jump to the current-generation pages linked at the end. We keep this article live because the question still gets searched — and because the underlying trade-off (frontier-quality model vs frontier-quality price) hasn’t gone away.

At a glance: the spec sheet

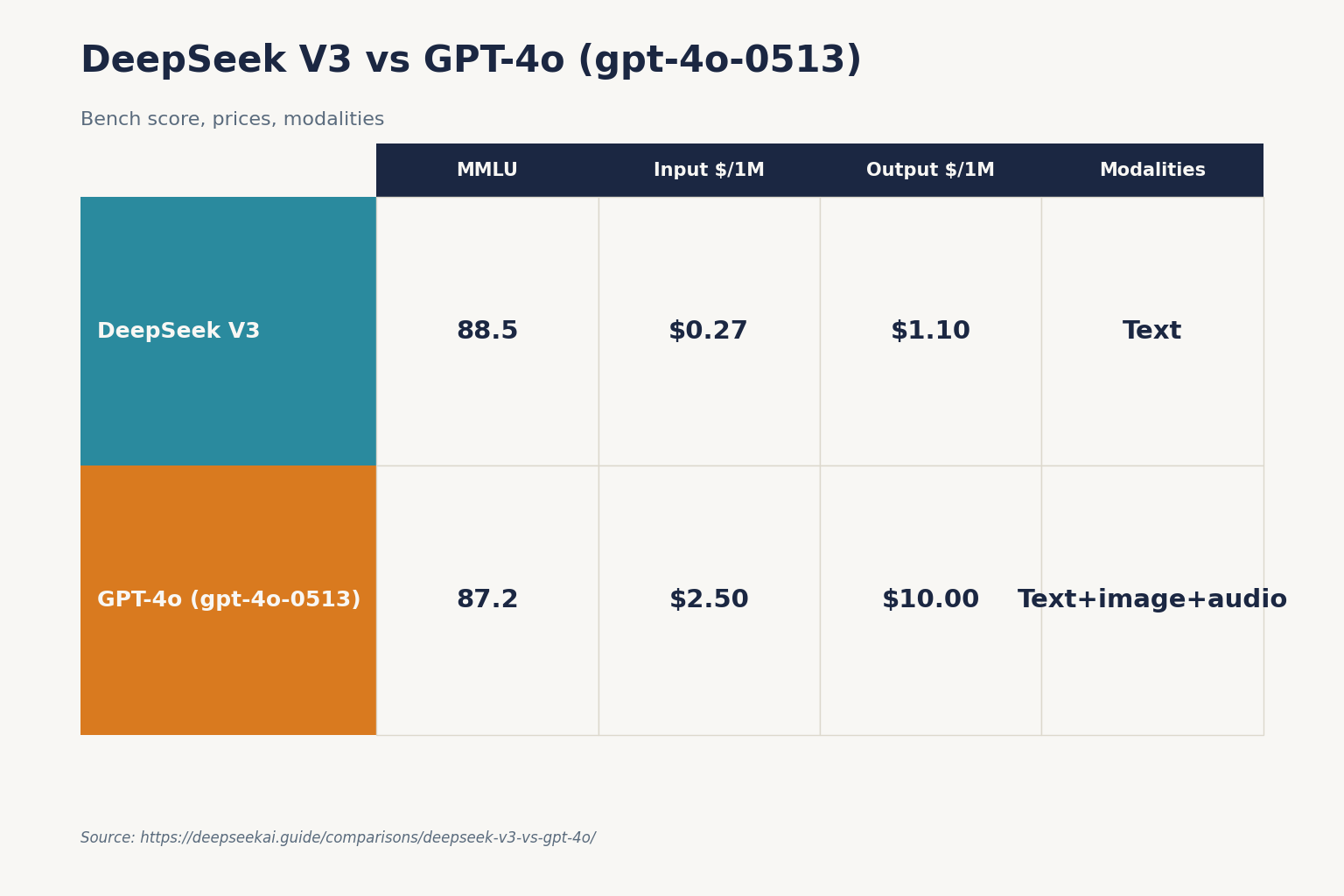

| Feature | DeepSeek V3 | GPT-4o (gpt-4o-2024-05-13) |
|---|---|---|
| Release date | 2024-12-26 | 2024-05-13 |
| Architecture | Mixture-of-Experts | Not disclosed (assumed dense) |
| Total parameters | 671B total, 37B activated per token | Not disclosed |
| Context window | 128K tokens | 128K tokens |
| Modalities | Text only | Text, image, audio |
| Weights available | Yes (Hugging Face) | No |
| Input price (per 1M tokens) | $0.27 (cache miss) at launch | $2.50 (current list) |
| Output price (per 1M tokens) | $1.10 at launch | $10.00 (current list) |
| License (weights) | DeepSeek Model License (separate from the code license) | Proprietary |
| Training compute | 2.788M H800 GPU hours | Not disclosed |

Architecture: MoE vs dense, and why it matters for cost

DeepSeek V3 is a sparse Mixture-of-Experts model. It has 671B total parameters with 37B activated for each token, and adopts Multi-head Latent Attention (MLA) and DeepSeekMoE architectures, which were validated in DeepSeek-V2. Only a fraction of the network fires for any given token, which is what lets DeepSeek serve V3 at the prices they do.
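To make “only a fraction of the network fires” concrete, here is a toy sketch of top-k expert routing. It is an illustration of the general technique, not DeepSeek’s router, which is more involved. The function names, the eight-expert layout, and the scores are made up for the example; only the 671B and 37B figures come from the V3 report.

```python
import math

TOTAL_PARAMS_B = 671   # total parameters, from the V3 report
ACTIVE_PARAMS_B = 37   # parameters activated per token, from the V3 report


def active_fraction(active_b: float, total_b: float) -> float:
    """Share of parameters that take part in one token's computation."""
    return active_b / total_b


def top_k_route(scores: list[float], k: int) -> dict[int, float]:
    """Toy router: keep the k highest-scoring experts, softmax their scores.

    Returns {expert_index: gate_weight}. Experts that are not selected
    do no work for this token, which is where the compute saving comes from.
    """
    chosen = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]
    peak = max(scores[i] for i in chosen)          # subtract max for stability
    exps = {i: math.exp(scores[i] - peak) for i in chosen}
    norm = sum(exps.values())
    return {i: e / norm for i, e in exps.items()}


if __name__ == "__main__":
    share = active_fraction(ACTIVE_PARAMS_B, TOTAL_PARAMS_B)
    print(f"active share per token: {share:.1%}")
    # Hypothetical router scores for 8 experts; only 2 are selected.
    print(top_k_route([0.1, 2.0, -1.0, 0.5, 1.5, 0.0, -0.3, 0.9], k=2))
```

With the report’s figures, roughly 5.5% of the parameters are active for any one token, which is the lever behind the serving cost.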

GPT-4o is a closed multimodal model. OpenAI has not published its architecture, parameter count, or training compute, so the “dense” label in comparisons like this one is an assumption rather than a confirmed fact. What we can see is the result: a single model that handles text, images, and audio end-to-end, with strong real-time voice latency. V3 does not match that capability because it is text-only. If your product depends on voice or vision, the architectural comparison ends here and GPT-4o wins by default.

Benchmarks: the head-to-head numbers

The most-cited benchmark numbers come from DeepSeek’s own technical report and the R1 report that followed it. Both reports compare against GPT-4o-0513 (the May 2024 release) and Claude-3.5-Sonnet-1022 — pin those versions when you read the numbers, because OpenAI has updated GPT-4o multiple times since.

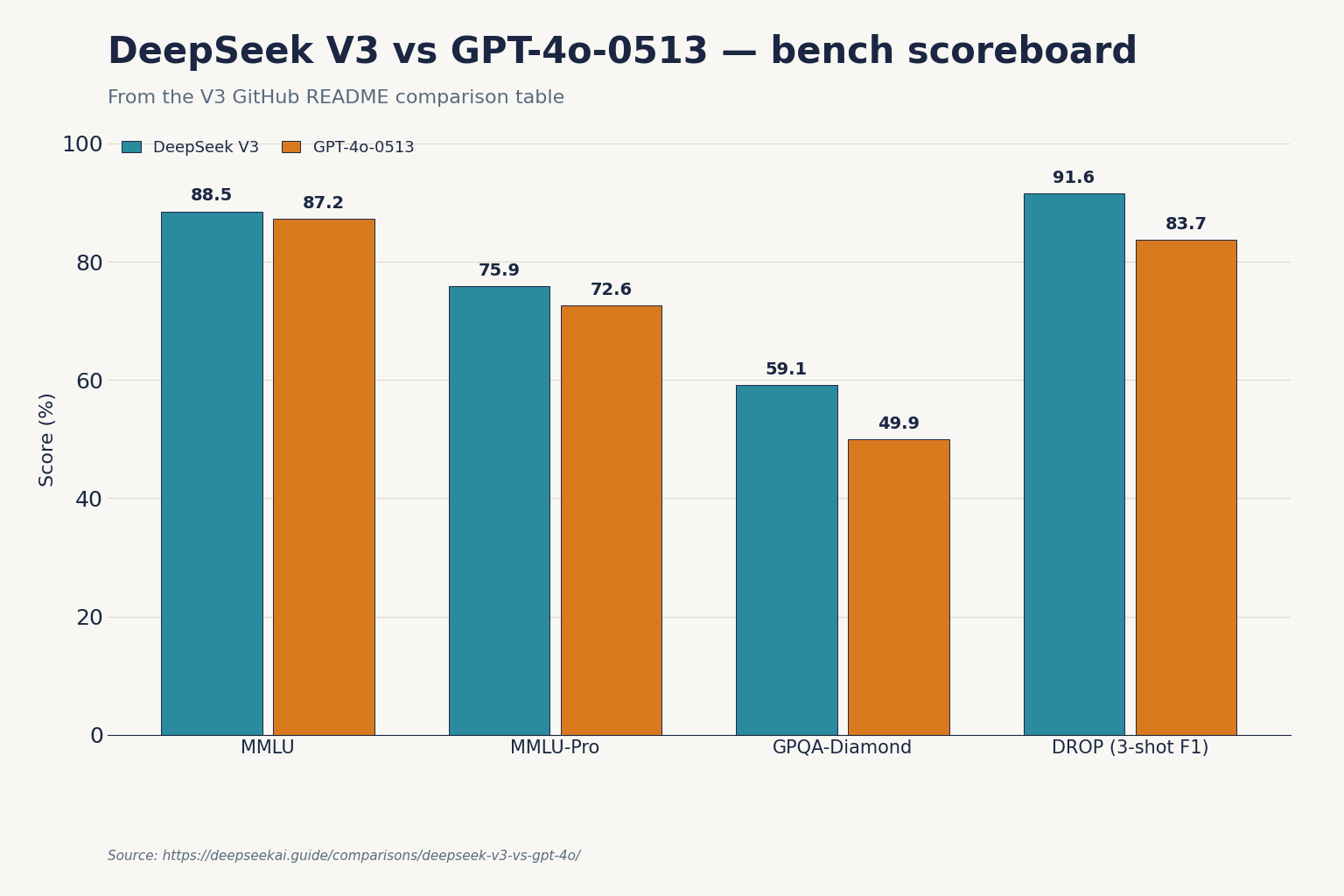

Knowledge and reasoning

| Benchmark | DeepSeek V3 | GPT-4o-0513 | Claude-3.5-Sonnet-1022 |
|---|---|---|---|
| MMLU | 88.5 | 87.2 | 88.3 |
| MMLU-Pro | 75.9 | 72.6 | 78.0 |
| GPQA-Diamond | 59.1 | 49.9 | 65.0 |
| DROP (3-shot F1) | 91.6 | 83.7 | 88.3 |

V3 edges out GPT-4o-0513 on general knowledge (MMLU, MMLU-Pro) and substantially beats it on graduate-level science questions (GPQA-Diamond). Claude-3.5-Sonnet-1022 still leads on the hardest reasoning sets. Practically: for “what does this regulation mean” or “explain this paper” workloads, V3 and GPT-4o are within noise of each other.
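The size of each lead is easier to judge as a point margin. The sketch below recomputes the margins from the table’s scores; the dictionary keys and the `margin` helper are names chosen for this example.

```python
# Scores copied from the knowledge-and-reasoning table.
SCORES = {
    "MMLU": {"v3": 88.5, "gpt4o": 87.2, "claude": 88.3},
    "MMLU-Pro": {"v3": 75.9, "gpt4o": 72.6, "claude": 78.0},
    "GPQA-Diamond": {"v3": 59.1, "gpt4o": 49.9, "claude": 65.0},
    "DROP": {"v3": 91.6, "gpt4o": 83.7, "claude": 88.3},
}


def margin(benchmark: str, a: str = "v3", b: str = "gpt4o") -> float:
    """Point difference (a minus b) on one benchmark, rounded to 0.1."""
    row = SCORES[benchmark]
    return round(row[a] - row[b], 1)


if __name__ == "__main__":
    for name in SCORES:
        print(f"{name}: V3 vs GPT-4o {margin(name):+.1f}, "
              f"V3 vs Claude {margin(name, 'v3', 'claude'):+.1f}")
```

The MMLU gap is about one point, while the GPQA-Diamond gap over GPT-4o-0513 is about nine, so “edges out” and “substantially beats” describe quite different margins.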

Code and math

Code is where V3 looked surprisingly strong at launch. In the V3 technical report, DeepSeek V3 scores 82.6 on HumanEval-Mul (pass@1), ahead of GPT-4o-0513, Claude-3.5-Sonnet-1022, and Llama 3.1 405B. On the Microsoft Foundry catalogue, V3 reports BBH 87.5%, MMLU 87.1%, HumanEval 65.2%, GSM8K 89.3%, and MATH 61.6%. Those figures appear to match the report’s base-model evaluation rather than the chat model, with a different benchmark variant and prompt setup, which is exactly why you should never quote a single number without naming the source table.

For day-to-day coding (refactor this function, write a SQL query, debug this stack trace), I have not been able to tell V3 and GPT-4o apart on output quality in head-to-head testing. GPT-4o is faster on the streaming-token path; V3 is cheaper. For deeper code reasoning across multiple files, neither was strong enough to replace a reasoning model — that is what R1 and o1 existed for, and now V4-Pro and GPT-5 Thinking. See the broader breakdown in our DeepSeek for coding use-case guide.

Pricing: the gap that defined the V3 launch

This is where the comparison gets uncomfortable for OpenAI.

| Cost line | DeepSeek V3 (launch) | GPT-4o (current) | Multiple |
|---|---|---|---|
| Input, per 1M tokens | $0.27 | $2.50 | ~9× more for GPT-4o |
| Output, per 1M tokens | $1.10 | $10.00 | ~9× more for GPT-4o |

The launch V3 rates of $0.27 input / $1.10 output have since been superseded — V3.2 cut prices further, and the V4 generation cut them again. As of April 2026, the current-generation deepseek-v4-flash tier lists $0.0028 cache-hit / $0.14 cache-miss input and $0.28 output per 1M tokens; deepseek-v4-pro lists $0.0145 / $1.74 / $3.48. Both are still well under GPT-4o list. Always confirm on the official DeepSeek API pricing page before committing.
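If you want the per-line multiples for a different pair of price lists, the arithmetic is a one-liner. This sketch uses the rates quoted in this section; the dictionary layout and the `multiple` helper are illustrative names, and the V4-Flash input rate used is the cache-miss rate.

```python
# USD per 1M tokens, as quoted in this section.
GPT4O = {"input": 2.50, "output": 10.00}
V3_LAUNCH = {"input": 0.27, "output": 1.10}
V4_FLASH = {"input": 0.14, "output": 0.28}  # cache-miss input rate


def multiple(expensive: dict[str, float], cheap: dict[str, float]) -> dict[str, float]:
    """How many times more the expensive list costs, per price line."""
    return {line: round(expensive[line] / cheap[line], 1) for line in expensive}


if __name__ == "__main__":
    print("GPT-4o vs V3 launch:", multiple(GPT4O, V3_LAUNCH))
    print("GPT-4o vs V4-Flash: ", multiple(GPT4O, V4_FLASH))
```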

Worked example: 1M API calls per month

A representative chat workload — 2,000-token system prompt (cached), 200-token user message (uncached), 300-token response, repeated 1,000,000 times in a month — costs out as follows on each model.

Using GPT-4o list rates ($2.50 input / $10.00 output; cached input is half-price on OpenAI):

- Cached input: 2,000,000,000 tokens × $1.25/M = $2,500.00

- Uncached input: 200,000,000 tokens × $2.50/M = $500.00

- Output: 300,000,000 tokens × $10.00/M = $3,000.00

- Total: $6,000.00

Using current-generation deepseek-v4-flash rates ($0.0028 cache-hit / $0.14 cache-miss / $0.28 output per 1M tokens — V4-Flash is the spiritual successor to V3 for chat workloads):

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60
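The two tallies above can be reproduced, and adapted to your own traffic, with a short script. This is a sketch rather than a billing tool: the `Rates` and `Workload` classes and the `monthly_cost` function are names invented here, and the rates are the ones quoted in this article.

```python
from dataclasses import dataclass

PER_MILLION = 1_000_000


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cached_input: float
    input: float
    output: float


@dataclass(frozen=True)
class Workload:
    calls: int            # API calls per month
    cached_tokens: int    # cached input tokens per call (system prompt)
    uncached_tokens: int  # uncached input tokens per call (user message)
    output_tokens: int    # output tokens per call


def monthly_cost(rates: Rates, w: Workload) -> float:
    """Total monthly cost in USD, rounded to cents."""
    cached = w.calls * w.cached_tokens / PER_MILLION * rates.cached_input
    uncached = w.calls * w.uncached_tokens / PER_MILLION * rates.input
    output = w.calls * w.output_tokens / PER_MILLION * rates.output
    return round(cached + uncached + output, 2)


GPT_4O = Rates(cached_input=1.25, input=2.50, output=10.00)
V4_FLASH = Rates(cached_input=0.0028, input=0.14, output=0.28)
CHAT = Workload(calls=1_000_000, cached_tokens=2_000,
                uncached_tokens=200, output_tokens=300)

if __name__ == "__main__":
    gpt = monthly_cost(GPT_4O, CHAT)
    flash = monthly_cost(V4_FLASH, CHAT)
    print(f"GPT-4o: ${gpt:,.2f}  V4-Flash: ${flash:,.2f}  ratio: {gpt / flash:.0f}x")
```

Changing the fields of `CHAT` is enough to model a different prompt size or call volume.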

That is the same workload at roughly 1/51st the cost (after the 2026-04-26 cache-hit drop). If you want to plug your own numbers in, use the DeepSeek pricing calculator. Note: the DeepSeek API is stateless — chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, and the client must resend conversation history every call. The web chat keeps history for you; the API does not.
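Because the API is stateless, the client owns the conversation history. A minimal sketch of that bookkeeping follows, using only the standard library and making no network calls. The `Conversation` class and its method names are hypothetical; the payload follows the OpenAI-style chat completions body (`model` plus a `messages` list).

```python
from __future__ import annotations

from copy import deepcopy


class Conversation:
    """Client-side chat history for a stateless chat-completions API."""

    def __init__(self, model: str, system_prompt: str | None = None) -> None:
        self.model = model
        self.messages: list[dict[str, str]] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        # Store the model's reply so the next request includes it.
        self.messages.append({"role": "assistant", "content": text})

    def payload(self) -> dict:
        """JSON body for POST /chat/completions, carrying the full history."""
        return {"model": self.model, "messages": deepcopy(self.messages)}


if __name__ == "__main__":
    chat = Conversation("deepseek-v4-flash", system_prompt="You are terse.")
    chat.add_user("What is MoE?")
    chat.add_assistant("A sparse architecture that routes tokens to experts.")
    chat.add_user("Why is it cheaper to serve?")
    print(len(chat.payload()["messages"]), "messages sent on the third turn")
```

Every turn resends everything before it, so input tokens grow with conversation length. That is also why a cached system prompt matters so much in the cost example.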

Coding head-to-head

I have run both models against the same backlog of refactor / bug-fix / SQL / regex tasks for several months. A few patterns held consistently:

- Single-file edits: indistinguishable. Both produce clean, idiomatic code in Python, TypeScript, and Go.

- Multi-file reasoning: GPT-4o was slightly more reliable at “remember what we changed in the controller and update the test” tasks. V3 occasionally undid changes made in earlier turns.

- Latency under load: GPT-4o had a tighter time-to-first-token in US regions; V3 was closer in Asia-Pacific.

- Cost per resolved ticket: not even close. V3 was cheap enough that I could afford to run agentic loops with retries; at list prices, GPT-4o made the same loop roughly nine times more expensive.

Reasoning, writing, and the things benchmarks miss

Neither V3 nor GPT-4o is a “thinking” model. Both answer directly, token by token, without a separate extended reasoning phase before the final response. For genuinely hard reasoning (competition math, multi-step logical proofs, planning under constraints) you wanted DeepSeek R1 or OpenAI’s o-series at the time, and the V4-Pro / GPT-5 Thinking tier today.

For writing, GPT-4o has a slight edge in tone control and creative latitude, in my testing. V3 is more literal and tends toward the technical register. For business writing, summarisation, and translation, the gap is small. Our DeepSeek for writing guide has prompt patterns that close most of it.

Privacy, jurisdiction, and the procurement conversation

Be honest with yourself about where the data goes. DeepSeek’s hosted API processes requests on servers subject to Chinese law. GPT-4o through OpenAI processes requests on US infrastructure (with regional options on Azure). For most consumer and internal-tool workloads this does not matter. For regulated workloads — health, finance, legal, government — it absolutely does, and you may not be allowed to use DeepSeek’s hosted API at all.

The mitigation for V3 specifically is that the weights are published on Hugging Face, so you can self-host and remove the jurisdiction question entirely. That is not an option with GPT-4o. If procurement is your blocker and quality is acceptable, self-hosted V3 (or V4) is the move. See how to install DeepSeek locally for the hardware reality of doing that.
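From the application’s side, self-hosting mostly means changing the base URL. The sketch below builds, but does not send, a chat request for a self-hosted server. It assumes you run an inference server that exposes an OpenAI-compatible `/chat/completions` route; the `http://localhost:8000/v1` address, the placeholder key, and the `build_chat_request` helper are assumptions for illustration, not something the source specifies.

```python
import json
import urllib.request


def build_chat_request(base_url: str, api_key: str, model: str,
                       messages: list[dict[str, str]]) -> urllib.request.Request:
    """Build (without sending) a POST to an OpenAI-compatible chat endpoint."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


if __name__ == "__main__":
    # Assumed local endpoint; the model name is the Hugging Face repo ID.
    req = build_chat_request(
        base_url="http://localhost:8000/v1",
        api_key="not-used-locally",
        model="deepseek-ai/DeepSeek-V3",
        messages=[{"role": "user", "content": "Summarise this contract."}],
    )
    print(req.get_method(), req.full_url)
    # To send it: urllib.request.urlopen(req), once a server is listening.
```

Since the request never leaves your network, the data stays under whatever jurisdiction your own infrastructure sits in.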

Ecosystem: where GPT-4o still pulls ahead

OpenAI’s developer ecosystem is denser. SDKs, vector store integrations, agent frameworks, IDE plugins, third-party tools — all assume OpenAI as the default and add others later. DeepSeek mitigates this by being OpenAI SDK-compatible: point the OpenAI client at https://api.deepseek.com, swap the model ID, and most code works unchanged.

```python
from openai import OpenAI

# Same OpenAI client, pointed at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_DEEPSEEK_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",  # or legacy "deepseek-chat" until 2026-07-24
    messages=[{"role": "user", "content": "Summarise this PR."}],
    temperature=1.3,
    max_tokens=512,
)

# The response has the same shape as an OpenAI chat completion.
print(resp.choices[0].message.content)
```

That snippet uses the current V4 model ID. If you are maintaining an older integration that still references deepseek-chat or deepseek-reasoner, those legacy IDs route to deepseek-v4-flash until they retire on 2026-07-24 at 15:59 UTC. After that they fail. Migrate by changing the model= string; the base_url stays the same.

When to pick which: a decision table

| Your situation | Pick | Why |
|---|---|---|
| High-volume RAG pipeline, cost-sensitive | DeepSeek (V3 then, V4-Flash now) | ~9× cheaper per token at launch; gap remains today |
| Real-time voice agent | GPT-4o | Native audio; V3 is text-only |
| Vision-heavy document processing | GPT-4o | Native image input |
| Self-hosted for compliance | DeepSeek V3 | Open weights on Hugging Face |
| US-regulated industry | GPT-4o | Procurement-friendly jurisdiction |
| Coding assistant for a startup | DeepSeek | Quality close enough, cost makes agentic loops viable |
| Need verified reasoning chains | Neither: use V4-Pro thinking or GPT-5 Thinking | V3/4o are non-thinking |

Alternatives worth considering

If you are reading this in 2026 to make a current decision, neither V3 nor GPT-4o is the model you should actually be picking. The honest recommendations:

- For frontier text quality: the current DeepSeek V4-Pro or the GPT-5 family. See our broader DeepSeek vs ChatGPT breakdown.

- For reasoning: DeepSeek R1 vs OpenAI o1 covers the thinking-model split.

- For Anthropic-curious teams: DeepSeek vs Claude.

- Browse the wider field: our AI comparison hub has the rest of the head-to-heads.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek V3 better than GPT-4o?

It depends on the task. On text benchmarks reported in the V3 technical report — MMLU, MMLU-Pro, GPQA-Diamond, HumanEval — V3 either matches or beats GPT-4o-0513. On multimodal tasks (vision and audio), GPT-4o wins because V3 is text-only. On price, V3 was roughly 9× cheaper per million tokens at launch. For a deeper read, see our DeepSeek V3 review.

How much cheaper is DeepSeek V3 than GPT-4o?

At V3’s December 2024 launch, the rates were $0.27 input / $1.10 output per million tokens vs GPT-4o’s $2.50 / $10.00 — roughly 9× cheaper on both lines. Current-generation V4-Flash undercuts V3’s launch rates further. Run your own numbers with the DeepSeek pricing calculator before committing.

Can I use DeepSeek V3 with the OpenAI Python SDK?

Yes. DeepSeek’s API is OpenAI-compatible. Point the OpenAI client at base_url="https://api.deepseek.com", supply your DeepSeek API key, and change the model string. The chat endpoint is POST /chat/completions, exactly like OpenAI’s. The full walkthrough is in the DeepSeek OpenAI SDK compatibility guide.

Does DeepSeek V3 support images like GPT-4o?

No. V3 is a text-only model. For vision tasks within the DeepSeek family you want DeepSeek VL2, or for the current generation, the V4 models (which remain primarily text-focused — multimodality is more limited than GPT-4o’s). If you need vision and audio in one model, GPT-4o or its successors remain the better fit. Our DeepSeek VL2 page covers DeepSeek’s vision lineage.

Why is DeepSeek V3 so much cheaper than GPT-4o?

Three reasons. First, the Mixture-of-Experts architecture activates only 37B of 671B parameters per token, cutting inference compute. Second, DeepSeek trained efficiently — the V3 paper reports 2.788M H800 GPU hours for full training, low for a model of that scale. Third, DeepSeek operates at lower margins. See the architecture details on the DeepSeek V3 model page.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing page. OpenAI API rates for cross-vendor comparisons; GPT-4o pricing verified April 25, 2026. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.