DeepSeek R1 Distill: The Six Reasoning Models You Can Run Locally

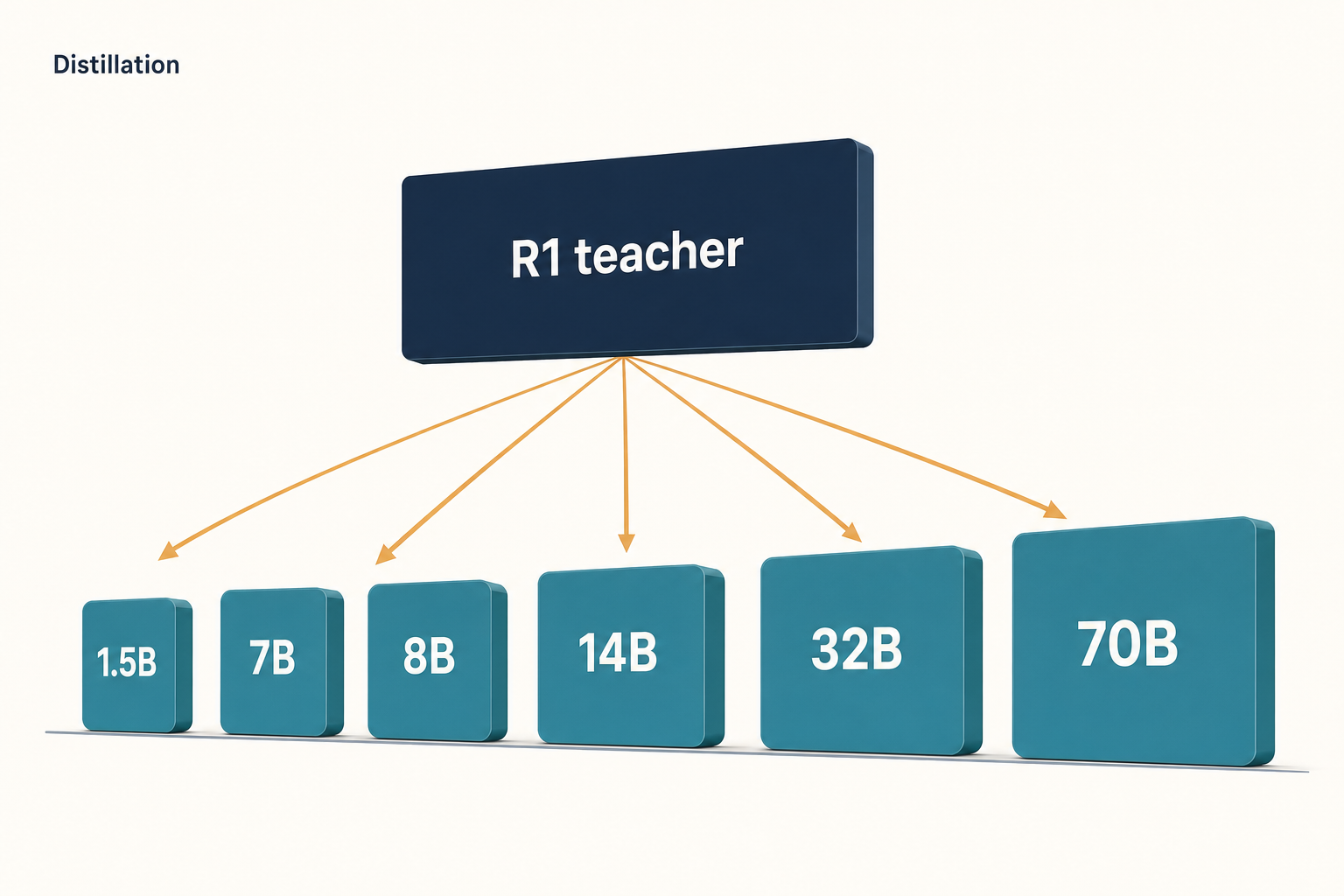

If you want R1-style reasoning on your own GPU rather than through an API, the DeepSeek R1 Distill family is the practical answer. These are six smaller dense models (1.5B, 7B, 8B, 14B, 32B and 70B parameters) fine-tuned on 800,000 reasoning traces produced by the full DeepSeek-R1, then released as open weights in January 2025. They are not new architectures. They are existing Qwen 2.5 and Llama 3.1/3.3 checkpoints retrained to think the way R1 thinks, and on maths and code benchmarks they can match or beat much larger non-reasoning models.

This guide walks through each size, the benchmark numbers, where they win and lose, how to run them, and how they fit alongside the newer DeepSeek V4 generation.

What DeepSeek R1 Distill actually is

DeepSeek R1 Distill is a set of six open-weight dense models that inherit the reasoning behaviour of the full DeepSeek-R1 through supervised fine-tuning (SFT). DeepSeek started from six open-source checkpoints in the Llama 3.1/3.3 and Qwen 2.5 families, used R1 to generate 800,000 high-quality reasoning samples, and fine-tuned the smaller models on that synthetic data. Unlike R1, the distilled models rely solely on SFT and do not include an RL stage.

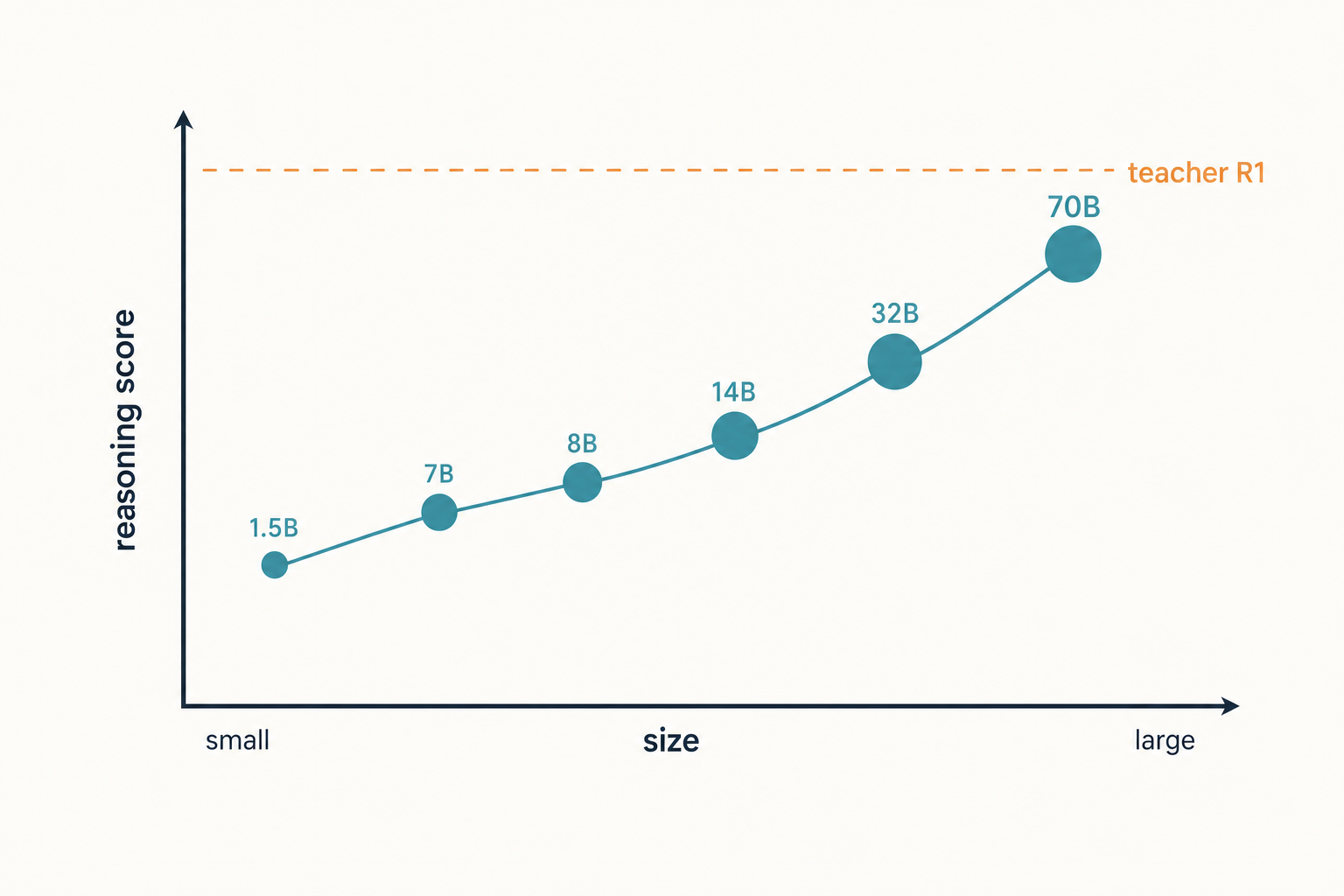

That distinction matters. The full DeepSeek R1 went through reinforcement learning to discover its reasoning patterns. The distilled siblings skipped the RL phase entirely and just learned to imitate R1’s outputs. The R1 report’s conclusion is that distilling a powerful model into smaller ones works better than training the small models with RL directly: large-scale RL on a small model needs massive computing power and may still underperform distillation.

The six checkpoints at a glance

DeepSeek released the family on January 20, 2025. The team open-sourced distilled 1.5B, 7B, 8B, 14B, 32B, and 70B checkpoints based on Qwen2.5 and Llama3 series to the community. Three things vary across the line: the base model, the parameter count, and the licence inherited from the base.

| Model | Base | Params | Inherited licence |
|---|---|---|---|
| R1-Distill-Qwen-1.5B | Qwen 2.5-Math-1.5B | 1.5B | Apache 2.0 |
| R1-Distill-Qwen-7B | Qwen 2.5-Math-7B | 7B | Apache 2.0 |
| R1-Distill-Llama-8B | Llama 3.1-8B-Base | 8B | Llama 3.1 |
| R1-Distill-Qwen-14B | Qwen 2.5-14B | 14B | Apache 2.0 |
| R1-Distill-Qwen-32B | Qwen 2.5-32B | 32B | Apache 2.0 |
| R1-Distill-Llama-70B | Llama 3.3-70B-Instruct | 70B | Llama 3.3 |

The Qwen-1.5B/7B/14B/32B variants are derived from Qwen-2.5 series, originally licensed under Apache 2.0 and now finetuned with 800k samples curated with DeepSeek-R1; R1-Distill-Llama-8B is derived from Llama3.1-8B-Base under the Llama3.1 license; and R1-Distill-Llama-70B is derived from Llama3.3-70B-Instruct under the Llama3.3 license. DeepSeek’s own code and the additional fine-tuning weights ship under MIT, but the underlying base-model licence still applies — so the 70B is governed by Meta’s Llama 3.3 community licence, not by MIT alone.
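Because the licence follows the base model rather than the DeepSeek fine-tune, a deployment script can make that check explicit. The sketch below only restates the table above as a lookup; the `CHECKPOINTS` mapping and both helper names are illustrative and not part of any DeepSeek tooling.

```python
# Illustrative lookup that restates the table above.
# CHECKPOINTS, licence_for and needs_llama_licence_review are hypothetical names.
CHECKPOINTS = {
    "DeepSeek-R1-Distill-Qwen-1.5B": ("Qwen 2.5-Math-1.5B", "Apache 2.0"),
    "DeepSeek-R1-Distill-Qwen-7B": ("Qwen 2.5-Math-7B", "Apache 2.0"),
    "DeepSeek-R1-Distill-Llama-8B": ("Llama 3.1-8B-Base", "Llama 3.1"),
    "DeepSeek-R1-Distill-Qwen-14B": ("Qwen 2.5-14B", "Apache 2.0"),
    "DeepSeek-R1-Distill-Qwen-32B": ("Qwen 2.5-32B", "Apache 2.0"),
    "DeepSeek-R1-Distill-Llama-70B": ("Llama 3.3-70B-Instruct", "Llama 3.3"),
}


def licence_for(model_name: str) -> str:
    """Return the licence inherited from the checkpoint's base model."""
    _base, licence = CHECKPOINTS[model_name]
    return licence


def needs_llama_licence_review(model_name: str) -> bool:
    """True when the checkpoint is governed by a Meta Llama community licence."""
    return licence_for(model_name).startswith("Llama")


print(licence_for("DeepSeek-R1-Distill-Qwen-32B"))
print(needs_llama_licence_review("DeepSeek-R1-Distill-Llama-70B"))
```

A lookup like this is no substitute for reading the licence text; it just stops a Llama-derived checkpoint from slipping into a pipeline that was reviewed for Apache 2.0 only.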

Benchmarks: how the distilled models stack up

The distilled models punch well above the weight class their parameter count suggests: on reasoning benchmarks they often outperform larger non-reasoning models such as GPT-4o and Claude-3.5-Sonnet. Headline numbers for the two largest checkpoints, from the R1 technical report:

| Model | AIME 2024 (Pass@1) | MATH-500 (Pass@1) | LiveCodeBench |
|---|---|---|---|
| R1-Distill-Qwen-32B | 72.6% | 94.3% | not shown |
| R1-Distill-Llama-70B | 70.0% | 94.5% | 57.5 |

DeepSeek-R1-Distill-Qwen-32B achieves 72.6% Pass@1 on AIME 2024 and 94.3% Pass@1 on MATH-500, significantly outperforming other open-source models, while DeepSeek-R1-Distill-Llama-70B achieves 70.0% Pass@1 on AIME 2024 and 94.5% Pass@1 on MATH-500. The 70B Llama variant also reaches 57.5 on LiveCodeBench, the highest coding score among the distilled models.

The 32B is the more interesting result. DeepSeek-R1-Distill-Qwen-32B outperforms OpenAI-o1-mini across various benchmarks, posting new high marks for dense models — a 32B dense model beating a frontier provider’s small reasoning model on the published numbers is the headline most readers remember.

One caveat the report itself flags: the scores come from sampled generations, so they are not deterministic. For all models, the maximum generation length is set to 32,768 tokens, and for benchmarks requiring sampling the team used a temperature of 0.6, a top-p value of 0.95, and generated 64 responses per query to estimate pass@1. If you try to reproduce the headline numbers with greedy decoding, expect worse results.
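The pass@1 figure described here is an average over many samples, not the outcome of a single run. A minimal sketch of that estimate, assuming you already have a correctness flag for every sampled response (the `pass_at_1` name and the nested-list layout are illustrative):

```python
from statistics import mean


def pass_at_1(results: list[list[bool]]) -> float:
    """Estimate pass@1 from several sampled responses per problem.

    results[i][j] is True when the j-th sample for problem i was correct.
    Per problem, pass@1 is the fraction of correct samples; the benchmark
    score is the mean of that fraction over all problems.
    """
    if not results:
        raise ValueError("no problems given")
    return mean(sum(samples) / len(samples) for samples in results)


# Two problems with four samples each (the report samples 64 per query).
scores = [
    [True, True, False, True],    # 3 of 4 correct
    [False, False, True, False],  # 1 of 4 correct
]
print(pass_at_1(scores))  # 0.5
```

With only one greedy sample per problem the same function still runs, but the result is a single noisy draw rather than the averaged figure the report publishes.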

Strengths — where R1 Distill specifically wins

- Maths and code under your roof. The 14B and 32B Qwen variants run on a single 24 GB or 48 GB GPU at 4-bit quantisation and produce R1-style step-by-step reasoning for problems that smaller models simply give up on.

- Permissive enough for most production use. The Qwen-derived checkpoints inherit Apache 2.0; the Llama-derived ones inherit Meta’s community licence. Either way, commercial use is allowed in the typical case — read the specific licence before you ship.

- Drop-in tooling. DeepSeek-R1-Distill models can be used in the same way as Qwen or Llama models. For instance, you can start a service using vLLM with `vllm serve deepseek-ai/DeepSeek-R1-Distill-Qwen-32B --tensor-parallel-size 2 --max-model-len 32768`. No custom inference stack required.

- Reasoning trace is visible. The output contains a `<think>` block followed by a final answer, which is useful for debugging prompts and auditing reasoning steps.
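To audit the trace separately from the answer, the two parts have to be split first. A minimal sketch, assuming the reasoning is wrapped in a `<think>` tag that is closed with `</think>` before the final answer; the `split_reasoning` helper is a hypothetical name, and real output from your serving stack may need extra handling.

```python
import re

# Non-greedy match so only the first <think>...</think> block is captured.
_THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(text: str) -> tuple[str, str]:
    """Split model output into (reasoning, answer).

    If no complete <think>...</think> block is found, the reasoning is
    returned empty and the whole text is treated as the answer.
    """
    match = _THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    reasoning = match.group(1).strip()
    answer = text[match.end():].strip()
    return reasoning, answer


sample = "<think>\n2 + 2 is 4.\n</think>\nThe answer is 4."
reasoning, answer = split_reasoning(sample)
print(reasoning)  # 2 + 2 is 4.
print(answer)     # The answer is 4.
```

Falling back to "everything is the answer" when the tags are missing keeps the helper safe to use on truncated or non-reasoning output.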

Weaknesses — where they fall short

- They are reasoning specialists, not generalists. Creative writing, multi-turn instruction following and tool use are weaker than the underlying Qwen-Instruct or Llama-Instruct checkpoints in some tests.

- Quirks inherited from R1. The team recommends setting temperature within 0.5–0.7 (0.6 is recommended) to prevent endless repetitions or incoherent outputs, avoiding system prompts and putting all instructions in the user prompt, and for maths problems including a directive such as: “Please reason step by step, and put your final answer within \boxed{}.” Ignore those settings and you will get worse output than the benchmark numbers suggest.

- Older base models. Qwen 2.5 and Llama 3.1/3.3 are from 2024. The distilled models do not benefit from the gains in newer Qwen 3 or Llama 4 base checkpoints — though DeepSeek later released a separate DeepSeek-R1-0528-Qwen3-8B, distilled from DeepSeek-R1-0528 onto Qwen3 8B Base, which surpasses Qwen3 8B by +10.0% on AIME 2024 and matches Qwen3-235B-thinking.

- 32K output cap. The maximum generation length is 32,768 tokens. Long agentic traces can hit that ceiling and truncate.
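The usage quirks in the list above are easy to forget in application code, so it can help to bake them into one place. The sketch below builds keyword arguments for an OpenAI-style chat-completion call; `build_request` and `MATH_DIRECTIVE` are illustrative names, and the `top_p` value is borrowed from the report's evaluation settings rather than from a stated recommendation.

```python
# The maths directive, written with the LaTeX \boxed{} macro.
MATH_DIRECTIVE = (
    "Please reason step by step, and put your final answer within \\boxed{}."
)


def build_request(model: str, question: str, is_math: bool = False) -> dict:
    """Build chat-completion keyword arguments that follow the usage tips."""
    prompt = f"{question}\n{MATH_DIRECTIVE}" if is_math else question
    return {
        "model": model,
        # No system message: every instruction goes in the user turn.
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.6,  # recommended value inside the 0.5-0.7 range
        "top_p": 0.95,       # assumption: the value used in the report's evals
    }


request = build_request(
    "deepseek-ai/DeepSeek-R1-Distill-Qwen-14B",
    "What is the sum of the first 10 positive integers?",
    is_math=True,
)
print(request["messages"][0]["content"])
```

The resulting dictionary can be unpacked into any client that accepts OpenAI-style parameters, for example `client.chat.completions.create(**request)`.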

Hardware: what you actually need to run them

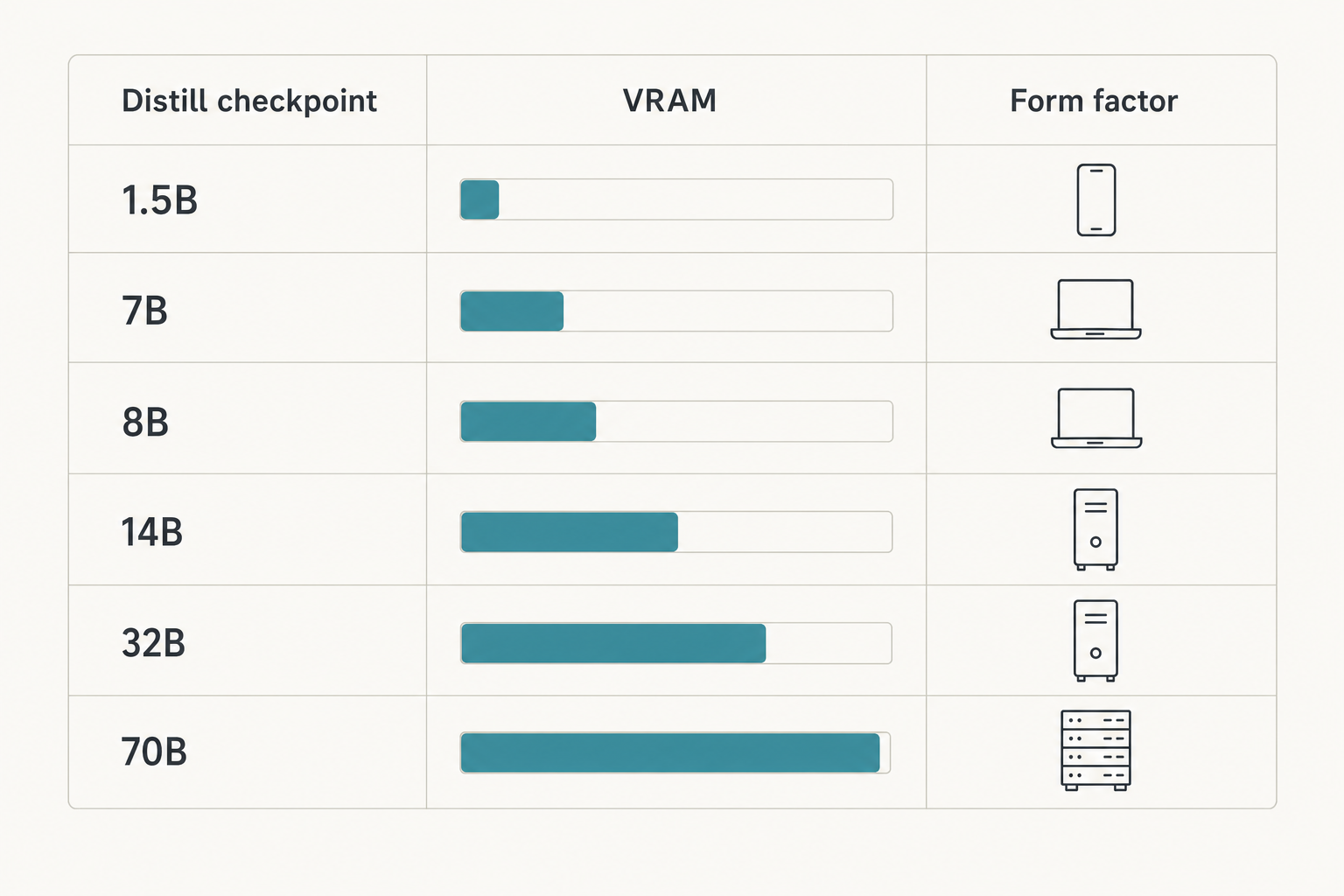

Rough VRAM requirements at common quantisations, assuming a single GPU and a modest context window:

| Model | FP16 VRAM | Q4 VRAM | Practical fit |
|---|---|---|---|
| 1.5B | ~4 GB | ~1.5 GB | Laptop CPU or any GPU |
| 7B / 8B | ~16 GB | ~5 GB | RTX 3060 12 GB and up |
| 14B | ~28 GB | ~9 GB | RTX 3090 / 4090 24 GB |
| 32B | ~64 GB | ~20 GB | Single 24 GB GPU at Q4, or 2× 24 GB |
| 70B | ~140 GB | ~42 GB | 2× 24 GB at Q4, or A100 80 GB |

For interactive single-user inference, the 14B at Q4 on an RTX 4090 is the sweet spot. The DeepSeek hardware calculator will give you a tighter estimate for your context length and batch size.
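The table's figures follow from simple arithmetic: parameter count times bytes per weight. A rough sketch of that calculation, counting weights only; the `weight_memory_gb` helper is hypothetical, and the 4.8 effective bits per weight used for Q4 is an assumption chosen to land near the table, since real quantisation formats vary.

```python
def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Excludes KV cache, activations and runtime overhead, all of which grow
    with context length and batch size.
    """
    return params_billion * bits_per_weight / 8


for size in (1.5, 7, 14, 32, 70):
    fp16 = weight_memory_gb(size, 16)
    # Assumed effective bits per weight for a Q4-style quantisation.
    q4 = weight_memory_gb(size, 4.8)
    print(f"{size:>4}B  FP16 ~{fp16:.0f} GB  Q4 ~{q4:.1f} GB")
```

For the 14B, 32B and 70B rows this reproduces the FP16 column exactly (28, 64 and 140 GB). The table's numbers for the smaller models sit a little above the weights-only estimate, which leaves room for runtime overhead.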

How to run R1 Distill locally

The fastest route is Ollama. After installing it, the following shell command pulls and chats with the 14B variant:

    ollama run deepseek-r1:14b

For a server with concurrency, vLLM is the default. The README ships a one-liner for the 32B:

    vllm serve deepseek-ai/DeepSeek-R1-Distill-Qwen-32B \
      --tensor-parallel-size 2 \
      --max-model-len 32768 \
      --enforce-eager

SGLang works the same way:

    python3 -m sglang.launch_server \
      --model deepseek-ai/DeepSeek-R1-Distill-Qwen-32B \
      --trust-remote-code --tp 2

Step-by-step instructions, including model-pull and quantisation choices, are in our running DeepSeek on Ollama walkthrough and the broader install DeepSeek locally guide.
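Once a vLLM server is up, it can be queried over its OpenAI-compatible HTTP interface. The sketch below uses only the Python standard library and assumes the server is listening on `http://localhost:8000`, vLLM's default port; adjust `BASE_URL` if you changed it. The helper names are illustrative.

```python
import json
import urllib.request

# Assumption: `vllm serve` is running locally on its default port (8000).
BASE_URL = "http://localhost:8000/v1"
MODEL = "deepseek-ai/DeepSeek-R1-Distill-Qwen-32B"


def make_chat_request(prompt: str, base_url: str = BASE_URL) -> urllib.request.Request:
    """Build (but do not send) a POST to the chat-completions endpoint."""
    payload = {
        "model": MODEL,
        # Single user turn, no system prompt.
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.6,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the assistant text (needs a live server)."""
    with urllib.request.urlopen(make_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


# With a server running, call for example:
# print(ask("How many primes are there below 30?"))
```

Keeping request construction separate from the network call makes the payload easy to inspect or log before anything is sent.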

R1 Distill vs the DeepSeek API

R1 Distill is a self-hosting story. If you want managed inference instead, the same conversational interface lives behind POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. The current generation there is DeepSeek V4, released April 24, 2026, shipped as two model IDs — deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active). Both are open-weight MoE under the MIT licence.

The legacy IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash, but they will be retired on 2026-07-24 at 15:59 UTC. Migrating is a one-line model= change; the base_url stays the same.

A minimal Python call against V4 thinking mode using the OpenAI SDK:

    from openai import OpenAI

    # The OpenAI SDK works unchanged; only the base URL and key differ.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[{"role": "user", "content": "Prove sqrt(2) is irrational."}],
        reasoning_effort="high",
        # Thinking mode is switched on through the request body.
        extra_body={"thinking": {"type": "enabled"}},
        max_tokens=4096,
    )

    # The reasoning trace and the final answer arrive as separate fields.
    print(resp.choices[0].message.reasoning_content)
    print(resp.choices[0].message.content)

Thinking mode returns reasoning_content alongside the final content. The API itself is stateless: clients must resend the full message history with every request, in contrast to the web/app, which retains session history server-side. The default context window on V4 is 1,000,000 tokens with output up to 384,000 tokens. Other useful parameters include temperature, top_p, max_tokens and reasoning_effort; tool calling, JSON mode, streaming and context caching are all supported.
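Because the API keeps no state, the client has to carry the conversation itself. A minimal sketch of that bookkeeping follows; `Conversation` and `fake_send` are hypothetical names, and the transport is injected so the example runs without a network connection. Only the final `content` is stored here; whether `reasoning_content` should be sent back is not covered by this sketch.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Keeps the running history that a stateless chat API needs on every call."""

    def __init__(self, send: Callable[[list[Message]], str]) -> None:
        self._send = send  # any function mapping full history to reply text
        self.messages: list[Message] = []

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # Pass a copy so the transport cannot mutate our history.
        reply = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Stand-in transport for illustration; a real one would call the chat endpoint.
def fake_send(history: list[Message]) -> str:
    return f"received {len(history)} message(s)"


chat = Conversation(fake_send)
print(chat.ask("Hello"))       # received 1 message(s)
print(chat.ask("And again?"))  # received 3 message(s)
```

The second call sends three messages (user, assistant, user), which is exactly the resend-everything behaviour a stateless endpoint requires.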

Pricing snapshot (April 2026)

R1 Distill itself is free to download and run; the only cost is your hardware. If you instead use the V4 API, the rate cards as of April 2026 are below — verify on the DeepSeek API pricing page before committing budget.

| Tier | Cache hit ($/M in) | Cache miss ($/M in) | Output ($/M) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 (promo, list $0.0145) | $0.435 (promo, list $1.74) | $0.87 (promo, list $3.48) |
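A small helper makes the rate card easier to apply to your own traffic. The sketch below uses the V4-Flash row from the table and assumes every call gets a cache hit on the cached portion of its input; `Rates` and `total_cost` are illustrative names, and the sample traffic profile matches the worked example that follows.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Prices in USD per one million tokens."""
    cache_hit: float
    cache_miss: float
    output: float


# V4-Flash row from the table above (April 2026 snapshot).
V4_FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)


def total_cost(rates: Rates, calls: int, cached_in: int,
               uncached_in: int, out: int) -> float:
    """Cost in USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out * rates.output
    ) / 1_000_000
    return calls * per_call


cost = total_cost(V4_FLASH, calls=1_000_000,
                  cached_in=2_000, uncached_in=200, out=300)
print(f"${cost:.2f}")  # $117.60
```

Swapping in a different `Rates` instance, for example the V4-Pro promo or list prices, reuses the same arithmetic.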

Worked example on V4-Flash, 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message and a 300-token reply:

    Cached input   : 2,000,000,000 tok x $0.0028/M = $5.60
    Uncached input :   200,000,000 tok x $0.14/M   = $28.00
    Output         :   300,000,000 tok x $0.28/M   = $84.00
    Total                                            $117.60

Best use cases

- Maths tutoring and proof writing — see DeepSeek for math for prompt patterns that work well with reasoning models.

- Coding assistants offline — the 14B and 32B variants pair well with VS Code or a local agent; our DeepSeek for coding page covers integration patterns.

- Research workflows with private data — running locally avoids sending sensitive prompts off-machine; the DeepSeek for research guide goes deeper.

- Edge deployment — the 1.5B at Q5 fits comfortably on a phone or Raspberry Pi-class device for constrained reasoning tasks.

Comparable alternatives

If R1 Distill is on your shortlist, you should also evaluate DeepSeek R1 vs OpenAI o1 for the API-side reasoning comparison, and the broader DeepSeek alternatives for reasoning roundup, which covers Qwen QwQ, Llama-Nemotron and other open reasoning checkpoints. For self-hosting flexibility specifically, the open-source AI like DeepSeek page is the right starting point. You can browse the rest of the family on the DeepSeek models hub.

Verdict

R1 Distill is the right choice when you need R1-style reasoning on hardware you control and you can live with the 32K output cap and the older base models. The 14B Qwen and 32B Qwen are the two most useful sizes in practice — they beat much larger non-reasoning models on maths and code while fitting on commodity GPUs. For frontier-tier work, send the request to the V4 API instead.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What is the difference between DeepSeek R1 and DeepSeek R1 Distill?

DeepSeek R1 is the full 671B-parameter reasoning model trained with reinforcement learning. R1 Distill is a family of six smaller dense models (1.5B–70B) fine-tuned on 800,000 reasoning samples that R1 generated. The distilled versions are cheaper to run but inherit base architectures from Qwen 2.5 and Llama 3.x. See the full DeepSeek R1 page for the comparison.

Can I use DeepSeek R1 Distill commercially?

In most cases, yes, but the licence depends on which checkpoint you pick. The Qwen-derived 1.5B/7B/14B/32B inherit Apache 2.0; the Llama 8B and 70B inherit Meta’s Llama 3.1 and 3.3 community licences. Read the specific licence on the model’s Hugging Face page before deploying. Our “Is DeepSeek open source?” guide covers the nuance.

How much VRAM do I need to run DeepSeek R1 Distill?

Roughly 1.5 GB at Q4 for the 1.5B, 5 GB for the 7B/8B, 9 GB for the 14B, 20 GB for the 32B and 42 GB for the 70B. A single 24 GB RTX 4090 runs everything up to the 32B at Q4, although the 32B leaves only a few gigabytes of headroom for context. For tighter estimates that account for context length and batch size, use the DeepSeek hardware calculator.

Does DeepSeek R1 Distill support function calling?

The distilled checkpoints were not trained for tool use, so function calling is unreliable compared to the base instruct models or to the V4 API, which supports tool calling natively. If your workflow needs structured tool calls, the managed DeepSeek API function calling surface on V4-Flash or V4-Pro is a better fit than the distilled local models.

Why is my R1 Distill model producing repetitive or incoherent output?

This is a known quirk. DeepSeek recommends a temperature of 0.5–0.7 (0.6 is the recommended value), no system prompt (put all instructions in the user message), and an explicit “reason step by step” directive for maths. The models also have a 32,768-token output ceiling that long traces can hit. Our DeepSeek prompt engineering guide has prompt templates that match these recommendations.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Model card: DeepSeek R1-Distill-Qwen-32B model card. 32B distill weights, vLLM serve command. Last checked: April 30, 2026.

- Model card: DeepSeek R1-Distill-Llama-70B model card. Llama-3.3 base, 70B distilled checkpoint. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

- Technical report: DeepSeek R1 technical report (distillation results). AIME/MATH-500 numbers for the distilled checkpoints. Last checked: April 30, 2026.

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.