Open Source AI Like DeepSeek: The 2026 Shortlist for Builders

If DeepSeek’s open weights pulled you into self-hosting and now you want to know what else is out there, this guide is the answer. The shortlist of credible open source AI like DeepSeek has grown sharply across 2025 and 2026: Alibaba’s Qwen line, Meta’s Llama 4 family, Zhipu’s GLM, Google’s Gemma, and Mistral all ship downloadable weights with very different licences, hardware footprints and benchmark profiles. I have run DeepSeek V4-Pro and V4-Flash in production since release day, and tested most of the alternatives below at length. What follows is a practical comparison — licences, parameter counts, where each model wins, and how the maths works against DeepSeek’s API rates — so you can pick the right model for your workload without wading through marketing copy.

What “open source AI like DeepSeek” actually means

DeepSeek set a high bar for what “open” looks like in 2026. Alibaba’s Qwen3, launched in April 2025 as the latest generation of its open-sourced large language model family, was an important release, but the run of true open-weight frontier models started to accelerate earlier, when DeepSeek released V3 in late 2024 and R1 in early 2025 under permissive terms.

The current generation, DeepSeek V4 (Preview), was released on April 24, 2026. It ships as two open-weight Mixture-of-Experts (MoE) models under the MIT licence:

- deepseek-v4-pro: 1.6T total parameters, 49B active per token. Frontier tier.

- deepseek-v4-flash: 284B total parameters, 13B active per token. Cost-efficient tier.

Both models carry a 1,000,000-token default context window, with output up to 384,000 tokens. Thinking mode is a request parameter (reasoning_effort="high" or "max" with extra_body={"thinking": {"type": "enabled"}}), not a separate model ID. When enabled, the API returns reasoning_content alongside the final content. For the full spec, see DeepSeek V4.
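As a rough sketch of how that request parameter might be wired up, the helper below assembles keyword arguments intended for the OpenAI SDK's `client.chat.completions.create`. The `reasoning_effort` values and the `thinking` payload are taken from the description above; the helper name `build_chat_kwargs` is hypothetical, and the exact field names should be confirmed against the official DeepSeek documentation before use.

```python
from typing import Any


def build_chat_kwargs(prompt: str, model: str = "deepseek-v4-flash",
                      thinking: bool = False, effort: str = "high") -> dict[str, Any]:
    """Assemble kwargs for client.chat.completions.create (hypothetical helper)."""
    if effort not in ("high", "max"):
        raise ValueError("effort must be 'high' or 'max'")
    kwargs: dict[str, Any] = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    if thinking:
        # Thinking is switched on per request; the model ID stays the same.
        kwargs["reasoning_effort"] = effort
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


kwargs = build_chat_kwargs("Prove that 17 is prime.", thinking=True, effort="max")
print(kwargs["model"], kwargs["reasoning_effort"], kwargs["extra_body"])
```

Passing the result as `client.chat.completions.create(**kwargs)` should then, per the behaviour described above, return `reasoning_content` alongside the final `content`.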

For the rest of this article, I use “open source” loosely — the way most practitioners do — to mean “weights you can download and run”. The Open Source Initiative has argued that Meta’s Llama community licences fail the Open Source Definition, and they have a point. Where the licence matters for your decision, I call it out explicitly.

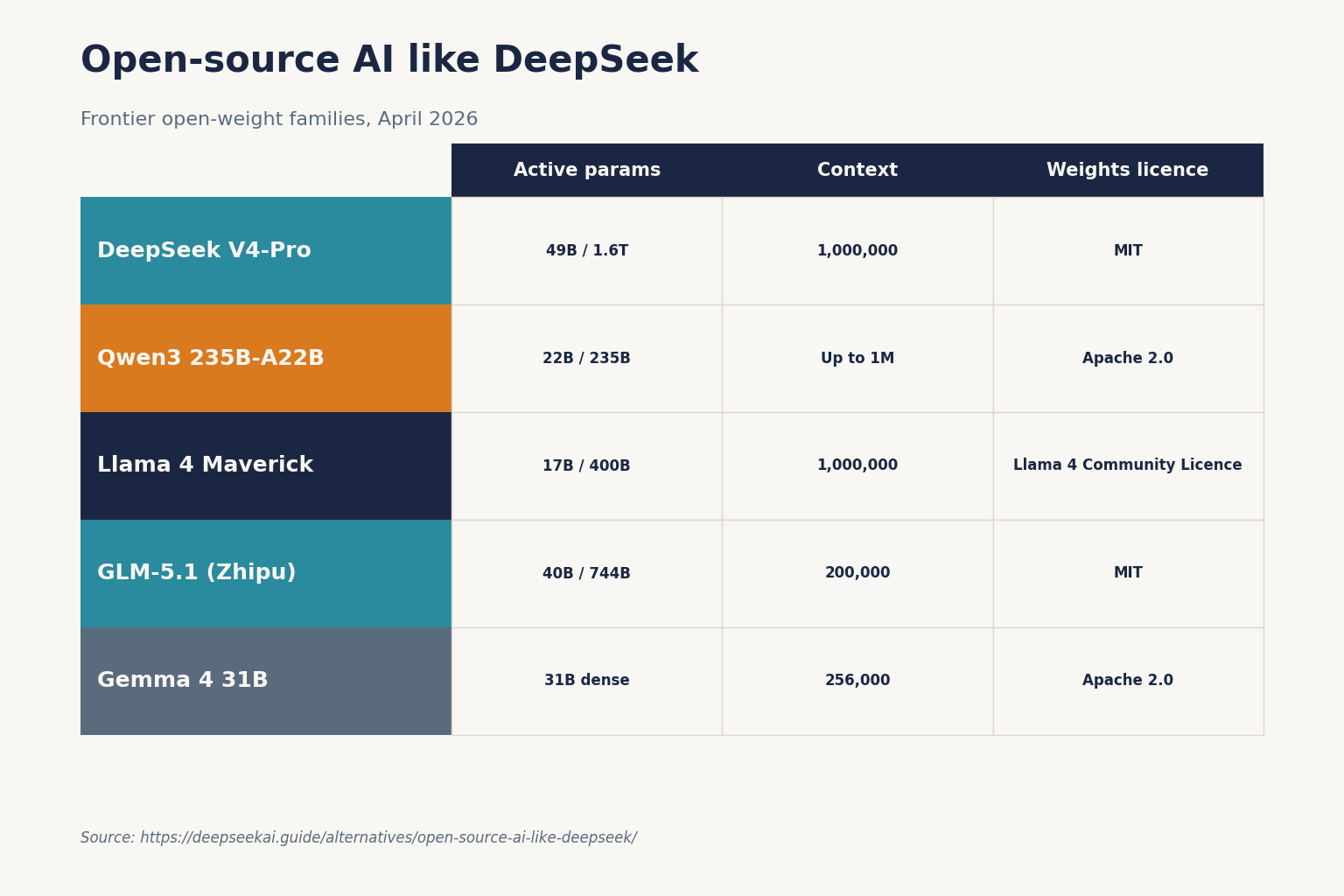

The 2026 shortlist at a glance

The model families below currently compete head-on with DeepSeek for builders who want downloadable weights. Pricing reflects either the official API for the model’s home lab (where one exists) or commodity inference providers; verify on the source page before committing.

| Model family | Largest open variant | Architecture | Context (tokens) | Weights licence | Notable home-API price (per 1M tokens) |
|---|---|---|---|---|---|
| DeepSeek V4-Pro | 1.6T total / 49B active | MoE | 1,000,000 | MIT | Input (cache miss): $0.435 promo / $1.74 list; output: $0.87 promo / $3.48 list; promo through 2026-05-31 |
| DeepSeek V4-Flash | 284B total / 13B active | MoE | 1,000,000 | MIT | Input (cache miss): $0.14; output: $0.28 |
| Qwen3 (235B-A22B) | 235B total / 22B active per forward pass, 128 experts | MoE | Up to 1,000,000 with the 2507 update | Apache 2.0 | Hosted via Alibaba Cloud / Together / Fireworks (varies) |
| Llama 4 Maverick | 400B total / 17B active, 128 experts | MoE | 1,000,000 | Llama 4 Community Licence | Self-host or partner APIs |
| Llama 4 Scout | 109B total / 17B active | MoE | 10,000,000 | Llama 4 Community Licence | Self-host or partner APIs |
| GLM-5.1 (Zhipu) | 744B total / 40B active | MoE | 200,000 | MIT | Free open weights |
| Gemma 4 31B | 31B dense | Dense | 256,000 | Apache 2.0 | Free open weights |

The seven I would actually consider

1. Qwen3 / Qwen3.6 (Alibaba)

Qwen is the closest peer to DeepSeek in licensing, architecture and ambition. The Qwen3 series features six dense models and two MoE models — dense at 0.6B, 1.7B, 4B, 8B, 14B and 32B parameters, plus 30B-A3B and 235B-A22B MoE — all open-sourced and globally available. All Qwen3 models are available under the Apache 2.0 open-source licence, which is materially friendlier than Meta’s Llama licence for commercial work.

The line continued in April 2026 with Qwen3.6: Qwen3.6-27B and Qwen3.6-35B-A3B were released on Hugging Face Hub and ModelScope. One caveat from the Wikipedia summary is worth noting if you care about pure open access: Qwen3.5-Omni and Qwen3.6-Plus were released as proprietary in the same month, with access limited to the chatbot websites and Alibaba Cloud, while Qwen3.6-35B-A3B shipped under Apache 2.0. So Alibaba is splitting its line: the flagship “Plus” tier is proprietary, the mid-tier is open. For a deeper dive see DeepSeek vs Qwen.

2. Llama 4 (Meta)

Meta released Llama 4 Scout and Maverick on April 5, 2025: Scout targets ultra-long context at 10M tokens with 17B activated / 109B total parameters, while Maverick offers 1M tokens with 17B activated / 400B total. Both are natively multimodal and use a Mixture-of-Experts design.

Llama’s strength is the ecosystem — almost every framework, inference engine and quantisation toolchain supports it first. The friction is licensing. Llama weights are downloadable under the Meta Llama Community License, which permits commercial use as long as your product has fewer than 700 million monthly active users and you display a “Built with Llama” attribution. For a small or mid-sized team that 700M MAU clause is theoretical, but every commit-to-production decision still has to factor in Meta’s acceptable-use policy. In April 2026, Meta Superintelligence Labs released Muse Spark as a replacement for Llama, so check Meta’s roadmap before betting long-term on Llama 4. See DeepSeek vs Llama for a head-to-head.

3. GLM-5.1 (Zhipu AI)

GLM is the third Chinese lab to watch alongside DeepSeek and Qwen. GLM-5.1 was released by Zhipu AI in early April 2026 as a 744B MoE with 40B active parameters, a 200K-token context window, and MIT-licensed open weights. The MIT licence makes this one of the most permissive releases of a frontier-scale model to date — no usage restrictions, no registration required. If your priority is licence cleanliness on a frontier-scale model, GLM is in the same tier as DeepSeek V4. See DeepSeek vs GLM.

4. Gemma 4 (Google)

Google’s Gemma family had a genuine licence upgrade this year. In April 2026, Gemma 4 shipped under Apache 2.0 — no usage restrictions, no monthly active user limits, no acceptable-use policies, fully permitting commercial use, modification and redistribution. This was a major change, since every previous Gemma release shipped under a custom Google licence that deterred enterprise adoption.

The Gemma 4 line includes a 31B dense model, a 26B MoE, and smaller E4B and E2B variants, all with 256K context under Apache 2.0. If your hardware budget tops out at a single H100 or two consumer GPUs, Gemma 4 31B is the strongest open dense model in that weight class right now.
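A back-of-envelope way to check whether a model's weights fit a given GPU is to multiply the total parameter count by the bytes per parameter at the chosen precision. The sketch below applies that to parameter counts quoted in this guide. It counts weights only and ignores KV cache, activations and runtime overhead, so the results are lower bounds; the 80 GB figure assumes the 80 GB H100 variant, and the function name is illustrative.

```python
def weight_memory_gb(total_params_billion: float, bits_per_param: int) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes). Weights only."""
    bytes_total = total_params_billion * 1e9 * bits_per_param / 8
    return bytes_total / 1e9


H100_GB = 80  # assumption: the 80 GB H100 variant

# Total (not active) parameters: an MoE model typically has to keep
# every expert resident, even though only a few are used per token.
models = {
    "Gemma 4 31B": 31,
    "DeepSeek V4-Flash": 284,
    "DeepSeek V4-Pro": 1600,
}

for name, params_b in models.items():
    for bits in (16, 8, 4):
        gb = weight_memory_gb(params_b, bits)
        verdict = "fits" if gb <= H100_GB else "does not fit"
        print(f"{name:18s} {bits:2d}-bit: {gb:7.1f} GB ({verdict} in one {H100_GB} GB GPU)")
```

On these assumptions, a 31B dense model at 16-bit needs about 62 GB for weights alone, which is why it sits at the edge of a single-H100 budget, while the large MoE models exceed one GPU even at 4-bit.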

5. Mistral

Mistral’s openly released models include the dense Mistral 7B and the Mixtral 8x7B and 8x22B MoE models, which pioneered the modern MoE wave for open weights. The lab now ships some of its strongest models commercially only, but the Apache 2.0 ones remain a decent fit for cost-sensitive English/European workloads where you want a pure dense or modest MoE rather than a 200B+ model. See DeepSeek vs Mistral.

6. Llama-derivative reasoning models (e.g. R1 distills)

If you specifically want reasoning quality at small parameter counts, the DeepSeek R1 Distill models — distilled into Llama and Qwen backbones — remain a strong option for offline use. They inherit the underlying licence of their backbone.

7. DeepSeek’s own back catalogue

If V4 is overkill for what you are building, DeepSeek’s earlier open weights are still on Hugging Face. DeepSeek V3.2 remains usable, and DeepSeek R1 is the reference open reasoning model. Both carry MIT-licensed weights. The full catalogue lives on the DeepSeek models hub.

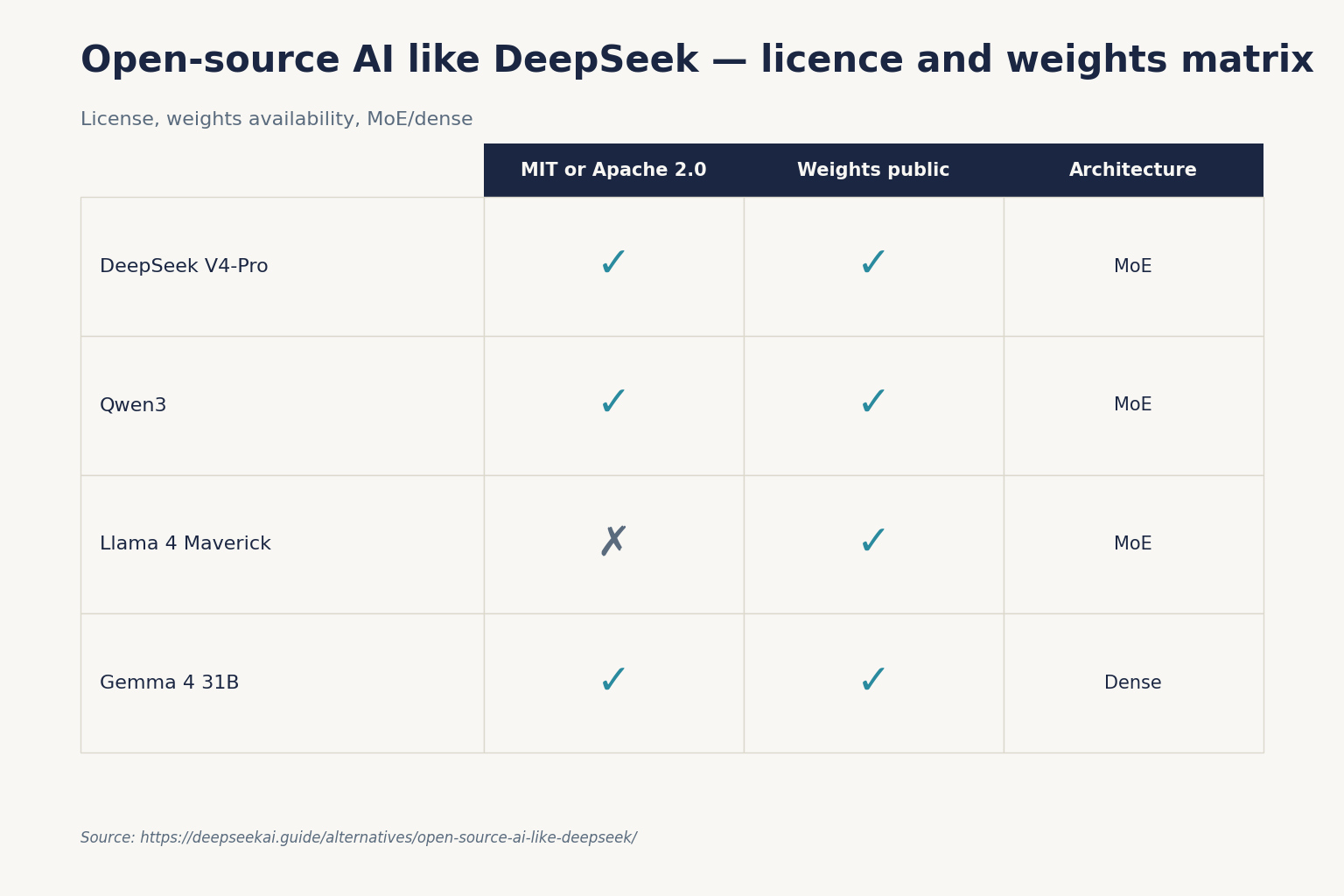

Licences matter more than benchmark scores

Two practical patterns I have seen in the past six months of production work:

- Apache 2.0 / MIT (DeepSeek V4, Qwen3, GLM-5.1, Gemma 4) — drop into any commercial product, modify freely, redistribute. No legal review beyond standard OSS hygiene.

- Llama Community Licence (Llama 3.x, Llama 4) — usable, but every release adds friction. Llama’s licence enforces an acceptable use policy that prohibits some purposes, so it is not open source, and Meta’s use of the term has been disputed by the Open Source Initiative. The licence requires that artefacts using Llama be tagged with the “Llama-” name, the Llama licence, the “Built with Llama” branding if used commercially, and respect use-case restrictions.

If you build customer-facing AI features in a regulated industry, the difference between MIT and the Llama licence will dominate any benchmark difference. For more on this, see our guide on whether DeepSeek is open source.

Cost: when self-hosting beats DeepSeek’s API, and when it doesn’t

The reason most teams stay on the DeepSeek API rather than self-host one of the alternatives above is straightforward: V4-Flash is very cheap per million tokens, and the API is OpenAI-compatible. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com; an Anthropic-compatible surface lives at the same base URL. The API is stateless, so clients must resend the full conversation history on every call (the web/app keeps session state for you; the API does not).

A minimal Python call using the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this PR."}],
    temperature=0.0,
    max_tokens=1024,
)

print(resp.choices[0].message.content)
```

Useful parameters to know up front: temperature (DeepSeek recommends 0.0 for code/maths, 1.0 for data analysis, 1.3 for chat/translation, 1.5 for creative writing), top_p, max_tokens, reasoning_effort, plus features such as JSON mode, tool calling, streaming, context caching, FIM completion (Beta; requires thinking: {"type": "disabled"}) and Chat Prefix Completion (Beta). For details, see the DeepSeek API documentation and DeepSeek OpenAI SDK compatibility.
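Because the API is stateless, a multi-turn client has to keep the transcript itself and send all of it on each call. One way to sketch that is shown below, using a hypothetical `Conversation` class and an injected `send` callable so the example runs without network access. Neither name comes from DeepSeek's SDK or documentation.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Keeps the full message history client-side (hypothetical helper)."""

    def __init__(self, send: Callable[[list[Message]], str], system: str = "") -> None:
        self._send = send
        self.messages: list[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The whole history goes out on every call; the server keeps no state.
        reply = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Stand-in for a real API call: reports how many messages it received.
def fake_send(messages: list[Message]) -> str:
    return f"received {len(messages)} messages"


chat = Conversation(fake_send, system="You are a code reviewer.")
print(chat.ask("Summarise this PR."))
print(chat.ask("Now list the risky changes."))
```

In real use, `send` would wrap `client.chat.completions.create(model=..., messages=messages)` and return the reply text. Note that the payload grows with every turn, which is where context caching starts to matter for cost.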

If you are maintaining an older integration: legacy IDs deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash (non-thinking and thinking respectively) and will be retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change.
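For a codebase with many call sites, the swap can be centralised. The following is a minimal sketch, assuming the routing described above; `LEGACY_MODELS` and `migrate_request` are hypothetical names. Whether the explicit `thinking` flag is needed to preserve `deepseek-reasoner` behaviour is also an assumption, so check the migration notes in the official documentation.

```python
from typing import Any

# Legacy ID -> (current ID, whether the legacy ID implied thinking mode).
LEGACY_MODELS: dict[str, tuple[str, bool]] = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_request(kwargs: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of request kwargs with any legacy model ID replaced."""
    updated = dict(kwargs)
    legacy = LEGACY_MODELS.get(kwargs.get("model", ""))
    if legacy is None:
        return updated  # already a current ID; leave untouched
    new_model, thinking = legacy
    updated["model"] = new_model
    if thinking:
        # Assumption: make thinking explicit so behaviour is preserved.
        extra = dict(updated.get("extra_body") or {})
        extra.setdefault("thinking", {"type": "enabled"})
        updated["extra_body"] = extra
    return updated


old = {"model": "deepseek-reasoner", "messages": [{"role": "user", "content": "hi"}]}
print(migrate_request(old))
```

The function copies its input rather than mutating it, so existing call sites can be migrated one at a time.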

Worked example: 1M calls a day on V4-Flash

Take the canonical workload — a 2,000-token cached system prompt, a 200-token user message, and a 300-token response, repeated one million times. Costed against deepseek-v4-flash:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on deepseek-v4-pro would cost $29.00 + $348.00 + $1,044.00 = $1,421.00 at list prices. V4-Pro is roughly 12× the cost, so it is only worth it for frontier-tier agentic or coding work where the benchmark lift justifies the spend. To self-host an open alternative cheaper than $117.60/day at this volume, you need one or more H100s running near capacity; below ~30% utilisation, the maths almost never beats the API. See the DeepSeek pricing calculator to plug in your own numbers.
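The arithmetic above can be reproduced in a few lines, which makes it easy to substitute your own token counts. This is a sketch using the rates quoted in this section; the V4-Pro cached-input rate is not stated directly and is inferred here from the $29.00 figure (29.00 / 2,000M tokens). The `Rates` and `daily_cost` names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cached_in: float
    uncached_in: float
    out: float


FLASH = Rates(cached_in=0.0028, uncached_in=0.14, out=0.28)
# Cached rate inferred from the worked example, not quoted directly.
PRO = Rates(cached_in=0.0145, uncached_in=1.74, out=3.48)


def daily_cost(rates: Rates, calls: int, cached: int, uncached: int, output: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = (cached * rates.cached_in
                + uncached * rates.uncached_in
                + output * rates.out)
    return round(calls * per_call / 1_000_000, 2)


flash = daily_cost(FLASH, 1_000_000, cached=2_000, uncached=200, output=300)
pro = daily_cost(PRO, 1_000_000, cached=2_000, uncached=200, output=300)
print(f"V4-Flash: ${flash:,.2f}")
print(f"V4-Pro:   ${pro:,.2f} ({pro / flash:.1f}x Flash)")
```

Output tokens dominate both totals, so trimming response length usually moves the bill more than trimming the prompt.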

When to pick which

- Want the cheapest production chat with no licence friction? Stay on DeepSeek V4-Flash via the API.

- Need 10M-token context for whole-codebase or whole-corpus work? Llama 4 Scout, with the licence trade-off accepted.

- Need MIT-clean frontier weights to fine-tune and ship under your own brand? DeepSeek V4-Pro/Flash or GLM-5.1.

- Building on a single GPU? Gemma 4 31B (Apache 2.0) or Qwen3-32B dense.

- Multilingual workload across >100 languages? Qwen3 supports 119 languages and dialects with leading performance in translation and multilingual instruction-following.

- Pure on-device / edge? Gemma 4 E2B or Qwen3 0.6B/1.7B.
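The same decision rules can be expressed as a filter over the shortlist, which is handy if you keep model choices in configuration. This is a sketch only: the figures are copied from the table earlier in this guide, the Qwen3 context value assumes the 2507 update, and the `Model` and `candidates` names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    total_params_b: float  # total parameters, in billions
    context_tokens: int
    licence: str


# Figures copied from the shortlist table in this guide.
SHORTLIST = [
    Model("DeepSeek V4-Pro", 1600, 1_000_000, "MIT"),
    Model("DeepSeek V4-Flash", 284, 1_000_000, "MIT"),
    Model("Qwen3-235B-A22B", 235, 1_000_000, "Apache 2.0"),  # 1M with 2507 update
    Model("Llama 4 Maverick", 400, 1_000_000, "Llama 4 Community Licence"),
    Model("Llama 4 Scout", 109, 10_000_000, "Llama 4 Community Licence"),
    Model("GLM-5.1", 744, 200_000, "MIT"),
    Model("Gemma 4 31B", 31, 256_000, "Apache 2.0"),
]

PERMISSIVE = {"MIT", "Apache 2.0"}


def candidates(min_context: int = 0, max_params_b: float = float("inf"),
               permissive_only: bool = False) -> list[str]:
    """Names of shortlist models that meet the given constraints."""
    return [
        m.name for m in SHORTLIST
        if m.context_tokens >= min_context
        and m.total_params_b <= max_params_b
        and (m.licence in PERMISSIVE or not permissive_only)
    ]


print(candidates(min_context=10_000_000))                 # whole-corpus context
print(candidates(permissive_only=True, max_params_b=40))  # small and licence-clean
print(candidates(permissive_only=True, min_context=1_000_000))
```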

For more, see the DeepSeek alternatives hub or the focused list of DeepSeek alternatives for coding.

The honest verdict

If I had to pick one default for a new project today, it would still be DeepSeek V4-Flash via the API for chat workloads, with V4-Pro reserved for agentic or coding pipelines where output quality dominates cost. Among the open alternatives, Qwen3 / Qwen3.6 is the closest like-for-like, GLM-5.1 is the strongest MIT-licensed challenger, and Gemma 4 31B is the strongest dense model on a small footprint. Llama 4’s 10M context is genuinely useful, but the licence overhead is real — go in with eyes open. For more granular comparisons, browse DeepSeek comparisons.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What is the best open source AI like DeepSeek for commercial use?

For licence-clean commercial use, the strongest options are DeepSeek V4-Pro/Flash (MIT), Qwen3 (Apache 2.0), GLM-5.1 (MIT), and Gemma 4 (Apache 2.0). All four can be modified, redistributed and shipped inside a commercial product without branding requirements or user-count thresholds; the only standing obligation is to keep the copyright and licence notices with the weights. Llama 4 is workable but its community licence adds operational obligations. See our DeepSeek alternatives guide for a deeper breakdown.

How does DeepSeek V4 compare to Llama 4 on context length?

DeepSeek V4-Pro and V4-Flash both default to a 1,000,000-token context with output up to 384,000 tokens. Llama 4 Scout reaches 10M tokens at 17B activated / 109B total, and Maverick offers 1M tokens at 17B activated / 400B total. Scout’s 10M context is the largest in any open model, but the licence is more restrictive. For a full breakdown, see DeepSeek vs Llama.

Is Qwen3 actually open source under Apache 2.0?

The Qwen3 base release is. All Qwen3 MoE and dense models are available under the Apache 2.0 licence. However, Qwen3.5-Omni and Qwen3.6-Plus were released in April 2026 as proprietary, with access limited to chatbot sites and Alibaba Cloud, while smaller variants like Qwen3.6-35B-A3B remain Apache 2.0. Always check the Hugging Face card. More in DeepSeek vs Qwen.

Can I run open source AI like DeepSeek locally on a single GPU?

Not the largest variants. DeepSeek V4-Pro (1.6T) and V4-Flash (284B) need multi-GPU servers. For single-GPU deployment, look at smaller models — Gemma 4 31B, Qwen3-32B dense, Llama 3.3 70B with quantisation, or DeepSeek R1 Distill variants. Our install DeepSeek locally tutorial walks through the trade-offs.

Why is DeepSeek’s API cheaper than self-hosting most open models?

Because DeepSeek runs at scale on optimised infrastructure and prices V4-Flash aggressively — $0.14 per million input (cache miss) and $0.28 per million output as of April 2026. To beat $117.60/day at 1M calls of a 2,500-token average request, you need an H100 running near capacity. Below ~30% GPU utilisation, the API wins on cost. See the DeepSeek API pricing page for current rates.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, licence, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.