DeepSeek Math 7B: Benchmarks, GRPO and How to Use It in 2026

If you have ever tried to get a 7-billion-parameter open model to solve a competition-level integral and watched it confidently produce nonsense, you already understand why DeepSeek Math matters. Released alongside its February 2024 paper, DeepSeek Math is a small, math-specialised language model that posted competition-grade results without external tools — and, more importantly, introduced the reinforcement-learning recipe (GRPO) that later powered DeepSeek R1 and the V4 family.

This guide covers what the model is, the architecture and training data behind it, the exact benchmark numbers from the technical report, how it compares to the current generation, where to get the weights, and when it still makes sense to run in 2026.

What DeepSeek Math is

DeepSeek Math is a 7-billion-parameter open-weight language model purpose-built for mathematical reasoning. It continues pre-training DeepSeek-Coder-Base-v1.5 7B with 120B math-related tokens sourced from Common Crawl, together with natural language and code data, and achieved 51.7% on the competition-level MATH benchmark without relying on external toolkits and voting techniques, approaching the performance level of Gemini-Ultra and GPT-4. The release ships in three flavours: a base model, an instruction-tuned model, and an RL-tuned model. DeepSeek released the DeepSeekMath 7B base, instruct and RL models to the public to support a broader range of research within both academic and commercial communities; commercial usage is permitted under the licence terms.

The model is the direct ancestor of two things you have probably heard of: the GRPO algorithm now used across the open-source RL ecosystem, and DeepSeek’s later reasoning models. If you want to understand how a small model can punch above its weight class on quantitative tasks, this is the canonical case study.

Architecture, lineage and training data

DeepSeek Math is a 7B dense transformer rather than a Mixture-of-Experts model — quite different from the current-generation DeepSeek V4 family, which uses MoE at trillion-scale. The lineage runs Coder → Math, with the math model inheriting the Coder backbone’s habit of structured, programmatic reasoning. DeepSeekMath is initialized with DeepSeek-Coder-v1.5 7B and continues pre-training on math-related tokens sourced from Common Crawl, together with natural language and code data for 500B tokens.

The 120B-token math corpus is the unsung hero. The DeepSeekMath Corpus is a large-scale high-quality pre-training corpus comprising 120B math tokens, extracted from Common Crawl using a fastText-based classifier — the classifier was trained using instances from OpenWebMath as positive examples while incorporating diverse other web pages as negative examples, then iteratively refined with human annotation. The team also went to unusual lengths to keep evaluation honest: they followed prior work to filter out web pages containing questions or answers from English mathematical benchmarks such as GSM8K and MATH and Chinese benchmarks such as CMATH and AGIEval — any text segment containing a 10-gram string that matches exactly with any sub-string from the evaluation benchmarks was removed from the math training corpus.
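The 10-gram decontamination rule described above can be sketched in a few lines. This is a minimal illustration rather than the team's actual pipeline: the whitespace tokenisation, the lowercasing, and the function names `ngrams`, `build_benchmark_index` and `is_contaminated` are all assumptions made for the example.

```python
def ngrams(text: str, n: int = 10) -> set[tuple[str, ...]]:
    """Word-level n-grams of `text` (assumption: lowercase, whitespace split)."""
    words = text.lower().split()
    # range() is empty when the text has fewer than n words.
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def build_benchmark_index(benchmark_texts: list[str], n: int = 10) -> set[tuple[str, ...]]:
    """Collect every n-gram that appears in any benchmark question or answer."""
    index: set[tuple[str, ...]] = set()
    for text in benchmark_texts:
        index |= ngrams(text, n)
    return index


def is_contaminated(segment: str, index: set[tuple[str, ...]], n: int = 10) -> bool:
    """True if the segment shares at least one exact n-gram with the benchmarks."""
    return not ngrams(segment, n).isdisjoint(index)


# Toy data, invented for illustration only.
benchmark = ["a b c d e f g h i j k"]
index = build_benchmark_index(benchmark)
print(is_contaminated("x a b c d e f g h i j y", index))  # shares a 10-gram
print(is_contaminated("a b c d e f g h i", index))        # only 9 words
```

A production filter would also need a rule for benchmark items shorter than ten words, which this sketch silently ignores.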

Key facts at a glance

| Attribute | Value |
|---|---|
| Parameters | 7B (dense) |
| Base model | DeepSeek-Coder-Base-v1.5 7B |
| Math pre-training tokens | 120B (math) within a 500B-token continued pre-training run |
| Variants | Base, Instruct, RL |
| Paper | arXiv:2402.03300 (February 2024) |
| Licence | Code MIT; weights under DeepSeek Model Licence (commercial use permitted) |
| Context length | 4K (small by 2026 standards) |

Benchmarks from the technical report

The headline numbers come from the DeepSeek Math technical report on arXiv. Two are worth memorising. Using only a subset of English instruction tuning data, GRPO obtained substantial improvement over DeepSeekMath-Instruct, including in-domain GSM8K rising from 82.9% to 88.2%, MATH rising from 46.8% to 51.7%, and out-of-domain CMATH rising from 84.6% to 88.8% during the reinforcement learning phase. Self-consistency over 64 samples from DeepSeekMath 7B achieves 60.9% on MATH.

| Benchmark | DeepSeekMath-Instruct 7B (CoT) | DeepSeekMath-RL 7B (CoT) | Notes |
|---|---|---|---|
| GSM8K | 82.9% | 88.2% | Grade-school word problems |
| MATH | 46.8% | 51.7% | Competition-level; no tools, no voting |
| MATH (self-consistency, 64 samples) | — | 60.9% | Majority vote over 64 generations |
| CMATH | 84.6% | 88.8% | Chinese math; out-of-domain for the RL run |
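The self-consistency row is a majority vote: sample many solutions, extract each final answer, and report the most frequent one. A minimal sketch, assuming the answers have already been extracted as strings (with `None` for generations that produced no answer); the function name `majority_vote` is illustrative.

```python
from collections import Counter
from typing import Optional


def majority_vote(answers: list[Optional[str]]) -> Optional[str]:
    """Return the most frequent non-None answer.

    Ties go to the answer that appeared first, because Counter.most_common
    keeps first-encountered order among equal counts.
    """
    valid = [a for a in answers if a is not None]
    if not valid:
        return None
    return Counter(valid).most_common(1)[0][0]


# Six made-up sampled answers for one problem.
print(majority_vote(["40", "41", "40", None, "39", "40"]))
```

The sketch compares answers as raw strings. A real evaluator would normalise them first so that equivalent forms such as `0.5` and `1/2` count as the same vote.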

Two important caveats. First, these are 2024 numbers against 2024 baselines — Gemini-Ultra and GPT-4 as they existed at submission time, not the current Gemini 3 or GPT-5 families. Second, the RL run was deliberately narrow. For DeepSeekMath-RL 7B, GSM8K and MATH with reasoning steps can be regarded as in-domain tasks; despite the constrained scope of its training data, it outperforms DeepSeekMath-Instruct 7B across all evaluation metrics, showcasing the effectiveness of reinforcement learning.

GRPO: the algorithm that came out of this paper

If you have read anything about DeepSeek R1 or open-source reasoning training in the last year, you have read about GRPO, and it was introduced here. Group Relative Policy Optimization (GRPO) is a variant of Proximal Policy Optimization (PPO) that drops the critic (value) model. Instead, it samples a group of responses for each question, scores them, and uses the group's scores as the baseline against which each response is judged. Because no separate value model has to be trained or held in memory, training needs significantly fewer resources.

For practitioners, the result is that you can do RL fine-tuning on math (or any verifiable task) on a single multi-GPU box rather than needing the memory budget for a parallel critic network. The Hugging Face TRL library ships a GRPO trainer, vLLM is commonly used to generate the rollouts, and many open RL stacks now support GRPO directly. The algorithm has become the default starting point for reasoning post-training.
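The group-relative baseline described above can be illustrated with the outcome-reward case, where each sampled response gets one scalar reward and its advantage is that reward normalised by the group's mean and standard deviation. This is a sketch under stated assumptions: the population standard deviation and the small `eps` term (to avoid dividing by zero when every reward in the group is identical) are choices made here, not details confirmed by the paper.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalise each reward against its own group: (r - mean) / (std + eps).

    `rewards` holds one scalar per sampled response to the same question.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; eps guards sigma == 0
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one question, scored 1.0 if correct, else 0.0.
print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
# A group where every answer is correct carries no learning signal.
print(group_relative_advantages([1.0, 1.0, 1.0]))
```

Correct responses in a mixed group receive positive advantages and incorrect ones negative, with no value network involved. That is the source of the memory savings.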

Strengths — where DeepSeek Math still wins

- Tiny footprint for the quality. At 7B parameters the weights take roughly 14 GB in fp16/bf16, so the model fits on a single 24 GB consumer GPU at half precision and on much smaller cards once quantised to 4-bit. You will not get this kind of MATH performance per VRAM byte from a general-purpose 7B.

- Genuinely open weights. Commercial use is permitted under the released licence, which makes the model usable in product workflows, subject to the use restrictions in the DeepSeek Model Licence.

- Reproducible research baseline. Because the data pipeline and GRPO recipe are documented, this model is the cleanest starting point for anyone teaching themselves RL post-training.

- Strong Chinese math. The corpus is multilingual; CMATH performance reflects that.

Weaknesses — be honest about what you are getting

- It is a 2024 model. A 7B specialist from early 2024 is no longer state of the art on competition mathematics. While DeepSeekMath achieves impressive results on quantitative reasoning benchmarks, the authors acknowledge limitations in geometry and theorem proving.

- Short context. 4K tokens is not enough for long multi-part problems with extensive scratch work. The current V4 tiers ship with a 1,000,000-token default context window.

- Narrow specialty. Outside maths, code, and structured reasoning, it is weaker than a general 7B chat model. Do not use it as a general assistant.

- RL gains were about reliability, not raw capability. The paper reports that RL improves Maj@K but not Pass@K, and attributes the improvement to boosting the correct response from the TopK candidates rather than enhancing fundamental capabilities. In other words, GRPO made the model more reliable, not strictly more capable.
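The Maj@K versus Pass@K distinction in the last point is easier to see in code. The sketch below uses the simple definitions (Pass@K: any of the K samples is correct; Maj@K: the most frequent answer is correct) and made-up samples. Neither the data nor the function names come from the paper.

```python
from collections import Counter


def pass_at_k(samples: list[str], truth: str) -> bool:
    """Pass@K: at least one of the K sampled answers is correct."""
    return truth in samples


def maj_at_k(samples: list[str], truth: str) -> bool:
    """Maj@K: the most frequent of the K sampled answers is correct.

    Assumes `samples` is non-empty.
    """
    most_common_answer, _count = Counter(samples).most_common(1)[0]
    return most_common_answer == truth


# Invented samples for one problem whose true answer is "40".
before_rl = ["41", "40", "41", "39"]  # correct answer present but outvoted
after_rl = ["40", "40", "41", "40"]   # correct answer now dominates

for name, samples in [("before", before_rl), ("after", after_rl)]:
    print(name, pass_at_k(samples, "40"), maj_at_k(samples, "40"))
```

In this toy case Pass@K is true both times, because the model could already reach the answer, while Maj@K flips from false to true. That is the pattern the bullet describes: the correct answer was within reach all along, and RL made it the likely one.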

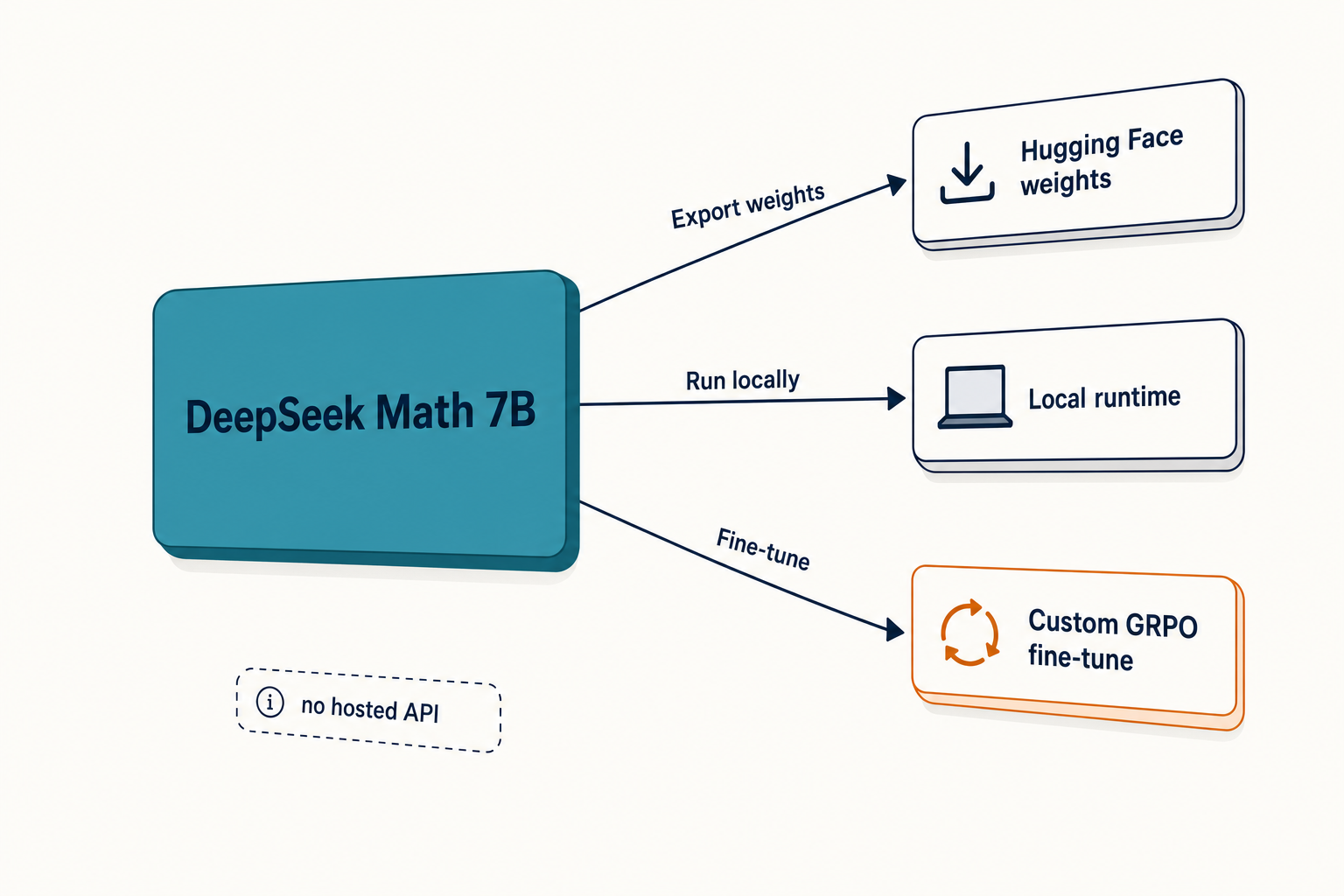

How to access DeepSeek Math

There is no first-party hosted API for DeepSeek Math the way there is for the V4 family. You have three practical paths:

- Hugging Face weights. You can directly use Hugging Face’s Transformers for model inference. The repos are deepseek-ai/deepseek-math-7b-base, -instruct, and -rl. Pair this with our install DeepSeek locally walkthrough for a clean setup.

- Ollama or LM Studio. Quantised GGUFs of all three variants are widely mirrored. See running DeepSeek on Ollama for the exact ollama pull commands.

- Third-party API providers. Together AI, Fireworks and Nebius have hosted the RL variant intermittently. Always check current availability before committing.

If you simply want strong maths from DeepSeek today and do not specifically need the math model, the V4 family is the practical answer. DeepSeek Math is documented in the arXiv paper at 2402.03300, but for production maths workloads in 2026 most teams should compare it against V4-Flash before defaulting to it.

Minimal Python inference example

A minimal Hugging Face Transformers snippet to run the RL variant locally:

```python
from transformers import AutoTokenizer, AutoModelForCausalLM
import torch

model_id = "deepseek-ai/deepseek-math-7b-rl"

# Load the tokenizer and the bf16 weights (about 14 GB), placed automatically.
tok = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id, torch_dtype=torch.bfloat16, device_map="auto"
)

prompt = (
    "Problem: Find the smallest positive integer n such that "
    "n^2 + n + 41 is composite.\n"
    "Please reason step by step, and put your final answer within \\boxed{}."
)

inputs = tok(prompt, return_tensors="pt").to(model.device)

# Greedy decoding is deterministic: the Transformers equivalent of temperature 0.
out = model.generate(**inputs, max_new_tokens=512, do_sample=False)

print(tok.decode(out[0], skip_special_tokens=True))
```

Use greedy decoding (do_sample=False) for deterministic maths. It is the Transformers equivalent of the temperature=0.0 that DeepSeek recommends for code and mathematics tasks; passing temperature=0.0 to generate() is not a reliable substitute, because sampling in Transformers requires a strictly positive temperature. Use the boxed-answer prompt template: DeepSeek Math was trained to emit final answers inside \boxed{}, and downstream evaluators expect it.
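Because evaluators read the final answer out of the boxed wrapper, a small extraction helper is usually the next thing you need. The sketch below is one possible approach, not DeepSeek's evaluation code: it takes the last `\boxed{...}` in the output and counts braces so that nested LaTeX such as `\frac{1}{2}` survives. The function name `extract_boxed` is illustrative.

```python
from typing import Optional


def extract_boxed(text: str) -> Optional[str]:
    r"""Return the contents of the last \boxed{...} in `text`, or None."""
    marker = "\\boxed{"
    start = text.rfind(marker)
    if start == -1:
        return None
    depth = 1  # we are already inside the opening brace of \boxed{
    chars = []
    for ch in text[start + len(marker):]:
        if ch == "{":
            depth += 1
        elif ch == "}":
            depth -= 1
            if depth == 0:
                return "".join(chars)
        chars.append(ch)
    return None  # braces never balanced, e.g. output was truncated


print(extract_boxed("Therefore the answer is \\boxed{40}."))
print(extract_boxed("So x = \\boxed{\\frac{1}{2}} as required."))
```

Returning `None` for a missing or truncated box lets the caller count that generation as unanswered rather than guessing.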

Pricing snapshot

DeepSeek Math itself has no first-party API price — it is a weights release. If you want to use DeepSeek’s hosted endpoint for maths reasoning today, you are paying V4 rates. As of April 2026, V4-Flash lists $0.0028 (cache hit) / $0.14 (cache miss) input and $0.28 output per 1M tokens, while V4-Pro lists $0.0145 / $1.74 / $3.48 (currently 75% off through 2026-05-31, making the effective rates $0.003625 / $0.435 / $0.87). Verify both on the DeepSeek API pricing page before committing.
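To turn the listed rates into a budget figure, the arithmetic can be sketched as below. The rates are copied from the paragraph above and will go stale; the dictionary layout and the function name `estimate_cost_usd` are assumptions for illustration, so verify the numbers on the pricing page before relying on them.

```python
# USD per 1M tokens, as quoted above (April 2026 list prices).
RATES = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"cache_hit": 0.0145, "cache_miss": 1.74, "output": 3.48},
}


def estimate_cost_usd(
    model: str,
    cached_input_tokens: int,
    uncached_input_tokens: int,
    output_tokens: int,
    discount: float = 0.0,
) -> float:
    """Estimate the cost of a workload; `discount` is a fraction, e.g. 0.75."""
    rate = RATES[model]
    list_price = (
        cached_input_tokens * rate["cache_hit"]
        + uncached_input_tokens * rate["cache_miss"]
        + output_tokens * rate["output"]
    ) / 1_000_000
    return list_price * (1.0 - discount)


# 1M uncached input tokens plus 1M output tokens on each tier.
print(f"{estimate_cost_usd('deepseek-v4-flash', 0, 1_000_000, 1_000_000):.4f}")
print(f"{estimate_cost_usd('deepseek-v4-pro', 0, 1_000_000, 1_000_000, discount=0.75):.4f}")
```

Reasoning-heavy maths prompts tend to be dominated by output tokens, so the output rate usually matters more than the input rate.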

Self-hosting DeepSeek Math is genuinely cheap: a 7B model in bf16 occupies roughly 14 GB of VRAM, and a single A10/L4 instance is enough for batched inference. For sizing, our DeepSeek hardware calculator covers the maths.
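The 14 GB figure is simply parameter count times bytes per parameter. A rough sketch of that sizing arithmetic follows, taking 7B as a round parameter count and covering weights only. The KV cache, activations and framework overhead come on top, and the helper name `weight_memory_gb` is illustrative.

```python
# Approximate storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


for dtype in ("bf16", "int8", "int4"):
    print(dtype, weight_memory_gb(7e9, dtype), "GB")
```

Real quantised files are somewhat larger than the int4 estimate, since quantisation formats store scales and keep some layers at higher precision.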

Best use cases

This is a niche model. The honest fits are:

- Education and tutoring backends. Step-by-step solutions for grade-school through competition problems. See DeepSeek for math and DeepSeek for students for workflow patterns.

- Research baselines. If you are publishing on math reasoning or RL post-training, this is one of the cleanest open baselines available.

- GRPO experimentation. The clearest entry point for hands-on RL fine-tuning experiments — the paper’s recipe is fully reproducible.

- STEM content generation. Worked solutions, problem variations, and answer-key generation in workflows where a small specialist beats a large generalist.

Comparable alternatives

DeepSeek Math is not the only option, and in 2026 it is rarely the best one for production. Compare against:

- DeepSeek V4-Flash with thinking enabled for current-generation hosted maths. See the full DeepSeek V4-Flash breakdown.

- DeepSeek R1 if you specifically want a reasoning model trained with the GRPO descendants. The DeepSeek R1 page covers the differences.

- OpenAI’s reasoning models if you need the absolute maximum on competition mathematics and the spend is justified — see DeepSeek vs OpenAI o1 for a direct comparison.

- Other open specialists such as Qwen-Math, Mathstral, and Llemma — covered in our open-source AI like DeepSeek roundup.

For the broader picture across DeepSeek’s lineup, the DeepSeek models hub indexes every model in one place.

One note on the API surface, since people ask

If you mix DeepSeek Math (self-hosted weights) with DeepSeek’s hosted API in the same stack, remember the hosted side is a different beast. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com, and the API is stateless — you must resend the conversation history with each request, unlike the web app which keeps session state for you. The current generation is DeepSeek V4, shipped as two model IDs: deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active), both open-weight MoE under the MIT licence. Thinking mode is a request parameter — not a separate model — accepted as reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for max-effort thinking; the response then returns reasoning_content alongside the final content.

Legacy IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash, but they retire on 2026-07-24 at 15:59 UTC; migration is a one-line model= swap. Both V4 tiers run a 1,000,000-token default context window with output up to 384,000 tokens, so anything you used to chunk against DeepSeek Math’s 4K window can now go in one shot.
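The stateless behaviour is the part that most often surprises people, so here is a minimal sketch of how the request body grows from turn to turn. It only builds the JSON payload and makes no network call. The field names for thinking mode are taken from the description above and have not been checked against the live API, and the helper names `build_chat_request` and `record_reply` are hypothetical.

```python
import json

API_URL = "https://api.deepseek.com/chat/completions"  # POST target described above


def build_chat_request(history, user_message, model="deepseek-v4-flash", thinking=False):
    """Build a request body that carries the full conversation so far."""
    payload = {
        "model": model,
        "messages": history + [{"role": "user", "content": user_message}],
    }
    if thinking:
        # Assumption: these fields mirror reasoning_effort / extra_body as described.
        payload["reasoning_effort"] = "high"
        payload["thinking"] = {"type": "enabled"}
    return payload


def record_reply(payload, assistant_text):
    """Return the history to send next time: prior messages plus the reply."""
    return payload["messages"] + [{"role": "assistant", "content": assistant_text}]


history = []
first = build_chat_request(history, "What is 12 * 12?")
history = record_reply(first, "144")  # stand-in for the API's answer
second = build_chat_request(history, "Now take the square root.", thinking=True)
print(json.dumps(second, indent=2))
```

The second payload contains all three messages. If you send only the newest one, the model has no way to know what "the square root" refers to.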

Verdict

DeepSeek Math is historically important and still useful as a small specialist, but it is not the model you reach for on a fresh 2026 production decision unless self-hosted weights and a tiny VRAM budget are non-negotiable. Read it as the paper that gave the field GRPO, run it locally if you are teaching yourself RL post-training, and use V4-Flash for everything else.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What is DeepSeek Math used for?

DeepSeek Math is a 7B specialist model for mathematical reasoning — grade-school word problems, competition mathematics, step-by-step worked solutions, and basic theorem-proving experiments. It is also widely used as a research baseline for reinforcement-learning post-training, since the GRPO algorithm was introduced alongside it. For applied workflows, see our roundup of DeepSeek for math.

How does DeepSeek Math compare to DeepSeek V4?

They are very different beasts. DeepSeek Math is a 4K-context dense 7B from early 2024; DeepSeek V4 is a 2026 MoE family with 1M-token context and a thinking mode. V4 is far stronger on most maths tasks, but DeepSeek Math is dramatically smaller, fully self-hostable on a single GPU, and useful as a clean GRPO baseline. Pick V4 for production, Math for research.

Is DeepSeek Math free to use commercially?

Yes, with caveats. The use of the model is subject to the terms outlined in its licence section, and commercial usage is permitted under those terms. The code is MIT-licensed; the weights are under the DeepSeek Model Licence rather than MIT. Read the full licence on the GitHub repo before shipping a product. For a broader discussion, see is DeepSeek open source.

What benchmark scores did DeepSeek Math achieve?

The headline numbers from the technical report: 88.2% on GSM8K and 51.7% on MATH for the RL variant with step-by-step reasoning, plus 60.9% on MATH with self-consistency over 64 samples. Out-of-domain CMATH reaches 88.8%. These are 2024 numbers against 2024 baselines — useful for context, not for current-generation comparisons. Our DeepSeek benchmarks 2026 page tracks the latest figures.

Can I fine-tune DeepSeek Math with GRPO myself?

Yes. The Hugging Face TRL library has a first-class GRPO trainer, vLLM supports the rollout pattern, and the original paper documents hyperparameters (learning rate 1e-6, KL coefficient 0.04, 64 samples per question) clearly enough to reproduce. Start with the Instruct variant as your base. For a Python-side setup, see fine-tuning DeepSeek.
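The piece you have to write yourself for a GRPO run is the reward function. The sketch below follows the callable convention TRL documents for its GRPO trainer (a list of completions in, a list of floats out, with extra dataset columns arriving as keyword arguments). It assumes plain-string completions and a dataset column named `ground_truth`, which is a hypothetical name; check the TRL documentation for your installed version before wiring it in.

```python
import re

# Simple pattern: captures up to the first closing brace, so nested
# LaTeX such as \frac{1}{2} inside the box is NOT handled here.
BOXED = re.compile(r"\\boxed\{(.*?)\}")


def boxed_accuracy_reward(completions, ground_truth, **kwargs):
    """Score 1.0 when the boxed answer equals the reference string, else 0.0."""
    rewards = []
    for completion, truth in zip(completions, ground_truth):
        match = BOXED.search(completion)
        answer = match.group(1).strip() if match else None
        rewards.append(1.0 if answer == truth else 0.0)
    return rewards


print(boxed_accuracy_reward(
    ["The answer is \\boxed{40}.", "I am not sure.", "So \\boxed{41}."],
    ground_truth=["40", "40", "40"],
))
```

The paper's settings should correspond to `learning_rate`, `beta` (the KL coefficient) and `num_generations` in TRL's GRPO configuration, though 64 generations per question needs far more memory than most single-node setups can spare, so smaller groups are a common compromise.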

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

- Model card: DeepSeek Math 7B (RL) model card. Open-weight RL variant for local inference. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Technical report: DeepSeekMath technical report on arXiv (2402.03300). GRPO algorithm details and GSM8K/MATH/CMATH numbers. Last checked: April 30, 2026.

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.