Is DeepSeek Open Source? A Clear Look at Licensing in 2026

Short answer, up front: yes, DeepSeek’s open-source releases are real, and the current generation, DeepSeek V4, ships both weights and code under the MIT License. But “open source” is used loosely in AI, and older DeepSeek models (V3 base, Coder-V2, VL2) pair a permissive code license with a more restrictive weights license. If you plan to fine-tune, self-host or ship a commercial product on top of DeepSeek, those differences matter.

This guide walks through exactly what’s open in April 2026, what each license allows, where to download the weights, and what trade-offs still apply compared with closed APIs from OpenAI, Anthropic and Google.

Is DeepSeek open source? The short answer

Yes — with caveats worth knowing. DeepSeek publishes its models as open-weight releases on Hugging Face, and every recent flagship (V4-Pro, V4-Flash, V3.2, V3.1, R1) carries the MIT License on both code and weights. DeepSeek the company runs a paid API and a free chat app, but the underlying models are downloadable, forkable and commercially usable.

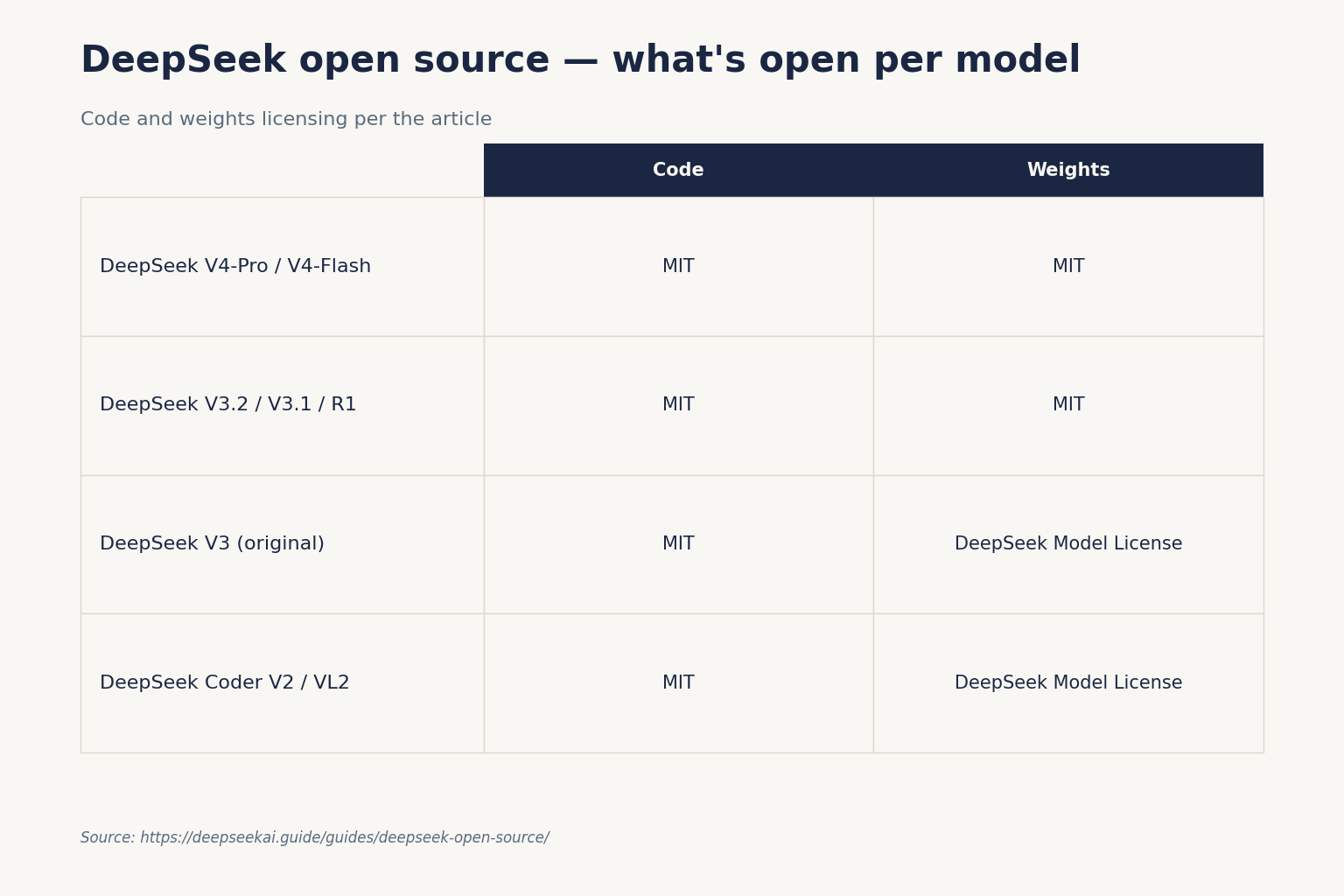

That makes DeepSeek more permissive than Meta’s Llama family (which restricts certain commercial uses) and radically more open than the GPT-5, Claude 4 or Gemini 3 families, whose weights are not published at all. It does not automatically make every DeepSeek artefact MIT-licensed — some older model weights sit under a separate custom license. The table below shows the split.

DeepSeek licensing, per model

There are two licenses in play across the DeepSeek catalogue:

- MIT License: a short, permissive license that allows commercial use, modification, redistribution and sublicensing, as long as the copyright and permission notice is preserved in copies.

- DeepSeek Model License: a custom license modified from OpenRAIL, with use-based restrictions (no illegal or hazardous use) but otherwise broad freedoms including commercial deployment, fine-tuning, distillation and derivative works.

Here is where each major model lands as of April 2026:

| Model | Release | Code license | Weights license |
|---|---|---|---|
| DeepSeek-V4-Pro | 2026-04-24 | MIT | MIT |
| DeepSeek-V4-Flash | 2026-04-24 | MIT | MIT |
| DeepSeek-V3.2 | 2025-12 | MIT | MIT |
| DeepSeek-V3.1 | 2025-08 | MIT | MIT |
| DeepSeek-R1 | 2025-01 | MIT | MIT |
| DeepSeek-V3 (original base/chat) | 2024-12 | MIT | DeepSeek Model License |
| DeepSeek-Coder-V2 | 2024 | MIT | DeepSeek Model License |
| DeepSeek-VL2 | 2024 | MIT | DeepSeek Model License |

For V4, the Hugging Face cards for DeepSeek-V4-Pro and DeepSeek-V4-Flash state plainly that the repository and model weights are licensed under the MIT License. The same is true for R1: DeepSeek’s own launch notes confirmed code and models released under the MIT License, with freedom to distill and commercialize, and the R1 repo adds that the R1 series supports commercial use and allows modifications and derivative works, including distillation for training other LLMs.
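If you would rather check a repository's declared license yourself than trust a table, the Hub exposes it as a tag on each model. The sketch below assumes the `huggingface_hub` package is installed and that the license appears as a `license:<id>` tag; the helper names are hypothetical and chosen only for illustration.

```python
from typing import Iterable, Optional


def license_from_tags(tags: Iterable[str]) -> Optional[str]:
    """Return the license id from Hub-style tags such as 'license:mit'."""
    for tag in tags:
        if tag.startswith("license:"):
            return tag.split(":", 1)[1]
    return None


def fetch_license(repo_id: str) -> Optional[str]:
    """Look up a repo's declared license on the Hugging Face Hub (needs network)."""
    # Imported lazily so the pure helper above works without the package.
    from huggingface_hub import HfApi

    info = HfApi().model_info(repo_id)
    return license_from_tags(info.tags or [])
```

Calling `fetch_license("deepseek-ai/DeepSeek-V4-Pro")` should return `mit` if the card matches the table above. Any other value is a cue to read the license file in the repository before shipping.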

What MIT actually grants you

For V4 and R1, MIT means you can:

- Download the weights and run them on your own hardware.

- Fine-tune them on proprietary data and keep the result private.

- Serve the model to paying customers without a royalty or revenue share.

- Distill outputs into smaller models — explicitly permitted.

- Redistribute modified versions under any compatible license, including proprietary ones.

The only hard requirement is keeping the MIT copyright and permission notice in every copy or substantial portion you distribute. If you are auditing licensing alongside DeepSeek privacy and data handling for a contract, MIT is about as clean a license as you will find on a frontier-class model.

What the DeepSeek Model License adds

For older weights (V3 base, Coder-V2, VL2), the DeepSeek Model License is a custom OpenRAIL-style license. It still permits commercial deployment and derivative work, but layers in use-based restrictions — for instance, no use for illegal activity, weapons development, surveillance of protected groups, and similar categories. According to DeepSeek’s own license FAQ, current DeepSeek open-source models can be utilized for any lawful purpose, including direct deployment, derivative development, developing proprietary products, or integrating into a model platform for distribution. Downstream authors must pass on the same use-based restrictions when redistributing.

Where to download DeepSeek weights

DeepSeek publishes official weights on two platforms:

- Hugging Face: the primary distribution channel for deepseek-ai/DeepSeek-V4-Pro, DeepSeek-V4-Flash, and the entire back-catalogue.

- ModelScope: the Chinese-hosted equivalent, useful if Hugging Face is slow from your region. According to Phemex’s reporting, V4 is available under the MIT license on platforms like Hugging Face and ModelScope.

Community re-hosts appear quickly on Ollama and in quantized GGUF builds. If you are looking for something you can actually run on a workstation rather than a multi-GPU server, start with the R1 distilled models (1.5B to 70B) or the Coder family. Full V4-Pro is a 1.6T-parameter MoE and V4-Flash is 284B — both are data-centre workloads, not laptop workloads.
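Before committing to a download of this size, it can help to pull only the small text files (configs and the model card) and inspect them first. This is a minimal sketch, assuming the `huggingface_hub` package; the function names and the file patterns are illustrative choices, not an official workflow.

```python
def official_repo(model_name: str) -> str:
    """Build the Hub repo id under the deepseek-ai organisation."""
    return f"deepseek-ai/{model_name}"


def download_metadata(model_name: str, local_dir: str) -> str:
    """Fetch configs, the model card and license text, skipping weight shards."""
    # Imported lazily; install with `pip install huggingface_hub`.
    from huggingface_hub import snapshot_download

    return snapshot_download(
        repo_id=official_repo(model_name),
        local_dir=local_dir,
        # Assumption: these patterns match the small text files in the repo.
        allow_patterns=["*.json", "*.md", "LICENSE*"],
    )
```

Dropping the `allow_patterns` argument downloads the full repository, so only do that on a machine with the disk space and bandwidth for the weights.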

How V4 compares on openness

V4’s release notes position it as a frontier-tier open-weight drop. From the Hugging Face card: V4-Pro has 1.6T parameters (49B activated) and V4-Flash has 284B parameters (13B activated), both supporting a context length of one million tokens. On efficiency, in the 1M-token setting V4-Pro requires only 27% of single-token inference FLOPs and 10% of the KV cache compared with DeepSeek-V3.2.
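To put the total and activated parameter counts in perspective, the share of weights used per token is simple arithmetic. The snippet below only restates the figures quoted from the model card; the function name is illustrative.

```python
def active_fraction(total_billion: float, active_billion: float) -> float:
    """Share of a mixture-of-experts model's parameters activated per token."""
    return active_billion / total_billion


# (total, activated) parameter counts in billions, as quoted above.
models = {"V4-Pro": (1600.0, 49.0), "V4-Flash": (284.0, 13.0)}

for name, (total, active) in models.items():
    print(f"{name}: {active_fraction(total, active):.1%} of parameters active per token")
# V4-Pro: 3.1% of parameters active per token
# V4-Flash: 4.6% of parameters active per token
```

The full parameter set still has to sit in memory, which is why a low active fraction reduces compute per token but does not make these models laptop-sized.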

Independent coverage framed this as a deliberately open posture. According to The Next Web, V4-Pro claims top performance on coding and maths among open models and trails only Gemini 3.1-Pro for world knowledge, with DeepSeek estimating a 3-to-6-month gap to closed frontier systems. Investing.com noted that on MMLU-Pro, V4-Pro matches OpenAI’s GPT-5.4 while slightly trailing Gemini-3.1-Pro and Claude Opus 4.6. Numbers are DeepSeek-reported pending independent evaluation — treat them as a starting point, not a final verdict.

How it stacks up against other “open” releases

| Model family | Weights published | License posture | Commercial use |
|---|---|---|---|
| DeepSeek V4 / R1 | Yes | MIT (code + weights) | Unrestricted |
| Meta Llama 4 | Yes | Custom community license | Restricted above user thresholds; can’t be used to improve competing LLMs |
| Mistral open models | Select tiers | Apache 2.0 on open tiers; commercial tiers closed | Depends on tier |
| Qwen 3 | Yes | Apache 2.0 on most sizes | Broad |
| GPT-5 family | No | Proprietary, API-only | Via API terms only |
| Claude 4 family | No | Proprietary, API-only | Via API terms only |
| Gemini 3 family | No (except Gemma tier) | Proprietary; Gemma separate | Via API terms only |

For a deeper head-to-head, see DeepSeek vs Llama on licensing specifics. The practical read: among serious frontier models, DeepSeek and Qwen are the two where you can legitimately take weights, fine-tune, and ship a commercial product without a signed agreement.

Is DeepSeek “fully” open source? The honest answer

By the Open Source Initiative’s Open Source AI Definition (OSAID), a fully open model also provides detailed information about its training data, the complete training code and enough documentation for a skilled person to rebuild a substantially equivalent system. DeepSeek publishes technical reports, weights, inference code and MIT licensing, but it does not publish:

- The pre-training dataset in a reproducible form.

- The full training pipeline source.

- Exact data-mixing recipes.

That puts DeepSeek in the same “open-weight, not fully open” bucket as Llama, Qwen and Mistral by OSAID criteria. For most practical users — developers fine-tuning for a product, researchers studying capabilities, enterprises self-hosting for privacy — open-weight plus MIT is what you actually need. If reproducibility is a hard requirement, neither DeepSeek nor any other frontier lab currently meets it.

Accessing the models — open weights vs API

Even if you never download the weights, you can hit V4 through the managed API. The current generation is DeepSeek V4 (released April 24, 2026), exposed as two open-weight MoE model IDs: deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active). Both are MIT-licensed open weights on Hugging Face, and both serve from the same API surface.

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. DeepSeek also exposes an Anthropic-compatible surface at the same base URL — per TechNode, the API supports compatibility with both OpenAI and Anthropic interface standards. The API is stateless: unlike the web chat and mobile app, which keep session history for you, API clients must resend the full conversation on every request.
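Because the API is stateless, the client has to own the conversation history. One way to do that is sketched below, assuming an OpenAI-SDK-style client object; the `Conversation` class and `ask` helper are hypothetical names, not part of any SDK.

```python
from typing import Any, Dict, List

Message = Dict[str, str]


class Conversation:
    """Client-side message history for a stateless chat API."""

    def __init__(self, system_prompt: str = "") -> None:
        self.messages: List[Message] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": text})


def ask(client: Any, convo: Conversation, text: str,
        model: str = "deepseek-v4-flash") -> str:
    """Send the whole history plus the new turn, then record the reply."""
    convo.add_user(text)
    resp = client.chat.completions.create(model=model, messages=convo.messages)
    answer = resp.choices[0].message.content
    convo.add_assistant(answer)
    return answer
```

Every call resends `convo.messages` in full, so input token counts grow with each turn. Long conversations may need trimming or summarising on the client side.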

Here is the minimum Python to hit V4 through the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Explain MIT licensing in one paragraph."}],
    temperature=1.3,
    max_tokens=512,
)

print(resp.choices[0].message.content)
```

Thinking mode is a request parameter on either V4 model, not a separate model ID. To enable it, set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for maximum-effort reasoning (the latter wants a context window of at least 384K tokens). When thinking is on, the response returns reasoning_content alongside the final content. Beta features include JSON mode, tool calling, streaming, context caching, FIM completion (non-thinking mode only) and Chat Prefix Completion. See the DeepSeek API documentation for the parameter reference.
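The thinking-mode parameters described above can be assembled as keyword arguments for the OpenAI SDK's `chat.completions.create`. This is a sketch under two assumptions: that the `thinking` block is sent for both effort levels, and that `reasoning_content` is readable as an attribute on the returned message. The helper names are hypothetical.

```python
from typing import Any, Dict, List, Optional, Tuple


def thinking_kwargs(model: str, messages: List[Dict[str, str]],
                    effort: str = "high") -> Dict[str, Any]:
    """Build request arguments that switch a V4 model into thinking mode."""
    if effort not in ("high", "max"):
        raise ValueError("effort must be 'high' or 'max'")
    return {
        "model": model,
        "messages": messages,
        "reasoning_effort": effort,
        # Assumption: the same extra_body is used for 'high' and 'max'.
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def split_reply(message: Any) -> Tuple[Optional[str], str]:
    """Return (reasoning, answer); reasoning is None when thinking is off."""
    return getattr(message, "reasoning_content", None), message.content
```

Usage would look like `resp = client.chat.completions.create(**thinking_kwargs("deepseek-v4-pro", messages))` followed by `reasoning, answer = split_reply(resp.choices[0].message)`. Check the parameter reference before relying on either assumption.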

Legacy IDs (deepseek-chat, deepseek-reasoner) still resolve, but only as a migration aid — they route to deepseek-v4-flash until retirement on 2026-07-24 at 15:59 UTC. After that date, requests using those IDs will fail. Swapping model IDs is a one-line change; base_url does not need to move.
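If legacy IDs are scattered through a codebase, a small shim can normalise them in one place while you migrate. The sketch below uses only the mapping and retirement time stated above; the function names are illustrative.

```python
from datetime import datetime, timezone
from typing import Optional

# Legacy IDs and the model they currently route to, per the text above.
LEGACY_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}

# Retirement time for the legacy IDs: 2026-07-24 at 15:59 UTC.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)


def migrate_model_id(model_id: str) -> str:
    """Map a legacy ID to its V4 replacement; leave other IDs untouched."""
    return LEGACY_IDS.get(model_id, model_id)


def legacy_id_still_resolves(model_id: str, now: Optional[datetime] = None) -> bool:
    """True only for a legacy ID checked before the retirement time."""
    current = now or datetime.now(timezone.utc)
    return model_id in LEGACY_IDS and current < RETIREMENT
```

Routing every request through `migrate_model_id` means the retirement date stops mattering for your own code, although any thinking-mode behaviour previously implied by `deepseek-reasoner` would still need to be requested explicitly.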

API pricing snapshot

Because the weights are open, there is no vendor lock on inference — you can always run V4 yourself or pick a third-party host. DeepSeek’s own rates, as of April 2026, undercut the closed frontier APIs by a wide margin:

| Model | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M |
| deepseek-v4-pro | $0.0145 / 1M (list; promo $0.003625 through 2026-05-31) | $0.435 promo / $1.74 list per 1M | $0.87 promo / $3.48 list per 1M |

Verify current rates on the official pricing page before committing to a production budget; Preview-window prices can shift. Note that the off-peak discount that applied in early 2025 ended on 2025-09-05 and has not returned. See our DeepSeek API pricing breakdown for worked examples, or the DeepSeek pricing calculator if you want to cost out your own workload.
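For a rough budget, the per-1M-token rates translate directly into a cost estimate. The sketch below hard-codes the list rates from the table above (the V4-Pro promo rates are deliberately left out) and treats the cache-hit ratio as an input you estimate yourself; the names are illustrative.

```python
# USD per 1M tokens, list prices from the table above (April 2026 snapshot).
RATES = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14, "out": 0.28},
    "deepseek-v4-pro": {"hit": 0.0145, "miss": 1.74, "out": 3.48},
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int,
                  cache_hit_ratio: float = 0.0) -> float:
    """Estimate the USD cost of a workload at list prices."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    rate = RATES[model]
    hit_tokens = input_tokens * cache_hit_ratio
    miss_tokens = input_tokens - hit_tokens
    total = (hit_tokens * rate["hit"]
             + miss_tokens * rate["miss"]
             + output_tokens * rate["out"])
    return total / 1_000_000


# 10M input tokens (half served from cache) plus 2M output tokens:
print(round(estimate_cost("deepseek-v4-flash", 10_000_000, 2_000_000, 0.5), 4))  # 1.274
print(round(estimate_cost("deepseek-v4-pro", 10_000_000, 2_000_000, 0.5), 4))    # 15.7325
```

The rates are a snapshot, so treat the dictionary as something to update from the pricing page, not as a constant.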

Running DeepSeek open source weights locally

Open weights only matter if you can actually run them. The hardware reality is blunt: V4-Pro and V4-Flash are large MoE models that assume multi-GPU inference. If you want something on a single machine, drop down the range:

- R1 Distill (1.5B – 14B) — runs on consumer GPUs with 8–24 GB VRAM. Good for laptops.

- R1 Distill 32B — needs a 24 GB card and quantization, or two lower-end cards.

- R1 Distill 70B — needs serious workstation hardware or quantized-GGUF tricks.

- Full R1, V3.2, V4-Flash, V4-Pro — data-centre only, or managed cloud inference.
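A rule of thumb helps explain the tiers above: memory for the weights alone is roughly parameter count times bytes per parameter. The sketch below is an approximation under that assumption only; it ignores the KV cache, activations and runtime overhead, so real requirements are higher.

```python
def weight_memory_gb(params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    # billions of parameters * bits per parameter / 8 bits per byte
    return params_billion * bits_per_param / 8


for size in (1.5, 14, 32, 70):
    fp16 = weight_memory_gb(size, 16)
    int4 = weight_memory_gb(size, 4)
    print(f"{size}B: ~{fp16:.1f} GB at 16-bit, ~{int4:.1f} GB at 4-bit")
```

By this estimate a 32B model at 4-bit needs about 16 GB for weights, which is why it fits a 24 GB card only with quantization, and a 70B model at 4-bit needs about 35 GB, beyond any single consumer GPU.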

The simplest on-ramp is Ollama. Follow our guide to running DeepSeek on Ollama for a one-command install of a distilled variant. If you prefer containerised deployment, our install DeepSeek locally walkthrough covers Docker and direct Python paths. Confirm your machine meets the minimums in the DeepSeek system requirements reference before downloading tens of gigabytes of weights.
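Once a distilled model is pulled, Ollama serves it over a local HTTP API that any language can call. The sketch below uses only the Python standard library and assumes a default Ollama install listening on port 11434 with a model tagged `deepseek-r1:7b` already pulled; adjust both to match your setup.

```python
import json
import urllib.request

# Assumption: Ollama's default local endpoint.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_payload(prompt: str, model: str = "deepseek-r1:7b") -> bytes:
    """Encode a non-streaming generate request as JSON bytes."""
    body = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(body).encode("utf-8")


def generate(prompt: str, model: str = "deepseek-r1:7b") -> str:
    """Send one prompt to the local Ollama server and return the reply text."""
    request = urllib.request.Request(
        OLLAMA_URL,
        data=build_payload(prompt, model),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With the server running, `generate("Summarise the MIT License in two sentences.")` returns the model's reply as a string. Nothing leaves the machine, which is the main practical benefit of open weights for privacy-sensitive work.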

Why does DeepSeek give the weights away?

It is a fair question, and the honest answer is a mix of strategy and engineering culture. Open-weight releases drive adoption, surface bugs through community testing, build developer mindshare in a space dominated by closed labs, and — not incidentally — put pressure on closed competitors’ pricing. R1’s launch in January 2025 triggered a market re-pricing of AI compute expectations; V4 is a continuation of that playbook with a frontier-tier model and transparent efficiency claims.

That doesn’t make DeepSeek a charity. The company monetises through its API (which is where most commercial demand lands) and through enterprise relationships. Open weights are a lever, not the business.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek open source or open weight?

Both terms apply, with one distinction. DeepSeek publishes model weights and inference code on Hugging Face under the MIT License for V4, V3.2, V3.1 and R1. That is fully “open weight” and, by most practical definitions, “open source.” It does not publish the training dataset or full training pipeline, so it does not meet the strictest OSAID “fully open” bar. For commercial use, MIT is enough. See our what is DeepSeek explainer for context.

Can I use DeepSeek commercially without paying?

Yes, if you run the open weights yourself. MIT licensing on V4-Pro, V4-Flash, V3.2 and R1 allows commercial deployment, fine-tuning and distillation without royalties or revenue share. You only pay if you use DeepSeek’s hosted API, which has its own pricing tiers. Older weights under the DeepSeek Model License (V3 base, Coder-V2, VL2) also permit commercial use but add use-based restrictions that you and your downstream users must follow.

What license does DeepSeek V4 use?

Both DeepSeek-V4-Pro and DeepSeek-V4-Flash ship under the MIT License on both the GitHub code repository and the Hugging Face weights repository, as stated on the model cards. That makes V4 the most permissively licensed frontier-class open model family in April 2026. See the full DeepSeek V4 model profile for architecture details, including the 1M-token context and the FP4/FP8 mixed-precision weights.

How does DeepSeek’s openness compare to Llama or GPT?

DeepSeek is more permissive than Meta’s Llama, whose custom license restricts using Llama outputs to improve non-Llama LLMs and caps free commercial use above specific user thresholds. It is radically more open than OpenAI’s GPT-5 or Anthropic’s Claude 4 families, whose weights are not released at all. For a head-to-head on licensing and capabilities, see DeepSeek vs Llama.

Can I fine-tune DeepSeek on my own data?

Yes. The MIT License on V4 and R1 permits modifications and derivative works, which covers fine-tuning, and the R1 repo names distillation explicitly. The DeepSeek Model License on older weights also allows fine-tuning for any lawful purpose. Fine-tuned models are yours to deploy commercially or keep private. Our fine-tuning DeepSeek tutorial covers the practical workflow with LoRA and full-parameter approaches.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Model card: DeepSeek-V4-Pro on Hugging Face. MIT-licensed weights repository confirming V4 license terms. Last checked: April 30, 2026

- Official: DeepSeek License FAQ (deepseeklicense.github.io). DeepSeek's own license FAQ for the legacy V3-base / Coder / VL2 split. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.