Category 18 articles

Models

Comprehensive guides to every DeepSeek AI model including V3, V3.2, R1, Coder, Math, VL, and more. Compare capabilities, architecture, benchmarks, and real-world performance in one place.

-

Models

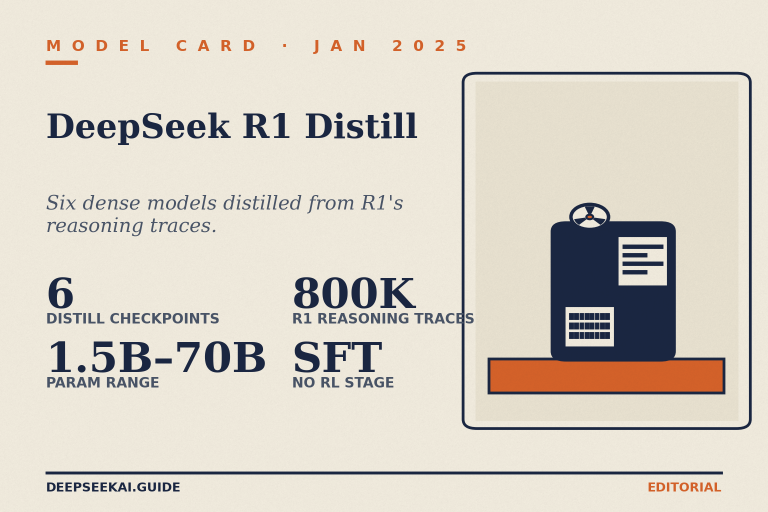

DeepSeek R1 Distill: The Six Reasoning Models You Can Run Locally

DeepSeek R1 Distill brings R1's reasoning to 1.5B–70B local models. Compare benchmarks, licences and hardware needs — pick the right size today.

-

Models

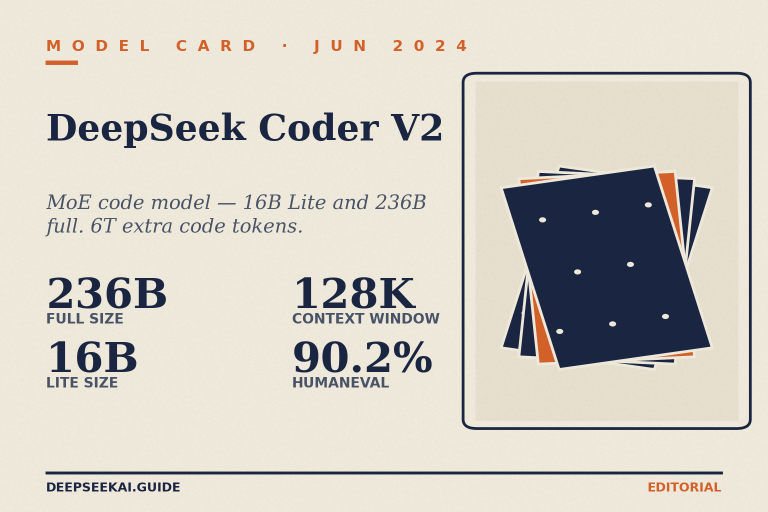

DeepSeek Coder V2: The Open-Source MoE Code Model Explained

DeepSeek Coder V2 hit 90.2% on HumanEval as an open MoE coder. See specs, benchmarks, pricing and how to access it today.

-

Models

DeepSeek Math 7B: Benchmarks, GRPO and How to Use It in 2026

DeepSeek Math 7B hit 51.7% on MATH and pioneered GRPO. See benchmarks, access options and how it compares to V4 — read…

-

Models

DeepSeek VL: The First-Generation Vision-Language Model, Reviewed

DeepSeek VL is the original 1.3B/7B open vision-language model. See architecture, benchmarks, licensing and how to run it. Read the practitioner guide.

-

Models

DeepSeek VL2: Practitioner’s Guide to the MoE Vision-Language Family

DeepSeek VL2 is an open-weight MoE vision-language model with strong OCR and grounding. Compare variants, benchmarks and access — read the practitioner…

-

Models

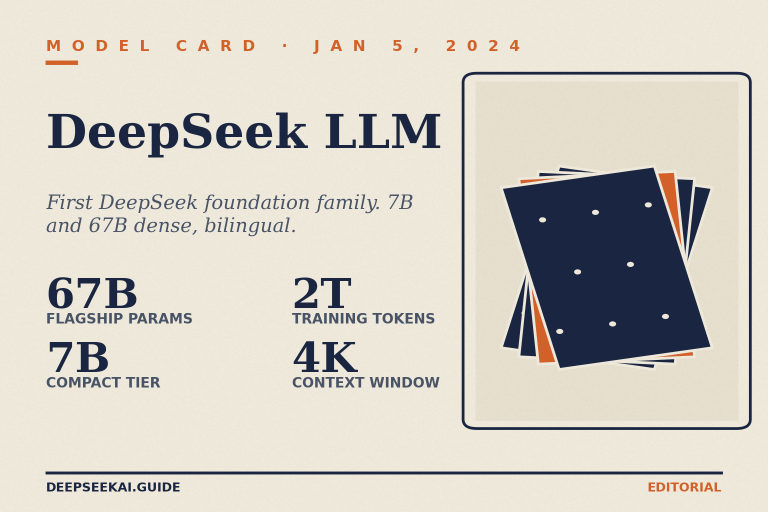

DeepSeek LLM Explained: Architecture, Benchmarks and Lineage

DeepSeek LLM was the first DeepSeek release: 7B and 67B open-weight models trained on 2T tokens. Read the architecture, benchmarks and verdict.

-

Models

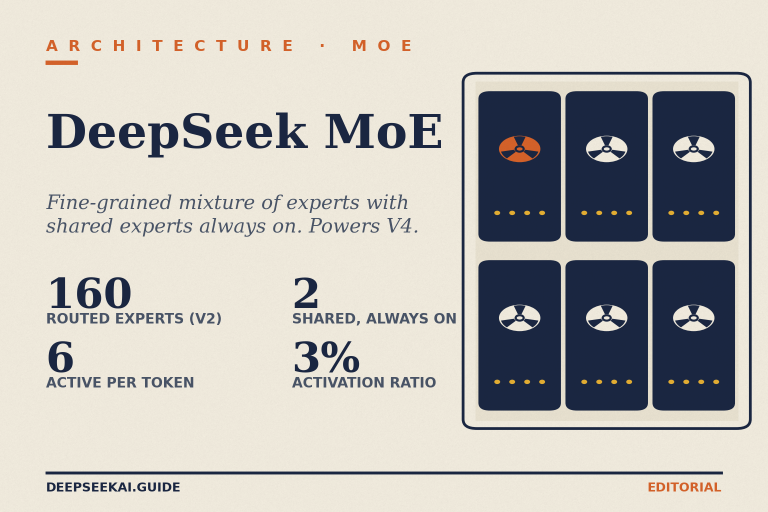

What DeepSeek MoE Is and Why It Powers V4-Pro and V4-Flash

DeepSeek MoE explained: fine-grained experts, shared experts, and how V4-Pro and V4-Flash use it. Compare specs and pricing — read the full…

-

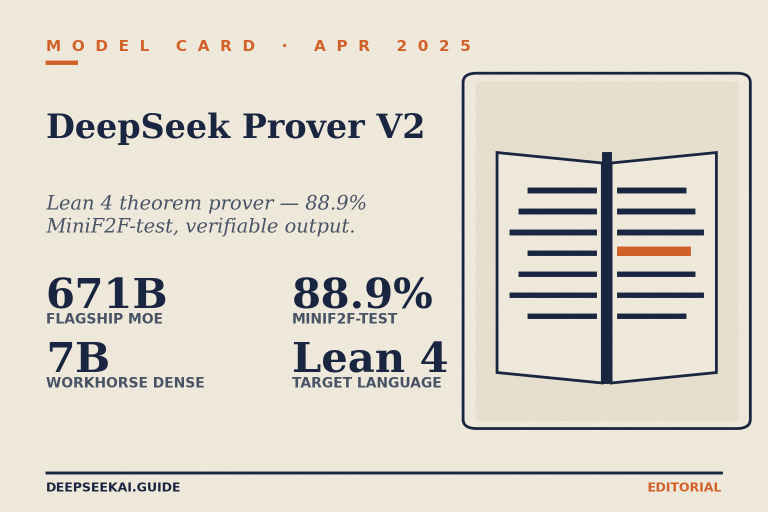

Models

DeepSeek Prover: The Open-Source Lean 4 Theorem-Proving Model

DeepSeek Prover hits 88.9% on MiniF2F for Lean 4 theorem proving. See architecture, benchmarks, access, and limits — read the full breakdown.

-

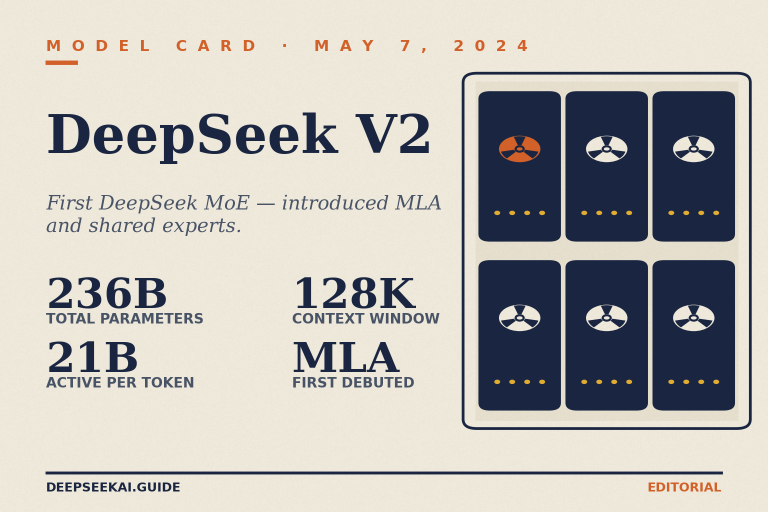

Models

DeepSeek V2 Explained: The MoE Model That Started a Lineage

DeepSeek V2 introduced MLA and DeepSeekMoE in 2024. See specs, benchmarks, pricing legacy and how it compares to V4 — read the…

-

Models

DeepSeek V2.5 Explained: Specs, Benchmarks and What Replaced It

DeepSeek V2.5 merged Chat and Coder V2 into one model. See specs, benchmarks, pricing context and migration paths — read the full…
