DeepSeek VL: The First-Generation Vision-Language Model, Reviewed

Should you still use DeepSeek VL in 2026, or has the field moved on? It’s a fair question. The original DeepSeek VL shipped in March 2024 as a 1.3B/7B open vision-language family — long before V4, before VL2, and before multimodal became table stakes. Two years on, plenty of teams are still pulling those weights from Hugging Face for OCR pilots, on-device demos and academic baselines, because the tiny 1.3B variant runs on a single consumer GPU and the licence permits commercial use.

This article is a practitioner’s review: what DeepSeek VL actually is, how its hybrid SigLIP-plus-SAM encoder works, what the published benchmarks say, where it falls short of newer multimodal models, and exactly how to load it today.

What DeepSeek VL is

DeepSeek VL is DeepSeek AI’s first open-source vision-language model family, released on March 11, 2024. The release included DeepSeek-VL-7B-base, DeepSeek-VL-7B-chat, DeepSeek-VL-1.3B-base, and DeepSeek-VL-1.3B-chat — two sizes, each with a base and a chat variant for different integration scenarios. The point of the release was practical multimodality: a model that could read web screenshots, PDFs, charts and natural images without sacrificing the underlying language ability inherited from DeepSeek LLM.

The DeepSeek VL family was positioned as a vision-language chatbot for real-world applications, with the 1.3B and 7B weights made publicly accessible to support further work on top of them. Today it has been superseded by DeepSeek VL2, but the original VL is still the cleanest reference implementation for the hybrid-encoder approach DeepSeek pioneered.

Architecture and lineage

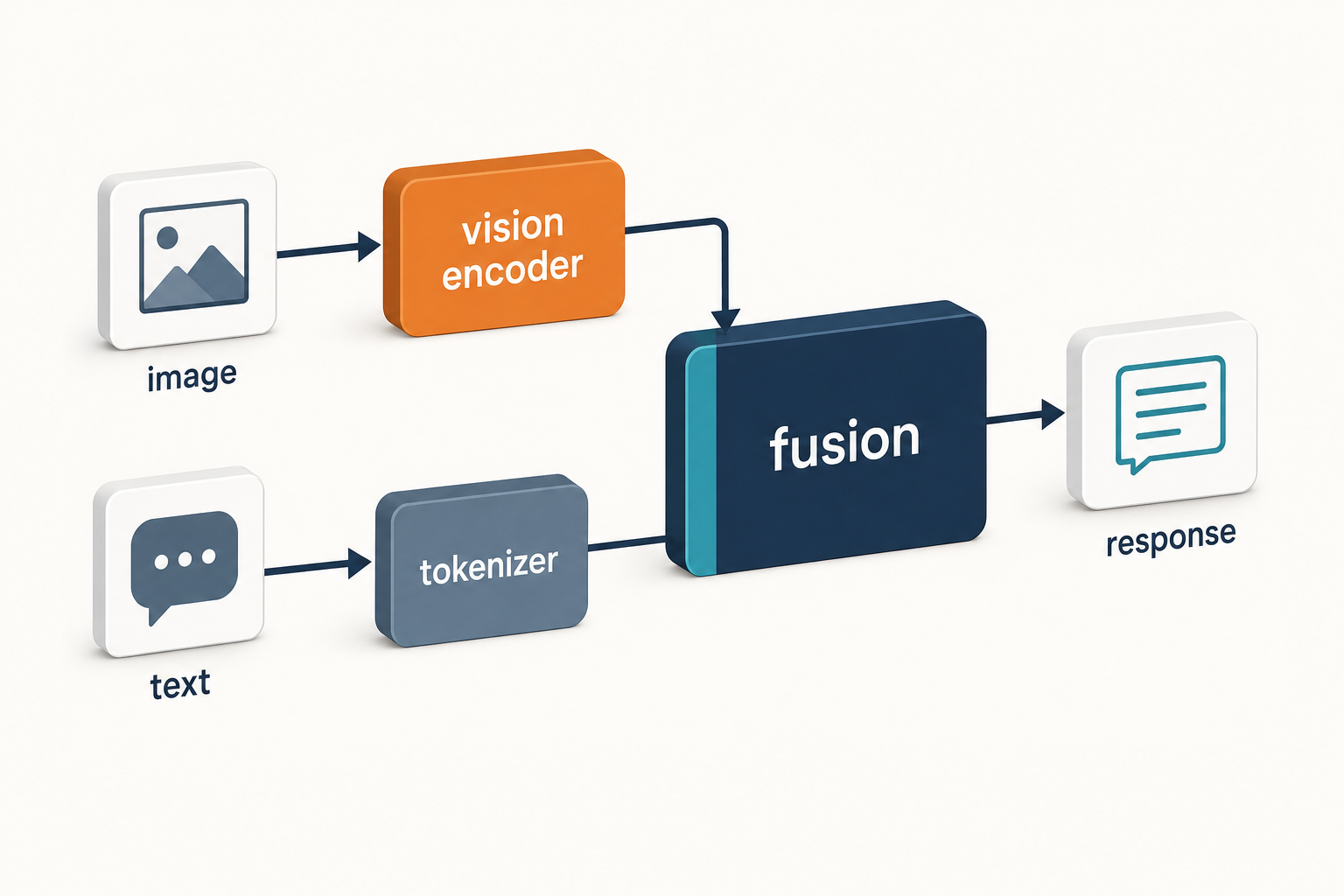

DeepSeek VL is a dense (non-MoE) adaptor-based VLM. Three components matter:

- Hybrid vision encoder. SigLIP-L accepts a low-resolution 384×384 input and focuses on high-level semantic features; SAM-B, based on ViTDet, accepts high-resolution 1024×1024 input and captures low-level detail. The two were chosen because details and semantics are best extracted at different resolutions.

- VL adaptor. The high-resolution SAM features are interpolated to 96×96×256, then run through two stride-2 convolutions to produce a 24×24×1024 feature map reshaped to 576×1024. The low-resolution SigLIP-L features at 576×1024 are concatenated with these, yielding 576 visual tokens at 2,048 dimensions, which pass through a GeLU activation and embedding layer into the language model.

- Language model. Built on DeepSeek LLM. The FFN uses SwiGLU, the model uses Rotary Embedding for positional encoding, and the tokenizer is shared with DeepSeek-LLM.
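The shape bookkeeping in the VL adaptor bullet above can be checked with a few lines of arithmetic. This is a minimal sketch, not the project's code: the kernel size (3) and padding (1) of the two stride-2 convolutions are assumptions chosen so that each layer halves the spatial size, and the function names are hypothetical.

```python
def conv_out(size: int, kernel: int = 3, stride: int = 2, padding: int = 1) -> int:
    """Output size along one axis for a standard 2D convolution.

    kernel=3 and padding=1 are assumptions for illustration; only the
    stride of 2 comes from the article.
    """
    return (size + 2 * padding - kernel) // stride + 1


def adaptor_shapes():
    """Trace (tokens, channels) through the adaptor as described in the text."""
    sam_side = 96                                # SAM-B features interpolated to 96x96x256
    side = conv_out(conv_out(sam_side))          # two stride-2 convs: 96 -> 48 -> 24
    sam_tokens = (side * side, 1024)             # 24x24x1024 reshaped to 576x1024
    siglip_tokens = (576, 1024)                  # SigLIP-L features, per the article
    # Concatenate along the channel axis: token counts must already match.
    fused = (sam_tokens[0], sam_tokens[1] + siglip_tokens[1])
    return sam_tokens, siglip_tokens, fused


print(adaptor_shapes())
```

The point of the exercise is that the token count stays at 576 regardless of how much detail the 1024×1024 branch sees; only the channel width doubles to 2,048.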

Training proceeds in three stages. Stage 1 trains only the VL adaptor while the vision encoder and language model stay frozen. Stage 2 jointly trains the VL adaptor and language model. Stage 3 is supervised fine-tuning, during which the low-resolution SigLIP-L, the VL adaptor and the language model are all trained.
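One way to keep the three stages straight is as a small lookup of which components receive gradient updates. The sketch below only encodes the description above; the component names are hypothetical labels, and treating SAM-B as frozen in stage 3 is a reading of the text (it is not listed among the trained parts), not a quoted setting.

```python
# Hypothetical labels for the four parts of the model.
COMPONENTS = ("siglip_l", "sam_b", "vl_adaptor", "language_model")

# Which parts are trained in each stage, following the article's description.
TRAINABLE_BY_STAGE = {
    1: {"vl_adaptor"},                                # adaptor warm-up only
    2: {"vl_adaptor", "language_model"},              # joint pretraining
    3: {"siglip_l", "vl_adaptor", "language_model"},  # supervised fine-tuning
}


def frozen(stage: int) -> list:
    """Return the components that stay frozen in the given stage."""
    return [c for c in COMPONENTS if c not in TRAINABLE_BY_STAGE[stage]]


for stage in (1, 2, 3):
    print(stage, "frozen:", frozen(stage))
```

In a PyTorch training loop this kind of table would typically be applied by toggling `requires_grad` on each module's parameters before building the optimizer.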

On training scale, the 1.3B-base uses SigLIP-L on 384×384 inputs, is built on top of DeepSeek-LLM-1.3B-base (trained on roughly 500B text tokens) and is then trained on around 400B vision-language tokens. The 7B variant is built on the corresponding DeepSeek-LLM-7B base. Note that the technical report describes the language backbone as “available in scales of 1.3B and 6.7B parameters”, so the “7B” in the model card is a rounding.

How DeepSeek VL fits in the lineage

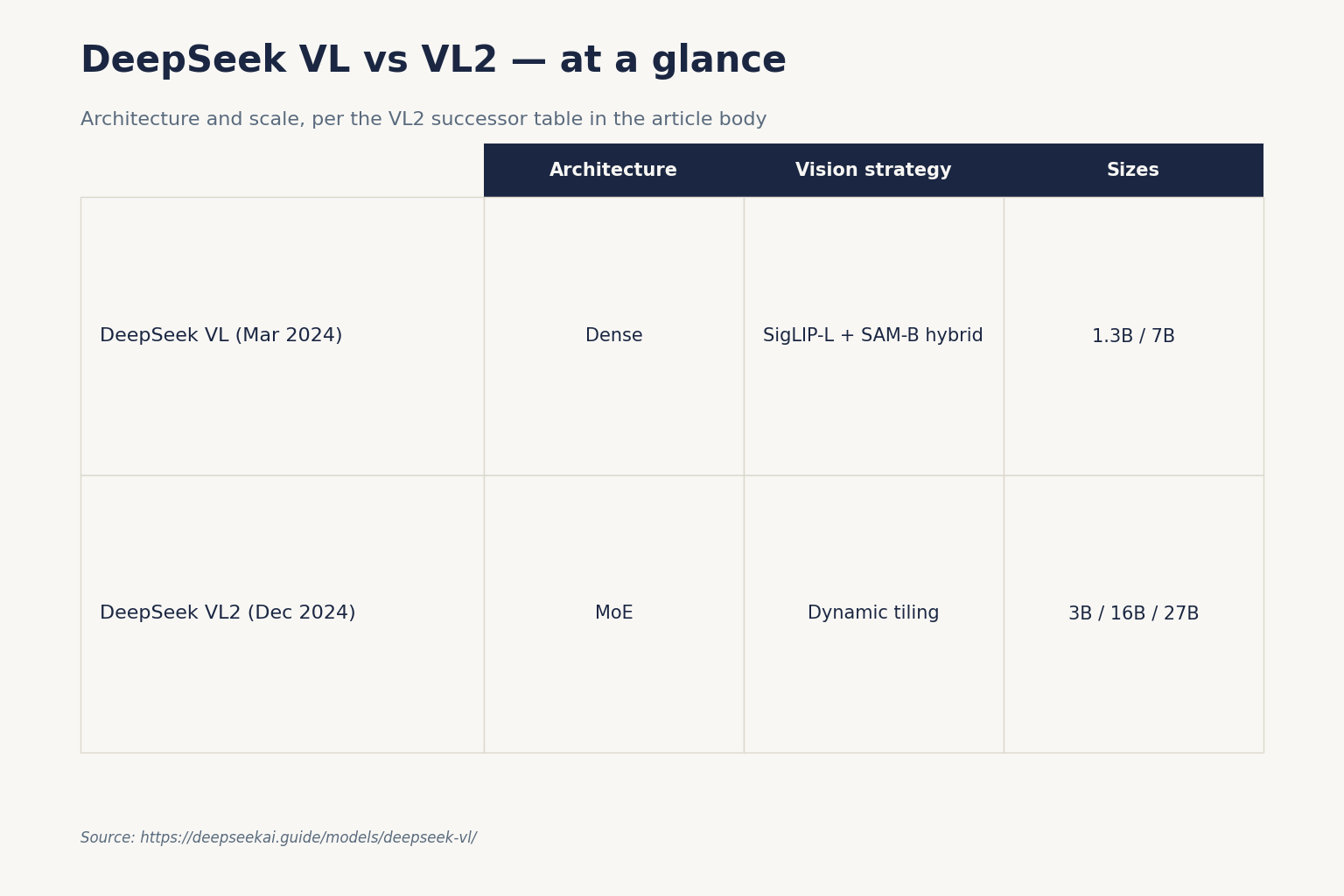

| Model | Released | Type | Vision strategy |
|---|---|---|---|
| DeepSeek VL | 2024-03-11 | Dense, 1.3B / 7B | SigLIP-L + SAM-B hybrid, fixed 1024×1024 |
| DeepSeek VL2 | 2024-12-13 | MoE, 3B / 16B / 27B total | Dynamic tiling, variable aspect ratio |
| DeepSeek Janus | 2024–2025 | Unified understanding + generation | Decoupled visual encoders |

VL2 is a meaningful jump rather than a refresh. It introduces a dynamic tiling vision encoding strategy that processes high-resolution images of varying aspect ratios, where DeepSeek VL’s hybrid encoder extracted features at two fixed resolutions. That removes the limitations of the old fixed-size encoder for tasks needing ultra-high resolution, such as document and chart analysis. If aspect ratio or document fidelity matter to you, skip straight to VL2.

Benchmarks

The DeepSeek VL technical report (arXiv 2403.05525) compares the model on multimodal benchmarks including MMBench, MMC, SEEDBench and OCRBench. The headline finding was that DeepSeek VL outperformed open-source models of similar size on MMBench, MMC and SEEDBench, and approached proprietary models: the report cites DeepSeek-VL versus GPT-4V at 70.4 vs 71.6 on SEEDBench. It also notes that DeepSeek-VL-1.3B significantly outperformed comparably sized models.

Two practitioner-relevant claims from the paper:

- Across the majority of language benchmarks, DeepSeek VL performs comparably to or surpasses DeepSeek-7B — meaning the multimodal training did not collapse text ability.

- DeepSeek-VL-7B shows a degree of decline on mathematics (GSM8K), suggesting a residual competitive relationship between vision and language modalities, possibly attributable to the limited 7B model capacity.

For exact numbers, read Table 6 and Table 9 of the arXiv paper — the report breaks results down by base vs chat and by benchmark. Do not quote those numbers second-hand: the table layout changed between v1 and v2.

Strengths — where DeepSeek VL still wins

- Tiny footprint. The 1.3B variant runs on a single 8 GB GPU at FP16 and is small enough for laptop or edge demos.

- Real-world data mix. The training data covers web screenshots, PDFs, OCR, charts and knowledge-based content (expert knowledge, textbooks), with an instruction-tuning dataset built from a use-case taxonomy of real user scenarios. This shows up in screenshot and form-reading tasks.

- Hybrid resolution. The SigLIP-plus-SAM combination handles 1024×1024 detail in a fixed token budget, which is unusual for a model this small.

- Commercial-friendly. Use of DeepSeek VL Base/Chat models is subject to the DeepSeek Model License, and the series — including Base and Chat — supports commercial use.

Weaknesses

- Fixed-resolution encoder. Tall receipts, wide spreadsheets and high-DPI scans are squeezed into 1024×1024, which loses fine detail. VL2’s dynamic tiling fixes this.

- Mathematical reasoning regression. The 7B’s GSM8K dip is documented in the report itself — do not pick DeepSeek VL for visual maths.

- No video, no audio. This is a still-image VLM.

- Older language backbone. The DeepSeek LLM behind it predates V2’s MLA and DeepSeekMoE breakthroughs, so reasoning is weaker than anything R1-derived.

- Few image slots per turn. Long multi-image conversations are awkward. VL2 acknowledged the same limitation and planned to extend the context window for richer multi-image interactions in a future version.
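The fixed-resolution weakness in the first bullet above is easy to quantify. The sketch below assumes the image is scaled so its longest side becomes 1024 pixels while keeping the aspect ratio; that preprocessing rule, the function name and the example receipt dimensions are all assumptions for illustration, so check the processor in the repository for the actual behaviour.

```python
def fit_longest_side(width: int, height: int, target: int = 1024):
    """Scale an image so its longest side equals `target` pixels.

    Assumes an aspect-preserving resize; the real preprocessing may differ.
    Returns the scale factor and the resized (width, height).
    """
    scale = target / max(width, height)
    return scale, (round(width * scale), round(height * scale))


# Hypothetical tall receipt scan: 1000 x 4000 pixels with 20-pixel-high glyphs.
scale, resized = fit_longest_side(1000, 4000)
glyph_height = 20 * scale

print(f"scale={scale:.3f}, resized={resized}, glyph height={glyph_height:.2f}px")
```

Under these assumptions a 20-pixel glyph shrinks to roughly 5 pixels, which is where small print on long documents starts to become hard to read for any encoder.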

How to access DeepSeek VL

DeepSeek VL is not served from DeepSeek’s hosted API. The current generation behind POST /chat/completions on https://api.deepseek.com is the text-only DeepSeek V4 family — deepseek-v4-pro and deepseek-v4-flash, released April 24, 2026 — and that endpoint does not accept vision input as of this writing. You run DeepSeek VL yourself, from open weights.

Loading the weights

The official path is the deepseek-ai/DeepSeek-VL repository on GitHub plus the corresponding Hugging Face checkpoints. A minimal Python loader for the 7B chat model, taken from the project README:

```python
import torch
from transformers import AutoModelForCausalLM

from deepseek_vl.models import VLChatProcessor, MultiModalityCausalLM
from deepseek_vl.utils.io import load_pil_images

# Hugging Face checkpoint ID for the 7B chat variant
model_path = "deepseek-ai/deepseek-vl-7b-chat"

# The processor bundles the tokenizer with the image preprocessing
vl_chat_processor = VLChatProcessor.from_pretrained(model_path)
tokenizer = vl_chat_processor.tokenizer

# Load the weights, then cast to bfloat16 and move to the GPU in eval mode
vl_gpt = AutoModelForCausalLM.from_pretrained(
    model_path, trust_remote_code=True
)
vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()

# One user turn with an image, followed by an empty assistant turn to fill in
conversation = [
    {
        "role": "User",
        "content": "<image_placeholder>Describe each stage of this image.",
        "images": ["./images/training_pipelines.jpg"],
    },
    {"role": "Assistant", "content": ""},
]
```

This excerpt stops once the conversation is defined. The `MultiModalityCausalLM` and `load_pil_images` imports are used by the rest of the README example, which goes on to load the images, prepare the inputs and generate a reply.

If you prefer the standard transformers pipeline interface, a transformers port of DeepSeek VL is published as deepseek-community/deepseek-vl-1.3b-base on the Hub. It is a foundation model for visual language modelling, designed to process both text and images for contextually relevant responses. That community build is the easier on-ramp if you already use Hugging Face pipelines. For end-to-end setup help, the install DeepSeek locally walkthrough applies the same pattern.

Hardware requirements

| Model | Precision | Approx. VRAM | Practical hardware |
|---|---|---|---|
| DeepSeek-VL-1.3B | bf16 | ~4 GB | Consumer laptop GPU, Apple Silicon |
| DeepSeek-VL-1.3B | int8 | ~2 GB | Edge / on-device |
| DeepSeek-VL-7B | bf16 | ~16 GB | Single RTX 4090 / A10 |
| DeepSeek-VL-7B | int4 | ~6 GB | Mid-range consumer GPU |

For sizing, the DeepSeek hardware calculator takes precision and batch size into account.
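As a rough cross-check on the table, weight memory alone is approximately parameter count times bytes per parameter. The sketch below uses the nominal 1.3B and 7B figures and standard byte widths; it deliberately ignores activations, the KV cache and framework overhead, which is why the table's figures sit a few gigabytes above these floors. The function name is hypothetical.

```python
# Bytes needed to store one parameter at each precision.
BYTES_PER_PARAM = {"bf16": 2.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_gb(params_billion: float, precision: str) -> float:
    """Lower bound on memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Uses nominal parameter counts; runtime overhead is not included.
    """
    return params_billion * BYTES_PER_PARAM[precision]


for name, params, precision in [
    ("DeepSeek-VL-1.3B", 1.3, "bf16"),
    ("DeepSeek-VL-1.3B", 1.3, "int8"),
    ("DeepSeek-VL-7B", 7.0, "bf16"),
    ("DeepSeek-VL-7B", 7.0, "int4"),
]:
    print(f"{name} @ {precision}: >= {weight_gb(params, precision):.1f} GB for weights")
```

If a planned batch size or image count pushes the total well past these floors, treat the table's VRAM column as a minimum rather than a target.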

Licensing

DeepSeek VL ships under a split licence: code under MIT, weights under the separate DeepSeek Model License. Use of DeepSeek VL Base/Chat models is subject to the DeepSeek Model License; the series supports commercial use. Read the full text on the model card before deploying — the Model License has terms (notably around restricted use) that the bare “MIT” label found in some third-party summaries does not capture. This contrasts with the V4 generation, where both code and weights ship MIT.

Best use cases

- Document and screenshot understanding — strong fit, given the training mix. Pair with a DeepSeek RAG tutorial for retrieval over PDFs.

- Education and tutoring demos — small enough for classroom hardware. See DeepSeek for education.

- Academic research baselines — VL is widely cited and reproducible. The DeepSeek for research page collects related workflows.

- On-device or air-gapped pilots — 1.3B fits where most multimodal models do not.

For higher-stakes production work — agents that need OCR fidelity, dynamic-aspect document parsing, or visual reasoning at scale — go to VL2 or look at the multimodal options listed in open-source AI like DeepSeek.

Comparable alternatives

If DeepSeek VL is on your shortlist, you are probably comparing it against:

- DeepSeek VL2 — the direct successor, MoE-based, dynamic tiling.

- DeepSeek vs Qwen — Qwen-VL is the most direct open-weight alternative at 1B–7B scale.

- DeepSeek vs Llama — LLaVA-style stacks built on Llama backbones cover similar ground.

For a broader view of where DeepSeek’s multimodal work sits in the family tree, see the DeepSeek models hub.

Verdict

DeepSeek VL was a credible 2024 release and is now a historical baseline. Pick the 1.3B if you need the smallest commercially usable VLM with real document-understanding training, or the 7B if you want a single-GPU multimodal chat model with permissive terms. For anything beyond a pilot, move to VL2 — and watch DeepSeek’s roadmap for whether the V4 family ever ships a vision tier.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

DeepSeek VL FAQ

Is DeepSeek VL free to use commercially?

Yes, with caveats. Use of DeepSeek VL Base and Chat models is subject to the DeepSeek Model License, and the series supports commercial use. The code is MIT, but the weights ship under the separate Model License. Read the model card for the full terms before deploying. For a plain-English overview of how DeepSeek’s licensing works across the line-up, see is DeepSeek open source.

What is the difference between DeepSeek VL and DeepSeek VL2?

VL is dense at 1.3B/7B; VL2 is a Mixture-of-Experts family. VL2 introduces dynamic tiling that handles varying aspect ratios, improving over VL’s fixed two-resolution hybrid encoder, and removes limitations on tasks needing ultra-high resolution such as document and chart analysis. If document fidelity or visual grounding matters, choose DeepSeek VL2.

Can I call DeepSeek VL through the DeepSeek API?

No. The hosted API at https://api.deepseek.com currently serves the text-only V4 family (deepseek-v4-pro and deepseek-v4-flash) on POST /chat/completions, and does not accept image input. To use DeepSeek VL you run the open weights yourself from Hugging Face. See the DeepSeek API documentation for the surfaces that are supported.

What hardware do I need to run DeepSeek VL locally?

The 1.3B variant runs in roughly 4 GB of VRAM at bf16, which fits a modern laptop GPU or Apple Silicon Mac. The 7B variant needs about 16 GB at bf16 — a single RTX 4090, A10 or comparable card. Quantising to int4 cuts those numbers further. Plug your specs into the DeepSeek hardware calculator to confirm before you download.

How does DeepSeek VL handle high-resolution images?

Through a hybrid two-encoder design. SigLIP-L takes 384×384 inputs for high-level semantics and SAM-B (a ViTDet-based encoder) takes 1024×1024 inputs for low-level detail, on the rationale that semantic and detailed information are best extracted at different resolutions. The two streams are fused into 576 visual tokens before reaching the language model. For more on the broader architecture choices DeepSeek has made, see the DeepSeek history page.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Model card: DeepSeek-VL-7B-chat model card. Canonical VL chat weights and Python loader pattern. Last checked: April 30, 2026

- Repository: DeepSeek VL GitHub repository. Reference implementation and processor classes. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Technical report: DeepSeek VL technical report (arXiv 2403.05525). Hybrid SigLIP+SAM encoder design and benchmark tables. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.