Using DeepSeek for Business: A Practitioner’s Playbook

If you run a business and your finance team has flagged the AI line item, you have two practical options: cap usage, or move workloads to a cheaper provider that still does the job. DeepSeek for business sits squarely in that second bucket. The Hangzhou-based lab released its V4 Preview models on April 24, 2026, and they undercut frontier US providers on per-token pricing by roughly an order of magnitude while shipping with open weights, a 1-million-token context window, and an OpenAI-compatible API. That changes which projects make economic sense — bulk document review, always-on chat agents, internal knowledge bases — and which ones still belong on a closed-source frontier model. This guide walks through ten concrete business workflows, the API math behind them, the privacy questions you should not skip, and where DeepSeek genuinely is not the right tool.

Why DeepSeek matters for business buyers in 2026

The economics shifted on April 24, 2026, when DeepSeek released the first of its V4 series as two preview models, DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both are Mixture-of-Experts models with a 1-million-token context window. Pro has 1.6T total parameters with 49B active; Flash has 284B total with 13B active. Both ship under the MIT license, both are accessible via the same API base URL, and both support thinking and non-thinking modes through a single request parameter rather than a separate model ID.

For business buyers, the headline is price. V4-Flash runs at $0.14 per million input tokens (cache miss) and $0.28 per million output tokens. V4-Pro currently runs at $0.435 input / $0.87 output during a 75% promo through 2026-05-31 (list $1.74 / $3.48). Both carry a 1M-token context window and up to 384K output tokens. Cache-hit pricing kicks in automatically on repeated prompt prefixes. This matters because on agent-style workloads (long system prompts, fixed tool schemas), those repeated prefixes are where most production spend lives.

One independent benchmarking site frames the trade-off honestly. At list prices, based on DeepSeek's API, V4-Pro's $1.74 per 1M input tokens sits at the higher end of comparable models (median: $0.60), and its $3.48 per 1M output tokens is somewhat above average (median: $2.20). The 75% promo through 2026-05-31 ($0.435 input / $0.87 output) brings both below those medians. Read: V4-Pro is not the absolute cheapest option among open-weight reasoning models, but it pairs near-frontier quality with a price the average enterprise procurement team can stomach. V4-Flash is where the real cost story lives.

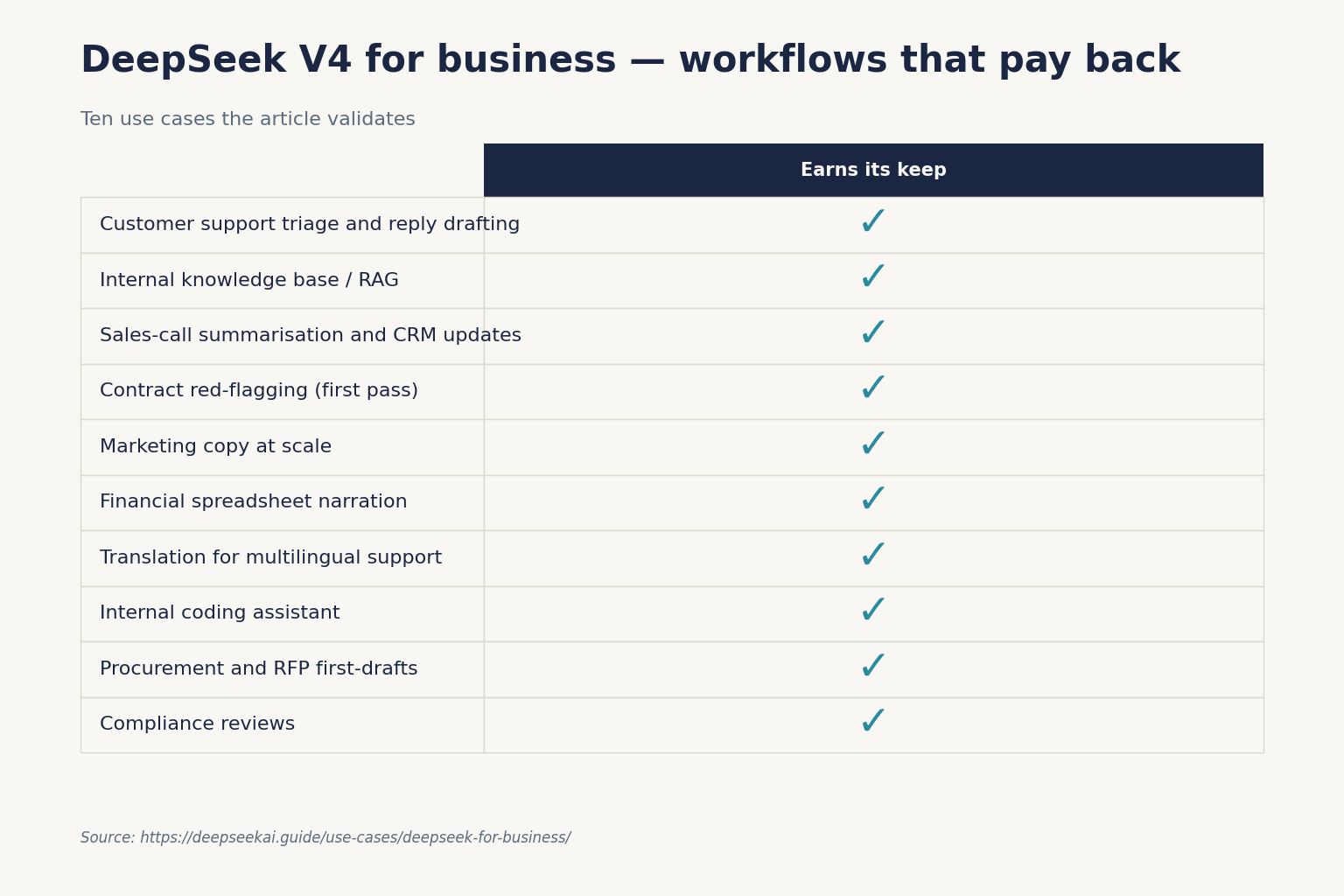

The ten business workflows that actually pay back

The list below is what I have personally run on V4-Flash and V4-Pro since the preview opened. Each item names the model tier I would default to and a one-line prompt sketch. For a deeper prompt library, see our DeepSeek prompt engineering guide.

1. Customer support triage and reply drafting

Use V4-Flash with a cached system prompt that contains your tone-of-voice rules, refund policy, and escalation criteria. New tickets cost cents per resolution. Pair with a human-in-the-loop reviewer for the first 60 days. See DeepSeek for customer support for the full setup.

2. Internal knowledge base / RAG over company documents

The 1M-token context window means small companies can sometimes skip a vector database entirely and stuff the whole policy library into a single request. Larger orgs still want retrieval. Build it once, then swap providers if costs move. The DeepSeek RAG tutorial covers both patterns.
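Here is a minimal sketch of the "stuff it or retrieve it" decision. It assumes a rough four-characters-per-token heuristic, which is not DeepSeek's real tokenizer. It also uses naive keyword overlap as a stand-in for a vector database. `estimate_tokens`, `select_documents` and the 900K budget are illustrative choices, not part of any DeepSeek API.

```python
import re

# Assumption: ~4 characters per token is a rough heuristic for English text,
# not DeepSeek's tokenizer. The budget leaves headroom inside the 1M window
# for the system prompt, the question and the answer.
CONTEXT_BUDGET_TOKENS = 900_000


def estimate_tokens(text: str) -> int:
    """Very rough token estimate: one token per ~4 characters."""
    return max(1, len(text) // 4)


def _words(text: str) -> set[str]:
    """Lower-cased alphanumeric words, used for naive overlap scoring."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def select_documents(docs: dict[str, str], question: str,
                     budget: int = CONTEXT_BUDGET_TOKENS, top_k: int = 5) -> list[str]:
    """Return the document names to send: all of them if they fit, else the top_k by overlap."""
    total = sum(estimate_tokens(body) for body in docs.values())
    if total <= budget:
        # Small library: skip retrieval and put everything in one request.
        return list(docs)
    # Large library: keyword overlap stands in for a real vector store here.
    q = _words(question)
    ranked = sorted(docs, key=lambda name: len(q & _words(docs[name])), reverse=True)
    return ranked[:top_k]
```

If you later swap the keyword ranker for embeddings, only the ranking line changes. That is the "build it once" property described above.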

3. Sales-call summarisation and CRM updates

V4-Flash, non-thinking mode. Feed in the transcript plus a short JSON schema for the CRM fields you want filled, and turn on JSON mode. Keep max_tokens high enough to avoid truncated objects (more on the JSON-mode caveats below).
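Here is a minimal sketch of the extraction call. The field names (`account_name`, `next_step`, `deal_stage`) are hypothetical. The code only builds the request and validates the reply, so it runs without an API key. The helper names are illustrative.

```python
import json
from typing import Optional

# Hypothetical CRM fields; replace with the ones your CRM actually needs.
CRM_FIELDS = ["account_name", "next_step", "deal_stage"]


def build_crm_request(transcript: str) -> dict:
    """Keyword arguments for client.chat.completions.create() in JSON mode."""
    example = json.dumps({field: "..." for field in CRM_FIELDS})
    return {
        "model": "deepseek-v4-flash",
        "messages": [
            # JSON mode expects the word "json" and a small example in the prompt.
            {"role": "system",
             "content": f"Extract CRM fields from the call transcript. Reply in json like: {example}"},
            {"role": "user", "content": transcript},
        ],
        "response_format": {"type": "json_object"},
        "max_tokens": 2048,  # generous, so the object is not cut off mid-way
    }


def parse_crm_reply(content: Optional[str]) -> Optional[dict]:
    """Return the parsed fields, or None for empty, truncated or incomplete output."""
    if not content or not content.strip():
        return None  # JSON mode can occasionally return an empty response
    try:
        data = json.loads(content)
    except json.JSONDecodeError:
        return None  # typically a reply truncated by max_tokens
    if not isinstance(data, dict) or not all(field in data for field in CRM_FIELDS):
        return None
    return data
```

Call it with `client.chat.completions.create(**build_crm_request(transcript))` and pass `resp.choices[0].message.content` to `parse_crm_reply`. A `None` result is the signal to retry or route the call to a human.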

4. Contract red-flagging (first pass only)

V4-Pro, thinking mode. The model flags suspicious clauses; a qualified lawyer reviews. This is augmentation, not replacement. Use the dedicated DeepSeek for legal research guide before letting any AI near a counter-party agreement.

5. Marketing copy at scale

V4-Flash for volume, V4-Pro for the hero pieces. Set temperature to 1.5 for creative writing and 1.3 for general translation, per DeepSeek’s own guidance. Our DeepSeek for marketing piece has a full prompt library.
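If several teams share one integration, a small lookup keeps these settings in one place. The 1.3 and 1.5 values come from the guidance above. The coding (0.0) and data-analysis (1.0) entries reflect DeepSeek's published temperature table as we understand it. The task labels are hypothetical, so check the current docs before relying on any of this.

```python
# Temperature defaults per task, following DeepSeek's published temperature
# guidance as understood here; verify against the current docs.
TEMPERATURE_BY_TASK = {
    "coding": 0.0,
    "math": 0.0,
    "data_analysis": 1.0,
    "conversation": 1.3,
    "translation": 1.3,
    "creative_writing": 1.5,
}


def temperature_for(task: str) -> float:
    """Look up a task's temperature, falling back to the general-conversation value."""
    return TEMPERATURE_BY_TASK.get(task, TEMPERATURE_BY_TASK["conversation"])
```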

6. Financial spreadsheet narration

Paste the CSV, ask for the three-bullet executive summary, the two anomalies worth investigating, and a single follow-up question for the analyst. V4-Flash handles this for fractions of a cent. For the deeper finance-specific playbook (filings analysis, modelling, risk and reporting), see our finance workflows guide.
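Here is a sketch of the request for this workflow, assuming the data arrives as CSV text with a header row. `build_narration_messages` and its `max_rows` cap are hypothetical. The cap only keeps very large exports from blowing the token budget.

```python
import csv
import io


def build_narration_messages(csv_text: str, max_rows: int = 500) -> list[dict]:
    """Build chat messages asking for an executive summary of a CSV export."""
    rows = list(csv.reader(io.StringIO(csv_text)))
    header, body = rows[0], rows[1:max_rows + 1]
    # Re-serialise the (possibly truncated) table so the prompt stays valid CSV.
    out = io.StringIO()
    csv.writer(out).writerows([header, *body])
    instructions = (
        "Using the CSV below, give: (1) a three-bullet executive summary, "
        "(2) the two anomalies most worth investigating, and "
        "(3) one follow-up question for the analyst."
    )
    return [
        {"role": "system", "content": "You are a concise financial analyst."},
        {"role": "user", "content": f"{instructions}\n\n{out.getvalue()}"},
    ]
```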

7. Translation for multilingual support and product copy

V4-Flash at temperature=1.3. For high-stakes copy (legal, medical), keep a native-speaker reviewer in the loop.

8. Internal coding assistant

For engineering teams, V4-Pro is the better pick on agentic coding tasks. V4-Pro matches or beats GPT-5.5 on LiveCodeBench (93.5) and Codeforces (3206 vs 3168) while costing a small fraction of the price. Connect it to VS Code via our DeepSeek with VS Code walkthrough.

9. Procurement and RFP first-drafts

Long context shines here. Drop the full RFP plus your boilerplate response library into one request and ask V4-Pro for a structured first draft, then have your bid team rewrite the technical sections.

10. Compliance reviews against changing regulation

Cache the regulation text as the system prompt; ask new questions against it as user messages. This is where context caching genuinely saves four-figure monthly bills.
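Here is a minimal sketch, assuming DeepSeek's automatic caching matches on identical leading content, as the pricing notes describe. The point is ordering. The regulation text goes first and never changes, and only the question varies. Anything dynamic placed before it, such as a timestamp or a user ID, would break the shared prefix. The function name is hypothetical.

```python
def compliance_messages(regulation_text: str, question: str) -> list[dict]:
    """Put the unchanging regulation text first so every request shares the same prefix."""
    return [
        # Identical on every call: this is the part the automatic cache can reuse.
        {"role": "system",
         "content": "Answer compliance questions using only this regulation:\n\n" + regulation_text},
        # Only the question varies, and it comes last.
        {"role": "user", "content": question},
    ]
```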

What it actually costs: a worked example on V4-Flash

The single biggest mistake business buyers make when comparing AI vendors is comparing the wrong column. DeepSeek’s pricing page shows three buckets: cache-hit input, cache-miss input, and output. You pay all three on most real workloads.

Imagine an internal support agent: 1,000,000 calls per month, a 2,000-token cached system prompt, a 200-token user question (always uncached on first sight), and a 300-token answer. On deepseek-v4-flash:

| Bucket | Tokens | Rate | Cost |
|---|---|---|---|
| Input, cache hit (system prompt) | 2,000,000,000 | $0.0028 / 1M | $5.60 |
| Input, cache miss (user question) | 200,000,000 | $0.14 / 1M | $28.00 |
| Output | 300,000,000 | $0.28 / 1M | $84.00 |
| Total | | | $117.60 |

At list prices, the same workload on V4-Pro lands at roughly $1,421, about twelve times more, because cache-miss input lists at $1.74 / 1M and output at $3.48 / 1M. During the 75% promo through 2026-05-31 ($0.435 / 1M input, $0.87 / 1M output), the cache-miss and output lines alone drop to $87 and $261. At cache-miss rates, V4-Pro is ~2.9x cheaper than GPT-5.5 on input and ~8.6x cheaper on output. So even Pro is competitive against frontier US APIs; Flash is in a different price tier entirely. For a more interactive sandbox, see the DeepSeek pricing calculator.
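To rerun the arithmetic with your own traffic, a small calculator helps. This sketch hard-codes the V4-Flash rates from the worked example. The article does not quote a V4-Pro cache-hit rate, so take Pro's rates from the live pricing page before plugging them in. `Rates` and `monthly_cost` are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Rates:
    """USD per 1M tokens for each billing bucket."""
    cache_hit: float
    cache_miss: float
    output: float


# deepseek-v4-flash rates as quoted in the worked example; check the live pricing page.
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)


def monthly_cost(calls: int, cached_in: int, uncached_in: int, out: int, r: Rates) -> dict:
    """Split a monthly bill into the three buckets on DeepSeek's pricing page (per-call token counts)."""
    per_million = 1_000_000
    hit = calls * cached_in / per_million * r.cache_hit
    miss = calls * uncached_in / per_million * r.cache_miss
    output = calls * out / per_million * r.output
    return {
        "cache_hit": round(hit, 2),
        "cache_miss": round(miss, 2),
        "output": round(output, 2),
        "total": round(hit + miss + output, 2),
    }


# The internal support agent: 1M calls, 2,000 cached + 200 uncached input tokens, 300 output.
print(monthly_cost(1_000_000, 2_000, 200, 300, FLASH))
```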

Setting up DeepSeek for your team

Chat requests go to POST /chat/completions, the OpenAI-compatible endpoint, and the API also supports the Anthropic API format. If you already call DeepSeek, keep base_url and just update model to deepseek-v4-pro or deepseek-v4-flash. If your team has existing OpenAI SDK code, migration comes down to changing base_url, api_key and model. Here is the minimum Python:

```python
import os

from openai import OpenAI

# Read the key from an environment variable rather than hard-coding it.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ["DEEPSEEK_API_KEY"],
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",  # or "deepseek-v4-pro"
    messages=[
        {"role": "system", "content": "You are a support agent for Acme Co."},
        {"role": "user", "content": "How do I reset my password?"},
    ],
    temperature=1.3,  # DeepSeek's suggested setting for general conversation
    max_tokens=512,
)

print(resp.choices[0].message.content)
```

Five things worth knowing before you ship this to production:

- The API is stateless. Resend the full `messages` array on every call. The web chat at chat.deepseek.com remembers your session; the API does not.
- Thinking mode is a parameter, not a model. Add `reasoning_effort="high"` plus `extra_body={"thinking": {"type": "enabled"}}` to either V4 model. The response then returns `reasoning_content` alongside the final `content`.
- JSON mode is designed to return valid JSON, but it is not guaranteed to. Set `response_format={"type": "json_object"}`, include the word "json" in your prompt along with a small example schema, and set `max_tokens` high enough to avoid truncation. Handle the occasional empty response.
- Legacy IDs still work, for now. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking mode respectively. They will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migrate before that date with a single `model=` swap.
- Context caching is automatic. Repeated prefixes get the cache-hit rate without any flag on your side.
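The stateless and thinking-mode notes combine naturally in a small history wrapper. This sketch assumes `client` is an `openai.OpenAI` instance pointed at https://api.deepseek.com. Any object with the same `chat.completions.create` shape also works, which makes the wrapper easy to test offline. The class name is hypothetical. It deliberately resends only the final `content` of earlier turns, not `reasoning_content`, so check DeepSeek's docs for the current rule on multi-turn thinking.

```python
from typing import Any


class Conversation:
    """Client-side chat history: the API is stateless, so every call resends all turns."""

    def __init__(self, client: Any, system_prompt: str,
                 model: str = "deepseek-v4-flash", thinking: bool = False):
        self.client = client  # e.g. openai.OpenAI(base_url="https://api.deepseek.com", ...)
        self.model = model
        self.thinking = thinking
        self.messages = [{"role": "system", "content": system_prompt}]

    def ask(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        # Send a copy of the full history, not just the latest turn.
        kwargs = {"model": self.model, "messages": list(self.messages)}
        if self.thinking:
            # Thinking mode is a request parameter, not a separate model ID.
            kwargs["reasoning_effort"] = "high"
            kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
        resp = self.client.chat.completions.create(**kwargs)
        message = resp.choices[0].message
        # Store only the final answer; reasoning_content is not resent in this sketch.
        self.messages.append({"role": "assistant", "content": message.content})
        return message.content
```

For example, `conv = Conversation(OpenAI(base_url="https://api.deepseek.com", api_key=os.environ["DEEPSEEK_API_KEY"]), "You are a support agent for Acme Co.")` followed by `conv.ask("How do I reset my password?")`.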

Step-by-step setup, including key generation, billing top-up and rate limits, lives in DeepSeek API getting started.

Privacy, compliance and the China question

This is where most business conversations stop, and rightly so. DeepSeek’s first-party API processes data on servers in mainland China. Several governments have taken action: multiple US states, Australia, Taiwan, South Korea, Denmark and Italy introduced bans or other restrictions on DeepSeek-R1 shortly after its release, citing privacy and national security concerns. Treat that as the starting point of your due diligence, not the end.

Three practical paths if you want DeepSeek’s economics without its hosting:

- Self-host the open weights. V4-Flash at 160GB and V4-Pro at 865GB are both available on Hugging Face under MIT. Run them inside your own VPC, your own data, your own region.

- Use a Western-hosted reseller. Providers like OpenRouter or third-party clouds host the same weights and apply their own data-handling agreements.

- Restrict DeepSeek to non-sensitive workloads. Marketing copy, public-website FAQs, internal brainstorming. Keep customer PII and regulated data on a provider whose terms your legal team has already cleared.
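The third path is easier to enforce in code than in a policy document. Here is a minimal routing sketch. It assumes a self-hosted, OpenAI-compatible server inside your VPC; vLLM, for example, ships one. The internal URL, the workload labels and `endpoint_for` are hypothetical placeholders for whatever your legal team clears.

```python
# Hypothetical endpoints: the first-party API for cleared work, and a
# self-hosted OpenAI-compatible server inside your own VPC for everything else.
ENDPOINTS = {
    "first_party": "https://api.deepseek.com",
    "self_hosted": "http://llm.internal.example:8000/v1",
}

# Hypothetical workload labels cleared for the first-party API.
NON_SENSITIVE = {"marketing_copy", "public_faq", "brainstorming"}


def endpoint_for(workload: str, contains_pii: bool) -> str:
    """Route to the first-party API only for cleared, PII-free workloads."""
    if workload in NON_SENSITIVE and not contains_pii:
        return ENDPOINTS["first_party"]
    return ENDPOINTS["self_hosted"]  # unknown or sensitive work defaults to the safer path
```

Both endpoints speak the OpenAI-compatible protocol, so you can pass the returned URL as base_url. The model name may also differ on a self-hosted server.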

For a country-by-country availability check, see DeepSeek availability by country.

Where DeepSeek is not the right tool

Honest list, from production experience:

- Regulated industries with strict data-residency rules unless you self-host. Clinical and HIPAA-bound workflows, defence, and many financial-services workloads need contractual data-handling guarantees the first-party API does not provide.

- Long-context retrieval where every needle matters. Claude still beats V4-Pro on long-context retrieval benchmarks, and Gemini 3.1 Pro still leads MMLU-Pro. If your workload depends on needle-in-a-haystack retrieval across a million tokens, the per-token savings may not recover the quality gap.

- Multimodal-first workflows. V4 is text-first. For heavy image or audio work, look elsewhere or pair DeepSeek with a vision model.

- Brand-voice writing where the human cost of a single bad output is high. Frontier US models still edge V4 on the subtle stuff. Use V4 for volume, premium models for the hero piece.

If any of these apply, the DeepSeek vs Claude and DeepSeek vs ChatGPT comparisons walk through the trade-offs in detail.

A 30-day rollout plan for SMBs

If you are a small business with no AI infrastructure today, here is the sequence I recommend:

- Week 1. Sign up for the API, top up $20, and ship a single internal tool — meeting summariser or first-draft email assistant. Keep humans in the loop.

- Week 2. Pick the one customer-facing workflow with the highest manual effort. Prototype with V4-Flash. Track cost-per-resolution against the staff-time baseline.

- Week 3. Add caching to the system prompt. Re-run the cost analysis. The ratio almost always improves.

- Week 4. Decide: scale up, swap in V4-Pro for quality-sensitive sub-tasks, or move to a competitor if the data-handling story does not clear legal.

For broader business context across roles and industries, browse the full DeepSeek use cases hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek safe to use for business?

It depends on your data-handling requirements. The first-party API processes requests on servers in China, and several governments have placed restrictions on DeepSeek for government use. For non-sensitive workloads (marketing copy, public FAQs, internal brainstorming), most businesses are fine. For regulated data, self-host the open weights or use a Western-hosted reseller. The full breakdown sits in our DeepSeek privacy guide.

How much does DeepSeek cost for a small business?

For most SMB workloads, V4-Flash at $0.14 / 1M input (cache miss) and $0.28 / 1M output keeps monthly costs under $50 unless you are running heavy automation. A typical 100,000-call-per-month internal assistant lands around $15-$25. Heavier agentic workflows on V4-Pro will run several hundred dollars. Model your specific spend with the DeepSeek cost estimator.

Can DeepSeek replace ChatGPT for our company?

For most cost-sensitive text workflows, yes. For brand-voice writing and edge-case reasoning, frontier US models still hold a small quality edge. The honest answer is that DeepSeek is the better default for high-volume internal automation; closed-source frontier models still win on hero copy and multimodal tasks. Side-by-side benchmarks live in our DeepSeek vs ChatGPT comparison.

Does DeepSeek work with our existing developer tools?

Yes. The API is OpenAI-compatible and also supports the Anthropic API format. Existing code using the OpenAI Python or Node SDK works after changing base_url, api_key and the model name. LangChain, LlamaIndex, and most agent frameworks support DeepSeek out of the box. Our DeepSeek OpenAI SDK compatibility page covers the migration in detail.

What happens to my integration when DeepSeek retires the legacy model IDs?

The legacy deepseek-chat and deepseek-reasoner IDs currently route to V4-Flash and stop working after July 24, 2026, 15:59 UTC. Migration is a one-line model= change to deepseek-v4-flash or deepseek-v4-pro; the base URL stays the same. Plan the swap during a low-traffic window and re-run your evals. The DeepSeek API best practices guide covers the cutover checklist.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.