DeepSeek LLM Explained: Architecture, Benchmarks and Lineage

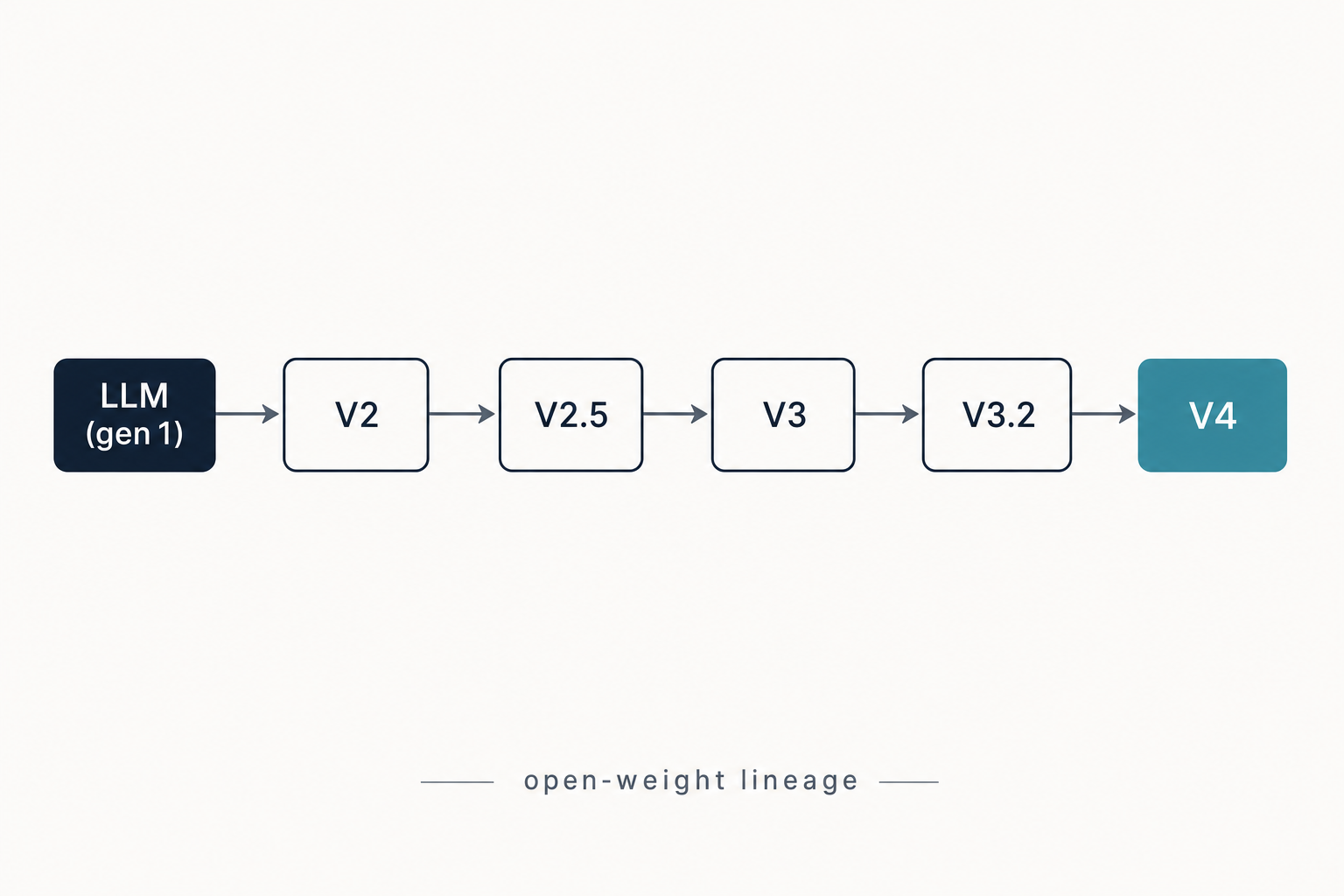

If you have arrived here looking for the latest DeepSeek model, you want V4 — but DeepSeek LLM is the release that started the whole lineage. Published on January 5, 2024, DeepSeek LLM was the lab’s first open-weight language model, shipped as 7B and 67B parameter checkpoints with both base and chat variants. It is the dense-transformer ancestor of every Mixture-of-Experts model DeepSeek has shipped since, and the paper that came with it (“Scaling Open-Source Language Models with Longtermism”) is still cited in scaling-law work today. This article walks through what DeepSeek LLM actually is, how it was built, the benchmarks it posted, where it falls short in 2026, and how to access the weights if you still want to run it.

What DeepSeek LLM is

DeepSeek LLM is the first foundation-model family from Hangzhou-based DeepSeek-AI, released on January 5, 2024 as a pair of dense decoder-only transformers. The larger of the two has 67 billion parameters. Both were trained from scratch on a 2-trillion-token dataset of Chinese and English text, and the company open-sourced the 7B and 67B Base and Chat checkpoints for the research community. The release was paired with a technical report, “DeepSeek LLM: Scaling Open-Source Language Models with Longtermism”, that was as influential as the weights themselves.

Two things to set straight up front. First, “DeepSeek LLM” specifically refers to the 7B/67B January 2024 family. It is not a synonym for the DeepSeek brand or for the current generation. Second, the lab has moved on from this architecture. The current production family is DeepSeek V4 (V4-Pro and V4-Flash, released April 24, 2026), and most users should start there or at the DeepSeek V4 overview rather than downloading the 67B weights. DeepSeek LLM is interesting today as historical context, as a research artefact for scaling-law work, and as a self-hostable dense model for teams that specifically need a 7B or 67B dense baseline.

Architecture and lineage

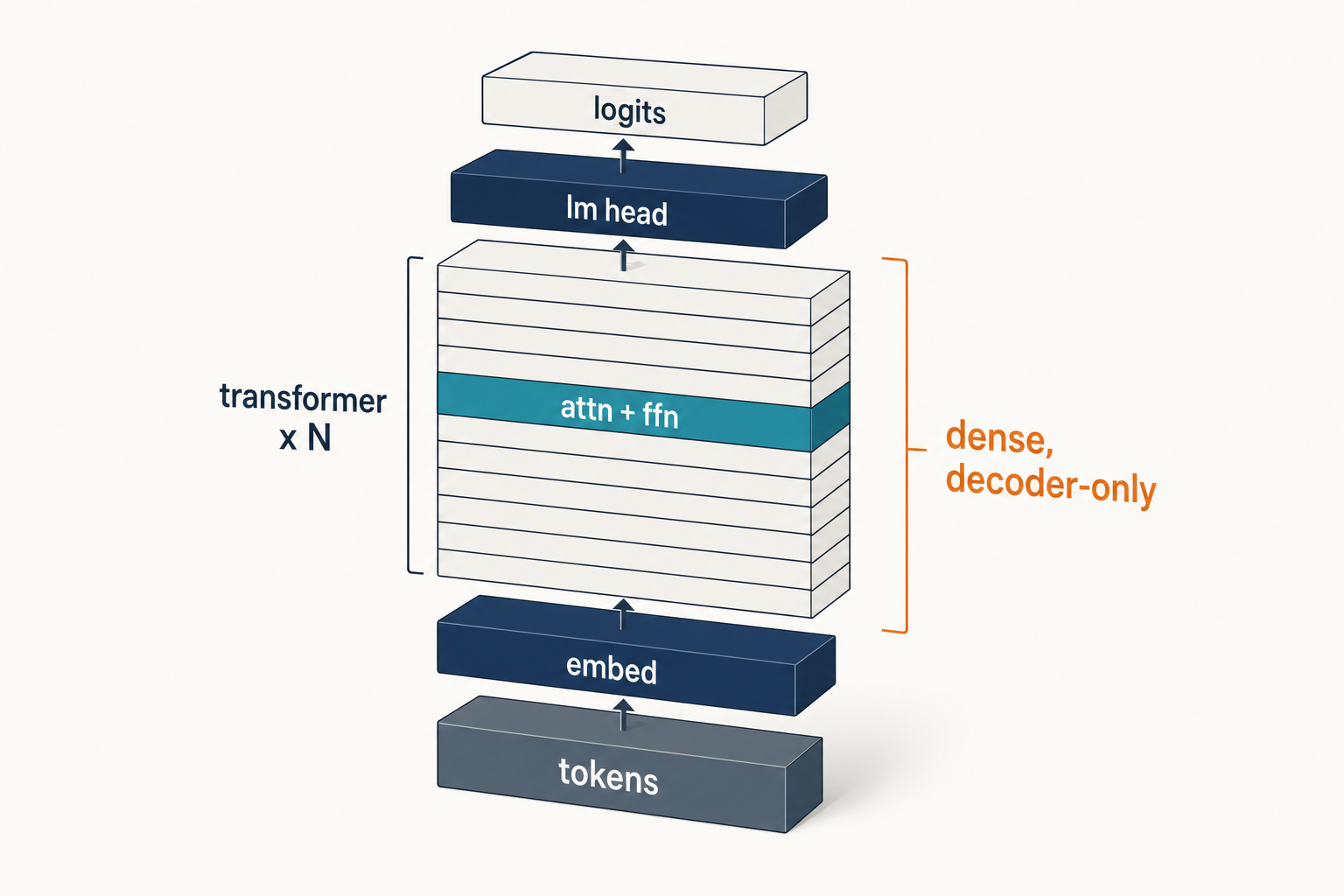

DeepSeek LLM is a LLaMA-style dense transformer with a few deliberate departures. The micro-design largely follows LLaMA: a Pre-Norm structure with RMSNorm, SwiGLU as the activation in the feed-forward network, and Rotary Position Embedding (RoPE) for positional encoding. The 7B keeps standard Multi-Head Attention (MHA), while the 67B switches to Grouped-Query Attention (GQA) to cut inference cost. Depth also differs: the 7B is a 30-layer network and the 67B has 95 layers.
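To see why GQA matters at inference time, here is a back-of-envelope KV-cache calculation. It is a sketch under stated assumptions: the layer counts come from the paragraph above, but the head counts (32 KV heads for the 7B; 64 query heads sharing 8 KV heads for the 67B) and the 128-dimension head size are our reading of the configuration table in the DeepSeek LLM paper, so check them against each checkpoint's `config.json`. It also assumes a bf16 cache (2 bytes per element) and ignores framework overhead.

```python
# Back-of-envelope KV-cache size for a decoder-only transformer.
# Layer counts are from the article; head counts and head_dim are
# assumptions based on the DeepSeek LLM paper's configuration table.

def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len, bytes_per_elem=2):
    """Bytes needed to cache keys and values for one sequence."""
    # Factor 2 = one K tensor plus one V tensor per layer.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * bytes_per_elem


GIB = 1024 ** 3
SEQ = 4096  # the full 4K context window

# 7B: MHA, so each of the 32 heads keeps its own K/V.
kv_7b = kv_cache_bytes(n_layers=30, n_kv_heads=32, head_dim=128, seq_len=SEQ)
# 67B: GQA, with 8 KV heads shared by 64 query heads (assumed config).
kv_67b_gqa = kv_cache_bytes(n_layers=95, n_kv_heads=8, head_dim=128, seq_len=SEQ)
# Hypothetical 67B with plain MHA (64 KV heads), for comparison only.
kv_67b_mha = kv_cache_bytes(n_layers=95, n_kv_heads=64, head_dim=128, seq_len=SEQ)

for name, size in [("7B (MHA)", kv_7b), ("67B (GQA)", kv_67b_gqa), ("67B if MHA", kv_67b_mha)]:
    print(f"{name:>11}: {size / GIB:.2f} GiB per 4K-token sequence")
```

Under these assumptions the 67B's GQA cache is eight times smaller than an MHA cache of the same depth would be, which is the kind of per-sequence saving that lets a server batch more concurrent requests.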

Both models were pre-trained on the same 2-trillion-token bilingual English/Chinese corpus, using a 4,096-token sequence length and the AdamW optimiser. The 7B used a batch size of 2,304 and a peak learning rate of 4.2e-4; the 67B used a batch size of 4,608 and a peak learning rate of 3.2e-4. Instead of a cosine schedule, DeepSeek used a multi-step learning-rate schedule, arguing that it made continual training and rescaling cleaner. The choice prefigured the company’s later willingness to depart from convention.
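The multi-step schedule is simple to sketch. The breakpoints below (2,000 warmup steps, a drop to 31.6% of the peak after 80% of training tokens and to 10% after 90%) come from our reading of the DeepSeek LLM paper rather than from this article; the linear warmup shape is an assumption, and the function assumes a constant batch size so that the fraction of steps taken equals the fraction of tokens processed.

```python
def multi_step_lr(step, total_steps, max_lr, warmup_steps=2000):
    """Multi-step learning-rate schedule, sketched from the DeepSeek LLM paper.

    Assumes a constant batch size, so step progress equals token progress.
    The linear warmup is an assumption of this sketch.
    """
    if step < warmup_steps:
        # Linear warmup from near 0 up to max_lr at the end of warmup.
        return max_lr * (step + 1) / warmup_steps
    progress = step / total_steps
    if progress < 0.8:
        return max_lr            # stage 1: hold at the peak
    if progress < 0.9:
        return max_lr * 0.316    # stage 2: 31.6% of the peak
    return max_lr * 0.1          # stage 3: 10% of the peak


total = 100_000  # hypothetical run length in optimizer steps
for s in (0, 1_999, 50_000, 85_000, 95_000):
    print(s, multi_step_lr(s, total, max_lr=4.2e-4))
```

The design payoff is that stage 1 does not depend on the final run length, so a longer run can branch off a peak-LR checkpoint instead of starting over; a cosine schedule tied to a fixed horizon does not allow that.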

How it fits in the family

| Model | Released | Architecture | Total / Active params | Context |
|---|---|---|---|---|
| DeepSeek LLM | 2024-01-05 | Dense (LLaMA-style) | 7B or 67B (dense, all active) | 4K |
| DeepSeek V2 | 2024-05-07 | MoE (MLA + DeepSeekMoE) | 236B / 21B | 128K |
| DeepSeek V3 | 2024-12 | MoE | 671B / 37B | 128K |
| DeepSeek R1 | 2025-01 | MoE + RL reasoning | 671B / 37B | 128K |
| DeepSeek V4-Flash / V4-Pro | 2026-04-24 | MoE | 284B / 13B (Flash); 1.6T / 49B (Pro) | 1M |

The architectural break came with V2. Released on May 7, 2024, DeepSeek-V2 is a Mixture-of-Experts model with 236 billion total parameters and 21 billion activated per token. Compared with DeepSeek LLM 67B, it cut training costs by 42.5%, shrank the KV cache by 93.3% and raised maximum generation throughput 5.76-fold. Every general-purpose flagship DeepSeek has shipped since has been MoE, which makes DeepSeek LLM the lab’s last dense flagship.

Benchmarks

The headline result the lab pushed at launch was that DeepSeek LLM 67B beat Meta’s Llama-2 70B despite having 3B fewer parameters. Evaluation results demonstrate that DeepSeek LLM 67B surpasses LLaMA-2 70B on various benchmarks, particularly in the domains of code, mathematics, and reasoning, and open-ended evaluations reveal that DeepSeek LLM 67B Chat exhibits superior performance compared to GPT-3.5. Specific chat-model numbers from the official repository:

| Benchmark | DeepSeek LLM 67B Chat | Source |
|---|---|---|
| HumanEval (Pass@1) | 73.78 | Official repo |
| GSM8K (0-shot) | 84.1 | Official repo |
| MATH (0-shot) | 32.6 | Official repo |
| Hungarian National High School Exam | 65 | Official repo |

The Hungarian exam score mattered most at the time, because the exam was a fresh, contamination-free test set (the same one xAI used to evaluate Grok-1), so a score of 65 pointed to genuine generalisation rather than memorised benchmark answers. DeepSeek also designed new problem sets of its own and reported that 67B Chat performed well on these never-before-seen exams.
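HumanEval scores the share of problems for which generated code passes the unit tests. As an illustration of the metric, here is the unbiased pass@k estimator introduced alongside HumanEval in the Codex paper (Chen et al., 2021). This article does not say whether DeepSeek sampled multiple completions or decoded greedily, so treat this as a sketch of the metric rather than a reproduction of DeepSeek’s harness; the per-problem counts are made up.

```python
from math import comb


def pass_at_k(n, c, k):
    """Unbiased pass@k for one problem: n samples generated, c of them correct."""
    if n - c < k:
        # Every size-k draw must include at least one correct sample.
        return 1.0
    # 1 minus the probability that all k drawn samples are incorrect.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results, k=1):
    """Average pass@k over problems; results is a list of (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


# Illustrative (made-up) counts for four problems, 10 samples each.
toy_results = [(10, 10), (10, 3), (10, 0), (10, 7)]
print(f"pass@1 = {benchmark_pass_at_k(toy_results, k=1):.2%}")
```

With one sample per problem (n = 1), pass@1 reduces to the plain fraction of problems solved. For what it is worth, 73.78 is what 121 of HumanEval’s 164 problems would give under single-sample scoring.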

For the base model, third-party leaderboard scores landed in a similar zone. According to the LLM Explorer index of the Hugging Face card, deepseek-llm-67b-base reports MMLU 71.9, ARC 93.7, HellaSwag 82.3, TruthfulQA 51.1, WinoGrande 84.1 and GSM8K 66.5, with a 4K context window. Numbers like these were competitive in early 2024 but are now well below current open-weight frontiers — DeepSeek’s 2026 benchmark numbers live on a different scale.

Strengths

- Strong bilingual performance. DeepSeek LLM 67B Chat surpasses GPT-3.5 in Chinese. If your workload is Chinese-heavy and you need a dense self-hostable model, the 67B is still a reasonable starting point for fine-tuning experiments.

- Honest scaling-law contribution. The accompanying paper introduced fresh batch-size and learning-rate scaling formulas that have been cited across subsequent open-source work.

- Permissive commercial use. The code repository is licensed under the MIT License, the use of DeepSeek LLM models is subject to the Model License, and DeepSeek LLM supports commercial use. The split (MIT for code, separate model licence for weights) is the same pattern DeepSeek used through V3 base.

- Dense, not MoE. If your inference stack does not handle expert routing well, a dense 7B or 67B is operationally simpler than an MoE model of comparable quality.
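The scaling formulas mentioned above fit the optimal learning rate and batch size as power laws in the compute budget C. The sketch below uses the coefficients as we read them from the paper (η_opt = 0.3118 · C^-0.1250 and B_opt = 0.2920 · C^0.3271, with C in FLOPs and B in tokens) together with the paper’s non-embedding FLOPs-per-token estimate M = 72·n_layer·d_model² + 12·n_layer·d_model·l_seq, so that C = M · D for D training tokens. The model widths (4,096 for the 7B, 8,192 for the 67B) are assumptions from the paper’s configuration table. Treat the output as a consistency check, not a training recipe.

```python
def flops_per_token(n_layer, d_model, seq_len=4096):
    """Non-embedding FLOPs per token, M, as defined in the DeepSeek LLM paper."""
    return 72 * n_layer * d_model ** 2 + 12 * n_layer * d_model * seq_len


def optimal_hparams(compute_flops):
    """Power-law fits for peak learning rate and batch size (in tokens)."""
    lr = 0.3118 * compute_flops ** -0.1250
    batch_tokens = 0.2920 * compute_flops ** 0.3271
    return lr, batch_tokens


TOKENS = 2e12  # both models were trained on ~2T tokens
SEQ = 4096     # training sequence length

# d_model values are assumptions taken from the paper's config table.
for name, n_layer, d_model in [("7B", 30, 4096), ("67B", 95, 8192)]:
    compute = flops_per_token(n_layer, d_model, SEQ) * TOKENS  # C = M * D
    lr, batch_tokens = optimal_hparams(compute)
    print(f"{name}: C={compute:.2e}  lr~{lr:.1e}  batch~{batch_tokens / SEQ:.0f} sequences")
```

Under these assumptions the fits land near the settings quoted in the training-setup paragraph: roughly 4.2e-4 and about 2,250 sequences for the 7B (versus 4.2e-4 and 2,304 used), and roughly 3.1e-4 and about 5,000 sequences for the 67B (versus 3.2e-4 and 4,608).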

Weaknesses

- 4K context window. By 2026 standards this is tiny: the current V4 family ships with a 1,000,000-token default, so anything involving long documents, RAG over large corpora or extended agentic workflows is out of scope.

- No reasoning trace. DeepSeek LLM predates R1. There is no `reasoning_content` field, no inference-time scaling, no “thinking” mode. For math-heavy or multi-step tasks, even a small R1 distill will outperform 67B Chat.

- Hardware demand. The 67B base model needs roughly 135 GB of VRAM at fp16, per the LLM Explorer index — well beyond a single consumer GPU. The 7B is much more tractable; our hardware calculator covers the quantisation maths.

- Superseded on every public benchmark. Coder-V2, V3, R1, V3.2 and V4-Flash all post higher scores at lower $/token when accessed through the API. For most readers, “DeepSeek LLM” is a research footnote, not a production target.
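The VRAM figure in the hardware bullet is mostly parameter-count arithmetic: weights occupy roughly parameters × bits per parameter ÷ 8 bytes. A minimal sketch, using the nominal 7B and 67B sizes; it deliberately ignores the KV cache, activations and framework overhead, so real deployments need headroom on top.

```python
def weight_memory_gb(n_params, bits_per_param):
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return n_params * bits_per_param / 8 / 1e9


# Nominal parameter counts; exact checkpoint sizes differ slightly.
for name, n_params in [("7B", 7e9), ("67B", 67e9)]:
    for label, bits in [("fp16/bf16", 16), ("8-bit", 8), ("4-bit", 4)]:
        print(f"{name} @ {label:>9}: ~{weight_memory_gb(n_params, bits):.0f} GB")
```

At 16 bits the 67B works out to about 134 GB of weights, in line with the roughly 135 GB figure; even a 4-bit build needs about 34 GB for weights, more than a single 24 GB card holds.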

How to access DeepSeek LLM

There is no first-party hosted API for DeepSeek LLM 7B/67B in 2026 — the lab’s hosted endpoint serves the V4 family, with the legacy IDs `deepseek-chat` and `deepseek-reasoner` routing to `deepseek-v4-flash` until they retire on 2026-07-24 at 15:59 UTC. To use DeepSeek LLM specifically, you self-host the open weights:

- Hugging Face: the four canonical repositories are `deepseek-ai/deepseek-llm-7b-base`, `deepseek-ai/deepseek-llm-7b-chat`, `deepseek-ai/deepseek-llm-67b-base` and `deepseek-ai/deepseek-llm-67b-chat`.

- Quantised builds: community GGUF and AWQ conversions exist for both sizes; see our Ollama walkthrough for an Ollama-friendly approach.

- Local install: for a from-scratch setup, the install DeepSeek locally tutorial covers the dependencies.

A minimal Python load using Hugging Face Transformers (use the 7B if you only have one GPU):

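```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "deepseek-ai/deepseek-llm-7b-chat"

tokenizer = AutoTokenizer.from_pretrained(model_name)
# bf16 halves memory versus fp32; device_map="auto" (requires `accelerate`)
# places the weights on the available GPUs.
model = AutoModelForCausalLM.from_pretrained(
    model_name, torch_dtype=torch.bfloat16, device_map="auto"
)

# Chat checkpoints expect their chat template, not a raw prompt string.
messages = [{"role": "user", "content": "Explain RMSNorm in one paragraph."}]
input_ids = tokenizer.apply_chat_template(
    messages, add_generation_prompt=True, return_tensors="pt"
).to(model.device)

out = model.generate(
    input_ids,
    max_new_tokens=200,
    pad_token_id=tokenizer.eos_token_id,  # avoids the missing-pad-token warning
)
# Decode only the newly generated tokens, not the echoed prompt.
print(tokenizer.decode(out[0][input_ids.shape[1]:], skip_special_tokens=True))
```

If your real goal is “I want to call DeepSeek from code”, do not run DeepSeek LLM; call the hosted V4 API instead. Chat requests go to `POST /chat/completions`, the OpenAI-compatible endpoint, with `base_url="https://api.deepseek.com"`. DeepSeek also exposes an Anthropic-compatible surface on the same host. Minimal Python with the OpenAI SDK: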

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",  # replace with your key, ideally read from the environment
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Hello"}],
)

print(resp.choices[0].message.content)
```

Note that the API is stateless: clients must resend the full conversation history with every request, unlike the web chat and mobile app, which maintain session history. Useful parameters include `temperature` (0.0 for code and 1.3 for general chat, per DeepSeek’s guidance), `top_p`, `max_tokens` and `reasoning_effort` (`"high"`, combined with `extra_body={"thinking": {"type": "enabled"}}` for thinking mode, which returns `reasoning_content` alongside the final `content`). The full DeepSeek API documentation covers JSON mode, streaming, function calling and context caching.
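Because the API is stateless, the client owns the conversation history. A minimal sketch in plain Python, assuming only the OpenAI-style message format: `Conversation` and `fake_send` are hypothetical names for illustration, and the fake sender stands in for a real API call so the logic runs offline. In practice `send` would wrap `client.chat.completions.create(...)` from the example above.

```python
class Conversation:
    """Hypothetical client-side history keeper for a stateless chat API."""

    def __init__(self, send, system_prompt=None):
        # `send` takes a list of message dicts and returns the reply text.
        self.send = send
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text):
        self.messages.append({"role": "user", "content": user_text})
        # The full history goes out with every request.
        reply = self.send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages):
    # Stand-in for a real API call: reports how much history it received.
    return f"received {len(messages)} messages"


chat = Conversation(fake_send, system_prompt="You are concise.")
print(chat.ask("Hello"))       # received 2 messages
print(chat.ask("And again?"))  # received 4 messages
```

The request payload grows with every turn, which is one reason context caching matters for long conversations.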

Pricing snapshot (for the API, not for self-hosted DeepSeek LLM)

Self-hosting DeepSeek LLM is “free” only in the API-cost sense — you pay for the GPUs. For workloads where the hosted API is appropriate, V4 pricing as of April 2026 (verify on the DeepSeek API pricing page):

| Tier | Input cache hit ($/M) | Input cache miss ($/M) | Output ($/M) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

V4-Pro is currently 75% off through 2026-05-31 (input cache-miss $0.435/M, output $0.87/M).
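Since each token class is billed separately, a request’s cost is a weighted sum. A minimal sketch using the April 2026 per-million-token figures from the table above (they will drift, and the Pro promo rates apply only through 2026-05-31); the dictionary keys are our own labels, not API model IDs, and the token counts in the example are made up.

```python
# USD per million tokens, April 2026 snapshot from the pricing table.
# Keys are illustrative labels, not model IDs; re-check the live pricing page.
PRICES = {
    "v4-flash": {"hit": 0.0028, "miss": 0.14, "output": 0.28},
    "v4-pro (promo)": {"hit": 0.003625, "miss": 0.435, "output": 0.87},
    "v4-pro (list)": {"hit": 0.0145, "miss": 1.74, "output": 3.48},
}


def request_cost(tier, cache_hit_tokens, cache_miss_tokens, output_tokens):
    """Estimated USD cost of one request, billing each token class separately."""
    p = PRICES[tier]
    return (
        cache_hit_tokens * p["hit"]
        + cache_miss_tokens * p["miss"]
        + output_tokens * p["output"]
    ) / 1_000_000


# Example: a 20K-token prompt where 15K tokens hit the cache, plus a 1K-token reply.
for tier in PRICES:
    cost = request_cost(tier, cache_hit_tokens=15_000, cache_miss_tokens=5_000, output_tokens=1_000)
    print(f"{tier}: ${cost:.6f}")
```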

Off-peak discounts ended on 2025-09-05 and were not reintroduced with V4. DeepSeek may offer a granted balance — a small promotional credit that can expire — so check the billing console for current offers rather than assuming free credits.

Best use cases for DeepSeek LLM today

- Scaling-law research. The 7B and 67B checkpoints, plus intermediate checkpoints DeepSeek released, are useful as a fixed reference point.

- Bilingual fine-tuning baselines where a dense model is preferred — see the role-specific patterns in our DeepSeek for research and DeepSeek for translation guides.

- Air-gapped deployments where the operational simplicity of a dense model outweighs benchmark quality.

- Teaching and reproduction — running 7B Chat on a single GPU is a clean classroom example of LLaMA-family architecture without the complexity of MoE routing.

For everything else — coding assistance, agents, RAG, reasoning, long-context work — pick a current model. DeepSeek Coder V2 and the V4 family will outperform DeepSeek LLM on every coding and reasoning benchmark we have run, and at lower total cost when you factor in inference time.

Comparable alternatives

If you are evaluating DeepSeek LLM against something else, the relevant comparisons are not against current ChatGPT or Claude — those are different generations entirely. Useful frames:

- Within the DeepSeek lineage: read DeepSeek V3 vs GPT-4o to see how far the family moved in 12 months.

- Against other open-weight families: DeepSeek vs Llama covers the historical Llama-2/3 contrast directly.

- For the broader landscape, see the DeepSeek alternatives hub.

- Browse the full DeepSeek models hub for every release with current status.

Verdict

DeepSeek LLM is a well-executed, well-documented dense transformer that delivered what its paper claimed in early 2024: it beat Llama-2 70B and outperformed GPT-3.5 in open-ended evaluation, with reproducible scaling-law work behind it. As a 2026 production model it has been outclassed on every public benchmark by DeepSeek’s own MoE successors. Run it for research, teaching, or specific bilingual fine-tuning experiments; for everything else, use V4-Flash or V4-Pro.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What is DeepSeek LLM?

DeepSeek LLM is the lab’s first foundation-model family, released on January 5, 2024 as 7B and 67B dense decoder-only transformers, each shipped in Base and Chat variants. Both sizes were trained from scratch on a 2-trillion-token dataset of Chinese and English text, and the company open-sourced all four Base and Chat checkpoints for the research community. See our what is DeepSeek overview for the broader context.

Is DeepSeek LLM open source?

Yes, with a caveat. The code repository is MIT-licensed, while the weights are governed by a separate DeepSeek Model License that permits commercial use; DeepSeek kept the same split through V3 base. Newer releases (R1, V3.1, V3.2, V4) publish weights under MIT directly. More detail in our “Is DeepSeek open source?” guide.

How does DeepSeek LLM 67B compare to Llama 2 70B?

On the lab’s own evaluations, the 67B beat Llama-2 70B with 3B fewer parameters. DeepSeek 67B surpassed LLaMA-2 70B across various benchmarks, particularly excelling in code, mathematics, and reasoning tasks, and in open-ended conversational evaluations DeepSeek 67B Chat outperformed GPT-3.5. For a current head-to-head with the wider Llama family, see DeepSeek vs Llama.

Can I run DeepSeek LLM locally?

Yes. The 7B fits on a single 24 GB consumer GPU even in bf16 (roughly 14 GB of weights), and 4-bit quantisation leaves ample headroom; the 67B needs roughly 135 GB of VRAM at fp16, so plan for multi-GPU or aggressive quantisation. Both sizes have community GGUF and AWQ builds. The install DeepSeek locally tutorial covers dependencies, and DeepSeek system requirements has the hardware specifics.

Should I use DeepSeek LLM or DeepSeek V4 in 2026?

For almost any production workload, V4. DeepSeek LLM has a 4K context window, no thinking mode, and benchmark scores below DeepSeek’s own MoE successors. The current V4-Flash and V4-Pro models offer a 1,000,000-token context, optional thinking mode, and lower per-token costs through the hosted API. Use DeepSeek LLM only for research, teaching, or specific dense-model experiments. The DeepSeek V4-Flash page covers what to migrate to.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek-LLM-67B-Chat model card. 67B Chat checkpoint and benchmark scores. Last checked: April 30, 2026

- Model card: DeepSeek-LLM-7B-Chat model card. 7B Chat checkpoint for single-GPU use. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Technical report: DeepSeek LLM: Scaling Open-Source Language Models with Longtermism (arXiv:2401.02954). Scaling-law work, training recipe, GQA decision. Last checked: April 30, 2026

Context sources

- Analysis: LLM Explorer index of DeepSeek-LLM-67B-Base. Third-party MMLU/ARC/HellaSwag/GSM8K leaderboard figures. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.