DeepSeek vs ChatGPT: Which One Should You Actually Use in 2026?

If you are deciding between DeepSeek and ChatGPT today, the right answer depends less on which is “smarter” and more on how you plan to use them. Both shipped frontier-tier updates within 24 hours of each other: OpenAI’s GPT-5.5 on April 23, 2026, and DeepSeek V4 (Preview) on April 24. The two have drifted into genuinely different products. ChatGPT is a broad consumer and enterprise application with agents, Codex, connectors and a polished UI. DeepSeek is a model-and-API house with minimal chat polish and an aggressive open-weights posture. This guide compares the two on coding, reasoning, writing, price, privacy and ecosystem, with real benchmark numbers and a worked cost example so you can pick with your eyes open.

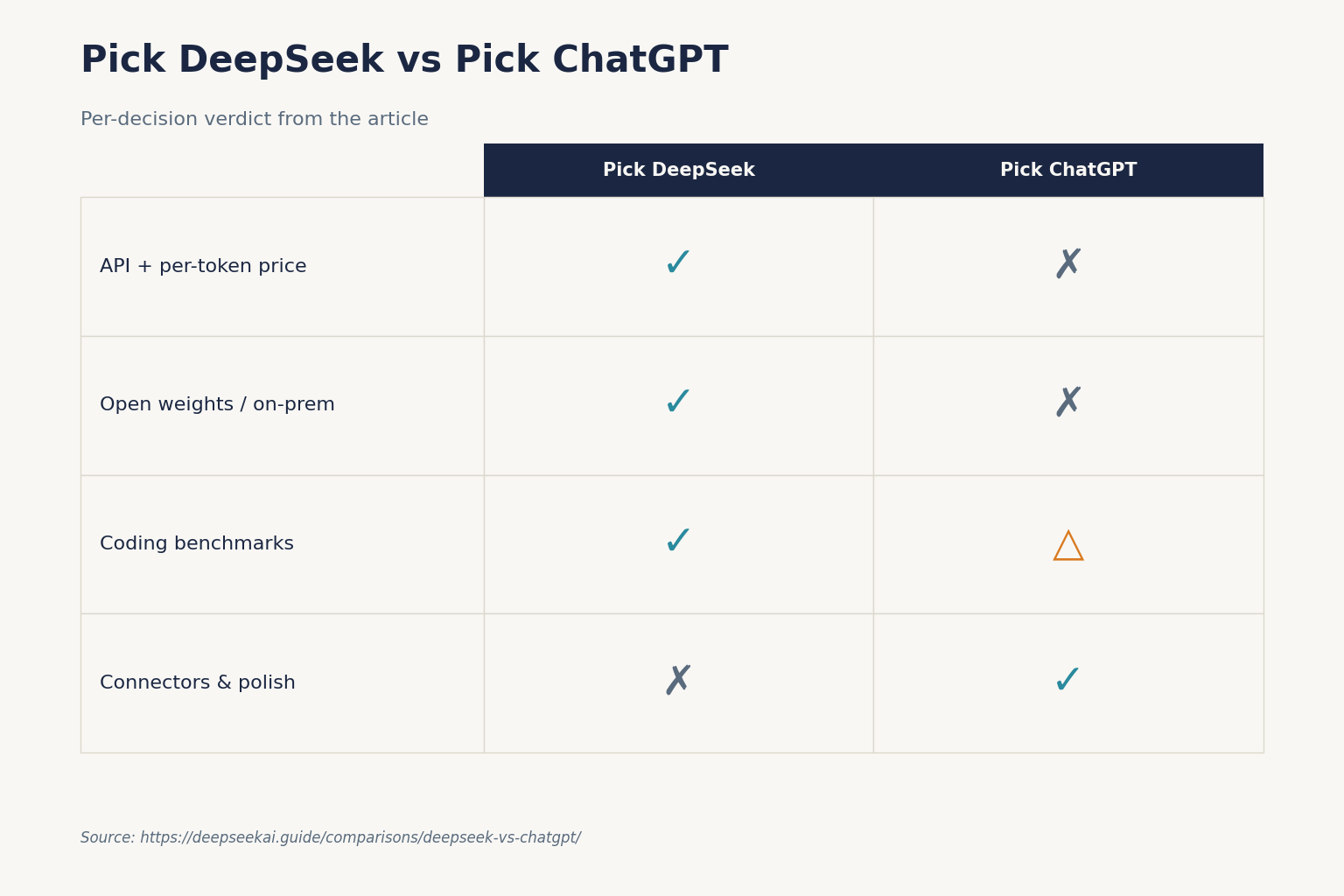

The short verdict

For most individuals who want a single assistant that handles email, research, image generation, voice mode, shared projects and app integrations, ChatGPT wins. It is a better product, not just a better model. For developers building on an API, for teams that need open weights they can self-host, or for anyone cost-sensitive at scale, DeepSeek wins decisively on price. DeepSeek V4-Flash lists at $0.14 input (cache miss) / $0.28 output per 1M tokens. Against GPT-5.5 at $5.00 input / $30.00 output per 1M tokens, that is roughly 107× cheaper on output and about 36× cheaper on input.

The honest nuance: on coding benchmarks DeepSeek’s top tier is competitive with the OpenAI frontier; on general knowledge, agentic tool use, multimodality and polish, ChatGPT still has the edge.

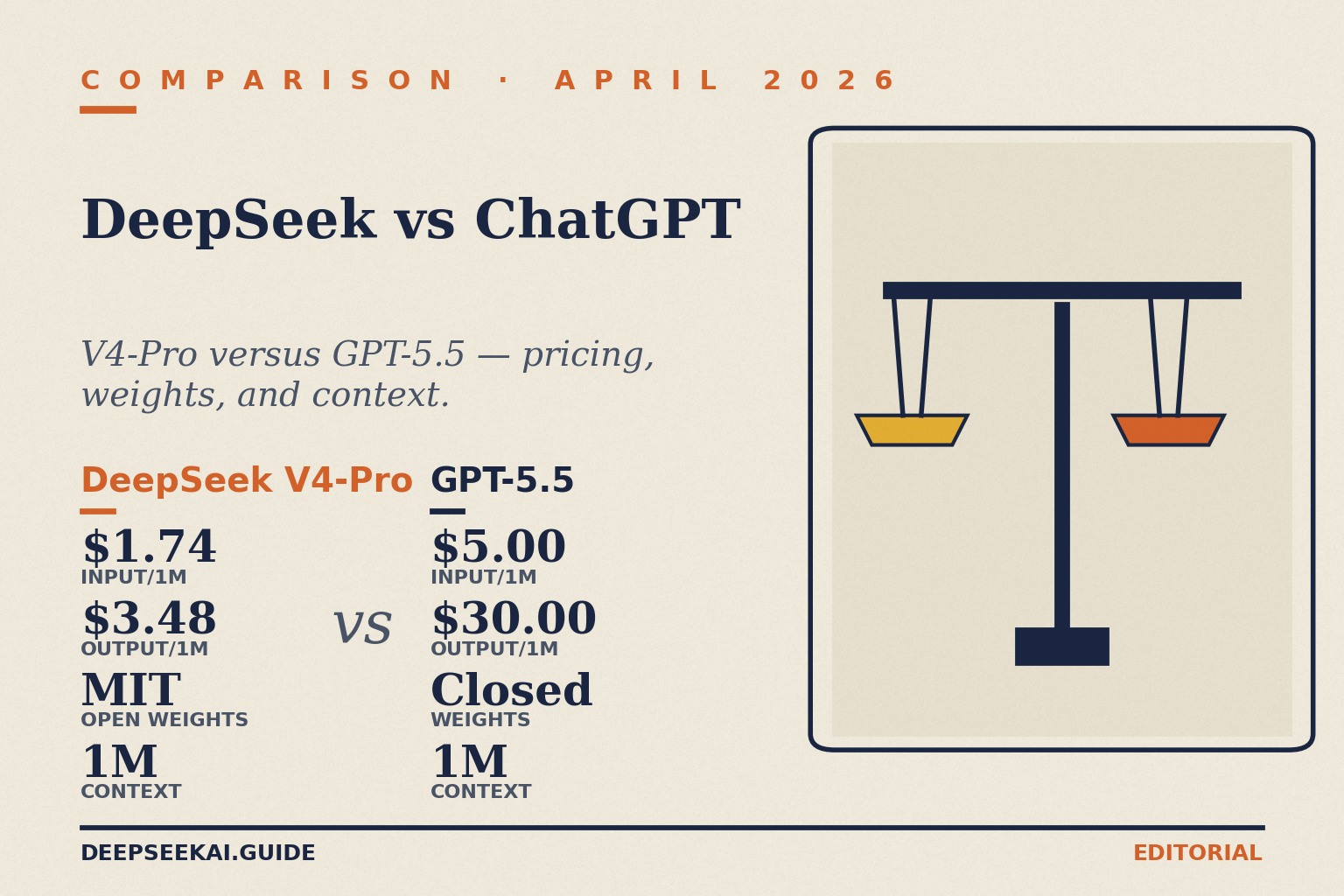

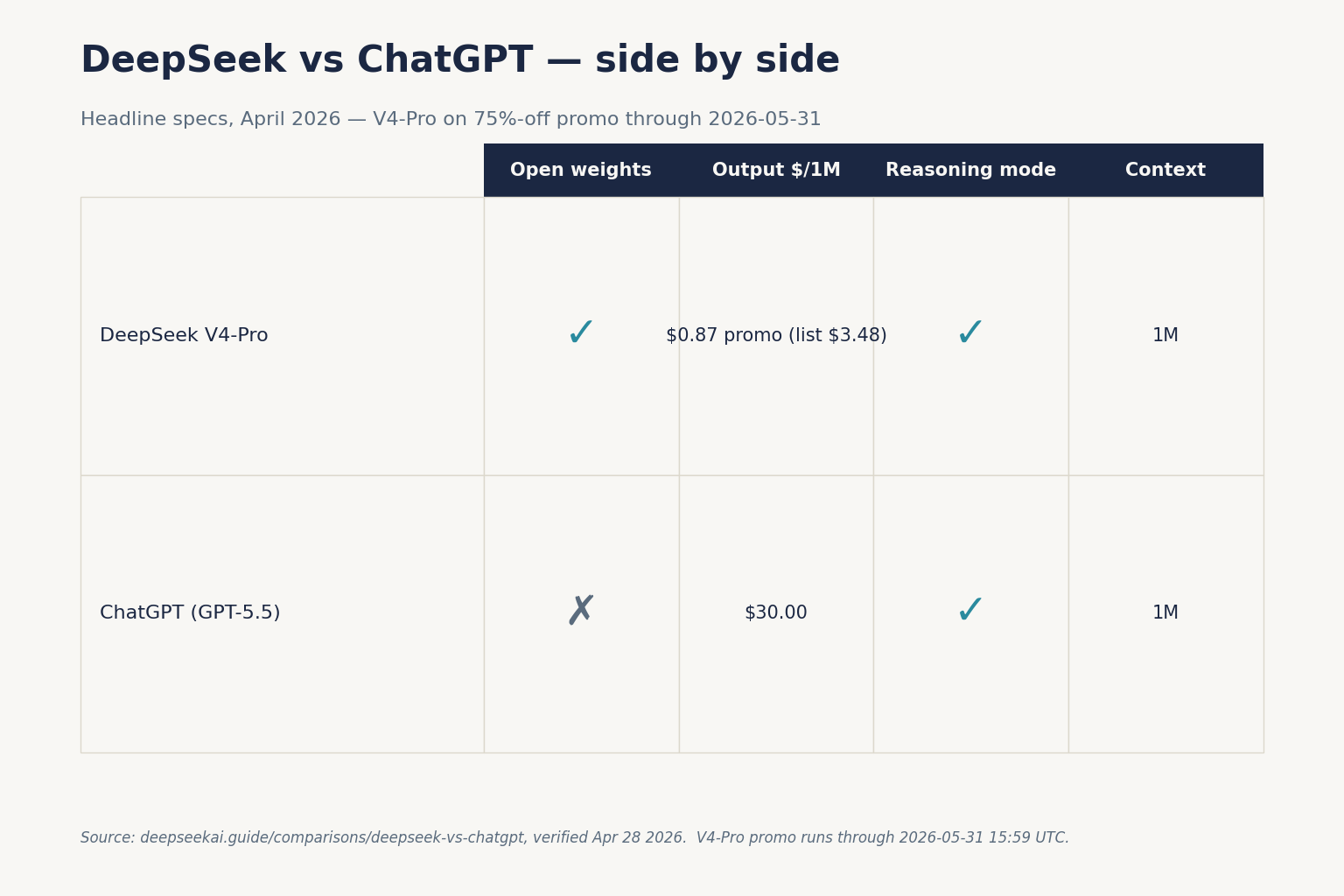

At-a-glance comparison

Numbers below reflect the public pricing and benchmark pages as of April 24, 2026. For DeepSeek we show the two V4 tiers — the frontier DeepSeek V4-Pro and the cost-efficient V4-Flash. For ChatGPT we show the two current API flagships.

| Feature | DeepSeek V4-Flash | DeepSeek V4-Pro | GPT-5.4 (API) | GPT-5.5 (API) |
|---|---|---|---|---|
| Input, cache miss ($/1M) | $0.14 | $0.435 promo / $1.74 list | $2.50 | $5.00 |
| Output ($/1M) | $0.28 | $0.87 promo / $3.48 list | $15.00 | $30.00 |
| Context window | 1,000,000 tokens | 1,000,000 tokens | ~1M tokens | 1M tokens |
| Max output | 384,000 tokens | 384,000 tokens | 128,000 tokens | Not specified here |
| Open weights | Yes, MIT | Yes, MIT | No | No |
| Thinking mode | Request parameter | Request parameter | reasoning_effort | reasoning_effort |
| Release | 2026-04-24 | 2026-04-24 | Earlier 2026 | 2026-04-23 |

GPT-5.4 costs $2.50 per million input tokens and $15.00 per million output tokens. With the April 23, 2026 release of GPT-5.5, OpenAI doubled the per-token price on the GPT-5 line: input goes from $2.50 to $5.00 per million tokens and output from $15.00 to $30.00. DeepSeek’s V4-Flash sits well below both.
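
The multiples quoted in this guide follow directly from the table. As a quick check, here is a minimal sketch that divides one list price by another. The dictionary keys are our own labels for the table columns, not confirmed API model IDs, and the prices are the headline rates above, ignoring caching and promos.

```python
# Headline list prices in USD per 1M tokens, copied from the table above.
# Keys are illustrative labels for the table columns, not API model IDs.
PRICES = {
    "deepseek-v4-flash": {"input": 0.14, "output": 0.28},
    "deepseek-v4-pro-list": {"input": 1.74, "output": 3.48},
    "gpt-5.4": {"input": 2.50, "output": 15.00},
    "gpt-5.5": {"input": 5.00, "output": 30.00},
}


def price_ratio(expensive: str, cheap: str, bucket: str) -> float:
    """Return how many times more `expensive` costs than `cheap` per token."""
    return PRICES[expensive][bucket] / PRICES[cheap][bucket]


if __name__ == "__main__":
    for bucket in ("input", "output"):
        ratio = price_ratio("gpt-5.5", "deepseek-v4-flash", bucket)
        print(f"GPT-5.5 vs V4-Flash, {bucket}: {ratio:.1f}x")
    # GPT-5.5 vs V4-Flash, input: 35.7x
    # GPT-5.5 vs V4-Flash, output: 107.1x
```

Swapping in the promo rates or cache-hit rates changes the ratios, so treat these as headline figures only.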

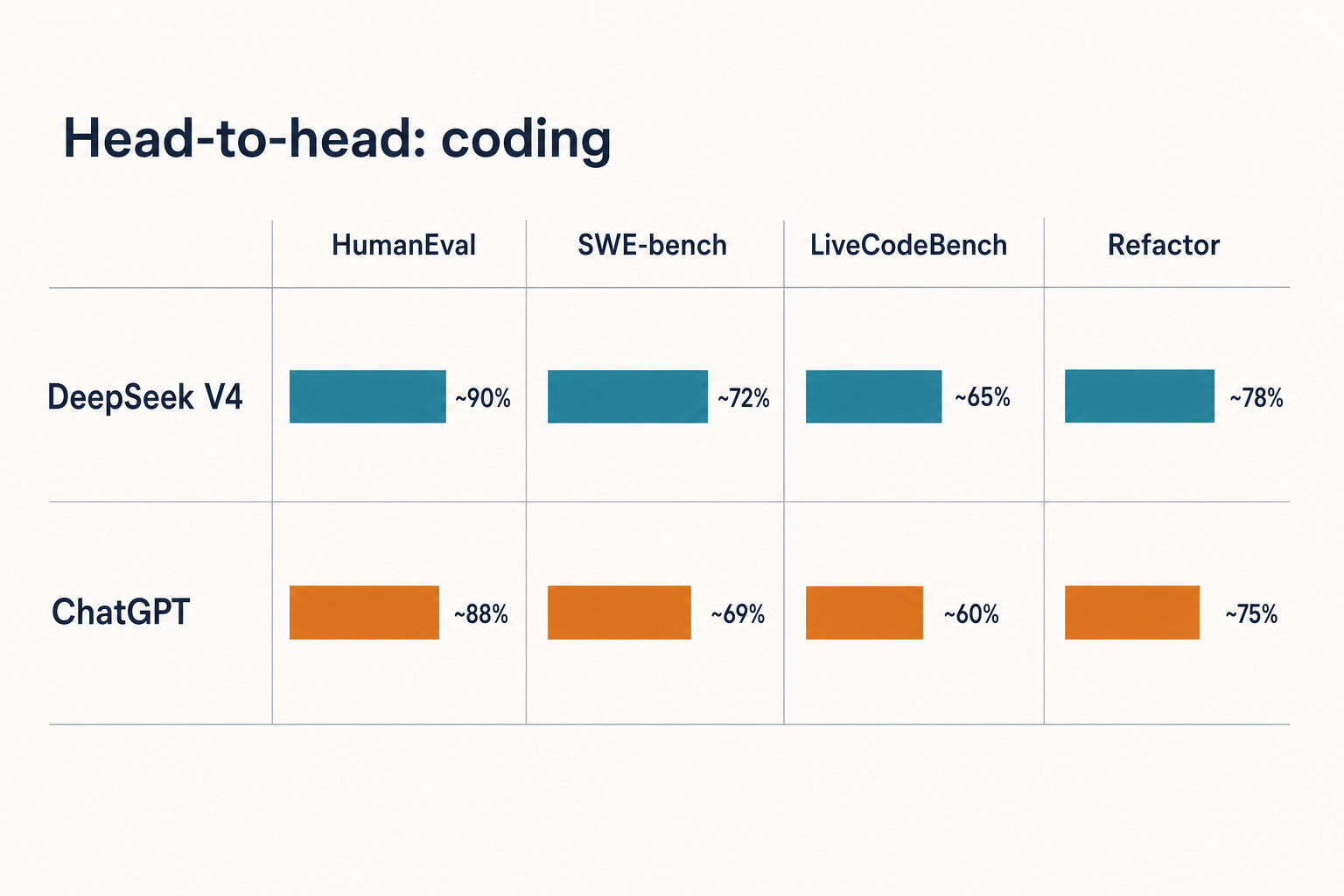

Head-to-head: coding

This is where the gap is narrowest and the DeepSeek value proposition is strongest.

By DeepSeek’s reported V4 figures, DeepSeek V4-Pro activates 49 billion parameters per token, supports a 1 million token context window, and achieves 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6 (80.8%). Elsewhere on coding benchmarks, V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and it posts a Codeforces rating of 3206 where Claude has no reported score.

Against OpenAI specifically, the picture is more mixed. GPT-5.4 wins HMMT, Apex, GDPval-AA, and Terminal Bench 2.0 by a wide margin (75.1 vs 67.9). OpenAI has since released GPT-5.5 at 82.7% on Terminal Bench 2.0, which re-opens the gap on agentic coding. So if your primary workload is long-horizon agentic coding — many tool calls, minutes-to-hours of autonomous work — GPT-5.5 Thinking is ahead. For single-turn competitive programming and pull-request-sized patches, V4-Pro is roughly a peer at a fraction of the price.

V4-Flash is the surprise. It has 284 billion total parameters (13B active) and costs $0.28/M output tokens, about 12.4× cheaper than Pro’s $3.48/M list price (about 3.1× against Pro’s $0.87/M promo price). On SWE-bench Verified, Flash scores 79.0% versus Pro’s 80.6%. That puts a $0.28/M-output model within a couple of points of a frontier closed model on real GitHub-issue benchmarks. For most day-to-day coding, Flash is the right default. Our longer write-up on DeepSeek for coding covers the tradeoffs in detail.

Head-to-head: reasoning

Both V4 models expose thinking mode as a request parameter, not as a separate model ID. In the API you set reasoning_effort="high" and pass extra_body={"thinking": {"type": "enabled"}}. The model then returns reasoning_content alongside the final content. For max effort you use reasoning_effort="max", which requires a context window of at least 384K tokens to avoid truncation.
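
A minimal sketch of that request shape, assuming the OpenAI Python SDK is pointed at DeepSeek’s base URL. The helper name build_thinking_request is ours and not part of any SDK; it only assembles the keyword arguments described above, so it runs without a network call. Reading reasoning_content with getattr is a defensive assumption, since the field is a DeepSeek extension rather than a standard SDK attribute.

```python
from typing import Any


def build_thinking_request(
    prompt: str,
    model: str = "deepseek-v4-pro",
    effort: str = "high",
) -> dict[str, Any]:
    """Assemble keyword arguments for client.chat.completions.create().

    Hypothetical helper: it mirrors the parameters named in the text
    (reasoning_effort plus the extra_body thinking switch) and nothing more.
    """
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,
        "extra_body": {"thinking": {"type": "enabled"}},
    }


if __name__ == "__main__":
    request = build_thinking_request("Prove that 17 is prime.")
    print(request["reasoning_effort"], request["extra_body"])

    # With a real key, the call would look like this (not executed here):
    # from openai import OpenAI
    # client = OpenAI(base_url="https://api.deepseek.com", api_key="...")
    # resp = client.chat.completions.create(**request)
    # message = resp.choices[0].message
    # print(getattr(message, "reasoning_content", None))  # reasoning trace
    # print(message.content)                              # final answer
```

Keeping the arguments in a plain dictionary makes it easy to log or diff the exact request when you switch between effort levels.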

OpenAI’s equivalent is the GPT-5 Thinking variants inside ChatGPT and the reasoning_effort parameter on the GPT-5 family API. OpenAI does not expose the full internal step-by-step reasoning through the API — you get a reasoning plan or summary, not the raw trace.

On pure reasoning benchmarks the closed models still lead in places. On HLE (Humanity’s Last Exam), V4-Pro’s 37.7% sits below GPT-5.4 (39.8%) and Claude (40.0%), and well below Gemini-3.1-Pro (44.4%). On the HMMT 2026 math competition, Claude (96.2%) and GPT-5.4 (97.7%) edge ahead of V4-Pro (95.2%). These are small absolute gaps, but on the hardest questions they compound.

For a deeper treatment of DeepSeek’s reasoner lineage, see DeepSeek R1 and our DeepSeek R1 vs OpenAI o1 comparison.

Head-to-head: writing and general knowledge

ChatGPT remains my default for long-form writing and research where tone, voice and citations matter. OpenAI has invested in product surface (Canvas, Projects, voice mode, file attachments, memory) that DeepSeek simply does not have. On MMLU-Pro, V4-Pro scores 87.5, matching GPT-5.4 exactly and trailing Gemini-3.1-Pro (91.0) and Claude Opus 4.6 (89.1). Factual recall shows a wider gap: V4-Pro scores 57.9% on SimpleQA-Verified versus Gemini’s 75.6%. If your use case requires accurate real-world knowledge recall, not just code generation, Gemini holds a clear edge. OpenAI sits between those two on factual recall.

In practice, DeepSeek writes competent English prose with occasional tells — the odd phrasing that flags a Chinese-trained model, slightly stiffer tone. For content teams, that matters. For internal notes, it does not.

Head-to-head: pricing and plans

This is where the comparison gets lopsided.

ChatGPT consumer plans

Consumer plans now run from free to $200 per month. Before April 9, 2026, the lineup was: Free (which now includes ads), an $8/month Go plan (also with ads), a $20/month Plus plan (ad-free), and a $200/month Pro plan (ad-free). On April 9, 2026, OpenAI added a $100/month ChatGPT Pro tier, geared at heavier Codex use, which sits between the $20 Plus plan most casual users know and the existing $200 Pro plan.

For teams and organisations, OpenAI offers ChatGPT Team and ChatGPT Enterprise, each priced and structured according to usage and organisational needs. ChatGPT Team is priced at $25 per user/month (billed annually) or $30 per user/month (billed monthly). It includes access to GPT-5 with increased usage limits, shared workspaces, and basic administrative tools.

The free and lower-tier plans have dynamic usage limits that OpenAI adjusts by plan and traffic; heavy users fall back to a smaller model after caps. Exact model-picker labels inside ChatGPT change frequently — assume the GPT-5 family is what you are talking to on paid tiers.

DeepSeek plans

DeepSeek has no “Plus vs Pro” consumer tiers. The web chat at chat.deepseek.com is free to use with V4 as the default model and a DeepThink toggle for thinking mode. DeepSeek does not publicly document a daily message cap as of April 2026 — practical limits exist under load but are not posted. For scale you go to the API, where you pay per token, not per seat. See our is DeepSeek free page for the boundary between the free web chat and paid API access.

API pricing compared

Assuming OpenAI-compatible wire formats and similar workloads, the per-million-token gap is large. DeepSeek may offer a granted balance, a small promotional credit that can expire; check the billing console for current offers. OpenAI has historically applied credits to new accounts as well; verify both on the respective providers’ billing pages.

When to pick DeepSeek vs ChatGPT

Pick ChatGPT if:

- You want one assistant for email, research, images, voice, Canvas and integrations. Connectors such as Slack, Google Drive, SharePoint, GitHub and Atlassian are available on business plans (availability varies by plan and workspace admin).

- You care about product polish, shared projects and mobile apps more than per-token price.

- You are doing long-horizon agentic coding where GPT-5.5 Thinking’s Terminal Bench 2.0 lead matters.

- Your organisation has a procurement preference for a US-hosted provider.

Pick DeepSeek if:

- You are building on an API and per-token price is a budget lever. V4-Flash is roughly 107× cheaper on output (and about 36× cheaper on input) than GPT-5.5.

- You need open weights for on-prem deployment, fine-tuning or compliance. DeepSeek released V4-Pro and V4-Flash on April 24, 2026 under the MIT License.

- Your workload is coding-heavy and benchmark parity on SWE-bench Verified, LiveCodeBench or Codeforces is what you actually care about.

- You want the full 1M-token context with up to 384K tokens of output for long-document work.

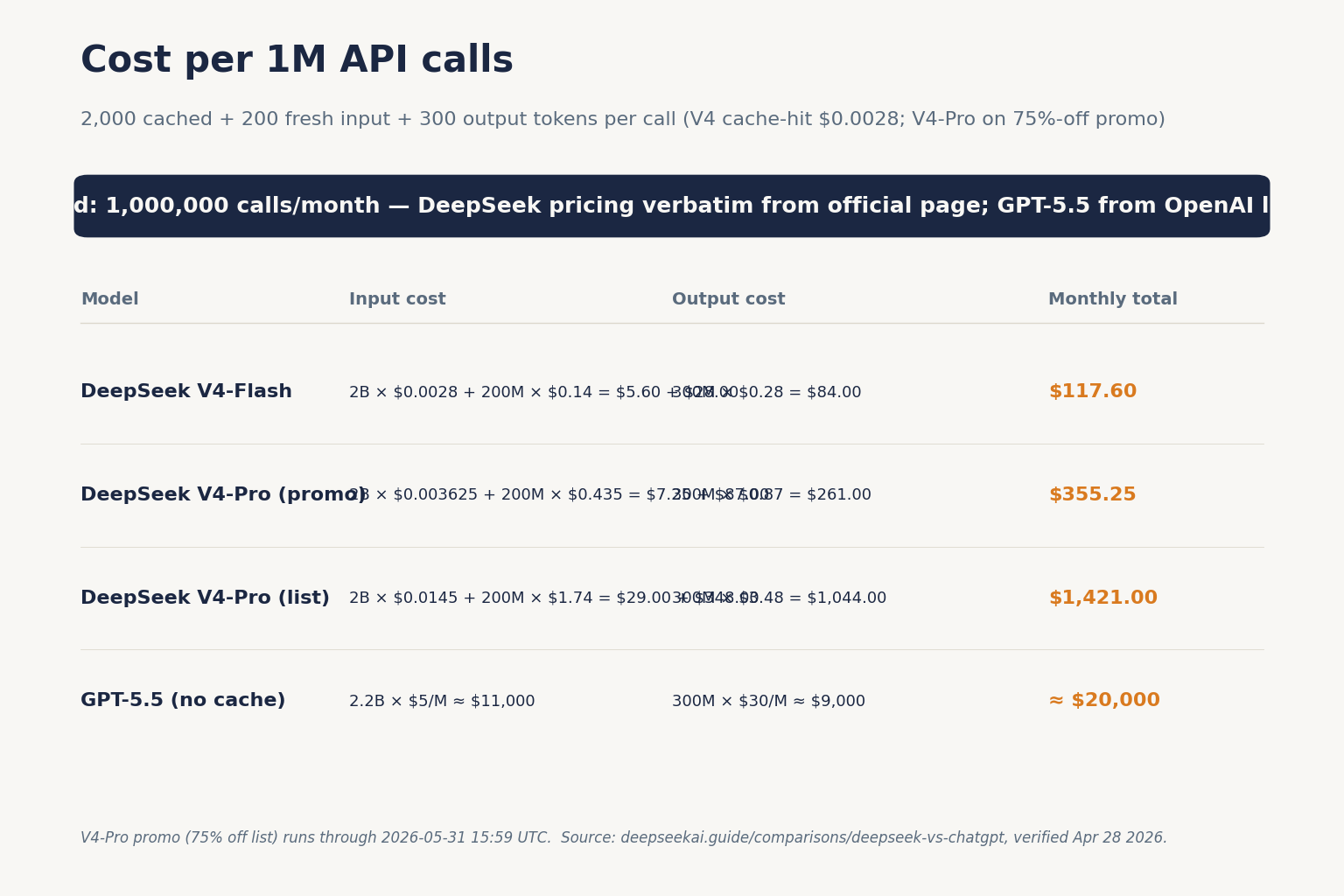

Worked example: cost per million API calls

Consider a production chat assistant that makes one million API calls with a 2,000-token system prompt (cached across calls), a 200-token user message, and a 300-token response. Here is the math on DeepSeek V4-Flash:

```
Cached input:   2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60
Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00
Output:         300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00
-------
Total: $117.60
```

Same workload on DeepSeek V4-Pro, at the frontier tier:

```
Cached input:   2,000,000,000 tokens × $0.003625/M (promo) = $7.25 (list $29.00)
Uncached input: 200,000,000 tokens × $0.435/M (promo) = $87.00 (list $348.00)
Output:         300,000,000 tokens × $0.87/M (promo) = $261.00 (list $1,044.00)
---------
Total: $355.25 (V4-Pro 75% promo through 2026-05-31; list $1,421.00)
```

The same one million calls on GPT-5.5 at $5.00 input / $30.00 output, ignoring OpenAI’s cache-hit multiplier (which reduces input cost), would be roughly $11,000 on input (2.2 billion tokens) and $9,000 on output, about $20,000 in total. Even with generous caching, you are looking at multiple thousands of dollars versus $117.60 on V4-Flash. For a detailed breakdown of the three token buckets and when each applies, see our DeepSeek API pricing reference and the DeepSeek pricing calculator.
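
To rerun this arithmetic with your own traffic shape, here is a small sketch of the three-bucket calculation. The Rates class and workload_cost function are our own illustrative names. The V4-Pro list cache-hit rate ($0.0145/M) is back-derived from the $29.00 list figure above, and the GPT-5.5 entry charges cached input at the full input rate, mirroring the simplification in the text rather than OpenAI’s actual cache pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens for the three billing buckets."""
    cached_input: float
    uncached_input: float
    output: float


# Rates from the worked example above (see the lead-in for the assumptions).
V4_FLASH = Rates(0.0028, 0.14, 0.28)
V4_PRO_PROMO = Rates(0.003625, 0.435, 0.87)
V4_PRO_LIST = Rates(0.0145, 1.74, 3.48)
GPT_5_5_NO_CACHE = Rates(5.00, 5.00, 30.00)


def workload_cost(
    rates: Rates,
    calls: int,
    cached_tokens: int,
    uncached_tokens: int,
    output_tokens: int,
) -> float:
    """Total USD cost for `calls` requests with the given per-call token counts."""
    per_call_scaled = (
        cached_tokens * rates.cached_input
        + uncached_tokens * rates.uncached_input
        + output_tokens * rates.output
    )
    # Rates are per 1M tokens, so divide the scaled total by 1,000,000.
    return round(calls * per_call_scaled / 1_000_000, 2)


if __name__ == "__main__":
    scenarios = {
        "V4-Flash": V4_FLASH,
        "V4-Pro (promo)": V4_PRO_PROMO,
        "V4-Pro (list)": V4_PRO_LIST,
        "GPT-5.5 (no cache discount)": GPT_5_5_NO_CACHE,
    }
    for name, rates in scenarios.items():
        cost = workload_cost(rates, 1_000_000, 2_000, 200, 300)
        print(f"{name}: ${cost:,.2f}")
    # V4-Flash: $117.60
    # V4-Pro (promo): $355.25
    # V4-Pro (list): $1,421.00
    # GPT-5.5 (no cache discount): $20,000.00
```

Changing the output token count moves the total fastest, because output is the most expensive bucket on every model in this comparison.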

API architecture — the part most comparisons skip

ChatGPT (the app) and DeepSeek (the app) look similar. Their APIs are also similar, but with real differences worth naming.

DeepSeek’s API is OpenAI-compatible. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface against the same base URL. That means you can swap either the OpenAI or Anthropic Python SDK onto DeepSeek with a single-line base_url change. A minimal Python snippet using the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise the design doc."}],
)
```

A few technical points every team should know before committing:

- The API is stateless — you must resend the conversation history with each request. The web/app maintains session history; the API does not.

- Current model IDs are deepseek-v4-pro and deepseek-v4-flash. Legacy deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash until they are retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; the base URL does not change.

- Core parameters to know: temperature (DeepSeek recommends 0.0 for code, 1.0 for data analysis, 1.3 for general chat, 1.5 for creative writing), top_p, max_tokens, reasoning_effort, plus streaming, tool calling, JSON mode, context caching, FIM completion (Beta, non-thinking mode only), and Chat Prefix Completion (Beta).

- JSON mode is designed to return valid JSON, but validity is not guaranteed. Include the word “json” in your prompt, a small example schema, and enough max_tokens to avoid truncation, and handle occasional empty outputs.
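
Two of the points above, statelessness and imperfect JSON mode, are easy to handle in client code. The sketch below is one way to do it under our own assumptions: Conversation and parse_json_reply are hypothetical helper names, and the assistant reply is a hard-coded stand-in so the example runs without an API key.

```python
import json
from typing import Optional


class Conversation:
    """Keeps the running message list that a stateless chat API needs."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: list[dict[str, str]] = [
            {"role": "system", "content": system_prompt}
        ]

    def add_user(self, text: str) -> list[dict[str, str]]:
        """Record a user turn and return a copy of the full history to send."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)

    def add_assistant(self, text: str) -> None:
        """Record the model's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": text})


def parse_json_reply(raw: Optional[str]) -> Optional[dict]:
    """Return the parsed JSON object, or None for empty or invalid output."""
    if not raw or not raw.strip():
        return None  # JSON mode occasionally returns nothing at all
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        return None  # truncated or malformed output
    return parsed if isinstance(parsed, dict) else None


if __name__ == "__main__":
    chat = Conversation("You are a helpful assistant.")
    first_request = chat.add_user("Name one open-weights model.")
    chat.add_assistant("DeepSeek V4-Flash.")  # stand-in for a real API reply
    second_request = chat.add_user("Who released it?")
    print(len(first_request), len(second_request))  # 2 4
    print(parse_json_reply('{"ok": true}'))  # {'ok': True}
    print(parse_json_reply(""))  # None
```

In production you would pass the list returned by add_user as the messages argument and decide whether a None from parse_json_reply should trigger a retry.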

For OpenAI’s side of the API story, OpenAI supports its Responses API, Chat Completions, Realtime, Batch and Assistants surfaces, all billed at the chosen model’s input and output rates — see OpenAI’s API pricing page for current rates. Our DeepSeek OpenAI SDK compatibility walk-through shows a real migration in under 10 minutes.

Privacy and data handling

ChatGPT processes data on OpenAI’s infrastructure. Paid plans (Business, Enterprise, and API) offer stronger data-handling assurances, including a commitment on Enterprise that customer data is not used to train OpenAI models.

DeepSeek processes data on servers subject to Chinese law. Conversations may be stored and are accessible to Chinese authorities under legal process. For personal or non-sensitive commercial use this is a tradeoff most users make consciously; for regulated industries (healthcare, legal, financial) the calculus is different. Italy’s Garante ordered blocking of the DeepSeek app in January 2025 over data-protection concerns. Several US states have restricted DeepSeek use on government devices; there is no federal US ban as of April 2026. Our DeepSeek privacy and DeepSeek US restrictions pages cover the specifics.

Ecosystem and product surface

ChatGPT is a broader product ecosystem spanning consumer, business and enterprise use, with apps, Codex, agent features and a large connector catalogue. Examples of what Business-tier users can enable include unlimited GPT-5.5 messages, generous access to GPT-5.5 Thinking, access to GPT-5.5 Pro, the flexibility to add credits as needed, and 60+ apps that bring your tools and data into ChatGPT (Slack, Google Drive, SharePoint, GitHub, Atlassian, and more). Availability varies by plan, region and workspace admin controls.

DeepSeek is more model-and-API-centric. The chat UI exists mainly to let people try the underlying models. There is no equivalent of Canvas, Projects, GPTs, or a plugin marketplace. What DeepSeek has instead is open weights on Hugging Face, strong third-party tool support (Claude Code, OpenClaw, OpenCode all route to V4 happily), and an active local-inference community. If you want to run a frontier model on your own GPUs, DeepSeek is the only option in this comparison.

Alternatives worth a look

This comparison is deliberately two-model. Real decisions usually involve a third or fourth contender. See our DeepSeek vs Claude and DeepSeek vs Gemini write-ups, the DeepSeek alternatives hub, or browse the DeepSeek comparisons category for every side-by-side we publish.

Final verdict

If you have $20 a month and want the most full-featured single AI assistant for everyday use, ChatGPT is the better buy. If you have an API key and a workload, DeepSeek V4-Flash is among the cheapest frontier-tier chat APIs as of April 2026 (verify against the official pricing pages before committing). If you are doing serious coding at volume, V4-Pro is a credible swap for Claude Opus or GPT-5.5 on the benchmarks that matter, at a fraction of the per-token cost. Use both. The smart money in 2026 is not picking a winner but routing each task to the cheapest model that passes your quality bar.
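
As a rough illustration of that routing idea, the sketch below picks the cheapest candidate whose score clears a threshold. It uses the Terminal Bench 2.0 scores and list output prices quoted in this article as a stand-in quality signal; in practice the score would come from your own evaluation set. The Candidate class, the cheapest_passing function and the model labels are our own illustrative names, not confirmed API model IDs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Candidate:
    model: str           # illustrative label, not a confirmed API model ID
    output_price: float  # USD per 1M output tokens (list price)
    score: float         # quality proxy; here, Terminal Bench 2.0 (%)


# Figures quoted earlier in this article.
TERMINAL_BENCH = [
    Candidate("deepseek-v4-pro", 3.48, 67.9),
    Candidate("gpt-5.4", 15.00, 75.1),
    Candidate("gpt-5.5", 30.00, 82.7),
]


def cheapest_passing(
    candidates: list[Candidate], quality_bar: float
) -> Optional[Candidate]:
    """Return the lowest-priced candidate scoring at or above the bar."""
    passing = [c for c in candidates if c.score >= quality_bar]
    return min(passing, key=lambda c: c.output_price, default=None)


if __name__ == "__main__":
    for bar in (60.0, 70.0, 80.0, 90.0):
        choice = cheapest_passing(TERMINAL_BENCH, bar)
        print(bar, choice.model if choice else "no model passes")
    # 60.0 deepseek-v4-pro
    # 70.0 gpt-5.4
    # 80.0 gpt-5.5
    # 90.0 no model passes
```

A single benchmark is a crude bar; the point is only that the routing decision reduces to a filter followed by a price comparison.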

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek better than ChatGPT?

It depends on the task. On coding benchmarks like LiveCodeBench and Codeforces, DeepSeek V4-Pro leads Claude Opus 4.6 and trades with GPT-5.4. On general knowledge, agentic tool use, multimodality and product polish, ChatGPT is still ahead, and GPT-5.5 retook the lead on Terminal Bench 2.0. For per-token cost, DeepSeek wins decisively. See our full DeepSeek vs competitors review.

How much cheaper is the DeepSeek API than OpenAI’s?

At list prices on April 24, 2026, DeepSeek V4-Flash is $0.14 input (cache miss) and $0.28 output per 1M tokens. GPT-5.5 is $5.00 / $30.00. That is roughly 36× cheaper on input and 107× cheaper on output at headline rates, before caching discounts on either side. Our DeepSeek API pricing page has worked examples.

Can I use DeepSeek with the OpenAI Python SDK?

Yes. DeepSeek’s API is OpenAI-compatible at the wire-format level. Set base_url="https://api.deepseek.com" and your DeepSeek api_key, then call client.chat.completions.create() exactly as you would against OpenAI. The POST /chat/completions endpoint mirrors OpenAI’s shape. See our DeepSeek OpenAI SDK compatibility guide for a worked migration.

Does DeepSeek have a thinking mode like ChatGPT?

Yes. On DeepSeek V4, thinking mode is a request parameter, not a separate model. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} on either deepseek-v4-pro or deepseek-v4-flash. The response returns reasoning_content alongside the final content. reasoning_effort="max" uses the highest effort and requires a 384K-token context. More in our DeepSeek V4 reference.

Is DeepSeek safe to use for sensitive business data?

DeepSeek processes conversations on servers in China and may be subject to law-enforcement access under Chinese law. For regulated industries (healthcare, legal, finance) that is usually a deal-breaker for cloud API use — self-hosting the MIT-licensed V4 weights is an option. For non-sensitive internal use the tradeoff is commonly accepted. Read is DeepSeek safe and DeepSeek privacy before deciding.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing page. GPT-5.4 / GPT-5.5 input and output rates. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.