DeepSeek V4 Preview: Pro and Flash Tiers, 1M Context, Tested

If you have been running DeepSeek V3.2 in production and keep seeing headlines about a new release, here is the short version: DeepSeek V4 landed as a Preview on April 24, 2026, and it is a two-tier family rather than a single model. The `deepseek-v4-pro` ID gives you frontier-scale reasoning and agentic coding; `deepseek-v4-flash` gives you most of that quality at roughly a twelfth of Pro's list price for input and output tokens (about a third while Pro's launch promo runs). Both share the same 1,000,000-token context window, both ship with MIT-licensed weights on Hugging Face, and both expose thinking mode as a request parameter rather than a separate endpoint. This article covers what each tier actually does, what it costs, where it beats (and loses to) Claude and GPT-5 competitors, and how to migrate from the legacy `deepseek-chat` and `deepseek-reasoner` IDs before they retire.

What is DeepSeek V4?

DeepSeek V4 is the fourth-generation model family from the Hangzhou-based lab DeepSeek, released as a Preview on April 24, 2026. The preview includes two strong Mixture-of-Experts (MoE) language models — DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated) — both supporting a context length of one million tokens. Unlike V3.x, which split thinking and non-thinking behaviour across separate model IDs, V4 treats reasoning effort as a request parameter, so either tier can run in non-thinking, thinking, or max-thinking mode.

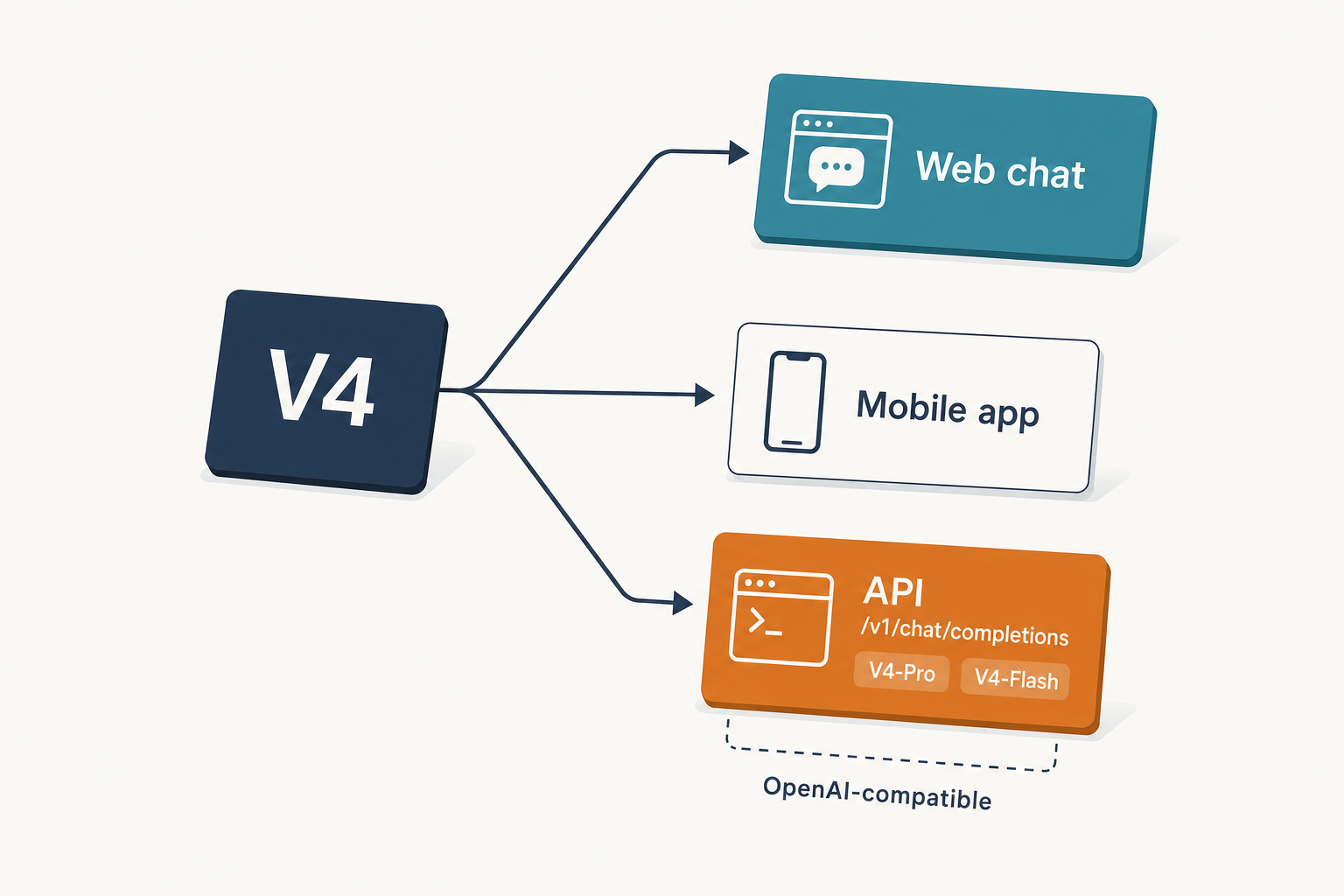

Both tiers are open-weight under the MIT license and available three ways: the DeepSeek API, the official web chat at chat.deepseek.com (V4-Pro as “Expert Mode”, V4-Flash as “Instant Mode”), and direct download from Hugging Face. For context on how this sits in the lineage, see DeepSeek V3.2 and the wider DeepSeek models hub.

Architecture and lineage

Tier specifications

| Spec | DeepSeek V4-Pro | DeepSeek V4-Flash |
|---|---|---|
| Total parameters | 1.6T | 284B |
| Active per token | 49B | 13B |
| Context window | 1,000,000 tokens | 1,000,000 tokens |
| Max output | 384,000 tokens | 384,000 tokens |
| License (weights) | MIT | MIT |
| Hugging Face footprint | ~865 GB | ~160 GB |
| Release date | 2026-04-24 | 2026-04-24 |

DeepSeek-V4-Pro is the new largest open weights model — larger than Kimi K2.6 (1.1T) and GLM-5.1 (754B) and more than twice the size of DeepSeek V3.2 (685B). Pro is 865 GB on Hugging Face, Flash is 160 GB. Practically, that means V4-Pro is a data-centre model; V4-Flash, lightly quantised, is the one hobbyists and smaller teams have a realistic chance of serving on their own hardware.
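To make the MoE sparsity concrete, here is a minimal sketch that derives the share of parameters active per token from the figures in the specification table. The `TIERS` dictionary and the `active_fraction` helper are illustrative names, not part of any DeepSeek tooling.

```python
# Parameter counts copied from the tier specification table above.
TIERS = {
    "deepseek-v4-pro": {"total_params": 1.6e12, "active_params": 49e9},
    "deepseek-v4-flash": {"total_params": 284e9, "active_params": 13e9},
}


def active_fraction(tier: str) -> float:
    """Share of the model's parameters that are activated for each token."""
    spec = TIERS[tier]
    return spec["active_params"] / spec["total_params"]


for name in TIERS:
    print(f"{name}: {active_fraction(name):.1%} of parameters active per token")
```

Both tiers activate only a few percent of their weights per token, which is why per-token compute is far lower than the total parameter count suggests, even though all the weights still have to be stored somewhere.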

The efficiency story

The headline claim in the technical report is not capability — it is cost per token at very long contexts. V4 uses a hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to dramatically improve long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2. V4-Flash pushes those numbers further, down to roughly 10% of FLOPs and 7% of the KV cache.

That matters because it is the reason 1M context is the default on both tiers rather than an expensive premium feature. The model was pre-trained on 33T tokens using FP4 + FP8 mixed precision — MoE experts at FP4, most other parameters at FP8.
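The percentages above are easier to reason about as reduction factors. The sketch below converts them and scales a baseline KV-cache size; the 100 GB baseline is a made-up number for illustration only, and `reduction_factor` and `kv_cache_needed` are hypothetical helper names.

```python
# Cost relative to DeepSeek-V3.2 at 1M-token context, as quoted above.
RELATIVE_TO_V32 = {
    "V4-Pro": {"flops": 0.27, "kv_cache": 0.10},
    "V4-Flash": {"flops": 0.10, "kv_cache": 0.07},
}


def reduction_factor(fraction: float) -> float:
    """Turn 'needs this fraction of the baseline' into 'baseline is N times larger'."""
    return 1.0 / fraction


def kv_cache_needed(baseline_gb: float, tier: str) -> float:
    """Scale a V3.2 KV-cache size (GB) by the tier's quoted fraction."""
    return baseline_gb * RELATIVE_TO_V32[tier]["kv_cache"]


for tier, rel in RELATIVE_TO_V32.items():
    print(
        f"{tier}: {reduction_factor(rel['flops']):.1f}x fewer FLOPs, "
        f"{reduction_factor(rel['kv_cache']):.1f}x smaller KV cache"
    )

# Hypothetical baseline: if V3.2 needed 100 GB of KV cache for some workload.
print(kv_cache_needed(100.0, "V4-Pro"), "GB on V4-Pro under the same assumption")
```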

Benchmarks

All figures below are DeepSeek-reported numbers from the V4 announcement and technical report. Cross-check against the V4-Pro model card on Hugging Face before making procurement decisions — frontier benchmark numbers move weekly.

| Benchmark | V4-Pro (reasoning_effort=max) | Claude Opus 4.6 | Notes |
|---|---|---|---|
| SWE-Bench Verified | 80.6% | 80.8% | Agentic coding |
| Terminal-Bench 2.0 | 67.9% | 65.4% | Terminal agent tasks |
| LiveCodeBench | 93.5% | 88.8% | Open-model high |
| Codeforces rating | 3,206 | not reported | ~23rd human rank |
| SimpleQA-Verified | 57.9% | not reported | Large open-model jump |
| HLE | 37.7% | 40.0% | Claude leads |

V4-Pro scores 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6 (80.8%). On the other coding benchmarks it leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and it posts a Codeforces rating of 3,206, for which no Claude score is reported. Claude Opus 4.6 holds a meaningful lead on HLE (40.0% vs 37.7%) and a narrower one on HMMT 2026 math (96.2% vs 95.2%).

On long-context retrieval — the benchmark that most justifies a 1M window — V4-Pro holds 94% MRCR retrieval accuracy up to 128K tokens and 82% at 512K tokens, still landing at 66% at 1M tokens. DeepSeek reports that V4-Pro outperforms Gemini-3.1-Pro on the MRCR task (83.5 vs 76.3) but remains behind Claude Opus 4.6 (92.9). On CorpusQA, a benchmark closer to real long-document use, V4-Pro reaches 62.0% at 1M tokens, beating Gemini 3.1 Pro at 53.8%.

Strengths

- Agentic coding. V4-Pro’s Terminal-Bench and LiveCodeBench numbers put it at or above closed-source frontier models on the workloads developer tools actually care about. For role-specific context, see DeepSeek for coding.

- Long-context economics. Needing only 27% of V3.2's single-token inference FLOPs at 1M tokens (a 73% reduction) makes full-repository analysis and long-running agent episodes financially realistic, not just technically possible.

- Open weights under MIT. Both tiers publish weights under MIT, which is broader than the split licensing applied to older models like V3 base, Coder-V2, and VL2.

- Tooling compatibility. The API supports both OpenAI ChatCompletions and Anthropic protocols against the same base URL, so existing Claude-SDK clients switch by changing `base_url` and `api_key`.

Weaknesses

- Preview, not final. DeepSeek’s own technical report notes further post-training refinements are expected. For production workloads, evaluate V4 on your specific tasks before switching from a stable model.

- Long-context retrieval still trails Claude. Opus 4.6 remains ahead on MRCR at 1M tokens; if needle-in-a-haystack retrieval across very long inputs is your core workload, Claude is still the safer call.

- Data residency. DeepSeek’s API infrastructure is China-based, which creates data sovereignty considerations for teams handling regulated or sensitive data. Self-hosting via open weights under the MIT License is the recommended path for teams with strict data residency requirements.

- World knowledge. V4 leads all current open models on world knowledge but trails Gemini-3.1-Pro, so if you are building a factual Q&A product without retrieval, Gemini may still edge it.

How to access DeepSeek V4

Web chat and app

The simplest path is chat.deepseek.com or the mobile app. V4-Pro appears as Expert Mode; V4-Flash is Instant Mode. The DeepThink toggle now switches the active tier between non-thinking and thinking behaviour. For onboarding help, see the DeepSeek beginners guide.

API quickstart

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. The API is stateless — clients must resend the full conversation history on every request, unlike the web chat, which maintains session history on DeepSeek’s side. A minimal Python example using the OpenAI SDK:

```python
from openai import OpenAI

# The OpenAI SDK works unchanged; only the base URL and key differ.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a concise code reviewer."},
        {"role": "user", "content": "Review this diff..."},
    ],
    reasoning_effort="high",
    # "thinking" is not a standard OpenAI parameter, so it goes in extra_body.
    extra_body={"thinking": {"type": "enabled"}},
    temperature=0.0,
    max_tokens=8192,
)

# In thinking mode the reasoning trace arrives separately from the answer.
print(resp.choices[0].message.reasoning_content)
print(resp.choices[0].message.content)
```

Thinking mode returns `reasoning_content` alongside the final `content`. Thinking is enabled by default in V4 (`reasoning_effort="high"`). To opt out for a faster, cheaper non-thinking call, pass `extra_body={"thinking": {"type": "disabled"}}`; non-thinking mode still supports tool calling and streaming. DeepSeek also exposes an Anthropic-compatible surface against the same base URL. For deeper coverage, see the DeepSeek API documentation and the DeepSeek API getting started tutorial.
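Because the API is stateless, multi-turn use means carrying the history yourself. The following is a minimal sketch of one way to do that while toggling thinking per request. The `Conversation` class and its method names are hypothetical, and two choices in it are assumptions rather than documented behaviour: it omits `reasoning_effort` when thinking is disabled, and it stores only the final `content` of each reply in the history.

```python
from typing import Any


class Conversation:
    """Keeps the full message history client-side, since the API is stateless."""

    def __init__(self, model: str, system_prompt: str) -> None:
        self.model = model
        self.messages: list[dict[str, str]] = [
            {"role": "system", "content": system_prompt}
        ]

    def request_kwargs(
        self, user_text: str, thinking: bool = True, effort: str = "high"
    ) -> dict[str, Any]:
        """Append the user turn and build kwargs for chat.completions.create."""
        self.messages.append({"role": "user", "content": user_text})
        mode = "enabled" if thinking else "disabled"
        kwargs: dict[str, Any] = {
            "model": self.model,
            "messages": list(self.messages),  # full history goes out every time
            "extra_body": {"thinking": {"type": mode}},
        }
        if thinking:
            # Assumption: reasoning_effort is only meaningful in thinking mode.
            kwargs["reasoning_effort"] = effort
        return kwargs

    def record_reply(self, content: str) -> None:
        """Store the assistant's final answer so the next request includes it."""
        self.messages.append({"role": "assistant", "content": content})


convo = Conversation("deepseek-v4-flash", "You are a concise code reviewer.")
kwargs = convo.request_kwargs("Review this diff...", thinking=False)
print(kwargs["extra_body"])
# With a configured client, the round trip would look like:
#   resp = client.chat.completions.create(**kwargs)
#   convo.record_reply(resp.choices[0].message.content)
```

Whether `reasoning_content` should be echoed back on later turns is not covered above, so check the official documentation before relying on this history format.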

Legacy IDs and the migration window

If you have existing integrations calling `deepseek-chat` or `deepseek-reasoner`, nothing breaks today. Both IDs currently route to `deepseek-v4-flash`, in non-thinking and thinking mode respectively, and both will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migration is a one-line `model=` swap: keep `base_url` and update `model` to `deepseek-v4-pro` or `deepseek-v4-flash`.
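One way to make the swap explicit in code is a small shim that rewrites legacy IDs to their current V4 equivalents. This is a sketch under the routing described above; `migrate_request`, `days_until_retirement`, and the two constants are hypothetical names, and the shim preserves today's behaviour by setting the thinking mode that each legacy ID currently implies.

```python
from datetime import datetime, timezone

# Retirement time quoted above: July 24, 2026, 15:59 UTC.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Current routing described above: both legacy IDs resolve to V4-Flash.
LEGACY_ROUTING = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of kwargs with any legacy model ID replaced explicitly."""
    migrated = dict(kwargs)
    model = migrated.get("model")
    if model not in LEGACY_ROUTING:
        return migrated
    new_model, thinking = LEGACY_ROUTING[model]
    migrated["model"] = new_model
    extra_body = dict(migrated.get("extra_body") or {})
    # Keep an explicit caller choice; otherwise mirror the legacy behaviour.
    extra_body.setdefault("thinking", {"type": thinking})
    migrated["extra_body"] = extra_body
    return migrated


def days_until_retirement(now: datetime) -> int:
    """Whole days left before the legacy IDs stop working (now must be tz-aware)."""
    return (LEGACY_RETIREMENT - now).days


old = {"model": "deepseek-reasoner", "messages": []}
print(migrate_request(old))
print(days_until_retirement(datetime.now(timezone.utc)), "days left")
```

Moving to `deepseek-v4-pro` instead is the same edit with a different target ID.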

Self-hosting

Weights are on Hugging Face. V4-Flash at ~160 GB is within reach of a well-specced workstation at 4-bit quantisation; V4-Pro at ~865 GB is a multi-GPU-server proposition. Our install DeepSeek locally tutorial walks through the common setups.
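For rough capacity planning, weight memory can be estimated as parameters times bits per parameter. The sketch below does that arithmetic and also back-computes the average bits per parameter implied by the published footprints. It covers weights only: KV cache, activations, and runtime overhead are deliberately ignored, and the helper names are hypothetical.

```python
def weight_memory_gb(total_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in decimal gigabytes."""
    return total_params * bits_per_param / 8 / 1e9


def implied_bits_per_param(footprint_gb: float, total_params: float) -> float:
    """Average bits per parameter implied by an on-disk footprint."""
    return footprint_gb * 1e9 * 8 / total_params


# Parameter counts and footprints as listed in the tier specification table.
print(f"V4-Flash at 4 bits: {weight_memory_gb(284e9, 4):.0f} GB")
print(f"V4-Pro at 4 bits:   {weight_memory_gb(1.6e12, 4):.0f} GB")
print(f"V4-Flash published: {implied_bits_per_param(160, 284e9):.2f} bits/param")
print(f"V4-Pro published:   {implied_bits_per_param(865, 1.6e12):.2f} bits/param")
```

On these numbers the published checkpoints already average between 4 and 5 bits per parameter, which fits the FP4 + FP8 mix mentioned earlier. If that reading is right, further 4-bit quantisation would shrink the footprint only modestly, so treat the workstation claim as something to verify on your own hardware.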

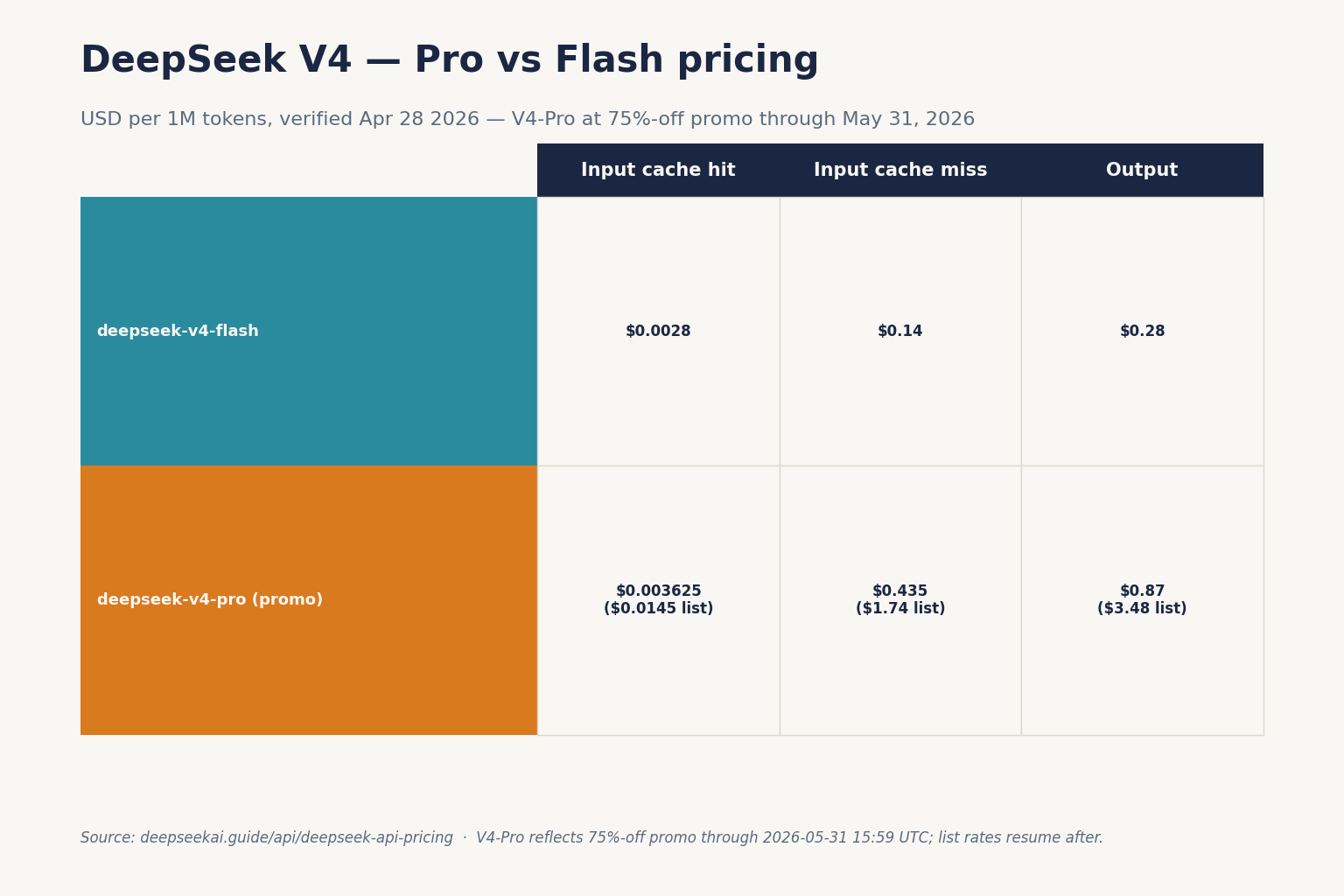

Pricing snapshot (as of April 2026)

Both tiers publish three token rates: cached input, uncached input, and output. Rates are in USD per 1 million tokens and apply equally in thinking and non-thinking modes — the reasoning mode only affects how many tokens you burn at that rate.

| Model | Cache hit (input) | Cache miss (input) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro (75% promo through 2026-05-31) | $0.003625 (list $0.0145) | $0.435 (list $1.74) | $0.87 (list $3.48) |

DeepSeek is charging $0.14/million tokens input and $0.28/million tokens output for Flash, and $0.435/million input and $0.87/million output for Pro during the 75% promo through 2026-05-31 (list $1.74 / $3.48). Off-peak discounts are not currently offered — DeepSeek ended them on 2025-09-05 and did not reintroduce them with V4. Verify numbers on the official pricing page before budgeting, and see the DeepSeek API pricing page for a live-tracked breakdown.

Worked example — V4-Flash at scale

1,000,000 API calls, 2,000-token cached system prompt, 200-token uncached user message, 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Worked example — V4-Pro at the same volume

- Cached input: 2,000,000,000 × $0.003625/M (promo) = $7.25

- Uncached input: 200,000,000 × $0.435/M (promo) = $87.00

- Output: 300,000,000 × $0.87/M (promo) = $261.00

- Total: $355.25 (V4-Pro 75% promo through 2026-05-31; list-price total is $1,421.00)

At list rates Pro costs roughly 12× what Flash does on the same workload ($1,421.00 vs $117.60), and about 3× during the 75% promo through 2026-05-31. That is the honest price of the benchmark lift. For most chat and standard-reasoning workloads, Flash is the correct default. Reserve Pro for agentic coding, complex tool-use, or the specific long-context tasks where its numbers clearly lead.
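The worked examples can be reproduced, and adapted to your own traffic shape, with a few lines of Python. This sketch uses `Decimal` to avoid floating-point drift on currency. The rates are copied from the pricing table, while `RATES` and `workload_cost` are illustrative names.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the pricing table above.
RATES = {
    "flash": {
        "cached": Decimal("0.0028"),
        "uncached": Decimal("0.14"),
        "output": Decimal("0.28"),
    },
    "pro_promo": {
        "cached": Decimal("0.003625"),
        "uncached": Decimal("0.435"),
        "output": Decimal("0.87"),
    },
    "pro_list": {
        "cached": Decimal("0.0145"),
        "uncached": Decimal("1.74"),
        "output": Decimal("3.48"),
    },
}


def workload_cost(
    rates: dict, calls: int, cached: int, uncached: int, output: int
) -> Decimal:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached * rates["cached"]
        + uncached * rates["uncached"]
        + output * rates["output"]
    )
    return per_call * calls / Decimal(1_000_000)


# Same shape as the worked examples: 1M calls, 2,000 cached / 200 uncached / 300 out.
totals = {
    name: workload_cost(rates, 1_000_000, 2_000, 200, 300)
    for name, rates in RATES.items()
}
for name, total in totals.items():
    print(f"{name}: ${total:.2f}")
print(f"Pro list vs Flash: {totals['pro_list'] / totals['flash']:.1f}x")
```

Output tokens dominate every total here, so trimming response length or disabling thinking where it is not needed will usually move the bill more than improving the cache-hit rate.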

Best use cases

- Agentic coding assistants. V4-Pro on Terminal-Bench 2.0 and LiveCodeBench is the strongest open-weight option today — see DeepSeek for developers.

- Long-document analysis. 1M context with CorpusQA numbers that beat Gemini 3.1 Pro makes V4-Pro a solid choice for research workflows.

- High-volume chat applications. V4-Flash at $0.14/$0.28 per million tokens makes customer-facing assistants economically viable at scale.

- Self-hosted enterprise deployments. MIT-licensed weights on both tiers remove the licensing ambiguity that blocked some teams on V3-era releases.

Comparable alternatives

The obvious frontier comparisons are GPT-5.x and Claude Opus 4.x on the closed side, and Kimi K2.6 and GLM-5.1 among open-weight peers. For structured head-to-heads, start with DeepSeek vs Claude and DeepSeek vs ChatGPT. If you are evaluating broader options, the DeepSeek alternatives hub tracks the current open-weight landscape.

Verdict

DeepSeek V4 is the first open-weight release where the two-tier product strategy — frontier Pro, economic Flash — is genuinely executed, not just announced. V4-Pro is the most capable open model you can run at 1M context today; V4-Flash is the one most teams should actually deploy. It is still a Preview, Claude still leads on long-context retrieval, and the infrastructure is China-based — so evaluate on your workload, budget for the uncached-input line, and check the official docs before committing.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is the difference between DeepSeek V4-Pro and V4-Flash?

V4-Pro is the frontier tier: 1.6T total parameters, 49B active per token, priced at $0.435 input / $0.87 output per million tokens during the 75% promo through 2026-05-31 (list rates: $1.74 input / $3.48 output). V4-Flash is the cost-efficient tier: 284B total, 13B active, priced at $0.14/$0.28. Both share a 1M context window and the same API surface. For a side-by-side, see our DeepSeek V4-Pro and DeepSeek V4-Flash pages.

How do I enable thinking mode on DeepSeek V4?

Thinking mode is a request parameter on either V4 tier, not a separate model. Set reasoning_effort="high" and pass extra_body={"thinking": {"type": "enabled"}}; use "max" for maximum reasoning effort. The response returns reasoning_content alongside the final content. More examples live in the DeepSeek API documentation.

Is DeepSeek V4 free to use?

The web chat at chat.deepseek.com is free to try, and the weights are MIT-licensed and downloadable from Hugging Face for self-hosting. The hosted API is paid per token at the rates listed on the official pricing page. There is no general “free tier” of the API beyond any promotional granted balance; see is DeepSeek free for specifics.

Do legacy DeepSeek model IDs still work?

Yes, for now. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking modes respectively. They will be fully retired on 2026-07-24 at 15:59 UTC. Migration requires only a model-ID swap; the base URL does not change. Our DeepSeek API getting started guide covers the switch.

Can DeepSeek V4 really handle 1 million tokens of context?

Yes, 1M input context is the default on both tiers, with up to 384,000 tokens of output. Retrieval accuracy at the full 1M window is around 66% on MRCR — usable but not perfect. For workloads that need reliable retrieval across very long inputs, Claude Opus 4.6 still leads. For everything else, V4-Pro’s CorpusQA results are competitive; see the DeepSeek context length checker to plan your prompts.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Model card: DeepSeek V4-Pro model card on Hugging Face. Cross-checking V4-Pro benchmarks before procurement. Last checked: April 30, 2026.

- Model card: DeepSeek V4-Flash model card on Hugging Face. V4-Flash 284B/13B specs and ~160 GB on-disk size. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

- Benchmark: Humanity's Last Exam. Hard knowledge-recall benchmark. Last checked: April 30, 2026.

- Technical report: DeepSeek V4 technical report. CSA/HCA hybrid attention, MRCR/CorpusQA numbers, training tokens. Last checked: April 30, 2026.

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.