DeepSeek V4-Pro review: the 1.6T open-weight frontier model

If you are choosing a frontier-tier coding model in April 2026 and want to know whether DeepSeek V4-Pro actually competes with Claude Opus 4.6 and GPT-5.4 — or just undercuts them on price — this is the review to read. DeepSeek V4-Pro shipped on April 24, 2026 as the larger half of a two-model V4 Preview family, published under MIT with a 1 million token default context. I have been running it against V3.2 and V4-Flash in production since launch, and the short version is that it earns the frontier label on coding benchmarks while trailing the closed models on general-knowledge recall. This article breaks down the architecture, benchmarks, pricing math, access routes, and the workloads where Pro beats Flash.

What DeepSeek V4-Pro is

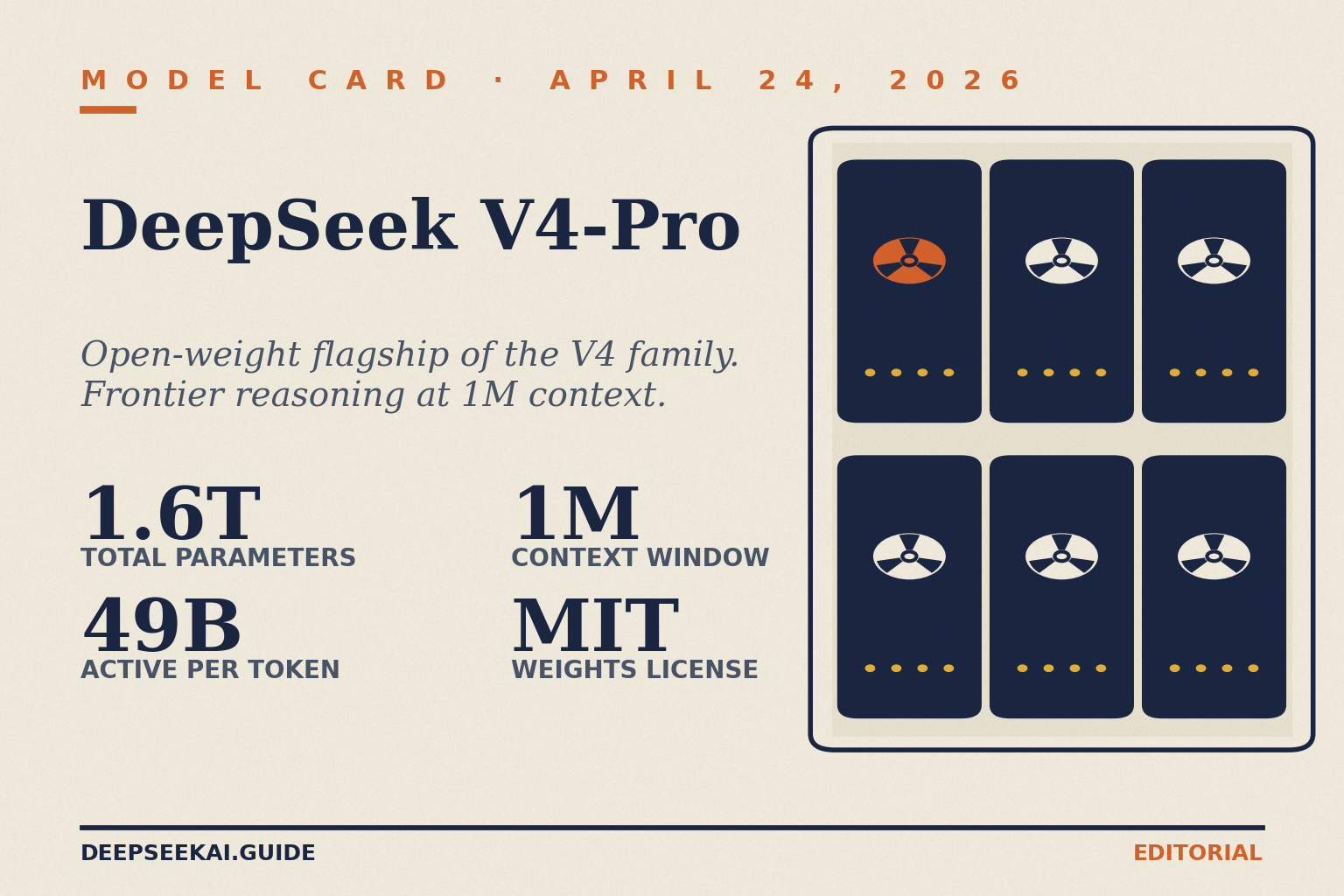

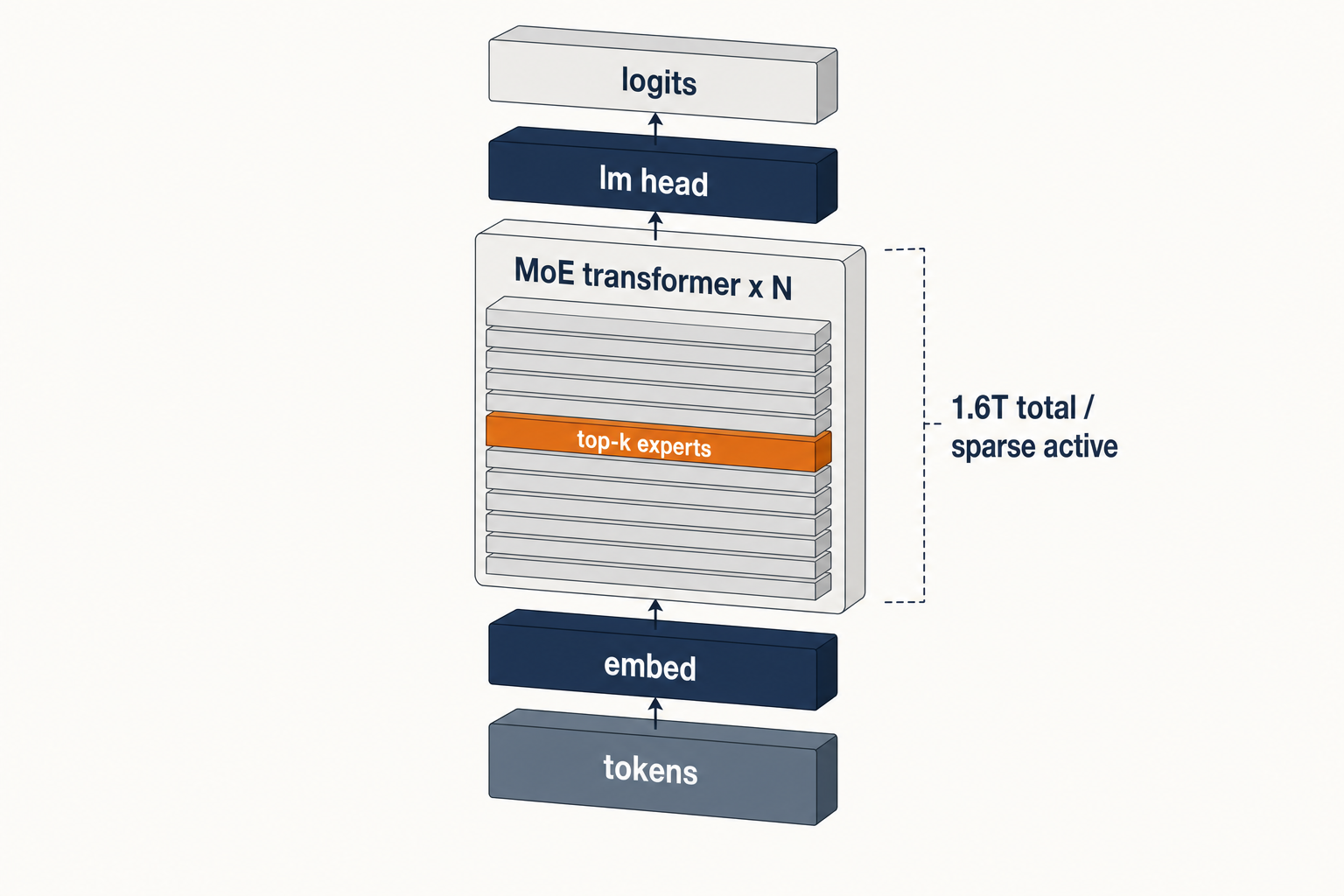

DeepSeek V4-Pro is the flagship tier of the V4 Preview series, a two-model family in which both models use a Mixture-of-Experts (MoE) architecture and were released simultaneously under the MIT License on April 24, 2026. Pro has 1.6 trillion total parameters, 49 billion active per token, and was pre-trained on 33 trillion tokens. It is the larger sibling of DeepSeek V4-Flash, and together they replace the V3.x generation that preceded them. You can browse the shared feature set across both tiers on the DeepSeek V4 overview page.

Unlike the V3.x API, where a chat model and a reasoner were separate IDs, V4 collapses that into a single model ID with a reasoning-effort parameter. DeepSeek-V4-Pro and DeepSeek-V4-Flash both support three reasoning effort modes: thinking with reasoning_effort="high" (the default), thinking-max with reasoning_effort="max", and non-thinking via extra_body={"thinking": {"type": "disabled"}}.
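The three modes can be captured in a small helper that returns the extra keyword arguments for a chat-completions call. This is a minimal sketch: the function name `reasoning_kwargs` and the mode labels are hypothetical, and only the parameter values are taken from the description above.

```python
from typing import Any


def reasoning_kwargs(mode: str) -> dict[str, Any]:
    """Return request kwargs for one of the three V4 reasoning modes.

    The mode labels are names chosen for this sketch; the parameter
    values are the ones quoted in the article.
    """
    if mode == "thinking":  # the default mode
        return {"reasoning_effort": "high"}
    if mode == "thinking-max":
        return {"reasoning_effort": "max"}
    if mode == "non-thinking":
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    raise ValueError(f"unknown mode: {mode!r}")


if __name__ == "__main__":
    for label in ("thinking", "thinking-max", "non-thinking"):
        print(label, reasoning_kwargs(label))
```

With the OpenAI SDK, the result would be splatted into the call, for example `client.chat.completions.create(model="deepseek-v4-pro", messages=msgs, **reasoning_kwargs("thinking-max"))`.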

Architecture and lineage

V4-Pro is the largest open-weight model DeepSeek has shipped and, on current evidence, the largest open-weight model from any lab. It is larger than Kimi K2.6 (1.1T) and GLM-5.1 (754B) and more than twice the size of DeepSeek V3.2 (685B). On Hugging Face, Pro is 865 GB and Flash is 160 GB.

The headline change in V4 is not raw parameter count but long-context efficiency. The DeepSeek-V4 series incorporates a hybrid attention mechanism that combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to improve long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache of DeepSeek-V3.2. Manifold-Constrained Hyper-Connections (mHC) strengthen conventional residual connections, improving the stability of signal propagation across layers while preserving model expressivity.

Specs at a glance

| Spec | DeepSeek V4-Pro |
|---|---|
| Release date | 2026-04-24 (Preview) |
| Total parameters | 1.6T (MoE) |
| Active parameters / token | 49B |
| Pre-training tokens | 33T |
| Context window | 1,000,000 tokens |
| Max output | 384,000 tokens |
| License (code and weights) | MIT |
| Hugging Face size on disk | 865 GB |
| Precision | FP4 (MoE experts) + FP8 (other params) |
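As a rough sanity check on the precision row, the on-disk size and parameter count imply an average storage cost per parameter. The sketch below assumes that 865 GB means decimal gigabytes and that the checkpoint is almost entirely weights; both are assumptions, not figures from the model card.

```python
TOTAL_PARAMS = 1.6e12  # 1.6T total parameters, from the table
DISK_BYTES = 865e9     # 865 GB, assumed to be decimal gigabytes

# Average bits stored per parameter if the checkpoint were weights only.
bits_per_param = DISK_BYTES * 8 / TOTAL_PARAMS

if __name__ == "__main__":
    print(f"average bits per parameter: {bits_per_param:.1f}")
```

The result lands a little above 4 bits per parameter, which is consistent with most of the parameters sitting in FP4 expert weights and a smaller share in FP8.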

The predecessors that shaped V4-Pro are worth a quick note for context: DeepSeek V3.2 introduced the sparse-attention work that CSA and HCA build on, and DeepSeek R1 established the thinking-mode pattern that V4 now exposes as a parameter rather than a separate model. For the full timeline, see the DeepSeek models hub.

Benchmarks

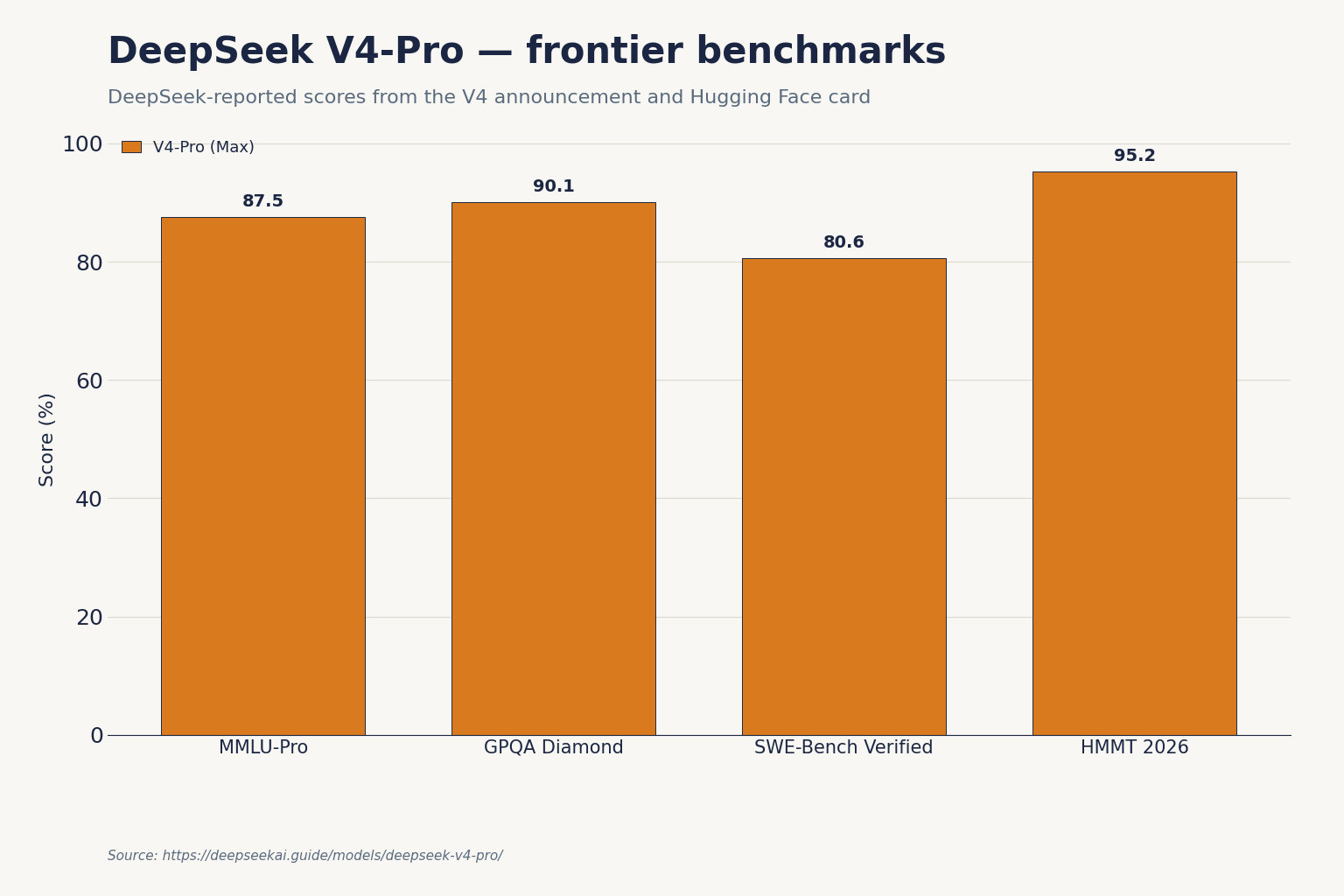

These numbers come from DeepSeek’s published V4 announcement and Hugging Face model card. They are DeepSeek-reported evaluations under the company’s own configurations; independent replication will take days to weeks, as with every major release.

| Benchmark | V4-Pro (reasoning_effort=max) | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |

|---|---|---|---|---|

| SWE-Bench Verified | 80.6 | 80.8 | n/r | 80.6 |

| Terminal-Bench 2.0 | 67.9 | 65.4 | — | — |

| LiveCodeBench | 93.5 | 88.8 | — | — |

| Codeforces rating | 3206 | n/r | 3168 (xHigh) | — |

| HMMT 2026 | 95.2 | 96.2 | 97.7 | — |

| IMOAnswerBench | 89.8 | 75.3 | 91.4 | 81.0 |

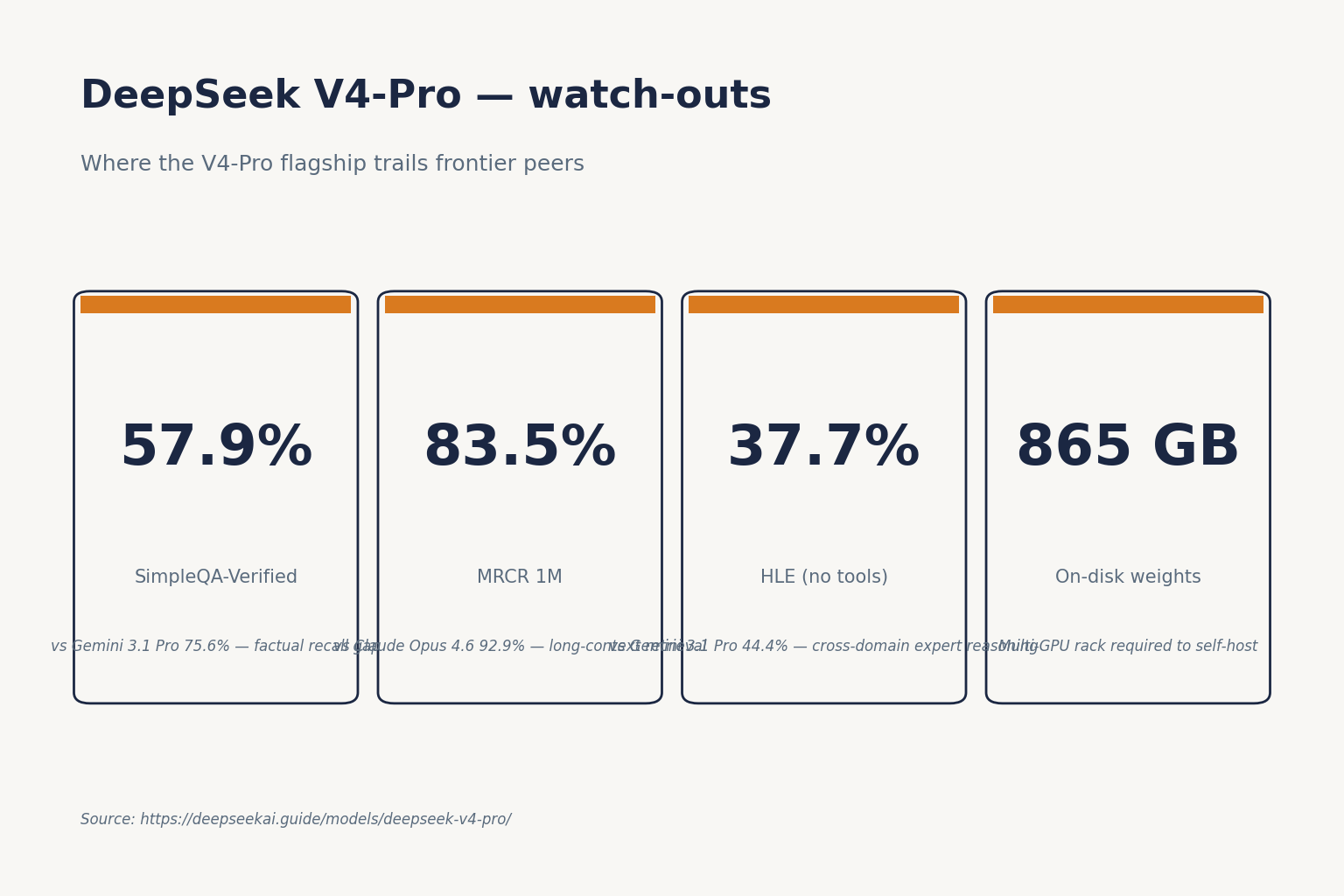

| HLE (no tools) | 37.7 | 40.0 | 39.8 | 44.4 |

| SimpleQA-Verified | 57.9 | — | — | 75.6 |

| MMLU-Pro | 87.5 | — | — | — |

| GPQA Diamond | 90.1 | — | — | — |

| MRCR 1M (long context) | 83.5 | 92.9 | — | — |

Reading the table: on coding benchmarks, DeepSeek V4-Pro leads Claude Opus 4.6 on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and posts a Codeforces rating of 3206 where Claude has no reported score. That Codeforces figure is the line that matters: it edges out GPT-5.4 (xHigh) at 3168. Claude Opus 4.6 holds a marginal lead on SWE-Bench Verified (80.8% vs 80.6%) and larger leads on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%). On MRCR 1M, V4-Pro scores 83.5 against Opus 4.6's 92.9, so Opus is still the long-context leader. DeepSeek also reports GSM8K at 92.6 and SWE-Bench Pro at 55.4, which are not shown in the table. You can cross-check individual leaderboard entries at the official SWE-bench leaderboards and against the full DeepSeek V4-Pro model card on Hugging Face.

Strengths

- Agentic coding. V4-Pro at 67.9% on Terminal-Bench 2.0 beats Claude Opus 4.6 (65.4%) by 2.5 points — this benchmark involves real autonomous terminal execution with a 3-hour timeout. That gap matters for agentic workflows more than a single-turn coding benchmark would.

- Competitive programming. The Codeforces rating of 3206 puts V4-Pro ahead of GPT-5.4 in that specific style of reasoning.

- Formal and olympiad math. V4-Pro beats Claude and Gemini on IMOAnswerBench (89.8 vs 75.3 and 81.0) and lands close to GPT-5.4 on HMMT 2026 (95.2 vs 97.7).

- Long-context economics. The 10% KV cache figure vs V3.2 is the reason 1M context ships as default, not as a premium add-on.

- Licensing clarity. Both code and weights are MIT, which simplifies commercial use compared with the separate DeepSeek Model License that covered some older releases (V3 base, Coder-V2, VL2).

Weaknesses

- World knowledge. SimpleQA-Verified at 57.9% versus Gemini’s 75.6% reveals a meaningful factual knowledge retrieval gap. If your use case requires accurate real-world knowledge recall — not just code generation — Gemini holds a clear edge.

- Long-context retrieval quality. V4-Pro ships the infrastructure to process 1M tokens cheaply, but Opus 4.6 still extracts more accurately from very long haystacks on MRCR.

- HLE. Gemini 3.1 Pro pulls ahead on cross-domain expert reasoning (44.4 vs 37.7 without tools).

- Tooling caveat. This release does not include a Jinja-format chat template. DeepSeek provides Python encoding scripts in the model repository for prompt construction. Plan for this in your integration.

- Self-hosting hardware. At 865 GB on disk, V4-Pro realistically needs a multi-GPU rack; most developers will reach for V4-Flash for local work.

How to access V4-Pro

Web and app

On chat.deepseek.com and the mobile app, V4-Pro is exposed as Expert Mode; V4-Flash appears as Instant Mode. The DeepThink toggle switches between non-thinking and thinking modes on whichever tier you have selected. See the DeepSeek chat walkthrough if you are new to the interface.

API

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface against the same base URL, so the Anthropic SDK works by swapping base_url and api_key. The API is stateless — your client must resend the full conversation history on every request. The web and app, by contrast, keep session state for you.
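Because the API is stateless, the client owns the conversation history. The sketch below shows one way to keep it: a small `Conversation` class (a hypothetical name) that appends each turn and passes the full message list to a `send` callable. In real use `send` would wrap `client.chat.completions.create` and return the reply text; here it is left abstract so the bookkeeping can be checked without network access.

```python
from typing import Callable

Message = dict[str, str]


class Conversation:
    """Client-side history for a stateless chat API (illustrative sketch)."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: list[Message] = [
            {"role": "system", "content": system_prompt}
        ]

    def ask(self, user_text: str, send: Callable[[list[Message]], str]) -> str:
        # Record the new user turn, then resend the whole history.
        self.messages.append({"role": "user", "content": user_text})
        reply = send(list(self.messages))
        # Store the assistant reply so the next request includes it.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


if __name__ == "__main__":
    def echo(messages: list[Message]) -> str:
        # Stand-in for a real API call: reports how much history it saw.
        return f"saw {len(messages)} messages"

    chat = Conversation("You are a senior Go engineer.")
    print(chat.ask("First question", echo))
    print(chat.ask("Follow-up question", echo))
```

The second call sends four messages (system, user, assistant, user), which is the full-history behaviour the API requires.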

A minimal Python example using the OpenAI SDK, calling V4-Pro in thinking mode:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_DEEPSEEK_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a senior Go engineer."},
        {"role": "user", "content": "Port this Rust service to Go, preserving concurrency semantics."},
    ],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
    max_tokens=8000,
)

print(resp.choices[0].message.content)
```

When thinking is enabled, the response returns reasoning_content alongside the final content. Set reasoning_effort="max" for the maximum thinking budget, and pair it with a context window of at least 384K tokens to avoid truncation.
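To separate the reasoning trace from the final answer, a helper can read `reasoning_content` defensively, since the field is only present when thinking is enabled. This is a sketch: `split_reply` is a hypothetical name, and the demo uses a stand-in object rather than a live response.

```python
from types import SimpleNamespace
from typing import Any, Optional


def split_reply(message: Any) -> tuple[Optional[str], str]:
    """Return (reasoning, answer) from a chat-completion message object.

    `reasoning` is None when the message carries no reasoning_content,
    for example in non-thinking mode.
    """
    reasoning = getattr(message, "reasoning_content", None)
    return reasoning, message.content


if __name__ == "__main__":
    # Stand-in for resp.choices[0].message from the example above.
    fake = SimpleNamespace(
        content="func main() { ... }",
        reasoning_content="Map each Rust task to a goroutine.",
    )
    reasoning, answer = split_reply(fake)
    print("reasoning:", reasoning)
    print("answer:", answer)
```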

Legacy IDs still work during the migration window. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking mode respectively, and will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. If you are on one of those, swap the model= field to deepseek-v4-pro or deepseek-v4-flash before that deadline; the base_url does not change. For a longer walkthrough, see our DeepSeek API getting started tutorial, and for parameter reference (temperature, top_p, max_tokens, reasoning_effort, JSON mode, tool calling, streaming, context caching) the DeepSeek API documentation.
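The migration can be made mechanical with a small shim that rewrites request kwargs before they reach the SDK. The sketch below is illustrative: `migrate_request` and `legacy_ids_retired` are hypothetical names, and the mapping encodes only the routing and retirement time stated above.

```python
from datetime import datetime, timezone

# Retirement time for the legacy IDs, as stated in the article.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (V4 model ID, thinking type it currently routes to).
LEGACY_TO_V4 = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of the request kwargs with legacy model IDs replaced."""
    out = dict(kwargs)
    target = LEGACY_TO_V4.get(out.get("model"))
    if target is not None:
        model, thinking = target
        out["model"] = model
        extra = dict(out.get("extra_body") or {})
        # Preserve an explicit thinking setting if the caller already set one.
        extra.setdefault("thinking", {"type": thinking})
        out["extra_body"] = extra
    return out


def legacy_ids_retired(now: datetime) -> bool:
    """True once the legacy IDs are past their retirement time."""
    return now > RETIREMENT


if __name__ == "__main__":
    print(migrate_request({"model": "deepseek-reasoner", "max_tokens": 8000}))
    print(legacy_ids_retired(datetime.now(timezone.utc)))
```

Mapping to deepseek-v4-flash preserves current behaviour; moving a workload to deepseek-v4-pro is a separate decision with different pricing.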

Open weights

Both V4 models are published on Hugging Face under MIT. At 865 GB you will not run V4-Pro on a single workstation — most teams wanting local inference will reach for the DeepSeek hardware calculator to size V4-Flash instead.

Pricing snapshot (as of April 2026)

| Metric | V4-Pro ($/1M tokens) | V4-Flash ($/1M tokens) |
|---|---|---|
| Input, cache hit | $0.003625 promo (list $0.0145) | $0.0028 |
| Input, cache miss | $0.435 promo (list $1.74) | $0.14 |
| Output | $0.87 promo (list $3.48) | $0.28 |

DeepSeek charges $0.14 per million input tokens and $0.28 per million output tokens for Flash. Pro list rates are $1.74 per million cache-miss input tokens and $3.48 per million output tokens, with cache-hit input at $0.0145. A 75% promotional discount runs through 2026-05-31 15:59 UTC: during the promo, V4-Pro bills at $0.435 per million cache-miss input and $0.87 per million output (cache-hit input at $0.003625). After the promo ends, list rates resume. The off-peak night discount that existed in the V3 era is not active: DeepSeek discontinued it on 2025-09-05 and has not reintroduced it for V4. Pricing during a Preview window can change; confirm on the DeepSeek API pricing page before committing.

Worked example: 1 million V4-Pro calls

Scenario: 1,000,000 API calls, each with a 2,000-token system prompt (cached across calls), a 200-token user message (uncached miss against the prefix), and a 300-token response. Pricing tier: deepseek-v4-pro.

```
Cached input  : 2,000,000,000 tokens × $0.003625/M = $7.25   (promo; list $29.00)
Uncached input:   200,000,000 tokens × $0.435/M    = $87.00  (promo; list $348.00)
Output        :   300,000,000 tokens × $0.87/M     = $261.00 (promo; list $1,044.00)
                                                     ---------
Total                                                $355.25 (promo through 2026-05-31; list $1,421.00)
```

The same workload on V4-Flash works out to $117.60 (after the 2026-04-26 cache-hit drop), roughly one-third of the V4-Pro cost under the current promo, or about one-twelfth at list price. That is the central pricing trade-off: at list rates V4-Pro costs about 12× V4-Flash on both cache-miss input and output, and the 75% promo through 2026-05-31 narrows that to about 3×. Either way, the premium should be earned by a benchmark lift that justifies it. For most chat, summarisation, and straightforward code-completion workloads, Flash is the rational default. For autonomous coding agents running long Terminal-Bench-style loops, Pro starts to pay back. Model the exact numbers with the DeepSeek pricing calculator.
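The arithmetic in this worked example is easy to reproduce and adapt to other traffic shapes. The sketch below hard-codes the rates from the pricing table in this section; the `Rates` class and `workload_cost` function are hypothetical names, and the rates should be re-checked against the live pricing page before relying on the output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """Prices in USD per 1M tokens, copied from the article's pricing table."""
    cache_hit: float
    cache_miss: float
    output: float


PRO_PROMO = Rates(cache_hit=0.003625, cache_miss=0.435, output=0.87)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)


def workload_cost(rates: Rates, calls: int, cached: int,
                  uncached: int, output: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached * rates.cache_hit
        + uncached * rates.cache_miss
        + output * rates.output
    ) / 1_000_000
    return calls * per_call


# The scenario from the worked example: 1M calls, 2,000 cached prompt
# tokens, 200 uncached input tokens, and 300 output tokens per call.
SCENARIO = dict(calls=1_000_000, cached=2_000, uncached=200, output=300)

if __name__ == "__main__":
    for name, rates in [("V4-Pro promo", PRO_PROMO),
                        ("V4-Pro list", PRO_LIST),
                        ("V4-Flash", FLASH)]:
        print(f"{name}: ${workload_cost(rates, **SCENARIO):,.2f}")
```

Changing the entries in `SCENARIO` shows how quickly the Pro premium grows as the output share of the workload rises.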

Competitive context matters here. OpenAI's GPT-5.4 costs $2.50 per 1M input tokens and $15.00 per 1M output tokens, while Claude Opus 4.6 costs $5 per 1M input tokens and $25 per 1M output tokens. DeepSeek, at least on benchmarks, delivers similar performance at a substantial discount: at list rates V4-Pro is about 30% cheaper than GPT-5.4 on input and 77% cheaper on output, and about 65% and 86% cheaper than Claude Opus 4.6. During the V4-Pro 75% promo through 2026-05-31, the reductions widen to roughly 83-97%. V4-Pro is among the cheapest frontier-tier coding APIs as of April 2026, but any specific "cheapest" claim depends on which provider you are comparing against, so check each vendor's live pricing page.
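The size of the discount depends on which rate is compared and on the input/output mix of the workload. As a rough sketch, the per-rate reductions can be computed directly from the prices quoted in this section; the `reduction` helper is a hypothetical name and the cache-miss input rate is used for V4-Pro.

```python
def reduction(ours: float, theirs: float) -> float:
    """Fractional price reduction of `ours` relative to `theirs`."""
    return 1 - ours / theirs


# (input, output) prices in USD per 1M tokens, as quoted in the article.
V4_PRO = {"list": (1.74, 3.48), "promo": (0.435, 0.87)}
RIVALS = {"GPT-5.4": (2.50, 15.00), "Claude Opus 4.6": (5.00, 25.00)}

if __name__ == "__main__":
    for tier, (pro_in, pro_out) in V4_PRO.items():
        for rival, (r_in, r_out) in RIVALS.items():
            print(
                f"{tier:5} vs {rival}: "
                f"input {reduction(pro_in, r_in):.0%} cheaper, "
                f"output {reduction(pro_out, r_out):.0%} cheaper"
            )
```

Output-heavy workloads see the larger reductions, because the output price gap is wider than the input gap against both rivals.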

Best use cases

- Autonomous coding agents. Terminal-Bench 2.0 and SWE-Bench Verified scores make this the standout application. Pair with DeepSeek for coding patterns.

- Repo-scale code review and migration. The 1M-token window lets Pro ingest mid-sized monorepos in one pass — see DeepSeek for developers.

- Research and formal math. The IMOAnswerBench and HMMT 2026 results slot V4-Pro into workflows described in DeepSeek for research and DeepSeek for math.

- High-volume data-analysis pipelines where Pro’s reasoning lift is worth the premium on a subset of requests — see DeepSeek for data analysis.

Alternatives worth comparing

If you are evaluating V4-Pro against closed frontier models, the head-to-heads to read are DeepSeek vs Claude and DeepSeek vs ChatGPT. If you want a shortlist of open-weight options at similar scale, open-source AI like DeepSeek rounds up Kimi K2.6, GLM 5.1 and others.

Verdict

V4-Pro is a credible frontier-tier coding and agent model at roughly one-seventh the output cost of Claude Opus 4.6 at list rates ($3.48 vs $25 per 1M tokens). It trails the closed models on world knowledge and HLE, and Opus still leads on long-context retrieval quality. For teams building coding copilots, autonomous agents, or competitive-programming tools on an open-weight MIT license, Pro earns a place in the stack; for general chat, V4-Flash remains the rational default.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How big is DeepSeek V4-Pro and how does it compare to V4-Flash?

V4-Pro has 1.6 trillion total parameters with 49 billion active per token; V4-Flash has 284 billion total with 13 billion active. Both share a 1M-token context window, MIT-licensed weights, and the same three reasoning-effort modes. On SWE-Bench Verified, Flash scores 79.0 vs Pro’s 80.6 — close on general code, with wider gaps on Terminal-Bench and SimpleQA. See the side-by-side DeepSeek V4-Flash page.

Is DeepSeek V4-Pro open source?

Yes. Both code and weights for V4-Pro and V4-Flash ship under the MIT license, published on Hugging Face. That is different from older releases where weights carried a separate DeepSeek Model License. The practical implication: commercial and redistributive use is straightforward. The background on DeepSeek’s licensing history is covered in is DeepSeek open source.

What is the DeepSeek V4-Pro API price?

List rates as of April 2026: V4-Pro is $0.0145 per 1M cached-input tokens, $1.74 per 1M uncached-input tokens, and $3.48 per 1M output tokens. A 75% promotional discount runs through 2026-05-31 15:59 UTC, bringing those down to $0.003625 / $0.435 / $0.87 respectively. On uncached input and output, that is roughly 12× the V4-Flash rates at list and about 3× during the promo. The off-peak discount that used to apply in the V3 era is not active. Confirm current rates on the DeepSeek API pricing page.

How do I enable thinking mode on DeepSeek V4-Pro?

Thinking mode is a request parameter, not a separate model. Send reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for the maximum budget. The response returns reasoning_content alongside the final content. A worked snippet lives in our DeepSeek API code examples.

Can I still use the deepseek-chat and deepseek-reasoner model IDs?

Yes, but only until 2026-07-24 at 15:59 UTC. Until then, both legacy IDs route to V4-Flash (non-thinking and thinking respectively). After the retirement date, requests using those IDs will fail, so migrate your model= field to deepseek-v4-pro or deepseek-v4-flash before the deadline. No base_url change is required. More at DeepSeek OpenAI SDK compatibility.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek V4-Pro model card on Hugging Face. V4-Pro architecture, 865 GB on-disk size, FP4/FP8 precision. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

- Benchmark: Humanity's Last Exam. Hard knowledge-recall benchmark. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. Codeforces 3206, IMOAnswerBench 89.8, MRCR 1M numbers. Last checked: April 30, 2026

- Benchmark: Official SWE-bench leaderboards. Cross-checking SWE-Bench Verified entries for V4-Pro vs Claude Opus 4.6. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.