DeepSeek Chat: The 2026 Guide to the Web App, Mobile and API

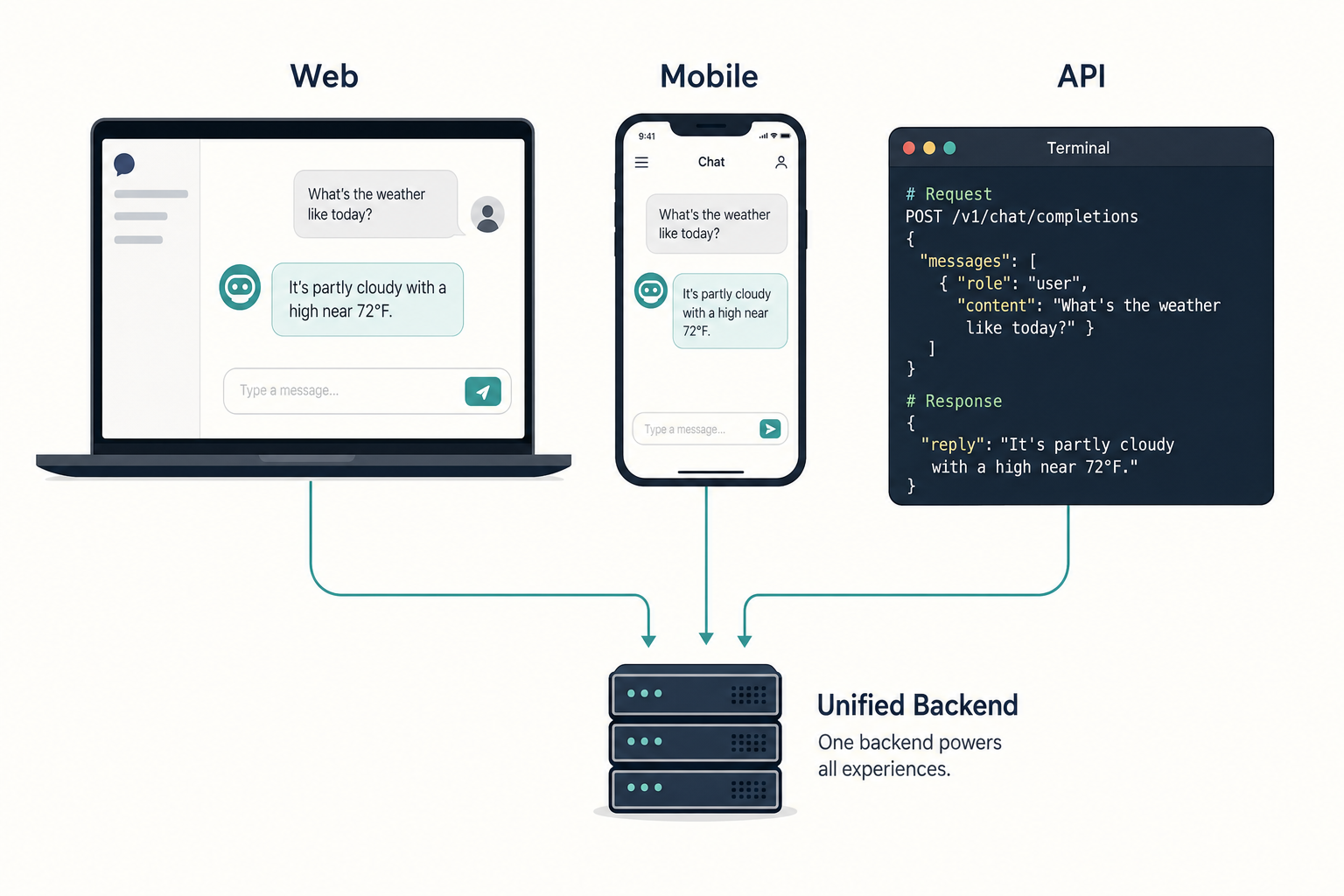

If you have searched for “deepseek chat”, you are probably trying to answer one concrete question: where do I actually type a prompt, and is it free? The short answer is that DeepSeek chat exists in three places — the browser app at chat.deepseek.com, the official iOS and Android apps, and a developer API that speaks the same wire format as OpenAI. They look similar, but they bill differently, remember conversations differently, and expose different controls. This guide walks through each surface as of April 24, 2026 — the day DeepSeek V4 Preview shipped — and shows you which one to pick for everyday questions, long-document work, coding, or building your own product on top.

What “DeepSeek chat” actually means in 2026

The phrase gets used three different ways, and most confusion online comes from mixing them up:

- The web chat at chat.deepseek.com — a free ChatGPT-style interface in the browser.

- The mobile apps on iOS and Android, which share accounts and history with the web.

- The legacy API model ID deepseek-chat, which developers call from code. This one is retiring soon; details below.

All three are powered by the same underlying model family. DeepSeek-V4 Preview is officially live and open-sourced, with DeepSeek-V4-Pro at 1.6T total / 49B active parameters positioned against top closed-source models, and DeepSeek-V4-Flash at 284B total / 13B active parameters as the fast, efficient, economical choice. Both ship as open-weight Mixture-of-Experts models under the MIT license and both support a 1,000,000-token context window with output up to 384,000 tokens. If you want the deeper architecture story, see our dedicated DeepSeek V4 page.

The web chat at chat.deepseek.com

This is what most people mean by “DeepSeek chat”. Open the site, sign in with an email address (Google, Gmail, Yahoo and similar global providers are accepted), and you land on a clean conversation pane with a prompt box at the bottom. No credit card, no trial countdown.

Expert Mode and Instant Mode

The V4 launch replaced the old “DeepThink” toggle with two named modes. DeepSeek-V4 Preview launched on Hugging Face, the DeepSeek API, and chat.deepseek.com, with Expert Mode mapped to V4-Pro and Instant Mode mapped to V4-Flash. In practice:

- Instant Mode (V4-Flash). Fast replies, low latency. Use it for everyday questions, drafting, summarising, translation, light coding.

- Expert Mode (V4-Pro). The frontier tier. Slower, but stronger on long multi-step reasoning, agentic coding and difficult maths. Use it when the answer quality matters more than the wait.

Both modes also expose a thinking toggle. When enabled, the model first produces a reasoning trace, then the final answer. In the API that trace is returned as reasoning_content alongside the final content; in the web UI you see it rendered as a collapsible block above the reply.
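To make the API side of that split concrete, here is a minimal sketch that separates the two parts of a raw chat-completion response. It assumes the shape described above, with reasoning_content and content both on choices[0].message. The split_reply helper and the sample payload are hypothetical and written for illustration; they are not captured API output.

```python
import json
from typing import Optional, Tuple


def split_reply(raw: str) -> Tuple[Optional[str], str]:
    """Return (reasoning_trace, final_answer) from a chat-completion JSON body.

    reasoning_content is only expected when thinking is enabled, so it may be missing.
    """
    message = json.loads(raw)["choices"][0]["message"]
    return message.get("reasoning_content"), message["content"]


# Hypothetical response body with thinking enabled (illustrative, not captured API output).
sample = json.dumps({
    "choices": [{
        "message": {
            "role": "assistant",
            "reasoning_content": "The user asks for 2 + 2, which is 4.",
            "content": "4",
        }
    }]
})

trace, answer = split_reply(sample)
print("reasoning:", trace)
print("answer:", answer)
```

With thinking disabled, the same helper returns None for the trace and only the final answer.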

Features the web chat gives you

- File upload. Drop PDFs, Word documents, spreadsheets or images into the prompt box; the chat extracts text and reasons over it.

- Web search. A toggle that lets V4 pull fresh pages into its context before answering.

- Chat history. Conversations are saved to your account and sync across the web and mobile app.

- Voice input. Available in the mobile apps; transcribed and sent as a normal text prompt.

The mobile apps

The official DeepSeek app on the App Store and Google Play pairs with the same account. The Google Play listing was last updated on April 24, 2026, and the app is advertised as free with no in-app purchases. If you need help picking between browser and app on a given device, our DeepSeek browser vs app comparison lays out the trade-offs (battery, share-sheet access, typing comfort, file handling).

Two points worth knowing before you install:

- Verify the publisher. Several lookalike apps exist. Check the developer name is “DeepSeek” and cross-reference with our guide to verifying the official DeepSeek app.

- Availability varies by region. Italy’s data-protection authority ordered the app blocked in January 2025, and several US states restrict it on government devices. Check our availability by country page before travel.

Is DeepSeek chat free?

The consumer web and mobile products are free to use with an account. DeepSeek does not publicly document a daily message cap as of April 2026, though the web experience does apply dynamic throttles to stop automated abuse. The API is separate and billed per token — that is where “free” stops.

If your goal is to build something (a script, a bot, a product), you will want the API. That is a different surface and a different mental model. Full pricing on the DeepSeek API pricing page; a side-by-side of consumer vs developer access is in our DeepSeek free vs paid write-up.

The developer side: the API behind the chat

Developers call DeepSeek through a single HTTPS endpoint. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at base URL https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface against the same base URL, so the Anthropic SDK works by swapping base_url and api_key.
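Because the endpoint is plain HTTPS, you can inspect what an SDK sends without installing anything. This sketch uses only Python's standard library to build the request but does not send it. The Bearer authorization header follows the OpenAI-compatible convention. The DEEPSEEK_API_KEY environment variable and the build_chat_request helper are names chosen for this example.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.deepseek.com"


def build_chat_request(api_key: str, model: str, messages: list) -> urllib.request.Request:
    """Build (but do not send) a POST /chat/completions request."""
    body = json.dumps({"model": model, "messages": messages}).encode("utf-8")
    return urllib.request.Request(
        url=f"{BASE_URL}/chat/completions",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
    )


req = build_chat_request(
    api_key=os.environ.get("DEEPSEEK_API_KEY", "YOUR_DEEPSEEK_KEY"),
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Hello"}],
)
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would send it; the response body is JSON.
```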

Here is a minimal Python example using the OpenAI SDK pattern:

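```python
import os

from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    # Assumes the key is stored in DEEPSEEK_API_KEY; a literal "YOUR_DEEPSEEK_KEY" also works for a quick test.
    api_key=os.environ["DEEPSEEK_API_KEY"],
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a concise assistant."},
        {"role": "user", "content": "Summarise the Treaty of Westphalia in three bullets."},
    ],
    temperature=1.3,
    max_tokens=512,
)

# The reply text lives on the first choice.
print(resp.choices[0].message.content)
```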

To switch on thinking mode, set reasoning_effort="high" and pass extra_body={"thinking": {"type": "enabled"}}. For maximum reasoning effort use reasoning_effort="max". Two technical points that catch newcomers out:

- The API is stateless. DeepSeek does not remember previous turns on its side. You must resend the full messages array with each request. Contrast that with the web chat, which keeps history server-side for your account.

- Thinking mode is a parameter, not a separate model. Both deepseek-v4-pro and deepseek-v4-flash accept the same reasoning_effort / thinking flags. There is no separate V4 “reasoner” model to choose.
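Both points affect how a client has to be written. The sketch below keeps the conversation on the client side and resends the whole messages list on every call. When thinking is on, it adds the reasoning_effort and extra_body values quoted above. The Conversation class and its injectable send function are hypothetical. Here, a fake send stands in so the example runs offline. The sketch also assumes that only the final content needs to go back into the history, not the reasoning trace.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Hypothetical helper: keeps chat history on the client for a stateless API."""

    def __init__(self, send: Callable[..., str], model: str, system: str, thinking: bool = False):
        self.send = send  # performs one API request and returns the reply text
        self.model = model
        self.thinking = thinking
        self.messages: List[Message] = [{"role": "system", "content": system}]

    def request_kwargs(self) -> dict:
        """Build the arguments for one request, always including the full history."""
        kwargs = {"model": self.model, "messages": list(self.messages)}
        if self.thinking:
            # Flags as quoted in this guide; use "max" instead of "high" for maximum effort.
            kwargs["reasoning_effort"] = "high"
            kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
        return kwargs

    def ask(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        reply = self.send(**self.request_kwargs())
        # Assumption: only the final answer goes back into the history, not the reasoning trace.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Offline stand-in for the real API call; it records how many messages each request carried.
sent_sizes: List[int] = []


def fake_send(**kwargs) -> str:
    sent_sizes.append(len(kwargs["messages"]))
    return f"reply #{len(sent_sizes)}"


chat = Conversation(fake_send, model="deepseek-v4-pro", system="You are concise.", thinking=True)
chat.ask("First question")
chat.ask("A follow-up that depends on the first answer")
print(sent_sizes)  # → [2, 4]: the second request resends system, user, assistant and the new user turn
```

Against the real API, send could be a small wrapper such as lambda **kw: client.chat.completions.create(**kw).choices[0].message.content, which reuses the client from the earlier example.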

Step-by-step setup, authentication, key management and error handling are covered in our DeepSeek API getting started tutorial.

The legacy deepseek-chat model ID

If you find the string deepseek-chat in older code or tutorials, it refers to the legacy API model ID. It still works during the V4 migration window. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking modes respectively, and both will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migrating is a one-line change: swap model="deepseek-chat" for model="deepseek-v4-flash". For deepseek-reasoner, make the same swap and also enable thinking mode. The base_url does not change.
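If a codebase has many call sites, a small lookup keeps the migration explicit. This sketch encodes the routing described above. deepseek-chat maps to deepseek-v4-flash without thinking, and deepseek-reasoner maps to deepseek-v4-flash with thinking. The LEGACY_ROUTES table and migrate_request function are illustrative names. Using reasoning_effort="high" as the stand-in for the old reasoner behaviour is an assumption.

```python
# Legacy ID -> (V4 model ID, thinking enabled?), per the routing described above.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of chat-completion kwargs with any legacy model ID replaced."""
    route = LEGACY_ROUTES.get(kwargs.get("model"))
    if route is None:
        return dict(kwargs)  # already a V4 ID (or unknown): leave it alone
    new_model, thinking = route
    migrated = dict(kwargs, model=new_model)
    if thinking:
        # Assumed equivalent of the old reasoner: thinking flags as quoted in this guide.
        migrated["reasoning_effort"] = "high"
        extra = dict(migrated.get("extra_body") or {})
        extra["thinking"] = {"type": "enabled"}
        migrated["extra_body"] = extra
    return migrated


print(migrate_request({"model": "deepseek-reasoner", "messages": []}))
```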

Pricing snapshot for the API

V4 pricing is listed per 1 million tokens, as of April 24, 2026. Always re-check on the DeepSeek API pricing page before you commit to a production estimate — Preview pricing can move.

| Model tier | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

For Flash, DeepSeek charges $0.14 per million input tokens (cache miss) and $0.28 per million output tokens. For Pro, the rates are $0.435 per million input tokens (cache miss) and $0.87 per million output tokens during the 75% promo through 2026-05-31, against list prices of $1.74 and $3.48. The off-peak night discount that existed in 2025 has not been reintroduced, so do not budget around it.

A worked example (V4-Flash)

Say you run 1,000,000 API calls with a 2,000-token cached system prompt, a 200-token user message (uncached each time) and a 300-token response. At V4-Flash rates:

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60
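The same arithmetic can be written as a reusable function, so you can swap in your own call volume and token counts. The rates are copied from the pricing snapshot table and will go stale. The Rates class and workload_cost function are names chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1 million tokens (April 24, 2026 snapshot; re-check before relying on it)."""
    cache_hit: float
    cache_miss: float
    output: float


FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_PROMO = Rates(cache_hit=0.003625, cache_miss=0.435, output=0.87)  # promo through 2026-05-31
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)


def workload_cost(rates: Rates, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Total USD for `calls` requests, each with the given per-call token counts."""

    def millions(tokens_per_call: int) -> float:
        return calls * tokens_per_call / 1_000_000

    total = (
        millions(cached_in) * rates.cache_hit
        + millions(uncached_in) * rates.cache_miss
        + millions(out) * rates.output
    )
    return round(total, 2)


# The worked example: 1M calls, 2,000 cached + 200 uncached input tokens, 300 output tokens per call.
for name, rates in [("Flash", FLASH), ("Pro (promo)", PRO_PROMO), ("Pro (list)", PRO_LIST)]:
    print(f"{name}: ${workload_cost(rates, 1_000_000, 2_000, 200, 300):,.2f}")
```

At the snapshot rates this prints $117.60 for Flash, $355.25 for Pro at the promo rates and $1,421.00 for Pro at list prices. The function ignores the first cache miss that a real cached prefix would incur.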

The same workload on V4-Pro comes to about $1,421 at list prices, roughly twelve times the Flash bill. At the promo rates through 2026-05-31 it is about $355, roughly three times the Flash bill. Flash is the default recommendation for chat-shaped workloads; Pro is for frontier-tier coding or agentic jobs where the benchmark lift justifies the spend. See our DeepSeek cost estimator for quick what-ifs on your own numbers.

Web chat vs API: a decision table

| Question | Use the web/app | Use the API |
|---|---|---|
| Need to start in the next 30 seconds? | Yes | No |
| Want automatic conversation history? | Yes, server-side per account | No (stateless; you resend each turn) |
| Building a product or internal tool? | No | Yes |
| Need JSON output, tool calls, streaming, context caching? | No | Yes |
| Care about per-request cost? | Free | Billed per token |
| Uploading a 400-page PDF to chat about? | Yes | Yes (via file text in prompt) |

Where DeepSeek chat fits in the field

V4 lands in a crowded frontier. On DeepSeek’s own reported numbers, V4-Pro (reasoning_effort=max) “outperforms Claude Sonnet 4.5 and approaches the level of Opus 4.5” on agent tasks, with internal numbers suggesting the real-workload gap is smaller than headline scores indicate. Independent reviewers frame it more narrowly: DeepSeek-V4-Pro at maximum reasoning effort demonstrates superior performance relative to GPT-5.2 and Gemini-3.0-Pro on standard reasoning benchmarks, but falls marginally short of GPT-5.4 and Gemini-3.1-Pro, suggesting a trajectory that trails current-generation frontier models by approximately 3 to 6 months.

Press coverage tracks the same shape — V4 surpasses some U.S. models such as GPT-5.2 and Gemini 3.0-Pro, but sits slightly below GPT-5.4 and Gemini 3.1-Pro. For head-to-head context, see DeepSeek vs ChatGPT and DeepSeek vs Claude. For the wider competitive field, the AI comparison hub carries the full set. Primary source for the launch numbers: Al Jazeera’s launch-day write-up.

Privacy, regions and what to avoid pasting

DeepSeek processes web and app conversations on servers governed by Chinese law. That is a legitimate concern for sensitive client data, regulated information (health, legal, financial) or anything under NDA. If you fall into any of those categories, either use the open weights locally (see our install DeepSeek locally tutorial) or route through a provider hosted in a jurisdiction you trust. The longer discussion is on our DeepSeek privacy page.

Quick reference: which surface for which job

- Casual questions, drafting, study help: web chat in Instant Mode.

- Long research or long documents: web chat, Expert Mode, with file upload and web search on.

- Coding agent or automated script: API, deepseek-v4-pro, thinking enabled.

- High-volume classification or extraction: API, deepseek-v4-flash, JSON mode, system prompt cached.

- Anything sensitive or regulated: local weights via Ollama or a trusted host.
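The two API rows in this list translate into request settings roughly as shown below. This is a sketch, not an official recipe, and it rests on two assumptions. First, response_format={"type": "json_object"} is the OpenAI-style JSON mode switch and is assumed to behave the same way on V4. Second, keeping the system prompt byte-for-byte identical at the start of every request is assumed to be what lets the context cache register hits. The preset functions and the prompt text are hypothetical.

```python
import json

# Kept identical across requests so the shared prefix can be served from cache (assumption).
EXTRACTION_SYSTEM = (
    "Extract the company name and invoice total from the user's text. "
    'Reply only with JSON like {"company": "...", "total": 0.0}.'
)


def coding_agent_request(task: str) -> dict:
    """deepseek-v4-pro with thinking enabled, for agentic coding jobs."""
    return {
        "model": "deepseek-v4-pro",
        "messages": [{"role": "user", "content": task}],
        "reasoning_effort": "high",
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def extraction_request(document: str) -> dict:
    """deepseek-v4-flash in JSON mode with a fixed, cacheable system prompt."""
    return {
        "model": "deepseek-v4-flash",
        "messages": [
            {"role": "system", "content": EXTRACTION_SYSTEM},
            {"role": "user", "content": document},
        ],
        "response_format": {"type": "json_object"},
    }


req = extraction_request("Invoice from Acme Ltd, total due 1,250.00 EUR.")
print(json.dumps(req, indent=2))
# With the OpenAI SDK client: client.chat.completions.create(**req)
```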

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek chat really free?

The browser version at chat.deepseek.com and the official mobile apps are free to use with a DeepSeek account, with no listed subscription tier and no public daily message cap as of April 2026. Heavy automated traffic can hit soft throttles, and regional availability varies. Paid billing only kicks in when you use the developer API, which is priced per token. For a breakdown, see our is DeepSeek free guide.

How is DeepSeek chat different from the DeepSeek API?

The chat is a user-facing product with conversation history, file upload and a mode toggle. The API is a stateless developer endpoint at POST /chat/completions — you resend the full message history on every call, and you pay per token. The web keeps state for you; the API never does. Full details in our DeepSeek API documentation overview.

What happened to the deepseek-chat model ID?

It is a legacy API identifier that currently routes to deepseek-v4-flash in non-thinking mode. DeepSeek has announced it will be retired at 15:59 UTC on July 24, 2026, together with deepseek-reasoner. Migrating is a one-line model= swap; the base URL and auth stay the same. Our DeepSeek OpenAI SDK compatibility page walks through the swap.

Can DeepSeek chat read PDFs and images?

Yes. The web and mobile chat both accept file uploads: PDFs, Word documents, plain text, spreadsheets and common image formats. The app extracts text and, for images, performs visual understanding before responding. There is a per-file size limit and a total-context limit (V4 defaults to a 1,000,000-token window). For multimodal specifics see DeepSeek capabilities.

Does DeepSeek chat remember previous conversations?

On the web and in the mobile apps, yes — chats are saved to your account and available across devices once you log in. In the API, no: every request is independent and the client must resend the full conversation history to preserve context. That is a deliberate architectural choice, not a bug. More on the user-side behaviour in our DeepSeek account setup guide.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

Context sources

- News: Al Jazeera, “China's DeepSeek unveils latest model” (April 2026). Primary source for V4 launch-day numbers and competitive framing. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.