DeepSeek Benchmarks 2026: What the V4 Numbers Actually Say

If you landed here after yesterday’s flurry of “DeepSeek beats Claude” headlines, you probably want the same thing I did at 6 a.m.: the actual numbers, the benchmark versions, and a clear read on where DeepSeek V4 wins, ties, and loses. This piece walks through the full 2026 DeepSeek benchmark picture (SWE-Bench Verified, Terminal-Bench 2.0, LiveCodeBench, MMLU-Pro, GPQA, Humanity’s Last Exam, and the long-context tests), with every score attributed to a named model version. V4 arrived as a two-model preview on April 24, 2026, and the results genuinely move the open-weights frontier. Here is what holds up under scrutiny.

The short version: where V4 lands on the 2026 leaderboard

DeepSeek shipped V4 as a preview on April 24, 2026, in two tiers: DeepSeek V4-Pro at 1.6T total parameters (49B active) and DeepSeek V4-Flash at 284B total (13B active). Both are open-weight Mixture-of-Experts models with a 1,000,000-token context window and up to 384,000 tokens of output. For both models, the repository and the weights are released under the MIT License.

Across the public benchmark set DeepSeek published alongside the technical report, V4-Pro at maximum reasoning effort (reasoning_effort=max) leads on several coding benchmarks, is competitive on math and reasoning, and clearly trails Gemini-3.1-Pro on factual world knowledge. With an expanded reasoning-token budget, V4-Pro at max effort outperforms GPT-5.2 and Gemini-3.0-Pro on standard reasoning benchmarks. It still falls marginally short of GPT-5.4 and Gemini-3.1-Pro, which suggests a development trajectory roughly 3 to 6 months behind the current frontier.

Headline benchmark table: V4-Pro (reasoning_effort=max) vs frontier closed models

Every row below is drawn from DeepSeek’s V4 release materials and corroborating coverage. I have named each competitor by the exact version DeepSeek benchmarked against — if you are comparing pricing in the same sentence, keep the version pinned.

| Benchmark | V4-Pro (reasoning_effort=max) | Claude Opus 4.6 | GPT-5.4 | Gemini-3.1-Pro |
|---|---|---|---|---|
| SWE-Bench Verified | 80.6 | 80.8 | n/r | 80.6 |
| Terminal-Bench 2.0 | 67.9 | 65.4 | 75.1 | 68.5 |
| LiveCodeBench Pass@1 | 93.5 | 88.8 | n/r | 91.7 |
| Codeforces rating | 3206 | n/r | 3168 | 3052 |
| IMOAnswerBench | 89.8 | 75.3 | 91.4 | 81.0 |
| HMMT 2026 | 95.2 | 96.2 | 97.7 | n/r |
| Humanity’s Last Exam | 37.7 | 40.0 | 39.8 | 44.4 |
| SimpleQA-Verified | 57.9 | n/r | n/r | 75.6 |
| MMLU-Pro | 87.5 | n/r | n/r | n/r |
| GPQA Diamond | 90.1 | n/r | n/r | n/r |

Scores are percentages except the Codeforces rating; n/r = not reported in DeepSeek’s comparison.
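To make the wins/ties/losses read mechanical rather than eyeballed, here is a small Python sketch. It encodes the headline table above and computes V4-Pro’s margin against the best-scoring reported competitor on each row. It is a convenience script written for this article, not a DeepSeek tool. None stands in for n/r, and MMLU-Pro and GPQA Diamond are left out because no competitor scores were reported.

```python
from typing import Dict, Optional, Tuple

# Headline-table scores (V4-Pro at reasoning_effort=max); None means n/r.
# MMLU-Pro and GPQA Diamond are omitted: no competitor scores were reported.
SCORES: Dict[str, Dict[str, Optional[float]]] = {
    "SWE-Bench Verified": {"V4-Pro": 80.6, "Claude Opus 4.6": 80.8, "GPT-5.4": None, "Gemini-3.1-Pro": 80.6},
    "Terminal-Bench 2.0": {"V4-Pro": 67.9, "Claude Opus 4.6": 65.4, "GPT-5.4": 75.1, "Gemini-3.1-Pro": 68.5},
    "LiveCodeBench Pass@1": {"V4-Pro": 93.5, "Claude Opus 4.6": 88.8, "GPT-5.4": None, "Gemini-3.1-Pro": 91.7},
    "Codeforces rating": {"V4-Pro": 3206, "Claude Opus 4.6": None, "GPT-5.4": 3168, "Gemini-3.1-Pro": 3052},
    "IMOAnswerBench": {"V4-Pro": 89.8, "Claude Opus 4.6": 75.3, "GPT-5.4": 91.4, "Gemini-3.1-Pro": 81.0},
    "HMMT 2026": {"V4-Pro": 95.2, "Claude Opus 4.6": 96.2, "GPT-5.4": 97.7, "Gemini-3.1-Pro": None},
    "Humanity's Last Exam": {"V4-Pro": 37.7, "Claude Opus 4.6": 40.0, "GPT-5.4": 39.8, "Gemini-3.1-Pro": 44.4},
    "SimpleQA-Verified": {"V4-Pro": 57.9, "Claude Opus 4.6": None, "GPT-5.4": None, "Gemini-3.1-Pro": 75.6},
}


def margin_vs_best_rival(row: Dict[str, Optional[float]], model: str = "V4-Pro") -> Tuple[str, float]:
    """Return (best reported competitor, model score minus that competitor's score)."""
    # Drop the model itself and any competitor without a reported score.
    rivals = {name: score for name, score in row.items()
              if name != model and score is not None}
    best = max(rivals, key=rivals.__getitem__)
    # Round to one decimal to hide floating-point noise in the subtraction.
    return best, round(row[model] - rivals[best], 1)


if __name__ == "__main__":
    for bench, row in SCORES.items():
        rival, delta = margin_vs_best_rival(row)
        verdict = "leads" if delta > 0 else "ties" if delta == 0 else "trails"
        print(f"{bench:<24} V4-Pro {verdict} {rival} by {abs(delta)}")
```

Comparing against the best rival, rather than against each vendor separately, is a deliberately strict lens. On that view V4-Pro clearly leads only on LiveCodeBench and Codeforces.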

Reading the coding numbers honestly

The coding benchmarks are where V4-Pro pulls ahead of Anthropic. At max reasoning effort, V4-Pro posts a LiveCodeBench Pass@1 of 93.5, the highest score among all models evaluated. That puts it ahead of Gemini 3.1 Pro (91.7) and Claude Opus 4.6 Max (88.8). Its Codeforces rating of 3206 also leads GPT-5.4 xHigh (3168) and Gemini 3.1 Pro (3052). Against Claude Opus 4.6 specifically, the result is near-identical SWE-Bench Verified scores, a 2.5-point Terminal-Bench 2.0 lead (67.9 vs 65.4), and a roughly 7x gap in list output price.

That said, SWE-Bench Verified is effectively a tie: V4-Pro sits at 80.6, a fraction below Claude (80.8) and level with Gemini (80.6). I would not build a procurement decision around a 0.2-point gap on a benchmark with known noise. The Terminal-Bench 2.0 result is the one I’d weight more, because this benchmark involves real autonomous terminal execution with a 3-hour timeout. The lead over Claude matters more for agentic workflows than a single-turn coding result would. Keep it in proportion, though: GPT-5.4 (75.1) and Gemini-3.1-Pro (68.5) both score higher on the same test.

Where V4-Pro clearly trails

Three benchmarks expose the gap the DeepSeek team openly acknowledges:

- Humanity’s Last Exam (HLE). At 37.7%, V4-Pro sits below Claude (40.0%) and GPT-5.4 (39.8%), and well below Gemini-3.1-Pro (44.4%).

- SimpleQA-Verified. SimpleQA-Verified at 57.9% versus Gemini’s 75.6% reveals a meaningful factual knowledge retrieval gap. If your use case requires accurate real-world knowledge recall — not just code generation — Gemini holds a clear edge.

- HMMT 2026 math. Claude (96.2%) and GPT-5.4 (97.7%) both finish ahead of V4-Pro (95.2%), by 1.0 and 2.5 points respectively.

If you want the wider context on how DeepSeek got here — from the V3 cost surprise to the R1 release that set the efficiency narrative — the DeepSeek latest updates log tracks the release cadence month by month.

V4-Flash: the more interesting story

Flash is the surprise of the release. At 284B total / 13B active, it should be the clear step-down — but DeepSeek’s own notes flag a narrower gap than the parameter count suggests. On SWE-bench Verified it scores 79.0% versus V4-Pro’s 80.6% — a 1.6-point gap. On LiveCodeBench it hits 91.6% versus 93.5%. For most developer coding tasks, these are functionally equivalent results.

Per DeepSeek, V4-Flash-Max achieves reasoning performance comparable to the Pro version when given a larger thinking budget. Its smaller parameter scale still places it slightly behind on pure knowledge tasks and the most complex agentic workflows. Translation: if your workload is code-weighted and latency-sensitive, Flash is the default pick. V4-Pro earns its roughly 12x list-price premium over Flash (about 3x during the promo) only when you’re hitting the hardest agentic tasks.

One niche result worth flagging

On the Putnam-200 math proof setup, V4-Flash-Max scores 81.0, compared to 35.5 for Seed-2.0-Pro, 26.5 for Gemini-3-Pro, and 26.5 for Seed-1.5-Prover. That is not a typo. Theorem-proving workloads appear to be an unusually strong pocket for the Flash tier, likely because the benchmark rewards persistent step-by-step reasoning over broad world knowledge.

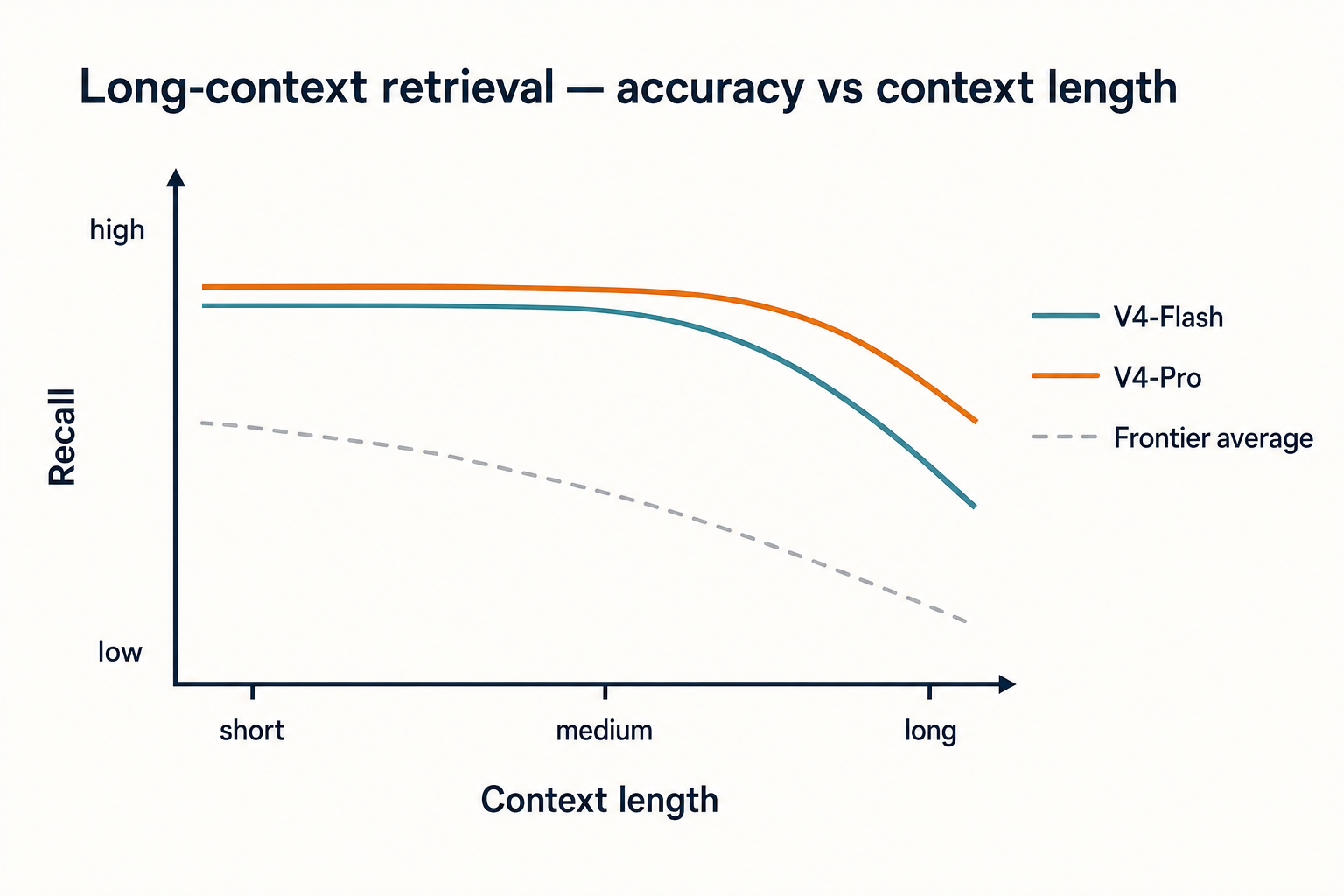

Long-context benchmarks and architecture efficiency

The 1M-token context window is not marketing. On long-context retrieval, V4-Pro-Max scores 83.5% on the MRCR 1M (MMR) needle-in-a-haystack retrieval test. DeepSeek reports that this puts it ahead of Gemini-3.1-Pro on academic long-context benchmarks.

The efficiency gains come from DeepSeek’s new attention stack. The hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) dramatically improves long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2. That is the number doing the real work behind the pricing tier below — you cannot ship $0.14/M input on a 1M-context model without it.
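Inverting those two fractions gives the reduction factors DeepSeek is claiming against V3.2 at 1M context. This is simple arithmetic on the reported figures, not an independent measurement:

$$
\frac{\text{FLOPs}_{\text{V4-Pro}}}{\text{FLOPs}_{\text{V3.2}}} = 0.27 \;\Rightarrow\; \frac{1}{0.27} \approx 3.7\times \text{ fewer FLOPs per token},
\qquad
\frac{\text{KV}_{\text{V4-Pro}}}{\text{KV}_{\text{V3.2}}} = 0.10 \;\Rightarrow\; \frac{1}{0.10} = 10\times \text{ smaller KV cache}
$$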

API pricing in context (and how to cost out a benchmark-grade run)

Pricing is where benchmark results translate into real budgets. Per DeepSeek’s pricing page as of April 2026:

| Model | Input, cache hit (per 1M tokens) | Input, cache miss (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.0145 list ($0.003625 promo) | $1.74 list ($0.435 promo) | $3.48 list ($0.87 promo) |

V4-Pro promo prices (75% off list) run through 2026-05-31.

For context on competitor rates at the same date: OpenAI’s GPT-5.4 costs $2.50 per 1M input tokens and $15.00 per 1M output tokens, while Claude Opus 4.6 costs $5 per 1M input tokens and $25 per 1M output tokens. At list, V4-Pro’s $3.48/M output is roughly one-seventh of Opus 4.6’s. At the promo rate of $0.87/M (75% off through 2026-05-31), it is roughly 1/29th. V4-Flash at $0.28/M output sits in a different bracket entirely: it is the cheapest of the small models, undercutting even OpenAI’s GPT-5.4 Nano. V4-Pro, for its part, is the cheapest of the larger frontier models.

A worked cost example for V4-Flash — 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response:

- Cache-hit input: 2,000 × 1,000,000 × $0.0028/M = $5.60

- Cache-miss input: 200 × 1,000,000 × $0.14/M = $28.00

- Output: 300 × 1,000,000 × $0.28/M = $84.00

- Total: $117.60

The same workload on V4-Pro runs $29.00 + $348.00 + $1,044.00 = $1,421.00 at list price (currently $355.25 during the 75% promo through 2026-05-31). Choose the tier based on where your workload sits on the benchmark table, not on brand. If you want to play with other shapes, our DeepSeek pricing calculator and DeepSeek cost estimator cover both tiers.
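The worked examples above reduce to one formula per tier: tokens per call × calls × rate ÷ 1M, summed over the three billing buckets. Below is a minimal Python sketch of that formula, assuming the April 2026 rates from the pricing table above. It treats the V4-Pro promo as a flat 75% discount across all three buckets, which matches the listed promo prices. `TierPrice` and `workload_cost` are illustrative names, not part of any DeepSeek SDK.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TierPrice:
    """USD per 1M tokens, from the April 2026 pricing table (list prices)."""
    cache_hit_input: float
    cache_miss_input: float
    output: float


PRICES = {
    "deepseek-v4-flash": TierPrice(0.0028, 0.14, 0.28),
    "deepseek-v4-pro": TierPrice(0.0145, 1.74, 3.48),
}


def workload_cost(price: TierPrice, calls: int, cached_tokens: int,
                  fresh_input_tokens: int, output_tokens: int,
                  discount: float = 0.0) -> float:
    """Total USD for `calls` identical requests; `discount` is a flat fraction off list."""
    # Per-call token-dollars, still in "per 1M tokens" units.
    per_call = (
        cached_tokens * price.cache_hit_input
        + fresh_input_tokens * price.cache_miss_input
        + output_tokens * price.output
    )
    total = calls * per_call / 1_000_000 * (1 - discount)
    return round(total, 2)  # round once at the end to hide float noise


if __name__ == "__main__":
    for model, price in PRICES.items():
        print(model, workload_cost(price, 1_000_000, 2_000, 200, 300))
    # V4-Pro with the 75% promo through 2026-05-31
    print("deepseek-v4-pro (promo)",
          workload_cost(PRICES["deepseek-v4-pro"], 1_000_000, 2_000, 200, 300, discount=0.75))
```

Run as a script, this prints 117.6 for Flash, 1421.0 for Pro at list and 355.25 for Pro on promo, matching the hand-worked figures. If you need billing-exact arithmetic rather than an estimate, swap the floats for `decimal.Decimal`.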

How to reproduce these numbers against the API

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also exposes an Anthropic-compatible API on the same host. To switch an existing integration, keep base_url and change model to deepseek-v4-pro or deepseek-v4-flash. Both models support the full 1M context and two modes, Thinking and Non-Thinking. The API is stateless: every request must resend the conversation history, unlike the web chat, which maintains session state.
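Because the API keeps no session, the client owns the transcript. Below is a minimal sketch of that bookkeeping, assuming an OpenAI-SDK-style client (anything exposing `client.chat.completions.create`). `StatelessChat` is a hypothetical helper. It stores only the final `content` of each reply, on the assumption that `reasoning_content` should not be echoed back; verify that against the current DeepSeek docs before relying on it.

```python
from typing import Any, Dict, List, Optional


class StatelessChat:
    """Hypothetical helper: keeps the transcript client-side and resends it on every call."""

    def __init__(self, client: Any, model: str = "deepseek-v4-flash",
                 system: Optional[str] = None) -> None:
        self.client = client
        self.model = model
        self.messages: List[Dict[str, str]] = []
        if system is not None:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str, **params: Any) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The full history goes out with every request; the server remembers nothing.
        resp = self.client.chat.completions.create(
            model=self.model, messages=list(self.messages), **params
        )
        answer = resp.choices[0].message.content
        # Assumption: keep only the final answer, not reasoning_content.
        self.messages.append({"role": "assistant", "content": answer})
        return answer
```

With a real client, pass an `OpenAI(base_url="https://api.deepseek.com", api_key=...)` instance. Keeping transport (the client) separate from bookkeeping also makes the class easy to test with a stub.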

A minimal Python snippet to reproduce a thinking-mode run, using the OpenAI SDK pattern:

```python
import os

from openai import OpenAI

# Read the key from the environment rather than hard-coding it
# (DEEPSEEK_API_KEY is just a naming convention).
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ["DEEPSEEK_API_KEY"],
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Solve Putnam 2024 A1."}],
    # The headline table used reasoning_effort=max; "high" is a lighter setting.
    reasoning_effort="high",
    # Thinking mode is toggled per request through a DeepSeek-specific body field.
    extra_body={"thinking": {"type": "enabled"}},
    max_tokens=32000,
)

# The reasoning trace and the final answer come back in separate fields.
print(resp.choices[0].message.reasoning_content)
print(resp.choices[0].message.content)
```

Thinking mode is a request parameter on either V4 tier, not a separate model ID, and the response returns reasoning_content alongside the final content. For Think-Max runs, DeepSeek recommends setting the context window to at least 384K tokens. The DeepSeek API documentation covers the other parameters worth knowing: temperature, top_p, max_tokens, JSON mode, tool calling, streaming, FIM (Beta; requires thinking: {"type": "disabled"}), Chat Prefix Completion (Beta) and automatic context caching.

If you are still on the legacy deepseek-chat or deepseek-reasoner IDs, note that both will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Until then they route to deepseek-v4-flash in non-thinking and thinking mode respectively. Migration is a one-line model swap; the base URL does not change.
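The swap itself is one line, but each legacy ID also implies a thinking mode. Pinning that mode explicitly avoids depending on whatever default the V4 IDs use. Below is a small sketch, assuming request parameters are built as a dict before calling the SDK. `LEGACY_ROUTES` and `migrate_request` are hypothetical names. The mapping mirrors the current routing described above and uses the same `thinking` field as the snippet earlier in this section.

```python
from typing import Any, Dict, Tuple

# Hypothetical mapping based on the routing note: legacy ID -> (V4 model ID, thinking type).
LEGACY_ROUTES: Dict[str, Tuple[str, str]] = {
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
}


def migrate_request(params: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of params with legacy model IDs swapped for their V4 equivalents."""
    route = LEGACY_ROUTES.get(params.get("model", ""))
    if route is None:
        return dict(params)  # already a V4 ID (or unknown): leave untouched
    new_model, thinking = route
    migrated = dict(params, model=new_model)
    extra_body = dict(migrated.get("extra_body") or {})
    # Pin the thinking mode explicitly; an existing thinking setting wins.
    extra_body.setdefault("thinking", {"type": thinking})
    migrated["extra_body"] = extra_body
    return migrated
```

Usage is a thin wrapper around the existing call, e.g. `client.chat.completions.create(**migrate_request(params))`. Because the function copies rather than mutates, the original request dict stays usable for logging or diffing.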

How V4 stacks up against earlier DeepSeek generations

For readers benchmarking upgrade paths rather than new builds:

- DeepSeek V3.2 is the direct predecessor. V4-Pro needs ~27% of the per-token FLOPs and ~10% of the KV cache at 1M context, per the technical report.

- DeepSeek R1 was the January 2025 reasoning release that set the cost-efficiency narrative — V4-Pro’s thinking mode effectively subsumes that role, with much higher ceilings on coding benchmarks.

- DeepSeek V3 is the late-2024 base model. Its training-cost figure is the ~$5.6M number widely cited after Reuters’ January 2025 reporting. That is distinct from the R1 figure, which Reuters later (September 2025) reported as $294,000.

For a side-by-side view of V4 against non-DeepSeek frontier models, the DeepSeek vs ChatGPT and DeepSeek vs Claude comparisons go deeper on the UX and ecosystem differences these benchmark tables leave out.

Caveats before you publish a “DeepSeek beats X” slide

- These are preview models. The preview is a limited rollout to collect real-world feedback before full release. Scores may shift on the GA build.

- Vendor-reported numbers need independent replication. V4 is open source and more efficient than its predecessor, but independent evaluations are needed to confirm claimed performance.

- Version pinning matters. Every comparison above uses Claude Opus 4.6, GPT-5.4 and Gemini-3.1-Pro, the competitor versions DeepSeek cited. Do not port these numbers into a comparison against Claude 4.7 or GPT-5.5 without re-testing.

- Knowledge gaps are real. SimpleQA and HLE are the benchmarks to watch if your workload is knowledge-heavy.

For the broader release context beyond benchmarks, the full DeepSeek news feed tracks regulatory, pricing and availability changes.

My take as a practitioner

I have been running V3.2 in production and switched two agentic-coding workloads to V4-Pro over the weekend. The coding benchmark numbers match what I’m seeing on internal evals. V4-Pro is noticeably better than V3.2 on multi-file refactors and roughly interchangeable with Claude Opus 4.6 on straightforward SWE-Bench-style tickets. It is genuinely ahead of Opus 4.6 on Terminal-Bench-shaped autonomous sessions. It is not the strongest model for open-ended factual Q&A: the SimpleQA gap is visible in real usage, and I would not pick it for a research-assistant product where world knowledge dominates. For agentic coding at roughly one-seventh of Opus 4.6’s list output price, it is the rational pick this week.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How does DeepSeek V4 compare to GPT-5.4 and Claude Opus 4.6 on benchmarks?

V4-Pro at max reasoning effort leads on two headline coding benchmarks: 93.5 on LiveCodeBench (vs Claude 88.8) and a 3206 Codeforces rating (vs GPT-5.4 3168). On Terminal-Bench 2.0 it beats Claude (67.9 vs 65.4) but trails GPT-5.4 (75.1). It also trails on Humanity’s Last Exam (37.7 vs Claude 40.0, GPT-5.4 39.8) and on factual knowledge. On SWE-Bench Verified, V4-Pro, Claude Opus 4.6 and Gemini-3.1-Pro tie within noise; GPT-5.4 was not reported. Full breakdown in the DeepSeek performance review.

What is the SWE-Bench Verified score for DeepSeek V4?

DeepSeek V4-Pro scored 80.6% on SWE-Bench Verified in the official release materials, with V4-Flash at 79.0%. That places V4-Pro within 0.2 points of Claude Opus 4.6 (80.8%) and tied with Gemini-3.1-Pro (80.6%). The numbers are vendor-reported pending independent replication — the DeepSeek V3 review covers how prior generations held up under third-party tests.

Is DeepSeek V4 actually cheaper than GPT-5.4 and Claude Opus?

Yes, materially. V4-Pro lists $1.74/M input (miss) and $3.48/M output (currently $0.435 / $0.87 during the 75% promo through 2026-05-31); Claude Opus 4.6 lists $5/M and $25/M; GPT-5.4 lists $2.50/M and $15/M. That makes V4-Pro roughly one-seventh of Opus on output at comparable coding scores. V4-Flash is cheaper again at $0.14/$0.28. Run your own numbers on the DeepSeek pricing calculator before committing.

Why does DeepSeek V4 score lower on Humanity’s Last Exam?

HLE tests expert-level cross-domain factual reasoning, and DeepSeek’s own materials acknowledge the gap: V4-Pro at 37.7 versus Gemini-3.1-Pro at 44.4. The same pattern shows on SimpleQA-Verified (57.9 vs 75.6). V4’s training appears more concentrated on coding and math than on broad world knowledge. If factual retrieval dominates your workload, see DeepSeek alternatives for research.

Can V4-Flash really match V4-Pro on benchmarks?

On many tasks, yes — within ~2 points. V4-Flash hits 79.0 on SWE-Bench Verified vs Pro’s 80.6, and 91.6 on LiveCodeBench vs 93.5. DeepSeek itself says Flash-Max “achieves comparable reasoning performance to the Pro version when given a larger thinking budget.” Pro pulls ahead on the hardest agentic coding and pure-knowledge tasks. For most developer workloads, Flash is the rational default — see DeepSeek V4-Flash.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

- Benchmark: Humanity's Last Exam. Hard knowledge-recall benchmark. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. Source of every benchmark row in the V4-Pro-Max table. Last checked: April 30, 2026

Context sources

- News: Reuters, DeepSeek V3 ~$5.6M training-cost reporting (Jan 2025). V3 training cost figure context. Last checked: April 30, 2026

- News: Reuters, DeepSeek R1 $294,000 training cost (Sep 2025). R1 cost number distinct from V3 figure. Last checked: April 30, 2026

Methodology

Benchmark data was reviewed from public reports and model documentation. Scores are presented as reference points only. Real-world performance may differ depending on prompt quality, task type, language, context length, and evaluation method.

Data confidence

High for cited scores; medium for cross-bench rankings (test setups vary); low for real-world extrapolation.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.