DeepSeek V2 Explained: The MoE Model That Started a Lineage

Why does anyone still write about DeepSeek V2 in 2026, two model generations after it shipped? Because every architectural choice in today’s V4-Pro and V4-Flash traces back to it. If you want to understand why DeepSeek’s API costs what it does, why its KV cache is small enough to serve 1M-token contexts, or where Multi-head Latent Attention came from, V2 is the answer. I ran V2 in production from mid-2024 until V3 replaced it, and the architecture still rewards close reading.

This article gives you the full V2 picture: parameter counts, the benchmarks DeepSeek published in the original technical report, what V2 was good and bad at, how to access the open weights today, and how to think about V2 now that V4 is the live frontier model. By the end you’ll know whether V2 is still worth your time.

What is DeepSeek V2?

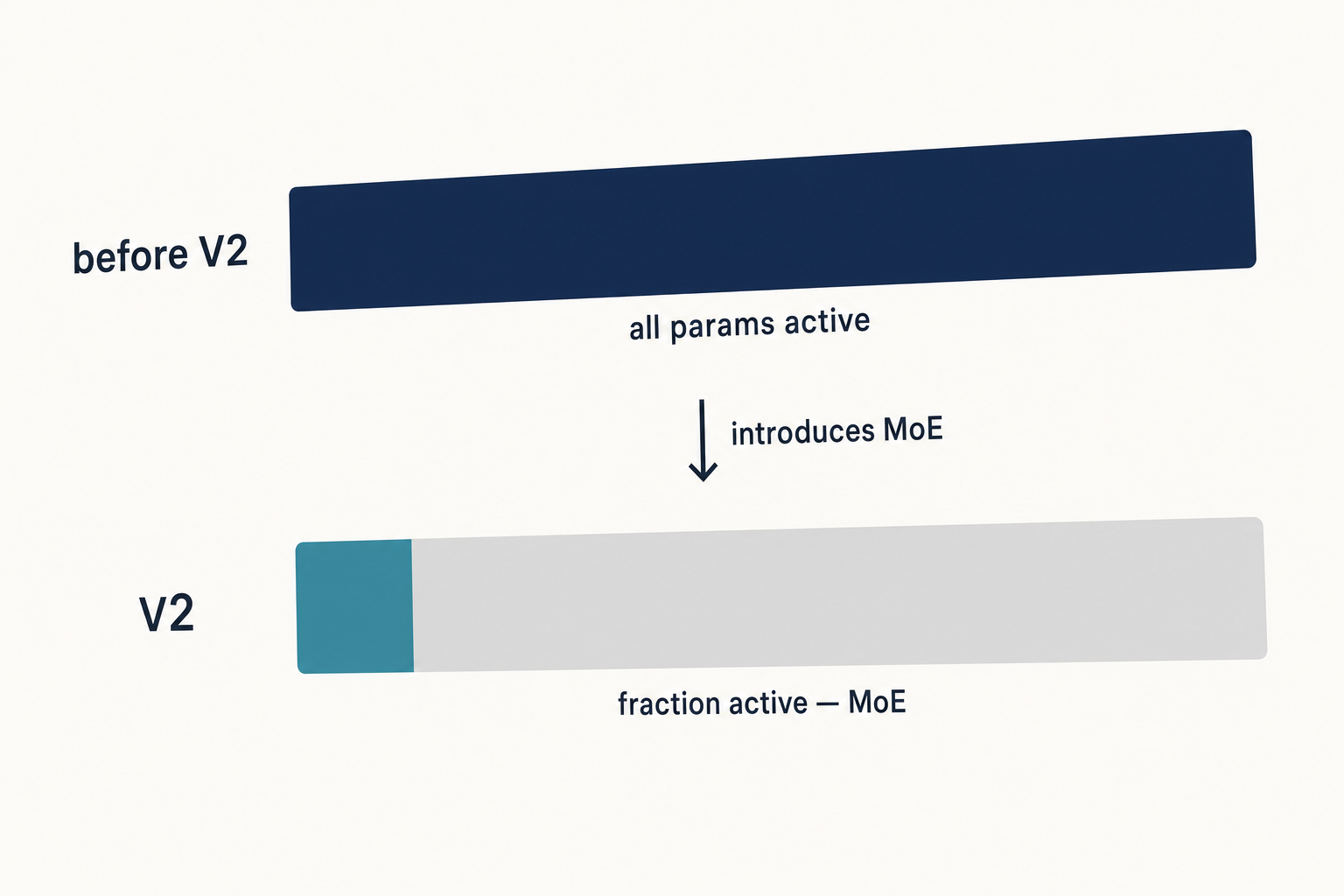

DeepSeek V2 is an open-weight Mixture-of-Experts (MoE) language model released by DeepSeek-AI on May 7, 2024. It has 236B total parameters, of which 21B are activated for each token, supports a 128K-token context window, and was designed, in DeepSeek’s words, for economical training and efficient inference. The release marked DeepSeek’s pivot from dense models (the earlier 67B line) to sparse MoE, and it introduced the two architectural ideas that every later DeepSeek model has used.

Two ideas matter most. The first is Multi-head Latent Attention (MLA), which compresses the key and value tensors that drive attention into a much smaller cache. The second is DeepSeekMoE, a fine-grained expert-routing scheme. Together they made V2 cheap to train and fast to serve. According to the V2 paper, compared with DeepSeek 67B, DeepSeek-V2 cuts training costs by 42.5%, reduces the KV cache by 93.3%, and raises maximum generation throughput to 5.76 times that of the dense model.

Architecture and lineage

Parameter count and context

- Total parameters: 236B

- Active parameters per token: 21B

- Context length: 128K tokens

- Pre-training corpus: 8.1 trillion tokens

- Release date: May 7, 2024

- Variants at launch: DeepSeek-V2 (base), DeepSeek-V2 Chat (SFT), DeepSeek-V2 Chat (RL), plus a smaller DeepSeek-V2-Lite

The pre-training corpus consists of 8.1T tokens. Compared with the corpus used in DeepSeek 67B, this corpus features an extended amount of data, especially Chinese data, and higher data quality. The Chinese data weighting is one reason V2 still scores well on Chinese-language evaluations relative to its size.

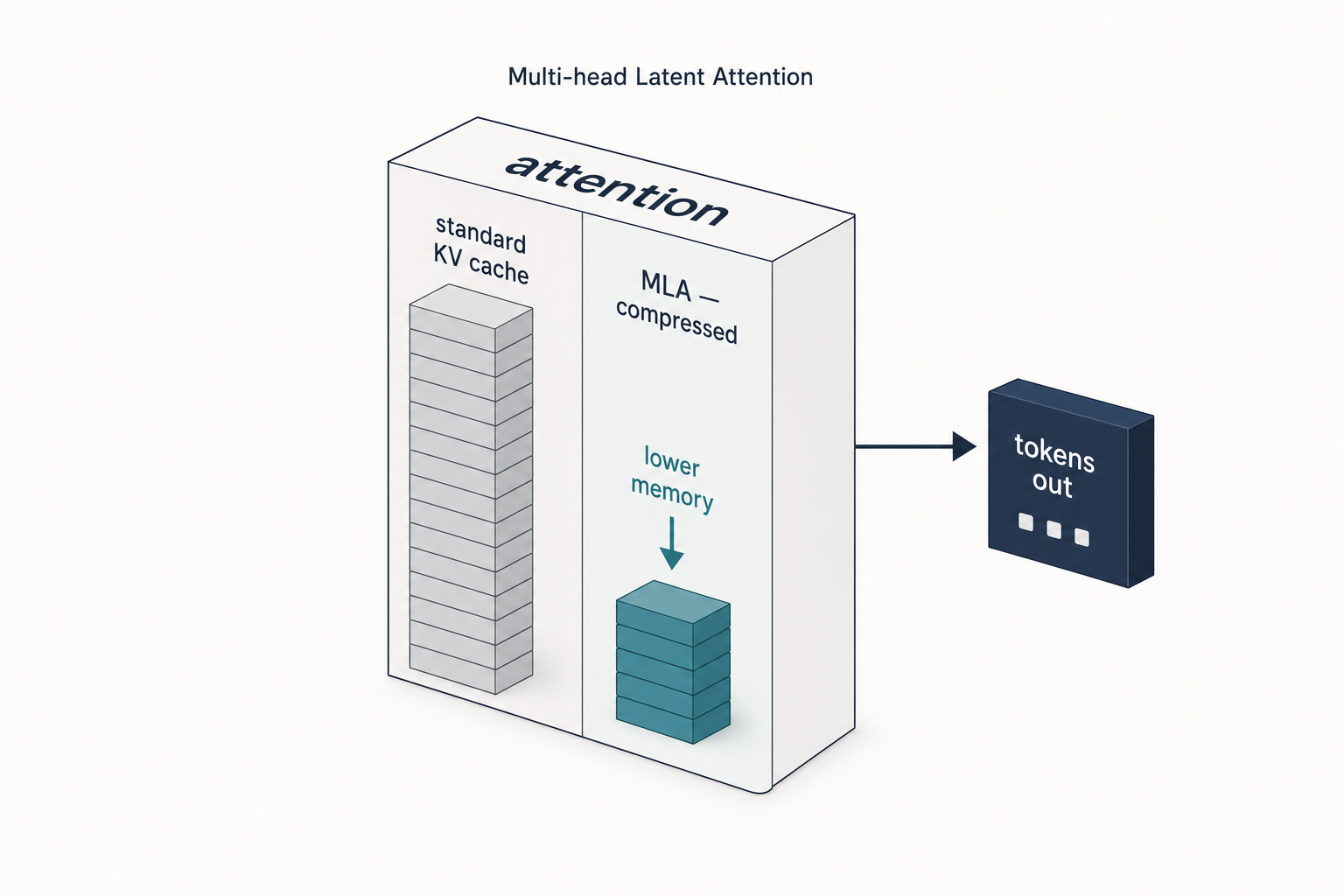

Multi-head Latent Attention (MLA)

Standard multi-head attention caches a separate key and value vector for every token, in every layer, for every head, so at long context lengths the KV cache dominates GPU memory. MLA, which DeepSeek V2, V3, and R1 all use, offers a memory-saving strategy that pairs particularly well with KV caching: it compresses the keys and values into a lower-dimensional latent vector and stores only that latent in the KV cache. At inference time, the latent is projected back up to full-size keys and values before attention is computed. This adds an extra matrix multiplication but sharply reduces memory usage.
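The compress-then-expand idea can be sketched in a few lines of NumPy. This is a simplified illustration under assumed toy dimensions, not DeepSeek’s implementation: it leaves out V2’s decoupled RoPE key and its query compression, and all weight names (`W_DKV`, `W_UK`, `W_UV`, `W_Q`) are hypothetical. Only the small latent is cached; full keys and values are rebuilt from it each time attention runs.

```python
import numpy as np

# Toy dimensions (assumptions for illustration; V2's real sizes are far larger).
D_MODEL, N_HEADS, D_HEAD, D_LATENT = 64, 4, 16, 8

rng = np.random.default_rng(0)
# Down-projection: hidden state -> compressed KV latent (the only thing cached).
W_DKV = rng.standard_normal((D_MODEL, D_LATENT)) / np.sqrt(D_MODEL)
# Up-projections: latent -> full per-head keys and values, applied at attention time.
W_UK = rng.standard_normal((D_LATENT, N_HEADS * D_HEAD)) / np.sqrt(D_LATENT)
W_UV = rng.standard_normal((D_LATENT, N_HEADS * D_HEAD)) / np.sqrt(D_LATENT)
W_Q = rng.standard_normal((D_MODEL, N_HEADS * D_HEAD)) / np.sqrt(D_MODEL)


def compress(h: np.ndarray) -> np.ndarray:
    """(seq, D_MODEL) hidden states -> (seq, D_LATENT) latents to store in the cache."""
    return h @ W_DKV


def attend(h_query: np.ndarray, kv_cache: np.ndarray) -> np.ndarray:
    """Attention for one new query token against all cached latents."""
    seq = kv_cache.shape[0]
    # The extra matmuls: rebuild full-size keys and values from the small cache.
    k = (kv_cache @ W_UK).reshape(seq, N_HEADS, D_HEAD)
    v = (kv_cache @ W_UV).reshape(seq, N_HEADS, D_HEAD)
    q = (h_query @ W_Q).reshape(N_HEADS, D_HEAD)
    scores = np.einsum("hd,shd->hs", q, k) / np.sqrt(D_HEAD)
    weights = np.exp(scores - scores.max(axis=1, keepdims=True))
    weights /= weights.sum(axis=1, keepdims=True)  # softmax over cached positions
    return np.einsum("hs,shd->hd", weights, v).reshape(N_HEADS * D_HEAD)


history = rng.standard_normal((10, D_MODEL))  # 10 earlier tokens
cache = compress(history)                     # stored: 10 x 8 floats
out = attend(rng.standard_normal(D_MODEL), cache)
# Cached floats under MLA vs. what standard MHA would store for the same tokens.
print(cache.size, 2 * history.shape[0] * N_HEADS * D_HEAD)  # prints: 80 1280
```

The trade-off described above is visible directly: `attend` does two extra matrix multiplications per call, in exchange for caching 80 floats instead of 1,280.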

This is the trick that made 128K context economical in 2024 and that still underpins long-context inference on the current generation. If you read the V3 or V4 technical reports, you will see them cite V2’s MLA as the foundation they built on.
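To put rough numbers on why this matters at 128K, here is the per-token cache arithmetic under the dimensions the V2 paper reports: 60 layers, 128 heads of 128 dims, a 512-dim KV latent, plus a 64-dim decoupled RoPE key that is cached alongside it. Treat those values as assumptions to check against the model’s `config.json`. The baseline is a hypothetical standard-MHA model with the same head layout, not DeepSeek 67B, so the ratio printed here is not the paper’s 93.3% figure.

```python
# Dimensions as reported for DeepSeek-V2 (assumptions: verify against config.json).
N_LAYERS = 60
N_HEADS = 128
D_HEAD = 128
D_LATENT = 512          # compressed KV dimension
D_ROPE = 64             # decoupled RoPE key dimension, cached with the latent
BYTES = 2               # BF16
CONTEXT = 128 * 1024    # assumed token count for a "128K" context


def kv_cache_gb(elements_per_token_per_layer: int) -> float:
    """KV-cache size in decimal GB for one sequence filling the whole context."""
    return elements_per_token_per_layer * N_LAYERS * CONTEXT * BYTES / 1e9


mha_elems = 2 * N_HEADS * D_HEAD   # full keys + values for every head
mla_elems = D_LATENT + D_ROPE      # one shared latent + one RoPE key

print(f"MHA-equivalent: {mha_elems} elems/token/layer, {kv_cache_gb(mha_elems):.1f} GB")
print(f"MLA:            {mla_elems} elems/token/layer, {kv_cache_gb(mla_elems):.1f} GB")
print(f"MLA keeps {mla_elems / mha_elems:.1%} of the MHA-equivalent cache")
```

Under these assumptions, one full 128K conversation needs on the order of 9 GB of MLA cache, versus roughly 515 GB for the MHA-equivalent layout.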

DeepSeekMoE

For FFNs, DeepSeek-V2 employs the DeepSeekMoE architecture, which has two key ideas: segmenting experts into finer granularity for higher expert specialization and more accurate knowledge acquisition, and isolating some shared experts for mitigating knowledge redundancy. Routing is constrained at training time to limit cross-device communication, which is part of why DeepSeek’s training-cost numbers came out so low.
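A toy version of this routing scheme helps make the two ideas concrete. The sketch below is my reading of the V2 paper, not DeepSeek’s code: shared experts process every token, fine-grained routed experts are picked by top-k softmax affinity, and device-limited routing first keeps only the few devices holding the highest-scoring experts. The gate weights are the raw softmax scores with no renormalisation over the top-k. All sizes are toy assumptions (V2 itself is reported to use 2 shared and 160 routed experts with 6 active per token), and the experts are simple ReLU stand-ins.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes (assumptions for illustration only).
D_MODEL, D_FF = 16, 8
N_SHARED, N_ROUTED, TOP_K = 2, 16, 4
N_DEVICES, MAX_DEVICES = 4, 2        # routed experts split evenly across devices
PER_DEVICE = N_ROUTED // N_DEVICES


def make_expert():
    """Tiny ReLU FFN standing in for a real expert FFN."""
    w1 = rng.standard_normal((D_MODEL, D_FF)) / np.sqrt(D_MODEL)
    w2 = rng.standard_normal((D_FF, D_MODEL)) / np.sqrt(D_FF)
    return lambda x: np.maximum(x @ w1, 0.0) @ w2


SHARED = [make_expert() for _ in range(N_SHARED)]
ROUTED = [make_expert() for _ in range(N_ROUTED)]
CENTROIDS = rng.standard_normal((N_ROUTED, D_MODEL))  # one router vector per routed expert


def moe_layer(x):
    """Route one token x of shape (D_MODEL,). Returns (output, chosen expert ids)."""
    logits = CENTROIDS @ x
    probs = np.exp(logits - logits.max())
    probs /= probs.sum()                   # softmax affinity over routed experts

    # Device-limited routing: keep the MAX_DEVICES devices whose best expert scores highest.
    best_per_device = probs.reshape(N_DEVICES, PER_DEVICE).max(axis=1)
    allowed = np.argsort(best_per_device)[-MAX_DEVICES:]
    mask = np.zeros(N_ROUTED, dtype=bool)
    for dev in allowed:
        mask[dev * PER_DEVICE:(dev + 1) * PER_DEVICE] = True

    # Top-k among experts on the allowed devices only.
    chosen = np.argsort(np.where(mask, probs, -np.inf))[-TOP_K:]

    out = x.copy()                         # residual path
    for expert in SHARED:                  # shared experts see every token
        out += expert(x)
    for i in chosen:                       # routed experts, weighted by affinity
        out += probs[i] * ROUTED[i](x)
    return out, chosen


y, ids = moe_layer(rng.standard_normal(D_MODEL))
print(sorted(ids.tolist()), y.shape)
```

Capping the number of devices per token is what bounds the cross-device traffic; the shared experts absorb common knowledge so the routed ones can specialise.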

Lineage to current models

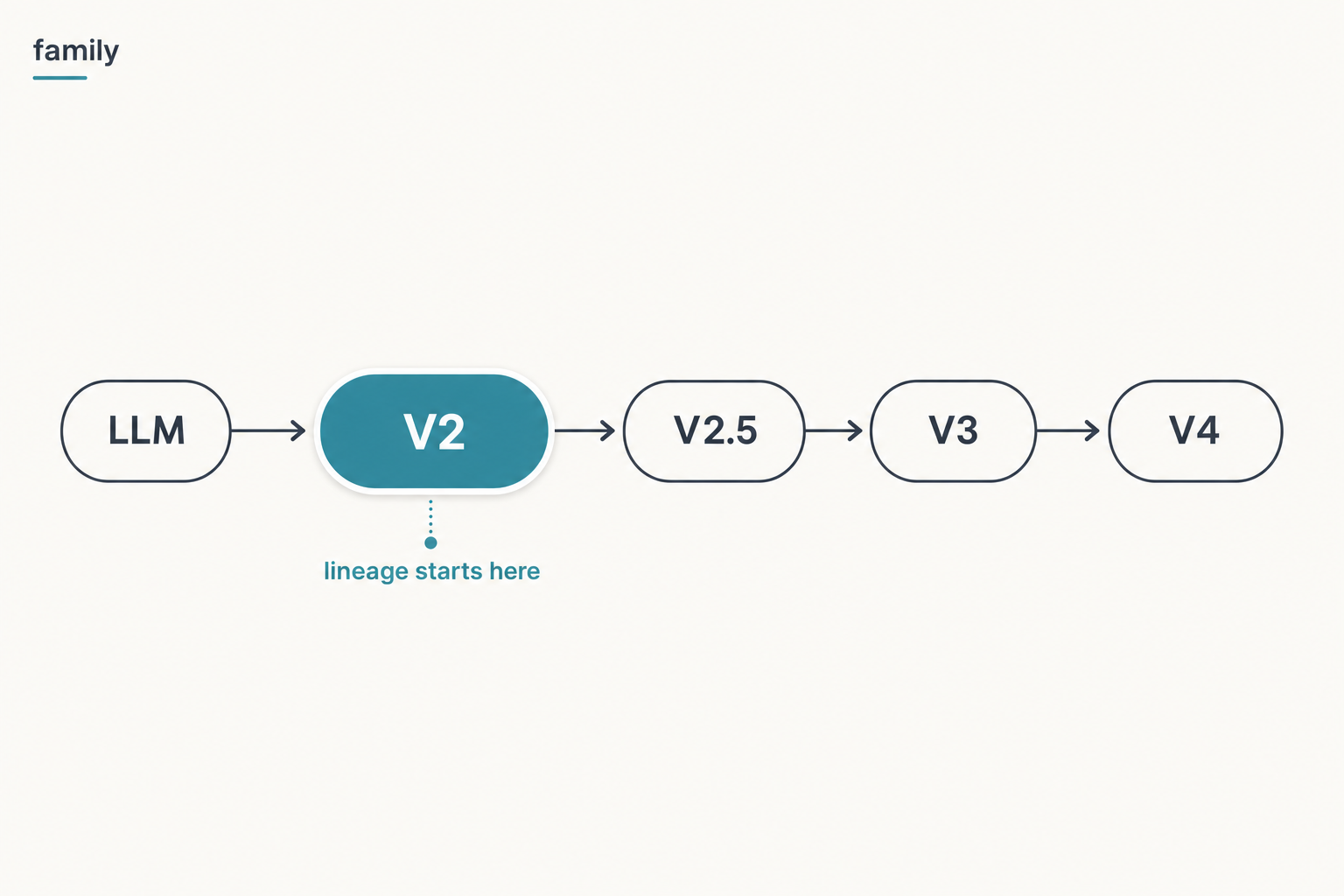

Everything DeepSeek has shipped since V2 inherits this architecture. DeepSeek-V3 is a strong Mixture-of-Experts language model with 671B total parameters with 37B activated for each token, and to achieve efficient inference and cost-effective training, DeepSeek-V3 adopts Multi-head Latent Attention (MLA) and DeepSeekMoE architectures, which were thoroughly validated in DeepSeek-V2. R1 used the V3 base; V3.1 added a hybrid thinking mode; V3.2 added DeepSeek Sparse Attention; V4 layered Compressed Sparse Attention on top. The branch starts at V2.

For the live picture of where this lineage now sits, see DeepSeek V4 and the DeepSeek models hub.

DeepSeek V2 benchmarks

The numbers below are taken from DeepSeek’s original V2 technical report (arXiv 2405.04434, May 2024). Where this section compares V2 with other models, it uses the versions DeepSeek cited at the time, primarily LLaMA 3 70B Instruct and Mixtral 8x22B. Do not read these as competitive against current-generation models; the right comparison for V4-era benchmarking is in the DeepSeek benchmarks 2026 roundup.

| Benchmark | DeepSeek-V2 Chat (RL) | What it measures |
|---|---|---|
| MMLU | 77.8 | Broad academic knowledge across 57 subjects |
| HumanEval (pass@1) | 81.1 | Python function generation from docstrings |
| GSM8K | 92.2 | Grade-school multi-step math |
| MATH | 53.9 | Competition-style mathematics |
| AlpacaEval 2.0 (LC win rate) | 38.9 | Open-ended instruction following |
| MT-Bench | 8.97 | Multi-turn chat quality (LLM-judged) |

DeepSeek-V2 Chat (RL) achieves 38.9 length-controlled win rate on AlpacaEval 2.0 and 8.97 overall score on MT-Bench, per the V2 paper. On MMLU, DeepSeek-V2 achieves top-ranking performance with only a small number of activated parameters — the headline framing was efficiency-per-parameter, not absolute capability.

One thing the report is careful about: DeepSeek-V2 Chat (SFT) shows similar performance in code and math related benchmarks to LLaMA3 70B Chat. LLaMA3 70B Chat exhibits better performance on MMLU and IFEval, while DeepSeek-V2 Chat (SFT) showcases stronger performance on Chinese tasks. V2 was not a sweep; it was a careful trade-off. DeepSeek-V2 Chat (RL) demonstrates further enhanced performance in both mathematical and coding tasks compared with DeepSeek-V2 Chat (SFT).

Strengths — where V2 specifically won

- Efficiency per active parameter. Activating only 21B of 236B total parameters at inference made V2 dramatically cheaper to serve than dense 70B models with similar quality.

- KV cache footprint. The 93.3% reduction versus the dense 67B baseline meant the same GPU memory could hold far more concurrent long-context conversations.

- Math and code (Chat RL). The RL-tuned chat variant pushed GSM8K above 92 and HumanEval above 81 — strong numbers for an open-weight model in mid-2024.

- Chinese-language tasks. The training mix gave V2 an edge on Chinese benchmarks like C-Eval and CMMLU that LLaMA 3 did not match.

- Open weights with permissive code license. The code repository was released under MIT, with model weights under DeepSeek’s separate Model License — usable commercially with conditions. Read the model card before redistributing.
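The efficiency-per-active-parameter point can be put in rough numbers with the common rule of thumb of about 2 FLOPs per active parameter per generated token. This is an approximation, not a measurement: it ignores attention FLOPs, memory bandwidth and batching, and the dense 70B comparison model is hypothetical. It also only covers compute; all 236B weights still have to sit in memory.

```python
def flops_per_token(active_params: float) -> float:
    """Rule-of-thumb forward-pass FLOPs per token: ~2 per active parameter."""
    return 2.0 * active_params


v2_moe = flops_per_token(21e9)      # DeepSeek-V2: 21B of 236B parameters active
dense_70b = flops_per_token(70e9)   # a dense 70B model activates every parameter

print(f"V2: {v2_moe / 1e9:.0f} GFLOPs/token, dense 70B: {dense_70b / 1e9:.0f} GFLOPs/token")
print(f"Dense 70B needs ~{dense_70b / v2_moe:.1f}x the compute per token")
print(f"V2 activates {21 / 236:.1%} of its weights per token")
```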

Weaknesses — where V2 fell short

- Absolute MMLU. V2’s 77.8 trailed both LLaMA 3 70B Instruct and the closed-source frontier (GPT-4-Turbo, Claude 3 Opus) of the time.

- Instruction following and IFEval. The V2 paper itself flags that LLaMA 3 70B Chat was stronger on IFEval. Tightly worded constraint-following was not V2’s strong suit until the 2024-09 V2.5 refresh.

- Hardware to self-host. 236B parameters in BF16 needs roughly 470 GB of weights memory before any KV cache. Most people who ran V2 locally used the smaller V2-Lite. For sizing, the DeepSeek hardware calculator is useful.

- No reasoning mode. V2 predates the thinking-mode era. There is no reasoning_content output and no step-by-step reasoning trace; for that you want R1 or any V4-tier model.

- Aging quality. A V2 chat exchange feels distinctly older than a V4-Flash exchange in 2026. That is the point of V4 existing.
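The 470 GB self-hosting figure is simple arithmetic: parameter count times bytes per parameter. The sketch below reproduces it and, for comparison, applies the same formula to the roughly 15.7B parameters quoted for V2-Lite. The FP8/INT8 and INT4 rows are hypothetical quantized deployments included only to show how the estimate scales; none of these numbers include KV cache, activations or runtime overhead.

```python
def weight_memory_gb(n_params: float, bytes_per_param: float) -> float:
    """Weights-only footprint in decimal GB (no KV cache, activations or overhead)."""
    return n_params * bytes_per_param / 1e9


MODELS = {"DeepSeek-V2": 236e9, "DeepSeek-V2-Lite": 15.7e9}
PRECISIONS = {"BF16": 2.0, "FP8/INT8": 1.0, "INT4": 0.5}  # bytes per parameter

for name, n in MODELS.items():
    row = ", ".join(f"{p}: {weight_memory_gb(n, b):.0f} GB" for p, b in PRECISIONS.items())
    print(f"{name}: {row}")
```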

How to access DeepSeek V2 today

V2 is no longer the default model anywhere. Here is where each access route stands today:

- Open weights on Hugging Face. The original deepseek-ai/DeepSeek-V2, DeepSeek-V2-Chat, and DeepSeek-V2-Lite repositories remain online for self-hosting and research. See the install DeepSeek locally tutorial for the practical setup.

- Legacy API IDs (transitional). The deepseek-chat and deepseek-reasoner model IDs in the API no longer route to V2. They currently route to deepseek-v4-flash and will be retired entirely on 2026-07-24 at 15:59 UTC. If you have an old client that hard-codes deepseek-chat, you are talking to V4-Flash, not V2.

- Self-hosting only for V2-specific work. If you specifically need V2 weights (for example, to reproduce a 2024 paper), Hugging Face is the only option.

Calling the current API (V4) instead

If you came here because an old tutorial pointed you at V2 and you just want a working chat call, use V4. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. Minimal Python with the OpenAI SDK:

```python
import os

from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    # Read the key from the environment; the variable name is just a convention.
    api_key=os.environ["DEEPSEEK_API_KEY"],
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise the V2 paper in 3 bullets."}],
    temperature=1.3,
    max_tokens=512,
)

print(resp.choices[0].message.content)
```

The API is stateless: your client must resend the conversation history with each request. The web chat and mobile app keep session history for you; the API does not. DeepSeek also exposes an Anthropic-compatible surface against the same base URL if you prefer that SDK. For a longer walkthrough, see the DeepSeek API getting started guide.
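Because the server keeps no state, a multi-turn conversation means your code owns the message list. A minimal sketch of that pattern follows; `chat_turn` is a hypothetical helper, not part of any SDK, and it expects an OpenAI-style client such as the `client` created in the snippet above.

```python
from typing import Any


def chat_turn(client: Any, history: list, user_text: str,
              model: str = "deepseek-v4-flash") -> str:
    """Send one turn with the full history, then record the reply locally."""
    history.append({"role": "user", "content": user_text})
    # Every request carries the whole conversation so far.
    resp = client.chat.completions.create(model=model, messages=history)
    reply = resp.choices[0].message.content
    # The server keeps nothing between calls, so the client stores the reply itself.
    history.append({"role": "assistant", "content": reply})
    return reply
```

Start with `history = [{"role": "system", "content": "..."}]` and pass the same list to every call; the second request then automatically includes the first question and answer. Long histories cost input tokens on every call, which is where cache-hit pricing helps.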

Thinking mode is a request parameter on either V4 model — set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} to get reasoning_content alongside the final content. V2 has no equivalent.
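A sketch of that request, using exactly the parameters named above (`reasoning_effort` and the `thinking` object sent via `extra_body`). Treat the parameter names as assumptions to confirm against the current DeepSeek API documentation; `ask_with_thinking` is a hypothetical helper that takes an OpenAI-style client like the one created earlier.

```python
from typing import Any, Optional, Tuple


def ask_with_thinking(client: Any, prompt: str,
                      model: str = "deepseek-v4-flash") -> Tuple[Optional[str], str]:
    """Request thinking mode and return (reasoning trace, final answer)."""
    resp = client.chat.completions.create(
        model=model,
        messages=[{"role": "user", "content": prompt}],
        reasoning_effort="high",
        # Non-standard fields go through extra_body in the OpenAI SDK.
        extra_body={"thinking": {"type": "enabled"}},
    )
    msg = resp.choices[0].message
    # reasoning_content is a DeepSeek-specific field, so read it defensively.
    return getattr(msg, "reasoning_content", None), msg.content
```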

Pricing snapshot — what V2 cost, and what current models cost

V2’s hosted pricing is no longer published; the deepseek-chat alias has migrated through V2 → V2.5 → V3 → V3.1 → V3.2 → V4-Flash. As of April 2026, current rates per 1M tokens are:

| Model | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 (promo, list $0.0145) | $0.435 (promo, list $1.74) | $0.87 (promo, list $3.48) |

Always verify these on the DeepSeek API pricing page before committing to a budget — pricing during a Preview window can change. Off-peak discounts ended on 2025-09-05 and have not returned.

Worked example: 1M calls on V4-Flash

For a typical chatbot — 2,000-token system prompt cached across calls, 200-token user message uncached, 300-token reply, repeated 1,000,000 times — the math on V4-Flash is:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on V4-Pro comes to $355.25 at the 75% promo rate (through 2026-05-31) and $1,421.00 at list price: about three times Flash during the promo and about twelve times at list. That is the trade-off when you reach for the frontier tier.
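The worked example above is easy to re-run for your own traffic profile. This small calculator uses the per-1M-token rates from the April 2026 table in this article (re-check them on the official pricing page before budgeting); `workload_cost` is a hypothetical helper name.

```python
# Per-1M-token rates (USD) from this article's April 2026 table.
RATES = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14, "out": 0.28},
    "deepseek-v4-pro (promo)": {"hit": 0.003625, "miss": 0.435, "out": 0.87},
    "deepseek-v4-pro (list)": {"hit": 0.0145, "miss": 1.74, "out": 3.48},
}


def workload_cost(rates: dict, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = cached_in * rates["hit"] + uncached_in * rates["miss"] + out * rates["out"]
    return calls * per_call / 1_000_000  # rates are quoted per 1M tokens


for name, r in RATES.items():
    print(f"{name}: ${workload_cost(r, 1_000_000, 2_000, 200, 300):,.2f}")
```

Changing the cached-prompt length is usually the biggest lever: on Flash, cached input is 50 times cheaper than uncached input.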

Best use cases for V2 (in 2026)

Honestly, very few. V2 is now a research artefact more than a working tool. The cases where it still makes sense:

- Reproducing the 2024 paper for academic work — use the original Hugging Face checkpoint.

- Studying MLA implementations — V2’s reference code is the cleanest place to read the original MLA design.

- Air-gapped Chinese-language deployment on hardware that can host 236B but not 671B — V2 still beats most ~70B dense models on Chinese tasks.

For everything else — coding, writing, agents — go to V4-Flash. See DeepSeek for coding and DeepSeek for developers for current-generation workflows.

Comparable alternatives

If you are comparing V2 historically, the obvious peers are LLaMA 3 70B and Mixtral 8x22B. If you are comparing the V2 line to a current alternative, the more useful pages are:

- DeepSeek V2.5 — the September 2024 refresh that fixed V2’s instruction-following gap

- DeepSeek V3 — the 671B/37B successor that replaced V2 in the API on December 26, 2024

- DeepSeek vs Llama — the open-weight comparison most V2 readers actually want

- Open-source AI like DeepSeek — broader landscape

Verdict

DeepSeek V2 is the model that taught the rest of the lab how to build cheap, fast, long-context MoE. MLA and DeepSeekMoE both originate here, and both still drive every model in the current line-up. As a working tool in 2026 it is obsolete; as an architectural reference it is essential reading. If you are choosing a model to use today, pick V4-Flash. If you are trying to understand why V4-Flash costs $0.14 per million cache-miss input tokens, read the V2 technical report first.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is DeepSeek V2 and when was it released?

DeepSeek V2 is an open-weight Mixture-of-Experts language model released by DeepSeek-AI on May 7, 2024. It has 236B total parameters with 21B activated per token and a 128K context window. It introduced two architectural ideas — Multi-head Latent Attention and DeepSeekMoE — that every later DeepSeek model still uses. For the wider model family, see the DeepSeek models hub.

How does DeepSeek V2 compare to V3 and V4?

V2 is 236B/21B with 128K context. V3 scaled to 671B/37B and added auxiliary-loss-free load balancing and multi-token prediction. V4 ships as two tiers — V4-Pro (1.6T/49B) and V4-Flash (284B/13B) — both with 1M-token context and selectable thinking modes. V2 remains the architectural ancestor; V4 is what you should actually deploy. Compare directly on the DeepSeek V4 page.

Can I still use DeepSeek V2 through the API?

Not directly. The legacy deepseek-chat and deepseek-reasoner model IDs no longer route to V2 — they currently route to deepseek-v4-flash and will be fully retired on 2026-07-24 at 15:59 UTC. To run V2 specifically, download the open weights from Hugging Face and self-host. For current API options see the DeepSeek API documentation.

Is DeepSeek V2 open source and free to use commercially?

The V2 code is released under MIT, while the model weights are released under DeepSeek’s separate Model License, which permits commercial use with conditions. This is different from later releases like V3.2, V3.1, R1, V4-Flash and V4-Pro, which publish both code and weights under MIT. Always check the specific Hugging Face repository before redistribution. More context: is DeepSeek open source.

What hardware do I need to run DeepSeek V2 locally?

The full 236B-parameter V2 model in BF16 needs roughly 470 GB of memory for the weights alone, before KV cache, which typically means multiple H100/A100 GPUs or equivalent. Most local users instead run DeepSeek-V2-Lite, whose roughly 16B parameters take about 31 GB in BF16 and fit on a single 40 GB-class or larger workstation or data-center GPU. Estimate exact requirements with the DeepSeek hardware calculator, then follow the install DeepSeek locally tutorial.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek-V2 model card. Original V2 base weights. Last checked: April 30, 2026

- Model card: DeepSeek-V2-Chat model card. V2 Chat (RL) checkpoint and Model License. Last checked: April 30, 2026

- Model card: DeepSeek-V2-Lite model card. Lite variant for self-hosting. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Technical report: DeepSeek V2 technical report (arXiv 2405.04434). MLA, DeepSeekMoE, MMLU/MATH/HumanEval numbers, training cost reductions. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.