What Is DeepSeek? The Independent Guide for 2026

If you have searched “what is DeepSeek” in the last twelve months, you have probably hit a wall of breathless headlines and very little plain-English explanation. This guide fixes that. DeepSeek is a Hangzhou-based AI lab that builds open-weight large language models, distributes them on Hugging Face, and runs a low-cost API that mirrors the OpenAI and Anthropic wire formats. Its current flagship, the DeepSeek V4 Preview, was released on April 24, 2026, and ships as two Mixture-of-Experts models with a one-million-token context window. By the end of this article you will know what the company makes, how the models differ, what you pay per million tokens, and where DeepSeek genuinely competes — and where it does not.

DeepSeek in one paragraph

DeepSeek is a Chinese AI research lab, founded in 2023 and backed by the quantitative hedge fund High-Flyer, that develops open-weight foundation models. It gained attention in late 2024 with its free, open-source V3 model, which it said was trained with less powerful chips and at a fraction of the cost of models built by the likes of OpenAI and Google. Weeks later, in January 2025, it released a reasoning model, R1, that matched or outperformed many of the world’s leading LLMs on published benchmarks. The company publishes its weights under permissive licences, ships detailed technical reports, and runs a developer API at https://api.deepseek.com that is compatible with both the OpenAI Chat Completions and Anthropic SDK formats. If you want the short answer to “what is DeepSeek”: it is a frontier-adjacent model maker that competes primarily on price, openness and long-context efficiency rather than on raw benchmark leadership.

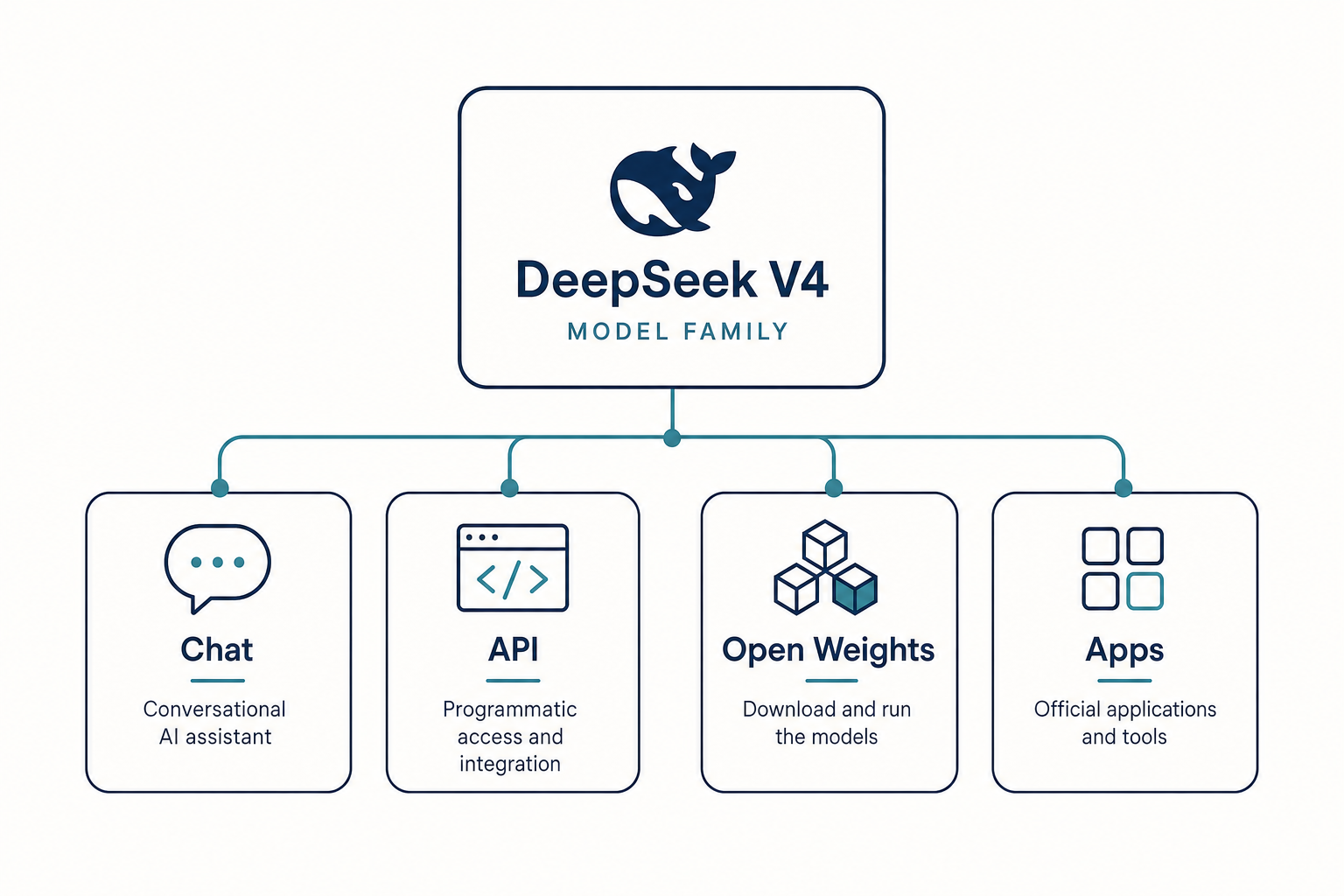

The DeepSeek V4 family — the current generation

On April 24, 2026 DeepSeek released the V4 Preview. The Chinese startup unveiled the V4 Flash and V4 Pro series, touting top-tier performance in coding benchmarks and big advancements in reasoning and agentic tasks. Unlike previous releases, V4 is shipped as a two-tier family that shares a single feature set, with thinking mode controlled by a request parameter rather than a separate model ID.

| Model | Total params | Active params | Context | Tier | Licence |
|---|---|---|---|---|---|
| deepseek-v4-pro | 1.6T | 49B | 1,000,000 tokens | Frontier | MIT |
| deepseek-v4-flash | 284B | 13B | 1,000,000 tokens | Cost-efficient | MIT |

DeepSeek-V4-Pro has 1.6T parameters (49B activated) and DeepSeek-V4-Flash has 284B parameters (13B activated). Both support a context length of one million tokens and output up to 384,000 tokens, and both are open-weight models downloadable from the official Hugging Face repository. For deeper architectural detail, see our breakdown of DeepSeek V4, the per-tier write-ups for DeepSeek V4-Pro and DeepSeek V4-Flash, or browse all DeepSeek models on the DeepSeek models hub.

Why two models instead of one

The split is pragmatic. V4-Flash handles the bulk of chat, summarisation, RAG and standard coding work at a low per-token price; V4-Pro is reserved for frontier-tier agentic coding and reasoning where the benchmark lift justifies roughly 12 times the output cost at list price (about three times at the promotional rate). At DeepSeek’s maximum reasoning-effort setting (“thinking-max”), V4-Pro posts leading open-weight scores on published coding and reasoning benchmarks as of April 2026, including 80.6% on SWE-Bench Verified, though it still trails the current closed-frontier GPT-5 and Claude 4 families on the hardest agentic tasks. V4-Flash at the same thinking-max setting closes much of the reasoning gap when given a longer thinking budget, with the usual caveat that a 13B-active model will sit behind a 49B-active one on the most complex agent workflows.

Where DeepSeek came from — a short lineage

- 2023 — DeepSeek founded in Hangzhou by Liang Wenfeng, spun out of the High-Flyer quant fund.

- Late 2024 — DeepSeek V3 released; the technical report claims a roughly $5.6M training compute cost.

- January 2025 — DeepSeek R1 reasoning model released. Marc Andreessen called it “AI’s Sputnik moment” at the time. R1’s training cost was later disclosed as $294,000 (Reuters, September 2025).

- August–December 2025 — V3.1 and V3.2 introduce DeepThink toggling and refined pricing.

- April 24, 2026 — V4 Preview ships, releasing on “the same day as GPT-5.5”.

If you want the long version, our DeepSeek history article walks through every major release with citations.

What DeepSeek actually does well

Coding and competitive programming

This is the area where V4-Pro genuinely competes with — and on some benchmarks beats — the closed frontier models. On coding benchmarks, DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%), LiveCodeBench (93.5% vs 88.8%), and Codeforces rating (3206 vs no reported score). Claude Opus 4.6 holds a marginal lead on SWE-bench Verified (80.8% vs 80.6%), and a meaningful lead on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%). The Codeforces rating in particular is striking — DeepSeek reports this places the model roughly 23rd among human contest participants.

Long-context efficiency

V4 is built around the assumption that million-token prompts should be cheap, not just possible. DeepSeek describes a hybrid attention mechanism that combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to improve long-context efficiency. By DeepSeek’s figures, in the 1M-token context setting DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache of DeepSeek-V3.2. In practice this means feeding an entire codebase or a very long contract into a single prompt is no longer reckless on cost.

Open weights

Every recent release (V4-Pro, V4-Flash, V3.2, V3.1, R1) ships both code and model weights under MIT. Some older releases (V3 base, Coder-V2, VL2) split MIT-licensed code from a separate DeepSeek Model License for the weights, so check the specific repo if licensing matters to you. For a deeper read, see is DeepSeek open source.

Where DeepSeek falls short

Honesty matters here. V4-Pro is not the universal winner that some launch coverage implied.

- Factual recall and world knowledge: a SimpleQA-Verified score of 57.9% versus Gemini’s 75.6% reveals a meaningful gap in factual recall. If your use case depends on accurate real-world knowledge rather than code generation, Gemini holds a clear edge.

- Frontier closed models still lead on some axes: DeepSeek itself states that the model falls only “marginally short” of OpenAI’s GPT-5.4 and Gemini 3.1-Pro, “suggesting a developmental trajectory that trails current-generation frontier models by approximately 3 to 6 months”.

- Regulatory friction: DeepSeek apps and services have been restricted in several jurisdictions; see DeepSeek availability by country.

- Privacy: conversations on the official chat are processed in China. Treat anything sensitive accordingly; DeepSeek privacy covers this in detail.

How you actually use DeepSeek

There are three surfaces, and they behave differently.

- Web chat at chat.deepseek.com: keeps your conversation history server-side; no setup beyond an account. Browse DeepSeek chat for a walkthrough.

- Mobile apps on iOS and Android: same model, same DeepThink toggle, same conversation memory.

- Developer API at https://api.deepseek.com: stateless, billed per token, OpenAI- and Anthropic-SDK compatible.

The crucial distinction for developers: chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, and the API is stateless. The server does not remember previous turns; your client must resend the full conversation history with every request. This is the opposite of how the web chat behaves.
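The stateless behaviour is easiest to see in code. The sketch below is illustrative only: `Conversation`, `ask` and `fake_send` are hypothetical names, not part of any DeepSeek or OpenAI SDK, and `send` stands in for whatever function actually posts the message list to POST /chat/completions.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Client-side history for a stateless chat API (hypothetical helper)."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: List[Message] = [
            {"role": "system", "content": system_prompt}
        ]

    def ask(self, user_text: str, send: Callable[[List[Message]], str]) -> str:
        # Append the new user turn, then send the entire history.
        self.messages.append({"role": "user", "content": user_text})
        reply = send(list(self.messages))
        # Store the assistant turn so the next request includes it too.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages: List[Message]) -> str:
    # Stand-in for a real API call; reports how many messages it received.
    return f"received {len(messages)} messages"


chat = Conversation("You are a helpful assistant.")
first = chat.ask("Plan the migration.", fake_send)
second = chat.ask("Now shorten it.", fake_send)
print(first)   # received 2 messages
print(second)  # received 4 messages
```

The second request carries four messages because the client, not the server, is responsible for replaying the earlier turns. In a real integration the growing history is also what you are billed for on every call.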

A minimal Python call

Below is a minimal Python example using the OpenAI SDK against DeepSeek V4-Pro in thinking mode:

    from openai import OpenAI

    # Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_DEEPSEEK_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[{"role": "user", "content": "Plan the migration."}],
        reasoning_effort="high",
        # Thinking mode is a request parameter, not a separate model ID.
        extra_body={"thinking": {"type": "enabled"}},
    )

    print(resp.choices[0].message.content)

When thinking mode is enabled, the response returns reasoning_content alongside the final content. Useful parameters worth knowing: temperature (DeepSeek recommends 0.0 for code, 1.0 for data analysis, 1.3 for general chat, 1.5 for creative writing), top_p, max_tokens (up to 384,000) and reasoning_effort ("high" or "max"). For a fuller tour see the DeepSeek API documentation and the DeepSeek API getting started tutorial.
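As a rough illustration of the parameters above, the sketch below keeps the recommended temperatures in one place and separates the reasoning trace from the final answer. The task keys and the helper names `temperature_for` and `split_reply` are hypothetical; the `reasoning_content` field name is taken from the description above, and the demo object merely imitates a response message rather than calling the API.

```python
from types import SimpleNamespace
from typing import Any, Optional, Tuple

# Temperatures as listed above; the task keys are hypothetical labels.
RECOMMENDED_TEMPERATURE = {
    "code": 0.0,
    "data_analysis": 1.0,
    "general_chat": 1.3,
    "creative_writing": 1.5,
}


def temperature_for(task: str) -> float:
    """Look up the recommended temperature; unknown tasks raise KeyError."""
    return RECOMMENDED_TEMPERATURE[task]


def split_reply(message: Any) -> Tuple[Optional[str], str]:
    """Return (reasoning, answer) from a chat message object.

    reasoning is None when the message carries no reasoning_content,
    for example when thinking mode is off.
    """
    reasoning = getattr(message, "reasoning_content", None)
    return reasoning, message.content


# Imitation of a thinking-mode message, for demonstration only.
demo = SimpleNamespace(
    reasoning_content="Compare both options first.",
    content="Use option B.",
)
reasoning, answer = split_reply(demo)
print(temperature_for("code"), answer)
```

With a real response you would pass `resp.choices[0].message` to `split_reply`; logging the reasoning separately from the answer keeps it out of anything shown to end users.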

Legacy model IDs

If you maintain an older integration, the legacy IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash in non-thinking and thinking mode respectively. They will be retired on 2026-07-24 at 15:59 UTC; after that date, requests with those IDs will fail. Migration is a one-line model= swap — base_url does not change.
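For teams with many call sites, the swap can be centralised. The following is a minimal sketch, assuming the routing described above; `LEGACY_MODELS` and `migrate_request` are hypothetical names. To preserve the behaviour of deepseek-reasoner it also sets the thinking field used in the Python example, and whether that field is strictly required (or whether non-thinking is the default when it is omitted) is an assumption to verify against the official documentation.

```python
from typing import Any, Dict, Tuple

# Legacy ID -> (current ID, thinking mode), per the routing described above.
LEGACY_MODELS: Dict[str, Tuple[str, bool]] = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_request(kwargs: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of chat-completion kwargs with a legacy model ID replaced."""
    model = kwargs.get("model")
    if model not in LEGACY_MODELS:
        return dict(kwargs)  # already on a current ID; leave untouched

    new_model, thinking = LEGACY_MODELS[model]
    migrated = dict(kwargs, model=new_model)
    if thinking:
        # Assumption: thinking must be requested explicitly on the new ID.
        extra = dict(migrated.get("extra_body") or {})
        extra["thinking"] = {"type": "enabled"}
        migrated["extra_body"] = extra
    return migrated


print(migrate_request({"model": "deepseek-reasoner"}))
```

The function copies its input rather than mutating it, so it can wrap existing call sites without side effects.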

Pricing — what you actually pay

Pricing as of April 2026, taken directly from DeepSeek’s public pricing page. Verify on the DeepSeek API pricing reference before committing to production.

| Model | Input (cache hit) /M | Input (cache miss) /M | Output /M |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

The off-peak / night-time discount that DeepSeek ran during 2025 ended on September 5, 2025 and has not returned. Don’t budget around it.

Worked example — V4-Flash, one million calls

1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message and a 300-token reply:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens, at $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens, at $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens, at $0.28/M = $84.00

- Total: $117.60

The same workload at V4-Pro list rates costs $1,421.00, roughly 12× more. For most production chat and RAG, Flash is the default; reach for Pro when an agentic coding task or a hard reasoning problem genuinely justifies the spend. The DeepSeek pricing calculator will run the numbers for any token mix you feed it.
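The arithmetic in the worked example can be reproduced in a few lines. This is a sketch under stated assumptions: the rates are copied from the pricing table above (V4-Pro at list price) and will go stale, and `RATES` and `workload_cost` are hypothetical names. `Decimal` is used so the totals are exact to the cent.

```python
from decimal import Decimal

# USD per million tokens, copied from the pricing table above (April 2026).
RATES = {
    "deepseek-v4-flash": {
        "hit": Decimal("0.0028"),
        "miss": Decimal("0.14"),
        "out": Decimal("0.28"),
    },
    "deepseek-v4-pro": {  # list prices, not the promotional ones
        "hit": Decimal("0.0145"),
        "miss": Decimal("1.74"),
        "out": Decimal("3.48"),
    },
}


def workload_cost(model: str, calls: int, cached_in: int,
                  uncached_in: int, out_tokens: int) -> Decimal:
    """Total USD cost for `calls` identical requests, rounded to cents."""
    r = RATES[model]
    per_call = (cached_in * r["hit"]
                + uncached_in * r["miss"]
                + out_tokens * r["out"])
    # Rates are per million tokens, so divide once at the end.
    return (per_call * calls / Decimal(1_000_000)).quantize(Decimal("0.01"))


flash = workload_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
pro = workload_cost("deepseek-v4-pro", 1_000_000, 2_000, 200, 300)
print(flash)  # 117.60
print(pro)    # 1421.00
```

Swapping in the promotional V4-Pro rates, or a different token mix, only requires changing the table entries or the call arguments.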

How DeepSeek compares to the alternatives

In short, DeepSeek competes on price and openness. Closed frontier models from OpenAI, Anthropic and Google still hold edges on factual recall, certain agentic tasks and ecosystem polish, while DeepSeek leads or matches on coding benchmarks at a substantially lower per-token cost. The headline takeaway: V4-Pro is genuinely competitive with GPT-5.4 and Claude Opus 4.6 across most categories, and beats both on several coding benchmarks. It trails Gemini-3.1-Pro on general knowledge and HLE, and trails GPT-5.4 on a handful of agentic tasks. For an open-weight model available via API at a fraction of closed-source prices, this is a meaningful achievement.

Detailed head-to-heads live in DeepSeek vs ChatGPT and DeepSeek vs Claude; for a wider survey, see DeepSeek alternatives.

Who should use DeepSeek

- Developers running high-volume agentic coding workflows where output token cost dominates the bill.

- Researchers and engineers who need open weights for fine-tuning, on-prem deployment or audit.

- Teams that already speak the OpenAI SDK and want a near-drop-in second provider.

- Cost-sensitive production users shipping chat, summarisation or RAG at scale.

Who probably should not: anyone with a hard requirement that data not be processed in China, anyone in a jurisdiction that has restricted DeepSeek, and anyone whose workload is dominated by factual recall against a current world-knowledge benchmark.

For role-specific guidance, see DeepSeek for developers or browse all DeepSeek beginner guides for entry points.

The honest verdict

DeepSeek is not the best AI lab in the world by every measure, and its launch materials sometimes overstate the case. What it is, in April 2026, is the most credible open-weight challenger to the closed frontier — three to six months behind on the hardest benchmarks, ahead on several coding ones, and priced low enough that switching cost rarely beats trying it. That combination is what the question “what is DeepSeek” really points at: a lab that has changed the price floor for serious AI, while keeping the weights downloadable.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek free to use?

The web chat at chat.deepseek.com and the official mobile apps are free to use after creating an account; DeepSeek does not publicly document a fixed daily message cap as of April 2026. The API is paid per token, with V4-Flash listing $0.14 input (cache miss) and $0.28 output per million tokens. DeepSeek may offer a granted balance — a small promotional credit that can expire; check the billing console. See is DeepSeek free.

What is the difference between DeepSeek V4-Pro and V4-Flash?

V4-Pro has 1.6 trillion total parameters with 49 billion active, while V4-Flash has 284 billion total with 13 billion active. Both share the 1M-token context window and the same feature set, including thinking mode. Pro leads on the hardest benchmarks; Flash matches it within roughly 1–3 points on most coding tasks at roughly one-twelfth of Pro’s list price for uncached input and output. Compare directly on the DeepSeek V4-Pro and DeepSeek V4-Flash pages.

How is DeepSeek different from ChatGPT?

ChatGPT is a broader product ecosystem spanning consumer, business and enterprise plans with apps, agents and integrations. DeepSeek is more model-and-API-centric, with a minimal chat UI that exists mainly to let people try the underlying models. DeepSeek also publishes its model weights under MIT for download; OpenAI does not for its frontier closed models. The detailed head-to-head lives at DeepSeek vs ChatGPT.

Can I run DeepSeek on my own machine?

Yes for the smaller distilled and Flash variants, with caveats. V4-Flash (284B total / 13B active per token) takes roughly 158 GB on disk and benefits from quantisation to fit consumer hardware; V4-Pro (1.6T total / 49B active per token) at roughly 862 GB on disk realistically needs serious GPU memory. Distilled R1 variants run locally on more modest setups. Walk through the options in install DeepSeek locally and running DeepSeek on Ollama.

Is DeepSeek safe and private to use?

The web chat and API process conversations on infrastructure subject to Chinese law, which means law-enforcement access under legal process is possible. Multiple jurisdictions including Italy and several US states have introduced restrictions on the official app for government devices. Open-weight self-hosting sidesteps the data-residency question entirely. For a fuller treatment see DeepSeek privacy and is DeepSeek safe.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek-V4-Pro on Hugging Face. Official V4-Pro repository for weights and license confirmation. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Context sources

- News: Reuters, DeepSeek R1 training cost disclosure (Sep 2025). $294,000 R1 training-cost figure cited in the timeline. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.