What DeepSeek MoE Is and Why It Powers V4-Pro and V4-Flash

If you have read about DeepSeek V4, R1, or V3 and seen the phrase “1.6T total / 49B active” without quite understanding what it means, you are in the right place. DeepSeek MoE is the mixture-of-experts architecture that lets the lab ship trillion-parameter models that only spend a fraction of those parameters on any given token. It started as a 2024 research paper, became the backbone of V2, V3, R1, and V3.2, and now defines the V4 generation released on April 24, 2026.

This guide walks through what DeepSeek MoE actually is, the two ideas that make it work, the lineage from the original DeepSeekMoE 16B to V4-Pro and V4-Flash, and how to use it through the API today.

What is DeepSeek MoE?

DeepSeek MoE is a mixture-of-experts architecture introduced in a January 2024 paper by Damai Dai and colleagues at DeepSeek. Mixture-of-experts is a popular way to grow a language model's parameter count without growing its per-token compute. However, conventional designs such as GShard, which activate the top-K of N experts, struggle to make each expert learn distinct, focused knowledge, so experts end up overlapping. DeepSeek's answer combines two ideas, fine-grained expert segmentation and shared expert isolation, to push each expert toward a narrower, less redundant slice of knowledge.

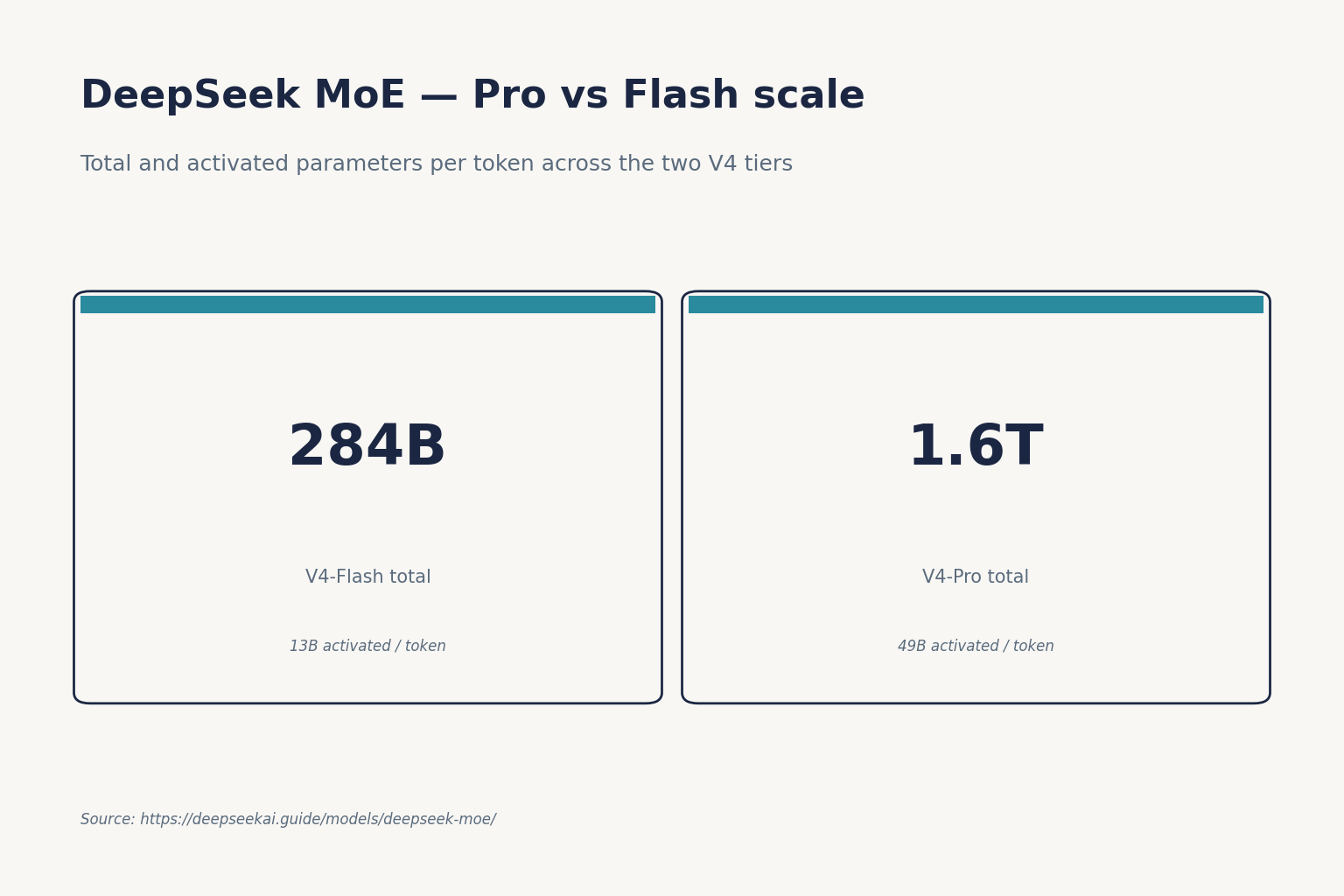

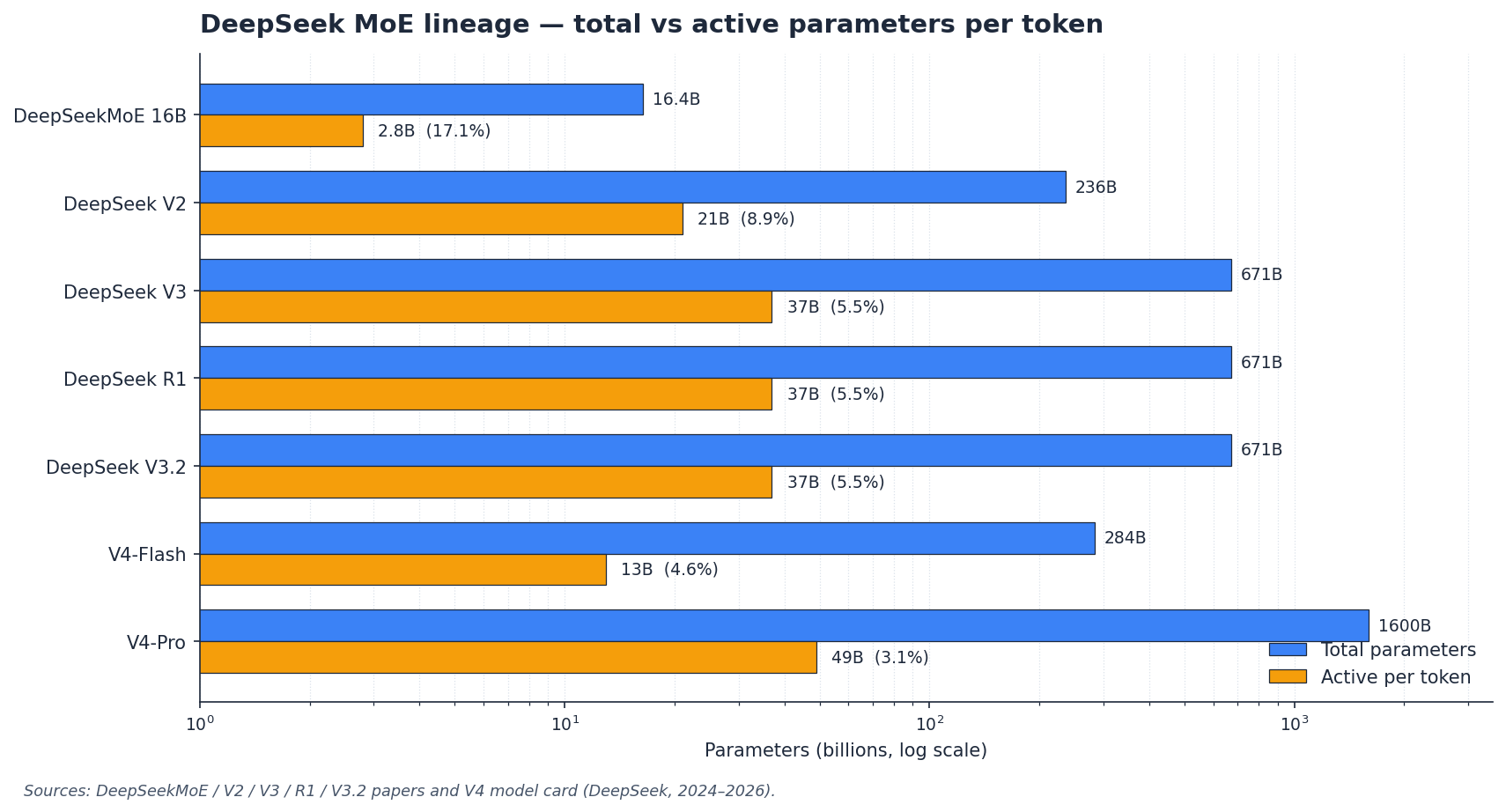

The architecture matters because every recent DeepSeek release uses it. The current generation, DeepSeek V4, ships as two open-weight MoE models: deepseek-v4-pro at 1.6 trillion total parameters with 49 billion activated per token, and deepseek-v4-flash at 284 billion total with 13 billion active. Earlier MoE releases include V2, V3, V3.1, V3.2, R1, and the original DeepSeekMoE 16B research model.

How DeepSeek MoE works: two ideas

Fine-grained expert segmentation

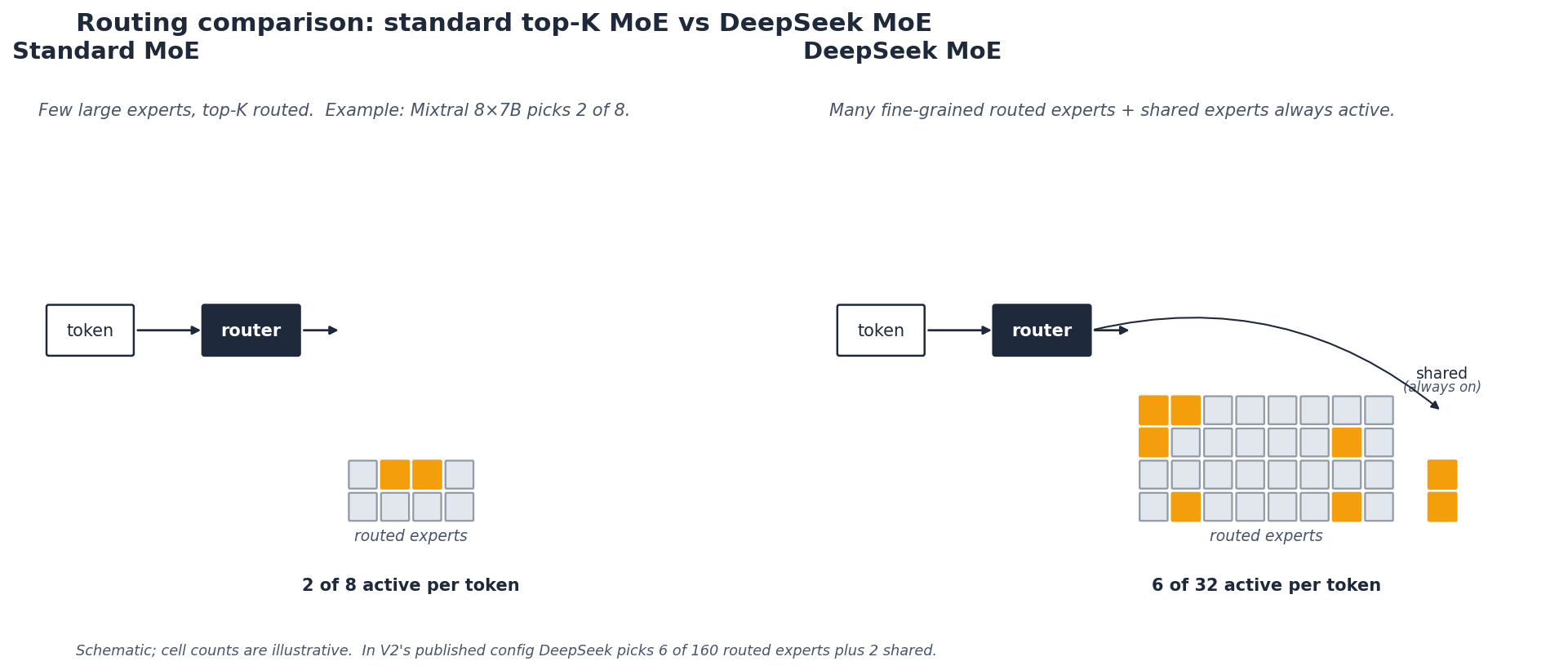

In a standard MoE layer, the feed-forward network is split into a small number of large experts (Mixtral 8x7B uses eight). A router picks the top two and combines their outputs. DeepSeek instead segments each expert into finer pieces by splitting the FFN intermediate hidden dimension, which keeps the total parameter count constant. To keep per-token compute constant as well, it activates proportionally more of these smaller experts. The router can then compose far more combinations, so diverse knowledge can be decomposed more finely and learned more precisely by different experts.
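To make the constant-cost claim concrete, here is a minimal sketch. It assumes a simplified two-matrix FFN expert (up-projection and down-projection, no gating matrix or biases) and uses illustrative dimensions of our own choosing. The 16-experts/top-2 versus 64-experts/top-8 comparison mirrors the example in the DeepSeekMoE paper. Splitting each expert m ways and activating m times as many keeps total and active parameters fixed, while the number of possible expert combinations grows enormously.

```python
from math import comb


def ffn_params(d_model: int, d_ff: int) -> int:
    """Parameters in one simplified expert: an up- and a down-projection, no biases."""
    return 2 * d_model * d_ff


def segment(n_experts: int, top_k: int, d_ff: int, m: int) -> tuple[int, int, int]:
    """Fine-grained segmentation: m times more experts, each 1/m as wide, m times as many active."""
    if d_ff % m:
        raise ValueError("d_ff must be divisible by m")
    return n_experts * m, top_k * m, d_ff // m


def describe(n_experts: int, top_k: int, d_model: int, d_ff: int) -> dict:
    per_expert = ffn_params(d_model, d_ff)
    return {
        "total_params": n_experts * per_expert,
        "active_params": top_k * per_expert,
        "combinations": comb(n_experts, top_k),  # distinct expert sets the router can pick
    }


# Illustrative sizes (hypothetical, not taken from any DeepSeek config).
D_MODEL, D_FF = 1024, 4096
coarse = describe(16, 2, D_MODEL, D_FF)
n, k, d_ff_fine = segment(16, 2, D_FF, m=4)
fine = describe(n, k, D_MODEL, d_ff_fine)

print(coarse)
print(fine)
```

Both layouts report the same total and active parameter counts. Only the routing flexibility changes: 120 possible expert pairs versus 4,426,165,368 possible sets of eight.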

The practical effect: where Mixtral chooses 2 of 8 large experts, each V2 MoE layer chooses 6 of 160 smaller routed experts, on top of 2 shared experts that always run. Each expert in the layer has an intermediate hidden dimension of 1536. More, smaller experts give the router a finer instrument. In the paper's formulation, splitting every expert into m narrower ones and activating m times as many keeps both the parameter count and the per-token FLOPs unchanged.
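Using the V2 numbers quoted above, a quick calculation shows how small the per-token slice of one MoE layer is. Every expert in the layer has the same intermediate width, so the ratio does not depend on the model's hidden size, which this sketch leaves out.

```python
# DeepSeek V2 MoE-layer configuration as quoted in the text.
SHARED_EXPERTS = 2
ROUTED_EXPERTS = 160
ACTIVE_ROUTED = 6
EXPERT_WIDTH = 1536  # intermediate hidden dimension of each expert

total_width = (SHARED_EXPERTS + ROUTED_EXPERTS) * EXPERT_WIDTH  # every expert FFN in the layer
active_width = (SHARED_EXPERTS + ACTIVE_ROUTED) * EXPERT_WIDTH  # shared experts + top-6 routed
fraction = active_width / total_width

print(f"active FFN width per token: {active_width} of {total_width} ({fraction:.1%})")
# active FFN width per token: 12288 of 248832 (4.9%)
```

This covers only the expert FFNs of a single MoE layer. Attention, embeddings, and any dense layers are always active, which is why V2's model-wide activated share (21B of 236B) is higher than this layer-level figure.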

Shared expert isolation

The second trick is to carve out a small number of experts that are always active for every token. DeepSeek isolates K_s experts (K_s is the paper's symbol for the number of shared experts) as shared ones, to capture common knowledge and reduce redundancy among the routed experts. The intuition is plain: grammar, common-sense priors, and basic syntax do not need to be re-learned by every routed expert. Park that knowledge in a shared expert, and the routed experts can specialise harder on what is left.
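The following toy, pure-Python forward pass sends a single token through one MoE layer with shared experts. It is written to show the data flow rather than to be fast. It follows the DeepSeekMoE paper's formulation as we read it:

- softmax affinities over the routed experts,
- top-k selection, with the raw affinity used as the gate,
- shared experts always added,
- a residual connection.

The ReLU activation, random weights, and tiny sizes are our own simplifications. Production models use SwiGLU experts, and V3 moved to a different gating function.

```python
import math
import random

Matrix = list[list[float]]
Expert = tuple[Matrix, Matrix]  # (up-projection, down-projection)


def matvec(w: Matrix, x: list[float]) -> list[float]:
    return [sum(wi * xi for wi, xi in zip(row, x)) for row in w]


def make_expert(d_model: int, d_ff: int, rng: random.Random) -> Expert:
    up = [[rng.gauss(0.0, 0.1) for _ in range(d_model)] for _ in range(d_ff)]
    down = [[rng.gauss(0.0, 0.1) for _ in range(d_ff)] for _ in range(d_model)]
    return up, down


def expert_ffn(expert: Expert, x: list[float]) -> list[float]:
    up, down = expert
    hidden = [max(0.0, h) for h in matvec(up, x)]  # ReLU as a stand-in for SwiGLU
    return matvec(down, hidden)


def softmax(z: list[float]) -> list[float]:
    peak = max(z)  # subtract the max for numerical stability
    exps = [math.exp(v - peak) for v in z]
    total = sum(exps)
    return [e / total for e in exps]


def moe_layer(x, shared, routed, router, top_k):
    """One token through one MoE layer; returns (output, indices of routed experts used)."""
    scores = softmax(matvec(router, x))  # affinity of the token to each routed expert
    chosen = sorted(range(len(routed)), key=lambda i: scores[i], reverse=True)[:top_k]
    out = list(x)  # residual connection
    for expert in shared:  # shared experts: always active, no gate
        out = [o + y for o, y in zip(out, expert_ffn(expert, x))]
    for i in chosen:  # routed experts: only the top-k, weighted by their affinity
        out = [o + scores[i] * y for o, y in zip(out, expert_ffn(routed[i], x))]
    return out, chosen


rng = random.Random(0)
D_MODEL, D_FF, N_SHARED, N_ROUTED, TOP_K = 8, 4, 2, 16, 4
shared = [make_expert(D_MODEL, D_FF, rng) for _ in range(N_SHARED)]
routed = [make_expert(D_MODEL, D_FF, rng) for _ in range(N_ROUTED)]
router = [[rng.gauss(0.0, 1.0) for _ in range(D_MODEL)] for _ in range(N_ROUTED)]  # one row per routed expert
token = [rng.gauss(0.0, 1.0) for _ in range(D_MODEL)]

output, used = moe_layer(token, shared, routed, router, TOP_K)
print(len(output), sorted(used))
```

Only 2 shared plus 4 routed experts out of 18 run for this token. Scaling the same loop to V2's 2 shared and 6-of-160 routed experts changes the sizes, not the structure.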

Both choices are designed to push each routed expert toward a narrower slice of the data distribution. DeepSeek’s own ablations show fine-grained expert segmentation and shared expert isolation both contribute to stronger overall performance.

Architecture and lineage

DeepSeek MoE has shipped in every major DeepSeek generation since the 2024 paper. The table tracks release date, total and activated parameters, and context length for each model. Activated parameters set the per-token compute you pay for at inference; total parameters set how much you have to store and hold in memory.

| Model | Released | Total params | Active params | Context |
|---|---|---|---|---|
| DeepSeekMoE 16B | 2024-01 | 16.4B | 2.8B | 4K |
| DeepSeek V2 | 2024-05 | 236B | 21B | 128K |
| DeepSeek V3 | 2024-12 | 671B | 37B | 128K |
| DeepSeek R1 | 2025-01 | 671B | 37B | 128K |
| DeepSeek V3.2 | 2025-12 | 671B | 37B | 128K |
| DeepSeek V4-Flash | 2026-04-24 | 284B | 13B | 1M |
| DeepSeek V4-Pro | 2026-04-24 | 1.6T | 49B | 1M |
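The split between total and activated parameters is easier to see as a ratio. The short sketch below computes it from the table. It also adds a rough per-token compute estimate using the common rule of thumb of about 2 FLOPs per active parameter per forward pass. That approximation ignores attention cost, which matters at long context, so treat the FLOP column as an order-of-magnitude guide only.

```python
# (model, total params, active params) in billions, copied from the table above.
MODELS = [
    ("DeepSeekMoE 16B", 16.4, 2.8),
    ("DeepSeek V2", 236, 21),
    ("DeepSeek V3 / R1 / V3.2", 671, 37),
    ("DeepSeek V4-Flash", 284, 13),
    ("DeepSeek V4-Pro", 1600, 49),
]

for name, total_b, active_b in MODELS:
    share = active_b / total_b
    # Rule of thumb: ~2 FLOPs per active parameter per token (billions of params -> GFLOPs).
    gflops_per_token = 2 * active_b
    print(f"{name:<24} {share:6.1%} active  ~{gflops_per_token:g} GFLOPs/token")
```

V4-Pro activates about 3% of its weights per token and V4-Flash about 4.6%, while the 16B research model activated about 17%.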

The V4 generation reuses the MoE backbone but pairs it with a new attention stack. This is a hybrid mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to improve long-context efficiency. DeepSeek reports that in the 1M-token setting, V4-Pro needs only 27% of the single-token inference FLOPs and 10% of the KV cache of V3.2. That is what lets V4 ship a 1,000,000-token context window while remaining practical to serve.

Benchmarks

For the original DeepSeekMoE paper, the headline result is efficiency parity with much larger dense models. DeepSeekMoE 16B matches DeepSeek 7B and LLaMA2 7B while using only about 40% of their computation. A 145B variant in the same paper kept pace with DeepSeek's 67B dense model at about 28.5% of the computation. The paper also reports a variant reaching comparable results at as little as 18.2%.

For V4, DeepSeek’s own self-reported numbers from the V4 technical report are below. These are the lab’s figures, not independent reruns — treat them as a starting point.

| Benchmark | V4-Pro-Base | V4-Pro (reasoning_effort=max) | Notes |
|---|---|---|---|
| MMLU | 90.1% | n/a | Base, DeepSeek-reported |
| HumanEval | 76.8% | n/a | Base, DeepSeek-reported |
| GSM8K | 92.6% | n/a | Base, DeepSeek-reported |
| SWE-Bench Verified | n/a | 80.6% | DeepSeek-reported, max-thinking mode |
| Terminal-Bench 2.0 | n/a | 67.9% | DeepSeek-reported |

For deeper benchmark coverage including independent reruns, see DeepSeek benchmarks 2026.

Strengths of the DeepSeek MoE approach

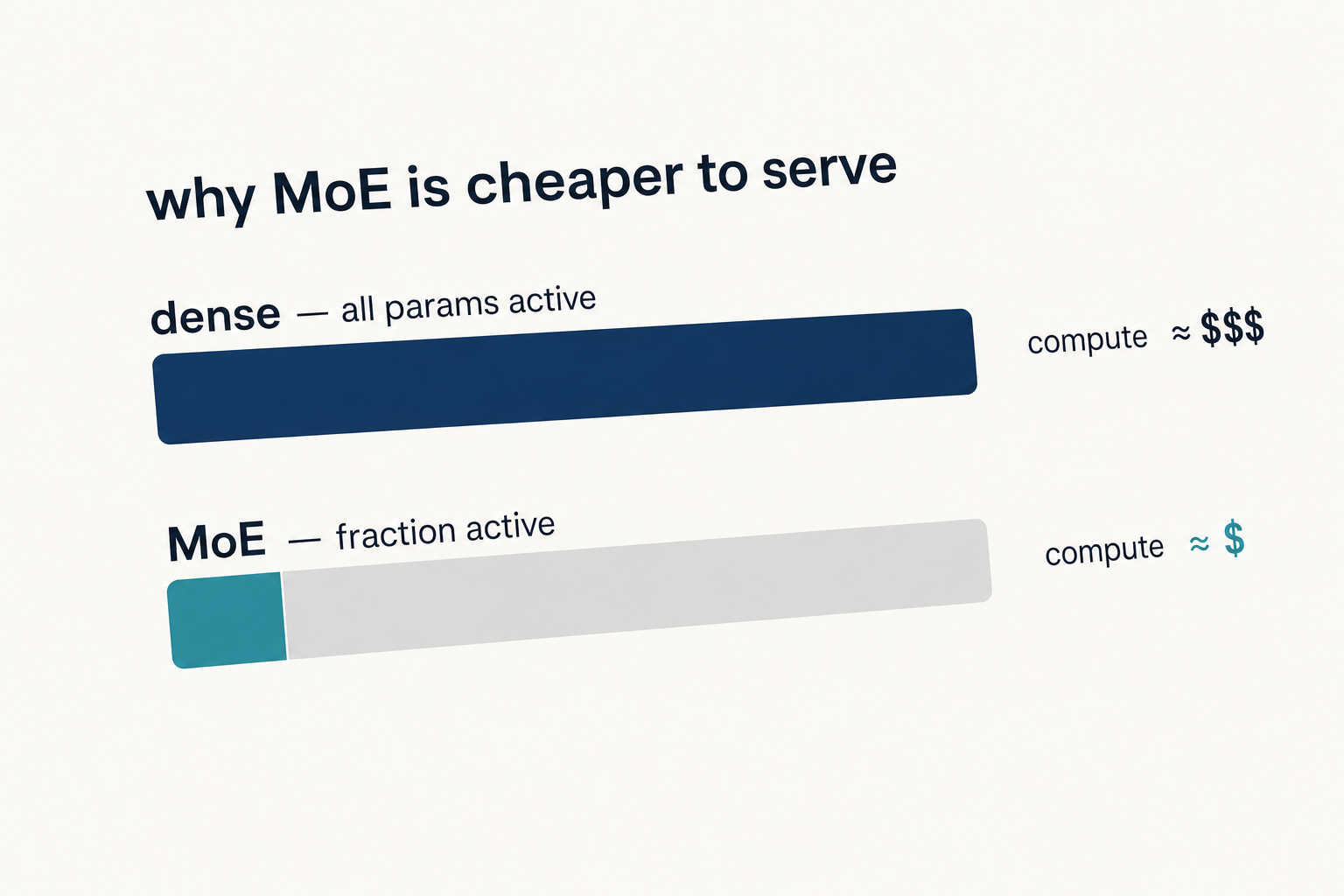

- Cheap inference per token. Activating 13B of 284B (Flash) or 49B of 1.6T (Pro) means inference cost tracks the active count, not the total.

- Specialisation. Fine-grained segmentation gives the router more, smaller experts to compose; ablations in the original paper show this helps.

- Reduced redundancy. Shared experts hold the common-knowledge floor so routed experts can specialise.

- Open weights. V4-Pro, V4-Flash, V3.2, V3.1, and R1 are all MIT-licensed on Hugging Face. Older releases (V3 base, Coder-V2, VL2) split MIT code from a separate DeepSeek Model License on the weights — check the specific repo if licensing matters to you.

- Long context. Combined with V4's hybrid attention, which cuts per-token FLOPs and KV cache at long context lengths, the architecture supports a 1M-token context window with up to 384K tokens of output.

Weaknesses and trade-offs

MoE is not free. The architecture comes with real engineering costs that show up the moment you try to host it yourself.

- Memory floor. You still need to store all 1.6T (V4-Pro) or 284B (V4-Flash) parameters in fast memory, even though only a fraction is active per token. Even at 4-bit quantisation, V4-Pro's weights alone come to roughly 800GB, before KV cache and activation memory. This is not a homelab project: a pair of RTX 5090s and a Threadripper will not serve it. You need a workstation with about a terabyte of fast RAM, or a multi-GPU server. An 8-GPU H200 node (about 1.1TB of HBM) has room, while an 8-GPU H100 node (640GB) falls short of even the 4-bit weights.

- Routing complexity. Load-balancing experts across devices, avoiding routing collapse, and managing all-to-all communication add code complexity that dense models do not have.

- Quantisation lag. Community GGUF support for V4 was not ready at launch, so llama.cpp users had to wait. vLLM and SGLang shipped day-zero recipes; smaller toolchains caught up over the following days.

- Self-reported benchmarks. V4’s headline numbers are DeepSeek’s own. Independent reruns will move some of them.
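Routing complexity is partly a training problem. Without pressure to spread tokens out, a router can collapse onto a few favourite experts. Below is a sketch of the expert-level balance loss described in the DeepSeekMoE paper, as we read it. f_i is the scaled fraction of tokens routed to expert i, and P_i is that expert's average router probability. The function name, the alpha value, and the toy inputs are our own. DeepSeek V3 reports switching to an auxiliary-loss-free balancing strategy, so this illustrates the problem rather than what current DeepSeek models necessarily train with.

```python
def expert_balance_loss(scores, chosen, top_k, alpha=0.01):
    """Expert-level balance loss: alpha * sum_i f_i * P_i.

    scores: per-token lists of router probabilities over the routed experts.
    chosen: per-token lists of the routed experts each token was sent to.
    """
    n_tokens, n_experts = len(scores), len(scores[0])
    counts = [0] * n_experts
    for picks in chosen:
        for i in picks:
            counts[i] += 1
    # f_i equals 1.0 for every expert when load is perfectly even.
    f = [n_experts / (top_k * n_tokens) * c for c in counts]
    # P_i is the mean router probability assigned to expert i.
    p = [sum(s[i] for s in scores) / n_tokens for i in range(n_experts)]
    return alpha * sum(fi * pi for fi, pi in zip(f, p))


uniform = [[0.25] * 4 for _ in range(4)]
balanced = expert_balance_loss(uniform, [[0], [1], [2], [3]], top_k=1)

skewed = [[0.7, 0.1, 0.1, 0.1] for _ in range(4)]
collapsed = expert_balance_loss(skewed, [[0]] * 4, top_k=1)

print(balanced, collapsed)
```

The collapsed routing scores about 2.8 times higher than the even split, so minimising the loss pushes the router back toward spreading tokens across experts.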

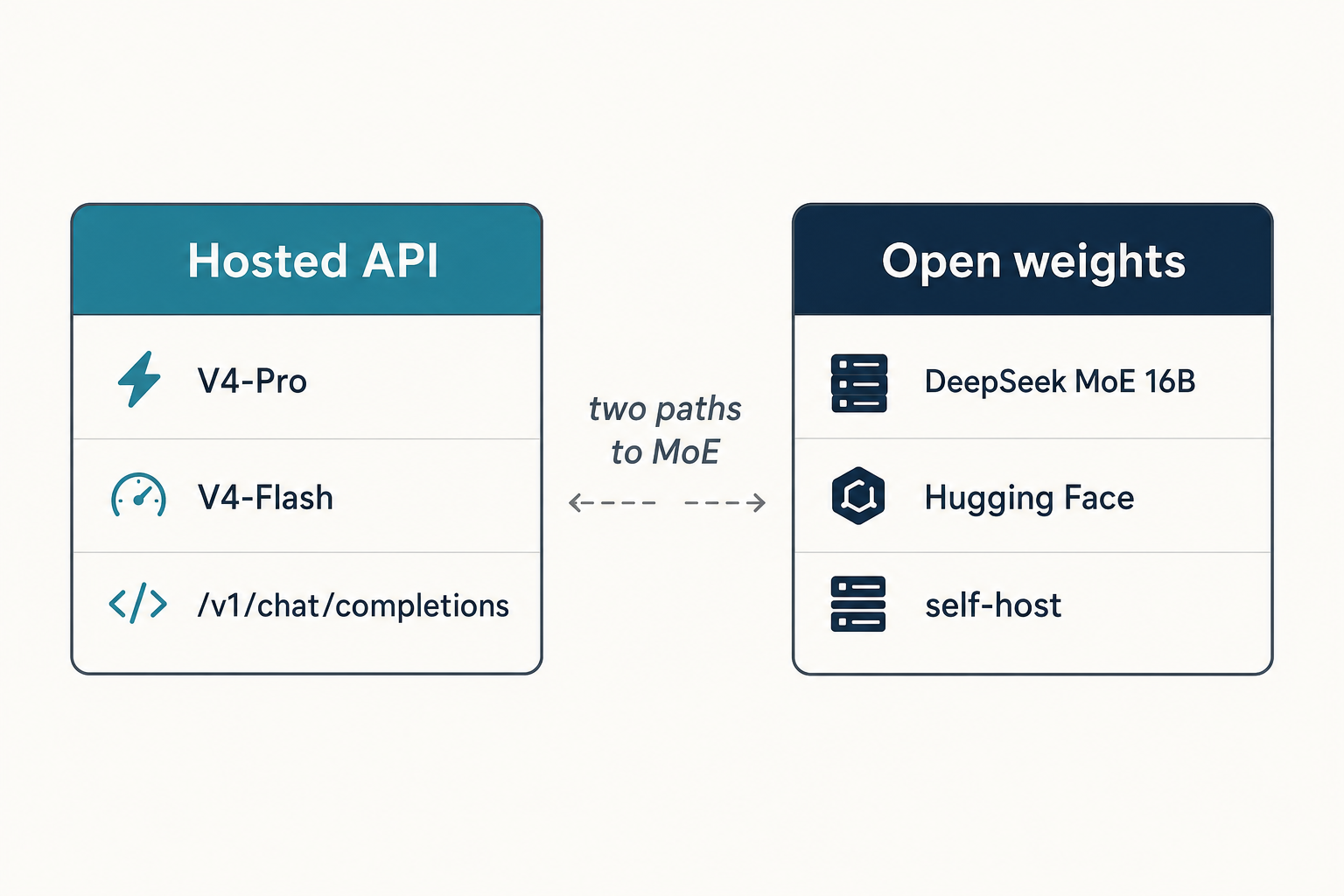

How to access DeepSeek MoE models

Three surfaces are live for V4 today:

- Web chat and mobile app. Expert Mode routes to V4-Pro and Instant Mode routes to V4-Flash on chat.deepseek.com. The web/app keeps conversation history across turns; the API does not.

- Hugging Face. Both V4-Pro and V4-Flash are on Hugging Face under the MIT license. Instruct checkpoints ship as FP4 for MoE experts and FP8 for everything else; base checkpoints are FP8 throughout.

- API. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface on the same host.

Minimal Python example

Using the OpenAI Python SDK against V4-Flash:

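```python
import os

from openai import OpenAI

# The OpenAI SDK talks to DeepSeek once base_url points at DeepSeek's endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key=os.environ["DEEPSEEK_API_KEY"],  # env var name is our choice; any secret store works
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Explain MoE in one paragraph."}],
    temperature=1.3,
    max_tokens=512,
)

print(resp.choices[0].message.content)
```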

Thinking mode is a request parameter on either V4 model, not a separate model ID. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for max-effort thinking. The response then returns reasoning_content alongside the final content. For a step-by-step setup, see the DeepSeek API getting started guide.
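Here is a minimal sketch of a thinking-mode request, based on the parameters described above. It rests on several assumptions:

- a recent openai Python SDK that accepts reasoning_effort,
- an API key in a DEEPSEEK_API_KEY environment variable (our naming),
- the thinking flag in extra_body also being sent when effort is "max".

thinking_request() is a hypothetical helper kept separate so the request can be inspected without a network call. Call main() yourself once a key is set.

```python
import os


def thinking_request(prompt: str, effort: str = "high") -> dict:
    """Build keyword arguments for a V4 call with thinking enabled (hypothetical helper).

    Assumption: the "thinking" flag in extra_body is sent for both "high" and "max" effort.
    """
    if effort not in ("high", "max"):
        raise ValueError("effort must be 'high' or 'max'")
    return {
        "model": "deepseek-v4-pro",
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def main() -> None:
    from openai import OpenAI  # imported here so thinking_request() has no dependencies

    client = OpenAI(base_url="https://api.deepseek.com", api_key=os.environ["DEEPSEEK_API_KEY"])
    resp = client.chat.completions.create(**thinking_request("Prove that sqrt(2) is irrational."))
    message = resp.choices[0].message
    # reasoning_content is a DeepSeek extension to the response, so read it defensively.
    print(getattr(message, "reasoning_content", None))
    print(message.content)
```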

If you maintain integrations using the legacy deepseek-chat or deepseek-reasoner model IDs: they currently route to deepseek-v4-flash and will be retired on 2026-07-24 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change.

The API is stateless: you must resend the conversation history with each request. Other parameters worth knowing are temperature, top_p, max_tokens (up to 384,000 on V4), JSON mode, tool calling, and streaming. JSON mode is designed to return valid JSON but does not guarantee it, so include the word "json" and an example schema in your prompt, and set max_tokens high enough that the object is not truncated.
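Because the API keeps no state, multi-turn chat means keeping the history yourself and sending all of it on each call. In this minimal sketch, the Conversation class and deepseek_sender adapter are hypothetical helpers. The offline demo swaps in a fake sender so the history handling can be checked without an API key.

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]


class Conversation:
    """Client-side chat history: the API is stateless, so every call resends all prior turns."""

    def __init__(self, send: Callable[[List[Message]], str], system: Optional[str] = None):
        self.send = send  # any function that takes the message list and returns reply text
        self.messages: List[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        reply = self.send(list(self.messages))  # copy, so the sender cannot mutate our history
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def deepseek_sender(client, model: str = "deepseek-v4-flash") -> Callable[[List[Message]], str]:
    """Adapter for an OpenAI SDK client pointed at https://api.deepseek.com."""

    def send(messages: List[Message]) -> str:
        resp = client.chat.completions.create(model=model, messages=messages)
        return resp.choices[0].message.content

    return send


# Offline demo: a fake sender that reports how many messages it received.
chat = Conversation(lambda msgs: f"saw {len(msgs)} messages", system="Be brief.")
print(chat.ask("What is MoE?"))  # saw 2 messages
print(chat.ask("And shared experts?"))  # saw 4 messages
```

For real calls, pass deepseek_sender(client) instead of the lambda. The history grows by two messages per turn, so long chats get more expensive with every request; cached input pricing helps with the repeated prefix.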

Pricing snapshot

DeepSeek prices V4-Pro and V4-Flash separately. Rates below are USD per 1M tokens, as of April 2026 — verify on the DeepSeek API pricing page before committing budget, since Preview-window prices can change.

| Model | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

Worked example: cost of 1M API calls on V4-Flash

2,000-token cached system prompt, 200-token uncached user message, 300-token response, run a million times:

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on V4-Pro costs $1,421.00 at list prices, roughly 12× more. Most of that comes from the $3.48/M output rate, which accounts for $1,044.00 of the total. During the 75% V4-Pro promo through 2026-05-31, output drops to $0.87/M and the whole workload comes to $355.25. Pick Flash by default; reach for Pro when the benchmark lift on coding or agentic work earns the premium. Run your own numbers with the DeepSeek pricing calculator.
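The worked example is easy to rerun for your own traffic. The sketch below copies the April 2026 rates from the pricing table, with the promo rates being the 75%-off list prices, and uses Decimal so the dollar figures come out exact. workload_cost is a hypothetical helper, not part of any DeepSeek SDK.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the pricing table above (April 2026; verify before use).
RATES = {
    "deepseek-v4-flash": {"hit": Decimal("0.0028"), "miss": Decimal("0.14"), "out": Decimal("0.28")},
    "deepseek-v4-pro (list)": {"hit": Decimal("0.0145"), "miss": Decimal("1.74"), "out": Decimal("3.48")},
    "deepseek-v4-pro (promo)": {"hit": Decimal("0.003625"), "miss": Decimal("0.435"), "out": Decimal("0.87")},
}


def workload_cost(rates, calls, cached_in, uncached_in, out):
    """Total USD for `calls` requests with the given per-call token counts."""
    millions_of_calls = Decimal(calls) / Decimal(1_000_000)  # rates are per 1M tokens
    per_call_weighted = cached_in * rates["hit"] + uncached_in * rates["miss"] + out * rates["out"]
    return millions_of_calls * per_call_weighted


for model, rates in RATES.items():
    cost = workload_cost(rates, calls=1_000_000, cached_in=2_000, uncached_in=200, out=300)
    print(f"{model:<24} ${cost:,.2f}")
```

This reproduces the $117.60, $1,421.00, and $355.25 figures above. Change the token counts or call volume to model your own workload.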

Best use cases

- Coding agents and tool-calling pipelines. V4-Pro’s reported SWE-Bench and Terminal-Bench numbers are competitive with closed frontier models — see DeepSeek for coding and DeepSeek for developers.

- Long-document analysis. The 1M-token context window plus the CSA/HCA attention stack make Flash a sensible choice for whole-codebase or contract-set analysis.

- High-volume chat. V4-Flash at $0.28 per million output tokens fits customer-support and content-generation workloads where per-call cost dominates.

- Research on MoE itself. The original DeepSeekMoE 16B is still a useful study artefact — small enough to run, designed to expose the architecture cleanly.

Comparable alternatives

Other open MoE models worth weighing against DeepSeek MoE include Mixtral, Qwen's MoE checkpoints, and Kimi K2.6. Closed competitors such as GPT-5, Claude Opus 4, and Gemini 3 do not publish architecture details at comparable depth. For head-to-head reading, see DeepSeek vs Mistral and DeepSeek vs Qwen, or browse the wider DeepSeek models hub for context on the rest of the lineup.

Verdict

DeepSeek MoE is the architectural through-line that makes everything from V2 to V4-Pro economically viable. The two ideas — fine-grained expert segmentation and shared expert isolation — are simple on paper and well-validated in the original ablations. With V4 the lab has stretched the same backbone to 1.6T parameters and 1M-token context. The catch is hardware: running V4-Pro yourself takes serious infrastructure, so most readers will meet DeepSeek MoE through the API or the chat app rather than at the weights level.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What does MoE mean in DeepSeek?

MoE stands for mixture-of-experts. In DeepSeek's models, most transformer feed-forward layers are replaced by many small expert networks, plus a router that picks a few of them per token and a handful of always-on shared experts. Only the chosen experts run, so a 1.6T-parameter model like V4-Pro activates just 49B parameters per token. The full architecture is described in the DeepSeekMoE research paper.

How is DeepSeek MoE different from Mixtral?

Mixtral uses eight large experts and picks two per token. DeepSeek MoE splits its FFN budget into many more, smaller experts and adds shared experts that are always active. The result is finer-grained specialisation at the same compute cost. For a wider comparison, see DeepSeek vs Mistral.

Are DeepSeek’s MoE weights open source?

The recent ones, yes. V4-Pro, V4-Flash, V3.2, V3.1, and R1 publish both code and weights under MIT. Some older releases — V3 base, Coder-V2, VL2 — keep code under MIT but put weights under a separate DeepSeek Model License. Check the specific Hugging Face repo if licensing matters; our open-source guide covers the per-model breakdown.

Can I run DeepSeek V4 MoE models locally?

V4-Flash (284B total, 13B active) is feasible on a serious workstation with enough fast memory. V4-Pro (1.6T total, 49B active) realistically needs a multi-GPU datacenter server, such as an 8-GPU H200 node. Quantised GGUF builds for llama.cpp lagged the launch by a few days. The install DeepSeek locally tutorial walks through the practical options.
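To see why the two models land in such different hardware classes, multiply parameters by bits per parameter. This minimal sketch counts weights only and assumes one uniform bit width for every weight. The official instruct checkpoints actually mix FP4 experts with FP8 elsewhere, and quantised formats add small per-block scale overheads.

```python
def weight_gb(params: float, bits_per_param: float) -> float:
    """Weight-only footprint in GB (1 GB = 1e9 bytes); excludes KV cache and activations."""
    return params * bits_per_param / 8 / 1e9


for name, params in [("V4-Flash", 284e9), ("V4-Pro", 1.6e12)]:
    for label, bits in [("FP8", 8), ("4-bit", 4)]:
        print(f"{name:<9} {label:<6} ~{weight_gb(params, bits):,.0f} GB")
```

That gives roughly 284GB (FP8) or 142GB (4-bit) for V4-Flash, and 1,600GB or 800GB for V4-Pro. For scale, eight 80GB H100s provide 640GB of HBM and eight 141GB H200s about 1.1TB, with KV cache and activations coming on top of the weights.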

Does DeepSeek MoE work with the OpenAI SDK?

Yes. The API matches OpenAI’s Chat Completions wire format, so the official OpenAI Python or Node SDK works against DeepSeek by changing only base_url to https://api.deepseek.com and supplying your DeepSeek API key. Requests still hit POST /chat/completions. See DeepSeek OpenAI SDK compatibility for full details.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Model card: DeepSeek V4-Pro model card on Hugging Face. V4-Pro 1.6T MoE specs and FP4/FP8 mixed precision. Last checked: April 30, 2026.

- Model card: DeepSeekMoE 16B model card. Reference 16B MoE research checkpoint. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Technical report: DeepSeekMoE research paper (Damai Dai et al., Jan 2024). Fine-grained expert segmentation and shared-expert isolation. Last checked: April 30, 2026.

- Technical report: DeepSeek V4 technical report. CSA/HCA hybrid attention, 27% FLOPs / 10% KV cache numbers. Last checked: April 30, 2026.

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.