A Guide to DeepSeek Research Papers From V2 to V4

If you are trying to understand why DeepSeek V4 uses only 10% of the KV cache of V3.2, or how a Chinese lab trained a reasoning model for under $300,000, the answer sits inside the DeepSeek research papers — not the press coverage. This guide maps every major publication the lab has put on arXiv and Hugging Face, from the original DeepSeek LLM scaling-law work in January 2024 to the DeepSeek-V4 technical report released on April 24, 2026. For each paper we note the model it describes, the single architectural idea it contributed, and how those ideas stack into the V4 release.

You will finish with a reading order, a benchmark cross-reference, and pointers to our deeper model-by-model breakdowns.

Why DeepSeek’s papers matter more than most

Most frontier AI labs ship products and a blog post. DeepSeek ships products, open weights, and a detailed technical report, usually on arXiv, usually the same day, and usually with enough implementation detail to reproduce the architecture. That is how the lab became a reference point: its R1 release in 2025 drew wide attention by delivering near industry-leading reasoning performance at what was reported to be a fraction of the usual price.

The V4 preview, released on April 24, 2026, continues that pattern. The paper presents two Mixture-of-Experts language models, DeepSeek-V4-Pro with 1.6T parameters (49B activated) and DeepSeek-V4-Flash with 284B parameters (13B activated), both supporting a context length of one million tokens. To understand what V4 actually does differently, read the V4 technical report. This article is a reading guide, not a replacement for those primary sources.

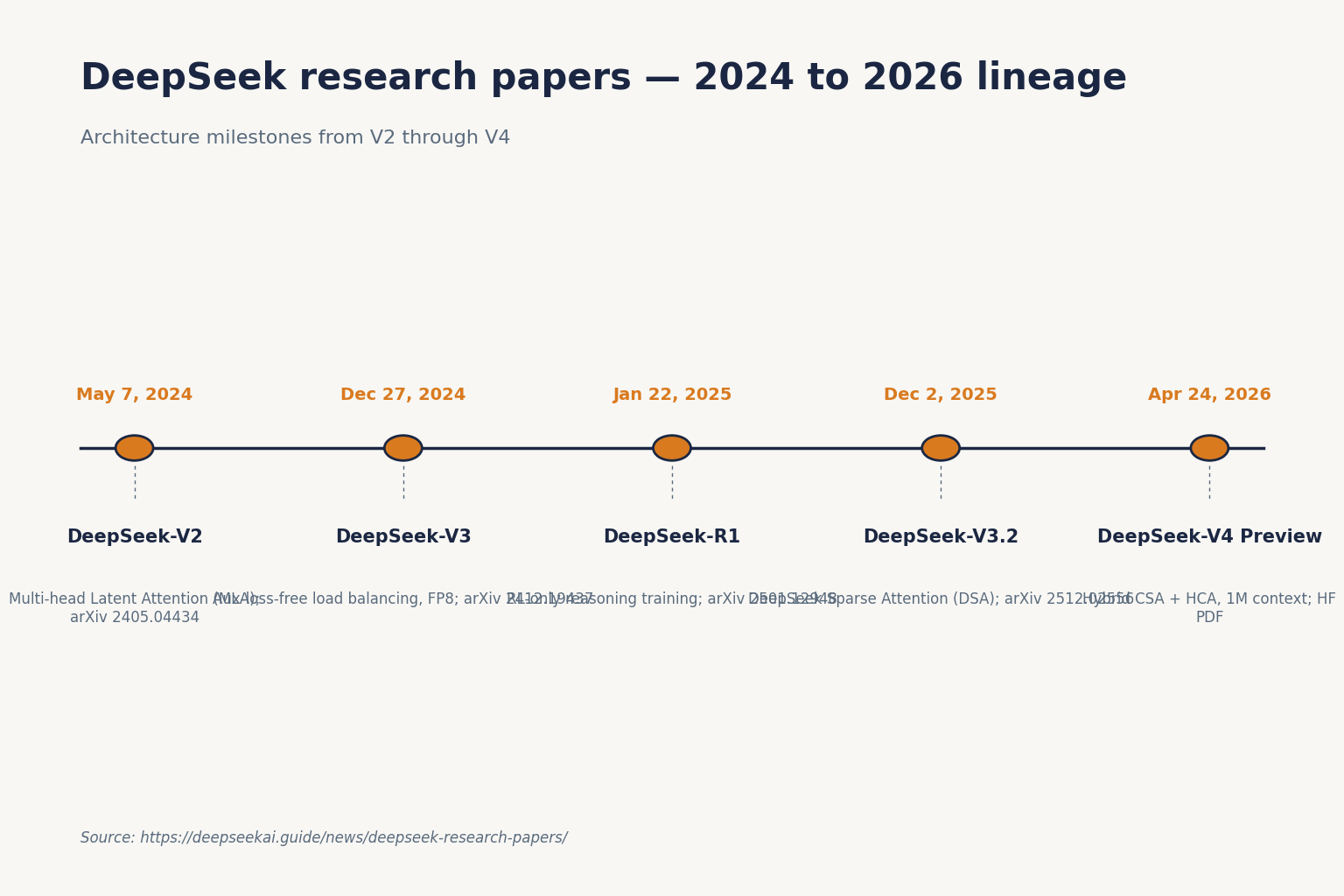

The DeepSeek paper timeline at a glance

Every paper below is first-party DeepSeek-AI work. We have limited the list to the releases that matter for understanding the current V4 generation. For a wider bibliography, the community-maintained DeepSeek Papers collection on Hugging Face is the most complete single index.

| Paper | Published | arXiv / host | Core contribution |
|---|---|---|---|
| DeepSeek LLM | 2024-01-05 | arXiv 2401.02954 | Scaling-law study for 7B and 67B dense models |
| DeepSeekMoE | 2024-01-11 | arXiv 2401.06066 | Fine-grained expert specialisation |
| DeepSeek-Coder | 2024-01-25 | arXiv 2401.14196 | Code-specialised base models |
| DeepSeekMath (GRPO) | 2024-02-05 | arXiv 2402.03300 | Group Relative Policy Optimisation |
| DeepSeek-V2 | 2024-05-07 | arXiv 2405.04434 | Multi-head Latent Attention (MLA) |
| DeepSeek-V3 | 2024-12-27 | arXiv 2412.19437 | Auxiliary-loss-free load balancing, FP8 training |
| DeepSeek-R1 | 2025-01-22 | arXiv 2501.12948 | RL-only reasoning training |
| DeepSeek-V3.2 | 2025-12-02 | arXiv 2512.02556 | DeepSeek Sparse Attention (DSA) |
| DeepSeek-V4 Preview | 2026-04-24 | Hugging Face PDF | Hybrid CSA + HCA attention, 1M context by default |

The foundation papers (2024)

DeepSeek LLM — the scaling-law study

The first DeepSeek paper is not about a frontier model. It is a scaling-law study that justifies everything that came after. The authors argue that prior scaling literature gave conflicting guidance, so they re-derived the laws for their own training stack and applied them to 7B and 67B dense configurations. The published corpus at the time was 2 trillion tokens and growing. If you want context on why later DeepSeek models look the way they do — and why the lab keeps publishing token-count figures prominently — this is the origin. For a plain-English summary, see our page on DeepSeek LLM.

DeepSeekMoE and DeepSeek-V2 — the architecture base

DeepSeekMoE (January 2024) introduced the fine-grained expert split that every later DeepSeek MoE model inherits. DeepSeek-V2 (May 2024) then combined that MoE design with Multi-head Latent Attention (MLA), which is the attention trick that makes long-context serving affordable. DeepSeek-V2 comprises 236B total parameters, of which 21B are activated for each token, and supports a context length of 128K tokens. MLA guarantees efficient inference through significantly compressing the Key-Value (KV) cache into a latent vector, while DeepSeekMoE enables training strong models at an economical cost through sparse computation. Compared with DeepSeek 67B, DeepSeek-V2 achieves significantly stronger performance, and meanwhile saves 42.5% of training costs, reduces the KV cache by 93.3%, and boosts the maximum generation throughput to 5.76 times.
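To make the cache-compression idea concrete, here is a minimal sketch of caching one shared latent vector per token and rebuilding keys and values from it on demand. It is an illustration only, not the paper's implementation: the dimensions, the random weights, and the helper names `matmul` and `random_matrix` are all assumptions, and details of the real design such as positional encoding are left out.

```python
import random

def matmul(a, b):
    """Plain-Python matrix product of two row-major lists of lists."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def random_matrix(rows, cols, rng):
    """Matrix of standard-normal samples, standing in for learned weights."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

# Hypothetical toy sizes, far smaller than any real model.
seq_len, d_model, n_heads, head_dim, latent_dim = 8, 32, 4, 8, 16
rng = random.Random(0)

hidden = random_matrix(seq_len, d_model, rng)                # one row per token
w_down = random_matrix(d_model, latent_dim, rng)             # hidden -> latent
w_up_k = random_matrix(latent_dim, n_heads * head_dim, rng)  # latent -> all keys
w_up_v = random_matrix(latent_dim, n_heads * head_dim, rng)  # latent -> all values

latent = matmul(hidden, w_down)   # the only per-token tensor kept in the cache
keys = matmul(latent, w_up_k)     # rebuilt from the latent when attention runs
values = matmul(latent, w_up_v)

full_cache = seq_len * 2 * n_heads * head_dim  # keys + values for every head
latent_cache = seq_len * latent_dim            # one latent vector per token
print(f"cached elements: {full_cache} -> {latent_cache}")
```

The saving depends entirely on how small the latent is relative to the combined key and value width, so the toy ratio here says nothing about the 93.3% figure reported for DeepSeek-V2.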

Every subsequent paper assumes you know what MLA and fine-grained MoE are. If you read only one paper from 2024, read the DeepSeek-V2 report. Our deeper breakdowns sit on the DeepSeek MoE and DeepSeek V2 pages.

DeepSeek-Coder and DeepSeekMath

Two specialist papers from early 2024 matter for the V4 story. DeepSeek-Coder introduced the code-pretraining recipe that fed later general-purpose releases. DeepSeekMath introduced Group Relative Policy Optimisation (GRPO), the RL algorithm that R1 would later use to learn reasoning without human demonstrations. Readers focused on coding should also see our notes on DeepSeek Coder and the DeepSeek Math page.
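The "group relative" part of GRPO can be sketched in a few lines: several answers are sampled for the same prompt, and each answer's reward is normalised against the mean and standard deviation of its own group rather than against a learned value function. The snippet below is a simplified sketch of that normalisation step only. The function name, the `eps` term, and the use of the population standard deviation are assumptions, and the policy-ratio clipping and KL penalty of the full objective are omitted.

```python
from statistics import mean, pstdev

def group_relative_advantages(rewards, eps=1e-8):
    """Normalise each reward against the mean and std of its own group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; eps guards against a zero spread
    return [(r - mu) / (sigma + eps) for r in rewards]

# Hypothetical rewards for four sampled answers to one prompt (1 = correct).
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)
print(advantages)
```

Correct answers end up with positive advantages and incorrect ones with negative advantages, and a group where every answer scores the same contributes no learning signal.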

DeepSeek V3 — the efficiency thesis in paper form

The V3 technical report is the one most cited in the January 2025 news cycle. The paper presents DeepSeek-V3, a strong Mixture-of-Experts (MoE) language model with 671B total parameters with 37B activated for each token. To achieve efficient inference and cost-effective training, DeepSeek-V3 adopts Multi-head Latent Attention (MLA) and DeepSeekMoE architectures, which were thoroughly validated in DeepSeek-V2. Furthermore, DeepSeek-V3 pioneers an auxiliary-loss-free strategy for load balancing and sets a multi-token prediction training objective for stronger performance. DeepSeek-V3 was pre-trained on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages.
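One way to picture auxiliary-loss-free load balancing is as a per-expert bias that is added to the routing scores when choosing experts, then nudged after each step according to how busy each expert was. The toy simulation below is a sketch under stated assumptions, not the report's code: the score distribution, the step size `gamma`, the use of the mean load as the overload threshold, and every function name are hypothetical.

```python
import random

def route_top_k(scores, bias, k):
    """Select k experts by score + bias; the bias influences selection only."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i] + bias[i], reverse=True)
    return ranked[:k]

def update_bias(bias, load, gamma):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    target = sum(load) / len(load)  # assumption: overloaded means above the mean load
    new_bias = []
    for b, n in zip(bias, load):
        if n > target:
            new_bias.append(b - gamma)
        elif n < target:
            new_bias.append(b + gamma)
        else:
            new_bias.append(b)
    return new_bias

def simulate(steps, tokens_per_step=256, n_experts=4, k=1, gamma=0.01, seed=0):
    """Return the per-expert load of the first and the last step."""
    rng = random.Random(seed)
    bias = [0.0] * n_experts
    first_load = last_load = None
    for _step in range(steps):
        load = [0] * n_experts
        for _token in range(tokens_per_step):
            # Hypothetical affinities: expert 0 is systematically favoured.
            scores = [rng.random() + (0.5 if i == 0 else 0.0) for i in range(n_experts)]
            for expert in route_top_k(scores, bias, k):
                load[expert] += 1
        if first_load is None:
            first_load = load
        last_load = load
        bias = update_bias(bias, load, gamma)
    return first_load, last_load

first_load, last_load = simulate(steps=200)
print("first step load:", first_load)
print("last step load: ", last_load)
```

The point of the design is that balance is steered through routing alone, so no extra balancing term has to be added to the training loss.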

The paper’s most-quoted number is the training cost. The report states that, combined with 119K GPU hours for context length extension and 5K GPU hours for post-training, DeepSeek-V3 cost only 2.788M GPU hours for its full training. Assuming an H800 rental price of $2 per GPU hour, the total training cost comes to $5.576M. That is the number that Reuters and everyone else rounded to “about $5.6M.” The paper is careful to note that this excludes prior research and ablations, a caveat many press summaries drop. Our DeepSeek V3 review covers how the claims held up in production.
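The arithmetic behind the headline figure is easy to check. The sketch below uses only the numbers quoted above; the pre-training share is derived here by subtraction rather than taken from the report, and the variable names are illustrative.

```python
# Figures quoted from the V3 technical report, in H800 GPU hours.
total_gpu_hours = 2_788_000
context_extension_hours = 119_000
post_training_hours = 5_000
price_per_gpu_hour = 2.0  # USD, the rental price the report assumes

# Derived by subtraction: what is left over for pre-training.
pretraining_hours = total_gpu_hours - context_extension_hours - post_training_hours
total_cost = total_gpu_hours * price_per_gpu_hour

print(f"pre-training GPU hours: {pretraining_hours:,}")
print(f"total cost: ${total_cost:,.0f}")
```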

DeepSeek R1 — reasoning without human demonstrations

R1 is the paper that turned DeepSeek from “efficient lab” to “strategic concern” in Western press coverage. The core claim is that the reasoning abilities of LLMs can be incentivized through pure reinforcement learning (RL), with no need for human-labeled reasoning trajectories. According to the paper, the proposed RL framework facilitates the emergent development of advanced reasoning patterns, such as self-reflection, verification, and dynamic strategy adaptation, and the trained model achieves superior performance on verifiable tasks such as mathematics, coding competitions, and STEM fields.

Two points readers often get wrong:

- The $294,000 training cost figure belongs to R1, not V3. Reuters reported DeepSeek’s own R1 training estimate at $294,000 in September 2025. The $5.576M figure in the V3 paper refers to a separate model.

- R1 builds on V3-Base. The paper uses DeepSeek-V3-Base as the base model, DeepSeek-V3 as the instructed model. DeepSeek-R1 and DeepSeek-R1-Zero are trained on top of DeepSeek-V3-Base and DeepSeek-R1 reuses non-reasoning data from DeepSeek-V3 SFT data. The two papers are a pair.

Our DeepSeek R1 review and the DeepSeek R1 vs OpenAI o1 comparison pair well with the paper.

DeepSeek V3.2 — the sparse attention bridge

V3.2 is the under-rated paper. Released in December 2025, it is the one that lays the groundwork for V4’s efficiency claims. The paper introduces DeepSeek Sparse Attention (DSA), an efficient attention mechanism that substantially reduces computational complexity while preserving model performance in long-context scenarios. By implementing a robust reinforcement learning protocol and scaling post-training compute, DeepSeek-V3.2 performs comparably to GPT-5. Notably, the high-compute variant, DeepSeek-V3.2-Speciale, surpasses GPT-5 and exhibits reasoning proficiency on par with Gemini-3.0-Pro, achieving gold-medal performance in both the 2025 International Mathematical Olympiad (IMO) and the International Collegiate Programming Contest.

V3.2’s DSA is instantiated on top of MLA, not as a replacement for it. The V3.2 paper is the most detailed account of how DeepSeek thinks about attention efficiency before the bigger leap in V4. The DeepSeek V3.2 page summarises the release notes.

DeepSeek V4 — the current paper

The V4 technical report, titled DeepSeek-V4: Towards Highly Efficient Million-Token Context Intelligence, is hosted as a PDF in the V4-Pro repository on Hugging Face. It is the primary source for every V4 claim.

Architecture contributions

The paper’s headline contribution is a new hybrid attention stack. DeepSeek-V4 designs a hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to dramatically improve long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2. The paper also incorporates Manifold-Constrained Hyper-Connections (mHC) to strengthen conventional residual connections, enhancing stability of signal propagation across layers while preserving model expressivity.

Two more items are worth extracting from the report:

- Training precision. FP4 + FP8 Mixed: MoE expert parameters use FP4 precision; most other parameters use FP8. FP4 experts are unusual and are part of why V4 trained at the cost it did.

- Three reasoning-effort modes. DeepSeek-V4-Pro and DeepSeek-V4-Flash both support three reasoning effort modes. These map to the API’s non-thinking default, reasoning_effort="high" with a thinking flag, and reasoning_effort="max". In the API this returns reasoning_content alongside the final content.

Positioning against closed frontier models

The report is unusually candid about where V4 sits. Simon Willison extracted the key quote: “Through the expansion of reasoning tokens, DeepSeek-V4-Pro at maximum reasoning effort demonstrates superior performance relative to GPT-5.2 and Gemini-3.0-Pro on standard reasoning benchmarks. Nevertheless, its performance falls marginally short of GPT-5.4 and Gemini-3.1-Pro, suggesting a developmental trajectory that trails current-generation frontier models by approximately 3 to 6 months.” That is a self-description the press did not have to spin.

On specific benchmarks the paper reports, V4-Pro (reasoning_effort=max) wins on LiveCodeBench (93.5 — a new open-model high), Codeforces rating (3206 — DeepSeek reports this places the model roughly 23rd among human contest participants), Apex Shortlist (90.2), SimpleQA-Verified at 57.9 against open and near-open models, and a proof-perfect 120/120 on Putnam-2025 formal reasoning under the hybrid informal-formal pipeline. Verify any individual number against the PDF before you quote it — frontier benchmark standings shift monthly.

How the papers connect: a suggested reading order

If you have a weekend and want the architecture thread, read in this sequence:

- DeepSeek-V2 for MLA and DeepSeekMoE — the foundation.

- DeepSeek-V3 for auxiliary-loss-free load balancing, multi-token prediction, and FP8 training.

- DeepSeek-R1 for the RL-only reasoning recipe (which uses GRPO from DeepSeekMath).

- DeepSeek-V3.2 for DSA as an attention-efficiency prototype.

- DeepSeek-V4 for the CSA + HCA hybrid and the full mHC / Muon / FP4 training stack.

If you only have an evening, read the V4 report and V3 report back to back. Together they cover roughly 80% of the current generation’s ideas. The DeepSeek benchmarks 2026 page has the cross-model numbers.

Using the papers as a developer, not a reader

Every paper from V3 onward describes a model you can call through POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. The current V4 model IDs are deepseek-v4-pro and deepseek-v4-flash. Legacy IDs (deepseek-chat, deepseek-reasoner) remain accepted until 2026-07-24 15:59 UTC, when they retire; both route to deepseek-v4-flash in the meantime.

A minimal Python call against V4-Pro, matching the paper’s thinking-mode setup, looks like this:

```python
from openai import OpenAI

# The OpenAI SDK works against DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Summarise the V4 paper's DSA section."}],
    reasoning_effort="high",
    # The thinking flag is not a standard SDK argument, so it goes in extra_body.
    extra_body={"thinking": {"type": "enabled"}},
)

# Thinking mode returns the reasoning trace alongside the final answer.
print(resp.choices[0].message.reasoning_content)
print(resp.choices[0].message.content)
```

A few practical details for anyone replicating paper results against the hosted API:

- The API is stateless. Resend the full messages array with every call. The web chat at chat.deepseek.com keeps session history; the API does not.

- Context window is 1,000,000 tokens by default on both V4 tiers, with output up to 384,000 tokens.

- Temperature guidance from DeepSeek’s docs: 0.0 for code and maths (matching the paper’s evaluation setup), 1.0 for data tasks, 1.3 for general chat and translation, 1.5 for creative writing.

- JSON mode is designed to return valid JSON, but this is not guaranteed. Prompt explicitly with the word “json” plus a small schema example, and set max_tokens high enough to avoid truncation.
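Because the API is stateless, the caller has to carry the conversation. A minimal sketch of that bookkeeping is below. The `chat_turn` helper is hypothetical, and `FakeClient` is an offline stand-in that only mimics the shape of `client.chat.completions.create` so the snippet runs without a key; in real use you would pass the `OpenAI` client configured for https://api.deepseek.com instead.

```python
from types import SimpleNamespace

def chat_turn(client, messages, user_text, model="deepseek-v4-flash"):
    """Send the whole history plus the new user message; return the updated history."""
    history = messages + [{"role": "user", "content": user_text}]
    resp = client.chat.completions.create(model=model, messages=history)
    reply = resp.choices[0].message.content
    return history + [{"role": "assistant", "content": reply}]

class FakeClient:
    """Offline stand-in (hypothetical) that records what each call received."""

    def __init__(self):
        self.calls = []
        self.chat = SimpleNamespace(completions=SimpleNamespace(create=self._create))

    def _create(self, model, messages):
        self.calls.append(list(messages))
        message = SimpleNamespace(content=f"reply {len(self.calls)}")
        return SimpleNamespace(choices=[SimpleNamespace(message=message)])

client = FakeClient()
history = chat_turn(client, [], "What is MLA?")
history = chat_turn(client, history, "And how does V4 change it?")

# The second request carried the first question, the first reply, and the new question.
print(len(client.calls[1]))
```

Returning a new list instead of mutating the input keeps each turn easy to log or replay, at the cost of resending a growing prompt on every call.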

For costs, V4-Flash runs at $0.0028 (cache hit) / $0.14 (cache miss) / $0.28 (output) per 1M tokens. V4-Pro lists at $0.0145 / $1.74 / $3.48 in the same order, with promotional rates of $0.435 (cache miss) / $0.87 (output), 75% off list, through 2026-05-31. Consider a workload of 1M calls, each with a 2,000-token cached system prompt, a 200-token user message and a 300-token response. On V4-Flash that works out to $5.60 cached input + $28 uncached input + $84 output = $117.60 total. On V4-Pro at list rates the same workload is $29 + $348 + $1,044 = $1,421. Check the DeepSeek API pricing page before committing, since Preview-window rates can move.
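The workload arithmetic above can be reproduced with a small helper. This is a sketch using the per-1M-token rates quoted in this article, which may be out of date by the time you read it. The function name and argument layout are illustrative, and the V4-Pro total corresponds to the list rates rather than the promotional ones.

```python
def workload_cost(calls, cached_tokens, fresh_tokens, output_tokens, rates):
    """Cost in USD for a uniform workload.

    rates is (cache_hit, cache_miss, output) in USD per 1M tokens;
    token counts are per call.
    """
    hit, miss, out = rates
    millions_of_calls = calls / 1_000_000
    cached = cached_tokens * millions_of_calls * hit
    fresh = fresh_tokens * millions_of_calls * miss
    output = output_tokens * millions_of_calls * out
    return cached, fresh, output, cached + fresh + output

# Rates as quoted in this article (USD per 1M tokens).
V4_FLASH = (0.0028, 0.14, 0.28)
V4_PRO_LIST = (0.0145, 1.74, 3.48)

flash = workload_cost(1_000_000, 2_000, 200, 300, V4_FLASH)
pro = workload_cost(1_000_000, 2_000, 200, 300, V4_PRO_LIST)

print(f"V4-Flash total: ${flash[3]:,.2f}")
print(f"V4-Pro (list) total: ${pro[3]:,.2f}")
```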

For the full API surface, start with the DeepSeek API documentation and the DeepSeek API getting started tutorial.

What the papers do not tell you

Three honest limitations of reading DeepSeek’s papers as your only source:

- Self-reported benchmarks are self-reported. The V4 report’s evaluation configurations are the paper’s own. Cross-reference against independent benchmark aggregators before making a procurement decision.

- Training-cost figures exclude prior research. The V3 paper says this explicitly. The $5.576M number covers the official training run, not the full R&D bill.

- Licensing is per-model. V4-Pro, V4-Flash, V3.2, V3.1 and R1 publish weights under MIT. Some older releases — V3 base, Coder-V2, VL2 — split code (MIT) from weights (a separate DeepSeek Model License). Check the specific Hugging Face repo if licensing matters to your deployment.

For broader independent context, the DeepSeek latest updates feed tracks post-publication corrections and community replications.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Where can I find the official DeepSeek research papers?

DeepSeek publishes technical reports on arXiv and as PDFs in the matching Hugging Face repository. The V4 report lives inside the V4-Pro model repo; V3 is arXiv 2412.19437; V3.2 is arXiv 2512.02556; R1 is arXiv 2501.12948; V2 is arXiv 2405.04434. A community-maintained index collects the older papers too. For our plain-English summaries of each release, see the DeepSeek models hub.

What does the DeepSeek V4 paper actually introduce?

The V4 technical report introduces a hybrid attention stack — Compressed Sparse Attention plus Heavily Compressed Attention — that the authors claim cuts single-token inference FLOPs to 27% and KV cache to 10% of V3.2 at 1M-token context. It also documents mHC residual connections, Muon-optimised training, and FP4 expert precision. Full details in the PDF linked from the DeepSeek V4 release date page.

How much did it cost to train DeepSeek V3 and R1?

The V3 technical report reports 2.788M H800 GPU hours at $2/hour, totalling about $5.576M — explicitly excluding prior research and ablations. DeepSeek’s separately disclosed R1 training cost was around $294,000 (Reuters, September 2025). The two figures belong to different models and should not be combined. Our DeepSeek V3 review unpacks the cost claim.

Is the DeepSeek R1 paper really a reasoning trace breakthrough?

The R1 paper’s contribution is showing that reasoning patterns like self-reflection and verification can emerge from pure reinforcement learning, without human-labelled step-by-step reasoning data. That is a methodological result, not a claim to the best possible reasoning model. Compare it honestly against closed competitors in our DeepSeek R1 vs OpenAI o1 analysis.

Can I reproduce the benchmark numbers in the papers using the hosted API?

Partially. You can match most reasoning and coding benchmarks by setting model="deepseek-v4-pro", reasoning_effort="max", temperature=0.0, and a max_tokens large enough for the reasoning content. Agentic benchmarks depend on tool-use scaffolding that the papers describe but do not ship as a kit. The DeepSeek API getting started tutorial has a minimal client setup.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: Hugging Face DeepSeek Papers community collection. Community-maintained bibliography of DeepSeek papers. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek LLM scaling-law paper. Scaling-law study for 7B and 67B dense models. Last checked: April 30, 2026

- Technical report: DeepSeekMoE paper. Fine-grained expert specialisation architecture. Last checked: April 30, 2026

- Technical report: DeepSeek-Coder paper. Code-specialised base model recipe. Last checked: April 30, 2026

- Technical report: DeepSeekMath / GRPO paper. Group Relative Policy Optimisation introduction. Last checked: April 30, 2026

- Technical report: DeepSeek-V2 technical report. MLA + MoE architecture foundation for later models. Last checked: April 30, 2026

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology, efficiency thesis, training-cost figure. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). RL-only reasoning recipe, distillation and benchmark results. Last checked: April 30, 2026

- Technical report: DeepSeek-V3.2 technical report (DSA). Sparse attention bridge before V4. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. CSA + HCA hybrid attention, FLOP / KV cache claims. Last checked: April 30, 2026

Context sources

- News: Reuters, V3 ~$5.6M training cost (Jan 2025). Conversion of GPU-hour figure to dollar headline. Last checked: April 30, 2026

- News: Reuters, R1 $294,000 training cost (Sep 2025). Distinct R1 cost figure separate from V3. Last checked: April 30, 2026

- Analysis: Simon Willison, V4 launch-day writeup. Extracted key quotes from V4 technical report. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.