DeepSeek vs OpenAI o1: Which Reasoning Model Wins in 2026?

If you’re choosing between DeepSeek and OpenAI o1 for a reasoning-heavy workload (agentic coding, multi-step math, long-document analysis), the comparison has shifted sharply in the last year. OpenAI’s o1, the model that defined the “thinking before answering” category in late 2024, is now the priciest reasoning model in OpenAI’s lineup and has been largely overtaken by o3 and the GPT-5 family. DeepSeek, meanwhile, shipped V4 on April 24, 2026, with both Pro and Flash tiers carrying a one-million-token context window and reasoning-effort controls built into a single model.

This article gives you a verdict, the numbers behind it, a worked cost example, and clear advice on when each model is still the right pick.

Verdict: DeepSeek V4 wins on price and parity; o1 only wins on inertia

For new builds in April 2026, DeepSeek V4 is the better default for reasoning workloads, particularly coding agents, long-context analysis, and any pipeline where output token spend matters. DeepSeek V4-Pro posts 80.6% on SWE-Bench Verified at $3.48 per million output tokens at list (currently $0.87/M during the 75% promo through 2026-05-31), while o1 lists at $15 input and $60 output per million tokens. That makes V4-Pro roughly 69× cheaper on output during the promo (17× at list rates) than a model OpenAI itself has positioned as legacy.

o1 is still the right call in narrow cases: existing production code that depends on o1’s specific reasoning behaviour, an enterprise procurement contract that already covers OpenAI, or a team unwilling to accept that DeepSeek’s API processes data on servers in China. For everyone else, the migration math leans heavily toward V4.

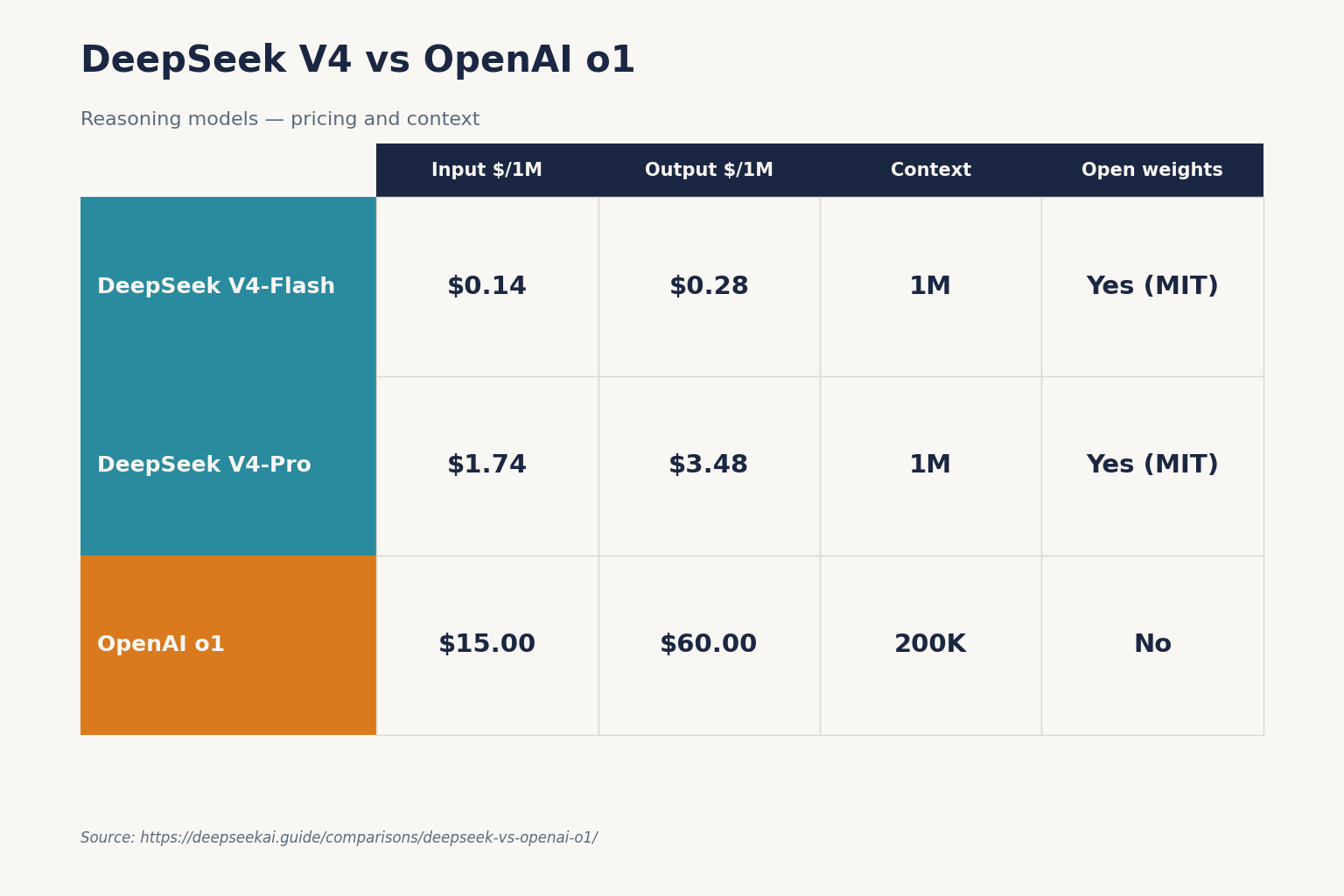

At-a-glance comparison

| Feature | DeepSeek V4-Pro | DeepSeek V4-Flash | OpenAI o1 |
|---|---|---|---|
| Released | 2026-04-24 | 2026-04-24 | 2024-12 (full release) |
| Architecture | MoE, 1.6T total / 49B active | MoE, 284B total / 13B active | Undisclosed |
| Context window | 1,000,000 tokens | 1,000,000 tokens | 200,000 tokens |
| Max output | 384,000 tokens | 384,000 tokens | ~100,000 tokens |
| Input, cache miss (per 1M tokens) | $0.435 promo through 2026-05-31 (list $1.74) | $0.14 | $15.00 |
| Input, cache hit (per 1M tokens) | $0.003625 promo (list $0.0145) | $0.0028 | $7.50 (50% cached rate) |
| Output (per 1M tokens) | $0.87 promo through 2026-05-31 (list $3.48) | $0.28 | $60.00 |
| Reasoning trace exposed | Yes (reasoning_content) | Yes (reasoning_content) | Summary only |
| Open weights | Yes (MIT) | Yes (MIT) | No |
| Best for | Frontier coding, agents | High-volume reasoning | Existing o1 pipelines |

DeepSeek V4 prices are from DeepSeek’s pricing page as of April 2026. o1 prices are from OpenAI’s pricing page and third-party trackers, also April 2026. Always verify before committing.

How the two models actually work

DeepSeek V4: one model, three reasoning modes

V4 ships as two open-weight Mixture-of-Experts models under the MIT license. DeepSeek’s own model card describes V4-Pro as 1.6 trillion parameters with 49B activated per token, V4-Flash as 284B with 13B activated, and both supporting a one-million-token context. Reasoning is controlled with a request parameter, not a separate model ID:

- Non-thinking: pass extra_body={"thinking": {"type": "disabled"}}. No step-by-step reasoning; cheapest and fastest. (The V4 default is thinking-enabled.)

- Thinking (high): reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}.

- Thinking-max: reasoning_effort="max"; needs a context window of at least 384K tokens to avoid truncation.

When thinking is enabled, the API returns reasoning_content alongside the final content. You can read both fields and decide what to log, surface to users, or feed back into a downstream agent.
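As a rough sketch of how the three modes map onto request arguments, the helper below builds the extra keyword arguments for each one. The function name `thinking_kwargs` and the mode labels are illustrative and not part of DeepSeek's API; the parameter values are the ones listed above.

```python
from typing import Any, Dict


def thinking_kwargs(mode: str) -> Dict[str, Any]:
    """Return extra keyword arguments for a chat-completions call.

    `mode` is a hypothetical label used only in this sketch:
    "non-thinking", "high", or "max".
    """
    if mode == "non-thinking":
        # Thinking is on by default in V4, so it has to be switched off explicitly.
        return {"extra_body": {"thinking": {"type": "disabled"}}}
    if mode == "high":
        return {
            "reasoning_effort": "high",
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    if mode == "max":
        # The article names only reasoning_effort="max" for this mode;
        # thinking is assumed to stay at its enabled default.
        return {"reasoning_effort": "max"}
    raise ValueError(f"unknown mode: {mode!r}")


print(thinking_kwargs("high"))
```

With an OpenAI-compatible client, these arguments would be spread into the call, for example `client.chat.completions.create(model="deepseek-v4-pro", messages=msgs, **thinking_kwargs("high"))`.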

OpenAI o1: opaque thinking, premium pricing

o1 was OpenAI’s first reasoning model and is now the priciest reasoning model in OpenAI’s lineup at $15 input and $60 output per 1M tokens. The o-series generates internal “thinking tokens” before producing visible output. The cost trap (per OpenAI’s reasoning-models docs) is that those tokens are billed as output even though you never see them, and the only limit on them is max_completion_tokens. A simple-looking response can quietly burn 5–10× the visible token count. OpenAI does not return the full reasoning chain through the API; you get a summary at most.
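To make the cost trap concrete, here is a small arithmetic sketch. The token counts are invented for illustration (a 300-token visible answer plus 2,100 hidden reasoning tokens, so the billed total is 8× the visible count); only the $60 per 1M output rate comes from the figures above.

```python
def billed_output_cost(visible_tokens: int, reasoning_tokens: int,
                       price_per_million: float) -> float:
    """Cost in USD when hidden reasoning tokens are billed as output."""
    billed_tokens = visible_tokens + reasoning_tokens
    return billed_tokens * price_per_million / 1_000_000


O1_OUTPUT_PRICE = 60.00   # USD per 1M output tokens, from the article
visible = 300             # illustrative value
reasoning = 2_100         # illustrative value

naive = billed_output_cost(visible, 0, O1_OUTPUT_PRICE)
actual = billed_output_cost(visible, reasoning, O1_OUTPUT_PRICE)
print(f"visible-only estimate: ${naive:.3f}")
print(f"billed cost:           ${actual:.3f}")
```

Budgeting from the visible answer alone would understate the per-call cost by the same factor as the hidden-to-visible token ratio, which is why a cap on completion tokens matters.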

OpenAI itself has effectively retired o1 in favour of o3 ($2 input / $8 output) for new builds. If you read “OpenAI o1” in a 2026 architecture document, the relevant comparison today is usually V4 vs the o-series as a whole, not V4 vs o1 specifically.

Head-to-head: Coding

Coding is where reasoning models earn their keep, and where V4-Pro lands hardest. Independent reviewers report V4-Pro at 80.6% on SWE-Bench Verified, within 0.2 points of Claude Opus 4.6, and ahead of Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%). On VentureBeat’s read of the wider field, V4 lands at roughly one-sixth the cost of GPT-5.5 and Opus 4.7 while staying competitive on most agentic benchmarks.

OpenAI did not publish SWE-Bench Verified numbers for o1 that are directly comparable to V4-Pro’s evaluation harness — most current SWE-Bench leaderboard entries are for o3, GPT-5 variants, or Claude. If your coding pipeline currently runs on o1, the upgrade path is more honestly a choice between o3 and V4-Pro, not staying on o1. For dedicated coding workflows, DeepSeek for coding covers prompt patterns and agent loops in detail.

Head-to-head: Reasoning and math

This is the most nuanced category. o1’s original claim to fame was multi-step reasoning, and on the hardest benchmarks (AIME, GPQA Diamond) o1 still beats DeepSeek V4-Flash in non-thinking mode. With thinking enabled, V4-Pro closes most of that gap and beats o1 on several agent-style evaluations.

Two caveats worth surfacing:

- DeepSeek’s V4 announcement explicitly notes V4-Pro trails Claude (96.2%) and GPT-5.4 (97.7%) on HMMT 2026 with its own 95.2% — so for the most demanding contest-math problems, the very best closed models still hold a small lead.

- HLE (Humanity’s Last Exam) puts V4-Pro at 37.7% versus 40.0% for Claude and 39.8% for GPT-5.4 — a meaningful gap on broad expert reasoning.

If you genuinely need the deepest available reasoning and price isn’t a constraint, an OpenAI thinking model is still defensible. If your reasoning is “complicated business logic” rather than “graduate-level physics,” V4 in thinking-max mode handles it at a fraction of the cost.

Head-to-head: Context and long documents

This is a one-sided fight. V4 carries a 1,000,000-token context window by default on both tiers, with output up to 384,000 tokens. o1 sits at 200K context with output capped around 100K. For multi-document research, repository-scale code review, or long-running agent traces, V4 has roughly 5× the headroom.

The architecture supports it efficiently too: DeepSeek’s V4-Pro requires roughly 27% of the single-token inference FLOPs and 10% of the KV cache compared with V3.2 at 1M-token contexts, per the V4 model card. Long-context inference with o1 gets expensive fast — both because of the per-token rates and because reasoning tokens scale with input size.

Head-to-head: Pricing

Stack the rates side by side and the gap is hard to argue with:

| Model | Input, cache miss (per 1M tokens) | Cached input (per 1M tokens) | Output (per 1M tokens) |
|---|---|---|---|
| DeepSeek V4-Flash | $0.14 | $0.0028 | $0.28 |
| DeepSeek V4-Pro | $0.435 promo / $1.74 list | $0.003625 promo / $0.0145 list | $0.87 promo / $3.48 list |
| OpenAI o1 | $15.00 | $7.50 (50% cache) | $60.00 |

Output rate is the line that matters for reasoning workloads, because thinking models emit a lot of tokens. V4-Pro is roughly 69× cheaper than o1 on output during the 75% promo through 2026-05-31 (17× at list rates); V4-Flash is roughly 214× cheaper. See DeepSeek API pricing for the full rate card.
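The output ratios quoted here can be re-derived in a few lines. This is only a check of the arithmetic, using the output prices from the table; the dictionary keys are labels chosen for this sketch.

```python
O1_OUTPUT = 60.00  # USD per 1M output tokens

# DeepSeek output prices in USD per 1M tokens, as listed in the table.
deepseek_output = {
    "V4-Pro (promo)": 0.87,
    "V4-Pro (list)": 3.48,
    "V4-Flash": 0.28,
}

# How many times more o1 charges per output token than each DeepSeek tier.
ratios = {name: O1_OUTPUT / price for name, price in deepseek_output.items()}

for name, ratio in ratios.items():
    print(f"o1 output costs about {ratio:.0f}x {name}")
```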

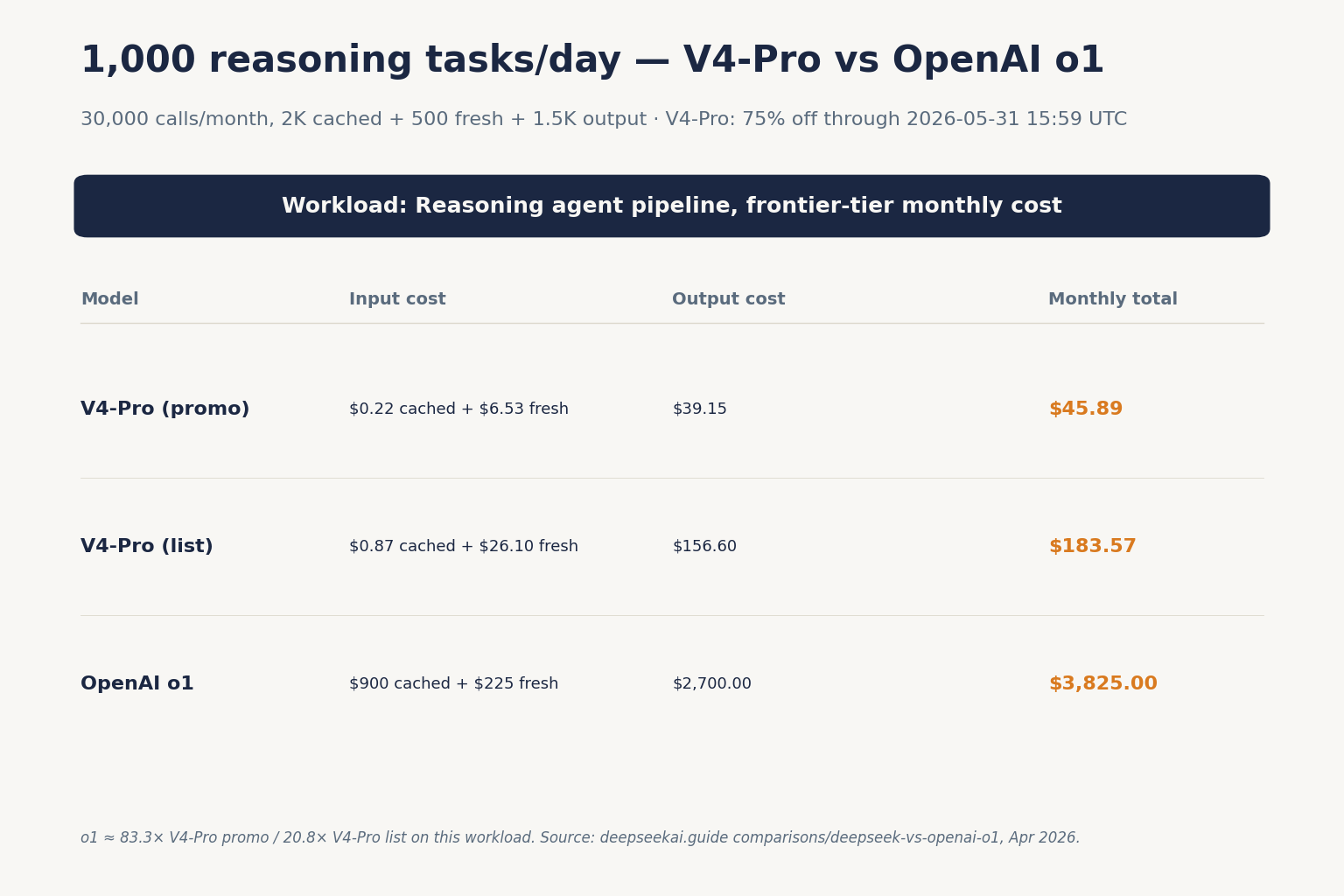

Worked cost example: 1,000 reasoning tasks per day

Imagine a coding-agent pipeline running 1,000 tasks per day, 30,000 per month. Each task uses a 2,000-token system prompt (cached across calls), a 500-token user message (uncached on each call), and produces a 1,500-token reasoning trace plus answer. We’re computing on DeepSeek V4-Pro, not Flash, since this is a frontier-tier workload.

    # Promo rates (current through 2026-05-31)
    Cached input   : 60,000,000 × $0.003625/M = $0.22
    Uncached input : 15,000,000 × $0.435/M    = $6.53
    Output         : 45,000,000 × $0.87/M     = $39.15
    -------
    Monthly total (promo)                       $45.90

    # List rates (after 2026-05-31)
    Cached input   : 60,000,000 × $0.0145/M   = $0.87
    Uncached input : 15,000,000 × $1.74/M     = $26.10
    Output         : 45,000,000 × $3.48/M     = $156.60
    -------
    Monthly total (list)                        $183.57

Same workload on o1 (no automatic prefix caching for the system prompt unless you opt into the 50% cached-input rate, which we’ll generously assume):

    Cached input   : 60,000,000 tokens × $7.50/M  = $450.00
    Uncached input : 15,000,000 tokens × $15.00/M = $225.00
    Output         : 45,000,000 tokens × $60.00/M = $2,700.00
    ---------
    Monthly total                                   $3,375.00

On promo rates, o1 is roughly 74× more expensive; on list rates after the promo expires, roughly 18×. Move to V4-Flash where the task allows it and the gap widens to about 227×, because the same token volumes cost about $14.87 per month at Flash’s rates. You can run your own numbers in the DeepSeek pricing calculator.
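For readers who want to rerun the workload with their own volumes, the calculation can be sketched as a small script. The token counts and per-1M rates are the ones used in this example; the names `TOKENS_PER_TASK`, `RATES`, and `monthly_cost` are just labels for this sketch.

```python
TASKS_PER_MONTH = 30_000

# Tokens per task, split by how they are billed.
TOKENS_PER_TASK = {"cached_input": 2_000, "uncached_input": 500, "output": 1_500}

# USD per 1M tokens, taken from the rate tables in this article.
RATES = {
    "V4-Pro promo": {"cached_input": 0.003625, "uncached_input": 0.435, "output": 0.87},
    "V4-Pro list": {"cached_input": 0.0145, "uncached_input": 1.74, "output": 3.48},
    "V4-Flash": {"cached_input": 0.0028, "uncached_input": 0.14, "output": 0.28},
    "o1": {"cached_input": 7.50, "uncached_input": 15.00, "output": 60.00},
}


def monthly_cost(rates: dict) -> float:
    """Sum the monthly cost in USD across the three billing categories."""
    return sum(
        TASKS_PER_MONTH * tokens * rates[kind] / 1_000_000
        for kind, tokens in TOKENS_PER_TASK.items()
    )


totals = {name: monthly_cost(rates) for name, rates in RATES.items()}
for name, total in totals.items():
    print(f"{name:<13} ${total:,.2f}  (o1 is {totals['o1'] / total:.1f}x this)")
```

Computed without intermediate rounding, the V4-Pro promo total is $45.8925; the $45.90 shown in the worked example is the sum of the per-line rounded amounts.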

Head-to-head: Privacy and ecosystem

This is where o1 still has a defensible case. DeepSeek’s API processes data on servers in China; conversations may be subject to Chinese law and law-enforcement access under legal process. OpenAI processes data in the US, EU, or designated regions depending on your plan. For regulated industries — healthcare, legal, finance with cross-border data restrictions — this matters more than the price gap.

DeepSeek’s offsetting strength is that V4’s weights are MIT-licensed and downloadable from Hugging Face. You can run V4-Flash on your own infrastructure if data residency is non-negotiable. o1 is closed; there is no self-hosted version. For more on this trade-off, see DeepSeek privacy.

Talking to the API: a minimal example

The DeepSeek API is OpenAI-compatible: chat requests go to POST /chat/completions with the same wire format as api.openai.com. The only changes are base_url, api_key, and the model field. Here’s a minimal Python example using V4-Pro in thinking mode:

    from openai import OpenAI

    # Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[
            {"role": "user", "content": "Audit this SQL query for n+1 issues..."},
        ],
        reasoning_effort="high",
        extra_body={"thinking": {"type": "enabled"}},
        max_tokens=8000,
    )

    print(resp.choices[0].message.reasoning_content)  # the trace
    print(resp.choices[0].message.content)  # the answer

The API is stateless — clients must resend the full conversation history with every request. The web chat at chat.deepseek.com keeps session history for users; the API does not. DeepSeek also exposes an Anthropic-compatible surface against the same base URL, so existing Claude SDK code can swap providers with minimal changes.
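Because the API keeps no session state, the client has to carry the history itself. The sketch below shows one way to do that; the `Conversation` class and the `fake_send` stand-in are hypothetical names for illustration, and only the final answer text is stored between turns (whether the reasoning trace should ever be sent back is not covered here, so it is left out).

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps the running message list and resends all of it on each turn."""

    def __init__(self, send: Callable[[List[Message]], str], system: str = "") -> None:
        self._send = send
        self.messages: List[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The full history goes out every time; the server remembers nothing.
        answer = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": answer})
        return answer


def fake_send(messages: List[Message]) -> str:
    """Stand-in transport so the sketch runs without a network call."""
    return f"reply to {len(messages)} message(s)"


chat = Conversation(fake_send, system="You are a SQL reviewer.")
first = chat.ask("Audit this query.")
second = chat.ask("Now suggest an index.")
print(first)
print(second)
```

In a real integration, `send` would wrap the chat-completions call from the example above and return `resp.choices[0].message.content`.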

If you’re maintaining an older integration, the legacy deepseek-chat and deepseek-reasoner model IDs continue to work and currently route to deepseek-v4-flash. Both legacy IDs retire on 2026-07-24 at 15:59 UTC; migrating is a one-line model= swap and the base_url stays the same. Step-by-step setup is in the DeepSeek API getting started guide.
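One way to stage that migration is to rewrite request arguments in a single place before they reach the client. The sketch below is hypothetical: `migrate_request` is not a DeepSeek helper, and the thinking settings assume the mapping described in the FAQ of this article (deepseek-chat as non-thinking, deepseek-reasoner as thinking).

```python
from typing import Any, Dict

# Assumed mapping from legacy model IDs to the thinking mode they imply.
LEGACY_THINKING = {
    "deepseek-chat": {"type": "disabled"},
    "deepseek-reasoner": {"type": "enabled"},
}


def migrate_request(kwargs: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of the request kwargs with legacy model IDs replaced."""
    migrated = dict(kwargs)
    model = migrated.get("model")
    if model not in LEGACY_THINKING:
        return migrated  # already on a current model ID; nothing to change
    migrated["model"] = "deepseek-v4-flash"
    extra_body = dict(migrated.get("extra_body") or {})
    # Preserve an explicit thinking setting if the caller already passed one.
    extra_body.setdefault("thinking", dict(LEGACY_THINKING[model]))
    migrated["extra_body"] = extra_body
    return migrated


old = {"model": "deepseek-reasoner", "messages": [{"role": "user", "content": "hi"}]}
new = migrate_request(old)
print(new["model"], new["extra_body"])
```

Setting the thinking mode explicitly keeps behaviour stable if the default differs from what the legacy ID implied.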

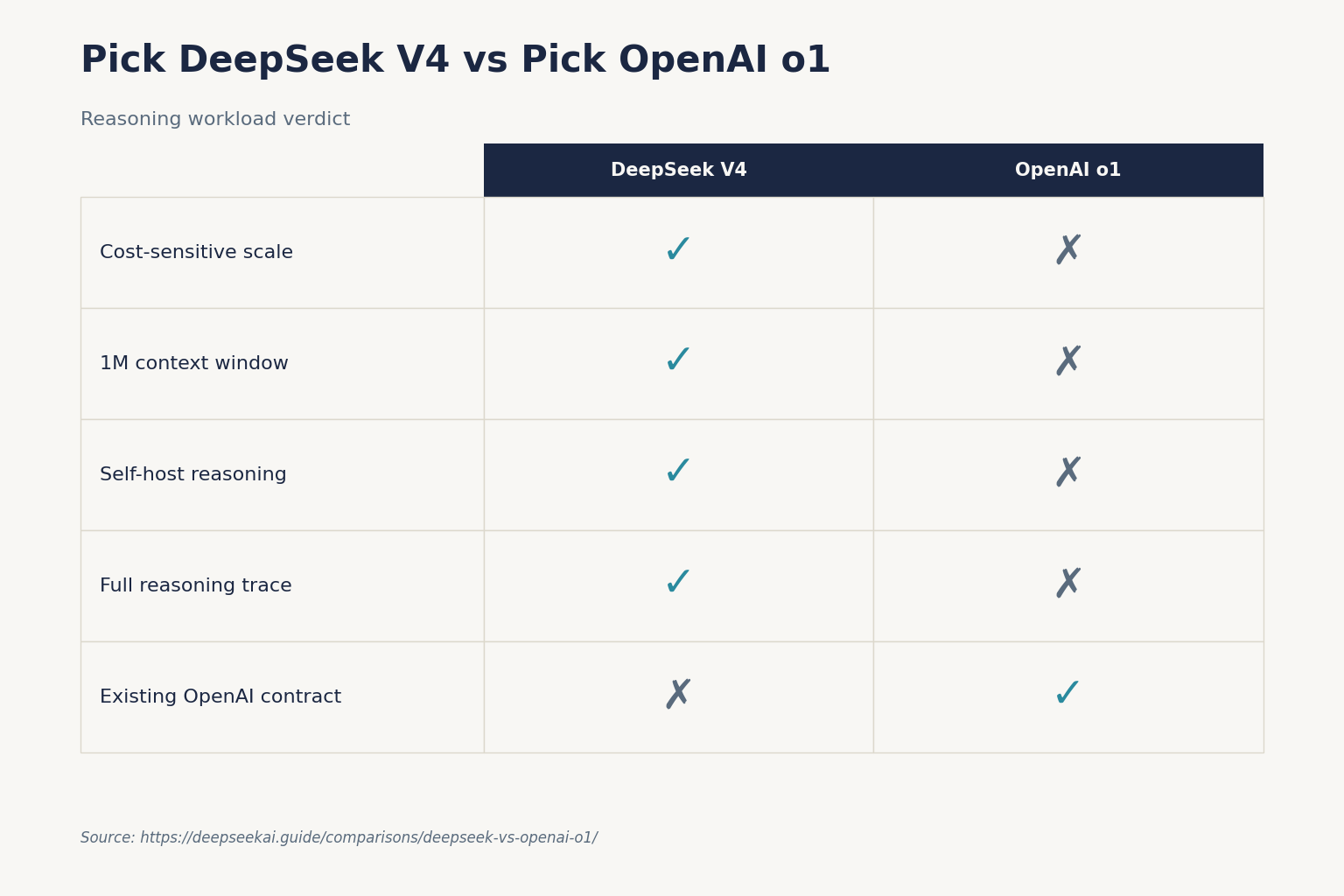

When to pick which

Pick DeepSeek V4 when

- You’re cost-sensitive and the workload generates a lot of output tokens.

- You want a 1M-token context window — long documents, repos, multi-file diffs.

- You need open weights for self-hosting or auditability.

- You want the reasoning trace as a structured field, not a summary.

- Your data-residency policy permits China-based processing, or you self-host.

Pick OpenAI o1 (or, more honestly, o3 / GPT-5 thinking) when

- You have an existing o1 deployment that you would prefer not to revalidate.

- You’re inside an enterprise procurement contract with OpenAI already.

- Your workload requires the highest published reasoning scores on HLE-style benchmarks (where the very newest closed models — not o1 specifically — currently lead).

- Cross-border data compliance rules out Chinese infrastructure and you can’t self-host.

Alternatives worth considering

This isn’t a binary. Several models sit between V4 and o1 on the price/quality curve:

- DeepSeek R1 vs OpenAI o1 — the original 2025 head-to-head, still useful if you’re maintaining an R1 deployment.

- DeepSeek vs Claude — Claude Opus 4.6 is the closest performance peer to V4-Pro on coding benchmarks.

- DeepSeek vs ChatGPT — the broader app-vs-API comparison if you’re choosing for a team rather than a backend.

For more reasoning-specific options, browse DeepSeek alternatives for reasoning or the wider AI comparison hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek better than OpenAI o1 for reasoning?

For most reasoning workloads in 2026, DeepSeek V4-Pro in thinking mode matches or beats o1 on agentic and coding benchmarks at roughly 1/17th the output cost at list rates (about 1/69th during the promo). On the hardest contest-math and expert-knowledge benchmarks (HMMT, HLE), the very newest closed models still lead, but o1 specifically is no longer competitive with the current frontier; OpenAI itself has positioned o3 as the replacement. See the DeepSeek V4-Pro page for full benchmark detail.

How much cheaper is DeepSeek V4 than OpenAI o1?

DeepSeek V4-Pro currently costs $0.435 per million input tokens (cache miss) and $0.87 per million output tokens during the 75% promo through 2026-05-31 (list $1.74 / $3.48), versus $15 input and $60 output for o1. That is roughly 34× cheaper on input and 69× cheaper on output during the promo, or 8.6× and 17× at list. V4-Flash widens the gap further at $0.14 / $0.28 per million tokens. Run your own numbers through the DeepSeek pricing calculator before committing.

Does DeepSeek expose its reasoning trace like o1 does?

DeepSeek goes further than o1 here. When thinking mode is enabled, V4 returns reasoning_content alongside the final content as separate fields in the API response. OpenAI’s o-series returns only a summary of the reasoning, not the full chain. This matters for audit trails, agent orchestration, and debugging. The DeepSeek API documentation walks through both fields.

Can I run DeepSeek V4 locally to avoid sending data to China?

V4-Pro and V4-Flash weights are MIT-licensed and published on Hugging Face, so yes — you can self-host. V4-Flash (284B total / 13B active) is the realistic option for most teams; V4-Pro at 1.6T parameters needs serious infrastructure. The install DeepSeek locally tutorial covers hardware requirements and setup. o1 has no self-hosted option.

What happens to deepseek-chat and deepseek-reasoner after July 2026?

The legacy deepseek-chat and deepseek-reasoner model IDs currently route to deepseek-v4-flash (in non-thinking and thinking mode respectively). Both retire on 2026-07-24 at 15:59 UTC, after which requests using those IDs will fail. Migration is a one-line model= swap; the base_url doesn’t change. The DeepSeek latest updates page tracks deprecation timelines.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026.

- Model card: Hugging Face, DeepSeek-V4-Pro model card. V4-Pro / V4-Flash size, active params, context window. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026.

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026.

Context sources

- Analysis: BuildFastWithAI, DeepSeek V4-Pro review. 80.6% SWE-Bench Verified citation for V4-Pro. Last checked: April 30, 2026.

- Analysis: PE Collective, OpenAI o-series API pricing tracker. o1 pricing ($15 input / $60 output) and thinking-token cost trap. Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.