The DeepSeek Fine Tuning Guide for LoRA, QLoRA and Full SFT

You have a domain — legal clauses, support tickets, internal Python conventions — and the stock model gets it 70 % right. The remaining 30 % is where production breaks. This DeepSeek fine tuning guide walks through the realistic options in April 2026: LoRA and QLoRA on the R1 distills and Coder, full supervised fine-tuning on V4-Flash for teams with serious GPU budget, and the dataset and evaluation work that decides whether any of it pays off. I run V4-Pro and V4-Flash in production and have fine-tuned the 7B–8B distills on a single 24 GB card. By the end you will know which DeepSeek checkpoint to start from, how to prepare data, what hardware to rent, and the specific commands to ship a working adapter.

What you will build

By the end of this tutorial you will have a parameter-efficient adapter (LoRA or QLoRA) trained on a small DeepSeek checkpoint, evaluated against a held-out split, and ready to serve behind an OpenAI-compatible API. The same recipe scales up to multi-GPU full fine-tuning on the larger weights, with the budget and risk increasing accordingly.

One framing point before we start: DeepSeek’s flagship API models are not the right starting point for fine-tuning. DeepSeek-V4-Pro is the largest open-weights model around, larger than Kimi K2.6 and more than twice the size of V3.2; the Pro checkpoint is 865 GB on Hugging Face and Flash is 160 GB. The R1 model contains over 600 billion parameters and requires data centres to fine-tune, which is why DeepSeek ships smaller distilled versions for accessible adaptation. Most teams should fine-tune a distill or Coder variant and use the V4 API for everything else.

Prerequisites

- Python 3.10+ and a CUDA-capable GPU. For QLoRA on a 7–8B distill, 16 GB VRAM is the floor; 24 GB (RTX 4090, A10G) is comfortable.

- A Hugging Face account with a write-access token (for downloading weights and pushing your adapter).

- 500–5,000 cleaned training examples in JSONL chat format, plus a held-out evaluation split.

- The core libraries: transformers, peft, trl, bitsandbytes, datasets, accelerate. Optionally unsloth for memory and speed wins.

- If you plan to call the V4 API for synthetic data generation or evaluation comparisons, see the DeepSeek API getting started walkthrough first.

Step 1 — Pick the right base checkpoint

This single decision sets the cost ceiling for the project. A wrong choice here cannot be salvaged by hyperparameter tuning later.

| Checkpoint | Params | Min VRAM (QLoRA) | Best for | Licence |
|---|---|---|---|---|
| DeepSeek-R1-Distill-Qwen-1.5B | 1.5B | 8 GB | Smoke tests, edge deployment | MIT |
| DeepSeek-R1-Distill-Qwen-7B | 7B | 16 GB | Classification, function calling | MIT |
| DeepSeek-R1-Distill-Llama-8B | 8B | 16 GB | General SFT, reasoning style transfer | MIT |
| DeepSeek-Coder 6.7B | 6.7B | 16 GB | Code completion, internal SDK conventions | MIT (code) / DeepSeek Model License (weights) |
| DeepSeek-V4-Flash | 284B / 13B active | Multi-GPU cluster | Full SFT for serious budgets | MIT |
| DeepSeek-V4-Pro | 1.6T / 49B active | Large cluster only | Rare; usually use the API | MIT |

The textbook starting position is a distilled base such as DeepSeek-R1-Distill-Qwen-7B that fits your GPU budget, plus 500–5,000 labelled examples in JSONL ChatML format with train and validation splits. Among the V4 weights, Flash is the practical self-hosting target: at 284B parameters with 13B activated per token, it can run on a multi-GPU setup that most mid-size teams can afford. V4-Pro, at 1.6T total parameters, requires significant cluster capacity to serve at production latency, so most teams will use the DeepSeek API for Pro and consider self-hosting only for Flash. If your task is code-shaped, start with a DeepSeek Coder base; if it is reasoning-shaped, start with a DeepSeek R1 Distill base.

Step 2 — Prepare the dataset

Dataset quality is the single largest predictor of fine-tune success. Using parameter-efficient fine-tuning techniques like LoRA and QLoRA, teams without massive GPU budgets can adapt these models to specialised tasks, typically seeing 10–30 percentage point accuracy gains with 500–5,000 examples. That gain disappears fast if the data is noisy.

Format

Use JSONL, one example per line, in ChatML-style messages. A minimal example for a support-classification task (pretty-printed here for readability; in the file, each example occupies a single line):

```json
{"messages": [
  {"role": "system", "content": "Classify the ticket. Output one of: billing, bug, feature, other."},
  {"role": "user", "content": "Charged twice this month, need refund."},
  {"role": "assistant", "content": "billing"}
]}
```

Splits and hygiene

- Split 80 / 10 / 10 into train, validation, and held-out test. Never let the test split leak into training.

- Deduplicate — exact matches and near-duplicates inflate validation accuracy without improving the model.

- Balance classes when possible. If 90 % of tickets are other, the model will learn to say other.

- Run a sanity prompt of every example through the base model first; if the base already gets it right, you don’t need it in training.
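The hygiene steps above can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a full pipeline: the function names are hypothetical, and the normalisation only catches copies that differ in case or whitespace, so true near-duplicate detection (fuzzy or embedding-based) would need more than this.

```python
import json
import random


def normalise(example: dict) -> str:
    """Collapse case and whitespace so trivially different copies compare equal."""
    text = " ".join(m["content"] for m in example["messages"])
    return " ".join(text.lower().split())


def dedupe_and_split(examples, seed=42, ratios=(0.8, 0.1, 0.1)):
    """Drop normalised duplicates, shuffle, then cut into train/validation/test."""
    seen, unique = set(), []
    for ex in examples:
        key = normalise(ex)
        if key not in seen:
            seen.add(key)
            unique.append(ex)
    random.Random(seed).shuffle(unique)  # fixed seed keeps the split reproducible
    n_train = int(len(unique) * ratios[0])
    n_val = int(len(unique) * ratios[1])
    return {
        "train": unique[:n_train],
        "validation": unique[n_train:n_train + n_val],
        "test": unique[n_train + n_val:],  # never touch this until final evaluation
    }


def write_jsonl(path, rows):
    """Write one JSON object per line, as JSONL expects."""
    with open(path, "w", encoding="utf-8") as fh:
        for row in rows:
            fh.write(json.dumps(row, ensure_ascii=False) + "\n")
```

Deduplicating before splitting matters: if the split happens first, a duplicate can land in both train and test and quietly inflate the held-out score.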

Step 3 — Configure LoRA / QLoRA

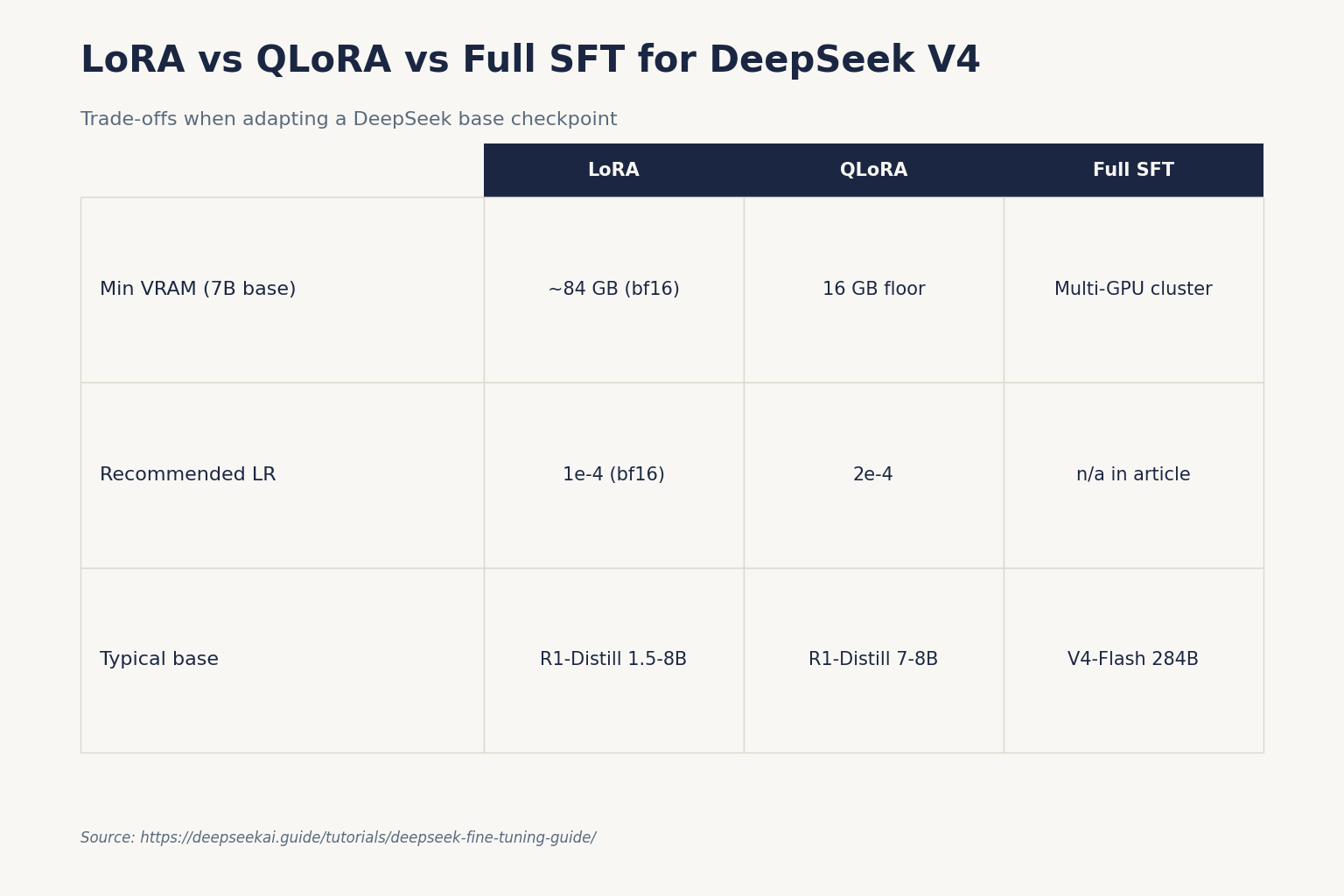

LoRA freezes the base model weights and injects small trainable low-rank matrices into specific layers, typically the attention projections. With r=16 on the four attention matrices of a Llama-style 7B model, that comes to roughly 17M trainable parameters against 7B frozen ones (roughly 40M if the MLP projections are adapted as well). QLoRA goes further by loading the base model in 4-bit quantised form, which drops VRAM usage for a 7B model from roughly 84 GB to 6–8 GB, so it fits on a single 16 GB GPU.
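To make the parameter arithmetic concrete, here is a small sketch that counts LoRA's trainable parameters. The dimensions are an assumption (a Llama-2-7B-style layout with hidden size 4096, MLP width 11008 and 32 layers); the distills discussed here use different shapes, and models with grouped-query attention have smaller k and v projections, so treat the output as an order-of-magnitude guide.

```python
def lora_params(shapes, r, n_layers):
    """Each adapted (d_in, d_out) matrix gains A (r x d_in) and B (d_out x r)."""
    per_layer = sum(r * (d_in + d_out) for d_in, d_out in shapes)
    return per_layer * n_layers


# Assumed Llama-2-7B-style dimensions, for illustration only.
HIDDEN, MLP, LAYERS = 4096, 11008, 32
attention = [(HIDDEN, HIDDEN)] * 4                    # q, k, v, o projections
mlp = [(HIDDEN, MLP), (HIDDEN, MLP), (MLP, HIDDEN)]   # gate, up, down projections

attn_only = lora_params(attention, r=16, n_layers=LAYERS)
all_linear = lora_params(attention + mlp, r=16, n_layers=LAYERS)

print(f"attention only: {attn_only:,}")   # attention only: 16,777,216
print(f"all linear:     {all_linear:,}")  # all linear:     39,976,960
```

Under these assumed shapes, attention-only adapters land near 17M parameters, and the count reaches about 40M once the MLP projections are included. Either way it is well under 1 % of the base model.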

Sensible defaults that have held up across my own runs and the published recipes:

- Rank (r): 16 for classification, 32 for instruction following, 64 only if you have signal that 32 is underfitting.

- Alpha: 2× rank is a safe starting point.

- Target modules: q_proj, k_proj, v_proj, o_proj. Add gate/up/down projections only when attention-only adapters underfit.

- Learning rate: 2e-4 for QLoRA, 1e-4 for LoRA on bf16.

- Epochs: 3 for >2,000 examples, up to 5 for smaller sets. Watch validation loss and stop early.

- Batch size: 1–2 on 16 GB, 4–8 on 24 GB+. Use gradient accumulation to reach an effective batch of 16–32.
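The batch-size guidance reduces to one line of arithmetic: effective batch = per-device batch × number of GPUs × gradient accumulation steps. A minimal helper (the name is hypothetical) that solves for the accumulation steps:

```python
import math


def grad_accum_steps(per_device_batch, target_effective_batch, n_gpus=1):
    """Accumulation steps needed to reach the target effective batch size."""
    per_step = per_device_batch * n_gpus
    return max(1, math.ceil(target_effective_batch / per_step))


print(grad_accum_steps(2, 16))  # 8  -> batch 2 on a 16 GB card, effective 16
print(grad_accum_steps(1, 16))  # 16 -> fallback when batch 2 runs out of memory
print(grad_accum_steps(4, 32))  # 8  -> batch 4 on a 24 GB card, effective 32
```

Accumulation trades wall-clock time for memory: the optimiser sees the same effective batch, but each update takes proportionally more forward and backward passes.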

Step 4 — Train

Below is a minimal Python QLoRA run using transformers, peft, and trl. It trains the 8B Llama-distilled DeepSeek on a JSONL file:

```python
import torch
from datasets import load_dataset
from transformers import AutoTokenizer, AutoModelForCausalLM, BitsAndBytesConfig
from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training
from trl import SFTTrainer, SFTConfig

MODEL_ID = "deepseek-ai/DeepSeek-R1-Distill-Llama-8B"

# 4-bit NF4 quantisation with bf16 compute: this is the "Q" in QLoRA.
bnb = BitsAndBytesConfig(
    load_in_4bit=True,
    bnb_4bit_quant_type="nf4",
    bnb_4bit_compute_dtype=torch.bfloat16,
    bnb_4bit_use_double_quant=True,
)

tok = AutoTokenizer.from_pretrained(MODEL_ID)
model = AutoModelForCausalLM.from_pretrained(MODEL_ID, quantization_config=bnb, device_map="auto")
model = prepare_model_for_kbit_training(model)

# Attention-only LoRA adapters; the quantised base weights stay frozen.
lora = LoraConfig(
    r=16, lora_alpha=32, lora_dropout=0.05,
    target_modules=["q_proj", "k_proj", "v_proj", "o_proj"],
    bias="none", task_type="CAUSAL_LM",
)
model = get_peft_model(model, lora)
model.print_trainable_parameters()  # sanity check: should be a small fraction

ds = load_dataset("json", data_files={"train": "train.jsonl", "eval": "eval.jsonl"})

cfg = SFTConfig(
    output_dir="ds-r1-distill-tuned",
    num_train_epochs=3,
    per_device_train_batch_size=2,
    gradient_accumulation_steps=8,  # effective batch of 16
    learning_rate=2e-4,
    bf16=True,
    logging_steps=20,
    eval_strategy="steps",
    eval_steps=100,
    save_steps=200,
)

# Recent TRL releases take `processing_class`; older ones named this argument `tokenizer`.
trainer = SFTTrainer(model=model, args=cfg, train_dataset=ds["train"],
                     eval_dataset=ds["eval"], processing_class=tok)
trainer.train()
trainer.save_model("ds-r1-distill-tuned/final")
```

If you want a higher-level wrapper, LlamaFactory supports DeepSeek alongside LLaMA, Qwen3, Mistral and others, with continuous pre-training, supervised fine-tuning, reward modelling, PPO, DPO, KTO and ORPO, and 16-bit full-tuning, freeze-tuning, LoRA and 2/3/4/5/6/8-bit QLoRA. It hides most of the boilerplate above behind a YAML config.

Step 5 — Verify it worked

Three checks, in order:

- Validation loss curve: should drop and then plateau. A loss that keeps falling while held-out accuracy stalls is overfitting — stop earlier next time.

- Held-out accuracy or exact-match: compute on the test split you set aside in step 2. Compare against the same metric for the un-tuned base model. If the gap is under 5 percentage points, the fine-tune is not pulling its weight.

- Spot-check 20 outputs by hand. Numerical wins sometimes hide format regressions, hallucinated tags, or repeated tokens.
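The second check can be scripted once you have model outputs for the test split. The sketch below assumes a classification-style task where exact match is meaningful; the function names and the toy predictions are hypothetical, and the 5-point threshold is the one suggested above.

```python
def exact_match(predictions, references):
    """Fraction of predictions equal to the reference, ignoring case and padding."""
    if not references or len(predictions) != len(references):
        raise ValueError("predictions and references must be non-empty and aligned")
    hits = sum(p.strip().lower() == r.strip().lower()
               for p, r in zip(predictions, references))
    return hits / len(references)


def worth_shipping(base_acc, tuned_acc, min_gain_pp=5.0):
    """True when the tuned model beats the base by at least min_gain_pp points."""
    return (tuned_acc - base_acc) * 100 >= min_gain_pp


gold = ["billing", "bug", "feature", "other"]
base_preds = ["billing", "other", "feature", "other"]    # un-tuned base model
tuned_preds = ["billing", "bug", "Feature ", "other"]    # fine-tuned adapter

base_acc = exact_match(base_preds, gold)
tuned_acc = exact_match(tuned_preds, gold)
print(base_acc, tuned_acc, worth_shipping(base_acc, tuned_acc))
```

Run the base and tuned models on exactly the same test rows with the same prompt template; otherwise the gap measures the prompt change, not the fine-tune.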

Then merge the adapter and serve. For local serving, vLLM and SGLang both load LoRA adapters directly. If you prefer a managed serverless path, see DeepSeek Docker deployment for the container side, or push the adapter to Hugging Face and load it from any inference runtime that supports PEFT.

Step 6 — Common errors and fixes

| Symptom | Likely cause | Fix |
|---|---|---|
| CUDA OOM at first step | Batch size too high for VRAM | Set per-device batch to 1, raise gradient accumulation to 16+ |
| Loss is NaN after a few steps | fp16 underflow on T4 | Use bf16 on Ampere+; keep fp16 only for older cards with low LR |
| Validation loss flat from step 0 | Adapter not attached or wrong target modules | Print model.print_trainable_parameters(); should be ~0.1–1 % |
| Model outputs base style, not your data | Chat template mismatch between training and inference | Use the exact tokenizer chat template at inference; test with tokenizer.apply_chat_template |
| Repeats tokens or rambles | Over-trained on too few examples | Cut epochs, add more examples, or lower LR to 1e-4 |

Where DeepSeek V4 fits in

The V4 family changes the calculus for some of these projects. deepseek-chat and deepseek-reasoner will be fully retired and inaccessible after July 24, 2026, 15:59 UTC, and currently route to deepseek-v4-flash non-thinking and thinking respectively. If your fine-tune was previously aimed at extending deepseek-chat's behaviour through prompts, V4-Flash’s much lower API rates may close the gap without any training. Both V4 tiers default to a 1,000,000-token context window with output up to 384,000 tokens, so context that previously demanded a fine-tune for retrieval-style tasks now often fits inline.

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com; an Anthropic-compatible surface lives at the same base URL. Thinking mode is a parameter, not a separate model — pass reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} on either V4 model and the response returns reasoning_content alongside the final content. The API is stateless: the client must resend conversation history with every request, unlike the web/app which keeps session history for the user.
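Because the API is stateless, the client owns the conversation history. The sketch below shows that bookkeeping in isolation. The `Conversation` class and the `send` callable are hypothetical: in practice `send` would wrap a chat completion call and return the assistant text, but a fake is used here so the example runs offline.

```python
class Conversation:
    """Keeps the full message history and resends it on every turn."""

    def __init__(self, send, system_prompt=None):
        self._send = send  # hypothetical callable: list of messages -> reply text
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text):
        self.messages.append({"role": "user", "content": user_text})
        reply = self._send(list(self.messages))  # whole history, not just this turn
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages):
    """Stand-in for the real API call; reports how much history it received."""
    return f"received {len(messages)} messages"


chat = Conversation(fake_send, system_prompt="You are a support assistant.")
print(chat.ask("My invoice is wrong."))    # received 2 messages
print(chat.ask("It was charged twice."))   # received 4 messages
```

The growing history is also why a stable system prompt matters for cost: the repeated prefix is what cache-hit pricing applies to.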

A minimal Python call with the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this ticket: ..."}],
    temperature=0.0,
    max_tokens=512,
)
print(resp.choices[0].message.content)
```

Other parameters worth knowing before deciding to fine-tune: top_p, JSON mode (response_format={"type": "json_object"}, which is designed to return valid JSON but does not guarantee it; include the word “json” plus a small example schema in the prompt and set max_tokens high enough to avoid truncation), tool calling, streaming via stream=true, FIM completion (Beta; requires thinking: {"type": "disabled"}), and Chat Prefix Completion (Beta). The recommended temperature is 0.0 for code and maths, 1.0 for data analysis, 1.3 for general chat and translation, and 1.5 for creative writing.
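Since JSON mode is designed to return valid JSON but does not guarantee it, it is worth parsing defensively on the client. A minimal sketch using only the standard library; the helper name is hypothetical, and what you do on failure (retry, raise max_tokens, fall back) is left to the caller.

```python
import json


def parse_json_reply(text):
    """Return the parsed object, or None when the reply is not a JSON object."""
    try:
        value = json.loads(text)
    except (json.JSONDecodeError, TypeError):
        return None  # malformed, truncated, or missing reply
    return value if isinstance(value, dict) else None


print(parse_json_reply('{"label": "billing"}'))  # {'label': 'billing'}
print(parse_json_reply('{"label": "bil'))        # None (truncated output)
```

A truncated reply is the most common failure in practice, which is why the advice about setting max_tokens high enough matters.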

Cost: train your own vs call the API

Run the numbers before you rent a GPU. Take a workload of 1,000,000 calls per month with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response. On deepseek-v4-flash rates as of April 2026:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60 per month
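The arithmetic above is easy to wrap in a function so you can plug in your own volumes. This sketch hard-codes the Flash rates quoted in this article as of April 2026; the function name is hypothetical, and the rates should be re-checked against the official pricing page before relying on the output.

```python
def monthly_cost(calls, cached_in, uncached_in, out, rates):
    """Monthly USD cost; rates are per 1M tokens: (cached input, uncached input, output)."""
    cached_rate, uncached_rate, output_rate = rates
    per_call_millionths = (cached_in * cached_rate
                           + uncached_in * uncached_rate
                           + out * output_rate)
    return round(calls / 1_000_000 * per_call_millionths, 2)


# deepseek-v4-flash rates quoted above (USD per 1M tokens).
FLASH = (0.0028, 0.14, 0.28)

print(monthly_cost(1_000_000, 2000, 200, 300, FLASH))  # 117.6
```

Swapping in another tier is just a different rates tuple, which makes it straightforward to compare tiers side by side for the same workload.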

The same workload on deepseek-v4-pro totals $1,421.00 at list rates ($0.0145 cached, $1.74 uncached, $3.48 output per 1M tokens), roughly 12× the Flash bill. At the promo rates that run through 2026-05-31 (cached $0.003625, uncached $0.435, output $0.87 per 1M) it comes to $355.25, roughly 3× Flash. By contrast, RunPod A10G instances start around $0.50/hr (verify current rates), and a tutorial dataset of about 1,000 examples over 3 epochs takes 1 to 3 hours on an A10G, costing roughly $1.50 to $3.00 for the training run alone. Factor in iterations, evaluation, debugging, and ongoing serving on a dedicated endpoint, though, and most adaptation projects only break even at volumes well above a $117.60/month API bill. For a fuller breakdown try the DeepSeek pricing calculator.

Always check the official DeepSeek pricing page before committing — Preview-window rates can shift, and the off-peak discount programme that ended on 2025-09-05 has not returned.

Licensing — read before you ship

Licensing is per-model, so do not generalise from one release to another. Both V4 models (1 million token context window, maximum output of 384K tokens) are released under the MIT licence, meaning free commercial use and full weights access on Hugging Face. R1 and the R1 distills are also MIT (both code and weights). Older releases such as V3 base, Coder-V2 and VL2 split MIT code from a separate DeepSeek Model License on the weights. If your fine-tune is going into a commercial product, check the specific Hugging Face repo for your base checkpoint before training.

Alternative paths

- Prompt engineering first. Prompts are reversible; fine-tunes are not. See DeepSeek prompt engineering.

- RAG instead of fine-tuning. When the goal is recall over private documents, retrieval is almost always cheaper. The DeepSeek RAG tutorial covers the pipeline.

- Hosted SFT. If you do not want to run a GPU at all, providers like Fireworks support DeepSeek fine-tuning on their platform — useful if you also need their inference layer.

- Other tutorials in this series. Browse the DeepSeek tutorials hub for related step-by-steps.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Can you fine-tune DeepSeek V4-Pro directly?

Technically yes — the weights are MIT-licensed and on Hugging Face — but practically almost no one should. At 1.6T total parameters even a LoRA pass needs serious cluster capacity. Most teams fine-tune a smaller R1 distill or DeepSeek Coder, then call V4-Pro through the API for tasks where the frontier-tier benchmark lift matters. See the DeepSeek V4-Pro overview for context.

How much GPU memory do I need for QLoRA on a 7B DeepSeek model?

A single GPU with 16 GB VRAM is the practical floor. With QLoRA’s 4-bit base loading and LoRA adapters on attention layers, a 7B model fits on a 16 GB card with batch size 1–2; a 24 GB card (RTX 4090, A10G) gives you bf16 and batch size 4+. Read the DeepSeek system requirements guide for full hardware notes.

What dataset size is enough for a useful fine-tune?

500 to 5,000 high-quality labelled examples is the sweet spot for parameter-efficient fine-tuning, with most teams seeing 10 to 30 percentage point gains in that range. Quality beats quantity once you pass a few hundred examples — a thousand clean, deduplicated, balanced examples will outperform ten thousand noisy ones. The DeepSeek prompt engineering guide covers data-shaping techniques.

Does fine-tuning DeepSeek R1 preserve its reasoning style?

Only if your dataset preserves it. R1 and its distills emit reasoning content before the answer; if your training examples drop that structure, the adapter will train it away. Either include reasoning in target outputs or use the official chat template that separates reasoning_content from content. See the DeepSeek R1 page for the model’s expected output shape.
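One way to keep the reasoning structure in training targets is to build each assistant message from a reasoning part and an answer part. The sketch below assumes the `<think>...</think>` wrapper that R1-family models emit in raw output; that delimiter is an assumption relative to this article, so confirm it against the chat template of the exact checkpoint you train. Both helper names are hypothetical.

```python
THINK_OPEN, THINK_CLOSE = "<think>", "</think>"  # assumed R1-style delimiters


def build_target(reasoning, answer):
    """Assistant target that keeps the reasoning block ahead of the final answer."""
    return f"{THINK_OPEN}\n{reasoning.strip()}\n{THINK_CLOSE}\n\n{answer.strip()}"


def split_target(text):
    """Inverse of build_target: returns (reasoning, answer)."""
    head, sep, tail = text.partition(THINK_CLOSE)
    if not sep:  # no reasoning block present
        return "", text.strip()
    return head.replace(THINK_OPEN, "", 1).strip(), tail.strip()


target = build_target("Customer mentions a double charge.", "billing")
print(split_target(target))  # ('Customer mentions a double charge.', 'billing')
```

The same splitter is handy at evaluation time, so exact-match scoring compares only the final answer and not the reasoning text.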

Should I fine-tune or use the V4 API with prompts?

Try prompts and retrieval first. V4-Flash’s input rates start at $0.0028 per million cached tokens as of April 2026, and the 1,000,000-token context window absorbs a lot of what fine-tuning used to be required for. Fine-tune when you have a stable, narrow task; thousands of examples; and a clear quality or latency gap that prompting cannot close. The DeepSeek API best practices guide covers when each approach pays off.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing, and the V4 input/output token rates used in the cost worked example. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.
