DeepSeek Coder V2: The Open-Source MoE Code Model Explained

If you’re picking an open-weight code model in 2026, where does DeepSeek Coder V2 actually fit — and is it still worth running now that V4 has shipped? This guide walks through the architecture, benchmark numbers from the original report, licensing, and the realistic ways to access the model today (locally or on Hugging Face — the original deepseek-coder hosted endpoint was retired in 2024 and is no longer mapped to a current V4 model). DeepSeek Coder V2 was the company’s June 2024 push to close the gap with GPT-4-Turbo on code generation, and the technical report’s headline numbers — 90.2% HumanEval, 76.2% MBPP+, 128K context — still anchor most “open vs closed” coding comparisons. By the end you’ll know exactly when Coder V2 is the right pick versus DeepSeek’s newer general-purpose models.

What is DeepSeek Coder V2?

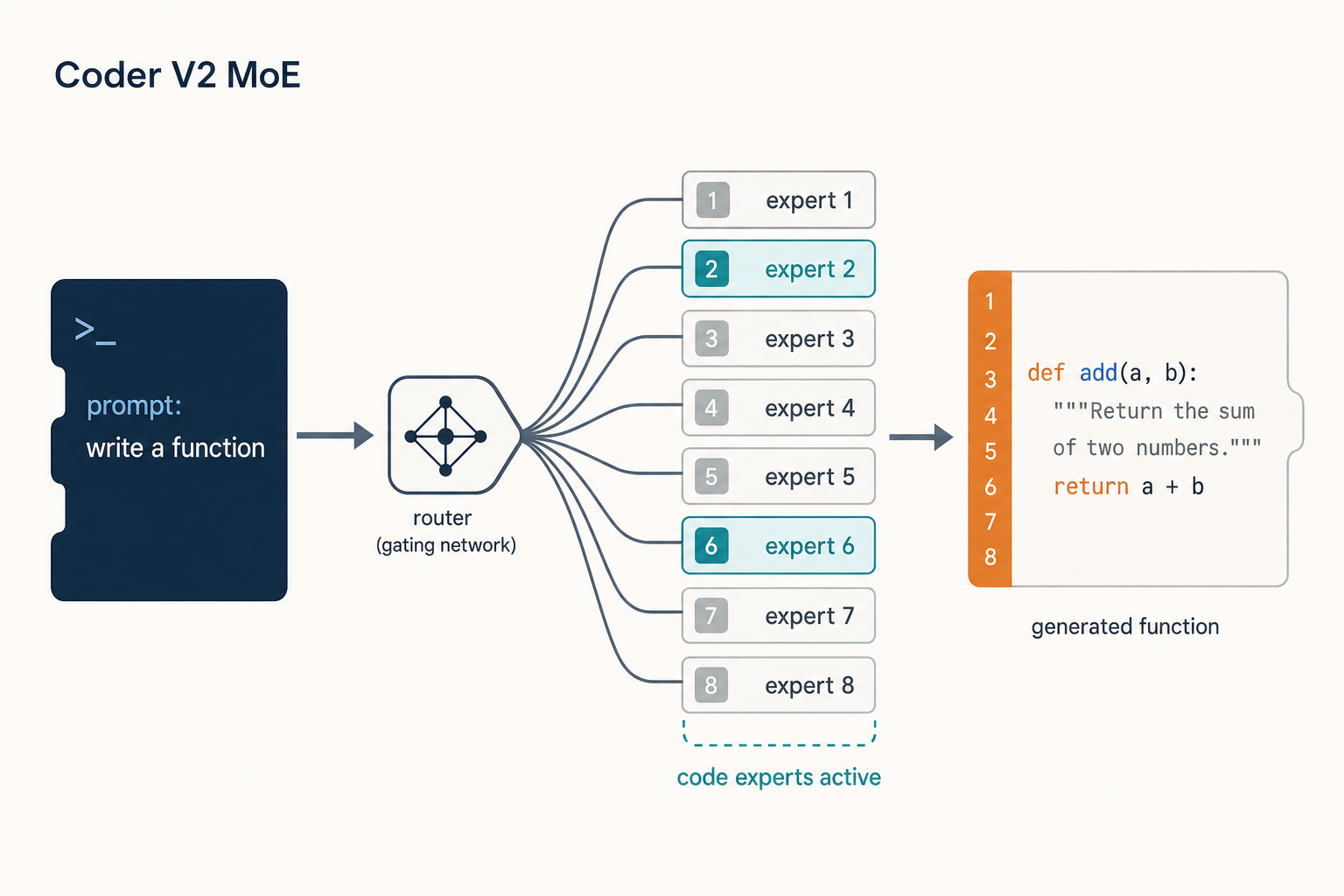

DeepSeek Coder V2 is an open-source Mixture-of-Experts (MoE) code language model released in June 2024. It is further pre-trained from an intermediate checkpoint of DeepSeek-V2 with an additional 6 trillion tokens, which substantially enhances coding and mathematical reasoning while maintaining comparable general-language performance. The point of the project was simple: produce an open-weight coder that could match GPT-4-Turbo on code-specific tasks without the closed-source price tag.

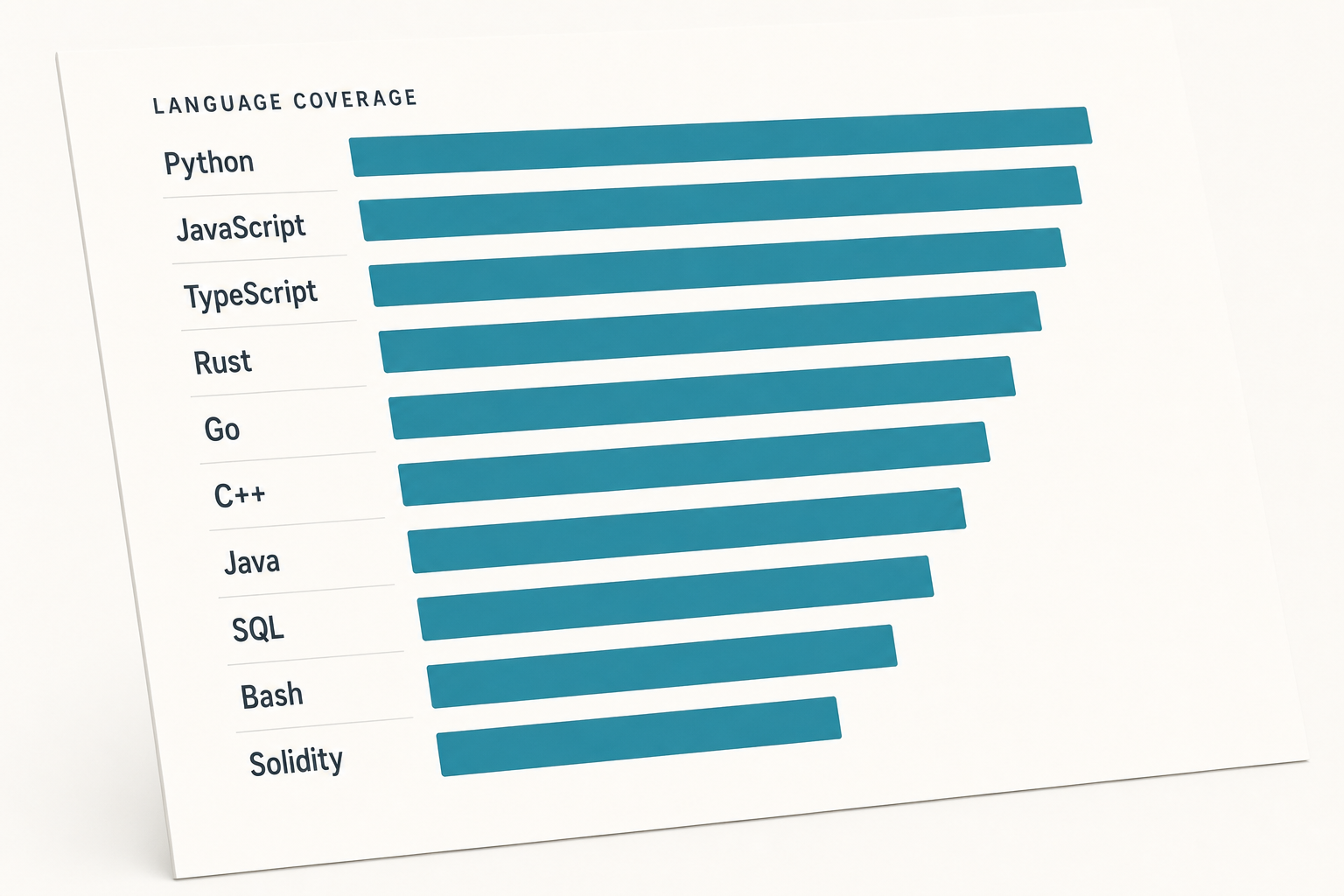

The release covers two sizes, a Lite 16B model and a full 236B model, each with Base and Instruct variants. Compared with the original DeepSeek Coder, it expanded programming-language support from 86 to 338 languages and extended the context length from 16K to 128K. If you want a wider picture of where this model sits in the lineage, the DeepSeek models hub is the index.

Architecture and lineage

DeepSeek Coder V2 is built on the DeepSeekMoE framework. It ships in two sizes, 16B and 236B total parameters, with only 2.4B and 21B active parameters respectively, and each size comes in Base and Instruct variants. That sparsity is the whole point of the architecture: the full 236B model activates only 21B parameters per token, so per-token compute scales with the active parameters rather than the total, although all 236B weights still have to be held in memory.
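To make the sparsity concrete, here is a rough back-of-the-envelope sketch using the parameter counts above. It assumes the common rule of thumb of about 2 FLOPs per parameter per token for a forward pass; that approximation is not a figure from DeepSeek's report, and the helper names are hypothetical.

```python
# Back-of-the-envelope view of MoE sparsity for the two Coder V2 sizes.
# Parameter counts are the ones quoted in this article. "2 FLOPs per parameter
# per token" is a common forward-pass approximation, not a DeepSeek figure.

MODELS = {
    "DeepSeek-Coder-V2-Lite": {"total": 16e9, "active": 2.4e9},
    "DeepSeek-Coder-V2": {"total": 236e9, "active": 21e9},
}


def active_fraction(total: float, active: float) -> float:
    """Share of the weights that take part in computing one token."""
    return active / total


def forward_gflops_per_token(params: float) -> float:
    """Approximate forward-pass compute: ~2 FLOPs per parameter used."""
    return 2 * params / 1e9


for name, p in MODELS.items():
    frac = active_fraction(p["total"], p["active"])
    moe = forward_gflops_per_token(p["active"])    # only routed experts run
    dense = forward_gflops_per_token(p["total"])   # hypothetical dense model of same size
    print(f"{name}: {frac:.1%} active, ~{moe:.0f} vs ~{dense:.0f} GFLOPs/token (MoE vs dense)")
```

For the full model this works out to roughly 42 versus 472 GFLOPs per token, about 9% of the weights doing work on any given token. Memory does not shrink the same way, which is why the hardware floor discussed below is still high.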

Coder V2 is not a from-scratch model: it continues pre-training from an intermediate DeepSeek-V2 checkpoint. The earlier DeepSeek Coder family (1.3B–33B dense models) was trained on 2T tokens with a 16K window; Coder V2 inherits the MoE architecture from DeepSeek-V2 and adds 6T tokens of code-focused pre-training on top.

| Spec | DeepSeek Coder V2-Lite | DeepSeek Coder V2 (full) |
|---|---|---|
| Total parameters | 16B | 236B |
| Active parameters | 2.4B | 21B |
| Architecture | MoE | MoE |
| Context length | 128K | 128K |
| Languages supported | 338 | 338 |
| Release | 2024-06-17 | 2024-06-17 |
| Variants | Base, Instruct | Base, Instruct |

Training recipe in one paragraph

Starting from an intermediate DeepSeek-V2 checkpoint, DeepSeek continued pre-training on an additional 6 trillion tokens, lifting coding and mathematical reasoning while keeping general-language performance comparable to DeepSeek-V2. The mix was heavily code-weighted with multi-language coverage, and the 16B Lite model was additionally trained with a fill-in-the-middle (FIM) objective (the 236B model used next-token prediction only). Both details matter if you plan to use it as an editor backend.
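Fill-in-the-middle lets an editor send the code before and after the cursor and ask the model for the missing span. The sketch below assembles such a prompt in prefix-hole-suffix order. The three sentinel strings are copied from the code-insertion example in DeepSeek's Coder README; treat them as an assumption and check them against the special tokens of the tokenizer you actually load. Raw FIM prompts are meant for a Base checkpoint such as DeepSeek-Coder-V2-Lite-Base rather than an Instruct model, and `build_fim_prompt` is a hypothetical helper.

```python
# Build a fill-in-the-middle prompt: prefix, hole marker, suffix.
# Sentinel strings taken from DeepSeek Coder's code-insertion example
# (full-width bars and U+2581); verify against your tokenizer's special tokens.
FIM_BEGIN = "<｜fim▁begin｜>"
FIM_HOLE = "<｜fim▁hole｜>"
FIM_END = "<｜fim▁end｜>"


def build_fim_prompt(prefix: str, suffix: str) -> str:
    """Ask the model to generate the code that belongs between prefix and suffix."""
    return f"{FIM_BEGIN}{prefix}{FIM_HOLE}{suffix}{FIM_END}"


# Code around the cursor in a hypothetical editor buffer.
before_cursor = "def mean(xs):\n    "
after_cursor = "\n    return total / len(xs)\n"

prompt = build_fim_prompt(before_cursor, after_cursor)
print(prompt)
```

The model's completion (for example a line computing `total`) is then spliced in at the cursor position; the editor, not the model, is responsible for reassembling prefix, completion and suffix.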

Benchmarks (from the V2 report)

All numbers below are from DeepSeek’s own technical report and GitHub README, comparing the 236B Instruct against the closed-source generation that was current in mid-2024 (GPT-4-Turbo, GPT-4o-0513, Claude-3-Opus, Gemini-1.5-Pro). Cite these as 2024 numbers — current frontier models have moved on.

| Benchmark | Coder V2-Instruct (236B) | Coder V2-Lite-Instruct (16B) | Best closed-source (mid-2024) |
|---|---|---|---|
| HumanEval | 90.2 | 81.1 | GPT-4o-0513: 91.0 |
| MBPP+ | 76.2 | 68.8 | GPT-4o-0513: 73.5 |
| LiveCodeBench (Dec 23–Jun 24) | 43.4 | 24.3 | GPT-4o-0513: 43.4 |
| USACO | 12.1 | 6.5 | GPT-4o-0513: 18.8 |
| SWE-Bench | 12.7 | not reported | GPT-4-Turbo-0409: 18.7 |

DeepSeek’s report records 90.2% on HumanEval, 76.2% on MBPP+ (a leading result under the EvalPlus pipeline at the time) and 43.4% on LiveCodeBench (questions from December 2023 to June 2024). Coder V2 was also the first open-source model to surpass 10% on SWE-Bench. See the official DeepSeek-Coder-V2 technical report for the full tables.

Strengths — where Coder V2 specifically wins

- Open-weight commercial use. Use of the Base and Instruct models is subject to the DeepSeek Model License, and the Coder V2 series supports commercial use.

- Two right-sized tiers. The 16B Lite (2.4B active) is realistic to self-host; the full 236B is for serious infrastructure or batch jobs.

- 338-language coverage with strong multilingual HumanEval results, including Java and PHP — useful if you work outside the Python monoculture.

- 128K context for repository-level prompts. The models support a 128K token context length, and Needle-In-A-Haystack tests show DeepSeek-Coder-V2 maintains performance across the entire 128K window, which matters for tasks involving large codebases or documentation.

- Native FIM (fill-in-the-middle) completion, trained into the 16B Lite model, which is relevant if you’re plumbing it into an IDE.

Weaknesses — where it falls short

- SWE-Bench is mediocre. Coder V2 scored 12.7 on SWE-Bench, behind GPT-4-Turbo-0409 (18.7) and Gemini-1.5-Pro (18.3); it still outperformed Claude-3-Opus (11.7), Llama-3-70B (2.7), and Codestral. Real-world repo-fix tasks remain a gap.

- It’s superseded for chat use. DeepSeek-V2.5 officially merged DeepSeek-V2-0628 and DeepSeek-Coder-V2-0724, retaining the Coder model’s code processing power while adding general conversational capabilities and human-preference alignment. If you want a single model for chat plus code, V2.5 — and now DeepSeek V4-Flash — supersede it.

- Hardware floor is high for the full model. Running DeepSeek-Coder-V2 in BF16 format for inference requires 80GB×8 GPUs. The Lite 16B is the realistic local option.

- No reasoning mode. Unlike DeepSeek R1 or V4 with thinking enabled, Coder V2 has no step-by-step reasoning toggle.
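The 80GB×8 figure follows from simple weights-only arithmetic. The sketch below assumes 2 bytes per parameter for BF16, 1 for 8-bit and an idealised 0.5 for 4-bit; real GGUF quantisations carry some overhead, and the KV cache and activations come on top, so read the results as lower bounds. `weight_gb` is a hypothetical helper.

```python
# Weights-only memory estimate for the two Coder V2 sizes.
# Ignores KV cache, activations and runtime overhead: treat results as lower bounds.
BYTES_PER_PARAM = {"bf16": 2.0, "8-bit": 1.0, "4-bit (idealised)": 0.5}
SIZES = {"Lite 16B": 16e9, "Full 236B": 236e9}


def weight_gb(params: float, precision: str) -> float:
    """Gigabytes (1e9 bytes) needed just to hold the weights."""
    return params * BYTES_PER_PARAM[precision] / 1e9


for name, params in SIZES.items():
    row = ", ".join(f"{prec}: ~{weight_gb(params, prec):.0f} GB" for prec in BYTES_PER_PARAM)
    print(f"{name} -> {row}")

# Eight 80 GB GPUs give 640 GB: room for ~472 GB of BF16 weights plus KV cache.
cluster_gb = 8 * 80
print("Full model BF16 fits on 8x80GB:", weight_gb(236e9, "bf16") < cluster_gb)
```

The same arithmetic explains why the Lite model is the local option: about 32 GB of BF16 weights, or roughly 8 GB at an idealised 4 bits, is within reach of a single workstation GPU or a well-equipped laptop.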

How to access DeepSeek Coder V2

Local / Hugging Face

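The four canonical Hugging Face repos are deepseek-ai/DeepSeek-Coder-V2-Base, DeepSeek-Coder-V2-Instruct, DeepSeek-Coder-V2-Lite-Base and DeepSeek-Coder-V2-Lite-Instruct. Minimal Python loading and generation pattern (Transformers), along the lines of the model card’s chat example:

```python
from transformers import AutoTokenizer, AutoModelForCausalLM
import torch

model_id = "deepseek-ai/DeepSeek-Coder-V2-Lite-Instruct"

# The repo ships custom modelling code, hence trust_remote_code=True
tok = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    model_id,
    trust_remote_code=True,
    torch_dtype=torch.bfloat16,
).cuda()

# Format the request with the model's chat template, then decode only the new tokens
messages = [{"role": "user", "content": "Write a quick sort function in Python."}]
inputs = tok.apply_chat_template(
    messages, add_generation_prompt=True, return_tensors="pt"
).to(model.device)
outputs = model.generate(inputs, max_new_tokens=512, do_sample=False,
                         eos_token_id=tok.eos_token_id)
print(tok.decode(outputs[0][len(inputs[0]):], skip_special_tokens=True))
```

For quantised local inference, the Lite-Instruct GGUF builds run cleanly in LM Studio or llama.cpp. If you’d rather not wrangle weights directly, the Ollama setup walkthrough covers a faster path.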

Hosted API (no current Coder V2 endpoint)

The original deepseek-coder API endpoint was deprecated in September 2024 when DeepSeek V2 Chat and DeepSeek Coder V2 were merged and upgraded into DeepSeek V2.5. The current API model IDs are deepseek-v4-pro and deepseek-v4-flash; the only legacy IDs DeepSeek still maps are deepseek-chat and deepseek-reasoner, which retire on 2026-07-24 at 15:59 UTC.

deepseek-coder is not among those legacy IDs and is no longer documented as a current model, so new code should target deepseek-v4-pro or deepseek-v4-flash directly. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com; an Anthropic-compatible surface is also exposed at the same base URL. The API is stateless: your client must resend the full message history on every call. The web chat at chat.deepseek.com keeps session history; the API does not.
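Because the server keeps no conversation state, the history has to live in your client. Here is a minimal sketch of that bookkeeping. `Conversation` and the injected `send` callable are hypothetical names; in real use `send` would wrap `client.chat.completions.create`, and here it is replaced by a stand-in so the logic runs without a network call.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps chat history on the client, because the API stores none.

    `send` takes the full message list and returns the assistant's reply text.
    In practice it would wrap client.chat.completions.create; injecting it keeps
    the history logic testable offline.
    """

    def __init__(self, send: Callable[[List[Message]], str], system: str = "") -> None:
        self.send = send
        self.messages: List[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        # Every call ships the entire history accumulated so far.
        reply = self.send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Stand-in for a real API call: reports how many messages it received.
def fake_send(messages: List[Message]) -> str:
    return f"seen {len(messages)} messages"


chat = Conversation(fake_send, system="You are a careful code reviewer.")
print(chat.ask("Review function A."))      # seen 2 messages
print(chat.ask("Now review function B."))  # seen 4 messages
```

A real `send` could be `lambda msgs: client.chat.completions.create(model="deepseek-v4-flash", messages=msgs).choices[0].message.content`. The trade-off is that input tokens grow with every turn, which is where cache-hit pricing on a stable prefix helps.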

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",  # replace with your DeepSeek API key
)

resp = client.chat.completions.create(
    # deepseek-coder is no longer a current API ID; hosted code work targets V4-Flash
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Refactor this Python function..."}],
    temperature=0.0,  # 0.0 for code generation per DeepSeek guidance
    max_tokens=2048,
)

print(resp.choices[0].message.content)
```

For the full parameter reference, see the DeepSeek API documentation. Parameters worth knowing: temperature, top_p, max_tokens, response_format (JSON mode), tool calling, streaming, FIM completion (Beta, requires thinking: {"type": "disabled"}) and Chat Prefix Completion (Beta). JSON mode is designed to return valid JSON but does not guarantee it: include the word “json” and a small example schema in the prompt, and set max_tokens high enough to avoid truncation.
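The JSON-mode advice above can be wrapped in two small helpers: one that builds the request (with “json” and an example schema in the prompt, plus a generous token budget) and one that refuses to trust a reply that is empty, truncated or unparseable. This is a sketch under assumptions: `build_json_request`, `parse_json_reply`, the example schema and the 4096-token budget are all hypothetical; `response_format={"type": "json_object"}` is the OpenAI-compatible JSON-mode switch.

```python
import json
from typing import Any, Dict, Optional

# Example shape shown to the model; the word "json" must appear in the prompt.
EXAMPLE_SCHEMA = '{"function_name": "parse_config", "issues": ["missing type hints"]}'


def build_json_request(task: str, model: str = "deepseek-v4-flash",
                       max_tokens: int = 4096) -> Dict[str, Any]:
    """Keyword arguments for client.chat.completions.create in JSON mode."""
    system = f"Reply with json only, shaped like this example: {EXAMPLE_SCHEMA}"
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": task},
        ],
        "response_format": {"type": "json_object"},
        # Generous budget so the object is not cut off mid-way (value is an assumption).
        "max_tokens": max_tokens,
    }


def parse_json_reply(content: Optional[str], finish_reason: str = "stop") -> Optional[Any]:
    """Parsed JSON, or None if the reply is empty, truncated or not valid JSON."""
    if finish_reason == "length" or not content or not content.strip():
        return None
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None
```

With the client from the example above: `resp = client.chat.completions.create(**build_json_request("Review: def f(x): return x"))`, then `data = parse_json_reply(resp.choices[0].message.content, resp.choices[0].finish_reason)`. A `None` result is the signal to retry or fall back rather than crash downstream.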

Pricing snapshot (as of April 2026)

Coder V2 has no hosted price list of its own; hosted code workloads now run on the V4 models, so the headline rates below (per 1M tokens) are for V4-Flash and V4-Pro:

| Tier | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro (75% promo through 2026-05-31) | $0.003625 (list $0.0145) | $0.435 (list $1.74) | $0.87 (list $3.48) |

Worked example on V4-Flash: 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message, and a 300-token reply.

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60
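The same arithmetic as a small function, so you can plug in your own call volume and token counts. The V4-Flash rates are hard-coded from the table above as an assumption (they change; re-check the official pricing page), and `Rates` and `workload_cost` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


# V4-Flash rates from the table above (as of April 2026); re-check before use.
V4_FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)


def workload_cost(calls: int, cached_in: int, uncached_in: int,
                  out: int, rates: Rates) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    million = 1_000_000
    cost = (
        calls * cached_in / million * rates.cache_hit
        + calls * uncached_in / million * rates.cache_miss
        + calls * out / million * rates.output
    )
    return round(cost, 2)


# 1M calls: 2,000 cached + 200 uncached input tokens and 300 output tokens each.
print(workload_cost(1_000_000, 2_000, 200, 300, V4_FLASH))  # 117.6
```

Output tokens dominate this workload ($84 of $117.60), so trimming reply length usually saves more than further shrinking an already-cached system prompt.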

Off-peak discounts ended on 2025-09-05 and have not returned. Always re-check the official pricing page before committing — see also the DeepSeek API pricing breakdown.

Best use cases

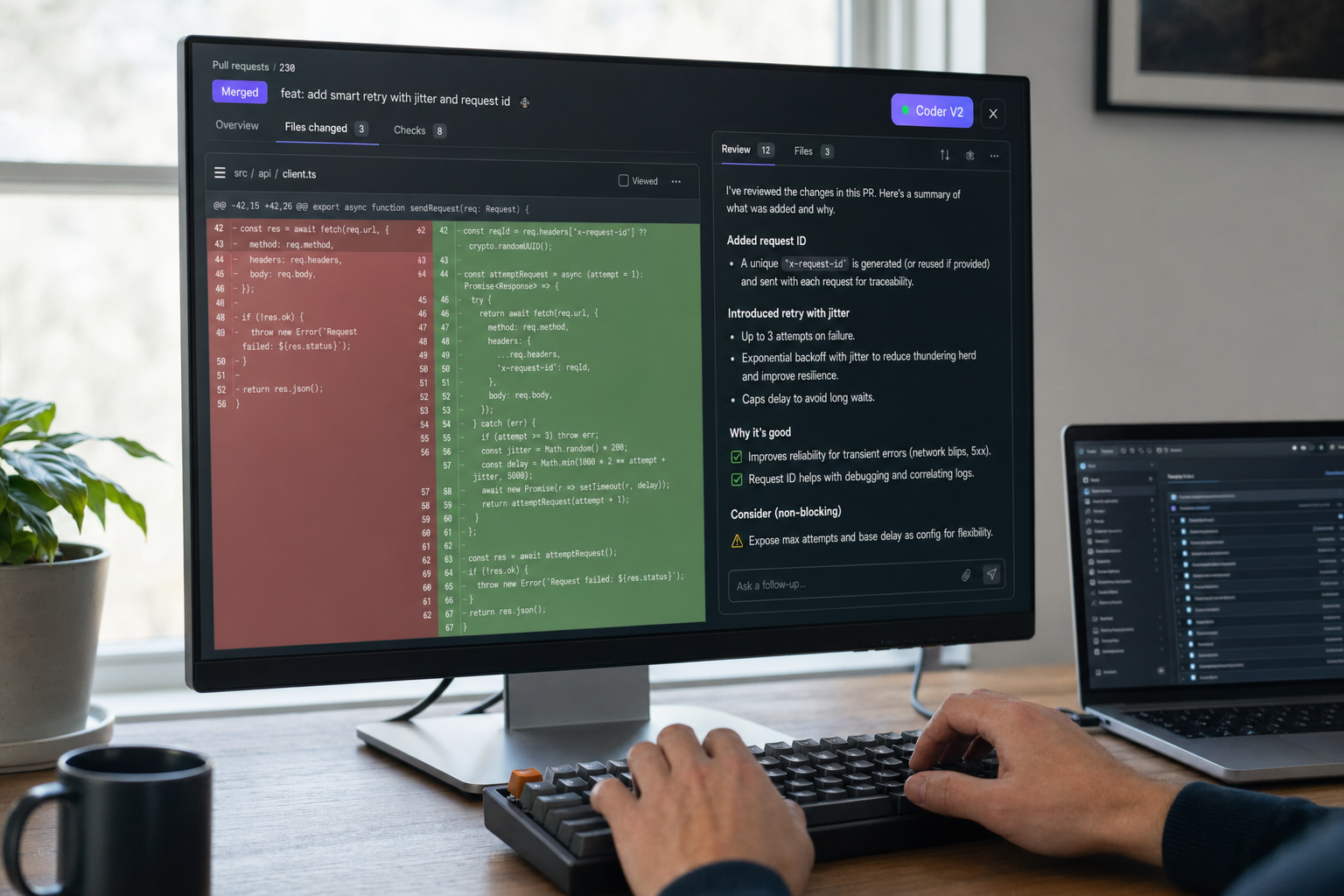

- IDE autocomplete and FIM completion — pair with the DeepSeek with VS Code setup.

- Bulk code refactors over 128K-token repository contexts.

- Multi-language code generation across the 338-language surface — see DeepSeek for coding for end-to-end workflows.

- On-prem or air-gapped deployments where weights matter — the Lite 16B is the realistic target.

- Workflows for individual contributors covered in DeepSeek for developers.

Comparable alternatives

If you’re weighing Coder V2 against closed-source coders, the natural comparisons are DeepSeek Coder versus GitHub Copilot for the IDE workflow, and broader chat-coder evaluations such as DeepSeek versus ChatGPT. For other open-weight options, the coding-focused DeepSeek alternatives roundup covers Codestral, Qwen-Coder and StarCoder2.

Verdict

DeepSeek Coder V2 is still the right answer when you need open weights with strong HumanEval/MBPP+ numbers, a 128K context and a license that permits commercial use, particularly the Lite 16B for self-hosting. For new hosted-API work, point at deepseek-v4-flash, the cheaper of the two V4 tiers, which ships thinking mode by default; the original deepseek-coder hosted endpoint was retired in 2024 and is not a current model ID.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek Coder V2 free to use commercially?

Yes, with one caveat about which license applies. The code repository is MIT-licensed, but use of the DeepSeek-Coder-V2 Base and Instruct models is governed by the separate DeepSeek Model License, which permits commercial use for the Coder V2 series. Read the model license on the Hugging Face repo before shipping. For a wider summary of permissions across the family see is DeepSeek open source.

What context length does DeepSeek Coder V2 support?

128,000 tokens. Compared with the original DeepSeek Coder, the model extended context length from 16K to 128K (and language support from 86 to 338). That is enough for most repository-level prompts, though it is roughly an eighth of V4’s 1M-token context window. If you need to estimate whether a particular codebase will fit, the DeepSeek token counter is a quick way to check before sending the request.
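For a rough pre-flight check in code, the sketch below sums a token estimate over a repository and compares it with the 128K window, keeping some room for the reply. The default counter uses a crude heuristic of about four characters per token, an assumption that varies by language and coding style; for a precise figure, pass the real tokenizer, for example `count=lambda s: len(tok.encode(s))` with `tok` loaded as in the Transformers example. The 4,000-token output reserve and all function names are hypothetical.

```python
from pathlib import Path
from typing import Callable, Tuple

CONTEXT_WINDOW = 128_000  # Coder V2 context length in tokens


def rough_token_count(text: str) -> int:
    """Heuristic: about 4 characters per token, rounded up. Not DeepSeek's tokenizer."""
    return (len(text) + 3) // 4


def repo_tokens(root: str, suffixes: Tuple[str, ...] = (".py",),
                count: Callable[[str], int] = rough_token_count) -> int:
    """Sum token estimates over files under `root` whose extension is in `suffixes`."""
    total = 0
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix in suffixes:
            total += count(path.read_text(encoding="utf-8", errors="ignore"))
    return total


def fits(prompt_tokens: int, reserve_for_output: int = 4_000,
         window: int = CONTEXT_WINDOW) -> bool:
    """True if the prompt plus an output budget stays inside the context window."""
    return prompt_tokens + reserve_for_output <= window
```

Usage: `n = repo_tokens("path/to/repo", suffixes=(".py", ".md"))`, then `fits(n)`. If it does not fit, the usual fallback is to send only the files relevant to the task rather than the whole tree.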

How does DeepSeek Coder V2 compare to GPT-4-Turbo on HumanEval?

Very close on the headline number. DeepSeek’s report records 90.2% on HumanEval, 76.2% on MBPP+ and 43.4% on LiveCodeBench (questions from December 2023 to June 2024). GPT-4-Turbo-1106 scored 87.8 on HumanEval in the same table. For more detail across coding tasks see the DeepSeek Coder review.

Can I run DeepSeek Coder V2 locally?

The 16B Lite model runs on consumer hardware with quantisation; the 236B model needs serious GPUs. Running it in BF16 format for inference requires 80GB×8 GPUs. Most developers want the Lite-Instruct GGUF builds running through llama.cpp or Ollama. The install DeepSeek locally guide walks through the steps end to end.

Is DeepSeek Coder V2 still the latest coding model from DeepSeek?

No. DeepSeek-V2.5 officially merged DeepSeek-V2-0628 and DeepSeek-Coder-V2-0724, retaining the Coder model’s code processing power while adding general capabilities and human-preference alignment. Since then DeepSeek shipped V3, V3.2 and now V4 — see the DeepSeek V4 overview for the current generation. Coder V2 remains a sound pick if you specifically want an open-weight code-specialist model with permissive commercial use.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Model card: DeepSeek-Coder-V2 model card hub. Canonical V2 Instruct weights and Model License. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

- Technical report: DeepSeek-Coder-V2 technical report on arXiv. Full benchmark tables and 338-language coverage figures. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.