DeepSeek Token Counter: How to Measure Prompt Size and API Cost

You are about to send a 30,000-word legal contract to `deepseek-v4-pro` and you need to know two things before pressing run: will it fit in the context window, and how much will the round trip cost? That is the job a DeepSeek token counter does — it converts raw text into the same units the API meters and bills on, so you can size prompts to the 1,000,000-token context, predict spend per call, and avoid surprise truncation. This guide walks through the official offline tokenizer, third-party web counters, the live `usage` block returned on every chat completion, and how to turn those numbers into a real dollar figure on V4-Flash and V4-Pro. By the end you will have a workflow you can paste into a script today.

What a DeepSeek token counter actually counts

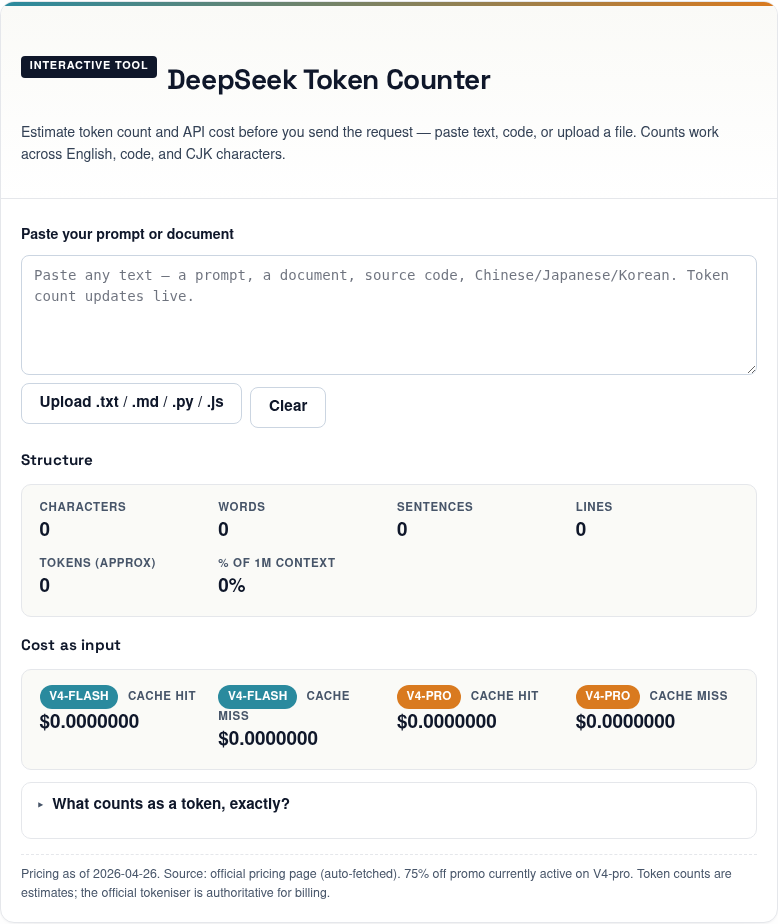


What counts as a token, exactly?

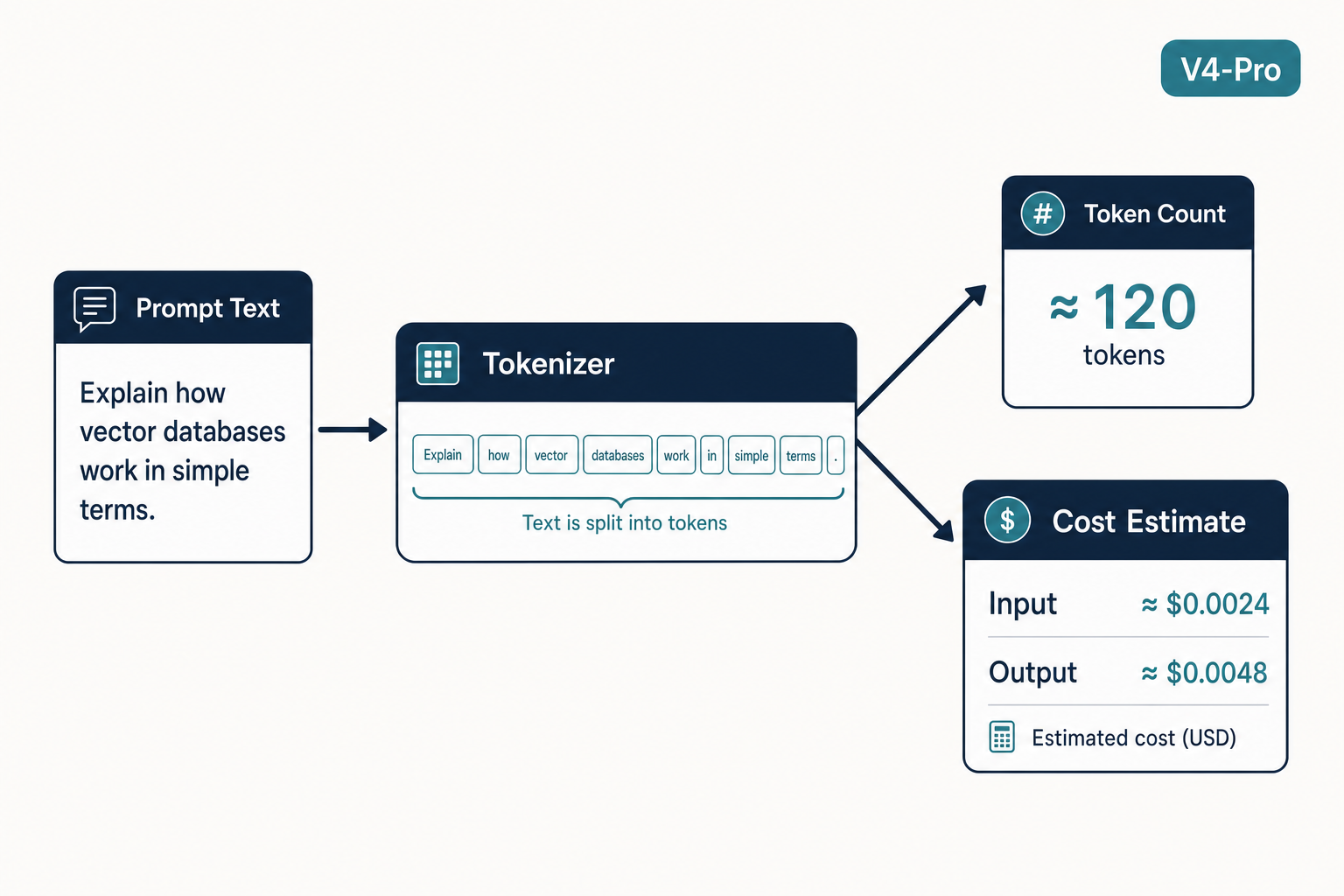

DeepSeek's BPE tokeniser splits text into subword units. For English prose, a token is on average about ¾ of a word; for code, a token is closer to 3.5 characters; and for Chinese, Japanese, or Korean text, each character counts as roughly one token. Combining all three heuristics gives a sensible estimate on mixed inputs.

Pricing as of 2026-04-29. Source: official pricing page (auto-fetched). Token counts are estimates; the official tokeniser is authoritative for billing.
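The three rules of thumb above can be sketched as a small estimator. This is a rough illustration only: `estimate_tokens` and `is_cjk` are hypothetical helper names, the Unicode ranges are a simplified assumption about what counts as CJK, and the result will differ from what the real tokenizer reports.

```python
import math

# Assumed ratios, taken from the rules of thumb above (not from the real tokenizer).
WORDS_PER_TOKEN_PROSE = 0.75   # one token is about 3/4 of an English word
CHARS_PER_TOKEN_CODE = 3.5     # code averages about 3.5 characters per token


def is_cjk(ch: str) -> bool:
    """Rough CJK test covering a few common blocks (a simplification)."""
    cp = ord(ch)
    return (
        0x4E00 <= cp <= 0x9FFF      # CJK Unified Ideographs
        or 0x3040 <= cp <= 0x30FF   # Hiragana and Katakana
        or 0xAC00 <= cp <= 0xD7AF   # Hangul syllables
    )


def estimate_tokens(text: str, is_code: bool = False) -> int:
    """Heuristic estimate: one token per CJK character, ratio-based for the rest."""
    cjk_chars = sum(1 for ch in text if is_cjk(ch))
    if is_code:
        # Count every non-CJK character, whitespace included.
        other = (len(text) - cjk_chars) / CHARS_PER_TOKEN_CODE
    else:
        # Swap CJK characters for spaces so they do not glue words together.
        rest = "".join(" " if is_cjk(ch) else ch for ch in text)
        other = len(rest.split()) / WORDS_PER_TOKEN_PROSE
    return cjk_chars + math.ceil(other)


print(estimate_tokens("Summarise the attached contract."))  # 4 words -> 6
```

Rounding up keeps the estimate on the conservative side, which is usually the safer direction for a pre-flight check.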

Tokens are the chunks of text the model sees, and they are also the unit DeepSeek bills on. DeepSeek's documentation describes them as the basic units models use to represent natural language text: they can be intuitively understood as ‘characters’ or ‘words’, and typically a Chinese word, an English word, a number, or a symbol is counted as a token. A token counter applies the model’s tokenizer to your text and returns an integer. The same string can produce different counts on different models because each tokenizer has a different vocabulary, so a counter calibrated for GPT or Claude is only an approximation for DeepSeek.

There are two reasons to count before you call:

- Fit checking. Both DeepSeek V4 tiers expose a 1,000,000-token context window, with output capped at 384,000 tokens. If your prompt plus expected reply exceeds that budget, the request fails or truncates.

- Cost forecasting. The V4-Flash output rate is $0.28 per million tokens; the V4-Pro output rate is $0.87 during the 75% promo through 2026-05-31 ($3.48 list). That is roughly a 3× gap at the promo rate and more than 12× at list, which makes guessing expensive.
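The fit check reduces to two comparisons. The sketch below assumes the limits quoted above (a 1,000,000-token context and a 384,000-token output cap); `fits` and `max_prompt_tokens` are hypothetical helper names, and the token counts passed in are whatever your counter produced.

```python
CONTEXT_WINDOW = 1_000_000   # tokens, per the V4 figures quoted above
MAX_OUTPUT = 384_000         # output cap, per the same figures


def fits(prompt_tokens: int, expected_output_tokens: int) -> bool:
    """True if the prompt plus the expected reply stays inside both limits."""
    if expected_output_tokens > MAX_OUTPUT:
        return False
    return prompt_tokens + expected_output_tokens <= CONTEXT_WINDOW


def max_prompt_tokens(expected_output_tokens: int) -> int:
    """Largest prompt that still leaves room for the expected reply."""
    reserved = min(expected_output_tokens, MAX_OUTPUT)
    return CONTEXT_WINDOW - reserved


# A 30,000-word contract at about 3/4 of a word per token is roughly 40,000 tokens.
print(fits(40_000, 2_000))
print(max_prompt_tokens(384_000))
```

Reserving the output budget first and treating the remainder as the prompt allowance tends to be easier to reason about than checking the sum after the fact.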

DeepSeek itself is candid that estimates are estimates. Due to the different tokenization methods used by different models, the conversion ratios can vary, and the actual number of tokens processed each time is based on the model’s return, which you can view from the usage results. In other words: estimate before sending, reconcile after.

The four ways to count DeepSeek tokens

The right tool depends on whether you are sketching a prompt, scripting a batch job, or auditing a finished bill.

| Method | Best for | Accuracy | Setup time |
|---|---|---|---|
| Official offline tokenizer (Python) | Pre-flight checks in code, batch sizing | Exact for the matching model version | ~5 min |
| Hugging Face `AutoTokenizer` | V4-Pro / V4-Flash specifically | Exact (uses the released vocab) | ~5 min |
| Third-party web counters | Quick paste-and-check on a single prompt | Approximate; vocab may lag | 0 (open in browser) |
| API `usage` response field | Authoritative billing reconciliation | Authoritative; this is what you pay for | One real API call |

1. The official offline tokenizer

DeepSeek publishes a downloadable tokenizer demo as a zip package; running its code offline calculates the token usage for your input and output. Use it in CI or a pre-commit hook to flag prompts that drift past a threshold. For V4 specifically, the cleanest path is the Hugging Face vocabulary that ships with the weights.

The following Python snippet uses the V4-Pro tokenizer to count a chat-style message list, mirroring what the model will see on the wire:

    from transformers import AutoTokenizer

    # encode_messages comes from the encoding scripts shipped with the model repo
    from encoding_dsv4 import encode_messages

    tokenizer = AutoTokenizer.from_pretrained("deepseek-ai/DeepSeek-V4-Pro")

    messages = [
        {"role": "system", "content": "You are a careful legal assistant."},
        {"role": "user", "content": "Summarise the attached contract."},
    ]

    # Render the chat template first so its overhead is included in the count
    prompt = encode_messages(messages, thinking_mode="non-thinking")
    n_tokens = len(tokenizer.encode(prompt))
    print(n_tokens)

This pattern comes straight from the model card. DeepSeek provides a dedicated encoding folder with Python scripts and test cases demonstrating how to encode messages in OpenAI-compatible format into input strings for the model, and how to parse the model’s text output. The same approach works for deepseek-ai/DeepSeek-V4-Flash; just swap the model ID. If you need a step-by-step walkthrough of the surrounding API plumbing, the DeepSeek Python integration tutorial covers project layout, environment variables, and error handling.

2. Web-based DeepSeek token counters

Several browser tools offer paste-and-count workflows for casual estimation. They are convenient for one-off prompt drafting, but they have two caveats: vocabularies for V4 (released April 24, 2026) may not yet be live in every third-party tool, and most counters do not apply the V4 chat template, so multi-turn conversations under-count by a small fixed overhead. Treat the result as a floor, not a ceiling.

3. The authoritative number — the API usage field

Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint at https://api.deepseek.com. Every response includes a `usage` block that is the source of truth for billing. Per the DeepSeek API reference, the `usage` object currently defines six fields:

- `prompt_tokens`: total input tokens. It equals `prompt_cache_hit_tokens` + `prompt_cache_miss_tokens`.

- `prompt_cache_hit_tokens`: input tokens that reused a cached prefix (cheap rate).

- `prompt_cache_miss_tokens`: input tokens that did not (full rate).

- `completion_tokens`: output tokens generated by the model.

- `completion_tokens_details.reasoning_tokens`: when thinking mode is on, the slice of completion tokens spent on the reasoning trace. The API returns `reasoning_content` alongside the final `content`, and these tokens are billed at the standard output rate.

- `total_tokens`: sum of prompt and completion.

The practical rule: estimate before sending, but account after the response arrives. Log the full breakdown — not just total_tokens — or you will lose the cache-hit signal that explains your bill.
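The relationships between these fields can be checked mechanically on every logged response. The sketch below works on a plain dict with the field names listed above; `summarize_usage` is a hypothetical helper and the sample numbers are invented for illustration, not taken from a real call.

```python
def summarize_usage(usage: dict) -> dict:
    """Check the documented sums and report the cache-hit ratio for one response."""
    hit = usage["prompt_cache_hit_tokens"]
    miss = usage["prompt_cache_miss_tokens"]
    prompt = usage["prompt_tokens"]
    completion = usage["completion_tokens"]
    total = usage["total_tokens"]

    problems = []
    if prompt != hit + miss:
        problems.append("prompt_tokens != cache hit + cache miss")
    if total != prompt + completion:
        problems.append("total_tokens != prompt_tokens + completion_tokens")

    # Share of the input that was billed at the cheaper cache-hit rate.
    hit_ratio = hit / prompt if prompt else 0.0
    return {"cache_hit_ratio": hit_ratio, "problems": problems}


# Illustrative values only: 2,000 cached + 200 uncached input, 300 output.
sample = {
    "prompt_tokens": 2200,
    "prompt_cache_hit_tokens": 2000,
    "prompt_cache_miss_tokens": 200,
    "completion_tokens": 300,
    "total_tokens": 2500,
}
print(summarize_usage(sample))
```

A falling `cache_hit_ratio` across otherwise similar calls is often the first sign that a prompt prefix has changed and caching has stopped landing.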

4. Python: parse usage on every call

A minimal logging helper using the OpenAI SDK against DeepSeek’s base URL:

    from openai import OpenAI

    client = OpenAI(api_key="...", base_url="https://api.deepseek.com")

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[{"role": "user", "content": "Hello"}],
    )

    # Log the full breakdown, including the cache split, not just the total
    u = resp.usage
    print({
        "prompt_tokens": u.prompt_tokens,
        "cache_hit": u.prompt_cache_hit_tokens,
        "cache_miss": u.prompt_cache_miss_tokens,
        "completion_tokens": u.completion_tokens,
        "total": u.total_tokens,
    })

For the wire-level shape of every field, see the DeepSeek API documentation. If you are calling from JavaScript, the DeepSeek Node.js integration guide shows the same pattern with the OpenAI Node SDK.

From token count to dollar cost

Once you have prompt_cache_hit_tokens, prompt_cache_miss_tokens and completion_tokens, the math is mechanical — but you must apply the right rate card. As of April 2026, V4-Flash and V4-Pro have very different rates:

| Model | Input cache hit ($/M) | Input cache miss ($/M) | Output ($/M) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

Confirm the rates on the official DeepSeek pricing page before you commit a budget — Preview-window pricing can change.

Worked example A — V4-Flash, 1M calls a day

Assume a 2,000-token system prompt cached across calls, a 200-token user message (uncached on each call), and a 300-token reply. For one million calls:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Worked example B — V4-Pro, same workload

- Cached input: 2,000,000,000 × $0.0145/M = $29.00

- Uncached input: 200,000,000 × $1.74/M (list) = $348.00

- Output: 300,000,000 × $3.48/M (list) = $1,044.00

- Total: $1,421.00 at list (currently $355.25 during the 75% V4-Pro promo through 2026-05-31)
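Both worked examples can be reproduced with a short function. This is a sketch under the rates quoted in this article: the `RATES` table and `cost_usd` are hypothetical names, the V4-Pro entry uses list prices, and the promo is modelled as a flat 75% discount across all three buckets, which matches the promo figures in the rate card.

```python
from decimal import Decimal

# USD per million tokens, copied from the rate card in this article.
RATES = {
    "deepseek-v4-flash": {"hit": Decimal("0.0028"), "miss": Decimal("0.14"), "out": Decimal("0.28")},
    "deepseek-v4-pro": {"hit": Decimal("0.0145"), "miss": Decimal("1.74"), "out": Decimal("3.48")},  # list
}
MILLION = Decimal(1_000_000)


def cost_usd(model: str, hit_tokens: int, miss_tokens: int, output_tokens: int,
             discount: Decimal = Decimal("0")) -> Decimal:
    """Multiply each token bucket by its rate, sum, then apply an optional discount."""
    r = RATES[model]
    gross = (hit_tokens * r["hit"] + miss_tokens * r["miss"] + output_tokens * r["out"]) / MILLION
    return gross * (1 - discount)


calls = 1_000_000
buckets = (2_000 * calls, 200 * calls, 300 * calls)  # cached input, uncached input, output

flash = cost_usd("deepseek-v4-flash", *buckets)
pro_list = cost_usd("deepseek-v4-pro", *buckets)
pro_promo = cost_usd("deepseek-v4-pro", *buckets, discount=Decimal("0.75"))
print(flash, pro_list, pro_promo)
```

`Decimal` is used instead of floats so that the totals match the hand-calculated figures exactly rather than to within rounding error.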

Two notes that catch most teams out. First, do not skip the uncached-input line: even when a system prompt is cached, each new user message is billed as a cache miss, because only text the cache has already seen as part of a prefix can hit. Second, do not mix tier rates in one calculation; pick V4-Flash or V4-Pro and stay there. If you want the math automated, the DeepSeek pricing calculator walks through both tiers with editable inputs, and the DeepSeek cost estimator handles monthly projections.

Counting tokens for thinking mode

Thinking mode is a request parameter on either V4 model, not a separate model ID. Set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or reasoning_effort="max" for max-effort reasoning. The model then emits a reasoning trace before the answer; the API returns reasoning_content alongside the final content, and the reasoning tokens are billed at the standard output rate.

Two practical implications for token counting:

- Reserve more output budget. Reasoning traces routinely run thousands of tokens. For thinking-max workloads, set `max_tokens` to the full 384K output cap and ensure your context window is sized accordingly.

- Watch `completion_tokens_details.reasoning_tokens`. A high ratio of reasoning to final content is normal for hard problems; a high ratio for trivial queries means you should drop back to non-thinking mode.
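The ratio in the second point is easy to compute from the `usage` block, since reasoning tokens are a slice of completion tokens. In this sketch `reasoning_share` and `looks_overthought` are hypothetical helpers, and the 0.8 threshold is an arbitrary starting point to tune per workload, not a recommended value.

```python
def reasoning_share(completion_tokens: int, reasoning_tokens: int) -> float:
    """Fraction of the completion spent on the reasoning trace (0.0 to 1.0)."""
    if completion_tokens <= 0:
        return 0.0
    return reasoning_tokens / completion_tokens


def looks_overthought(completion_tokens: int, reasoning_tokens: int,
                      threshold: float = 0.8) -> bool:
    """Flag responses where reasoning dominates the output; threshold is an assumption."""
    return reasoning_share(completion_tokens, reasoning_tokens) > threshold


# Illustrative numbers: 900 of 1,000 completion tokens went to reasoning.
print(reasoning_share(1000, 900))
print(looks_overthought(1000, 900))
```

Running the flag only over queries you already consider trivial gives a cleaner signal than applying it to every request, because hard problems are expected to score high.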

Legacy IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash, but they retire on 2026-07-24 at 15:59 UTC; migration is a one-line model= swap, no base_url change. The DeepSeek context caching guide explains how prefix reuse interacts with token counting in production.

Common counting mistakes

- Using a GPT tokenizer for DeepSeek. The vocabularies differ; expect 5–15% drift, more for non-English text.

- Forgetting chat template overhead. Roles, special tokens, and message separators all consume tokens. Counting raw message strings under-counts.

- Ignoring the cache split. If you log only `total_tokens`, you cannot tell whether your bill is high because of long prompts or because caching is not landing.

- Mixing model rates. Costing a V4-Pro workload at V4-Flash rates understates output cost by more than 12× at list prices (about 3× during the promo).

- Skipping `max_tokens` with JSON mode. JSON mode is designed to return valid JSON but does not guarantee it; truncated output is invalid output.

For more on the surrounding plumbing — auth, rate limits, JSON mode caveats — see the DeepSeek tools and utilities hub and the DeepSeek API best practices guide.

A practical workflow

- Draft the prompt in any text editor.

- Run it through the offline tokenizer (Hugging Face `AutoTokenizer` with the V4-Pro or V4-Flash repo) to estimate prompt size.

- Project cost using the rate card for your chosen tier and an expected output size.

- Send a single real call; capture the full `usage` block.

- Reconcile estimate vs actual; tune your forecasting multiplier.

Do that once per prompt template and you will not be surprised by a monthly bill again.
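The reconcile step can be sketched as a ratio of actual to estimated prompt tokens, pooled over a few calls. `forecast_multiplier` and `apply_multiplier` are hypothetical helpers, and the sample pairs are invented numbers rather than measurements.

```python
import math


def forecast_multiplier(pairs: list[tuple[int, int]]) -> float:
    """pairs holds (estimated_tokens, actual_prompt_tokens); returns actual / estimated."""
    estimated = sum(e for e, _ in pairs)
    actual = sum(a for _, a in pairs)
    if estimated == 0:
        raise ValueError("need at least one non-zero estimate")
    return actual / estimated


def apply_multiplier(estimate: int, multiplier: float) -> int:
    """Scale a fresh estimate by the learned multiplier, rounding up."""
    return math.ceil(estimate * multiplier)


# Illustrative history: the estimator ran 25% low on both calls.
history = [(1000, 1250), (2000, 2500)]
m = forecast_multiplier(history)
print(m, apply_multiplier(400, m))
```

Pooling the sums, rather than averaging per-call ratios, weights long prompts more heavily, which suits cost forecasting because long prompts dominate the bill.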

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How do I count tokens for DeepSeek before sending a prompt?

Use the official offline tokenizer or load the V4 vocabulary with Hugging Face AutoTokenizer from deepseek-ai/DeepSeek-V4-Pro or DeepSeek-V4-Flash. Encode your message list with the V4 chat template, then call tokenizer.encode() and take the length. For a one-off paste-and-check, web counters are fine but treat the number as approximate — the authoritative count comes back in the API usage block. The DeepSeek API documentation covers the full response shape.

What does the usage block return on a DeepSeek API call?

Every POST /chat/completions response includes prompt_tokens, prompt_cache_hit_tokens, prompt_cache_miss_tokens, completion_tokens, total_tokens, and (in thinking mode) completion_tokens_details.reasoning_tokens. Log the full breakdown, not just the headline total — without the cache split you cannot diagnose why a bill grew. The DeepSeek context caching guide explains how prefix reuse drives the hit/miss ratio.

Is one DeepSeek token the same as one word?

No. A token is roughly a word, sub-word, number, or punctuation mark, and the ratio shifts by language and content. English prose runs about four characters per token on average; code and Chinese text tokenize differently. The only way to know exactly is to run the V4 tokenizer or read the usage field after a real call. The DeepSeek token limits guide expands on context length specifics.

Does the DeepSeek token counter work for V4-Pro and V4-Flash?

Yes — both V4 tiers share the same family tokenizer and a 1,000,000-token context window with up to 384,000 output tokens. Load deepseek-ai/DeepSeek-V4-Pro or deepseek-ai/DeepSeek-V4-Flash with Hugging Face AutoTokenizer, encode through the V4 chat template, and you get an exact count. Pricing differs sharply between tiers, so always tag your counts with the model. See DeepSeek V4 for the full architecture overview.

How do I turn token counts into a dollar estimate?

Apply the rate card for your tier. For V4-Flash that is $0.0028 cache-hit, $0.14 cache-miss, and $0.28 output per million tokens; for V4-Pro it is currently $0.003625 / $0.435 / $0.87 during the 75% promo through 2026-05-31 (list $0.0145 / $1.74 / $3.48). Multiply each token bucket by its rate and sum. Do not skip the uncached input bucket even when a system prompt is cached. The DeepSeek pricing calculator automates the math for both tiers.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Official DeepSeek pricing page. Token-counter cost-forecast V4-Flash and V4-Pro rates. Last checked: April 30, 2026

- Model card: DeepSeek V4-Pro tokenizer on Hugging Face. Official offline tokenizer used in pre-flight token counts. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.