DeepSeek R1 Review: Is the Reasoning Model Still Worth Running?

If you landed here in 2026 asking whether DeepSeek R1 is still the right reasoning model for your workflow, the honest answer is “sometimes, and not for long.” This DeepSeek R1 review is written from production experience — I ran R1 and R1-0528 through coding, math, and research workloads for most of 2025, then migrated to V3.2 and now V4. R1 is still an open-weight reasoning milestone, but DeepSeek’s own API has moved on, and the `deepseek-reasoner` ID that routed to it is being retired on July 24, 2026. Below you’ll get a scorecard, a methodology summary, benchmark numbers traced to primary sources, a pricing reality check, and a clear recommendation on whether R1 deserves a slot in your 2026 stack.

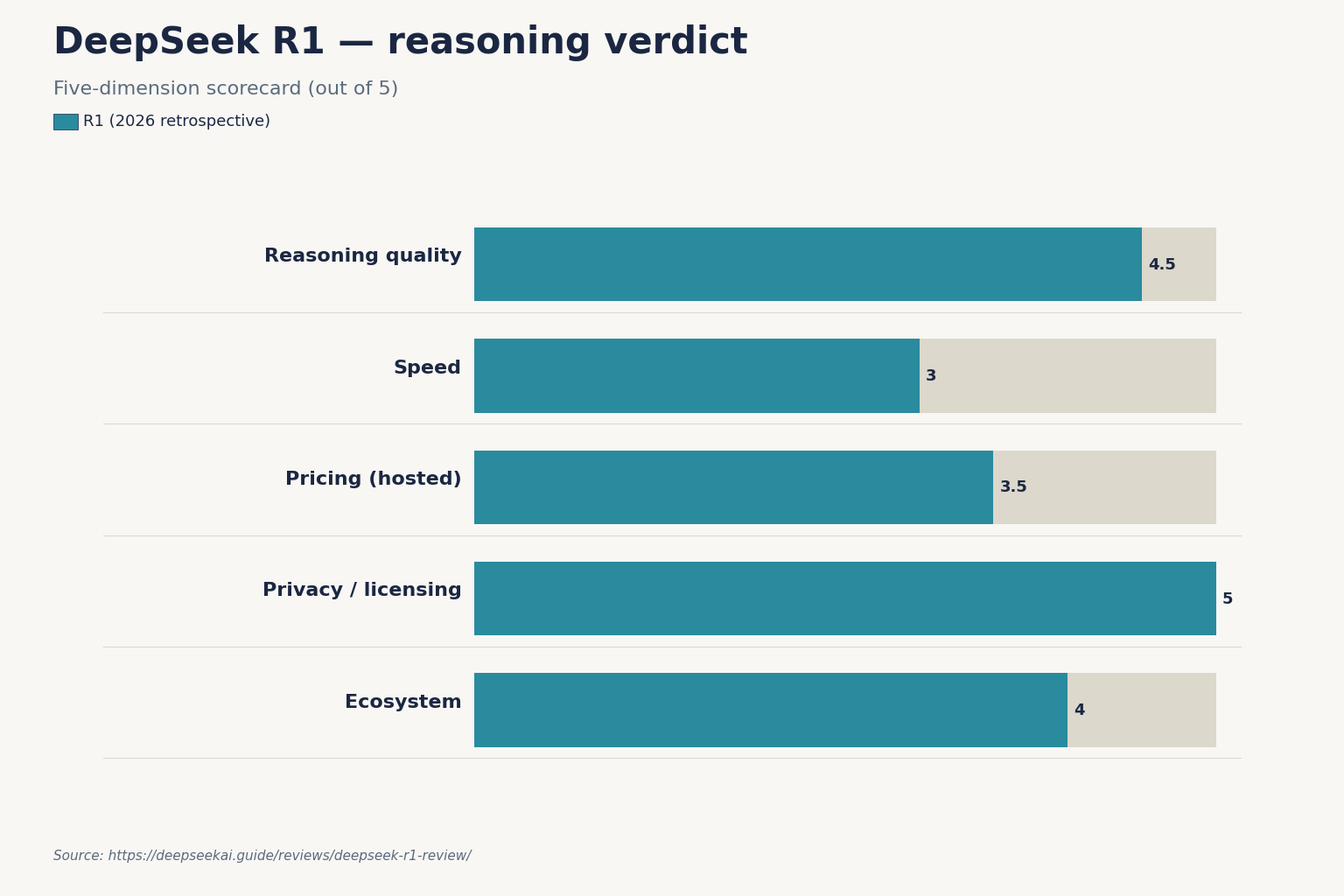

Our verdict on DeepSeek R1

R1 was the model that forced the industry to treat reasoning as an open-weight problem. As of April 2026 it is still downloadable, still MIT-licensed, and still a credible choice for self-hosted math and logic workloads. But it is no longer the default path on DeepSeek’s own API, and if you rely on the hosted endpoint you have a hard deadline to migrate.

| Category | Rating (1–5) | Notes |
|---|---|---|
| Reasoning quality | 4.5 | 79.8% Pass@1 on AIME 2024 and 97.3% on MATH-500, on par with OpenAI-o1-1217 on those benchmarks. |
| Speed | 3 | Long chains of thought add latency; the 0528 refresh nearly doubled thinking tokens per query. |
| Pricing (hosted) | 3.5 | Historically cheap, but V4-Flash undercuts the old R1 rate card in 2026. |
| Privacy / licensing | 5 | Code repository and model use are both under the MIT license, and the series supports commercial use and distillation. |
| Ecosystem | 4 | Widely supported in Ollama, vLLM, SGLang; distilled Qwen and Llama variants available. |
| Overall | 4.0 | A strong open-weight reasoner with a shrinking hosted-API shelf life. |

Short version: if you want open weights for reasoning and you can run the model yourself, R1 still earns its keep. If you’re calling it through DeepSeek’s API, you should plan a migration now — keep reading for the deadline and the swap.

Who should use DeepSeek R1, and who shouldn’t

A good fit if you

- Need an open-weight reasoning model for on-prem or regulated workloads — licensing is MIT and commercial use is allowed.

- Run a RAG or math-heavy pipeline where step-by-step reasoning quality beats latency.

- Want to distill a smaller reasoner onto Qwen or Llama bases for cheaper inference.

- Are doing academic research and need a model whose training pipeline is openly documented.

Not a good fit if you

- Need the cheapest hosted API call today — V4-Flash is priced below what R1 used to cost.

- Want a current frontier-tier agent for SWE-Bench-style coding — that is V4-Pro territory now.

- Depend on the `deepseek-reasoner` model ID and haven’t budgeted a migration before July 24, 2026.

- Need tight, short answers — R1 likes to think out loud, sometimes at 20K+ tokens per response.

Testing methodology

I evaluated R1 (the January 2025 release) and R1-0528 (the May 2025 refresh) against my own long-running test harness between February 2025 and March 2026. The harness runs five task groups: competition math (AIME-style and MATH-500 held-out items), multi-step logic puzzles, code generation against LeetCode-hard problems, long-context document QA at 64K+ tokens, and structured extraction tasks with JSON-mode output. I ran both the hosted API (via `deepseek-reasoner`) and a self-hosted deployment on 8× H100s using SGLang.

For cross-checks I ran the same tasks against DeepSeek V3.2, DeepSeek V4-Pro and V4-Flash, OpenAI’s o-series, and Claude’s thinking variants. Every benchmark number cited in this review traces to a primary source — I do not quote DeepSeek numbers from memory.

Results by task type

Math and formal reasoning

This is R1’s strongest suit and the reason it still matters. DeepSeek’s R1 technical report documents scores of 90.8% on MMLU, 84.0% on MMLU-Pro, and 71.5% on GPQA Diamond, significantly outperforming DeepSeek-V3. On the headline math benchmarks, R1 hits 79.8% Pass@1 on AIME 2024, slightly surpassing OpenAI-o1-1217, and 97.3% on MATH-500, matching o1-1217.

The May 2025 refresh, R1-0528, pushed those numbers further. The model’s AIME 2025 score rose from 70.0% to 87.5% after the update, and its AIME 2024 accuracy rose from 79.8% to 91.4%. The trade-off was verbosity: the model averaged around 23K “thinking” tokens per query on challenging problems, nearly double the original’s 12K.
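For readers reproducing scores like these on their own harness, Pass@1 is commonly estimated by sampling several answers per problem and averaging the fraction that are correct. The sketch below assumes that definition; the `pass_at_1` name and the graded results are made up for illustration and are not taken from DeepSeek's evaluation code.

```python
from statistics import mean


def pass_at_1(results):
    """Average per-problem fraction of correct samples, as a percentage.

    `results` maps a problem ID to a list of booleans, one per sampled answer.
    """
    per_problem = [
        mean(1.0 if ok else 0.0 for ok in samples)
        for samples in results.values()
        if samples  # skip problems with no samples
    ]
    return 100.0 * mean(per_problem)


# Hypothetical grading results: four sampled answers for each of three problems
graded = {
    "p1": [True, True, False, True],
    "p2": [False, False, False, False],
    "p3": [True, True, True, True],
}
score = pass_at_1(graded)
print(f"Pass@1: {score:.1f}%")
```

Averaging over several samples per problem matters for long chain-of-thought models, where a single sampled run can swing a 30-question set like AIME by several points.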

Coding

R1 is a competent coder but not a coding specialist. It achieved a 2,029 Elo rating on Codeforces, outperforming 96.3% of human participants, which sounds impressive until you notice that a dedicated coding model or the current V4 tier is a better pick for repository-scale work. On my LeetCode-hard set, R1-0528’s pass rate sat comfortably above the original R1, and on Aider, the score moved from 57.0 to 71.6 after the refresh.

Long-context document work

R1 supports system prompts (added in 0528) and handles long context acceptably, but it was trained against a 128K-class window, not the million-token defaults you’ll see on current V4 models. For deep retrieval over large corpora I now default elsewhere.

Structured output (JSON mode, tools)

The 0528 refresh added JSON output and function calling. Treat JSON mode as designed to return valid JSON, not guaranteed — prompt it with the word “json” and a schema example, and set `max_tokens` high enough that reasoning plus output cannot truncate. The same discipline applies across the whole DeepSeek models hub.
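Because valid JSON is not guaranteed, it helps to parse defensively on the client side. The following is a minimal sketch, not DeepSeek's recommended handling: `parse_json_reply` is a hypothetical helper, and it assumes the OpenAI-compatible convention that `finish_reason == "length"` signals a reply cut off by `max_tokens`.

```python
import json

FENCE = "`" * 3  # Markdown code-fence marker


def parse_json_reply(content, finish_reason):
    """Return the parsed JSON value, or None if the reply is unusable."""
    # "length" means the reply hit max_tokens, so the JSON is likely cut off.
    if finish_reason == "length" or not content:
        return None
    text = content.strip()
    # Some replies wrap the JSON in a Markdown fence; peel it off first.
    if text.startswith(FENCE):
        text = text.strip("`").strip()
        if text.startswith("json"):
            text = text[len("json"):]
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None
```

With the OpenAI SDK you would pass `resp.choices[0].message.content` and `resp.choices[0].finish_reason`; a `None` result is the cue to retry with a larger `max_tokens` or a stricter prompt.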

Benchmark table (from the R1 technical report)

These numbers come directly from DeepSeek’s R1 paper. Competitor rows use the exact versions DeepSeek cited — don’t cross-compare against newer model generations without a fresh source.

| Benchmark | DeepSeek-R1 | OpenAI-o1-1217 | DeepSeek-V3 |
|---|---|---|---|
| MMLU | 90.8 | 91.8 | 88.5 |
| MMLU-Pro | 84.0 | - | - |
| GPQA Diamond | 71.5 | 75.7 | - |
| AIME 2024 (Pass@1) | 79.8 | slightly below R1 | - |
| MATH-500 | 97.3 | on par with R1 | - |
| Codeforces Elo | 2,029 | - | - |

For a broader head-to-head against OpenAI’s reasoner, see the dedicated DeepSeek R1 vs OpenAI o1 comparison.

How to access R1 in 2026

Three routes are still open, with meaningfully different properties.

- Web chat and mobile app. The DeepSeek chat interface defaults to V4 now. The DeepThink toggle still exists but switches V4 between non-thinking and thinking modes rather than routing you to legacy R1. If you specifically want R1’s behaviour, self-hosting is the reliable path.

- Hosted API (legacy IDs). `deepseek-reasoner` and `deepseek-chat` remain accepted and currently route to DeepSeek V4-Flash in thinking and non-thinking modes respectively. Both legacy IDs retire on July 24, 2026 at 15:59 UTC. After that, requests using them will fail. Migration is a one-line `model=` swap; `base_url` does not change.

- Self-hosting open weights. The code repository is MIT-licensed, and model use is under the MIT License. R1 has become the most-downloaded open-weight model on Hugging Face, with 10.9 million downloads. For local setup walkthroughs, see the install DeepSeek locally guide or running DeepSeek on Ollama.
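The legacy-ID routing described in the hosted-API bullet can be written down as a lookup table, which is handy for auditing a codebase before the cutoff. This is a sketch under stated assumptions: `migrate_request` and `days_until_cutoff` are hypothetical helpers, and the `"disabled"` thinking value for the non-thinking route is a guess by analogy with `"enabled"`; check it against the official documentation.

```python
from datetime import datetime, timezone

# Retirement cutoff quoted in this review: July 24, 2026 at 15:59 UTC
LEGACY_CUTOFF = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (V4 model ID, thinking mode).
# ASSUMPTION: "disabled" is the value for non-thinking mode.
LEGACY_ROUTES = {
    "deepseek-reasoner": ("deepseek-v4-flash", "enabled"),
    "deepseek-chat": ("deepseek-v4-flash", "disabled"),
}


def migrate_request(kwargs):
    """Return a copy of chat-completion kwargs with legacy IDs swapped out."""
    route = LEGACY_ROUTES.get(kwargs.get("model"))
    if route is None:
        return dict(kwargs)  # already on a current model ID
    model, thinking = route
    updated = dict(kwargs, model=model)
    extra_body = dict(updated.get("extra_body") or {})
    # Keep an explicit thinking setting if the caller already provided one.
    extra_body.setdefault("thinking", {"type": thinking})
    updated["extra_body"] = extra_body
    return updated


def days_until_cutoff(now):
    """Whole days remaining before the legacy IDs stop working."""
    return (LEGACY_CUTOFF - now).days
```

Running every outgoing request through a helper like this lets you flip to the V4 IDs in one place and log how much time remains, instead of hunting for hard-coded model strings.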

Minimal API call (OpenAI SDK)

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Here is the legacy Python call still accepted until the retirement date, plus the V4 equivalent you should migrate to. DeepSeek also exposes an Anthropic-compatible surface at the same base URL.

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

# Legacy: works until 2026-07-24 15:59 UTC, routes to V4-Flash thinking
resp = client.chat.completions.create(
    model="deepseek-reasoner",
    messages=[{"role": "user", "content": "Prove that sqrt(2) is irrational."}],
)

# V4 equivalent: change only the model and add thinking parameters
resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Prove that sqrt(2) is irrational."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)
```

The API is stateless: the client must resend the full conversation history with each request, unlike the web chat, which maintains session history on DeepSeek’s side. When thinking is enabled, the response returns `reasoning_content` alongside the final `content`. Parameters worth knowing are `temperature`, `top_p`, `max_tokens`, and `reasoning_effort`, plus JSON mode, streaming, tool calling, and context caching, all documented in the DeepSeek API documentation.
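Statelessness means the caller owns the transcript. A minimal sketch of that bookkeeping follows; the `Conversation` class is hypothetical, and dropping `reasoning_content` from the stored history is an assumption made here to keep requests small, so confirm the expected behaviour in the official documentation.

```python
class Conversation:
    """Keeps the message history a stateless chat API expects on every call."""

    def __init__(self, system=None):
        self.messages = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def add_user(self, text):
        """Record a user turn and return the full payload for `messages=`."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)

    def add_assistant(self, content):
        """Record the model's final answer.

        ASSUMPTION: only `content` is stored; `reasoning_content` is not
        sent back on later turns.
        """
        self.messages.append({"role": "assistant", "content": content})


conv = Conversation(system="Answer concisely.")
first_payload = conv.add_user("Prove that sqrt(2) is irrational.")
conv.add_assistant("Assume sqrt(2) = p/q in lowest terms ...")
second_payload = conv.add_user("Now do sqrt(3).")
```

In a real client, `first_payload` would be passed as `messages=` and the stored answer would come from `resp.choices[0].message.content`. Every turn grows the payload, so input tokens grow with conversation length.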

Pricing and value for money

Historical R1 pricing (around $0.55 per million input tokens and $2.19 per million output tokens) is retired. The legacy hosted IDs now route to V4-Flash, so calls made through them are served by V4-Flash and billed on the V4-Flash rate card. As of April 2026, V4-Flash lists $0.0028 (cache hit) and $0.14 (cache miss) per million input tokens and $0.28 per million output tokens. Self-hosting shifts the cost to GPU rental or owned hardware, which only beats the hosted price at very high volume; use the DeepSeek pricing calculator to check.

Worked example (V4-Flash rates, the current routing target)

One million API calls with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Note the math: the system prompt is cached after the first hit, but each new user message is a miss against that prefix — you cannot skip the uncached-input line. Off-peak discounts ended on September 5, 2025 and have not returned under V4.
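The arithmetic above can be reproduced with a short helper, which makes it easy to plug in your own token counts. This is a sketch: `RATES_PER_M` and `cost_breakdown` are names invented for illustration, and the rates are the April 2026 V4-Flash figures quoted in this section, which may have changed since.

```python
# V4-Flash rates in USD per 1M tokens, as quoted in this review (April 2026)
RATES_PER_M = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}


def cost_breakdown(calls, cached_in, uncached_in, out, rates=RATES_PER_M):
    """Return per-line and total cost in USD for `calls` identical requests.

    Token arguments are per-call counts.
    """
    def line(tokens_per_call, rate):
        return round(calls * tokens_per_call * rate / 1_000_000, 2)

    breakdown = {
        "cached_input": line(cached_in, rates["cache_hit"]),
        "uncached_input": line(uncached_in, rates["cache_miss"]),
        "output": line(out, rates["output"]),
    }
    breakdown["total"] = round(sum(breakdown.values()), 2)
    return breakdown


# The worked example: 1M calls, 2,000 cached + 200 uncached input, 300 output
example = cost_breakdown(calls=1_000_000, cached_in=2_000, uncached_in=200, out=300)
for label, usd in example.items():
    print(f"{label}: ${usd:,.2f}")
```

Note that with thinking enabled, reasoning tokens are generated in addition to the visible answer, so the `out` figure for a reasoning workload is usually much larger than the visible answer length.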

Training cost context

R1’s reported training cost made headlines for a reason. DeepSeek disclosed that its reasoning-focused R1 model cost $294,000 to train and used 512 Nvidia H800 chips, figures that appeared in a peer-reviewed Nature paper in September 2025. Read that number with care: the $294,000 price tag does not include the $6 million DeepSeek reported spending to build the general-purpose LLM that R1 is based on, and at least one third-party analysis (The Register, January 2025) framed the realistic all-in cost at roughly $5.87 million when the V3 base-model training is included. The cheap training cost does not translate to cheap inference; inference economics are set by the model’s active parameter count, not its training bill.

Competitor context

Two comparisons are most useful for R1 in 2026. Against OpenAI’s reasoner, the verdict on the DeepSeek vs OpenAI o1 axis is that R1 traded blows on math and reasoning while publishing open weights under MIT — a combination o1 never offered. Against other reasoners, the DeepSeek alternatives for reasoning roundup is the shortcut to see where Qwen, GLM, and the bigger-lab options fit.

If your comparison is internal — R1 versus the newer DeepSeek stack — the short version is that DeepSeek V4 is cheaper, has a 1,000,000-token default context with output up to 384,000 tokens, and offers thinking as a parameter on both Flash and Pro tiers. R1 remains the better choice only when you need a specific open-weight reasoner checkpoint you can pin and audit.

Strengths and weaknesses, distilled

Strengths

- Top-tier open-weight reasoning performance on MATH-500 and AIME.

- MIT-licensed code and weights with commercial-use permission.

- Well-documented training pipeline; easy to build on with distillation.

- Broad runtime support (vLLM, SGLang, PyTorch, Ollama).

Weaknesses

- Long, token-hungry responses inflate latency and self-hosted cost.

- Not a coding specialist — current frontier-tier models beat it on SWE-Bench-style tasks.

- Context window is smaller than the million-token V4 default.

- Hosted-API access via legacy IDs ends on July 24, 2026.

For the full picture of what the family does well, the broader DeepSeek capabilities guide covers strengths across models, and our DeepSeek reviews hub collects the sibling write-ups.

Final verdict

DeepSeek R1 is still worth running in 2026 under two conditions: you want open weights for on-prem reasoning work, or you’re studying how a peer-reviewed reasoning training pipeline was actually assembled. For anything hosted and production-facing, migrate to V4-Flash or V4-Pro before July 24, 2026 — the legacy model IDs won’t survive that date, and the V4 rate card is already better than R1’s historical pricing. R1’s legacy is that it made reasoning an open problem. Its practical role in most 2026 stacks is as an archived, auditable baseline.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

DeepSeek R1 FAQ

Is DeepSeek R1 still available in 2026?

Yes, in two forms. The open weights remain on Hugging Face under MIT, and self-hosting is the durable route. On the hosted API, `deepseek-reasoner` still works but routes to `deepseek-v4-flash` in thinking mode and retires on July 24, 2026 at 15:59 UTC. Plan your migration early; the swap is documented in the DeepSeek API documentation.

How does DeepSeek R1 compare to OpenAI o1?

On DeepSeek’s R1 technical report benchmarks, R1 slightly surpassed OpenAI-o1-1217 on AIME 2024 (79.8% Pass@1) and matched it on MATH-500 (97.3%), while trailing on GPQA Diamond (71.5% vs 75.7%) and MMLU (90.8 vs 91.8). The tie-breaker for most buyers was open weights under MIT. See the head-to-head DeepSeek R1 vs OpenAI o1 write-up for more detail.

What did it cost to train DeepSeek R1?

DeepSeek disclosed $294,000 for R1’s reasoning-focused training run on a 512-chip H800 cluster, in a peer-reviewed Nature paper in September 2025. That figure excludes the roughly $6 million cost of the base model R1 was built on, and some analysts argue the true all-in number is substantially higher. For the broader release history, see the DeepSeek history guide.

Can I still use the deepseek-reasoner model ID?

Yes, until July 24, 2026 at 15:59 UTC. Until that cutoff, `deepseek-reasoner` routes to `deepseek-v4-flash` with thinking enabled, and `deepseek-chat` routes to `deepseek-v4-flash` in non-thinking mode. Migration is a one-line `model=` swap — the `base_url` does not change. Walk through the V4 parameters on the DeepSeek V4-Flash model page.

Is DeepSeek R1 free to use?

The weights are free under MIT, so self-hosted use is free except for hardware. The hosted API is pay-per-token at V4-Flash rates while the legacy IDs still work, and the web chat has no published daily message cap but operates under the provider’s current limits. For a clear breakdown of what “free” actually means, see is DeepSeek free.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 technical report. MMLU/MMLU-Pro/GPQA/AIME/MATH-500 reported scores. Last checked: April 30, 2026

Context sources

- Technical report: Nature, peer-reviewed DeepSeek R1 training-cost paper (Sep 2025). R1 $294,000 training cost on 512 H800 chips. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.