DeepSeek Partnerships in the V4 Era: Who’s Actually Shipping

Who actually builds on top of DeepSeek, and who just reposts the logo on a landing page? That was the question I took into the DeepSeek V4 Preview window, because it’s easy to confuse a press release with a working integration. This article maps the current DeepSeek partnerships worth knowing about as of April 24, 2026 — the day V4-Pro and V4-Flash shipped — split into chips, cloud, agent tooling, and enterprise channels. Every claim is tied to a dated primary source, not training memory. I have run V4-Flash in production since launch morning and V3.2 before that, so the verdicts come from an engineer’s seat, not a marketing deck. By the end, you will know which partnerships change how you deploy, and which are noise.

Why DeepSeek partnerships matter right now

DeepSeek’s business model is unusual: the lab publishes open-weight models under MIT, runs a first-party API, and leaves most distribution to third parties. That means partnerships are not a side channel; they are the main way most developers will touch a DeepSeek model. The DeepSeek V4 preview, released on April 24, 2026, arrived with two model IDs, both MIT-licensed and both with a 1,000,000-token context window. deepseek-v4-pro has 1.6T total / 49B active parameters and is pitched as rivaling top closed-source models; deepseek-v4-flash has 284B total / 13B active parameters and is positioned as the fast, efficient, economical option.

That matters for partners because the MIT weights can be redistributed, fine-tuned, and hosted by anyone — and cloud providers, chip vendors, and agent frameworks are all taking that option. DeepSeek’s own guidance is to keep base_url and just update the model to deepseek-v4-pro or deepseek-v4-flash; the API supports OpenAI ChatCompletions and Anthropic APIs, with both models exposing 1M context and dual Thinking / Non-Thinking modes.

Chip partnerships: the Huawei and Cambricon axis

The most consequential partnership shift with V4 is on silicon. V3 and R1 were trained predominantly on Nvidia hardware. V4 has moved hard toward Chinese accelerators.

To fulfil V4’s computing needs, DeepSeek partnered with Chinese tech giant Huawei, which said in a statement that it supports the AI startup with its “Supernode” technology, combining large clusters of its “Ascend 950” chips to provide more computing power. The Ascend support extends beyond training: soon after DeepSeek’s release, Huawei announced “full support” from its range of Ascend chips, along with its supernode systems, for V4 inference.

Cambricon Technologies is the second name on the chip side. DeepSeek developed V4 to run on Huawei hardware after months of collaboration with Cambricon Technologies, with engineers rewriting certain parts of the model’s code to achieve compatibility with Chinese-made processors. Counterpoint Research’s Wei Sun noted that V4 runs on domestic chips from Huawei and Cambricon, whereas R1 was trained on Nvidia hardware.

For readers outside China, the practical question is whether any of this changes your deployment. Mostly, it does not — you still hit POST /chat/completions at https://api.deepseek.com. But it does change the supply story behind the API, and it matters for anyone doing hardware planning on DeepSeek system requirements. In its technical report, DeepSeek said it developed custom GPU “kernels” compatible with both Nvidia and Huawei chips, suggesting a hybrid or adaptable infrastructure approach.

Agent-tool partnerships: Claude Code, OpenClaw, OpenCode

V4’s second partnership story is agentic coding. DeepSeek shipped the release already wired into several well-known agent harnesses. DeepSeek-V4 is integrated with leading AI agents like Claude Code, OpenClaw and OpenCode, and is already driving DeepSeek’s in-house agentic coding.

That integration works specifically because DeepSeek now exposes two wire formats at the same base URL. The API supports OpenAI ChatCompletions and Anthropic APIs. The Anthropic-compatible surface is what lets Anthropic’s own Claude Code CLI point at DeepSeek by swapping base_url and api_key — no rewriting of tool-calling glue.
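To make the "two wire formats" point concrete, here is a minimal sketch that builds (but never sends) one request in each shape using only the standard library. The OpenAI-style path is the one quoted in this article. The Anthropic-style path `/v1/messages`, the `max_tokens` value, and both helper names are assumptions for illustration; the header names follow Anthropic's published Messages format, and the exact path DeepSeek expects should be checked against its documentation.

```python
import json
from urllib.request import Request

BASE_URL = "https://api.deepseek.com"  # base URL quoted in the article


def openai_style_request(api_key: str, model: str, prompt: str) -> Request:
    """Build (not send) an OpenAI ChatCompletions-shaped request."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def anthropic_style_request(
    api_key: str,
    model: str,
    prompt: str,
    messages_path: str = "/v1/messages",  # ASSUMPTION: path not confirmed by the article
) -> Request:
    """Build (not send) an Anthropic Messages-shaped request."""
    body = {
        "model": model,
        "max_tokens": 1024,  # the Messages format requires an explicit output cap
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        f"{BASE_URL}{messages_path}",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "Content-Type": "application/json",
        },
        method="POST",
    )


oa = openai_style_request("YOUR_KEY", "deepseek-v4-pro", "Refactor this module.")
an = anthropic_style_request("YOUR_KEY", "deepseek-v4-pro", "Refactor this module.")
print(oa.full_url)
print(an.full_url)
```

The message list is the same in both shapes; what differs is the authentication header, the path, and the required `max_tokens` field, which is why a harness written for one format can be repointed without touching its tool-calling logic.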

A minimal Python call against V4-Pro in thinking mode, using the OpenAI SDK, looks like this:

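    from openai import OpenAI

    # DeepSeek's API is OpenAI-compatible, so the stock SDK only needs a new base_url.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[{"role": "user", "content": "Refactor this module."}],
        reasoning_effort="high",
        # Non-standard field, passed through to DeepSeek to switch on thinking mode.
        extra_body={"thinking": {"type": "enabled"}},
    )

    # Thinking mode returns the reasoning trace separately from the final answer.
    print(resp.choices[0].message.reasoning_content)
    print(resp.choices[0].message.content)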

Thinking mode returns reasoning_content alongside the final content. Every request hits POST /chat/completions — the API is stateless, so your client (or the agent harness on your behalf) must resend the full message history each turn. For a full walkthrough, see the DeepSeek API documentation.
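Because the API is stateless, the bookkeeping for history sits on the client. The sketch below shows one way to do it without any network calls: a small, hypothetical `Conversation` class (not part of any SDK) that appends each turn and returns the full payload to send. The assistant reply in the usage lines is a placeholder string, not real model output.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Client-side message history for a stateless chat API."""

    model: str
    messages: list = field(default_factory=list)

    def user_turn(self, text: str) -> dict:
        """Record a user message and return the full request payload."""
        self.messages.append({"role": "user", "content": text})
        # The payload carries the whole history, not only the newest message.
        return {"model": self.model, "messages": list(self.messages)}

    def record_reply(self, text: str) -> None:
        """Store the assistant's final content so the next turn includes it."""
        self.messages.append({"role": "assistant", "content": text})


convo = Conversation(model="deepseek-v4-flash")
first = convo.user_turn("Summarise this diff.")
convo.record_reply("It renames two functions.")  # placeholder, not real output
second = convo.user_turn("Which callers break?")
print(len(first["messages"]), len(second["messages"]))  # prints: 1 3
```

The second payload is three messages long even though the user only typed one new line, which is also why long agent sessions get expensive without prompt caching.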

Cloud partnerships: the post-R1 hyperscaler landscape

Cloud integrations have been layering up since R1’s breakout in January 2025. The rollout started immediately: Microsoft, Amazon and Google made the technology available to developers on their Azure, AWS and Google Cloud platforms. Microsoft announced “DeepSeek R1 is now available on Azure AI Foundry and GitHub” and published guidance for using DeepSeek models in Microsoft Semantic Kernel.

On AWS, the entry points were SageMaker and Bedrock. Customers can run the distilled Llama and Qwen DeepSeek models on Amazon SageMaker AI, use the distilled Llama models on Amazon Bedrock with Custom Model Import, or train DeepSeek models with SageMaker via Hugging Face.

Alibaba Cloud followed quickly on its home turf. Alibaba Cloud users can log into its PAI Model Gallery — a collection of open-source LLMs — where they can select DeepSeek’s AI models and deploy them to power their own reasoning and text-generating applications.

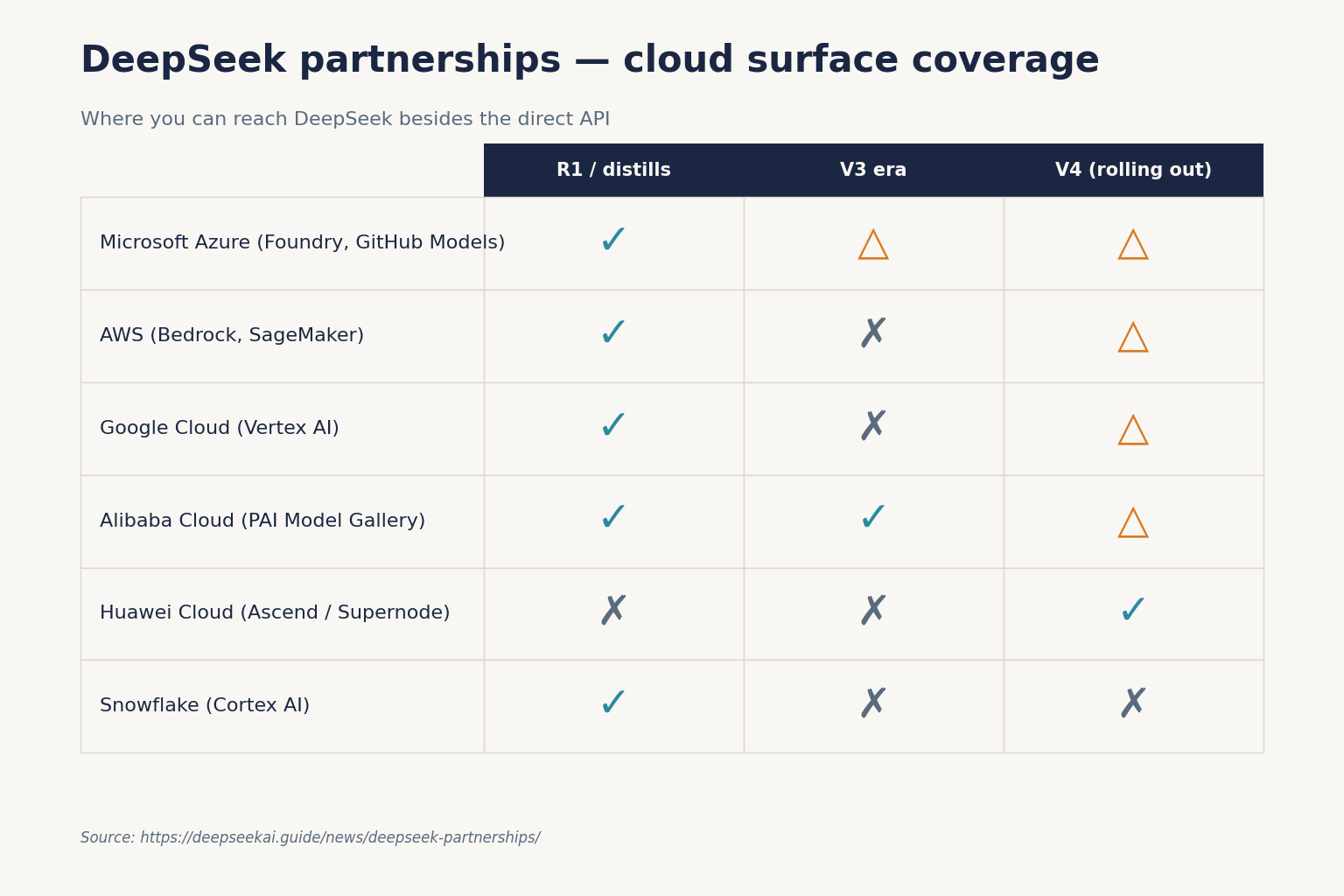

Here is the short summary of where you can currently reach a DeepSeek model besides DeepSeek’s own API:

| Provider | Surface | Primary model coverage (reported) | First confirmed |
|---|---|---|---|
| Microsoft Azure | Azure AI Foundry, GitHub Models | R1 and distills; V4 integration via open weights | 2025-01-29 |
| AWS | Bedrock Custom Model Import, SageMaker | R1 distilled Llama/Qwen variants | 2025-01-30 |
| Google Cloud | Vertex AI endpoints | R1 via customer-managed deployment | 2025-01-30 |
| Alibaba Cloud | PAI Model Gallery | V3, R1 and distilled variants | 2025-02-03 |
| Huawei Cloud | Ascend-backed inference, Supernode | V4-Pro, V4-Flash inference | 2026-04-24 |
| Snowflake | Cortex AI | R1 | 2025-01 |

Note that hyperscaler availability of V4 specifically is still rolling out. Amazon, Microsoft, Google, Huawei, and Alibaba Cloud are among those that have brought DeepSeek onboard their cloud platforms. Expect V4-Flash to appear first on Western clouds (smaller weights, lower serving cost) and V4-Pro to follow as capacity clears.

Enterprise integration partnerships: agent platforms and vertical tools

Outside the hyperscalers, a layer of enterprise-AI platforms absorbed V4 within a day of launch. Aurora Mobile’s GPTBots.ai enterprise AI agent platform integrated the newly released DeepSeek-V4 Preview series, combining V4’s long-context processing and agentic performance with GPTBots.ai’s enterprise security, no-code deployment, and workflow orchestration.

Earlier cross-sector partnerships are also worth noting for context, though these predate V4 and should be treated as historical signal rather than live SLAs. By April 2025, partnerships were announced with automotive firms like BMW and BYD for in-car AI and with industrial firms like XCMG for complex modelling. That pattern — automotive, industrial, customer-engagement — is the shape of DeepSeek’s enterprise distribution, and it skews heavily toward Chinese-market and cross-border deployments rather than US enterprise SaaS.

Developer-ecosystem partnerships: Hugging Face, NIM, AMD

Under the headlines, DeepSeek’s quieter partnerships are with the places developers already work. Key integrations included Microsoft Azure in May 2024, NVIDIA NIM APIs in July 2024, and AMD GPU support in December 2024. In January 2025, a commercial agreement with Snowflake made DeepSeek-R1 available on the Snowflake Cortex AI platform.

For individual developers, the Hugging Face distribution is the practical one. Both models use the standard MIT license. V4-Pro weights are roughly 865GB on Hugging Face and V4-Flash roughly 160GB. V4-Pro is likely the new largest open-weights model: larger than Kimi K2.6 (1.1T) and GLM-5.1 (754B), and more than twice the size of DeepSeek V3.2 (685B). That size is why most readers will not self-host V4-Pro, and why the DeepSeek hardware calculator and the partner-hosted options above matter.
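A rough back-of-the-envelope check shows why those sizes matter for self-hosting. The sketch below uses only the parameter counts and download sizes quoted in this article; the 80 GB accelerator size is a hypothetical figure chosen for illustration, and the GPU count is a lower bound for holding weights alone, ignoring KV cache and activations.

```python
import math

# Figures quoted in the article: (total parameters, weight size in GB).
MODELS = {
    "deepseek-v4-pro": (1.6e12, 865),
    "deepseek-v4-flash": (284e9, 160),
}

GPU_MEMORY_GB = 80  # ASSUMPTION: hypothetical accelerator size, not from the article


def bytes_per_param(total_params: float, size_gb: float) -> float:
    """Average storage per parameter implied by the published file size."""
    return size_gb * 1e9 / total_params


def min_gpus_for_weights(size_gb: float, gpu_gb: float = GPU_MEMORY_GB) -> int:
    """Lower bound on devices needed just to hold the weights."""
    return math.ceil(size_gb / gpu_gb)


for name, (params, size_gb) in MODELS.items():
    print(name, round(bytes_per_param(params, size_gb), 2), min_gpus_for_weights(size_gb))
```

Under these assumptions V4-Pro needs at least 11 such devices before serving a single token, while V4-Flash fits its weights on 2, which matches the intuition that Flash is the realistic self-hosting target.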

Funding and investor talks: partnership, with a capital P

Partnerships also exist at the cap-table level. Tencent and Alibaba are in discussions to join a maiden round of financing for DeepSeek; Tencent has proposed acquiring as much as a 20% stake, though DeepSeek is not keen on ceding such a large portion of control. The talks reportedly benchmark DeepSeek’s valuation against MiniMax’s roughly $40 billion. Neither deal is closed at the time of writing, so treat these as a pipeline, not a line item.

What these partnerships mean for your build decision

If you are choosing where to integrate today, the partnerships sort into three buckets:

- Direct API: lowest price, with statelessness left to you. V4-Flash lists at $0.0028 cache-hit input, $0.14 cache-miss input, and $0.28 output per 1M tokens. V4-Pro is promotionally priced at $0.435 cache-miss input and $0.87 output (75% off through 2026-05-31; list is $0.0145 cache-hit input / $1.74 cache-miss input / $3.48 output). Pricing as of April 24, 2026; verify at DeepSeek API pricing.

- Hyperscaler hosting — VPC, compliance, and billing consolidation in exchange for a price premium and usually older model coverage (R1 / distills) until V4 propagates.

- Agent frameworks — Claude Code, OpenClaw and OpenCode let you use V4 without writing your own tool-calling harness.

A V4-Flash cost example, end to end

Imagine one million agent calls a day with a cached 2,000-token system prompt, a 200-token user turn, and a 300-token response, all on deepseek-v4-flash:

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

At list prices, the same workload on deepseek-v4-pro would cost $1,421.00 ($29.00 cached input + $348.00 uncached input + $1,044.00 output), roughly 12× more, so reserve Pro for the agentic work the benchmark lift justifies. That price gap is a bigger decision driver than any partnership logo.
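The arithmetic above is easy to rerun for your own traffic shape. This is a minimal sketch using the per-1M-token prices quoted in this article, with V4-Pro at its list rates rather than the promotional ones; the `daily_cost` helper and its parameter names are illustrative, and the prices should be rechecked against the official pricing page before use.

```python
# USD per 1M tokens, as quoted in the article (V4-Pro at list rates).
PRICES = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"cache_hit": 0.0145, "cache_miss": 1.74, "output": 3.48},
}


def daily_cost(model: str, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Daily USD cost for `calls` requests with the given per-call token counts."""
    p = PRICES[model]
    per_call_usd = (
        cached_in * p["cache_hit"]
        + uncached_in * p["cache_miss"]
        + out * p["output"]
    ) / 1_000_000
    return round(per_call_usd * calls, 2)


# One million calls a day: 2,000 cached + 200 uncached input tokens, 300 output tokens.
flash = daily_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
pro = daily_cost("deepseek-v4-pro", 1_000_000, 2_000, 200, 300)
print(flash, pro)  # prints: 117.6 1421.0
```

Changing the cached share of the prompt is the most sensitive knob here: moving tokens from the cache-miss to the cache-hit column cuts their cost by a factor of 50 on Flash at these rates.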

Legacy model IDs and the migration window

If you already call DeepSeek via deepseek-chat or deepseek-reasoner, your existing integration keeps working for now: both IDs currently route to deepseek-v4-flash, in non-thinking and thinking mode respectively. They will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migration is a one-line change to the model= field; base_url does not change.
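If legacy IDs are scattered across a codebase, a small shim can apply the routing described above in one place. This is a sketch, not an official migration tool: `migrate_kwargs` is a hypothetical helper, and it assumes thinking mode has to be requested explicitly with the same `extra_body` switch used in this article's V4-Pro example.

```python
# Legacy ID -> (replacement model, thinking enabled?), per the routing described above.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_kwargs(kwargs: dict) -> dict:
    """Return a copy of chat-completion kwargs with any legacy model ID replaced."""
    updated = dict(kwargs)
    route = LEGACY_ROUTES.get(updated.get("model"))
    if route is None:
        return updated  # not a legacy ID; leave the request untouched
    model, thinking = route
    updated["model"] = model
    if thinking:
        # ASSUMPTION: thinking must be switched on explicitly, as in the V4-Pro example.
        extra = dict(updated.get("extra_body") or {})
        extra["thinking"] = {"type": "enabled"}
        updated["extra_body"] = extra
    return updated


print(migrate_kwargs({"model": "deepseek-reasoner", "messages": []}))
```

Wrapping calls as `client.chat.completions.create(**migrate_kwargs(kwargs))` keeps the change reversible until every call site has been updated by hand.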

Caveats and things I am watching

Three honest cautions:

- V4 is still labelled a preview. Pricing, partner SLAs, and chip supply all move during preview windows.

- Regulatory risk is real. Italy’s Garante ordered the app blocked in January 2025, and multiple US states and agencies have restricted the service on government devices. Partnership availability in a given region depends on both provider terms and local rules — check your jurisdiction.

- Competitor response is already in motion. Expect more V4-Flash hosting deals on Western clouds over the coming quarter, and clearer Huawei Ascend numbers when the Supernode livestreams drop.

For a running log of the integrations above and anything that lands after publication, the DeepSeek news hub is the place I keep updated. For the underlying company context behind this partner sprawl, see DeepSeek company news.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Who are DeepSeek’s main hardware partners for V4?

Huawei and Cambricon are the named chip partners for V4. Huawei pledged full support with its Ascend chip range and Supernode clustering systems for V4 inference, and DeepSeek reportedly spent months collaborating with Cambricon, with engineers rewriting parts of the model code to run on Chinese silicon. For a broader architectural view of the model, see our page on DeepSeek V4-Pro.

How do I use DeepSeek through a cloud provider instead of the direct API?

Azure AI Foundry, AWS Bedrock / SageMaker, Google Cloud Vertex AI and Alibaba Cloud PAI all host DeepSeek variants — mostly R1 and distilled Llama/Qwen versions at time of writing, with V4 support rolling out. Each provider manages its own SKUs and pricing. For a direct-API alternative with one-line migration, see our DeepSeek API getting started tutorial.

Does DeepSeek V4 work with Claude Code and similar agent tools?

Yes. DeepSeek’s V4 announcement states the model is integrated with Claude Code, OpenClaw and OpenCode. The Anthropic-compatible endpoint at https://api.deepseek.com is the mechanism — swap base_url and api_key in an Anthropic SDK and point it at deepseek-v4-pro. Our DeepSeek for coding guide walks through an end-to-end workflow.

Is DeepSeek investing in or being invested in by Tencent and Alibaba?

As of April 2026, Bloomberg reports that Tencent and Alibaba are in discussions to join DeepSeek’s first external funding round, benchmarked against a valuation in MiniMax’s roughly $40 billion range. Tencent has floated up to a 20% stake. Nothing is finalised. For ongoing coverage, see DeepSeek funding news.

Can I compare DeepSeek’s partner-hosted versions with the direct API on price?

Yes, and you should. Third-party hosts often trade price for uptime, caching quality, or feature parity: some cap context at 131K instead of the full 1M-token window, and some don’t implement tool calling the same way. Use the DeepSeek pricing calculator to model your actual workload, then verify features against the DeepSeek API pricing reference.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

Context sources

- Analysis: Counterpoint Research (Wei Sun) on DeepSeek V4 chip partners. V4 on Huawei/Cambricon vs R1's Nvidia training. Last checked: April 30, 2026.

- News: Microsoft, DeepSeek R1 on Azure AI Foundry / GitHub announcement. Microsoft cloud distribution of DeepSeek models. Last checked: April 30, 2026.

- News: Bloomberg, Tencent/Alibaba DeepSeek funding-round talks. Tencent's 20% stake and MiniMax-benchmarked valuation. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.
