DeepSeek V4 Release Date: Pro and Flash Land as an Open-Weight Preview

If you were waiting for a firm DeepSeek V4 release date, here it is: the V4 Preview dropped on April 24, 2026, as two open-weight Mixture-of-Experts models — `deepseek-v4-pro` and `deepseek-v4-flash` — both with a one-million-token context window. After months of rumour (Hunter Alpha on OpenRouter, Reuters leaks about Huawei Ascend hardware, an “any day now” consensus on X), DeepSeek published the weights to Hugging Face, flipped the API over, and swapped the chat.deepseek.com default model in a single morning. This article gives you the verified timeline, what each V4 tier actually is, how pricing lands against the previous generation, the benchmark picture in context, and a concrete migration plan off the legacy `deepseek-chat` and `deepseek-reasoner` IDs before they retire on July 24, 2026.

The confirmed DeepSeek V4 release date

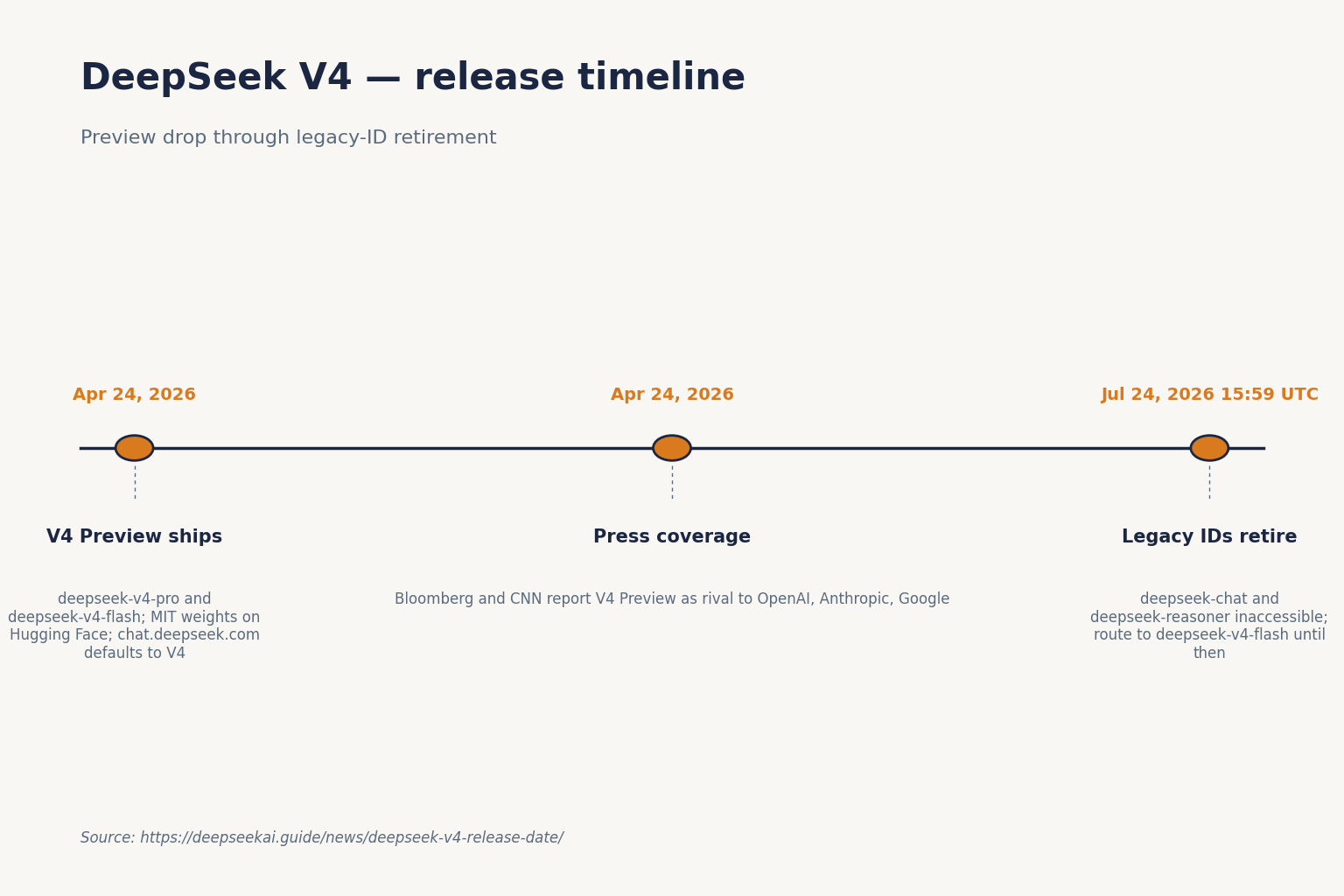

DeepSeek posted the V4 Preview announcement on its API docs site on April 24, 2026, the same morning the weights went live on Hugging Face and Expert/Instant Mode appeared in the web chat. The post opened by declaring DeepSeek-V4 Preview officially live and open-sourced, welcomed “the era of cost-effective 1M context length,” and introduced two model IDs: DeepSeek-V4-Pro at 1.6T total / 49B active parameters, and DeepSeek-V4-Flash at 284B total / 13B active parameters.

Mainstream coverage locked in within hours. Bloomberg reported on April 24, 2026 that DeepSeek rolled out preview versions of its new flagship, unveiling the V4 Flash and V4 Pro series with top-tier coding-benchmark performance and advances in reasoning and agentic tasks. CNN’s coverage from the same day framed it as a preview rival to OpenAI, Anthropic and Google, with upgrades in reasoning, agentic ability, and token-processing efficiency.

The “Preview” label is not cosmetic. DeepSeek has historically used it to signal that pricing, routing, and tokenizer details can still shift during the window, so treat the numbers below as current as of the release day and re-check before you commit a production budget.

What V4 actually is: two tiers, one feature set

V4 is not a single model: it is a family of two open-weight MoE checkpoints that share architecture, context length, and the same three reasoning-effort modes. You pick the tier with the model field and the effort with the reasoning_effort parameter.

| Attribute | deepseek-v4-pro | deepseek-v4-flash |
|---|---|---|
| Total parameters | 1.6T | 284B |
| Active per token | 49B | 13B |
| Context window | 1,000,000 tokens | 1,000,000 tokens |
| Max output | 384,000 tokens | 384,000 tokens |
| Reasoning modes | Non-thinking, high, max | Non-thinking, high, max |
| Weights license | MIT | MIT |
| On-disk size (HF) | ~865 GB | ~160 GB |
| Release date | 2026-04-24 | 2026-04-24 |

Simon Willison’s day-of writeup independently confirmed the sizing: Pro is 1.6T total with 49B active, Flash is 284B total with 13B active, both under the MIT license, with Pro at 865GB on Hugging Face and Flash at 160GB — making V4-Pro the largest open-weight model currently published, larger than Kimi K2.6 and more than twice the size of V3.2. For a deeper technical read on DeepSeek V4 as a family, see our dedicated model page; tier-specific deep dives live at DeepSeek V4-Pro and DeepSeek V4-Flash.

Architecture: why the 1M context is the actual story

Context length alone is marketing. What matters for agent workloads is how cheap each forward pass stays at that depth. DeepSeek’s Hugging Face model card describes a hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA); in the 1M-token setting, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache compared with V3.2. V4-Flash drops these further: roughly 10% of the FLOPs and 7% of the KV cache of V3.2.

Pricing — current rates and the off-peak status

Pricing landed well below the previous generation. Simon Willison’s writeup quotes DeepSeek’s pricing page at $0.14/M input and $0.28/M output for Flash, and $0.435/M input and $0.87/M output for Pro during the 75% promo through 2026-05-31 (list $1.74/M and $3.48/M). Cache-hit rates carry over the structure from V3.2 — $0.0028/M for Flash and $0.0145/M (list; promo $0.003625 through 2026-05-31) for Pro on the hit tier. All numbers in USD per million tokens, verified as of April 24, 2026; the V3.2 era’s off-peak discount ended on September 5, 2025 and was not reintroduced with V4.

| Tier | Input, cache hit (USD / M tokens) | Input, cache miss (USD / M tokens) | Output (USD / M tokens) |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

Pro sits at roughly 12× Flash on output at list price ($3.48 vs $0.28 per million tokens) and about 3× during the promo ($0.87 vs $0.28), so Flash remains the default recommendation for most chat and standard coding work. Pro earns its keep on agentic trajectories, long tool-use chains, and the harder SWE-Bench / Terminal-Bench style workloads where the benchmark lift justifies the spend. For live modelling, our DeepSeek pricing calculator handles the three-bucket math for you.

Worked example: 1M Flash calls with a cached system prompt

A common pattern: 1,000,000 calls, 2,000-token system prompt cached across calls, 200-token user message per call (uncached — each new user turn misses the prefix), 300-token response.

- Cached input: 2,000 × 1,000,000 = 2.0B tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200M tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300M tokens × $0.28/M = $84.00

- Total: $117.60

The same workload at Pro list rates runs $29.00 + $348.00 + $1,044.00 = $1,421.00; at the promo rates it is $7.25 + $87.00 + $261.00 = $355.25. Do not skip the uncached line: the user message on each call is still a miss against that cached prefix.
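The three-bucket arithmetic in this worked example can be sketched in a few lines of Python. This is a minimal illustration, not an official calculator: the `Rates` class and `workload_cost` function are hypothetical names, the rates are the ones quoted in the pricing table as of April 24, 2026, and the sketch assumes, as the example does, that every call hits the cached system prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per million tokens, copied from the pricing table in this article."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates as quoted on release day; re-check the pricing page before budgeting.
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)
PRO_PROMO = Rates(cache_hit=0.003625, cache_miss=0.435, output=0.87)


def workload_cost(rates, calls, cached_in, uncached_in, out):
    """Total USD for `calls` requests with the given per-call token counts."""
    per_million = 1_000_000
    hit = calls * cached_in / per_million * rates.cache_hit
    miss = calls * uncached_in / per_million * rates.cache_miss
    output = calls * out / per_million * rates.output
    return round(hit + miss + output, 2)


if __name__ == "__main__":
    for name, rates in [("flash", FLASH), ("pro list", PRO_LIST), ("pro promo", PRO_PROMO)]:
        total = workload_cost(rates, calls=1_000_000, cached_in=2_000, uncached_in=200, out=300)
        print(f"{name}: ${total:,.2f}")
```

Keeping the three buckets as separate terms makes it easy to see which one dominates; in this workload the output bucket is the largest at every tier.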

Benchmarks: competitive, not category-leading

DeepSeek was clear-eyed in its own announcement. According to the V4 technical report, V4-Pro (reasoning_effort=max) demonstrates superior performance relative to GPT-5.2 and Gemini-3.0-Pro on standard reasoning benchmarks, while falling marginally short of GPT-5.4 and Gemini-3.1-Pro — a trajectory that trails the current frontier by roughly 3 to 6 months.

| Benchmark | V4-Pro (Max) | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-Bench Verified | 80.6 | 80.8 | n/r | 80.6 |
| Terminal-Bench 2.0 | 67.9 | 65.4 | 75.1 | 68.5 |
| LiveCodeBench | 93.5 | 88.8 | n/r | n/r |
| HMMT 2026 (math) | 95.2 | 96.2 | 97.7 | n/r |
| HLE (no tools) | 37.7 | 40.0 | 39.8 | 44.4 |
| SimpleQA-Verified | 57.9 | n/r | n/r | 75.6 |

Independent reporting from OfficeChai confirms the pattern: V4-Pro leads on IMOAnswerBench (89.8) against Claude (75.3) and Gemini (81.0) but trails GPT-5.4 (91.4); on HMMT 2026, Claude (96.2) and GPT-5.4 (97.7) pull decisively ahead; SWE-Verified shows V4-Pro at 80.6, within a fraction of Claude (80.8) and matching Gemini (80.6); Terminal-Bench 2.0 has V4-Pro (67.9) beating Claude (65.4) and close to Gemini (68.5), with GPT-5.4 leading at 75.1. For a fuller benchmark roundup, see our DeepSeek benchmarks 2026 page.

How to access V4 today

Three routes went live on release day.

- Web chat: chat.deepseek.com exposes V4 behind Expert Mode (Pro) and Instant Mode (Flash). The DeepThink toggle now switches the active V4 model between non-thinking and thinking mode.

- API: `POST /chat/completions` against `https://api.deepseek.com`, the OpenAI-compatible endpoint. An Anthropic-compatible surface is live on the same base URL.

- Open weights: published on Hugging Face under the DeepSeek collection.

A minimal Python snippet using the OpenAI SDK against V4-Pro with thinking mode enabled:

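```python
from openai import OpenAI

# DeepSeek exposes an OpenAI-compatible endpoint, so the stock SDK works.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_DEEPSEEK_KEY",  # placeholder: substitute your own key
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a careful engineer."},
        {"role": "user", "content": "Plan the migration from deepseek-chat."},
    ],
    reasoning_effort="high",
    # DeepSeek-specific fields go through extra_body; this one turns thinking on.
    extra_body={"thinking": {"type": "enabled"}},
)

# Final answer only; the reasoning trace is returned separately.
print(resp.choices[0].message.content)
```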

When thinking is enabled, the response returns reasoning_content alongside the final content. Core knobs worth knowing: temperature (DeepSeek recommends 0.0 for code, 1.3 for general chat, 1.5 for creative writing), top_p, max_tokens (set high enough to avoid JSON-mode truncation), stream=true, context caching (automatic on repeated prefixes), tool calling, FIM completion in Beta (requires thinking: {"type": "disabled"}), and Chat Prefix Completion in Beta. JSON mode is designed to return valid JSON but does not guarantee it, so include the word “json” plus a schema example in your prompt and validate the reply before you use it. If you are starting from scratch, the DeepSeek API getting started walkthrough covers account setup, keys, and the first request.
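To make the temperature and JSON-mode advice concrete, here is a small sketch of a request builder plus a defensive parser. The helper names (`build_request`, `parse_json_reply`), the task labels, and the default `max_tokens` are hypothetical. The `response_format={"type": "json_object"}` field is the usual OpenAI-compatible way to switch on JSON mode and is assumed here to apply to V4 as well, so check it against the current DeepSeek docs.

```python
import json

# Temperatures quoted in this article; the task labels are illustrative.
TEMPERATURE_BY_TASK = {"code": 0.0, "chat": 1.3, "creative": 1.5}


def build_request(model, messages, task="chat", json_mode=False, max_tokens=4096):
    """Assemble keyword arguments for client.chat.completions.create()."""
    params = {
        "model": model,
        "messages": messages,
        "temperature": TEMPERATURE_BY_TASK[task],
        "max_tokens": max_tokens,  # keep generous so JSON output is not cut off
    }
    if json_mode:
        # The prompt itself should mention "json"; fail early if it does not.
        if not any("json" in m["content"].lower() for m in messages):
            raise ValueError('JSON mode needs the word "json" in the prompt')
        params["response_format"] = {"type": "json_object"}  # assumed field
    return params


def parse_json_reply(text):
    """Return the parsed object, or None when the reply is not valid JSON."""
    try:
        return json.loads(text)
    except (json.JSONDecodeError, TypeError):
        return None
```

A call then looks like `client.chat.completions.create(**build_request(...))`, with `parse_json_reply` applied to the returned content so a truncated or malformed reply is caught rather than passed downstream.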

Think Max: the context-window gotcha

DeepSeek’s own model card recommends setting the context window to at least 384K tokens for the Think Max reasoning mode. If you self-host and set reasoning_effort="max" without raising the serving-side context limit (max_model_len in vLLM, for example), expect truncation.
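A cheap way to avoid this gotcha is to check the pairing before a deployment goes out. The guard below is a hypothetical sketch: the function name is invented, and reading "384K" as 384,000 tokens is an assumption, so adjust the constant if the model card means 384 × 1024.

```python
# Assumption: "384K" is read as 384,000 tokens; change this if the model card
# turns out to mean 384 * 1024.
THINK_MAX_MIN_CONTEXT = 384_000


def check_context_for_effort(reasoning_effort, max_model_len):
    """Raise if Think Max is requested with too small a serving context."""
    if reasoning_effort == "max" and max_model_len < THINK_MAX_MIN_CONTEXT:
        raise ValueError(
            f"reasoning_effort='max' needs max_model_len >= {THINK_MAX_MIN_CONTEXT}, "
            f"got {max_model_len}"
        )
    return max_model_len
```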

Migration off legacy model IDs

If your integration still points at deepseek-chat or deepseek-reasoner, you have a hard deadline. The announcement states that deepseek-chat and deepseek-reasoner will be fully retired and inaccessible after July 24, 2026, 15:59 UTC, and currently route to deepseek-v4-flash in non-thinking and thinking modes respectively. DeepSeek’s guidance is to keep base_url and just update the model to deepseek-v4-pro or deepseek-v4-flash.

- `deepseek-chat` → `deepseek-v4-flash` (no extra parameters needed for non-thinking).

- `deepseek-reasoner` → `deepseek-v4-flash` with `reasoning_effort="high"` and the thinking flag in `extra_body`.

- Need frontier-tier agentic performance? Swap to `deepseek-v4-pro` and price-check against the Pro tier before rollout.
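The mapping above can be captured in a small shim while you update call sites. This is a sketch under the assumptions stated in this article (both legacy IDs map to `deepseek-v4-flash`, and the reasoner needs `reasoning_effort="high"` plus the thinking flag); `LEGACY_MODEL_MAP` and `migrate_request` are hypothetical names, not part of any SDK.

```python
# Replacement parameters for each legacy model ID, per the mapping above.
LEGACY_MODEL_MAP = {
    "deepseek-chat": {"model": "deepseek-v4-flash"},
    "deepseek-reasoner": {
        "model": "deepseek-v4-flash",
        "reasoning_effort": "high",
        "extra_body": {"thinking": {"type": "enabled"}},
    },
}


def migrate_request(params):
    """Return a copy of the request kwargs with legacy model IDs rewritten."""
    replacement = LEGACY_MODEL_MAP.get(params.get("model"))
    if replacement is None:
        return dict(params)  # already on a V4 ID (or something else): untouched
    migrated = {**params, **replacement}
    if "extra_body" in replacement:
        # Preserve any extra_body fields the caller already set.
        migrated["extra_body"] = {
            **params.get("extra_body", {}),
            **replacement["extra_body"],
        }
    return migrated
```

Running existing request kwargs through `migrate_request` before the July 24, 2026 cutoff lets you confirm behaviour on the new IDs without touching `base_url` or the API key.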

The API is stateless — your client must resend the full message history with every request; only the web and app surfaces keep conversation state on DeepSeek’s side. That has not changed. Background reading: DeepSeek OpenAI SDK compatibility.
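Because the API keeps no state, the client has to carry the transcript. A minimal sketch of that pattern follows; the `Conversation` class is hypothetical, and `send` stands in for whatever function actually calls the API, so the example runs without a network connection.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class Conversation:
    """Keeps the full message history client-side and resends it every turn."""

    def __init__(self, send: Callable[[List[Message]], str], system: str = ""):
        self._send = send
        self.messages: List[Message] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # The whole history goes out on every request, not just the new turn.
        reply = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply
```

In real use, `send` would wrap something like `client.chat.completions.create(model="deepseek-v4-flash", messages=msgs).choices[0].message.content`. Note that the resent history is billed as input on every turn, which is where the cache-hit pricing starts to matter.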

Licensing and hardware

Both V4 Preview models ship under the MIT license for weights and code, the same stance as V3.2, V3.1, and R1. Some older releases (V3 base, Coder-V2, VL2) still split MIT code from a separate DeepSeek Model License on weights, so check the specific Hugging Face repo if licensing matters for your deployment. On the hardware side, CNN reports that DeepSeek partnered with Huawei for compute, with Huawei confirming it supports V4 via its Supernode technology combining Ascend 950 clusters. Counterpoint’s Wei Sun highlighted that V4 was run on domestic chips from Huawei and Cambricon, in contrast with R1’s Nvidia training, and argued that this could give V4 an even bigger impact than R1 by accelerating domestic adoption.

What V4 does not fix

Three honest caveats. First, the benchmark gap on expert-level reasoning is real: HLE puts V4-Pro at 37.7% — below Claude (40.0%), GPT-5.4 (39.8%) and well below Gemini-3.1-Pro (44.4%), with DeepSeek acknowledging the gap directly. Second, factual world-knowledge retrieval still trails Gemini on SimpleQA-Verified by a wide margin. Third, the “Preview” label means exactly what it says — pricing, routing, and tokenizer details can still move. Keep your eval harness warm. For a broader look at where DeepSeek slots against the competition, see DeepSeek vs Claude and DeepSeek vs ChatGPT, or the full DeepSeek news hub for the next beat in the story.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What is the DeepSeek V4 release date?

DeepSeek V4 Preview was released on April 24, 2026, with two open-weight model IDs going live the same day: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B total / 13B active), both with a one-million-token context window under the MIT license. The rollout covered Hugging Face weights, the API, and chat.deepseek.com simultaneously. See the DeepSeek V4 model page for tier details.

How do I migrate from deepseek-chat or deepseek-reasoner to V4?

Keep your base_url and API key, swap the model field to deepseek-v4-flash or deepseek-v4-pro, and add reasoning_effort plus the thinking flag if you need the reasoner behaviour. The legacy IDs currently route to deepseek-v4-flash and retire on July 24, 2026, at 15:59 UTC. Step-by-step in our DeepSeek API best practices guide.

Is DeepSeek V4 free to use?

The web chat at chat.deepseek.com is free for individual use and now defaults to V4. The open weights are free to download under MIT and run locally if you have the hardware. API usage is metered per token — $0.14/M input miss and $0.28/M output for Flash, $1.74/M list / $0.435/M promo input miss and $3.48/M list / $0.87/M promo output for Pro (75% promo runs through 2026-05-31) as of April 24, 2026. More on the free surface at is DeepSeek free.

Does DeepSeek V4 beat GPT-5.4 or Claude Opus 4.6?

On benchmarks, no, not across the board. DeepSeek itself says V4-Pro trails frontier closed models by 3 to 6 months on reasoning. V4-Pro leads Claude on Terminal-Bench 2.0 and LiveCodeBench, roughly matches Claude and Gemini on SWE-Bench Verified, trails all three on HLE, and trails Claude and GPT-5.4 on HMMT 2026 math (no Gemini score is listed there). The pitch is cost, not supremacy. Full head-to-heads at DeepSeek comparisons.

Can I run DeepSeek V4 locally?

Flash is 160 GB on disk and will fit on a workstation with enough unified memory or VRAM (think a maxed-out M-series Mac or a multi-GPU box); Pro is 865 GB and realistically needs a small cluster or aggressive quantization to serve. Both are published under MIT on Hugging Face. For a practical setup walkthrough, see install DeepSeek locally.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Official: DeepSeek V4 Preview release notes (api-docs.deepseek.com). V4 launch announcement, model IDs, retirement deadline. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. V4-Pro-Max benchmark positioning vs GPT-5.x and Gemini. Last checked: April 30, 2026

Context sources

- News: Bloomberg, "DeepSeek unveils newest flagship a year after AI breakthrough". Launch-day coverage of V4 Flash / Pro debut. Last checked: April 30, 2026

- Analysis: Simon Willison, V4 launch day writeup. Independent confirmation of V4 sizing and pricing. Last checked: April 30, 2026

- News: CNN coverage of DeepSeek V4 Preview. Framing V4 as preview rival to OpenAI/Anthropic/Google. Last checked: April 30, 2026

- News: OfficeChai, DeepSeek V4 independent benchmark reporting. Cross-check on IMOAnswerBench/HMMT/SWE-Verified scores. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.