DeepSeek Janus: The Unified Multimodal Model, Tested and Explained

If you have been hunting for an open-weight model that can both *describe* an image and *generate* one from a prompt, DeepSeek Janus is the family worth knowing about. Most multimodal systems pick a side: vision-language models read pictures, diffusion models draw them. DeepSeek Janus does both, using a single transformer with two separate visual encoders bolted on. The current flagship, Janus-Pro-7B, was released on January 27, 2025 and posts higher GenEval and DPG-Bench scores than DALL-E 3 and Stable Diffusion 3 Medium on DeepSeek’s own evaluations. This guide walks through what Janus actually is, how the architecture differs from typical vision-language models, the benchmark numbers in context, and how to run it locally or test it in a browser.

What DeepSeek Janus is

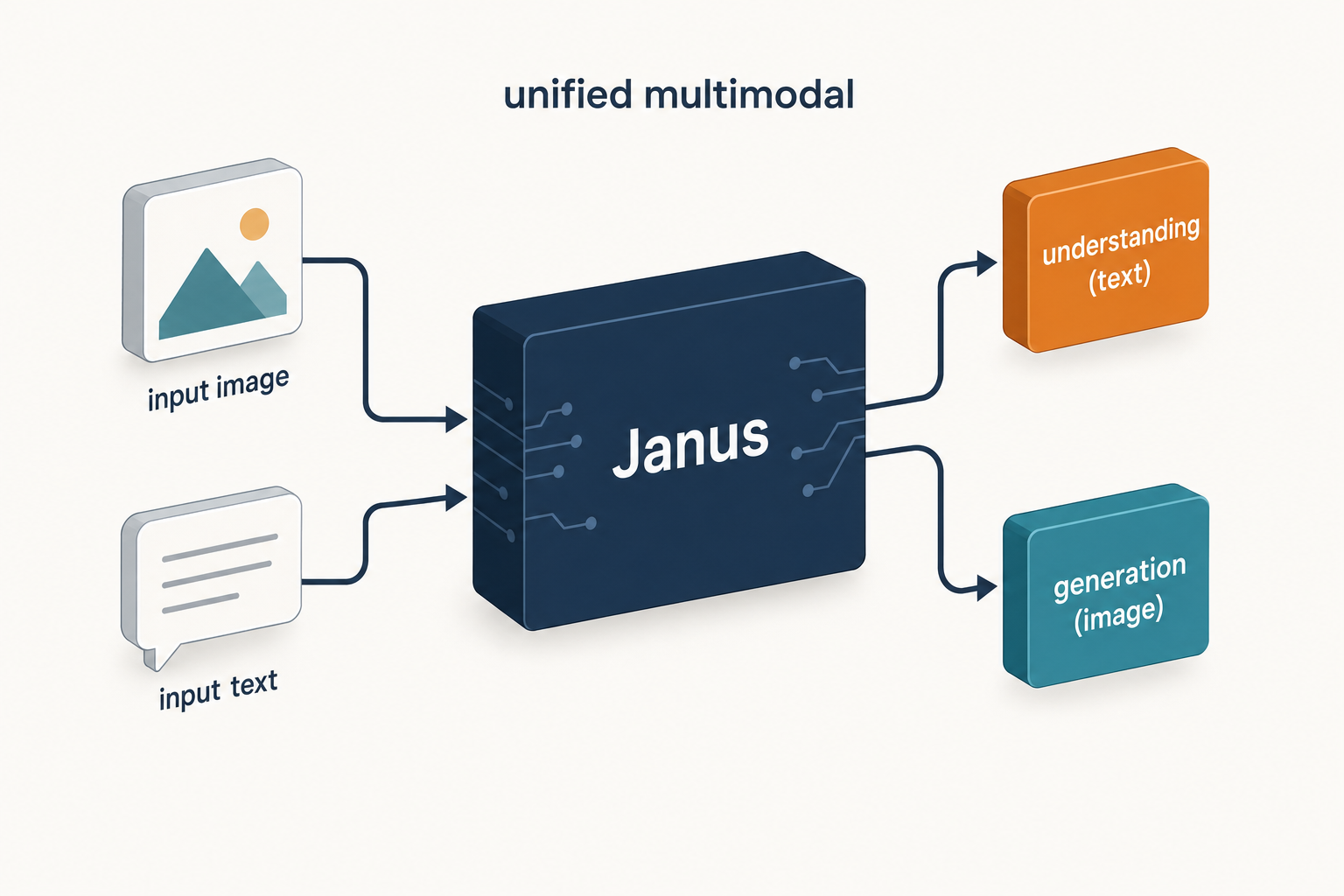

DeepSeek Janus is a family of open-weight multimodal models that handle both image understanding (look at a picture, answer questions about it) and image generation (text-to-image) inside a single autoregressive transformer. Where earlier unified models asked one visual encoder to serve both tasks, Janus decouples visual encoding into separate pathways while keeping a single, unified transformer for processing. That decoupling eases the conflict between the visual encoder’s two roles, understanding and generation, and makes the framework more flexible.

The line is distinct from DeepSeek’s text-only generation models. If you want a chat or coding model, the DeepSeek V4-Flash tier or its frontier sibling DeepSeek V4-Pro are the right entry points. Janus is the multimodal sibling — closer in spirit to DeepSeek VL2, but with image generation added to the mix.

Architecture and lineage

The Janus family has shipped three releases so far. The original Janus (October 2024) was the proof of concept. JanusFlow followed on November 13, 2024, pairing the unified model with rectified flow for image generation. Janus-Pro, released on January 27, 2025, is an advanced version of Janus that significantly improves both multimodal understanding and visual generation, and it is the version most teams should look at today.

Decoupled visual encoding — the core trick

Most unified multimodal models use one image encoder for both reading and drawing. The Janus team argues that the two jobs need different encodings, and that a single encoder forces a compromise. The main design principle of the Janus architecture is therefore to decouple visual encoding, with a different encoder for each type of task. For image understanding, Janus uses SigLIP, a CLIP-style image-text encoder that extracts semantic representations from images. For generation, a separate VQ tokenizer turns target images into discrete IDs that the transformer can predict autoregressively.
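To make the decoupling concrete, here is a toy sketch in plain Python. It is not the Janus implementation: the encoder and tokenizer are trivial stand-ins, and every name in it (`understanding_encoder`, `vq_tokenizer`, `build_inputs`, `EMBED_DIM`, `CODEBOOK_SIZE`) is hypothetical. It only illustrates the routing idea, where understanding inputs arrive as continuous features, generation targets arrive as discrete codebook IDs, and both end up in one sequence for a shared model.

```python
import random
from typing import List, Tuple

EMBED_DIM = 4      # toy width of the shared transformer (assumed)
CODEBOOK_SIZE = 8  # toy VQ codebook size (assumed)

Vector = List[float]
_rng = random.Random(0)

# Toy lookup table that embeds discrete image-token IDs (generation pathway).
CODEBOOK: List[Vector] = [
    [_rng.uniform(-1.0, 1.0) for _ in range(EMBED_DIM)]
    for _ in range(CODEBOOK_SIZE)
]


def understanding_encoder(patches: List[Vector]) -> List[Vector]:
    """Stand-in for a semantic encoder: one continuous vector per patch."""
    return [[sum(p) / len(p)] * EMBED_DIM for p in patches]


def vq_tokenizer(patches: List[Vector]) -> List[int]:
    """Stand-in for a VQ tokenizer: one discrete codebook ID per patch."""
    return [int(sum(p) * 1000) % CODEBOOK_SIZE for p in patches]


def build_inputs(
    text_embeds: List[Vector], patches: List[Vector], task: str
) -> Tuple[List[Vector], List[int]]:
    """Route the image through one pathway and append it to the text.

    Returns the input sequence for the shared model plus the discrete
    image IDs it should predict (empty for understanding).
    """
    if task == "understanding":
        return text_embeds + understanding_encoder(patches), []
    if task == "generation":
        ids = vq_tokenizer(patches)
        return text_embeds + [CODEBOOK[i] for i in ids], ids
    raise ValueError(f"unknown task: {task}")


text = [[0.0] * EMBED_DIM for _ in range(3)]  # 3 toy text tokens
image = [[0.1, 0.2, 0.3], [0.4, 0.5, 0.6]]    # 2 toy image patches
und_seq, und_targets = build_inputs(text, image, "understanding")
gen_seq, gen_targets = build_inputs(text, image, "generation")
print(len(und_seq), und_targets)
print(len(gen_seq), gen_targets)
```

Both calls produce a sequence of the same width for the same downstream model; only the pathway that produced the image part differs.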

Concretely, for multimodal understanding Janus-Pro uses SigLIP-L as the vision encoder, which supports 384 x 384 image input. For image generation, it uses a tokenizer with a downsample rate of 16. Both pathways feed the same shared transformer, which is built on the open DeepSeek-LLM backbones.
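A quick back-of-the-envelope check on what a downsample rate of 16 implies: the tokenizer emits one token per 16 x 16 pixel block, so the token count grows with the square of the image side. The sketch below assumes a square 384 x 384 generation target. The article only states 384 x 384 for the understanding input, so treat the generation resolution as an assumption. `image_token_count` is an illustrative helper, not a Janus API.

```python
def image_token_count(side_px: int, downsample: int = 16) -> int:
    """Number of discrete tokens for a square image of side_px pixels."""
    if side_px % downsample != 0:
        raise ValueError("side_px must be a multiple of the downsample rate")
    per_side = side_px // downsample
    return per_side * per_side


# 384 is the assumed generation resolution; the other sizes are for scale.
for side in (384, 512, 1024):
    print(side, image_token_count(side))
```

Under that assumption one image costs 576 autoregressive steps, while a 1024 x 1024 image would cost 4,096, which hints at why higher resolutions get expensive for this style of generator.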

Sizes and parameter counts

Janus-Pro ships in two sizes that share the same architecture and differ only in backbone scale: Janus-Pro-1B is built on DeepSeek-LLM-1.5b-base and Janus-Pro-7B on DeepSeek-LLM-7b-base.

| Model | Backbone | Image input | Image generation | Released |
|---|---|---|---|---|
| Janus (original) | DeepSeek-LLM 1.3B | SigLIP | VQ tokenizer | 2024-10-20 |
| JanusFlow | DeepSeek-LLM 1.3B | SigLIP | Rectified flow | 2024-11-13 |
| Janus-Pro-1B | DeepSeek-LLM-1.5B | SigLIP-L (384×384) | VQ tokenizer | 2025-01-27 |
| Janus-Pro-7B | DeepSeek-LLM-7B | SigLIP-L (384×384) | VQ tokenizer | 2025-01-27 |

Benchmarks: where Janus-Pro actually lands

The Janus-Pro paper benchmarks the 7B model on two flavours of evaluation: multimodal understanding (POPE, MME-Perception, GQA, MMMU, MMBench) and text-to-image generation (GenEval, DPG-Bench). The numbers below come from the official paper on arXiv (arXiv:2501.17811).

| Benchmark | What it measures | Janus-Pro-7B | Comparison |
|---|---|---|---|
| GenEval (overall) | Text-to-image instruction following | 0.80 | DALL-E 3: 0.67 · SD3-Medium: 0.74 · Janus: 0.61 |
| DPG-Bench | Dense / detailed prompt accuracy | 84.19 | Higher than every competitor reported in the paper |
| MMBench | Multimodal understanding | 79.2 | Janus: 69.4 · TokenFlow: 68.9 · MetaMorph: 75.2 |
| MMMU | Multimodal reasoning | 41.0 | Outperforms TokenFlow-XL (38.7) |

Janus-Pro-7B achieved a score of 79.2 on the multimodal understanding benchmark MMBench, surpassing leading unified multimodal models such as Janus (69.4), TokenFlow (68.9) and MetaMorph (75.2). On the GenEval leaderboard, Janus-Pro-7B scores 0.80, outperforming Janus (0.61), DALL-E 3 (0.67), and Stable Diffusion 3 Medium (0.74). On DPG-Bench, Janus-Pro achieves a score of 84.19, surpassing all other methods reported.

One important caveat: these were internal tests by DeepSeek, and independent testing is still needed for a fuller picture of the model’s capabilities. Treat the headline numbers as DeepSeek-reported rather than third-party verified, and weigh them against the strengths and weaknesses below.
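To put the reported gaps in proportion, the short script below turns the DeepSeek-reported GenEval and MMBench scores from this section into absolute and relative margins. The scores are copied from the article; `margins` is an illustrative helper, and the output says nothing about whether the underlying numbers replicate.

```python
from typing import Dict, Tuple

# Scores as reported in this section (DeepSeek's own evaluations).
GENEVAL = {"Janus-Pro-7B": 0.80, "DALL-E 3": 0.67, "SD3-Medium": 0.74, "Janus": 0.61}
MMBENCH = {"Janus-Pro-7B": 79.2, "Janus": 69.4, "TokenFlow": 68.9, "MetaMorph": 75.2}


def margins(
    scores: Dict[str, float], leader: str = "Janus-Pro-7B"
) -> Dict[str, Tuple[float, float]]:
    """Map each rival to (absolute gap, relative gap in percent) vs the leader."""
    top = scores[leader]
    return {
        name: (round(top - score, 2), round(100 * (top - score) / score, 1))
        for name, score in scores.items()
        if name != leader
    }


for label, table in (("GenEval", GENEVAL), ("MMBench", MMBENCH)):
    for rival, (absolute, relative) in margins(table).items():
        print(f"{label}: +{absolute} over {rival} ({relative}% relative)")
```

On these figures the GenEval lead over DALL-E 3 is 0.13 points (about 19.4% relative), while the lead over SD3-Medium is a narrower 0.06 (about 8.1%).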

Strengths — where Janus wins

- Single model, two jobs. You do not need to run a vision-language model alongside a separate diffusion model. One checkpoint covers both.

- Strong instruction following on text-to-image. The GenEval and DPG-Bench numbers favour Janus-Pro for prompts that demand specific objects, colours, counts and positions.

- Realistic open weights. The 7B model fits on a single 24 GB consumer GPU at bf16, and the 1B variant is small enough to run in a browser via WebGPU and Transformers.js — there are public demos doing exactly that.

- Decoupled architecture is a real engineering win. The researchers introduced a decoupled architecture for visual understanding and generation, effectively used synthetic data to enhance training, and posted leading performance through data scaling techniques.
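The 24 GB claim in the list above is easy to sanity-check with arithmetic. bf16 stores each parameter in 2 bytes, so the weights alone for a nominal 7 billion parameters need about 14 GB. The sketch below assumes the nominal counts (7e9 and 1e9) rather than exact checkpoint sizes, and it ignores activations, the KV cache and any runtime overhead, so read the result as a floor. `weight_memory_gib` is an illustrative helper.

```python
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp16": 2, "int8": 1}


def weight_memory_gib(n_params: float, dtype: str = "bf16") -> float:
    """Memory for the weights alone, in GiB (2**30 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 2**30


# Nominal parameter counts; the real checkpoints may differ somewhat.
for name, n_params in (("Janus-Pro-7B", 7e9), ("Janus-Pro-1B", 1e9)):
    print(f"{name}: {weight_memory_gib(n_params):.2f} GiB in bf16")
```

That leaves roughly 10 GiB of headroom on a 24 GB card for everything the estimate leaves out, which is consistent with the single-GPU claim but not a guarantee for every batch size or sequence length.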

Weaknesses — where it falls short

- Low native resolution. The understanding encoder takes 384×384 inputs and the generation pipeline operates at similarly modest resolutions. If you need 1024×1024 photographic output, dedicated diffusion models still pull ahead on raw fidelity.

- Humans are hard. Side-by-side comparisons showed Janus-Pro-7B is better at creating realistic images, though it struggles significantly with generating humans. Faces, hands and full-body proportions are common failure modes.

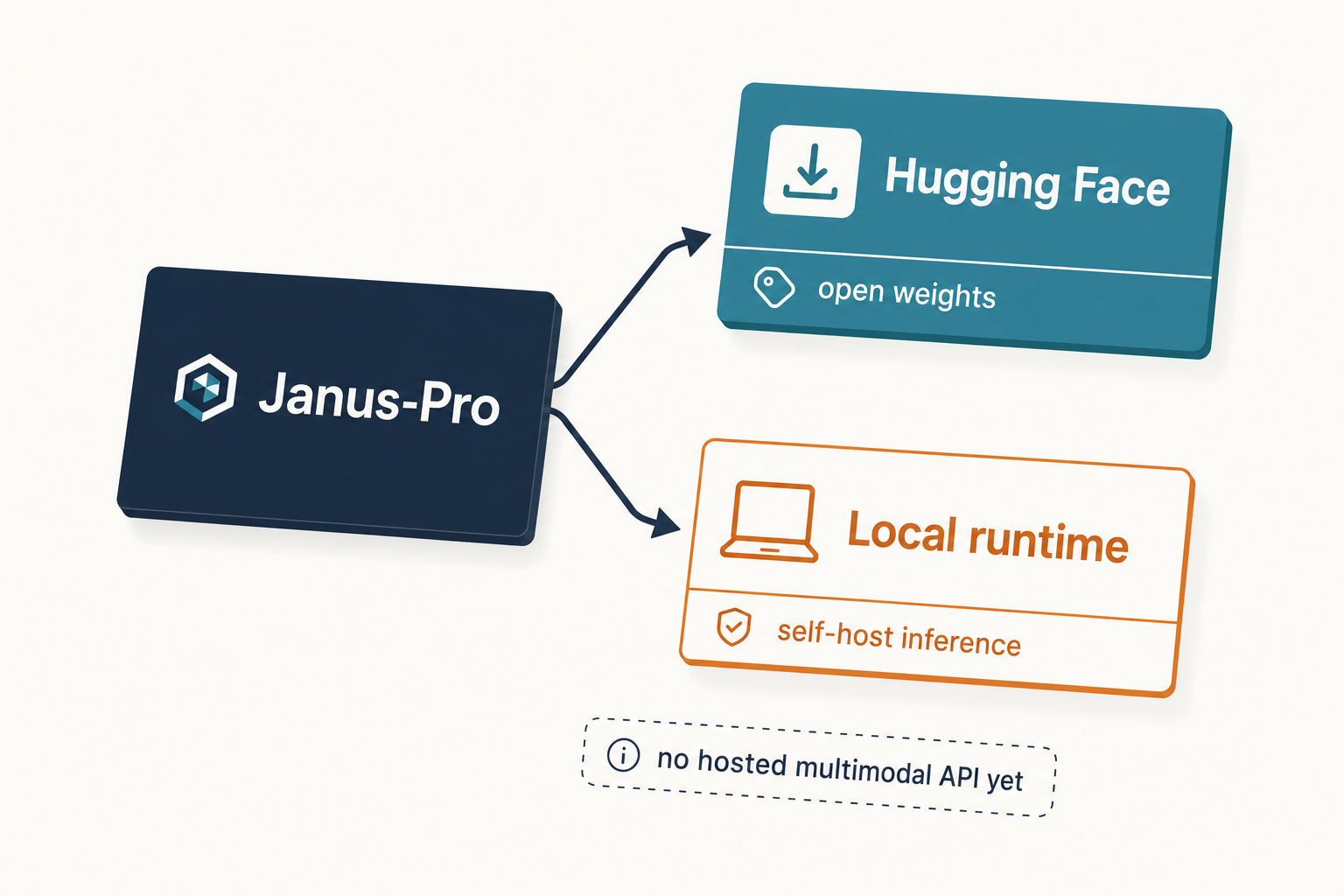

- No first-party API. Unlike the V4 chat models, Janus is not (yet) a paid endpoint on api.deepseek.com. You self-host or use a community demo.

- Licence is split. See the next section: code is MIT, weights are not.

Licensing — read this before you deploy

This trips people up. The Janus code repository is licensed under the MIT License. The use of Janus-Pro models is subject to the DeepSeek Model License. So the framework is permissive, but the weights themselves carry DeepSeek’s bespoke model licence — which does permit commercial use under its terms but is not the same as MIT. If your legal team requires MIT-licensed weights, the V4 family is a better fit; for Janus, read the model licence on the Hugging Face repo and confirm it covers your deployment. More background on the open-source angle is in our DeepSeek open-source guide.

How to access DeepSeek Janus

There is no official DeepSeek-hosted API for Janus. You have three practical options.

1. Hugging Face Spaces (no install)

The fastest test path. The Hugging Face Spaces demo lets you enter prompts and generate text or images directly in your browser. This requires no installation or setup. Good for sanity-checking whether Janus-Pro is even close to what you need.

2. Local Python (Hugging Face Transformers)

Pull the weights from deepseek-ai/Janus-Pro-7B and run them with the project’s own loader. The minimal Python pattern from the official repository is:

    import torch
    from transformers import AutoModelForCausalLM
    from janus.models import MultiModalityCausalLM, VLChatProcessor
    from janus.utils.io import load_pil_images  # used later to load prompt images

    model_path = "deepseek-ai/Janus-Pro-7B"

    # The processor bundles the tokenizer with the image preprocessing.
    vl_chat_processor = VLChatProcessor.from_pretrained(model_path)
    tokenizer = vl_chat_processor.tokenizer

    # trust_remote_code lets Transformers load the custom Janus model class.
    vl_gpt = AutoModelForCausalLM.from_pretrained(
        model_path, trust_remote_code=True
    )
    # Cast to bf16, move to the GPU and switch to inference mode.
    vl_gpt = vl_gpt.to(torch.bfloat16).cuda().eval()

For a more general walkthrough of running DeepSeek models on your own hardware, see install DeepSeek locally and the DeepSeek hardware calculator to size GPUs.
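The loader in this section stops once the model is on the GPU. As a hedged sketch of the next step, the code below asks a question about one image. The role tags, the `<image_placeholder>` marker and the `prepare_inputs_embeds` / `language_model.generate` calls are written from memory of the project README, so verify them against the current repository before relying on them. `build_conversation` and `describe_image` are hypothetical helper names, and `describe_image` expects the `vl_gpt` and `vl_chat_processor` objects created by the loader.

```python
from typing import Dict, List


def build_conversation(question: str, image_path: str) -> List[Dict]:
    """Two-turn prompt: a user turn with one image, then an empty assistant turn."""
    return [
        {
            "role": "<|User|>",
            "content": f"<image_placeholder>\n{question}",
            "images": [image_path],
        },
        {"role": "<|Assistant|>", "content": ""},
    ]


def describe_image(vl_gpt, vl_chat_processor, question: str, image_path: str) -> str:
    """Answer a question about one image with an already-loaded model."""
    # Imported lazily so the prompt helper above works without the janus package.
    from janus.utils.io import load_pil_images

    tokenizer = vl_chat_processor.tokenizer
    conversation = build_conversation(question, image_path)
    pil_images = load_pil_images(conversation)
    inputs = vl_chat_processor(
        conversation=conversation, images=pil_images, force_batchify=True
    ).to(vl_gpt.device)

    # Merge text and image embeddings, then decode with the language model.
    inputs_embeds = vl_gpt.prepare_inputs_embeds(**inputs)
    outputs = vl_gpt.language_model.generate(
        inputs_embeds=inputs_embeds,
        attention_mask=inputs.attention_mask,
        pad_token_id=tokenizer.eos_token_id,
        bos_token_id=tokenizer.bos_token_id,
        eos_token_id=tokenizer.eos_token_id,
        max_new_tokens=512,
        do_sample=False,  # greedy decoding for repeatable answers
        use_cache=True,
    )
    return tokenizer.decode(outputs[0].cpu().tolist(), skip_special_tokens=True)
```

A call would look like `describe_image(vl_gpt, vl_chat_processor, "What is in this picture?", "photo.png")`, where `photo.png` is a placeholder path.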

3. Browser via Transformers.js (1B only)

The 1B variant is small enough to run client-side, in the browser, using WebGPU via Transformers.js. Useful for demos, untrustworthy for production.

How Janus relates to the rest of the API surface

It does not, directly. DeepSeek’s commercial API exposes the V4 family — chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. Both deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active) are open-weight MoE models released under the MIT licence on April 24, 2026, with a 1,000,000-token default context window and up to 384,000 output tokens. Thinking mode on those models is a request parameter (reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}), not a separate model ID, and the response returns reasoning_content alongside the final content.

Legacy IDs deepseek-chat and deepseek-reasoner still work and currently route to deepseek-v4-flash until they retire on 2026-07-24 at 15:59 UTC. The API itself is stateless — you resend the conversation history with every request, in contrast to the web and app, which keep session state for you. Janus is none of this: it is a separate, self-hosted vision model. If you need DeepSeek’s text models programmatically, start with the DeepSeek API documentation.
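For contrast with self-hosting Janus, here is a minimal sketch of what "stateless" means for the text API: the client keeps the history and resends all of it on every call. It uses only the standard library. The endpoint, model ID and thinking fields come from the description above; placing `reasoning_effort` and `thinking` at the top level of the JSON body is an assumption based on how `extra_body` is merged by the OpenAI Python SDK, and the Bearer header is the usual convention for OpenAI-compatible APIs. `build_payload` and `send` are hypothetical helpers.

```python
import json
import urllib.request
from typing import Dict, List

API_URL = "https://api.deepseek.com/chat/completions"


def build_payload(
    history: List[Dict[str, str]],
    user_message: str,
    model: str = "deepseek-v4-flash",
    thinking: bool = False,
) -> dict:
    """Return a request body that carries the full conversation so far."""
    messages = list(history) + [{"role": "user", "content": user_message}]
    payload: dict = {"model": model, "messages": messages}
    if thinking:
        # Assumed raw-JSON form of the SDK's reasoning_effort / extra_body options.
        payload["reasoning_effort"] = "high"
        payload["thinking"] = {"type": "enabled"}
    return payload


def send(payload: dict, api_key: str) -> dict:
    """POST the payload and return the decoded JSON response."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


history = [
    {"role": "user", "content": "Name one unified multimodal model."},
    {"role": "assistant", "content": "Janus-Pro."},
]
payload = build_payload(history, "Who released it?", thinking=True)
print(json.dumps(payload, indent=2))
```

The example only builds and prints the body; calling `send(payload, api_key)` would make the actual request. After each reply, the caller appends both the user message and the assistant answer to `history` before the next turn.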

Pricing snapshot

Janus has no DeepSeek-hosted price tag — the cost is whatever GPU time you spend running it. For reference, here is what the current text-model API costs on DeepSeek’s own pricing page as of April 2026 (always verify on the latest DeepSeek API pricing page before committing):

| Model | Input (cache hit) $/1M | Input (cache miss) $/1M | Output $/1M |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 (promo, list $0.0145) | $0.435 (promo, list $1.74) | $0.87 (promo, list $3.48) |

Off-peak discounts ended on September 5, 2025 and have not been reintroduced.
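Per-million rates are hard to reason about for a single request, so here is a small calculator built on the rates quoted in this section (promo rates for deepseek-v4-pro). The prices are a snapshot copied from the article, not live data, and `request_cost_usd` is an illustrative helper rather than anything DeepSeek ships.

```python
# USD per 1M tokens, copied from the pricing table (v4-pro at promo rates).
PRICES = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"cache_hit": 0.003625, "cache_miss": 0.435, "output": 0.87},
}


def request_cost_usd(
    model: str, hit_tokens: int, miss_tokens: int, output_tokens: int
) -> float:
    """Cost of one request given cached input, uncached input and output tokens."""
    rates = PRICES[model]
    total = (
        hit_tokens * rates["cache_hit"]
        + miss_tokens * rates["cache_miss"]
        + output_tokens * rates["output"]
    )
    return total / 1_000_000


# A chat turn with 100k cached tokens, 20k new input tokens and 5k output tokens.
for model in PRICES:
    cost = request_cost_usd(model, 100_000, 20_000, 5_000)
    print(f"{model}: ${cost:.5f}")
```

Because the API is stateless and history is resent on every turn, the cache-hit column tends to dominate long conversations, which is why it is modelled separately here.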

Best use cases for Janus

- Interleaved understanding + generation in one workflow. Receive a sketch, describe it, then generate variations from a refined prompt — without juggling two model APIs.

- Educational and research demos. The 1B model in a browser is a teaching tool’s dream.

- Synthetic data pipelines. Caption-then-render loops for training other models.

- Lightweight content prototyping. See DeepSeek for content creation for adjacent workflows.

Where Janus is the wrong tool: high-resolution photoreal output, long-form vision-language reasoning over high-DPI documents (a job for DeepSeek VL2 or larger frontier multimodal models), or anything where you need a managed API with an SLA.

Comparable alternatives

If Janus is not quite right, there are obvious adjacent choices to weigh up:

- For text-only chat or reasoning workloads — compare DeepSeek vs ChatGPT or DeepSeek vs Claude.

- For broader multimodal options — the open-source AI like DeepSeek roundup covers Qwen-VL, Llama 3.2 Vision, InternVL and more.

- For the full DeepSeek line-up — browse the DeepSeek models hub.

Verdict

DeepSeek Janus is the most interesting open-weight unified multimodal model published in the last twelve months. Janus-Pro-7B’s GenEval and DPG-Bench numbers are credible, the decoupled-encoder design is a genuine architectural contribution, and the 1B variant is a gift to anyone who teaches or demos. It is not a replacement for a polished hosted image API, and it is not the right model for high-resolution photorealism or human figures — but for research, prototyping and self-hosted multimodal pipelines, it earns a place in the toolbox.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is DeepSeek Janus used for?

DeepSeek Janus is a unified multimodal model that handles both image understanding and text-to-image generation in a single transformer. Practical uses include captioning images, answering questions about a picture, generating images from text prompts, and building research pipelines that combine the two. It is a self-hosted model rather than a paid API; for hosted text models, see the DeepSeek API documentation.

How does Janus-Pro compare to DALL-E 3?

On DeepSeek’s own benchmarks, Janus-Pro-7B scores 0.80 on GenEval, against DALL-E 3’s 0.67, and 84.19 on DPG-Bench. Both benchmarks test instruction following for text-to-image generation. In practice DALL-E 3 still produces stronger photorealism, especially for human figures, while Janus-Pro is competitive on prompt adherence and is open-weight. See our broader AI comparison hub for context.

Is DeepSeek Janus free and open source?

The Janus code is MIT-licensed, but the model weights are governed by the separate DeepSeek Model License, which permits commercial use under its terms. That makes Janus open-weight rather than fully MIT-licensed end-to-end. You can download the weights from Hugging Face at no cost; what you pay is your own compute. More on licensing nuances in our DeepSeek open-source guide.

Can I run Janus-Pro-7B locally?

Yes. The 7B model loads in bf16 on a single 24 GB consumer GPU using Hugging Face Transformers and the official Janus loader, and the 1B variant runs in a browser via WebGPU. For step-by-step deployment guidance applicable to most DeepSeek models, see install DeepSeek locally and the DeepSeek hardware calculator to estimate VRAM needs.

Does DeepSeek offer Janus through its hosted API?

Not currently. DeepSeek’s hosted API at POST /chat/completions exposes the V4 text models (deepseek-v4-pro and deepseek-v4-flash), with legacy IDs deepseek-chat and deepseek-reasoner retiring on 2026-07-24 at 15:59 UTC. Janus is self-hosted only — you run the open weights yourself. See all DeepSeek models for what is currently available via API versus open weights.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek Janus-Pro-7B model card. Canonical 7B Janus-Pro weights and loader. Last checked: April 30, 2026

- Model card: DeepSeek Janus-Pro-1B model card. 1B variant runnable in browser via Transformers.js. Last checked: April 30, 2026

Technical references

- Technical report: Janus-Pro paper on arXiv (2501.17811). GenEval 0.80, DPG-Bench 84.19, MMBench 79.2 figures. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.