DeepSeek V3 Explained: The 671B MoE That Reset Open-Weight Economics

If you landed here asking whether DeepSeek V3 is still the model you should be building on in 2026, the short answer is: probably not for new projects, but understanding it matters more than ever. DeepSeek V3 was the December 2024 release that forced every major AI lab to re-examine training budgets, and its architecture still underpins the DeepSeek-R1 reasoning model and the V3.1 / V3.2 updates that followed. With DeepSeek V4 now shipping as the current generation, V3 has moved from frontier flagship to reference point — the model you study to understand how the MoE cost curve actually bends. This guide covers what V3 is, how it was built, what it scored, where it still has a role, and how to migrate off it cleanly.

What DeepSeek V3 is

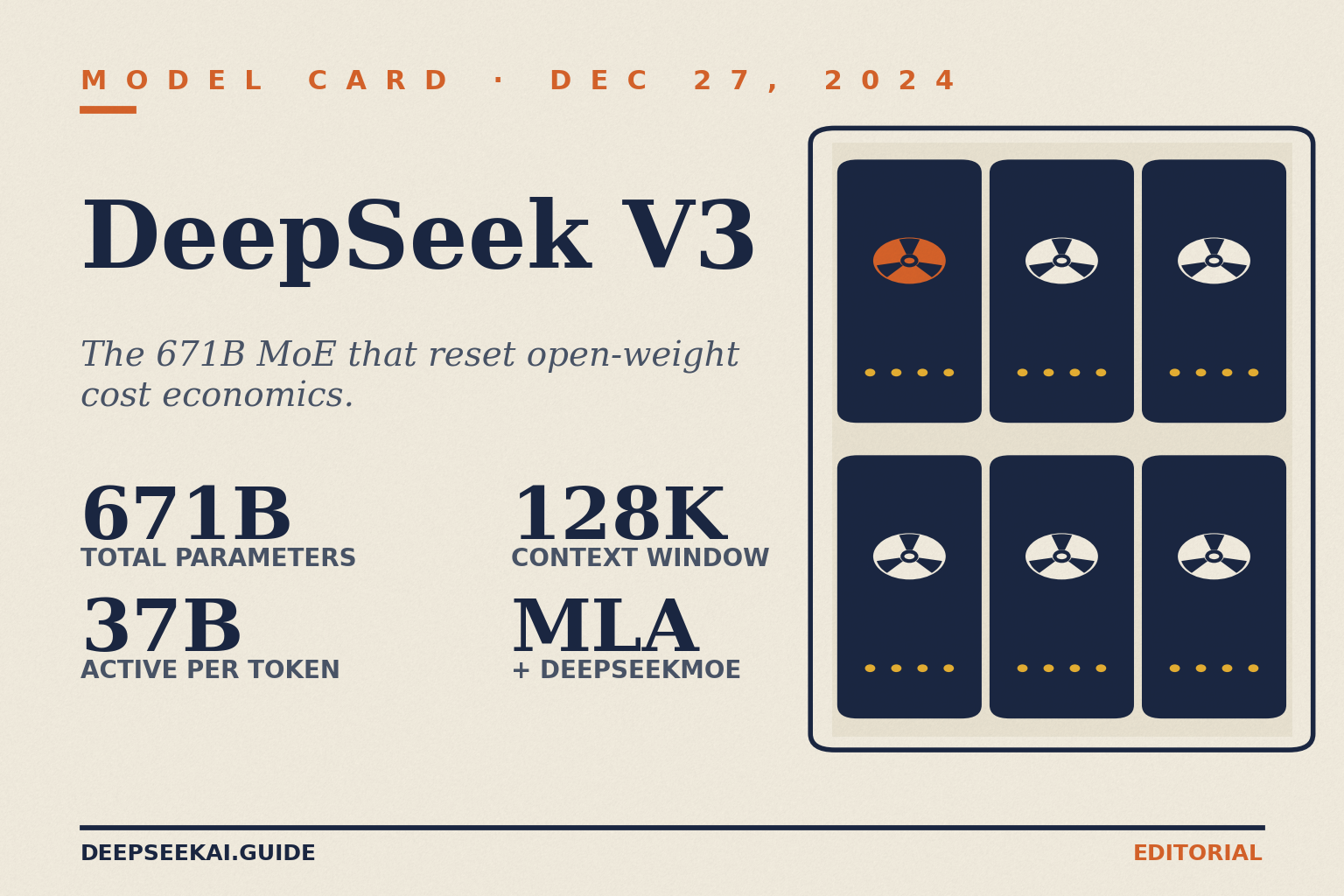

DeepSeek V3 is an open-weight Mixture-of-Experts (MoE) language model from the Hangzhou-based lab DeepSeek, first released on December 27, 2024. It has 671B total parameters, of which 37B are activated for each token, and it adopts the Multi-head Latent Attention (MLA) and DeepSeekMoE architectures. The release drew global attention not for raw benchmarks alone but for the combination: frontier-competitive quality, open weights on Hugging Face, a detailed technical report, and a reported training bill far below what Western labs were believed to spend on comparable models.

V3 is no longer the current generation. DeepSeek V4 launched on April 24, 2026, shipping as two open-weight MoE tiers — deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active) — both under the MIT license with a 1M-token default context window. If you are choosing a model today, start at the DeepSeek V4 page. If you are auditing an existing integration that still references V3, keep reading — the rest of this article is for you.

Architecture and lineage

V3 sits at a specific point in the DeepSeek research line: Transformer → DeepSeek-V2 (May 2024) → DeepSeek-V3 (December 2024) → R1 (January 2025, built on the same base) → V3.1 (August 2025) → V3.2 (late 2025) → V4 (April 2026). Two architectural choices from V2 carry through V3 and are worth naming:

- Multi-head Latent Attention (MLA) — compresses the KV cache into a low-dimensional latent space, which is what makes long-context inference affordable at this scale.

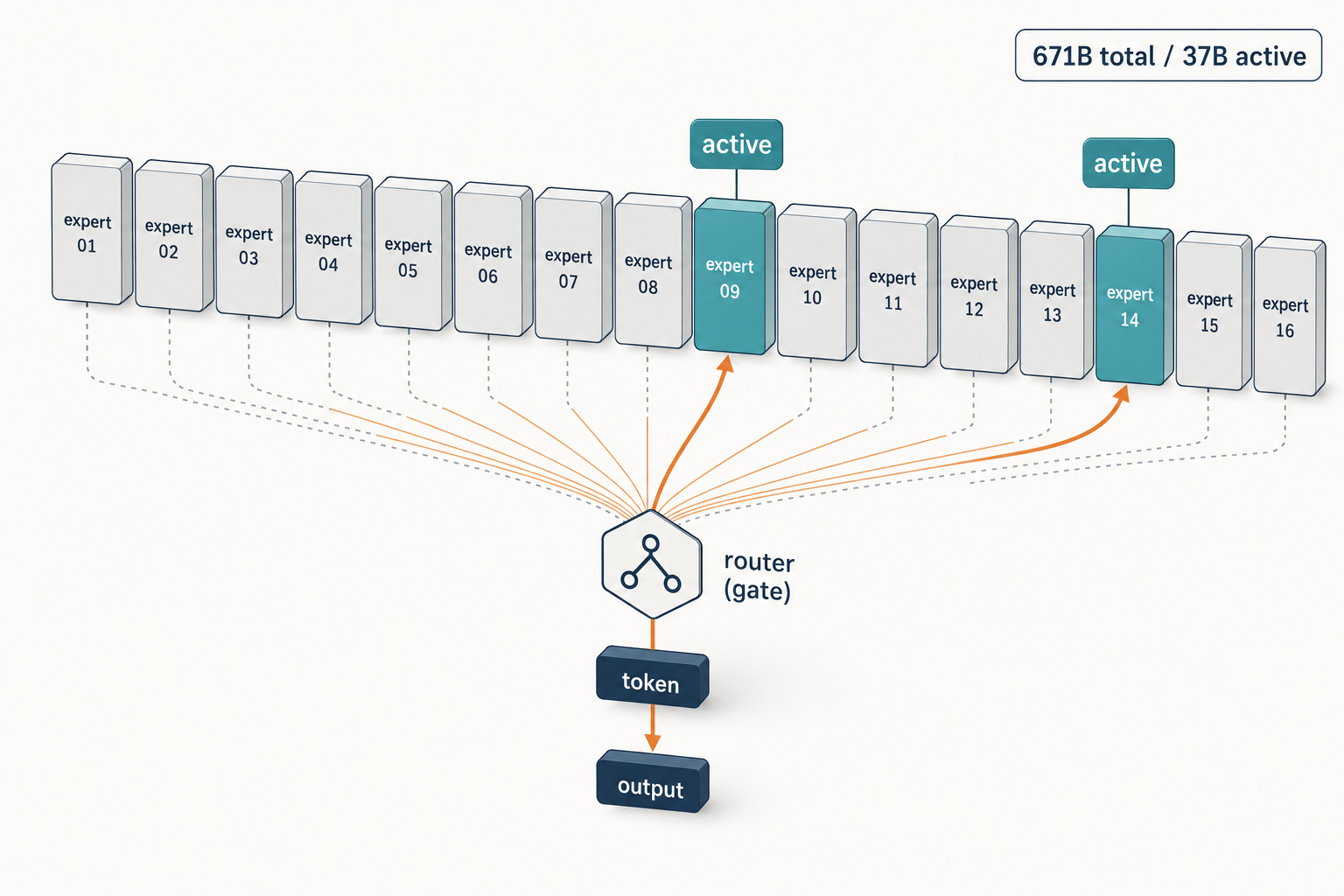

- DeepSeekMoE — fine-grained expert specialisation with shared experts. Each MoE layer consists of 1 shared expert and 256 routed experts, where the intermediate hidden dimension of each expert is 2048, and among the routed experts, 8 experts are activated for each token.
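The routing described in the list above can be sketched in a few lines. This is a toy illustration, not DeepSeek's implementation: the expert counts (256 routed, 8 active) come from the text, while the sigmoid affinity scores, the renormalised gate weights, and the function name `route_token` are assumptions made for the example.

```python
import math
import random

NUM_ROUTED = 256  # routed experts per MoE layer (from the text)
TOP_K = 8         # routed experts activated per token (from the text)


def route_token(affinities, top_k=TOP_K):
    """Pick the top_k experts and return {expert_index: gate_weight}.

    Assumption: gate weights are the selected affinities renormalised to sum to 1.
    """
    ranked = sorted(range(len(affinities)), key=lambda i: affinities[i], reverse=True)
    chosen = ranked[:top_k]
    total = sum(affinities[i] for i in chosen)
    return {i: affinities[i] / total for i in chosen}


random.seed(0)
# Hypothetical per-expert affinity scores in (0, 1): a sigmoid over random logits.
scores = [1 / (1 + math.exp(-random.gauss(0, 1))) for _ in range(NUM_ROUTED)]
gates = route_token(scores)

active_routed_fraction = TOP_K / NUM_ROUTED
print(f"experts used: {len(gates)}, routed experts active per token: {active_routed_fraction:.2%}")
```

Only 8 of 256 routed experts run for any given token, which is the mechanism behind the gap between 671B total and 37B active parameters.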

V3 adds two things V2 did not have. First, an auxiliary-loss-free load-balancing strategy, which avoids the usual trade-off where the balancing loss degrades model quality. Second, a Multi-Token Prediction (MTP) training objective that predicts two tokens ahead during training and can later be repurposed for speculative decoding. DeepSeek pre-trained V3 on 14.8 trillion diverse and high-quality tokens, followed by Supervised Fine-Tuning and Reinforcement Learning stages, and the full training required only 2.788M H800 GPU hours.
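To make the auxiliary-loss-free idea concrete, here is a toy simulation of bias-based balancing. It is a sketch under stated assumptions: a per-expert bias is added to the routing score for selection only and nudged up or down by a fixed step depending on load. The 4-expert, top-1 setup, the step size `gamma`, and all function names are illustrative choices, not values from the V3 report.

```python
import random


def select_expert(affinities, bias):
    """Pick one expert by biased score; the bias is used for selection only."""
    return max(range(len(affinities)), key=lambda i: affinities[i] + bias[i])


def update_bias(bias, load, gamma):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    return [
        b - gamma if n > mean_load else b + gamma if n < mean_load else b
        for b, n in zip(bias, load)
    ]


def run(steps=50, tokens_per_step=200, num_experts=4, gamma=0.02, seed=0):
    rng = random.Random(seed)
    bias = [0.0] * num_experts
    history = []
    for _ in range(steps):
        load = [0] * num_experts
        for _ in range(tokens_per_step):
            # Toy affinities: expert 0 is artificially favoured by +0.5.
            scores = [rng.random() + (0.5 if i == 0 else 0.0) for i in range(num_experts)]
            load[select_expert(scores, bias)] += 1
        history.append(load)
        bias = update_bias(bias, load, gamma)
    return history, bias


history, final_bias = run()
print("first step load:", history[0])
print("last step load: ", history[-1])
```

No balancing term is added to the training loss here; the correction happens entirely in the routing decision, which is the property the paragraph above describes.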

Specifications at a glance

| Specification | DeepSeek V3 |
|---|---|
| Total parameters | 671B |
| Active parameters per token | 37B |
| Architecture | Sparse MoE, decoder-only transformer |
| Attention | Multi-head Latent Attention (MLA) |
| Context length | 128K tokens |
| Pre-training tokens | 14.8T |
| Training compute | 2.788M H800 GPU-hours |
| Released | 2024-12-27 |
| Code license | MIT |
| Weights license | Separate DeepSeek Model License (original V3); V3-0324 weights under MIT |

The 685B figure you may see on Hugging Face is not a contradiction: the Hugging Face checkpoint totals 685B parameters, made up of 671B of main model weights plus 14B for the Multi-Token Prediction (MTP) module.

Benchmarks from the V3 technical report

These are the headline numbers DeepSeek published alongside the December 2024 release. Every comparison here is against the specific competitor version DeepSeek listed, not against later revisions. If you are writing a 2026 comparison, use V4 benchmarks instead — see the DeepSeek benchmarks 2026 roundup.

| Benchmark | DeepSeek V3 | GPT-4o-0513 | Claude-3.5-Sonnet-1022 |
|---|---|---|---|
| MMLU | 88.5 | 87.2 | 88.3 |
| MMLU-Pro | 75.9 | 73.3 | 78.0 |
| GPQA Diamond | 59.1 | 49.9 | 65.0 |
| MATH-500 | 90.2 | 74.6 | 78.3 |
| HumanEval | 82.6 | n/a | 81.7 |
| LiveCodeBench | 40.5 | 36.1 | n/a |
| AIME 2024 | 39.2 | n/a | n/a |

Read those carefully. V3 led on math and competitive coding, edged Claude-3.5-Sonnet on MMLU, and trailed Claude on MMLU-Pro and GPQA Diamond. The R1 report later listed R1 at 90.8 on MMLU against V3's 88.5, which is one reason R1, not V3, became the reasoning-workload default through 2025. For an independent re-test, see our DeepSeek V3 review.

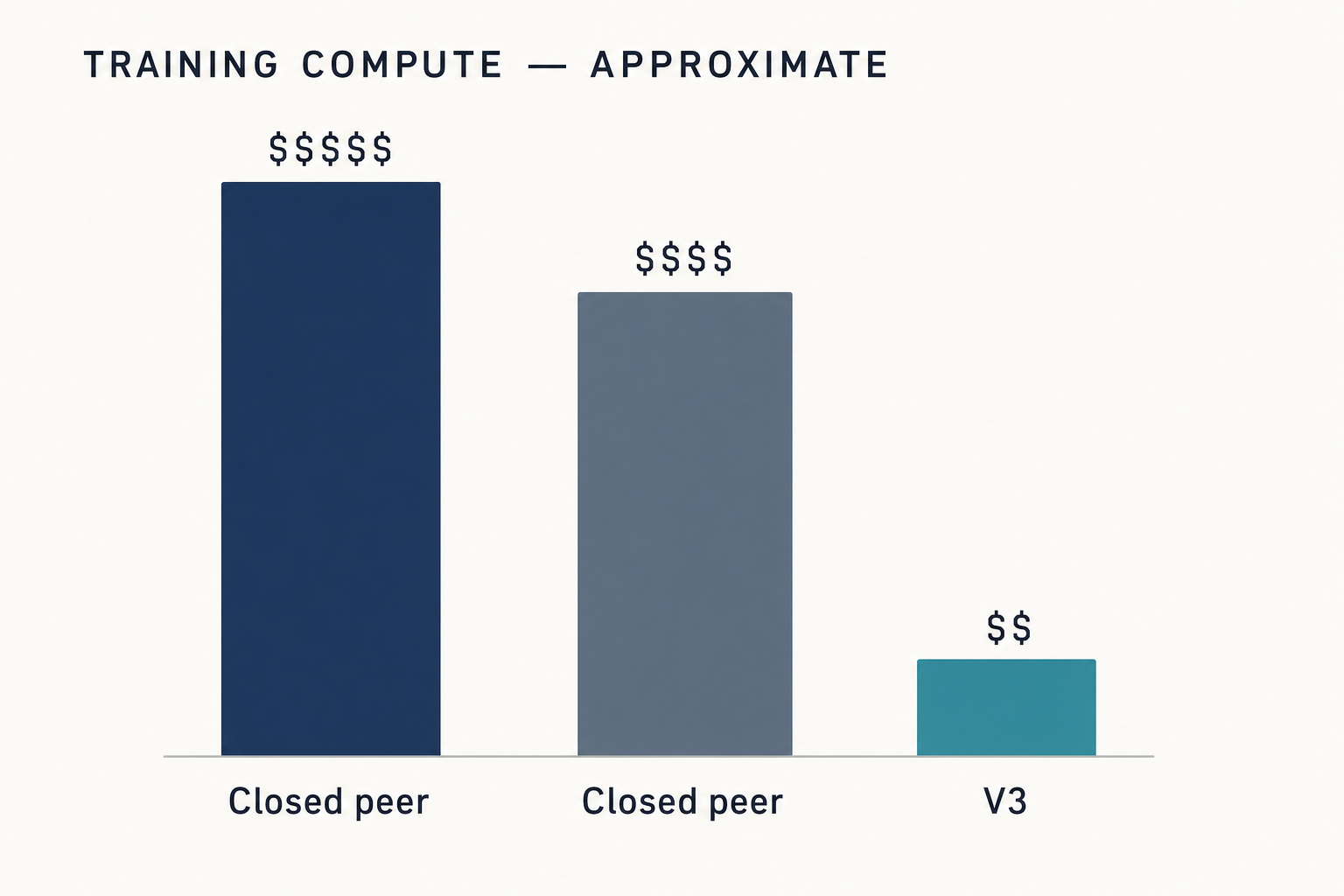

Training cost: the $5.6M number in context

The figure that launched a thousand headlines: training required merely 2.788 million H800 GPU hours at a total cost of $5.576 million. Two things to hold steady here. First, that is training compute only: it excludes research salaries, data acquisition, and the cost of the prior V2 line that made V3 possible. Second, this $5.6M figure belongs to V3. The $294,000 figure you may have read belongs to R1 (reported by Reuters in September 2025). Do not blend them.
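The headline number is easy to sanity-check. Dividing the reported cost by the reported GPU-hours gives the implied rental rate, which works out to the $2 per H800 GPU-hour assumption stated in the V3 technical report. The variable names below are just for illustration.

```python
GPU_HOURS = 2_788_000          # H800 GPU-hours reported for the full V3 training run
REPORTED_COST_USD = 5_576_000  # headline training cost in US dollars

# Implied price per GPU-hour behind the headline figure.
implied_rate = REPORTED_COST_USD / GPU_HOURS
print(f"Implied rental rate: ${implied_rate:.2f} per GPU-hour")
```

The cost is therefore a rental-price estimate of compute time, which is why it says nothing about hardware ownership, staffing, or earlier experiments.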

What the number changed was the public conversation about what frontier training has to cost. The DeepSeek funding and cost coverage has more on the market reaction.

Strengths — where V3 still earned its keep

- Open weights at frontier scale. Before V3, “open-weight” and “GPT-4o-class” were not phrases that lived in the same sentence.

- Mathematics. 90.2 on MATH-500 in the base V3 report is a strong result for a non-reasoning model.

- Inference cost economics. Because the weights are public, multiple providers host V3 at aggressive per-token rates, which kept the DeepSeek API pricing below proprietary frontier rates throughout 2025.

- Foundation for R1. R1’s reasoning RL was applied on top of the V3 base. Without V3 there is no R1 — see DeepSeek R1.

Weaknesses — where V3 falls short today

- No native thinking mode. Original V3 is a non-reasoning chat model. V3.1 later merged thinking/non-thinking into one model; V3 did not.

- 128K context. Fine in 2024, middling in 2026. V4 defaults to 1M tokens.

- Hardware barrier for local inference. 671B parameters means serious metal. For self-hosters, see the DeepSeek hardware calculator.

- Weaker agentic / tool-use behaviour than the V3.1 and V4 generations, which were trained specifically for agent workflows.

How to access DeepSeek V3 today

Open weights

The original V3 and the March 2025 V3-0324 refresh are on Hugging Face under the deepseek-ai org. The V3-0324 model supports features such as function calling, JSON output, and FIM completion. If you want to run it offline, start with the install DeepSeek locally tutorial.

Hosted API — and the legacy-ID migration window

This is where migration gets time-sensitive. On DeepSeek’s official API today, V3 is no longer a selectable model. The legacy IDs deepseek-chat and deepseek-reasoner still work, but they now route to deepseek-v4-flash (non-thinking and thinking respectively). Those legacy IDs are scheduled to be fully retired on 2026-07-24 at 15:59 UTC. After that time, requests using them will fail.
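One way to audit an existing integration is to rewrite legacy IDs to explicit V4 IDs before the retirement time. The sketch below encodes only the routing and the date stated above; the helper names `resolve_model` and `legacy_ids_retired` are hypothetical and not part of any SDK.

```python
from datetime import datetime, timezone

# Retirement time for the legacy IDs, as stated in the text.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# legacy ID -> (replacement model ID, thinking mode enabled?)
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def resolve_model(model_id):
    """Return (model_id, thinking). Non-legacy IDs pass through with thinking=None."""
    return LEGACY_ROUTES.get(model_id, (model_id, None))


def legacy_ids_retired(now=None):
    """True once the legacy IDs are past their scheduled retirement time."""
    now = now or datetime.now(timezone.utc)
    return now >= LEGACY_RETIREMENT


print(resolve_model("deepseek-reasoner"))
print(resolve_model("deepseek-v4-pro"))
```

Running a check like this across configuration files is a cheap way to find every call site that still depends on the legacy routing.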

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. The API is stateless — clients resend the full message history with every request — which is the opposite of how the web chat and mobile app behave, where session history is maintained for you. DeepSeek also ships an Anthropic-compatible surface against the same base URL, so the Anthropic SDK works with a base_url and api_key swap.
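Because the API is stateless, the client owns the conversation history. The following sketch shows one way to keep it, with no network calls; the `Conversation` class is a hypothetical helper, and the payload shape simply follows the OpenAI-compatible `messages` format described above.

```python
class Conversation:
    """Client-side history holder (hypothetical helper, not part of any SDK)."""

    def __init__(self, system_prompt):
        self.messages = [{"role": "system", "content": system_prompt}]

    def build_request(self, user_text, model="deepseek-v4-flash"):
        """Append the user turn and return a payload containing the full history."""
        self.messages.append({"role": "user", "content": user_text})
        # Copy the list so later turns do not mutate a payload already sent.
        return {"model": model, "messages": list(self.messages)}

    def record_reply(self, assistant_text):
        """Store the assistant's answer so the next request includes it."""
        self.messages.append({"role": "assistant", "content": assistant_text})


convo = Conversation("You are a terse assistant.")
first = convo.build_request("What is MoE?")
convo.record_reply("A sparse architecture that activates a few experts per token.")
second = convo.build_request("How many experts does V3 activate?")
print(len(first["messages"]), len(second["messages"]))  # prints: 2 4
```

Every request grows with the conversation, so input-token cost and the context limit both depend on how much history the client chooses to resend.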

A minimal migration example in Python, using the OpenAI SDK:

    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",  # was "deepseek-chat" on V3 / V3.x
        messages=[
            {"role": "system", "content": "You are a terse assistant."},
            {"role": "user", "content": "Summarise the V3 to V4 migration in one line."},
        ],
        temperature=1.3,  # DeepSeek's guidance for general conversation
        max_tokens=512,
    )

    print(resp.choices[0].message.content)

If you want thinking mode on V4, keep base_url, pass reasoning_effort="high", and set extra_body={"thinking": {"type": "enabled"}}. The response returns reasoning_content alongside the final content. Full walkthrough in the DeepSeek API getting started guide.
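The thinking-mode switch can be isolated in a small helper that builds the keyword arguments for the chat call. This is a sketch: the parameter names `reasoning_effort` and `extra_body` and the `reasoning_content` field are taken from the text above, while the helper name `chat_kwargs` is hypothetical. Verify the exact request shape against the official documentation before relying on it.

```python
def chat_kwargs(messages, thinking=False, model="deepseek-v4-flash"):
    """Build keyword arguments for client.chat.completions.create(...)."""
    kwargs = {"model": model, "messages": messages}
    if thinking:
        # Names as described in the text; treat them as assumptions to verify.
        kwargs["reasoning_effort"] = "high"
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


msgs = [{"role": "user", "content": "Is 221 prime?"}]
plain = chat_kwargs(msgs)
reasoned = chat_kwargs(msgs, thinking=True)
print(sorted(reasoned))

# With a configured client, the call would look like:
#   resp = client.chat.completions.create(**reasoned)
#   reasoning = getattr(resp.choices[0].message, "reasoning_content", None)
#   answer = resp.choices[0].message.content
```

Reading `reasoning_content` with `getattr` keeps the same code path working when thinking mode is off and the field is absent.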

Pricing snapshot — and why V3’s old rates no longer apply

V3’s historical API rate card ($0.27 input / $1.10 output per million tokens) is retired. V4 replaced it. As of April 2026, the current DeepSeek rates are:

| Model tier | Input, cache hit | Input, cache miss | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M |
| deepseek-v4-pro | $0.003625 / 1M (promo, list $0.0145) | $0.435 / 1M (promo, list $1.74) | $0.87 / 1M (promo, list $3.48) |

Worked example on V4-Flash — 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response:

- Cached input: 2,000 × 1,000,000 × $0.0028 / 1M = $5.60

- Uncached input: 200 × 1,000,000 × $0.14 / 1M = $28.00

- Output: 300 × 1,000,000 × $0.28 / 1M = $84.00

- Total: $117.60
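The same arithmetic can be wrapped in a small calculator so you can plug in your own traffic. The rates are the V4-Flash figures quoted above; the function name and dictionary keys are illustrative, and `Decimal` is used only to avoid floating-point rounding on money.

```python
from decimal import Decimal

# V4-Flash rates in USD per 1M tokens, as quoted in the text.
V4_FLASH = {
    "cache_hit": Decimal("0.0028"),
    "cache_miss": Decimal("0.14"),
    "output": Decimal("0.28"),
}
PER_MILLION = Decimal(1_000_000)


def estimate_cost(calls, cached_in, uncached_in, out_tokens, rates):
    """Return a cost breakdown in USD for a batch of identical calls."""
    cached = Decimal(calls * cached_in) * rates["cache_hit"] / PER_MILLION
    uncached = Decimal(calls * uncached_in) * rates["cache_miss"] / PER_MILLION
    output = Decimal(calls * out_tokens) * rates["output"] / PER_MILLION
    return {
        "cached_input": cached,
        "uncached_input": uncached,
        "output": output,
        "total": cached + uncached + output,
    }


breakdown = estimate_cost(1_000_000, 2_000, 200, 300, V4_FLASH)
for item, usd in breakdown.items():
    print(f"{item}: ${usd:.2f}")
```

Output tokens dominate the bill in this scenario, so trimming response length usually saves more than further prompt caching.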

The off-peak discount that existed in 2025 ended on 2025-09-05 and has not returned. Rates on DeepSeek’s pricing page can change during a Preview window; verify at the DeepSeek API pricing reference before committing.

Best use cases — and honest alternatives

If you are running V3 today, the honest guidance is: migrate to V4-Flash for anything production, keep R1 on the shortlist for pure reasoning workloads, and only stay on V3 open weights if you have a specific reason (regulatory, air-gapped deployment, fine-tuning pipeline built around the V3 base).

- Code generation — V3 was strong at HumanEval; V4-Pro is stronger on SWE-Bench Verified. See DeepSeek for coding.

- Math-heavy workloads — V3 base and R1 both shine. For reasoning-first work, start with DeepSeek R1.

- Long-form writing and translation — V3 is competent at temperature 1.3; V4 is better at longer horizons because of the 1M-token context.

- Cost-sensitive chat apps — V4-Flash undercuts V3’s own 2025 rates.

V3 vs later DeepSeek models

| Model | Release | Total / Active params | Context | Thinking mode | Status |
|---|---|---|---|---|---|
| V3 | Dec 2024 | 671B / 37B | 128K | No | Legacy reference |
| R1 | Jan 2025 | 671B / 37B | 128K | Yes (native) | Still in use |
| V3.1 | Aug 2025 | 671B / 37B | 128K | Hybrid toggle | Historical |
| V3.2 | Dec 2025 | 671B / 37B | 128K | Hybrid | Previous generation |
| V4-Flash | Apr 2026 | 284B / 13B | 1M | Parameter | Current default |
| V4-Pro | Apr 2026 | 1.6T / 49B | 1M | Parameter | Current frontier |

For deeper head-to-heads, see DeepSeek V3 vs GPT-4o and DeepSeek V3.2. A broader catalogue is on the DeepSeek models hub.

Verdict

DeepSeek V3 was the release that made open-weight frontier models economically plausible, and it still deserves credit for that. In 2026 it is a reference architecture, not a deployment target. Migrate hosted workloads to V4-Flash, keep R1 in mind for reasoning, and hold on to V3 weights only if you have a concrete self-hosting or fine-tuning reason. The migration itself is a one-line model= swap — the base URL does not change.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek V3 still available?

The open weights remain on Hugging Face and you can self-host them freely. On DeepSeek’s hosted API, however, V3 is no longer directly selectable — the legacy deepseek-chat and deepseek-reasoner IDs now route to V4-Flash and are scheduled to be fully retired on 2026-07-24 at 15:59 UTC. For the current production path, see DeepSeek V4.

What is the difference between DeepSeek V3 and R1?

Both share the same 671B / 37B MoE base architecture, but R1 adds reinforcement-learning post-training that produces explicit step-by-step reasoning before the final answer. V3 is a standard chat model, R1 is a reasoning model. R1 scored higher on MMLU (90.8 vs 88.5) and on competition mathematics. The full comparison is in the DeepSeek R1 review.

How much did DeepSeek V3 cost to train?

DeepSeek reported 2.788M H800 GPU-hours, totalling roughly $5.576 million in compute. That figure covers GPU time only — not salaries, research overhead, or the prior V2 line. Do not confuse it with the $294,000 figure Reuters published in September 2025, which was for R1 specifically. More context in the DeepSeek company news feed.

Can I run DeepSeek V3 locally?

Technically yes, practically only if you have serious hardware. At 671B parameters, the weights do not fit on a single consumer GPU in either BF16 or FP8. Community quantisations shrink them to something an 8× GPU rack or a high-RAM CPU rig can run, slowly. For a realistic hardware estimate, use the DeepSeek hardware calculator or start with a smaller distill from DeepSeek R1 Distill.
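A back-of-the-envelope estimate shows why. The sketch below counts weight storage only, using the 671B parameter figure from this article; the 4-bit row stands in for a generic community quantisation and is an assumption, as is ignoring KV cache, activations, and quantisation overhead.

```python
TOTAL_PARAMS = 671e9  # main model parameters (excludes the 14B MTP module)

# Bytes per parameter for each storage format; the 4-bit entry is a hypothetical example.
BYTES_PER_PARAM = {"BF16": 2.0, "FP8": 1.0, "4-bit": 0.5}


def weight_gb(params, bytes_per_param):
    """Weight storage in decimal gigabytes (weights only, no KV cache or activations)."""
    return params * bytes_per_param / 1e9


for fmt, width in BYTES_PER_PARAM.items():
    print(f"{fmt}: ~{weight_gb(TOTAL_PARAMS, width):,.1f} GB of weights")
```

Even the 4-bit estimate is in the hundreds of gigabytes, which is why self-hosting means multi-GPU servers or very large system RAM rather than a single desktop card.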

Does DeepSeek V3 support JSON mode and function calling?

The V3-0324 update added function calling, JSON output, and FIM completion to the open-weights release. On the hosted API, these features exist today on V4 (where legacy V3 IDs now route). JSON mode is designed to return valid JSON but does not guarantee it: prompt explicitly with the word “json” and an example schema, and set max_tokens high enough to avoid truncation. Details are in the DeepSeek API JSON mode reference.
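Since valid JSON is not guaranteed, it helps to parse defensively. The sketch below is one possible approach: `parse_json_reply` is a hypothetical helper, and the `response_format={"type": "json_object"}` setting follows the OpenAI-compatible convention, so confirm it against the JSON mode reference.

```python
import json


def parse_json_reply(text):
    """Return the parsed object, or None if the reply is empty, truncated, or not JSON."""
    if not text or not text.strip():
        return None
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None


# Request settings following the advice above (assumed OpenAI-compatible shape).
request_kwargs = {
    "model": "deepseek-v4-flash",
    "response_format": {"type": "json_object"},
    "max_tokens": 1024,  # generous limit to reduce the risk of truncated JSON
    "messages": [
        {"role": "system", "content": 'Reply in json, for example {"answer": "..."}'},
        {"role": "user", "content": "What year was DeepSeek V3 released?"},
    ],
}

print(parse_json_reply('{"answer": "2024"}'))
print(parse_json_reply('{"answer": "20'))  # a truncated reply parses to None
```

Returning `None` instead of raising lets the caller decide whether to retry, raise `max_tokens`, or fall back to a default.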

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek V3 model card on Hugging Face. V3 architecture, 685B total Hugging Face footprint, FIM/JSON support. Last checked: April 30, 2026

- Model card: DeepSeek V3-0324 refresh model card. March 2025 V3-0324 refresh weights under MIT. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture, training methodology, and headline benchmark numbers (MMLU 88.5, MATH-500 90.2, etc.). Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

Context sources

- News: Reuters, DeepSeek R1 trained for $294,000 (September 2025). Reports the $294K R1 training cost, separate from V3. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.