How DeepSeek API JSON Mode Works on V4 (With Code)

If you have ever shipped an LLM feature to production, you know the pain: the model returns prose where you needed a parseable object, your downstream code throws, and the on-call engineer is paged at 2 a.m. The DeepSeek API JSON mode is the fix — it forces `deepseek-v4-pro` and `deepseek-v4-flash` to emit a valid JSON string instead of free text, so you can pipe outputs straight into a typed parser.

This guide covers exactly how the feature behaves on V4 (released April 24, 2026), the three prompt rules that prevent empty responses, a worked cost example for both V4 tiers, error handling, and how it compares to tool calling for structured extraction. Every claim is backed by the official documentation or a tested code path.

What DeepSeek API JSON mode actually does

JSON mode is a request-level flag that constrains the model’s output to a valid JSON string. You enable it by setting `response_format={"type": "json_object"}` on a `POST /chat/completions` request. The official DeepSeek docs describe the feature as designed to ensure valid JSON output, and the parameter reference says that setting it to `{"type": "json_object"}` enables JSON Output, which guarantees the message the model generates is valid JSON.

That word “guarantees” needs a caveat. In practice, two failure modes still happen: the model can return empty content if the prompt does not steer it correctly, and the JSON can still be truncated if you set `max_tokens` too low. The official guide acknowledges the first failure mode directly: “When using the JSON Output feature, the API may occasionally return empty content. We are actively working on optimizing this issue. You can try modifying the prompt to mitigate such problems.” Treat JSON mode as a strong constraint, not a contract.

JSON mode works on both V4 model IDs — deepseek-v4-flash and deepseek-v4-pro — in either default thinking mode or non-thinking (pass extra_body={"thinking": {"type": "disabled"}}). It also works on the legacy deepseek-chat and deepseek-reasoner IDs, which currently route to deepseek-v4-flash and will be retired on 2026-07-24 at 15:59 UTC. If you have an older integration, swap the model value to a V4 ID before that date — base_url does not change. For a refresher on the migration mechanics, see our DeepSeek OpenAI SDK compatibility notes.

Quickstart: minimal Python and curl

The cheapest way to test JSON mode is with the official OpenAI SDK pointed at DeepSeek’s base URL. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Here is the canonical Python snippet, lifted with light edits from DeepSeek’s own JSON Output guide:

```python
import json

from openai import OpenAI

client = OpenAI(
    api_key="<your api key>",
    base_url="https://api.deepseek.com",
)

system_prompt = """
The user will provide some exam text.
Please parse the "question" and "answer" and output them in JSON format.

EXAMPLE INPUT:
Which is the highest mountain in the world? Mount Everest.

EXAMPLE JSON OUTPUT:
{
    "question": "Which is the highest mountain in the world?",
    "answer": "Mount Everest"
}
"""

user_prompt = "Which is the longest river in the world? The Nile River."

response = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": user_prompt},
    ],
    response_format={"type": "json_object"},
    max_tokens=1024,
)

print(json.loads(response.choices[0].message.content))
```

The equivalent curl call against the same endpoint:

```bash
curl https://api.deepseek.com/chat/completions \
  -H "Content-Type: application/json" \
  -H "Authorization: Bearer ${DEEPSEEK_API_KEY}" \
  -d '{
    "model": "deepseek-v4-flash",
    "messages": [
      {"role": "system", "content": "You return JSON only. Schema: {\"city\": string, \"temp_c\": number}"},
      {"role": "user", "content": "Hangzhou, 24 degrees."}
    ],
    "response_format": {"type": "json_object"},
    "max_tokens": 256
  }'
```

If you have not provisioned credentials yet, work through the API key setup walk-through first; the rest of this guide assumes a working key in `DEEPSEEK_API_KEY`.

The three prompt rules that prevent empty responses

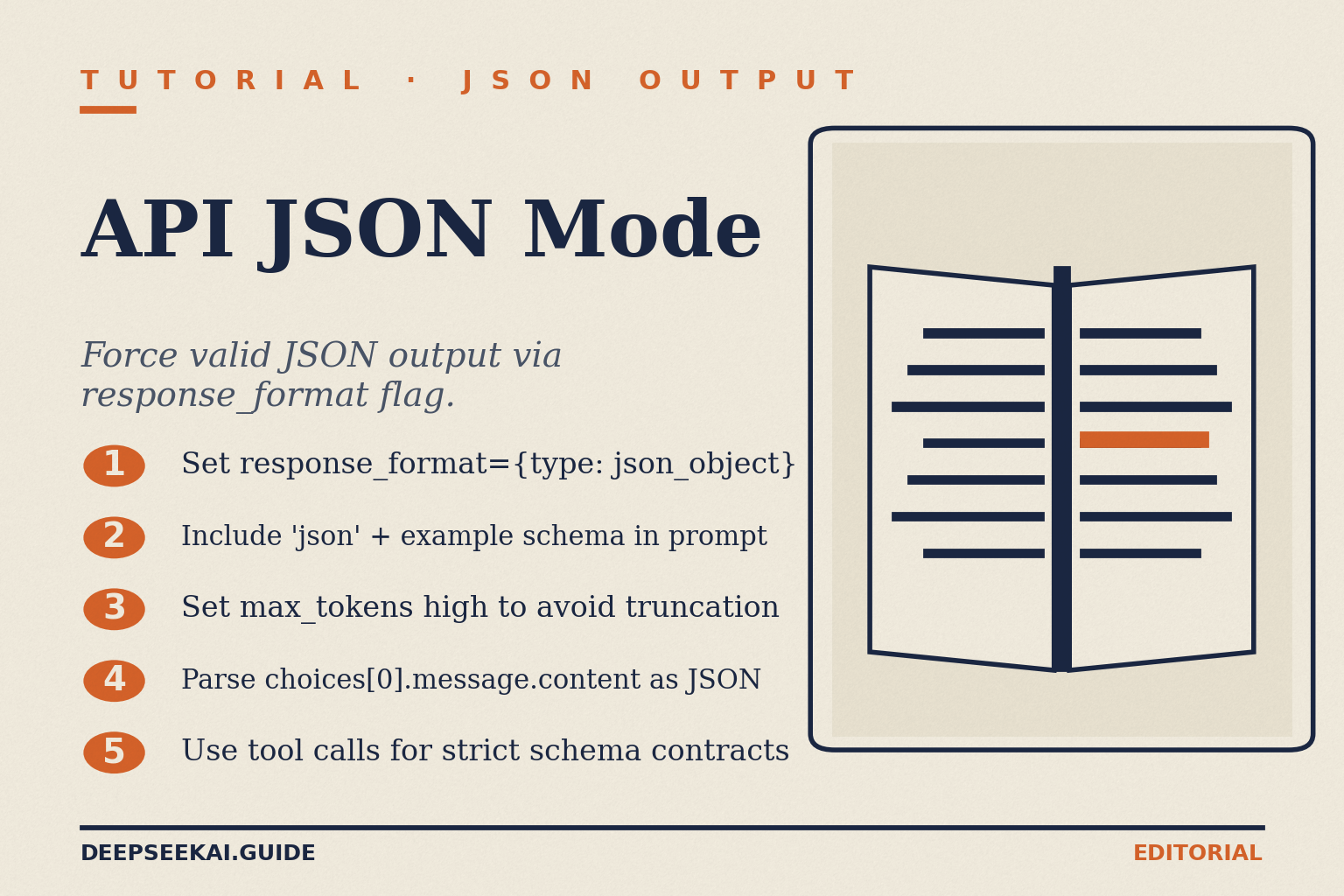

Most “JSON mode is broken” reports trace back to one of three preventable mistakes. The official docs are explicit about all three. To enable JSON Output, set the `response_format` parameter to `{'type': 'json_object'}`. Include the word “json” in the system or user prompt, and provide an example of the desired JSON format to guide the model in outputting valid JSON. Set the `max_tokens` parameter reasonably to prevent the JSON string from being truncated midway.

- The literal word “json” must appear in the prompt. Without it, DeepSeek rejects the request before the model runs. A real bug filed against a Node integration captures the exact error: when using DeepSeek API, if the prompt doesn’t explicitly contain the word “json”, the API returns an error: Prompt must contain the word ‘json’ in some form to use ‘response_format’ of type ‘json_object’. The fix is one line of system prompt — “Reply in JSON only.” does it.

- Provide an example schema in the prompt. Without one, the model invents its own keys. The Stagehand catch-22 illustrates this: the project added “json” to satisfy the gate, then found DeepSeek returns a format like: { “action”: “fill”, “element”: {“id”: “…”, “role”: “…”, “name”: “…”}, “argument”: “Cota”, “twoStep”: false } — which did not match the integration’s expected schema at all. Always paste an example object showing exactly the keys, types, and nesting you want.

- Set `max_tokens` high enough that the JSON cannot truncate. If the response stops mid-string, you get invalid JSON and a parser exception. The Chat Completions reference is blunt: the message content may be partially cut off if `finish_reason="length"`, which indicates the generation exceeded `max_tokens` or the conversation exceeded the max context length. Estimate the largest reasonable response and double it; on V4 you can go up to 384,000 output tokens.

If you skip these and dump response_format on a stock chat prompt, expect failures. Our prompt-engineering reference covers the broader pattern of seeding examples into system prompts.
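The three rules can be checked mechanically before a request leaves your process. The sketch below is one way to do that, not part of any SDK: `preflight_json_mode` is a hypothetical helper, the `min_tokens` floor is an arbitrary assumption, and looking for a brace pair is only a rough proxy for "the prompt contains an example object".

```python
def preflight_json_mode(messages, max_tokens, min_tokens=256):
    """Return a list of problems; an empty list means the request passes.

    Hypothetical helper: the name, the `min_tokens` floor, and the brace
    heuristic are illustrative assumptions, not DeepSeek requirements.
    """
    problems = []
    text = " ".join(m.get("content") or "" for m in messages)

    # Rule 1: the literal word "json" must appear somewhere in the prompt.
    if "json" not in text.lower():
        problems.append('prompt does not contain the word "json"')

    # Rule 2: an example object should be present (brace pair as a rough proxy).
    if "{" not in text or "}" not in text:
        problems.append("prompt has no example JSON object")

    # Rule 3: max_tokens must be set and not tiny, or the JSON may truncate.
    if max_tokens is None or max_tokens < min_tokens:
        problems.append(f"max_tokens should be at least {min_tokens}")

    return problems


good = [
    {"role": "system",
     "content": 'Reply in JSON only. Example: {"city": "Hangzhou", "temp_c": 24}'},
    {"role": "user", "content": "Hangzhou, 24 degrees."},
]
bad = [{"role": "user", "content": "Hangzhou, 24 degrees."}]

print(preflight_json_mode(good, max_tokens=256))
print(preflight_json_mode(bad, max_tokens=None))
```

A check like this catches the cheap mistakes locally; it says nothing about whether the example in the prompt actually matches the schema your code expects.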

JSON mode vs tool calling: which to use when

Both features produce structured output, but they solve different problems.

| Feature | JSON mode | Tool calling (strict) |
|---|---|---|
| Activation | `response_format={"type":"json_object"}` | `tools=[...]` with `strict: true` |
| Schema enforcement | Prompt-level only | Server-side against a JSON Schema |
| Output shape | Free-form JSON in `content` | Structured args in `tool_calls[].function.arguments` |
| Best for | One-off extraction, classification, simple objects | Multi-step agents, validated function arguments, enums |
| Failure mode to handle | Empty content, schema drift | Hallucinated parameters (parser still required) |

Tool calling’s strict mode is stricter — DeepSeek validates against your JSON Schema before returning. The constraint is real: all properties of every object must be set as required, and the additionalProperties attribute of the object must be set to false. JSON mode has no such constraint, which is why an example schema in the prompt matters so much. For agent loops or anything where output keys map to function signatures, prefer tool calling — see the DeepSeek API function calling guide. For ad-hoc data extraction from documents, JSON mode is simpler and cheaper.
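The strict-mode constraint described above (every property required, `additionalProperties` set to false) is easy to get wrong on nested objects. As a minimal sketch, the hypothetical helper `strict_schema_violations` below walks a JSON Schema dict and reports objects that break either rule. It assumes a plain schema that only uses `type`, `properties`, `required`, `items`, and `additionalProperties`; it is a local lint, not a reproduction of DeepSeek's server-side validation.

```python
def strict_schema_violations(schema, path="$"):
    """List the places where a JSON Schema dict breaks the two strict-mode rules."""
    found = []
    if schema.get("type") == "object":
        props = schema.get("properties", {})
        # Rule A: every declared property must be listed in "required".
        missing = sorted(set(props) - set(schema.get("required", [])))
        if missing:
            found.append(f"{path}: not required: {', '.join(missing)}")
        # Rule B: additionalProperties must be exactly False.
        if schema.get("additionalProperties") is not False:
            found.append(f"{path}: additionalProperties must be false")
        # Recurse into nested property schemas.
        for name, sub in props.items():
            found.extend(strict_schema_violations(sub, f"{path}.{name}"))
    elif schema.get("type") == "array" and isinstance(schema.get("items"), dict):
        found.extend(strict_schema_violations(schema["items"], f"{path}[]"))
    return found


# Hypothetical schema with two deliberate mistakes.
invoice_schema = {
    "type": "object",
    "properties": {
        "vendor": {"type": "string"},
        "total": {"type": "number"},
    },
    "required": ["vendor"],  # "total" is missing on purpose
}
fixed_schema = dict(invoice_schema, required=["vendor", "total"],
                    additionalProperties=False)

for line in strict_schema_violations(invoice_schema):
    print(line)
```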

Detailed reference: parameters and response shape

The full request shape for JSON mode looks like this:

| Parameter | Value for JSON mode | Notes |
|---|---|---|
| `model` | `deepseek-v4-flash` or `deepseek-v4-pro` | Legacy IDs accepted until 2026-07-24 15:59 UTC. |
| `messages` | Array including the word “json” + example | Stateless; resend full history every call. |
| `response_format` | `{"type": "json_object"}` | Required to activate the constraint. |
| `max_tokens` | Generous: 1024 minimum, up to 384,000 | Prevents truncated JSON. |
| `temperature` | 0.0 for deterministic extraction | DeepSeek recommends 0.0 for code/math; raise for creative work. |
| `reasoning_effort` | Optional `"high"` or `"max"` | Pair with `extra_body={"thinking": {"type": "enabled"}}`. |

The response object follows the OpenAI shape. The JSON string lives in choices[0].message.content. If you enable thinking mode, the API returns reasoning_content alongside the final content, and your JSON parsing should target only content. Token usage breaks down into prompt_cache_hit_tokens, prompt_cache_miss_tokens, and completion_tokens — useful for accurate cost accounting (see the next section).
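To make the response shape concrete, here is a small sketch that pulls the parsed JSON and the three usage counters out of an OpenAI-shaped completion object. `read_json_completion` is a hypothetical helper, and reading the cache counters as attributes on `usage` is an assumption about how the SDK exposes them; the stand-in object exists only so the example runs without a network call.

```python
import json
from types import SimpleNamespace


def read_json_completion(response):
    """Return (parsed_json, token_counts) from an OpenAI-shaped chat completion."""
    message = response.choices[0].message
    # Parse only `content`; `reasoning_content` (thinking mode) is ignored.
    parsed = json.loads(message.content)
    usage = response.usage
    counts = {
        "cache_hit": getattr(usage, "prompt_cache_hit_tokens", 0),
        "cache_miss": getattr(usage, "prompt_cache_miss_tokens", 0),
        "completion": getattr(usage, "completion_tokens", 0),
    }
    return parsed, counts


# Stand-in with the same attribute layout as a real response (made-up values).
fake = SimpleNamespace(
    choices=[SimpleNamespace(message=SimpleNamespace(
        content='{"question": "Which is the longest river?", "answer": "The Nile"}',
        reasoning_content="(reasoning trace, ignored)",
    ))],
    usage=SimpleNamespace(prompt_cache_hit_tokens=2000,
                          prompt_cache_miss_tokens=200,
                          completion_tokens=300),
)

parsed, counts = read_json_completion(fake)
print(parsed["answer"], counts)
```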

The API is stateless. The server keeps no memory of previous calls; you must resend the full messages array each time. This differs from the web app, which maintains session history on the server. The full API documentation hub has the complete request/response schema if you want to dig deeper.

Pricing worked example

JSON mode does not change the rate card — you pay the same per-token rates as a regular chat completion. The wrinkle is that example schemas in the system prompt are tokens you pay for on every call, so context caching matters more than usual. Pricing below is from DeepSeek’s published rate card as of April 2026; verify against the current DeepSeek API pricing page before committing.

| Model tier | Input cache hit ($/1M) | Input cache miss ($/1M) | Output ($/1M) |
|---|---|---|---|
| `deepseek-v4-flash` | $0.0028 | $0.14 | $0.28 |
| `deepseek-v4-pro` | $0.003625 (promo, list $0.0145) | $0.435 (promo, list $1.74) | $0.87 (promo, list $3.48) |

Imagine a document-extraction job: 1,000,000 invoices through deepseek-v4-flash, with a 2,000-token system prompt (containing the JSON schema example) cached across calls, a 200-token user message per invoice (uncached miss), and a 300-token JSON response.

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Same workload on deepseek-v4-pro for comparison — useful only if Flash’s quality is not enough on your specific schema:

- Cached input: 2,000,000,000 × $0.003625/M (promo) = $7.25 (list $29.00)

- Uncached input: 200,000,000 × $0.435/M (promo) = $87.00 (list $348.00)

- Output: 300,000,000 × $0.87/M (promo) = $261.00 (list $1,044.00)

- Total: $355.25 during the 75% promo through 2026-05-31 (list-price total $1,421.00), roughly 3× the Flash bill during the promo and ~12× at list.

Two practical notes. First, the cache only applies to repeated prefixes — your per-invoice user message is always a miss, so the uncached-input line never goes to zero. Second, do not mix tier rates in one calculation. For more cost-modelling patterns, see our deep-dive on DeepSeek context caching.
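The arithmetic in this section is simple enough to script, which helps when you want to rerun it with your own token counts. The sketch below hard-codes the per-1M rates quoted in this article (Pro at promo prices); `RATES` and `job_cost` are hypothetical names, and the figures should be replaced with whatever the current pricing page says.

```python
# USD per 1M tokens, copied from the rate table in this section (Pro at promo prices).
RATES = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14, "out": 0.28},
    "deepseek-v4-pro": {"hit": 0.003625, "miss": 0.435, "out": 0.87},
}


def job_cost(model, calls, cached_in, uncached_in, out):
    """Total USD for `calls` requests, given per-call token counts."""
    r = RATES[model]  # one tier per calculation; rates are never mixed
    per_call = cached_in * r["hit"] + uncached_in * r["miss"] + out * r["out"]
    return round(per_call * calls / 1_000_000, 2)


# The invoice workload: 2,000 cached + 200 uncached input tokens, 300 output tokens.
flash = job_cost("deepseek-v4-flash", 1_000_000, 2000, 200, 300)
pro = job_cost("deepseek-v4-pro", 1_000_000, 2000, 200, 300)
print(flash, pro)  # 117.6 355.25
```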

Error handling patterns

JSON mode introduces three failure modes you need to handle on top of normal API failures: empty content, truncation, and schema drift.

Empty content

Per the official docs, the API may occasionally return empty content. Detect this on the client and retry with a slightly tweaked prompt or a temperature adjustment. A defensive pattern in Python:

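```python
import json


def parse_json_response(response):
    """Parse a JSON-mode completion, retrying on empty or truncated output."""
    raw = response.choices[0].message.content
    if not raw or not raw.strip():
        # Empty content: retry once with a stronger instruction.
        # retry_with_stronger_prompt is a placeholder for your own retry logic.
        return retry_with_stronger_prompt(...)
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        # Truncated or malformed: check finish_reason before giving up.
        if response.choices[0].finish_reason == "length":
            # Placeholder as well: re-send the request with a larger max_tokens.
            return retry_with_higher_max_tokens(...)
        raise
```

Truncation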

Always inspect finish_reason. If it equals "length", the response was cut off and the JSON is almost certainly invalid. Bump max_tokens and retry. For the broader catalogue of HTTP-level errors (401, 402 Insufficient Balance, 429 rate limit, 503), see the DeepSeek API error codes reference.
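One way to structure the "bump and retry" step is a loop that doubles the budget until the response finishes cleanly or the output ceiling is reached. This is a sketch under assumptions: `retry_on_truncation` is a hypothetical helper, `call` stands for any function of yours that sends the request with a given `max_tokens` and returns `(content, finish_reason)`, and the 384,000 ceiling is the V4 figure quoted earlier in this guide.

```python
def retry_on_truncation(call, max_tokens=1024, ceiling=384_000):
    """Call `call(max_tokens)`, doubling the budget while finish_reason is "length".

    `call` is a hypothetical callable returning (content, finish_reason).
    Returns the final content and the max_tokens value that succeeded.
    """
    while True:
        content, finish_reason = call(max_tokens)
        if finish_reason != "length":
            return content, max_tokens
        if max_tokens >= ceiling:
            raise RuntimeError("response still truncated at the output-token ceiling")
        max_tokens = min(max_tokens * 2, ceiling)


# Fake model call for illustration: it "truncates" below 4,000 tokens.
def fake_call(max_tokens):
    if max_tokens < 4000:
        return '{"items": [', "length"
    return '{"items": []}', "stop"


content, used = retry_on_truncation(fake_call)
print(content, used)
```

Doubling keeps the number of retries small, but every truncated attempt is still billed, so it is cheaper to size `max_tokens` generously up front than to rely on this loop.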

Schema drift

Even with an example schema in the prompt, the model may add or rename keys on edge-case inputs. Validate every parsed payload against a Pydantic model (Python) or Zod schema (TypeScript) before it touches business logic. If validation fails, log the raw output and retry with a strict reminder. This is the pattern that separates demoware from production code; our best-practices write-up goes deeper on it.
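The validation step can be sketched without any dependencies. The example below is a standard-library stand-in for what a Pydantic model or Zod schema would do, covering only flat objects; `EXPECTED` and `validate_payload` are hypothetical names, and the two-key schema mirrors the exam example from the quickstart.

```python
import json

# Expected shape: key -> required Python type (hypothetical example schema).
EXPECTED = {"question": str, "answer": str}


def validate_payload(raw, expected=EXPECTED):
    """Parse `raw` and reject missing keys, extra keys, and wrong value types."""
    data = json.loads(raw)
    if not isinstance(data, dict):
        raise ValueError("top-level JSON value is not an object")
    missing = expected.keys() - data.keys()
    extra = data.keys() - expected.keys()
    if missing or extra:
        raise ValueError(
            f"schema drift: missing={sorted(missing)} extra={sorted(extra)}"
        )
    for key, typ in expected.items():
        if not isinstance(data[key], typ):
            raise ValueError(f"{key!r} should be {typ.__name__}")
    return data


ok = validate_payload('{"question": "Longest river?", "answer": "The Nile"}')

# A renamed key ("reply" instead of "answer") is caught before business logic runs.
try:
    validate_payload('{"question": "Longest river?", "reply": "The Nile"}')
except ValueError as err:
    drift_message = str(err)
    print(drift_message)
```

In a real service you would log the raw string alongside the error, since the rejected payload is the only evidence of what the model actually produced.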

JSON mode in thinking mode

You can combine JSON mode with V4’s reasoning capability. Set reasoning_effort="high" and extra_body={"thinking": {"type": "enabled"}} alongside response_format. The API returns reasoning_content alongside the final content — the JSON lives in content, and the reasoning trace in reasoning_content is informational only.

This is useful for extraction tasks that need a chain of inference (legal clauses, multi-step financial reasoning, complex schema mapping). It is overkill for flat extraction. The output token bill goes up because reasoning tokens are billed as completion tokens. Stay in non-thinking mode for high-volume, structurally simple jobs.
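Switching between the two modes is easier to keep consistent if the request arguments are built in one place. The helper below is a hypothetical sketch that assembles keyword arguments for `client.chat.completions.create()` using the parameter names given in this guide (`response_format`, `reasoning_effort`, and the `thinking` object passed through `extra_body`); it does not call the API, and the parameter values are taken from this article rather than verified against a live endpoint.

```python
def json_request_kwargs(model, messages, max_tokens, thinking=False, effort="high"):
    """Build create() keyword arguments for JSON mode, with or without thinking."""
    kwargs = {
        "model": model,
        "messages": messages,
        "max_tokens": max_tokens,
        "response_format": {"type": "json_object"},
        # The thinking toggle travels in extra_body, as described in this guide.
        "extra_body": {"thinking": {"type": "enabled" if thinking else "disabled"}},
    }
    if thinking:
        # Only meaningful when thinking is on; "high" or "max" per this guide.
        kwargs["reasoning_effort"] = effort
    return kwargs


messages = [
    {"role": "system", "content": 'Reply in JSON only. Example: {"clause": "...", "risk": "low"}'},
    {"role": "user", "content": "Classify the indemnity clause in the attached text."},
]
flat = json_request_kwargs("deepseek-v4-flash", messages, 1024)
deep = json_request_kwargs("deepseek-v4-pro", messages, 8192, thinking=True)
print(flat["extra_body"], deep["reasoning_effort"])

# Usage with a configured client (not executed here):
# response = client.chat.completions.create(**deep)
```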

Related reading

If you are wiring DeepSeek’s structured output into a wider stack:

- For agent-style workflows where the model picks a tool, see function calling.

- For long-running structured streams (e.g. live transcription cleanup), combine JSON mode with DeepSeek API streaming — note that JSON-mode streams are usable but you must buffer until the close brace before parsing.

- For end-to-end runnable scripts, browse the DeepSeek API code examples collection.

- For the broader API surface, the DeepSeek API docs and guides hub indexes every reference page on this site.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Does DeepSeek API JSON mode guarantee valid JSON output?

The official parameter description says JSON mode guarantees valid JSON, but the JSON Output guide also notes that the API may occasionally return empty content. Treat it as a strong constraint, not a contract — always wrap parsing in a try/except, check finish_reason for truncation, and validate against a schema. The DeepSeek API best practices guide covers the full retry pattern.

How do I fix the “Prompt must contain the word ‘json’” error?

DeepSeek requires the literal string “json” in your system or user prompt before it will honour response_format={"type": "json_object"}. Add a sentence like “Respond in JSON only” to your system message. While you are there, paste an example of the exact schema you want — the model otherwise invents its own keys. Our prompt engineering guide has more patterns.

What is the difference between JSON mode and tool calling on DeepSeek?

JSON mode constrains the assistant’s content field to a JSON string, with schema enforcement coming from your prompt. Tool calling returns structured arguments inside tool_calls, validated server-side against a JSON Schema when you set strict: true. Use JSON mode for one-shot extraction; use function calling for agents, enums, or anywhere a typed signature matters.

Can I use JSON mode with the legacy deepseek-chat model ID?

Yes, until 2026-07-24 at 15:59 UTC. deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash in non-thinking and thinking modes respectively, and JSON mode works on both routes. After that retirement date, those IDs will fail. Migrate by changing the model string to deepseek-v4-flash or deepseek-v4-pro — the OpenAI compatibility notes cover the one-line swap.

Why is my DeepSeek JSON response getting truncated?

You hit the max_tokens ceiling. The API stops generating mid-string, which produces invalid JSON. Inspect finish_reason — if it equals "length", raise max_tokens and retry. V4 supports up to 384,000 output tokens, so you have headroom. Use the DeepSeek token counter to size prompts accurately before sending.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Official: DeepSeek API JSON-mode documentation. `response_format` `json_object` behaviour and empty-content caveat. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.