DeepSeek V3.2 explained: sparse attention, benchmarks and what replaced it

If you shipped anything against DeepSeek’s API in late 2025, you almost certainly ran into DeepSeek V3.2. It landed on December 1, 2025 as the official successor to V3.2-Exp, kept the aggressive price cut that V3.2-Exp had introduced in September, and brought DeepSeek Sparse Attention (DSA) into production. DSA is the architectural change behind that price cut of more than 50%. Just under five months later, on April 24, 2026, DeepSeek V4 arrived and reshuffled the model lineup again. This article covers what V3.2 actually was (architecturally, not in marketing terms), how it benchmarked against V3.1-Terminus, what it cost to run, and what changes in practice if you are migrating an integration forward to V4-Flash or V4-Pro today.

What DeepSeek V3.2 is (and where it sits now)

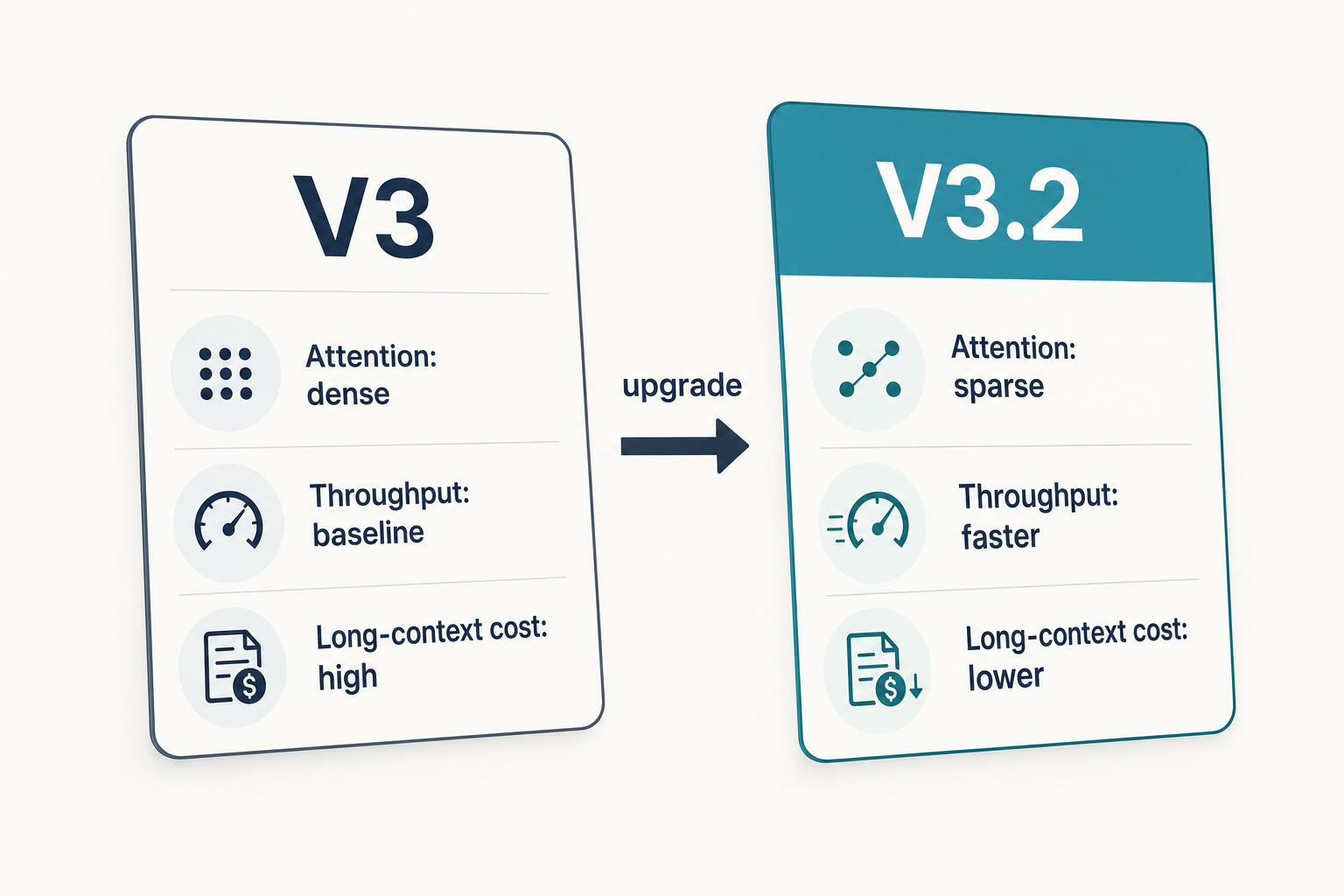

DeepSeek V3.2 is an open-weight mixture-of-experts (MoE) language model released by DeepSeek-AI on December 1, 2025, distributed on Hugging Face under the MIT License. It is the official, production-ready successor to the September 2025 experimental release V3.2-Exp, and it was the company’s flagship chat model until DeepSeek V4 launched on April 24, 2026. There is a single architectural change versus V3.1-Terminus: V3.2 uses exactly the same architecture as V3.2-Exp, and the only modification relative to V3.1-Terminus is DeepSeek Sparse Attention (DSA), introduced through continued training.

As of April 24, 2026, V3.2 is the previous generation. The legacy API IDs deepseek-chat and deepseek-reasoner, which pointed at V3.2 until the V4 release, now route to deepseek-v4-flash and will be retired at 2026-07-24 15:59 UTC. Any production integration still calling those IDs should plan a one-line swap within that window. See the full lineup on the DeepSeek models hub.
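The one-line swap can be wrapped in a small helper so that legacy IDs are rewritten in one place. This is a minimal sketch, not an official migration tool: the names `LEGACY_TO_V4`, `migrate_model_id` and `days_until_retirement` are hypothetical, and the mapping simply mirrors the routing and retirement time stated above.

```python
from datetime import datetime, timezone

# Retirement time for the legacy IDs, as stated in this article.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Hypothetical mapping for illustration: both legacy IDs currently route to
# deepseek-v4-flash. Pick deepseek-v4-pro instead if the workload needs it.
LEGACY_TO_V4 = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def migrate_model_id(model: str) -> str:
    """Return the V4 model ID for a legacy ID, or the input unchanged."""
    return LEGACY_TO_V4.get(model, model)


def days_until_retirement(now: datetime) -> int:
    """Whole days left before the legacy IDs are retired (never negative)."""
    remaining = LEGACY_RETIREMENT - now
    return max(remaining.days, 0)
```

Calling `migrate_model_id` at the point where requests are built keeps the change reviewable, and `days_until_retirement(datetime.now(timezone.utc))` can feed a startup warning while old IDs are still in configuration.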

Architecture and lineage

V3.2 inherits the 671B-total-parameter MoE backbone introduced with V3, continued through V3.1 and V3.1-Terminus. What is new is how attention is computed.

DeepSeek Sparse Attention (DSA)

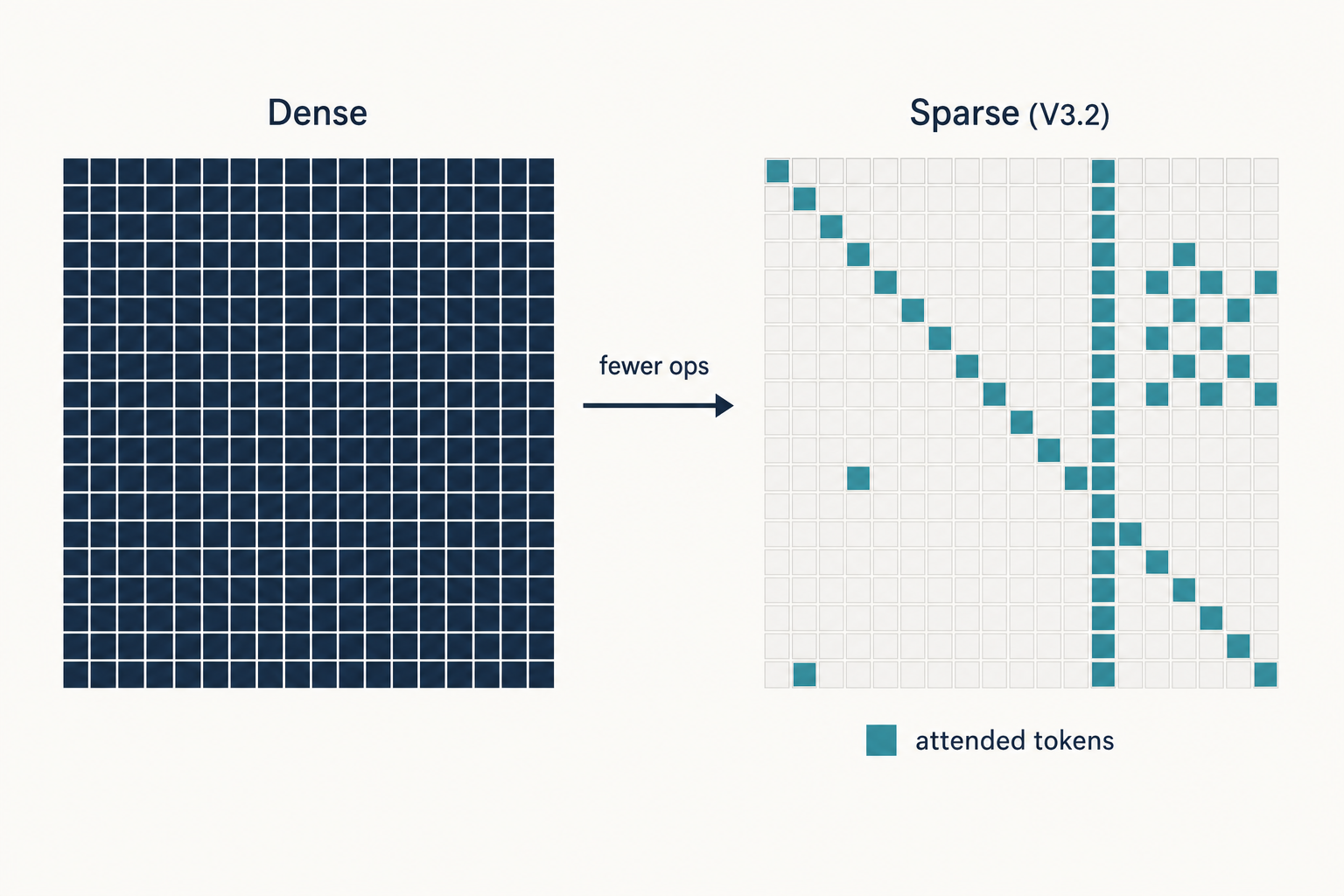

Classical transformer attention is quadratic: every token attends to every other token, so doubling the sequence length quadruples the compute. DSA has two components: a lightning indexer and a fine-grained token selection mechanism. The lightning indexer computes an index score between the current query token and each preceding token, and those scores determine which tokens the query attends to. Only the top-k selected key-value entries feed the main attention step. This reduces the main attention cost from quadratic O(L²) to O(Lk), which is linear in L for a fixed k, where k (≪ L) is the number of selected tokens. The indexer itself still scores every preceding token, so the saving depends on that scoring pass being much cheaper than full attention.
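The two-step idea can be sketched in a few lines of plain Python for a single query token. This is an illustration under stated assumptions, not DeepSeek's implementation: the indexer here is an ordinary dot product standing in for the learned lightning indexer, and the function names are hypothetical.

```python
import math


def index_scores(query, keys):
    """Stand-in for the lightning indexer: one cheap score per preceding token.

    A plain dot product is an assumption made for illustration only.
    """
    return [sum(q * k for q, k in zip(query, key)) for key in keys]


def top_k_indices(scores, k):
    """Positions of the k highest scores, returned in sequence order."""
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:k])


def sparse_attention(query, keys, values, k):
    """Softmax attention restricted to the top-k keys chosen by the indexer."""
    selected = top_k_indices(index_scores(query, keys), k)
    scale = 1.0 / math.sqrt(len(query))
    logits = [scale * sum(q * x for q, x in zip(query, keys[i])) for i in selected]
    peak = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(v - peak) for v in logits]
    total = sum(weights)
    dim = len(values[0])
    output = [
        sum(w / total * values[i][d] for w, i in zip(weights, selected))
        for d in range(dim)
    ]
    return output, selected
```

With `k` equal to the number of keys, the function reduces to ordinary dense attention for that query, which is a convenient sanity check when experimenting with the selection step.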

DeepSeek kept the training recipe deliberately conservative to isolate the architectural effect. DSA achieves fine-grained sparse attention while maintaining virtually identical model output quality, and the training configurations of V3.2-Exp were deliberately aligned with V3.1-Terminus to evaluate the impact cleanly. V3.2 then added a scaled reinforcement-learning post-training phase on top of the same architecture.

Variants shipped under the V3.2 name

- DeepSeek-V3.2: the daily-driver model served on `deepseek-chat` (non-thinking) and `deepseek-reasoner` (thinking) during the V3.2 window.

- DeepSeek-V3.2-Exp: the September 29, 2025 experimental predecessor, shipped first to stabilise serving infrastructure.

- DeepSeek-V3.2-Speciale: a high-compute reasoning variant. API-only, served via a temporary endpoint and intentionally without tool-calling to support community evaluation. It targeted maximum reasoning effort rather than everyday chat.

Context, licensing and release timeline

| Attribute | Value |
|---|---|
| Architecture | MoE with Multi-Head Latent Attention + DeepSeek Sparse Attention |
| Total parameters | 671B (shared with V3.1-Terminus) |
| Context window | 128K tokens |
| V3.2-Exp release | 2025-09-29 |
| V3.2 official release | 2025-12-01 |
| Superseded by | DeepSeek V4 on 2026-04-24 |
| Code and weights license | MIT |

For the older base model V3.2 was trained from, see DeepSeek V3. For the next generation that has replaced it, see DeepSeek V4.

Benchmarks: V3.2 vs V3.1-Terminus and the Speciale headline

DeepSeek designed V3.2-Exp as an architectural ablation: the point was to show parity with V3.1-Terminus while the attention cost dropped, not to claim new highs. Numbers below are from DeepSeek’s own report and the llm-stats summary of the September 2025 release; cross-check the V3.2 technical report on arXiv if you need the exact table.

| Benchmark | V3.1-Terminus | V3.2-Exp | Note |
|---|---|---|---|
| MMLU-Pro | 85.0 | 85.0 | Unchanged at 85.0 |
| AIME 2025 (Pass@1) | ~88 | 89.3 | Small rise; HMMT dipped modestly |
| Codeforces rating | 2046 | 2121 | Competitive-programming rating improved |
| SWE-Bench Verified | ~67 | 67.8 | Coding accuracy virtually tied with Kimi K2 on the late-2025 versions |

The V3.2 release (December 2025) then pushed further. DeepSeek describes V3.2 as harmonising high computational efficiency with stronger reasoning and agent performance, and reports that its scalable RL framework lets the model perform comparably to GPT-5. The high-compute variant, V3.2-Speciale, was positioned specifically for long reasoning: DeepSeek reports performance parity with Gemini-3.0-Pro and gold-medal performance in IOI 2025, ICPC World Final 2025, IMO 2025 and CMO 2025. Treat those claims as vendor-reported; external leaderboards typically show narrower gaps.

Strengths — where V3.2 actually won

- Long-context economics. DSA’s O(Lk) scaling is the whole point. At 128K tokens, each query attends to k selected tokens instead of the full context, so long inputs cost far less to process than they would under dense attention on the same backbone.

- Agentic tool-use. V3.2 was DeepSeek’s first model to integrate thinking directly into tool-use, supporting tool-calling in both thinking and non-thinking modes. That combination made it a credible open choice for agent frameworks.

- Price. The September V3.2-Exp release triggered an API price cut of more than 50%, and the December V3.2 release kept those rates.

- Open weights. MIT-licensed weights meant you could self-host the same model used on the official API, which matters for regulated or air-gapped environments. See is DeepSeek open source for the licensing detail per release.
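To put the long-context point in numbers, the sketch below counts query-key pairs in the main attention step under causal dense attention and under a top-k cap. The value k = 2048 is an assumption chosen for illustration, 128K is taken as 128,000 tokens, and the count deliberately ignores the indexer's own scoring pass, so it is an upper bound on the saving rather than a measured speed-up.

```python
def dense_pairs(seq_len: int) -> int:
    """Query-key pairs under causal dense attention: token i sees i tokens."""
    return seq_len * (seq_len + 1) // 2


def sparse_pairs(seq_len: int, k: int) -> int:
    """Query-key pairs when each token attends to at most k selected tokens."""
    if seq_len <= k:
        return dense_pairs(seq_len)
    # The first k tokens see everything before them; the rest are capped at k.
    return dense_pairs(k) + (seq_len - k) * k


SEQ_LEN = 128_000  # assumption: "128K" read as 128,000 tokens
TOP_K = 2_048      # assumption: illustrative selection budget

ratio = dense_pairs(SEQ_LEN) / sparse_pairs(SEQ_LEN, TOP_K)
print(f"dense / sparse main-attention pairs: {ratio:.1f}x")
```

Under these assumptions the main attention step touches a bit over 30 times fewer pairs at full context, and the gap shrinks to nothing for prompts shorter than k.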

Weaknesses — where it fell short

- Context ceiling. 128K was generous in late 2025 but is now outclassed by V4’s 1,000,000-token default.

- No native multimodal input. V3.2 is a text model; for vision you needed DeepSeek VL2.

- Custom inference kernels. DSA is not a drop-in attention backend. Running weights locally required the FlashMLA / DeepGEMM / TileLang kernels DeepSeek released alongside the model, and early versions of the inference code had a subtle RoPE bug that was fixed on 2025-11-17. Self-hosters who deployed before then had to update and re-test.

- Speciale’s tool-use gap. The Speciale reasoning variant didn’t support tool-calling during its temporary endpoint window, so agentic workloads had to stay on standard V3.2.

How to access V3.2 today

DeepSeek V3.2 remains available as open weights and is still referenced on DeepSeek’s pricing documentation during the V4 Preview window, but the default chat and API experience has moved to V4. Your three paths:

- Official API. Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint at `https://api.deepseek.com`. The API is stateless: clients must resend the entire conversation history on every request, which is a different contract from the web and mobile apps, which maintain session history for you. For new work, use the V4 model IDs directly; for legacy V3.2-era code, the old IDs still resolve (to V4-Flash now) until July 24, 2026. See DeepSeek API documentation.

- Self-host. Download from Hugging Face and serve with vLLM or SGLang. SGLang launches with `python -m sglang.launch_server --model deepseek-ai/DeepSeek-V3.2-Exp --tp 8 --dp 8 --enable-dp-attention`, and vLLM provided day-0 support. Hardware planning matters; start at the DeepSeek hardware calculator.

- Web and mobile apps. The apps now default to V4. V3.2 is no longer user-selectable there.

A minimal V4-Flash Python call using the OpenAI SDK looks like this:

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this contract in three bullets."}],
    temperature=1.0,
    max_tokens=1024,
)

print(resp.choices[0].message.content)
```

Swap `model` to `deepseek-v4-pro` for frontier-tier work, or add `reasoning_effort="high"` plus `extra_body={"thinking": {"type": "enabled"}}` to get `reasoning_content` alongside the final `content`.
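Because the API is stateless, a multi-turn conversation means carrying the message list yourself and resending it each time. The sketch below shows one way to do that without making any network calls; `add_turn` and `build_request` are hypothetical helper names, and the assistant reply is a placeholder for whatever the real call returns.

```python
def add_turn(history, role, content):
    """Return a new history list with one more message appended."""
    return history + [{"role": role, "content": content}]


def build_request(history, model="deepseek-v4-flash", max_tokens=1024):
    """Keyword arguments intended for client.chat.completions.create(**kwargs)."""
    return {"model": model, "messages": list(history), "max_tokens": max_tokens}


history = [{"role": "system", "content": "You are a contracts analyst."}]
history = add_turn(history, "user", "Summarise this contract in three bullets.")
# A real integration would call the API here and store the returned text:
# resp = client.chat.completions.create(**build_request(history))
history = add_turn(history, "assistant", "(model reply goes here)")
history = add_turn(history, "user", "Now list the termination clauses.")

# The second request carries all four messages, not just the newest one.
second_request = build_request(history)
```

Keeping the stable system prompt at the front of every request also matters for billing, since cache-hit pricing applies to the repeated prefix.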

Pricing snapshot

V3.2 pricing applied from September 29, 2025 (the V3.2-Exp drop) through the V4 release. Those rates — $0.28 per million input tokens (cache miss) and $0.42 per million output tokens — are no longer current. As of April 2026, V4-Flash has undercut them. Use the live DeepSeek API pricing page and the DeepSeek pricing calculator before you quote costs to a stakeholder.

Worked example — V4-Flash (what V3.2 workloads should migrate to)

1,000,000 API calls with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response, at V4-Flash rates ($0.0028 cache-hit / $0.14 cache-miss / $0.28 output, per 1M tokens):

- Cached input: 2,000 × 1,000,000 = 2.0B tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 0.2B tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 0.3B tokens × $0.28/M = $84.00

- Total: $117.60

Enumerating all three buckets matters — the user message on every call is still a cache miss against the cached system prefix, so leaving that line out understates real spend.
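The same arithmetic can be kept in a small function so the three buckets are never collapsed by accident. This is a sketch using the rates quoted in the worked example; the function name and dictionary keys are hypothetical, and the rates should be replaced with whatever the live pricing page shows.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the worked example in this article.
V4_FLASH_RATES = {
    "cache_hit": Decimal("0.0028"),
    "cache_miss": Decimal("0.14"),
    "output": Decimal("0.28"),
}


def workload_cost(calls, cached_in, uncached_in, out, rates):
    """Return per-bucket and total USD cost for a batch of identical calls."""
    per_million = Decimal(1_000_000)
    buckets = {
        "cache_hit": Decimal(calls * cached_in) / per_million * rates["cache_hit"],
        "cache_miss": Decimal(calls * uncached_in) / per_million * rates["cache_miss"],
        "output": Decimal(calls * out) / per_million * rates["output"],
    }
    buckets["total"] = sum(buckets.values())
    return buckets


costs = workload_cost(1_000_000, 2_000, 200, 300, V4_FLASH_RATES)
for name, amount in costs.items():
    print(f"{name}: ${amount:.2f}")
```

`Decimal` is used instead of floats so that small per-token rates do not pick up rounding noise when multiplied across billions of tokens.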

Best use cases

V3.2’s strengths mapped neatly to a handful of roles. If you’re picking a model today, these are the workloads where V3.2 (or its V4 successor) fits:

- Long-document analysis. Contracts, filings, codebases — see DeepSeek for legal research.

- Research assistance. Synthesising across papers and sources — see DeepSeek for research.

- Coding agents. Thinking-mode + tool-use is a workable agent loop — see DeepSeek for coding.

- RAG backends. Open weights + DSA favour RAG setups with large retrieved contexts — see the DeepSeek RAG tutorial.

Comparable alternatives

Anyone evaluating V3.2 in 2025 was typically also evaluating GPT-5-family models and Claude 4-family models. In the open-weights tier, the main competitors were Kimi K2 and Qwen-3-Max on coding; on reasoning, DeepSeek’s own R1 family was still the internal benchmark. For structured comparisons: DeepSeek vs ChatGPT, DeepSeek vs Claude, and the broader DeepSeek alternatives roundup.

Verdict

DeepSeek V3.2 did exactly what DeepSeek said it would: it kept V3.1-Terminus quality, halved long-context costs through DSA, and seeded the agentic tool-use and scaled-RL patterns that V4 then built on. It was the right model to run from its December 2025 release through April 2026. It is not the right model to start a new integration on today. Migrate to V4-Flash for chat workloads or V4-Pro for frontier agentic work, keeping the same base_url and changing only the model field.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek V3.2 still the current model?

No. DeepSeek V4 replaced V3.2 as the default model on April 24, 2026. V3.2 weights remain downloadable under MIT on Hugging Face, and legacy API IDs that used to route to V3.2 now route to deepseek-v4-flash until 2026-07-24 15:59 UTC. For current options, see DeepSeek V4 or the DeepSeek latest updates feed.

What does DeepSeek Sparse Attention actually do?

DSA replaces dense attention with a two-step process: a lightning indexer scores preceding tokens, then a fine-grained selector keeps only the top-k for the main attention calculation. This moves attention cost from quadratic to linear in sequence length with minimal quality loss, which is why V3.2 undercut V3.1-Terminus on long-context pricing. More in the DeepSeek research papers roundup.

How do I migrate from deepseek-chat or deepseek-reasoner to V4?

Change one line: set model="deepseek-v4-flash" (for chat/non-thinking) or model="deepseek-v4-pro" (for frontier work). The base_url stays at https://api.deepseek.com. Add reasoning_effort="high" plus the thinking flag for reasoning mode. Walkthrough: DeepSeek API getting started.

Can I still run DeepSeek V3.2 locally?

Yes. Weights are MIT-licensed on Hugging Face, and both vLLM and SGLang support DSA’s custom kernels. Plan for eight-GPU H100/H200 class hardware at minimum for the 671B MoE. Check the requirements first at DeepSeek system requirements and the setup path in install DeepSeek locally.

Does V3.2 support thinking mode and tool-use together?

Yes — this was one of V3.2’s notable additions. V3.2 was DeepSeek’s first model to integrate thinking directly into tool-use, and supports tool-use in both thinking and non-thinking modes. The Speciale variant was the exception: it ran without tool-calling during its temporary endpoint window. For a deeper look at reasoning-capable DeepSeek models, see DeepSeek R1.
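As a rough illustration of what a combined thinking and tool-use request can look like, the sketch below builds the keyword arguments for an OpenAI-compatible chat call. The `tools` list follows the standard OpenAI function-calling schema; the `get_weather` tool and the `build_tool_request` helper are hypothetical, and the `thinking` flag is the one quoted earlier in this article, so check the current API documentation before relying on it.

```python
def build_tool_request(question, thinking=True, model="deepseek-v4-flash"):
    """Keyword arguments intended for client.chat.completions.create(**kwargs)."""
    tools = [
        {
            "type": "function",
            "function": {
                "name": "get_weather",  # hypothetical tool for illustration
                "description": "Look up the current weather for a city.",
                "parameters": {
                    "type": "object",
                    "properties": {"city": {"type": "string"}},
                    "required": ["city"],
                },
            },
        }
    ]
    kwargs = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "tools": tools,
    }
    if thinking:
        # Thinking flag as described in this article; treat it as an assumption.
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


request = build_tool_request("Do I need an umbrella in Hangzhou today?")
```

When the model decides to call the tool, the response carries a tool call instead of final text; the client runs the function, appends the result as a `tool` message, and sends the full history back.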

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek V3.2-Exp model card on Hugging Face. V3.2-Exp open weights, MIT license, SGLang launch command. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Technical report: DeepSeek V3.2 technical report (arXiv:2512.02556). DSA architecture and benchmark parity with V3.1-Terminus. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.