A Practical DeepSeek for Developers Guide (V4, April 2026)

You inherited a service that calls `deepseek-chat`, your team lead wants the bill cut, and the V4 release notes mention a hard cutover date in July. What changes in your code, what stays the same, and is V4-Pro worth roughly twelve times the list per-token price of V4-Flash (about three times during the launch promo) for your workload? This guide answers those questions for working developers. DeepSeek for developers in April 2026 means a single OpenAI-compatible base URL, two open-weight Mixture-of-Experts models, a 1-million-token context, and a stateless `POST /chat/completions` endpoint that you already know how to call. Below: a quickstart, the parameters that matter, honest cost math for both tiers, and where DeepSeek falls short.

What changed for developers in April 2026

On April 24, 2026, DeepSeek released a preview of DeepSeek V4 as two open-weight Mixture-of-Experts models, both with a 1-million-token context window. Pro has 1.6T total parameters with 49B active; Flash has 284B total with 13B active. They share the same feature set (context window, JSON mode, tool calling, streaming, context caching) and differ in capability tier and price.

If your code currently calls `deepseek-chat` or `deepseek-reasoner`, you have a migration window. Both legacy IDs currently route to `deepseek-v4-flash` and retire on July 24, 2026 at 15:59 UTC; after that, requests using them fail. Migration is a one-line change to the `model=` field; the `base_url` stays the same.
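To keep the deadline visible at startup or in CI, a small guard along these lines may help. It is a sketch only: the function and constant names are hypothetical, and the timestamp is simply the retirement time quoted above.

```python
from datetime import datetime, timezone

# Retirement time for the legacy IDs, as quoted in this guide (15:59 UTC, July 24, 2026).
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)
LEGACY_MODEL_IDS = {"deepseek-chat", "deepseek-reasoner"}


def days_until_retirement(now: datetime) -> int:
    """Whole days left before the legacy IDs stop working (negative once passed)."""
    return (LEGACY_RETIREMENT - now).days


def check_model_id(model: str, now: datetime) -> str:
    """Return 'ok', 'legacy' (still routed, migrate soon), or 'retired'."""
    if model not in LEGACY_MODEL_IDS:
        return "ok"
    return "retired" if now >= LEGACY_RETIREMENT else "legacy"
```

Passing `now` explicitly, for example `datetime.now(timezone.utc)`, keeps the check easy to test.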

Quickstart: minimal Python and curl

The DeepSeek API is OpenAI-compatible. Chat requests go to `POST /chat/completions` against the base URL `https://api.deepseek.com`. The OpenAI Python SDK works unchanged: swap the base URL and key.

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_DEEPSEEK_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a terse code reviewer."},
        {"role": "user", "content": "Review this diff: ..."},
    ],
    temperature=0.0,
    max_tokens=2000,
)

print(resp.choices[0].message.content)
```

The same call in curl:

```shell
curl https://api.deepseek.com/chat/completions \
  -H "Authorization: Bearer $DEEPSEEK_API_KEY" \
  -H "Content-Type: application/json" \
  -d '{
    "model": "deepseek-v4-flash",
    "messages": [{"role": "user", "content": "ping"}],
    "temperature": 0.0
  }'
```

DeepSeek also ships an Anthropic-compatible surface against the same base URL, which is useful if you already use the Anthropic SDK or run agent harnesses like Claude Code. DeepSeek V4-Pro is confirmed compatible with Claude Code, OpenClaw, and OpenCode. For Claude Code: set ANTHROPIC_BASE_URL to DeepSeek’s API endpoint and ANTHROPIC_AUTH_TOKEN to your DeepSeek API key. See our DeepSeek OpenAI SDK compatibility notes for the full list of supported parameters.

Stateless API: the one thing the docs bury

The API does not remember prior turns. You must resend the full messages array on every request to sustain a conversation. Contrast that with DeepSeek chat on the web and mobile app, which keeps session history server-side. New developers routinely confuse the two surfaces and then debug a “memory loss bug” that does not exist.

For multi-turn agents, that means you own the history-trimming policy: drop earliest turns when you near the 1M-token cap, summarise stale tool output, and rely on context caching (covered below) to keep repeated prefixes cheap.
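One way to implement the "drop earliest turns" part of that policy is sketched below. It assumes every message has string `content`, and it uses a crude four-characters-per-token estimate rather than DeepSeek's tokenizer; `rough_token_count` and `trim_history` are hypothetical helper names, and you would swap in a real token counter for production use.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


def rough_token_count(text: str) -> int:
    """Crude estimate (about 4 characters per token). An assumption, not DeepSeek's tokenizer."""
    return max(1, len(text) // 4)


def trim_history(
    messages: List[Message],
    max_tokens: int,
    count: Callable[[str], int] = rough_token_count,
) -> List[Message]:
    """Keep all system messages plus the newest turns that fit within max_tokens."""
    system = [m for m in messages if m["role"] == "system"]
    turns = [m for m in messages if m["role"] != "system"]

    budget = max_tokens - sum(count(m["content"]) for m in system)
    kept: List[Message] = []
    # Walk backwards so the most recent turns are kept first.
    for msg in reversed(turns):
        cost = count(msg["content"])
        if cost > budget:
            break
        kept.append(msg)
        budget -= cost
    return system + list(reversed(kept))
```

Because the cut is made purely by size, the trimmed history can begin with an assistant turn; a stricter version might drop turns in user/assistant pairs.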

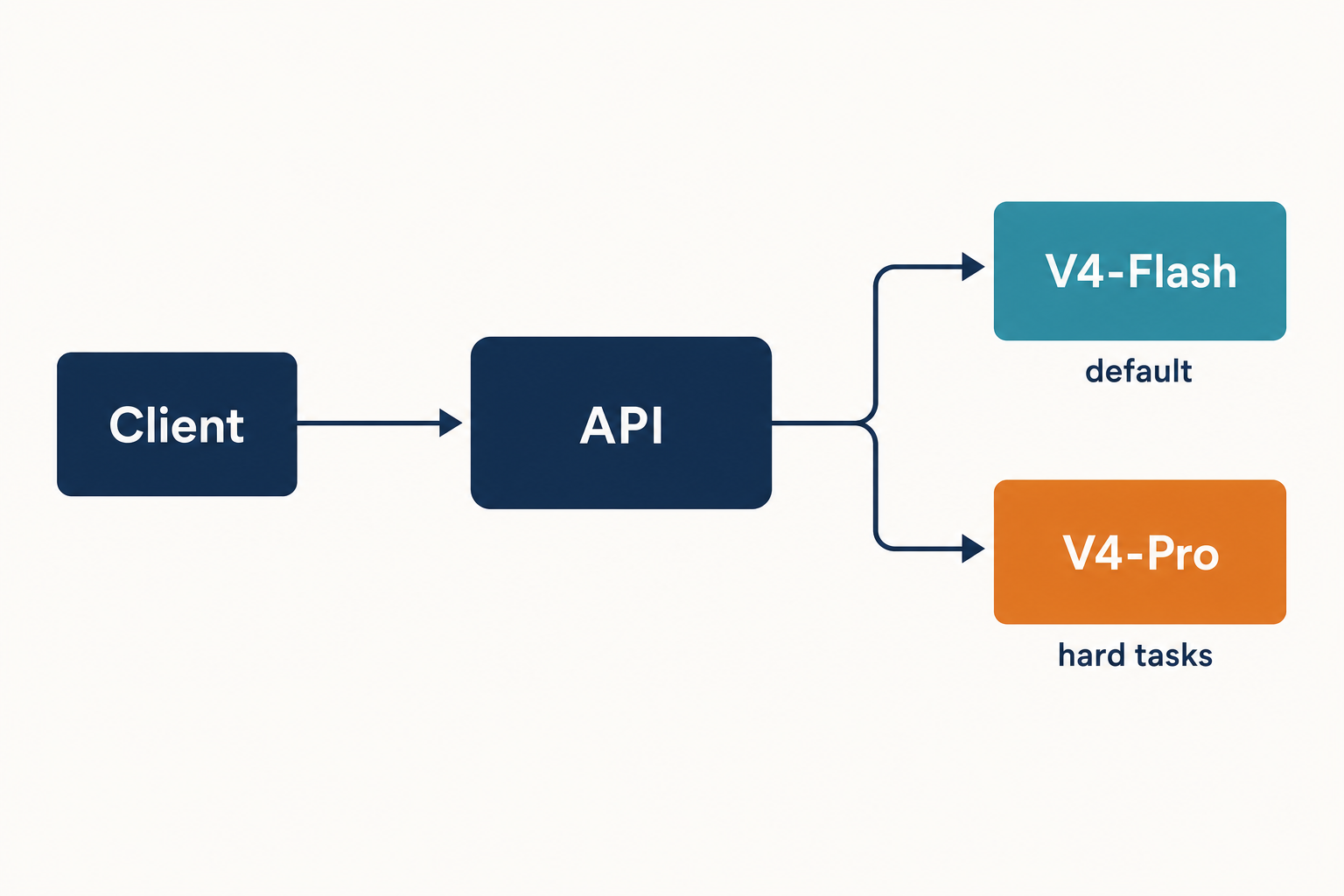

V4-Flash vs V4-Pro: which tier for which job

The two model IDs share the same wire protocol and feature set. Pick by capability needs and budget.

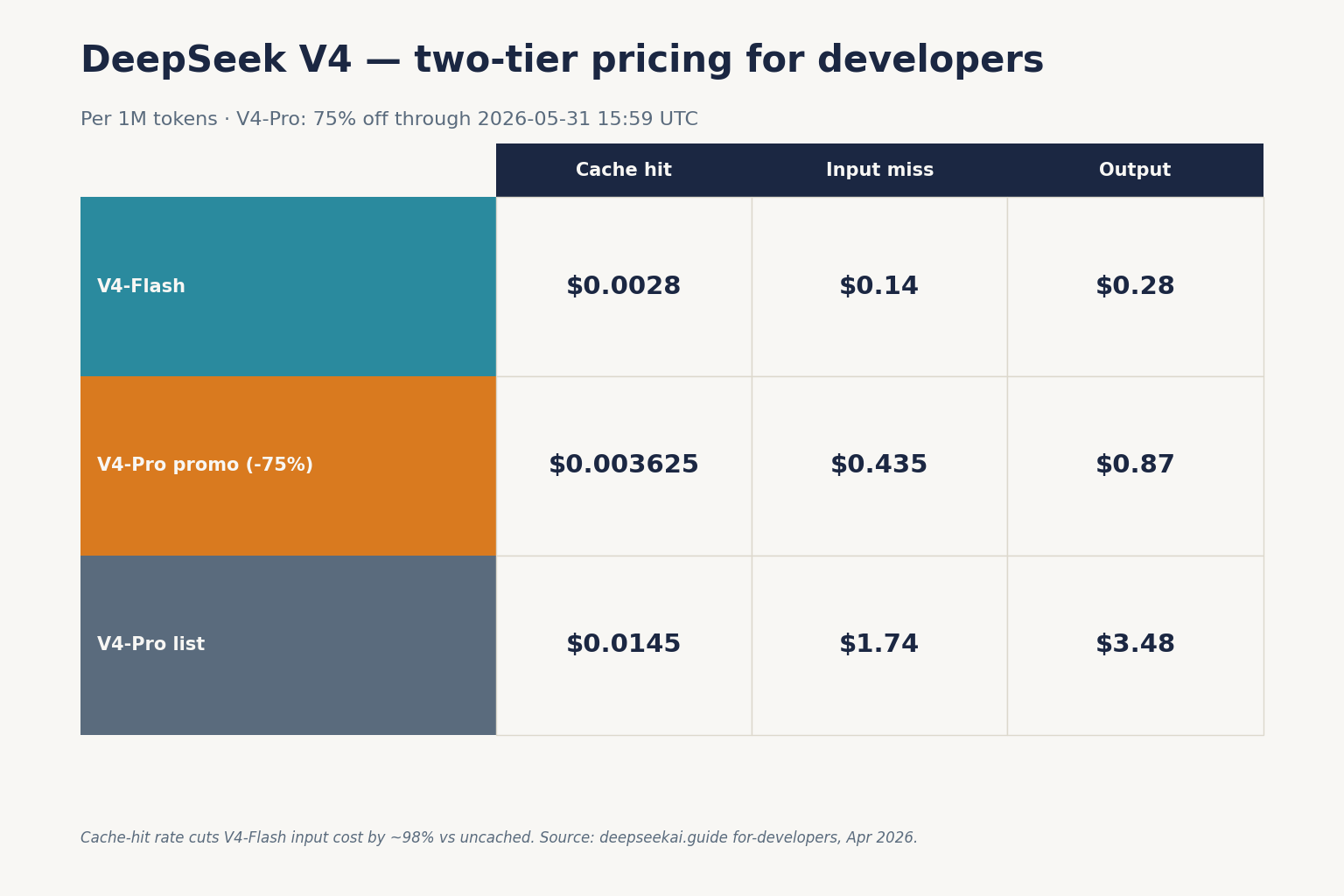

| Model | Total / active params | Cache-hit input | Cache-miss input | Output | Best for |
|---|---|---|---|---|---|
| deepseek-v4-flash | 284B / 13B | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M | High-volume chat, classification, retrieval, drafting |
| deepseek-v4-pro | 1.6T / 49B | $0.0145 / 1M (list; promo $0.003625 through 2026-05-31) | $0.435 promo / $1.74 list per 1M | $0.87 promo / $3.48 list per 1M | Agentic coding, competitive reasoning, hard tool use |

Prices were verified against DeepSeek’s pricing page as of April 25, 2026: $0.14 per million cache-miss input tokens and $0.28 per million output tokens for Flash, and $0.435 per million input and $0.87 per million output for Pro during the 75% promo through 2026-05-31 (list $1.74 / $3.48). The off-peak nighttime discount that V3 users may remember ended September 5, 2025 and was not reintroduced for V4, so do not assume it.

For coding work specifically, V4-Pro pays for itself when the task is hard. On coding benchmarks, DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%) and LiveCodeBench (93.5% vs 88.8%), and posts a Codeforces rating of 3206, for which no Claude score is reported. Claude Opus 4.6 holds a marginal lead on SWE-bench Verified (80.8% vs 80.6%), and a meaningful lead on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%). If you are running an autonomous coding agent, those numbers move the cost-per-completed-task math more than the headline per-token rate. DeepSeek for coding goes deeper on the agent setup.

Thinking mode is a parameter, not a model

V4 dropped the separate “reasoner” model ID. Both V4 tiers support three reasoning-effort settings via request parameters:

- Non-thinking (default): fastest path, no reasoning trace. Use for chat, classification, routine codegen. This is the only mode that supports FIM completion (Beta).

- Thinking (high): set `reasoning_effort="high"` with `extra_body={"thinking": {"type": "enabled"}}`. The response returns `reasoning_content` alongside the final `content`.

- Thinking-max: set `reasoning_effort="max"`. DeepSeek recommends setting the context window to at least 384K tokens for this mode. Use for the hardest reasoning, math, and multi-step planning.

```python
resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan a zero-downtime DB migration."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)

print(resp.choices[0].message.reasoning_content)  # the trace
print(resp.choices[0].message.content)  # the answer
```
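Thinking-mode replies can be long, so you may prefer to stream them. The sketch below joins streamed deltas into a trace and an answer. It assumes each streamed delta exposes `reasoning_content` and `content` fields analogous to the non-streamed message, which you should confirm against the API docs; `collect_stream` and `fake_chunk` are hypothetical names, and `fake_chunk` exists only so the logic can be exercised offline.

```python
from types import SimpleNamespace
from typing import Iterable, Tuple


def collect_stream(chunks: Iterable) -> Tuple[str, str]:
    """Join streamed deltas into (reasoning, answer) strings."""
    reasoning_parts, answer_parts = [], []
    for chunk in chunks:
        if not chunk.choices:
            continue  # skip chunks that carry no choices
        delta = chunk.choices[0].delta
        # getattr: reasoning_content is assumed to be absent when thinking is off.
        reasoning = getattr(delta, "reasoning_content", None)
        if reasoning:
            reasoning_parts.append(reasoning)
        content = getattr(delta, "content", None)
        if content:
            answer_parts.append(content)
    return "".join(reasoning_parts), "".join(answer_parts)


def fake_chunk(reasoning=None, content=None):
    """Offline stand-in for one streamed chunk (hypothetical, for local testing only)."""
    delta = SimpleNamespace(reasoning_content=reasoning, content=content)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])
```

With a real client, you would pass the iterator returned by `client.chat.completions.create(..., stream=True)` straight into `collect_stream`.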

Parameters every developer should know

DeepSeek publishes specific temperature defaults by task type, which are worth matching rather than guessing:

- `temperature=0.0`: code generation and mathematics
- `temperature=1.0`: data analysis and data cleaning
- `temperature=1.3`: general conversation and translation
- `temperature=1.5`: creative writing
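If several call sites need these values, a lookup table keeps them in one place. This is a minimal sketch: the task labels are hypothetical names chosen for the example, and the fallback of 1.0 for unknown tasks is an assumption, not a documented default.

```python
# Temperature recommendations by task type, as listed above.
# The dictionary keys are hypothetical labels chosen for this sketch.
RECOMMENDED_TEMPERATURE = {
    "code": 0.0,
    "math": 0.0,
    "data_analysis": 1.0,
    "conversation": 1.3,
    "translation": 1.3,
    "creative_writing": 1.5,
}


def temperature_for(task: str, default: float = 1.0) -> float:
    """Look up the recommended temperature, falling back to `default` for unknown tasks."""
    return RECOMMENDED_TEMPERATURE.get(task, default)
```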

Other parameters worth touching first:

- `top_p`: nucleus sampling. Pick this or temperature, not both.
- `max_tokens`: output cap. V4 supports up to 384,000 output tokens. Set this high enough that JSON-mode replies are not truncated.
- `stream=true`: server-sent events. With thinking enabled, reasoning content streams alongside final content.
- `response_format={"type": "json_object"}`: JSON mode. Designed to return valid JSON, not guaranteed; the prompt should include the word “json” and a small example schema, and you should still handle occasional empty content. See DeepSeek API JSON mode for the full pattern.
- Tool calling: OpenAI-compatible function-calling shape. Works in both thinking and non-thinking modes.
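Because JSON mode can still return empty or truncated content, it is worth parsing defensively. The sketch below shows one possible shape: `parse_json_reply` and `ask_for_json` are hypothetical helpers, and `call_model` stands in for whatever function issues your request and returns the reply text.

```python
import json
from typing import Callable, Optional


def parse_json_reply(content: Optional[str]) -> Optional[dict]:
    """Return the parsed object, or None if the reply is empty, truncated, or not an object."""
    if not content or not content.strip():
        return None
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None


def ask_for_json(call_model: Callable[[], Optional[str]], attempts: int = 3) -> dict:
    """Call `call_model` until it yields a valid JSON object, up to `attempts` times."""
    for _ in range(attempts):
        parsed = parse_json_reply(call_model())
        if parsed is not None:
            return parsed
    raise ValueError(f"no valid JSON object after {attempts} attempts")
```

Retrying blindly costs tokens, so a small `attempts` value and a raised `max_tokens` on truncation are usually the more economical combination.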

For deeper coverage, DeepSeek API best practices walks through error handling, retries, and idempotency.

Cost math: what 1M calls actually costs

The single most common mistake in DeepSeek cost estimates is collapsing input tokens into one bucket. The cache-hit rate only applies to the repeated prefix the provider has already seen; each new user message is a cache miss against that prefix.

Worked example — V4-Flash. 1,000,000 calls, with a cached 2,000-token system prompt, 200 tokens of fresh user input per call, and 300 tokens of output per call:

```
Input, cache hit  : 2,000,000,000 tokens × $0.0028 / 1M = $5.60
Input, cache miss :   200,000,000 tokens × $0.14   / 1M = $28.00
Output            :   300,000,000 tokens × $0.28   / 1M = $84.00
                                                          -------
Total                                                     $117.60
```

Same workload — V4-Pro.

```
Input, cache hit  : 2,000,000,000 tokens × $0.0145 / 1M        = $29.00
Input, cache miss :   200,000,000 tokens × $1.74   / 1M (list) = $348.00
Output            :   300,000,000 tokens × $3.48   / 1M (list) = $1,044.00
                                                                 ---------
Total                                                            $1,421.00
```

That is the real Flash-vs-Pro economics: roughly 12× the spend at list prices ($1,421.00 vs $117.60) for the frontier-tier model on identical traffic. If you want to plug your own token estimates in, the DeepSeek pricing calculator handles both tiers, and the DeepSeek token counter sizes individual prompts. DeepSeek context caching covers what the provider considers a cacheable prefix.
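The same arithmetic is easy to script for your own traffic. The sketch below reproduces both totals from the worked example; the `Rates` class and `workload_cost` function are hypothetical names, the rates are copied from this guide's table, and the model assumes every call gets a cache hit on the shared prefix.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates copied from the table in this guide; re-check the pricing page before relying on them.
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)


def workload_cost(rates: Rates, calls: int, cached_in: int, fresh_in: int, out: int) -> float:
    """Total USD for `calls` requests, keeping cached and fresh input tokens separate."""
    per_call = (
        cached_in * rates.cache_hit
        + fresh_in * rates.cache_miss
        + out * rates.output
    )
    return round(calls * per_call / 1_000_000, 2)


flash_total = workload_cost(FLASH, 1_000_000, cached_in=2_000, fresh_in=200, out=300)
pro_total = workload_cost(PRO_LIST, 1_000_000, cached_in=2_000, fresh_in=200, out=300)
print(f"Flash ${flash_total:,.2f}  Pro ${pro_total:,.2f}")
```

In practice the first request, and any request after the cache expires, pays the cache-miss rate on the prefix too, so treat the result as a lower bound.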

Workflows where DeepSeek pays off

Five concrete patterns from production use:

1. Agentic coding via Claude Code or OpenCode

Point your existing Claude Code config at DeepSeek’s Anthropic-compatible endpoint, swap the model string to deepseek-v4-pro, and you keep the harness. The cost delta over Claude Opus is large enough to make scheduled, long-running coding agents economically viable.

2. Long-context code review

The 1M-token context window means you can pass an entire mid-sized repository as a single prompt and ask for review across files. Cache the codebase prefix; iterate on per-file user messages. Expect cache-miss costs only on the new diff.
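To get cache hits, the repeated part of the prompt has to come first and stay identical across requests. A minimal sketch of that layout, assuming the repository text fits in the system message; `build_review_messages` and the instruction string are hypothetical, not taken from DeepSeek's docs.

```python
from typing import Dict, List

REVIEW_INSTRUCTIONS = "You are a terse code reviewer."


def build_review_messages(repo_snapshot: str, diff: str) -> List[Dict[str, str]]:
    """Put the unchanging repository text first and the per-request diff last."""
    return [
        # Identical across requests, so it can serve as the shared prefix.
        {
            "role": "system",
            "content": REVIEW_INSTRUCTIONS + "\n\nRepository snapshot:\n" + repo_snapshot,
        },
        # Only this message changes from one review to the next.
        {"role": "user", "content": "Review this diff:\n" + diff},
    ]
```

Any change to the snapshot, even a reordered file, alters the prefix, so it pays to serialise the repository deterministically.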

3. Local development with VS Code

For inline completions and chat in the editor, point a Continue or Cline-style extension at DeepSeek’s base URL with deepseek-v4-flash. The latency and per-token cost beat almost every commercial alternative for non-frontier work. DeepSeek with VS Code walks through the configuration.

4. Retrieval-augmented generation

Combine an embedding model with V4-Flash for cost-efficient RAG; reserve V4-Pro for synthesis steps where reasoning quality matters. DeepSeek RAG tutorial covers a working pipeline using DeepSeek with LangChain.

5. Self-hosted inference

Both V4 models ship under MIT on Hugging Face. Pro is 865GB on Hugging Face, Flash is 160GB. Flash is realistic on a single high-RAM workstation; Pro needs serious infrastructure. Install DeepSeek locally and running DeepSeek on Ollama cover the practical setups.

Limitations to plan around

DeepSeek for developers is not a universal answer. Three honest gaps:

- Multimodal. V4 is text only. DeepSeek says it is “working on incorporating multimodal capabilities to our models” but the current release does not accept images, audio, or video. If you need vision, route those calls elsewhere or use the older DeepSeek VL2.

- Frontier knowledge tasks. HLE (Humanity’s Last Exam) at 37.7% puts V4-Pro below Claude (40.0%), GPT-5.4 (39.8%), and well below Gemini-3.1-Pro (44.4%). SimpleQA-Verified at 57.9% versus Gemini’s 75.6% reveals a meaningful factual knowledge retrieval gap. If your use case requires accurate real-world knowledge recall — not just code generation — Gemini holds a clear edge.

- Data residency. Conversations against the public API are processed on servers subject to Chinese law. For regulated workloads, self-host the open weights or use a regional aggregator. DeepSeek privacy covers the trade-offs.

Migration checklist (deepseek-chat → V4)

- Inventory every call site that sends `model="deepseek-chat"` or `"deepseek-reasoner"`.

- Replace with `"deepseek-v4-flash"` as the default; promote specific call sites to `"deepseek-v4-pro"` based on quality testing.

- Where you used `deepseek-reasoner`, add `reasoning_effort="high"` and the `extra_body` thinking flag.

- Re-tune `max_tokens` for thinking mode, because reasoning traces consume output tokens.

- Re-baseline your monthly cost projection against the new rate card before the July 24, 2026 cutover. Compare with peers in our DeepSeek vs ChatGPT writeup if you are in an active vendor review.
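The first three checklist items can be applied mechanically while you audit call sites. The helper below is a sketch under stated assumptions: `migrate_request` is a hypothetical name, it maps both legacy IDs to `deepseek-v4-flash` (the current routing described earlier), and it leaves any promotion to `deepseek-v4-pro` to you.

```python
from typing import Any, Dict

# Both legacy IDs currently route to V4-Flash, per this guide.
LEGACY_TO_V4 = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def migrate_request(kwargs: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of chat-completion kwargs with legacy model IDs replaced."""
    migrated = dict(kwargs)
    model = migrated.get("model")
    if model not in LEGACY_TO_V4:
        return migrated
    migrated["model"] = LEGACY_TO_V4[model]
    if model == "deepseek-reasoner":
        # Thinking is now a request parameter rather than a model ID.
        migrated.setdefault("reasoning_effort", "high")
        extra_body = dict(migrated.get("extra_body") or {})
        extra_body.setdefault("thinking", {"type": "enabled"})
        migrated["extra_body"] = extra_body
    return migrated
```

A wrapper like this is best treated as temporary scaffolding; once every call site names a V4 model explicitly, it can be deleted.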

For the broader landscape of DeepSeek use cases, see the category hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I migrate from deepseek-chat to V4?

Change the model field from deepseek-chat to deepseek-v4-flash (or deepseek-v4-pro for harder tasks). The base_url stays the same, and the OpenAI SDK call shape does not change. Legacy IDs route to V4-Flash today and retire on July 24, 2026 at 15:59 UTC. See the DeepSeek API documentation for the full migration notes.

What is the difference between DeepSeek V4-Flash and V4-Pro?

Flash has 284B total / 13B active parameters and costs $0.14 input miss / $0.28 output per 1M tokens. Pro has 1.6T total / 49B active and costs $0.435 / $0.87 during the 75% promo through 2026-05-31 (list $1.74 / $3.48). Both share the 1M-token context, JSON mode, tool calling, streaming, and three reasoning-effort modes. Pro wins on hard coding and reasoning benchmarks; Flash is the right default for high-volume work. The full breakdown lives in our DeepSeek V4-Flash and DeepSeek V4-Pro pages.

Does the DeepSeek API remember conversation history?

No. The POST /chat/completions endpoint is stateless. You must resend the full messages array on every request. The web chat and mobile app keep session history for end users, but the API does not. Context caching makes resending repeated prefixes cheap, not free — see the DeepSeek context caching guide for the cache-hit pricing.

Can I use the OpenAI Python SDK with DeepSeek?

Yes. The DeepSeek API matches OpenAI’s Chat Completions wire format. Set base_url="https://api.deepseek.com" and your DeepSeek API key, and the OpenAI SDK works without changes to your call sites. DeepSeek also exposes an Anthropic-compatible surface against the same base URL. DeepSeek OpenAI SDK compatibility covers parameter coverage and known gaps.

Is DeepSeek a good fit for coding agents?

For agentic coding, V4-Pro is competitive with Claude Opus on Terminal-Bench 2.0, LiveCodeBench, and Codeforces, at roughly one-seventh the per-token output cost, and works with Claude Code via the Anthropic-compatible endpoint. V4-Flash is a strong fit for editor-side completions and lower-stakes agent steps. See DeepSeek Coder vs Copilot for an editor-focused comparison.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.