How DeepSeek API Context Caching Cuts Input Costs by ~98% on V4

If you run an agent with a 20,000-token system prompt and call it a thousand times an hour, the bill on most frontier APIs is brutal. DeepSeek API context caching is the feature that quietly fixes that problem — repeated prompt prefixes are detected on the server, served from a hard-disk cache, and billed at roughly one-fiftieth of the normal V4-Flash input rate (cache-hit $0.0028 vs miss $0.14, a 98% reduction since 2026-04-26). There is nothing to configure. No `cache_control` breakpoints, no TTL knobs, no extra SDK calls.

This guide walks through exactly how the cache works on DeepSeek V4-Flash and V4-Pro, what the `usage` fields tell you, two worked cost examples, and the prompt-structure habits that move your hit rate from accidental to deliberate.

What DeepSeek API context caching actually does

DeepSeek’s API ships a server-side disk cache that detects repeated prompt prefixes across requests on the same account and serves them at a discounted rate. The technology is enabled by default for all users, allowing them to benefit without needing to modify their code. Each user request will trigger the construction of a hard disk cache, and if subsequent requests have overlapping prefixes with previous requests, the overlapping part will only be fetched from the cache, which counts as a cache hit.

The model still generates the response from scratch — caching is only a billing and latency optimisation on the input side. The hard disk cache only matches the prefix part of the user’s input; the output is still generated through computation and inference, and it is influenced by parameters such as temperature, introducing randomness.

A few operational details that catch people out:

- The cache system uses 64 tokens as a storage unit; content shorter than 64 tokens will not be cached. Several third-party guides report a practical minimum closer to 1,024 tokens for reliable hits on V4, so treat 64 tokens as the documented floor and roughly 1,024 tokens as the working target. In either case the shared prefix must match byte-for-byte.

- The cache system works on a “best-effort” basis and does not guarantee a 100% cache hit rate. Cache construction takes seconds.

- Only requests with identical prefixes (starting from the 0th token) will be considered duplicates. Partial matches in the middle of the input will not trigger a cache hit.

- Each user’s cache is isolated and logically invisible to others, ensuring data privacy and security. Unused cache entries are automatically cleared after a period.
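The prefix rule and the 64-token unit can be pictured with a toy model. This is a sketch under stated assumptions, not DeepSeek's implementation: the helper name `cacheable_prefix_tokens` is hypothetical, tokens are stand-in integers, and rounding down to whole 64-token units is an assumption drawn from the storage-unit description above.

```python
CACHE_UNIT = 64  # storage unit in tokens, as described above


def cacheable_prefix_tokens(previous: list[int], current: list[int]) -> int:
    """Toy model: length of the shared prefix from token 0,
    rounded down to whole 64-token units (rounding is an assumption)."""
    shared = 0
    for a, b in zip(previous, current):
        if a != b:
            break  # the first mismatch ends the prefix; later matches do not count
        shared += 1
    return (shared // CACHE_UNIT) * CACHE_UNIT


# Stand-in token IDs: 200 shared tokens followed by a different tail.
first = list(range(200)) + [9001, 9002]
second = list(range(200)) + [7001, 7002, 7003]
print(cacheable_prefix_tokens(first, second))         # 192 (three full units)

# Changing token 0 leaves nothing to match, even though the rest is identical.
changed_start = [-1] + first[1:]
print(cacheable_prefix_tokens(first, changed_start))  # 0
```

The second case is the one that catches people out: a one-token change at the very front wipes out the match for everything behind it.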

If you want to verify caching is actually working in your account, our DeepSeek API tester can fire two identical-prefix requests and surface the usage object in the response.

Pricing — V4-Flash and V4-Pro side by side

DeepSeek V4 launched on April 24, 2026 as two open-weight MoE tiers: deepseek-v4-pro (1.6T total / 49B active) and deepseek-v4-flash (284B / 13B active). Both tiers support context caching. The cache-hit price is the headline saving; the cache-miss rate is what you pay for the variable tail of each request.

| Tier | Input (cache hit) | Input (cache miss) | Output | Hit vs miss saving |
|---|---|---|---|---|
| deepseek-v4-flash | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M | ~98% |
| deepseek-v4-pro | $0.003625 / 1M (promo, list $0.0145) | $0.435 / 1M (promo, list $1.74) | $0.87 / 1M (promo, list $3.48) | ~99% |

Rates as of April 2026, taken from DeepSeek’s pricing page on the V4 Preview launch — verify on the live page before committing to a budget. Note that the older deepseek-chat and deepseek-reasoner IDs now bill as V4-Flash aliases; the ID deprecation deadline is July 24, 2026 (15:59 UTC, per DeepSeek’s migration notice). The night-time off-peak discount that existed under V3 is gone — DeepSeek discontinued it on 2025-09-05 and did not reintroduce it with V4. For the full breakdown across tiers, see the DeepSeek API pricing reference.
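If you want to re-derive the saving column when rates change, the arithmetic is one line. The sketch below hard-codes the per-1M-token rates quoted above; the `RATES` dictionary and the `hit_saving_pct` helper are illustrative names, not part of any SDK.

```python
# USD per 1M input tokens, copied from the rates quoted above (April 2026).
RATES = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14},
    "deepseek-v4-pro (promo)": {"hit": 0.003625, "miss": 0.435},
    "deepseek-v4-pro (list)": {"hit": 0.0145, "miss": 1.74},
}


def hit_saving_pct(hit: float, miss: float) -> float:
    """Percentage saved on an input token when it is a cache hit instead of a miss."""
    return (1 - hit / miss) * 100


for tier, rate in RATES.items():
    print(f"{tier}: {hit_saving_pct(rate['hit'], rate['miss']):.1f}% cheaper on a hit")
```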

Quickstart: a request that will hit the cache on the second call

The API is OpenAI-compatible and stateless — meaning your client must resend the conversation history on every call; DeepSeek does not store turns server-side. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. Here is a minimal Python example using the OpenAI SDK:

    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_DEEPSEEK_KEY",
    )

    SYSTEM = "You are a senior financial analyst. " * 200  # ~2,000 tokens, stable

    def ask(question: str):
        resp = client.chat.completions.create(
            model="deepseek-v4-flash",
            messages=[
                {"role": "system", "content": SYSTEM},
                {"role": "user", "content": question},
            ],
            temperature=1.0,
            max_tokens=1024,
        )
        print(resp.usage)
        return resp.choices[0].message.content

    ask("Summarise the Q1 report.")
    ask("Now analyse profitability.")  # second call → cache hit on the system prefix

The same call works against deepseek-v4-pro with no other change. To enable thinking mode on either tier, add reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}; the model then returns reasoning_content alongside the final content. DeepSeek also exposes an Anthropic-compatible surface against the same base URL if your stack already uses the Anthropic SDK. Full setup walk-through: DeepSeek API getting started.

Reading the usage object

Every response includes a usage object with two fields you should log on every call:

- prompt_cache_hit_tokens: input tokens served from the cache, billed at the hit rate.

- prompt_cache_miss_tokens: input tokens that were not cached, billed at the miss rate.

If you use LiteLLM as a router, it normalises these fields into the OpenAI-style shape: a prompt_tokens_details object containing cached_tokens, the number of tokens that were cache hits for that call. The first call to a brand-new prefix will report zero hit tokens; that is expected. Cache construction happens in the background, and the second identical-prefix call should report most of the prefix as hits.
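A small helper makes those fields easier to log. This is a minimal sketch that assumes you have already converted the usage object to a plain dict (with the OpenAI Python SDK, something like `resp.usage.model_dump()` should do it, but check your SDK version). The function name `cache_hit_ratio` and the sample numbers are illustrative, not real API output.

```python
def cache_hit_ratio(usage: dict) -> float:
    """Share of input tokens served from cache for one response (0.0 to 1.0)."""
    hit = usage.get("prompt_cache_hit_tokens") or 0
    miss = usage.get("prompt_cache_miss_tokens") or 0
    if hit == 0 and miss == 0:
        # Fallback for the OpenAI-style shape described above (e.g. via LiteLLM).
        details = usage.get("prompt_tokens_details") or {}
        hit = details.get("cached_tokens") or 0
        miss = max((usage.get("prompt_tokens") or 0) - hit, 0)
    total = hit + miss
    return hit / total if total else 0.0


# Illustrative numbers only, not captured from a real response.
native = {"prompt_cache_hit_tokens": 1984, "prompt_cache_miss_tokens": 216}
routed = {"prompt_tokens": 2200, "prompt_tokens_details": {"cached_tokens": 1984}}
print(f"{cache_hit_ratio(native):.1%}")  # 90.2%
print(f"{cache_hit_ratio(routed):.1%}")  # 90.2%
```

Returning 0.0 for an empty usage dict keeps the helper safe to call on error responses that carry no token counts.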

Worked cost example A — V4-Flash agent

One million calls, each with a 2,000-token system prompt that stays constant, a 200-token user message that changes every time, and a 300-token reply. Tier: deepseek-v4-flash.

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028 / M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14 / M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28 / M = $84.00

- Total: $117.60

Without caching — i.e. if every input token billed at the miss rate — the input portion alone would be 2,200,000,000 × $0.14 / M = $308.00, taking the total to $392.00. Caching saves $274.40 across the run, or about 70% on that workload (the cache-hit row alone is now 98% cheaper than miss, since the 2026-04-26 rate drop).
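The same arithmetic as a function, so you can swap in your own token profile. It is a sketch that assumes a perfect hit rate on the stable prefix (the cache is best-effort, so real bills will land somewhat higher); `workload_cost` is an illustrative name and the rates are the V4-Flash figures quoted above.

```python
def workload_cost(calls: int, cached_in: int, uncached_in: int, out: int,
                  hit_rate: float, miss_rate: float, out_rate: float) -> float:
    """Total USD for a workload; token counts are per call, rates are per 1M tokens."""
    per_million = 1_000_000
    cached = calls * cached_in / per_million * hit_rate
    uncached = calls * uncached_in / per_million * miss_rate
    output = calls * out / per_million * out_rate
    return cached + uncached + output


# Example A on deepseek-v4-flash: 2,000 cached + 200 uncached input, 300 output.
with_cache = workload_cost(1_000_000, 2_000, 200, 300, 0.0028, 0.14, 0.28)
# Same workload with every input token billed at the miss rate.
no_cache = workload_cost(1_000_000, 0, 2_200, 300, 0.0028, 0.14, 0.28)

print(f"with cache: ${with_cache:.2f}")   # with cache: $117.60
print(f"no cache:   ${no_cache:.2f}")     # no cache:   $392.00
print(f"saving:     {1 - with_cache / no_cache:.0%}")  # saving:     70%
```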

Worked cost example B — V4-Pro agent

Same workload, frontier tier. Tier: deepseek-v4-pro.

- Cached input: 2,000,000,000 × $0.003625 / M (promo) = $7.25

- Uncached input: 200,000,000 × $0.435 / M (promo) = $87.00

- Output: 300,000,000 × $0.87 / M (promo) = $261.00

- Total: $355.25 (V4-Pro 75% promo through 2026-05-31; list-price total is $1,421.00)

The Pro example shows two things. First, caching is more valuable in absolute dollars on the Pro tier: at promo rates the per-million-token gap between hit and miss is about $0.43 on Pro versus $0.137 on Flash (and about $1.73 on Pro at list). Second, output dominates frontier-tier spend. If your workload doesn’t actually need V4-Pro reasoning, V4-Flash is roughly 3× cheaper on this workload at promo rates ($117.60 versus $355.25) and roughly 12× cheaper at list prices ($117.60 versus $1,421.00). For a quick sanity check on your own numbers, the DeepSeek pricing calculator lets you plug in your hit rate and message profile.
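To plug in your own hit rate without a calculator, the effective input price is a weighted average of the hit and miss rates. The sketch below is an illustration with a hypothetical helper name, `blended_input_rate`; the hit fraction comes from the example workload (2,000 cached tokens out of 2,200 input tokens per call).

```python
def blended_input_rate(hit_fraction: float, hit_rate: float, miss_rate: float) -> float:
    """Effective USD per 1M input tokens when hit_fraction of input tokens are cache hits."""
    if not 0.0 <= hit_fraction <= 1.0:
        raise ValueError("hit_fraction must be between 0 and 1")
    return hit_fraction * hit_rate + (1 - hit_fraction) * miss_rate


hit_fraction = 2_000 / 2_200  # share of each call's input that is the stable prefix

flash = blended_input_rate(hit_fraction, 0.0028, 0.14)
pro_promo = blended_input_rate(hit_fraction, 0.003625, 0.435)

print(f"V4-Flash effective input rate: ${flash:.4f} / 1M")
print(f"V4-Pro (promo) effective input rate: ${pro_promo:.4f} / 1M")
```

Multiplying either result by 2,200 (the workload's input volume in millions of tokens) reproduces the input subtotals in the worked examples.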

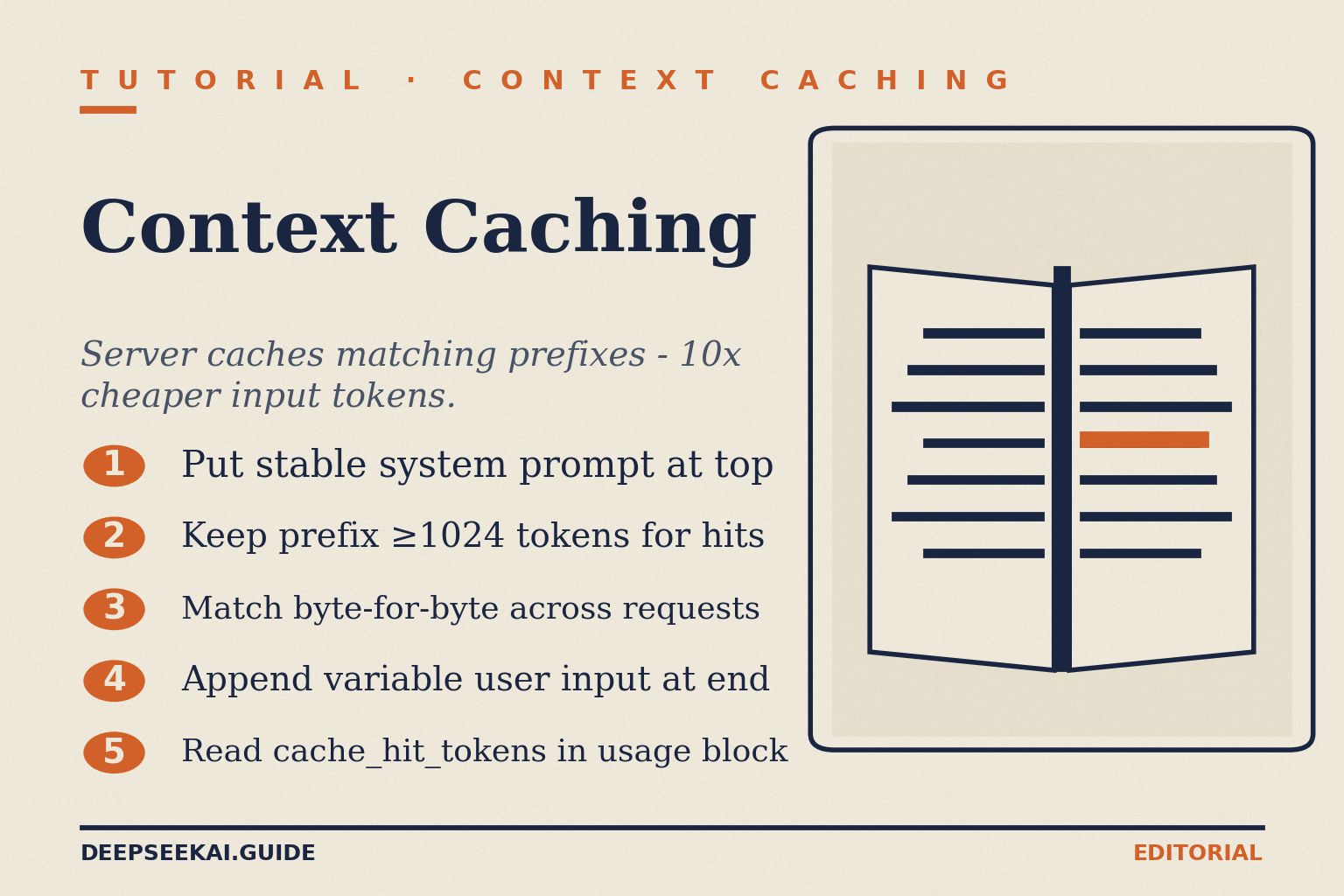

Five prompt patterns that maximise cache hits

The cache only matches identical prefixes from token 0. That single rule drives every optimisation below.

- Put stable content first. System prompt → tool schemas → retrieved documents → finally the user’s question. Anything that changes per call must sit at the end.

- Pin the system prompt byte-for-byte. Even rewording “You are a helpful assistant.” to “You are a helpful AI assistant.” invalidates the prefix for every downstream call. Hash your system prompt and alert if it changes.

- Freeze few-shot example order. Re-shuffling examples between calls is one of the most common reasons hit rates collapse. Keep the same examples in the same order; only the trailing user task changes.

- Move volatile metadata to the end. Timestamps, request IDs, user IDs, A/B flags — none of these belong before the cacheable prefix. Append them to the final user message.

- Pre-chunk long documents. If you swap a 500K-token document on every call, the document is not a cacheable prefix. Decide on a small set of canonical chunks, reference them by ID, and keep that ordering stable across requests.
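Several of these habits can be enforced in one message-building function. This is a minimal sketch, not a prescribed layout: `build_messages` and `prompt_fingerprint` are hypothetical helpers, the prompt and few-shot text are placeholders, and it assumes that an identical leading run of messages produces an identical token prefix on the server.

```python
import hashlib
import json


def prompt_fingerprint(text: str) -> str:
    """SHA-256 of the exact bytes, so any rewording changes the fingerprint."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def build_messages(system_prompt: str, few_shot: list[dict],
                   question: str, metadata: dict) -> list[dict]:
    """Stable content first (system prompt, fixed-order examples), volatile content last."""
    stable_prefix = [{"role": "system", "content": system_prompt}, *few_shot]
    # Volatile metadata rides at the tail of the final user message.
    tail = f"{question}\n\n[request metadata] {json.dumps(metadata, sort_keys=True)}"
    return stable_prefix + [{"role": "user", "content": tail}]


SYSTEM_PROMPT = "You are a helpful assistant."  # placeholder
FEW_SHOT = [  # placeholder examples, always in the same order
    {"role": "user", "content": "Classify: 'refund not received'"},
    {"role": "assistant", "content": "billing"},
]

first = build_messages(SYSTEM_PROMPT, FEW_SHOT, "Classify: 'app crashes'", {"request_id": "r-1"})
second = build_messages(SYSTEM_PROMPT, FEW_SHOT, "Classify: 'reset password'", {"request_id": "r-2"})

print(first[:-1] == second[:-1])  # True: everything before the last message is identical
print(prompt_fingerprint(SYSTEM_PROMPT)
      == prompt_fingerprint("You are a helpful AI assistant."))  # False: one word breaks it
```

Storing the fingerprint alongside each deploy gives you a cheap alert when someone edits the system prompt without realising it resets the cache.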

For a deeper treatment of prompt structure, see DeepSeek prompt engineering. If you are wiring this into a retrieval pipeline, the DeepSeek RAG tutorial shows where to put the retrieved chunks for cache-friendliness.

How DeepSeek caching compares to OpenAI and Anthropic

All three providers cache prompt prefixes; the differences are in what you pay, who manages it, and what minimum size the cache will accept.

| Provider | How it’s enabled | Min prefix | Cache-hit discount | Storage / write cost |
|---|---|---|---|---|
| DeepSeek (V4) | Automatic; no config | ~1,024 tokens (64-token unit) | ~98% (Flash) / ~99% (Pro) | None |
| OpenAI | Automatic | 1,024 tokens | 0.25× or 0.50× input price, depending on model | None |
| Anthropic | Explicit cache_control breakpoints | Model-dependent | ~90% | Charges for cache_creation_input_tokens (tokens written to cache) |

The “automatic + no write cost” combination is what makes DeepSeek caching painless. You don’t pay to populate the cache, you don’t pay to keep it warm, and you don’t manage cache keys. The trade-off is that DeepSeek gives you less control: there is no equivalent to OpenAI’s prompt_cache_key routing hint or Anthropic’s manual breakpoints. If a hit rate looks low, the only fix is restructuring your prompt. For a broader head-to-head on the API surfaces, see DeepSeek vs Claude and the DeepSeek API docs and guides.

Common reasons your hit rate looks lower than expected

- The prefix changed. A timestamp injected into the system prompt, a regenerated tool-schema JSON with reordered keys, a different model version pinned in metadata — any of these break the prefix.

- The prefix is too short. Below 64 tokens nothing is cached at all; below ~1,024 tokens hits are unreliable in practice.

- Cache TTL expired. Unused entries are cleared in hours-to-days; a low-traffic prompt will go cold.

- You’re hitting the wrong account. Caches are isolated per account. Two API keys under different orgs do not share a cache.

- Streaming mid-response failures. If you abort partway and retry, the new request still gets full prefix-hit pricing — but downstream observability tools may misreport. See the DeepSeek API error codes reference for retry semantics.
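The reordered-keys problem is easy to rule out by serialising tool schemas canonically before they go anywhere near the prompt. A minimal sketch using only the standard library; `canonical_json` is an illustrative helper and the `get_price` schema is made up for the example.

```python
import json


def canonical_json(obj) -> str:
    """Serialise with sorted keys and fixed separators so equal data gives equal bytes."""
    return json.dumps(obj, sort_keys=True, separators=(",", ":"), ensure_ascii=False)


# The same hypothetical tool schema, built with keys in a different order.
schema_a = {"name": "get_price",
            "parameters": {"type": "object", "properties": {"ticker": {"type": "string"}}}}
schema_b = {"parameters": {"properties": {"ticker": {"type": "string"}}, "type": "object"},
            "name": "get_price"}

print(json.dumps(schema_a) == json.dumps(schema_b))          # False: insertion order leaks through
print(canonical_json(schema_a) == canonical_json(schema_b))  # True: canonical form is stable

# If you pass schemas as dicts rather than text, re-parse the canonical string
# to get a dict whose keys are in sorted order.
stable_schema = json.loads(canonical_json(schema_b))
print(list(stable_schema))  # ['name', 'parameters']
```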

When caching changes the architectural choice

Once you internalise that repeated prefixes cost a fiftieth of fresh ones (2% of the miss rate on V4-Flash), several patterns become viable that previously weren’t:

- Long system prompts. A 20K-token instruction set used to be wasteful. With caching, you pay it in full once and amortise it across thousands of calls. Useful for compliance-heavy assistants, agents with large tool catalogues, and domain-specific style guides.

- Few-shot at scale. Twenty in-context examples become economically reasonable for high-volume classification or extraction.

- Repository-wide code review. Feed the codebase as a stable prefix, change only the question. With V4’s 1,000,000-token context window and 384,000-token output ceiling, the entire prompt fits, and only the trailing question rebills at the miss rate.

- RAG with stable corpora. If your retrieved chunks come from a small, slow-moving knowledge base, ordering them deterministically lets the cache absorb most of the input cost.
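To see how quickly a long prefix amortises, compare paying for it at the miss rate on every call with paying in full once and at the hit rate afterwards. This sketch assumes the best case (first call misses, every later call hits) and uses the V4-Flash rates quoted earlier; `prefix_cost` is an illustrative name.

```python
def prefix_cost(calls: int, prefix_tokens: int,
                hit_rate: float, miss_rate: float) -> tuple[float, float]:
    """Return (cost_with_cache, cost_without_cache) in USD for the prefix alone.

    Assumption: the first call is a miss and every later call is a full hit.
    Rates are USD per 1M tokens.
    """
    if calls < 1:
        raise ValueError("calls must be at least 1")
    millions = prefix_tokens / 1_000_000
    with_cache = millions * miss_rate + (calls - 1) * millions * hit_rate
    without_cache = calls * millions * miss_rate
    return with_cache, without_cache


cached, uncached = prefix_cost(calls=1_000, prefix_tokens=20_000,
                               hit_rate=0.0028, miss_rate=0.14)
print(f"20K-token prefix over 1,000 calls: ${cached:.4f} cached vs ${uncached:.2f} uncached")
```

Because the cache is best-effort, treat the cached figure as a floor rather than a forecast.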

For role-specific applications of these patterns, see DeepSeek for developers and the broader DeepSeek for coding use case.

Limitations and honest caveats

Three things to keep in mind before architecting around the cache:

- Best-effort, not guaranteed. Even an identical prompt may miss occasionally. Budget for the worst case (everything bills at miss) and treat the cache as upside.

- No cross-account sharing. A multi-tenant SaaS that proxies every tenant through one DeepSeek account can get cache hits across tenants on a shared prefix; tenants that each call from their own DeepSeek account cannot.

- Output is not cached. Same prompt, same temperature ≠ same response. If you need response-level caching, do it in your own application layer.
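Response-level caching in your own layer can be as simple as a dictionary keyed by a hash of the request. The sketch below is one possible shape, not a recommendation for production: `ResponseCache` is a hypothetical class, the store is in-memory with no eviction, and `fake_generate` stands in for whatever function actually calls the API.

```python
import hashlib
import json
from typing import Callable


class ResponseCache:
    """In-memory response cache keyed by model + messages (no eviction, single process)."""

    def __init__(self, generate: Callable[[str, list[dict]], str]):
        self._generate = generate
        self._store: dict[str, str] = {}

    @staticmethod
    def _key(model: str, messages: list[dict]) -> str:
        payload = json.dumps({"model": model, "messages": messages}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def get(self, model: str, messages: list[dict]) -> str:
        key = self._key(model, messages)
        if key not in self._store:
            # Only call the model when this exact request has not been seen before.
            self._store[key] = self._generate(model, messages)
        return self._store[key]


api_calls = []


def fake_generate(model: str, messages: list[dict]) -> str:
    """Stand-in for a real API call; records how often it is invoked."""
    api_calls.append(model)
    return f"reply #{len(api_calls)}"


cache = ResponseCache(fake_generate)
msgs = [{"role": "user", "content": "Summarise the Q1 report."}]
first_reply = cache.get("deepseek-v4-flash", msgs)
second_reply = cache.get("deepseek-v4-flash", msgs)
print(first_reply == second_reply, len(api_calls))  # True 1
```

Only reach for this when a repeated request should return the same answer; it deliberately removes the sampling randomness described above.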

External reading on the original launch — DeepSeek’s August 2024 announcement — and Simon Willison’s contemporaneous technical write-up are both still useful for the architectural rationale, even though the rates have since changed.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How does DeepSeek API context caching work?

DeepSeek detects when the start of your current prompt matches the start of a recent prompt on the same account, and serves the matching tokens from a hard-disk cache at roughly one-fiftieth of the normal V4-Flash input rate ($0.0028 vs $0.14 / 1M, a 98% reduction since 2026-04-26). It runs automatically — no code changes, no cache keys, no TTL configuration. Only identical prefixes from token 0 count; partial middle matches do not. See the DeepSeek API documentation for the response schema.

What is the minimum prompt length for a cache hit?

The cache uses 64 tokens as a storage unit, so anything shorter than 64 tokens will not be cached at all. In practice on V4, hits become reliable once the shared prefix is around 1,024 tokens or longer, and they scale up cleanly from there. If you are well under that, restructure your prompt to consolidate stable instructions. The DeepSeek token counter helps you measure prefix length precisely.

Does caching work on deepseek-chat and deepseek-reasoner?

Yes — both legacy IDs currently route to deepseek-v4-flash and benefit from the same cache. They will be fully retired on July 24, 2026 at 15:59 UTC, after which requests using those IDs will fail. Migration is a one-line change to the model field; the base_url stays the same. See DeepSeek OpenAI SDK compatibility for the full migration pattern.

Is the cache shared between users or accounts?

No. DeepSeek isolates each account’s cache so that one user’s prompt prefixes are not visible to another. Unused cache entries are automatically cleared after a period — typically hours to days — which means a rarely-used prompt may go cold and pay the full miss rate again. For production traffic with steady volume on the same prefix, this is rarely an issue. More on data handling in the DeepSeek privacy guide.

Why is my cache hit rate so low?

The most common cause is something changing at the front of the prompt — a timestamp, a regenerated JSON tool schema with reordered keys, a different system message, or shuffled few-shot examples. Other culprits are prefixes shorter than 1,024 tokens, prompts that drop below the 64-token storage unit, or low-traffic endpoints whose cache entries expired. Log prompt_cache_hit_tokens on every call and alert when the ratio drops. See DeepSeek API best practices for monitoring patterns.
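One way to alert on a dropping ratio is a rolling window over recent calls. This is a sketch only: `HitRateMonitor` is a hypothetical class, the window size and threshold are arbitrary defaults, and the token counts in the example are made up.

```python
from collections import deque


class HitRateMonitor:
    """Tracks the token-weighted cache hit ratio over the most recent calls."""

    def __init__(self, window: int = 100, threshold: float = 0.5):
        self._calls: deque[tuple[int, int]] = deque(maxlen=window)
        self.threshold = threshold

    def ratio(self) -> float:
        hit = sum(h for h, _ in self._calls)
        total = sum(h + m for h, m in self._calls)
        # With no data yet, report 1.0 so an empty window never triggers an alert.
        return hit / total if total else 1.0

    def record(self, hit_tokens: int, miss_tokens: int) -> bool:
        """Add one call's usage; return True when the rolling ratio is below threshold."""
        self._calls.append((hit_tokens, miss_tokens))
        return self.ratio() < self.threshold


monitor = HitRateMonitor(window=3, threshold=0.5)
print(monitor.record(1900, 300))  # False: ratio is about 0.86
print(monitor.record(0, 2200))    # True: one cold call drags the window to about 0.43
```

Feed `record` the prompt_cache_hit_tokens and prompt_cache_miss_tokens values from each response and wire the `True` case to whatever alerting you already use.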

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Official: DeepSeek's August 2024 context-caching announcement. Original launch of the disk-based context cache. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Context sources

- Analysis: Simon Willison, DeepSeek context-caching write-up (Aug 2024). Architectural rationale for the prefix cache. Last checked: April 30, 2026

Methodology

Pricing figures were checked against the official DeepSeek pricing page and normalised into estimated monthly costs using sample token usage scenarios. Actual costs may vary depending on caching, input/output ratio, regional availability, and provider updates.

Data confidence

High — figures checked directly against the official pricing page on the review date.

Editorial note

DeepSeek occasionally runs promotional discounts that are not always reflected in the headline page. The pricing dataset on this site auto-refreshes monthly; this article reflects the dataset on the date shown above.