DeepSeek Prover: The Open-Source Lean 4 Theorem-Proving Model

If you write Lean 4 proofs and you have ever spent an evening watching a tactic block fail on a goal you knew was true, you have already met the problem DeepSeek Prover was built to attack. DeepSeek Prover is the formal-mathematics specialist in the DeepSeek model family — separate from the general-purpose chat models — and the V2 release in April 2025 pushed open-source theorem proving to 88.9 % pass rate on MiniF2F-test. This guide explains what the model is, how it differs from DeepSeek’s chat and reasoning models, the benchmarks it actually posts, where to download or call it, and where it still falls short. By the end you will know whether Prover belongs in your proof pipeline, or whether a general reasoning model would do the job.

What DeepSeek Prover is

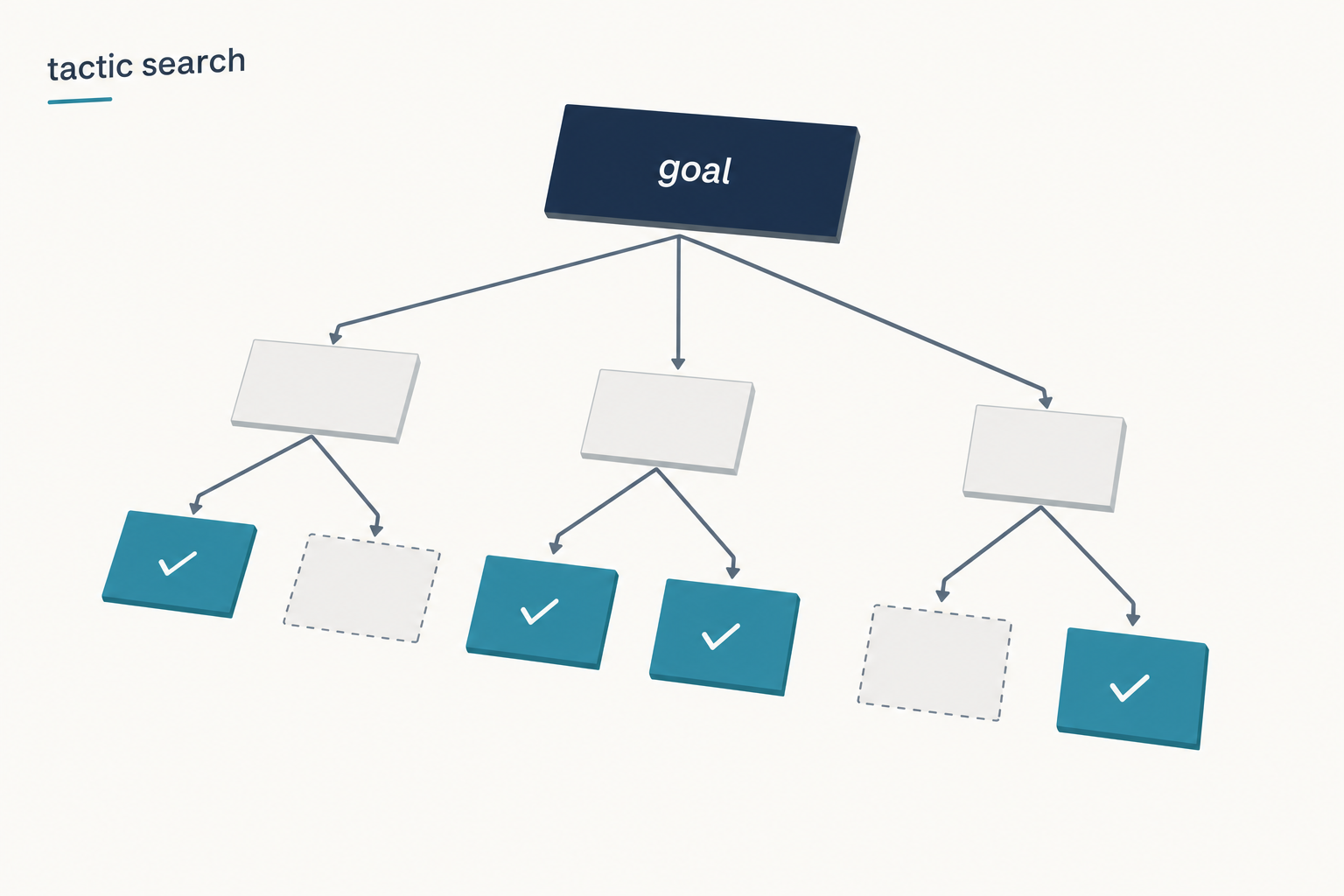

DeepSeek Prover is an open-source large language model built specifically for formal theorem proving in Lean 4, not a general chatbot. Its initialization data was collected through a recursive theorem-proving pipeline powered by DeepSeek-V3: DeepSeek-V3 decomposes theorems into high-level proof sketches while simultaneously formalising these proof steps in Lean 4, resulting in a sequence of subgoals. The output is verifiable: every proof either typechecks under Lean’s kernel or it does not, which is a sharper success signal than you get from chat-style math benchmarks.
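To make that input/output contract concrete, here is a toy illustration in Lean 4. It is not taken from the Prover paper or its benchmarks; the theorem names are made up for this example. The first declaration is the kind of statement you hand to the model, and the second is the kind of completed proof Lean's kernel accepts.

```lean
-- Input: a statement whose proof is still a placeholder.
-- Lean accepts this file but warns that the declaration uses `sorry`.
theorem toy_add_zero (n : Nat) : n + 0 = n := by
  sorry

-- Output: a completed proof that typechecks with no placeholder left.
theorem toy_add_zero' (n : Nat) : n + 0 = n := by
  exact Nat.add_zero n
```

Real targets are much harder than this, but the success criterion is the same: the file compiles and no `sorry` remains.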

Prover sits inside a wider DeepSeek lineup that already includes general models such as DeepSeek V4-Flash, the math-focused DeepSeek Math, and the older reasoning model DeepSeek R1. Where those models try to “talk through” a proof in natural language, Prover emits Lean 4 code that a proof assistant can mechanically verify.

Architecture and lineage

The current generation is DeepSeek-Prover-V2, released on Hugging Face in late April 2025 in two sizes:

- DeepSeek-Prover-V2-671B — the flagship. It shares the same architecture as DeepSeek-V3, which makes it a Mixture-of-Experts model with roughly 671B total parameters and ~37B activated per token.

- DeepSeek-Prover-V2-7B — a small dense model used both as a research tool and as a workhorse during data synthesis. A smaller 7B model handles the proof search for each subgoal, thereby reducing the associated computational burden during training data construction.

The model card lists context windows of roughly 32K tokens for the 7B variant and up to 128K for the 671B, which is small relative to V4’s million-token window but generous for Lean files. Note that V2’s 671B body predates the V4 architectural overhaul; it is built on V3’s MoE, not V4’s hybrid attention design.

How V2 was trained

The training pipeline is what makes V2 interesting. Once the decomposed steps of a challenging problem are resolved, the team pairs the complete step-by-step formal proof with the corresponding step-by-step reasoning from DeepSeek-V3 to create cold-start reasoning data. They then curate a subset of challenging problems that remain unsolved by the 7B prover model in an end-to-end manner, but for which all decomposed subgoals have been successfully resolved. By composing the proofs of all subgoals, they construct a complete formal proof for the original problem.
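The decompose-then-compose idea can be sketched in Lean 4 itself. The example below is a hand-written toy, assuming Mathlib is available; it is not output from DeepSeek-V3 or the 7B prover. Stage 1 mirrors a proof sketch whose subgoals are left open, and stage 2 shows the same skeleton once each subgoal has been closed independently.

```lean
import Mathlib

-- Stage 1: a proof sketch. The overall structure is fixed,
-- but each subgoal is still a `sorry` placeholder.
theorem toy_sum_sq_nonneg (a b : ℤ) : 0 ≤ a * a + b * b := by
  have h1 : 0 ≤ a * a := by sorry
  have h2 : 0 ≤ b * b := by sorry
  exact add_nonneg h1 h2

-- Stage 2: every subgoal has been proved separately,
-- and composing them yields a complete proof of the original statement.
theorem toy_sum_sq_nonneg' (a b : ℤ) : 0 ≤ a * a + b * b := by
  have h1 : 0 ≤ a * a := mul_self_nonneg a
  have h2 : 0 ≤ b * b := mul_self_nonneg b
  exact add_nonneg h1 h2
```

Each `have` line is a self-contained statement, which is what lets a smaller model search for its proof in isolation.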

Reinforcement learning then sharpens the model's step-by-step reasoning mode. After fine-tuning the prover model on the synthetic cold-start data, a reinforcement learning stage further enhances its ability to bridge informal reasoning with formal proof construction, using binary correct-or-incorrect feedback as the primary form of reward supervision. Lean acts as the judge: a proof either compiles or it does not, so the reward signal is unusually clean compared with subjective text benchmarks.
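A binary reward of this kind is simple to express. The sketch below is only an illustration: `VerifierResult` is a hypothetical record invented for this example, not a Lean or DeepSeek API, and treating a leftover `sorry` as a failure is an assumption on our part.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerifierResult:
    """Hypothetical summary of one Lean 4 check (not a real Lean or DeepSeek API)."""
    compiled: bool    # True if Lean reported no errors
    uses_sorry: bool  # True if the proof still contains a `sorry` placeholder


def binary_reward(result: VerifierResult) -> float:
    """Return 1.0 only for a complete, compiling proof; otherwise 0.0."""
    return 1.0 if result.compiled and not result.uses_sorry else 0.0


# Three illustrative outcomes: complete proof, proof with a hole, compile error.
rewards = [
    binary_reward(r)
    for r in (
        VerifierResult(compiled=True, uses_sorry=False),
        VerifierResult(compiled=True, uses_sorry=True),
        VerifierResult(compiled=False, uses_sorry=False),
    )
]
print(rewards)
```

There is no partial credit in this scheme, which is exactly what makes the signal clean and also what makes hard problems slow to learn from.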

Benchmarks

The V2 paper and Hugging Face cards report results on three suites: MiniF2F (high-school competition mathematics), PutnamBench (undergraduate competition problems), and ProverBench (DeepSeek’s own evaluation set).

| Benchmark | Model | Result | Source |
|---|---|---|---|
| MiniF2F-test (pass ratio) | DeepSeek-Prover-V2-671B | 88.9 % | arXiv 2504.21801 |
| PutnamBench | DeepSeek-Prover-V2-671B | 49 of 658 solved | arXiv 2504.21801 |
| ProverBench (AIME 24/25 subset, 15 problems) | DeepSeek-Prover-V2-671B | 6 solved | arXiv 2504.21801 |
| ProverBench (AIME 24/25 subset, 15 problems) | DeepSeek-V3 (informal, majority voting) | 8 solved | arXiv 2504.21801 |

DeepSeek-Prover-V2-671B reaches 88.9 % pass ratio on MiniF2F-test and solves 49 out of 658 problems from PutnamBench. ProverBench is a collection of 325 formalised problems, including 15 from recent AIME competitions (AIME 24 and 25). The model solves 6 of those 15, while DeepSeek-V3 solves 8 using majority voting, which suggests that the gap between formal and informal mathematical reasoning in large language models is narrowing substantially.
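The count-based results are easier to compare once converted to solve rates. The short snippet below does only that arithmetic, using the counts quoted above; the dictionary labels are ours.

```python
# Solved / total counts as quoted from the Prover-V2 paper in this article.
results = {
    "PutnamBench (Prover-V2-671B)": (49, 658),
    "AIME 24/25 subset (Prover-V2-671B, formal)": (6, 15),
    "AIME 24/25 subset (DeepSeek-V3, informal)": (8, 15),
}


def solve_rate(solved: int, total: int) -> float:
    """Percentage of problems solved."""
    return 100.0 * solved / total


rates = {name: round(solve_rate(s, t), 1) for name, (s, t) in results.items()}
for name, rate in rates.items():
    print(f"{name}: {rate} %")
```

That works out to roughly 7.4 % on PutnamBench, against 40 % formal versus about 53 % informal on the AIME subset.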

For context against the previous generation: V1.5 reached 63.5 % on MiniF2F (with the RMaxTS inference strategy); V2-671B’s 88.9 % is a step-change improvement. Read the original paper for the full evaluation tables — see arXiv 2504.21801.

How to read the MiniF2F number

“88.9 %” is a sampled metric, not a one-shot accuracy. The 671B step-by-step reasoning model reaches that pass rate at Pass@8192, meaning the system is allowed to draw thousands of candidate proofs per problem and keep any that compile. That is honest under the rules of the benchmark, but if you plan to run Prover with a small sampling budget in production, expect lower numbers.
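To see how strongly the sampling budget moves the number, here is a sketch of the unbiased pass@k estimator commonly used in code-generation evaluation: with n samples of which c are correct, pass@k = 1 - C(n-c, k) / C(n, k). Whether the Prover-V2 paper computes its figures with exactly this estimator is an assumption; the point is only to show the effect of k.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k from n samples, c of which are correct (requires 1 <= k <= n)."""
    if not 0 <= c <= n:
        raise ValueError("c must be between 0 and n")
    if not 1 <= k <= n:
        raise ValueError("k must be between 1 and n")
    if n - c < k:
        # Every size-k subset must contain at least one correct sample.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


# A hypothetical problem solved by exactly 1 of 8192 sampled proofs.
full_budget = pass_at_k(8192, 1, 8192)
small_budget = pass_at_k(8192, 1, 32)
print(full_budget, small_budget)
```

A problem that counts as solved at Pass@8192 can have well under a 1 % chance of being solved with 32 samples, which is why a production budget needs its own measurement.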

Strengths — where Prover specifically wins

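- Verifiable output. Every proof Prover emits either compiles in Lean 4 or it does not, so a proof that compiles without `sorry` contains no hallucinated steps. What still needs a human check is whether the formal statement says what you meant.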

- Subgoal decomposition. Because the training pipeline was built on recursive subgoal decomposition, the model is unusually good at breaking a long theorem into named lemmas you can later reuse.

- Open weights. The 7B and 671B models, plus the DeepSeek-ProverBench dataset, are publicly available on Hugging Face; inference integrates easily with the Transformers library, with usage covered by the applicable Model License.

- A small distilled twin. The 7B variant is small enough to run on a single high-end GPU, which makes it a practical workhorse for batch proof search where you need throughput more than peak quality.

Weaknesses — where it falls short

- It is not a general assistant. Do not ask Prover to write code, draft an essay, or summarise a paper. Use DeepSeek V4 for that.

- Lean 4 only. If your project is in Coq (since renamed Rocq), Isabelle/HOL, or another proof assistant, Prover will not help directly.

- PutnamBench is still hard. 49 of 658 is a single-digit percentage. Olympiad-level proofs remain mostly out of reach.

- Variance between sizes. Performance gaps occur among variants — the 7B model can solve some Putnam problems the 671B cannot, implying differences in acquired tactics. In practice, run both if you have the compute.

- Architecture lag. V2 inherits V3’s MoE design, not V4’s million-token hybrid attention. If a future Prover-V3 ports to V4, expect longer proof contexts and lower inference cost.

How to access DeepSeek Prover

Prover is not exposed through the standard `deepseek-v4-pro` / `deepseek-v4-flash` chat API at POST /chat/completions. It ships as open weights, so the realistic options are local inference, Hugging Face Transformers, or a third-party hosting provider.

Option 1 — Hugging Face Transformers (local)

The model card shows the canonical Python pattern for loading the weights and feeding a Lean 4 statement. The minimal version, in Python:

```python
import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

model_id = "deepseek-ai/DeepSeek-Prover-V2-7B"  # or DeepSeek-Prover-V2-671B

# Load the tokenizer and weights; bf16 halves memory relative to fp32.
tokenizer = AutoTokenizer.from_pretrained(model_id)
model = AutoModelForCausalLM.from_pretrained(
    model_id, torch_dtype=torch.bfloat16, device_map="auto"
)

# Lean 4 statement with a `sorry` placeholder for the model to replace.
formal_statement = """
import Mathlib
import Aesop

set_option maxHeartbeats 0

open BigOperators Real Nat Topology Rat

theorem mathd_algebra_10 :
    abs ((120 : ℝ) / 100 * 30 - 130 / 100 * 20) = 10 := by sorry
""".strip()

prompt = f"Complete the following Lean 4 code:\n```lean4\n{formal_statement}\n```\n"

inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
output = model.generate(**inputs, max_new_tokens=2048)

# The decoded text contains the prompt followed by the model's completion.
print(tokenizer.decode(output[0], skip_special_tokens=True))
```

The 7B model fits on a single 24-48 GB GPU at bf16. The 671B is far heavier; plan for a multi-GPU node. See our DeepSeek hardware calculator to size a deployment, and the install DeepSeek locally tutorial for the wider setup.
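Because the decoded string contains the prompt as well as the completion, you usually want to pull out just the final Lean block before handing it to a checker. The helper below is a minimal sketch; `extract_last_lean_block` is a name invented here, and it assumes the model answers with a fenced `lean4` block, which you should confirm against real outputs.

```python
import re
from typing import Optional

FENCE = "`" * 3  # three backticks, built here to keep this snippet easy to embed

# Matches a fenced block tagged `lean` or `lean4` and captures its body.
_LEAN_BLOCK = re.compile(FENCE + r"lean4?[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_last_lean_block(text: str) -> Optional[str]:
    """Return the body of the last fenced Lean block in `text`, or None if absent."""
    blocks = _LEAN_BLOCK.findall(text)
    return blocks[-1].strip() if blocks else None


# Illustrative decoded output: the prompt's block first, the model's answer last.
sample = (
    "Complete the following Lean 4 code:\n"
    f"{FENCE}lean4\ntheorem t : 1 = 1 := by sorry\n{FENCE}\n"
    "Here is the completed proof:\n"
    f"{FENCE}lean4\ntheorem t : 1 = 1 := by rfl\n{FENCE}\n"
)
print(extract_last_lean_block(sample))
```

Taking the last block rather than the first avoids accidentally re-checking the unsolved statement from the prompt.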

Option 2 — Hosted inference

Several third parties host DeepSeek-Prover-V2-671B for pay-per-token inference. If you do not want to rent your own GPUs, that is the cheapest way to test the model. Treat any specific per-token rate as time-sensitive — provider pricing pages move often, and Prover is a niche enough model that not every hosting platform carries it.

Option 3 — DeepSeek’s first-party API

DeepSeek’s first-party API at https://api.deepseek.com currently exposes the V4 family (deepseek-v4-pro and deepseek-v4-flash) for general chat, plus the legacy deepseek-chat and deepseek-reasoner IDs that route to deepseek-v4-flash until they retire on 2026-07-24 15:59 UTC. Prover-V2 is not a standard model ID on that endpoint at the time of writing — check the DeepSeek API documentation before assuming you can call it directly.
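One way to check programmatically is to look at the model list the API returns rather than guessing an ID. The sketch below only parses a response body; it assumes the OpenAI-compatible list shape (`{"object": "list", "data": [{"id": ...}]}`), and the sample payload is hand-written from the IDs named in this article, not captured from the live API.

```python
import json


def model_ids(models_response: dict) -> set[str]:
    """Collect model IDs from an OpenAI-style model-list response body."""
    return {entry["id"] for entry in models_response.get("data", [])}


def has_prover(models_response: dict) -> bool:
    """True if any listed model ID mentions 'prover' (case-insensitive)."""
    return any("prover" in model_id.lower() for model_id in model_ids(models_response))


# Hand-written illustrative payload; the real response may differ.
sample_response = json.loads(
    '{"object": "list", "data": ['
    '{"id": "deepseek-v4-pro", "object": "model"},'
    '{"id": "deepseek-v4-flash", "object": "model"}]}'
)
print(sorted(model_ids(sample_response)), has_prover(sample_response))
```

If `has_prover` comes back false against the real response, fall back to local weights or a third-party host.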

Pricing snapshot (as of April 2026)

DeepSeek does not publish a separate first-party API price for Prover-V2. The closest reference points are:

- DeepSeek V4-Flash (general chat): $0.0028 cache hit / $0.14 cache miss / $0.28 output, per 1M tokens.

- DeepSeek V4-Pro (frontier general chat): $0.0145 / $1.74 / $3.48 list (effective $0.003625 / $0.435 / $0.87 during the 75% promo through 2026-05-31), per 1M tokens.

- Prover-V2 weights: free to download under the model’s licence; you pay only your own GPU costs.

For the most current rates and any provider-side discounts on the chat models, see the DeepSeek API pricing page. Off-peak API discounts ended on 2025-09-05 and were not reintroduced with V4.
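For a rough budget on the chat models, the per-1M-token rates translate into a cost like this. The sketch uses the list prices quoted above as of April 2026 and a function name of our own; it ignores promotions and any provider-side rounding, so treat the result as an estimate.

```python
# List prices in USD per 1M tokens, as quoted in this article (April 2026).
PRICES_PER_1M = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    "deepseek-v4-pro": {"cache_hit": 0.0145, "cache_miss": 1.74, "output": 3.48},
}


def estimate_cost(model: str, hit_tokens: int, miss_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost for one workload at list price."""
    price = PRICES_PER_1M[model]
    total = (
        hit_tokens * price["cache_hit"]
        + miss_tokens * price["cache_miss"]
        + output_tokens * price["output"]
    )
    return total / 1_000_000


# Example workload: 2M uncached input tokens and 1M output tokens.
flash_cost = estimate_cost("deepseek-v4-flash", 0, 2_000_000, 1_000_000)
pro_cost = estimate_cost("deepseek-v4-pro", 0, 2_000_000, 1_000_000)
print(f"flash: ${flash_cost:.2f}, pro: ${pro_cost:.2f}")
```

None of this applies to Prover-V2 itself, where the only bill is your own GPU time.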

Best use cases

- Mathlib contributions. Use Prover to draft Lean 4 proofs of small lemmas, then review and refactor by hand before opening a PR.

- Course assistants. An undergraduate Lean class can use the 7B model as a tactic suggester. See DeepSeek for education for adjacent ideas.

- Research-grade proof search. Decompose a long theorem in your head, ask Prover to attack each subgoal independently, then stitch the results together.

- Verification pipelines. Combine Prover with a CI job that runs `lake build` on every generated proof; anything that compiles is a candidate. See DeepSeek for research for related workflows.

- Math-heavy general work. If your task is informal mathematics, you almost always want DeepSeek for math with V4 instead, not Prover.
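The verification-pipeline idea can be sketched as a small Python wrapper around `lake build`. Everything here is an assumption about your setup: `proof_is_candidate` is a hypothetical helper, the generated file must live in a module that your Lake project's default target actually builds, and the `sorry` check is a crude substring test. The demo at the bottom uses a stub runner so it executes without Lean installed.

```python
import subprocess
import tempfile
from pathlib import Path
from typing import Callable


def proof_is_candidate(
    project_dir: Path,
    rel_path: str,
    lean_source: str,
    run: Callable[..., subprocess.CompletedProcess] = subprocess.run,
) -> bool:
    """Write a generated proof into a Lake project and report whether `lake build` succeeds."""
    # A file containing `sorry` can still build (with a warning), so reject it up front.
    if "sorry" in lean_source:
        return False
    target = project_dir / rel_path
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(lean_source, encoding="utf-8")
    completed = run(["lake", "build"], cwd=project_dir, capture_output=True, text=True)
    return completed.returncode == 0


def _stub_run(cmd, **kwargs):
    """Stand-in for subprocess.run that always reports a successful build."""
    return subprocess.CompletedProcess(cmd, 0, stdout="", stderr="")


# Dry run against a temporary directory with the stub runner.
with tempfile.TemporaryDirectory() as tmp:
    accepted = proof_is_candidate(
        Path(tmp), "Generated/Toy.lean", "theorem toy : 1 = 1 := rfl\n", run=_stub_run
    )
    rejected = proof_is_candidate(
        Path(tmp), "Generated/Open.lean", "theorem toy : 1 = 1 := by sorry\n", run=_stub_run
    )
print(accepted, rejected)
```

In a real CI job you would drop the `run=_stub_run` argument so the default `subprocess.run` invokes Lake, and keep the build output for debugging failed candidates.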

Comparable alternatives

Prover-V2 is not the only model targeting Lean 4. Kimina-Prover, Goedel-Prover, and AlphaProof-style systems are active research projects, and general reasoning models can sometimes substitute for short proofs.

- For day-to-day reasoning that includes proof sketches in natural language, see DeepSeek R1 vs OpenAI o1.

- If you are weighing DeepSeek’s overall stack against Anthropic’s, the DeepSeek vs Claude comparison covers reasoning and code, though neither Claude nor DeepSeek’s chat models are dedicated theorem provers.

- For a survey of open-weight options for math and reasoning, the open-source AI like DeepSeek roundup is the right starting point, alongside the broader DeepSeek models hub.

Verdict

DeepSeek Prover-V2 is one of the strongest open-weight Lean 4 theorem provers published to date, and the 88.9 % MiniF2F figure is real, provided you read it as Pass@8192. If you write Lean 4 proofs daily, the 7B model is a cheap upgrade to your tactic suggester and the 671B model is worth renting GPU time to test. If you want a chat assistant that can also do math, look at the V4 family instead; Prover is a specialist tool, not a general one.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is DeepSeek Prover used for?

DeepSeek Prover is used for formal theorem proving in Lean 4. Given a theorem statement with a `sorry` placeholder, the model emits Lean 4 tactic code that, when it works, typechecks under Lean’s kernel. It is not a general chatbot; for that, use a general model from the DeepSeek models hub such as V4-Flash or V4-Pro.

How does DeepSeek Prover V2 compare to DeepSeek R1 on math?

They solve different problems. R1 (and the newer V4 thinking modes) write informal natural-language reasoning that often arrives at a correct numerical answer; Prover emits Lean 4 code that compiles. On the AIME 24/25 subset of ProverBench, Prover-V2-671B solved 6 of 15 problems formally, while DeepSeek-V3 solved 8 informally with majority voting. See the DeepSeek R1 review for the informal side.

Is DeepSeek Prover free to use?

The model weights are openly published on Hugging Face under the DeepSeek Model License, so you can download and run them at no licence cost. You still pay for the GPUs that host inference. There is no separate Prover tier on DeepSeek’s first-party chat API at the time of writing — see the DeepSeek API documentation for what is currently exposed there.

What hardware do I need to run DeepSeek-Prover-V2-671B?

The 671B model shares its architecture with DeepSeek-V3 (MoE, ~37B active per token), so you need a multi-GPU server with enough aggregate VRAM to hold the weights — typically several H100s or equivalents. The 7B variant fits on a single high-end consumer or workstation GPU at bf16. Use our DeepSeek hardware calculator to size a deployment.
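A back-of-envelope check makes the difference between the two sizes concrete. The sketch below counts weight storage only (parameters times bytes per parameter) and ignores KV cache, activations, and framework overhead, so the results are lower bounds. The 80 GB figure is an assumed per-GPU capacity, and the function names are ours. Note that a Mixture-of-Experts model still has to hold all 671B parameters in memory even though only about 37B are active per token.

```python
import math


def weight_memory_gb(n_params: int, bytes_per_param: int) -> float:
    """Memory needed for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bytes_per_param / 1e9


def min_gpus(n_params: int, bytes_per_param: int, gpu_memory_gb: int) -> int:
    """Lower bound on GPU count from weight storage only."""
    return math.ceil(weight_memory_gb(n_params, bytes_per_param) / gpu_memory_gb)


BF16 = 2  # bytes per parameter
FP8 = 1   # bytes per parameter

small_gb = weight_memory_gb(7_000_000_000, BF16)
large_gb = weight_memory_gb(671_000_000_000, BF16)
print(f"7B at bf16: {small_gb:.0f} GB, 671B at bf16: {large_gb:.0f} GB")
print("80 GB GPUs for 671B:",
      min_gpus(671_000_000_000, BF16, 80), "at bf16,",
      min_gpus(671_000_000_000, FP8, 80), "at 8-bit")
```

Lower-precision formats shrink the footprint proportionally, which is why quantised deployments can get by with fewer cards than the bf16 estimate suggests.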

Can DeepSeek Prover solve Olympiad-level proofs?

Sometimes, but not reliably. Prover-V2-671B solves 49 of 658 problems on PutnamBench, which is a single-digit percentage of an undergraduate competition set, and Olympiad-level problems remain harder still. Treat it as a strong assistant for medium-difficulty Lean 4 lemmas rather than a one-shot Olympiad solver. For wider research workflows around it, see DeepSeek for research.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Model card: DeepSeek-Prover-V2-7B model card. 7B Prover variant for batch proof search. Last checked: April 30, 2026

- Model card: DeepSeek-Prover-V2-671B model card. Flagship 671B Prover weights and license. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Technical report: DeepSeek-Prover-V2 paper (arXiv:2504.21801). MiniF2F 88.9%, PutnamBench 49/658, ProverBench AIME numbers. Last checked: April 30, 2026

Methodology

Architecture, parameter counts, context window, and license were checked against the official DeepSeek model card and the corresponding technical report. Benchmark figures are reproduced as they appear in vendor materials and are treated as directional indicators rather than guarantees of real-world performance.

Data confidence

High for official architecture and license; medium for vendor-reported benchmarks; low for projected future capabilities.

Editorial note

Vendor-reported figures are not always independently replicated. Benchmarks at the frontier change quickly; expect this article to need a refresh whenever DeepSeek, OpenAI, Anthropic, or Google ship a new model.