DeepSeek Latest Updates — What Shipped With V4 Preview (April 2026)

If you maintain a DeepSeek integration, the last 24 hours changed your backlog. The main updates to absorb: V4 Preview is live as of April 24, 2026; the legacy `deepseek-chat` and `deepseek-reasoner` IDs are on a 90-day retirement clock; and pricing now splits across two tiers instead of one rate card. I spent the launch day re-running our production regression suite against both V4 models through the OpenAI-compatible API and reading DeepSeek’s technical report in full. This piece walks through what actually shipped, the numbers I verified against primary sources, and the migration work you cannot defer past July 24, 2026.

What DeepSeek shipped on April 24, 2026

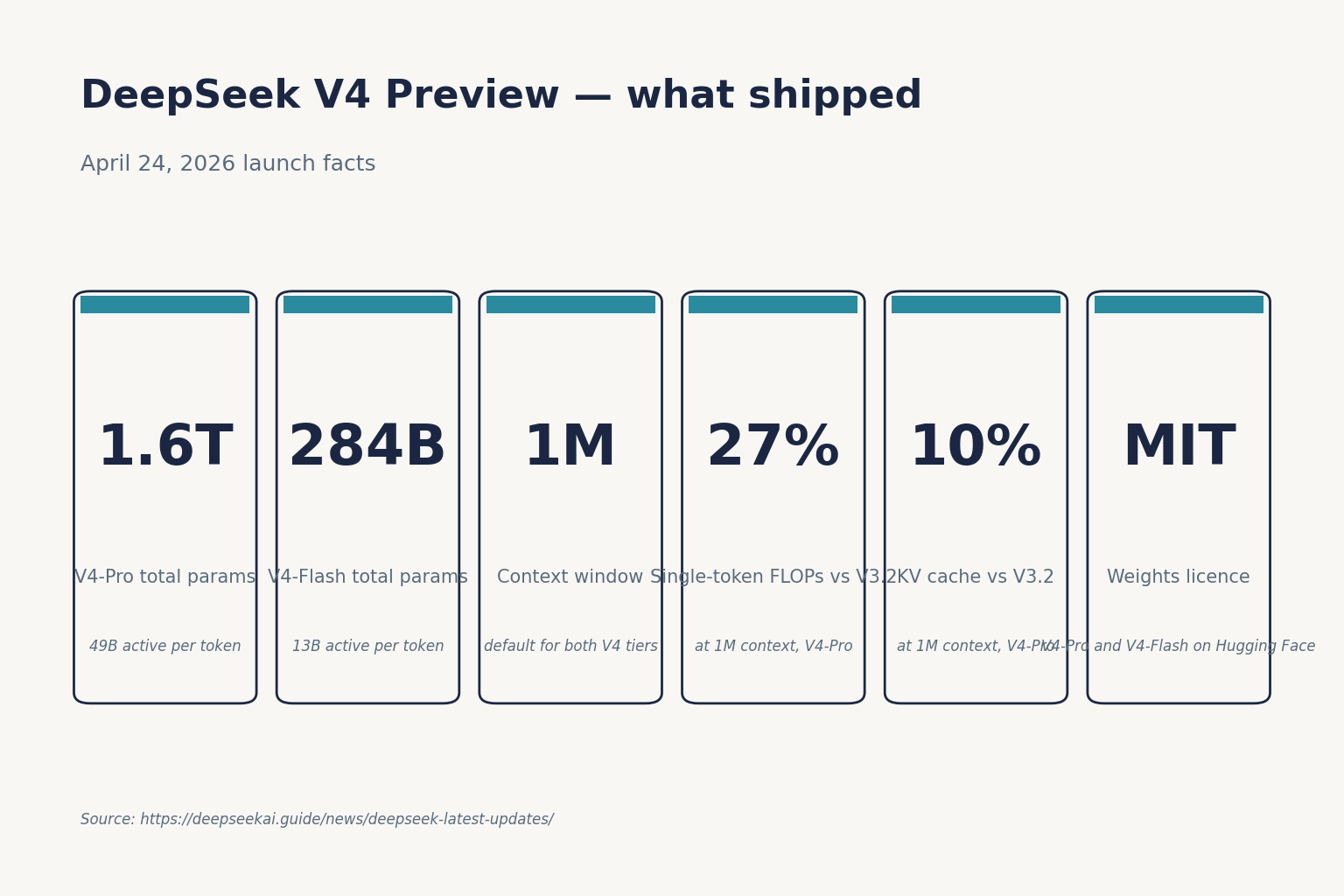

DeepSeek published the V4 Preview as an open-weight family of two Mixture-of-Experts models. DeepSeek-V4-Pro has 1.6T total / 49B active parameters with performance rivaling top closed-source models, while DeepSeek-V4-Flash at 284B total / 13B active is the fast, efficient, economical tier. Both models run on a default 1M-token context window, and weights are on Hugging Face under the MIT license.

The headline architectural change is efficiency, not parameter count. The Hybrid Attention Architecture combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA), and at 1M-token context DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of the KV cache compared with V3.2. That is the difference between long-context being a marketing checkbox and being a workload you can actually afford to run.

On the chatbot side, V4 is live on chat.deepseek.com, with Expert Mode mapping to V4-Pro and Instant Mode to V4-Flash. The DeepThink toggle now switches V4, rather than V3.x, between non-thinking and thinking mode. If you want the broader context on the model family itself, see the full DeepSeek V4 write-up.

V4-Pro vs V4-Flash — which tier is which

V4 is a family, not a single model. The two tiers share a feature set but target different budgets.

| Spec | deepseek-v4-pro | deepseek-v4-flash |
|---|---|---|
| Total parameters | 1.6T | 284B |
| Active per token | 49B | 13B |
| Context window | 1,000,000 tokens | 1,000,000 tokens |
| Max output tokens | 384,000 | 384,000 |
| Weights licence | MIT | MIT |
| Hugging Face size | 865 GB | 160 GB |
| Positioning | Frontier-tier | Cost-efficient default |

V4-Pro is now the largest open-weights model — larger than Kimi K2.6 (1.1T) and GLM-5.1 (754B), and more than twice the size of DeepSeek V3.2 (685B). Pro-tier spend is only justified for frontier-class agentic or coding work; for chat-shaped or RAG-shaped workloads, Flash is where I am pointing new projects. Detailed tier pages: DeepSeek V4-Pro and DeepSeek V4-Flash.

Benchmarks — what the technical report actually says

DeepSeek’s own numbers position V4-Pro at maximum reasoning effort (reasoning_effort=max) as competitive with the current frontier on coding and math while lagging on factual recall. I am citing them as reported; independent re-runs are still coming in.

| Benchmark | V4-Pro (reasoning_effort=max) | Claude Opus 4.6 | GPT-5.4 | Gemini 3.1 Pro |
|---|---|---|---|---|
| SWE-Bench Verified | 80.6 | 80.8 | n/a | 80.6 |
| Terminal-Bench 2.0 | 67.9 | 65.4 | 75.1 | 68.5 |
| LiveCodeBench | 93.5 | 88.8 | n/a | n/a |
| IMOAnswerBench | 89.8 | 75.3 | 91.4 | 81.0 |
| HMMT 2026 (Feb) | 95.2 | 96.2 | 97.7 | n/a |
| Humanity’s Last Exam | 37.7 | 40.0 | 39.8 | 44.4 |
| SimpleQA-Verified | 57.9 | n/a | n/a | 75.6 |

Two honest reads from that table. First, on SWE-Bench Verified V4-Pro sits at 80.6 — within a fraction of Claude (80.8) and matching Gemini (80.6), and on Terminal-Bench 2.0 V4-Pro (67.9) beats Claude (65.4) while staying competitive with Gemini (68.5), though GPT-5.4 leads at 75.1. Second, HLE at 37.7% puts V4-Pro below Claude (40.0), GPT-5.4 (39.8), and well below Gemini-3.1-Pro (44.4), and SimpleQA-Verified at 57.9% versus Gemini’s 75.6% reveals a meaningful factual knowledge retrieval gap. If you need accurate world-knowledge recall, closed-source models still lead; if you need an agentic coder, V4-Pro has real claims.

DeepSeek frames the overall position candidly. The “pro” version’s performance falls only “marginally short” of OpenAI’s GPT-5.4 and Gemini 3.1-Pro, “suggesting a developmental trajectory that trails current-generation frontier models by approximately 3 to 6 months,” the Hangzhou-based startup said. A deeper roll-up is in the DeepSeek benchmarks 2026 tracker.

Pricing — the new two-tier rate card

The single rate card from V3.2 is gone. V4 publishes separate pricing per tier, with three token buckets each. Rates below are per 1M tokens, as listed on DeepSeek’s pricing page on April 24, 2026.

| Bucket | deepseek-v4-flash | deepseek-v4-pro |
|---|---|---|
| Input (cache hit) | $0.0028 | $0.003625 promo / $0.0145 list |
| Input (cache miss) | $0.14 | $0.435 promo / $1.74 list |
| Output | $0.28 | $0.87 promo / $3.48 list |

Independent launch-day coverage confirms the headline numbers: $0.14 per million input tokens and $0.28 per million output tokens for Flash, and $0.435 per million input and $0.87 per million output for Pro during the 75% promo through 2026-05-31 (list $1.74 / $3.48). For comparison, GPT-5.4 costs $2.50 per 1M input tokens and $15.00 per 1M output tokens, while Claude Opus 4.6 costs $5 per 1M input tokens and $25 per 1M output tokens, which puts V4-Pro's list output price at roughly a seventh of Opus 4.6. A live calculator is on our DeepSeek pricing calculator page, and the full rate history is on DeepSeek API pricing.
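To make the "roughly a seventh" comparison reproducible, here is a minimal sketch that divides the output prices quoted above. The dictionary keys and the `price_ratio` helper are illustrative names, not anything from DeepSeek's API, and the figures are simply the ones cited in this section.

```python
# Output prices in USD per 1M tokens, copied from the figures quoted in this section.
OUTPUT_PRICE = {
    "deepseek-v4-flash": 0.28,
    "deepseek-v4-pro (list)": 3.48,
    "deepseek-v4-pro (promo)": 0.87,
    "gpt-5.4": 15.00,
    "claude-opus-4.6": 25.00,
}


def price_ratio(expensive: str, cheap: str) -> float:
    """Return how many times more `expensive` costs per output token than `cheap`."""
    return OUTPUT_PRICE[expensive] / OUTPUT_PRICE[cheap]


for cheap in ("deepseek-v4-pro (list)", "deepseek-v4-pro (promo)"):
    ratio = price_ratio("claude-opus-4.6", cheap)
    print(f"Claude Opus 4.6 output costs {ratio:.1f}x {cheap}")
```

The list-price ratio comes out near 7.2, which is where "a seventh" comes from; during the promo window the gap is wider, at close to 29 to 1.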

Worked example — one million calls on V4-Flash

Assume a 2,000-token system prompt that is cached across calls, a 200-token user message on every call (always a cache miss, because it follows the cached prefix), and a 300-token response. All three token buckets have to be counted:

- Cached input: 2,000 × 1,000,000 = 2.0B tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200M tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300M tokens × $0.28/M = $84.00

- Total: $117.60

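The same workload on V4-Pro lands at $1,421.00 at list rates ($29.00 cached input + $348.00 uncached input + $1,044.00 output), roughly twelve times more. At the promo rates it comes to $355.25. Do not mix rates across tiers in a single calculation.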
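The three-bucket arithmetic above can be sketched as a small function, which is handy for re-running the forecast with your own token counts. `RateCard` and `workload_cost` are hypothetical names for this illustration; the rates are the ones from the pricing table, with Pro at list price, and the sketch assumes (as the worked example does) that every call hits the cache for the system prompt.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RateCard:
    """USD per 1M tokens for the three billing buckets."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates copied from the pricing table in this section (Pro at list price).
FLASH = RateCard(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = RateCard(cache_hit=0.0145, cache_miss=1.74, output=3.48)


def workload_cost(rates: RateCard, calls: int, cached_in: int,
                  uncached_in: int, out: int) -> float:
    """Total USD cost for `calls` requests; token counts are per call."""
    per_call = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out * rates.output
    )
    # Rates are per 1M tokens, so scale the token-weighted sum down by 1e6.
    return round(per_call * calls / 1_000_000, 2)


print(workload_cost(FLASH, 1_000_000, 2000, 200, 300))     # 117.6
print(workload_cost(PRO_LIST, 1_000_000, 2000, 200, 300))  # 1421.0
```

Keeping one `RateCard` per tier makes it hard to mix Flash and Pro rates in the same calculation by accident.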

API changes you need to implement

Chat requests still hit POST /chat/completions, the OpenAI-compatible endpoint, at https://api.deepseek.com. The API is stateless — clients must resend the full conversation history with every request, unlike the web chat which maintains session history. Two things are new:

- Model IDs changed. Use `deepseek-v4-pro` or `deepseek-v4-flash`. Keep `base_url` and just update `model` to one of those IDs. The API supports both the OpenAI ChatCompletions and Anthropic API formats.

- Thinking is a parameter, not an ID. Both V4 models accept three reasoning-effort modes via the same request. Set `reasoning_effort="high"` plus `extra_body={"thinking": {"type": "enabled"}}` for thinking mode, or `reasoning_effort="max"` for the Think-Max setting (which needs at least 384K tokens of context budget).
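Because the API is stateless, the client has to own the conversation history. A minimal sketch of that bookkeeping follows; the `Conversation` class is a hypothetical helper for illustration, not part of any SDK, and it only builds the `messages` payload without making a network call.

```python
from typing import Dict, List

Message = Dict[str, str]


class Conversation:
    """Client-side history for a stateless chat endpoint (illustrative helper)."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: List[Message] = [
            {"role": "system", "content": system_prompt}
        ]

    def add_user(self, text: str) -> List[Message]:
        """Record a user turn and return the full payload for the next request."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)

    def add_assistant(self, text: str) -> None:
        """Record the model's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": text})


convo = Conversation("You are a terse migration assistant.")
first_payload = convo.add_user("List the legacy model IDs.")
convo.add_assistant("deepseek-chat and deepseek-reasoner.")
second_payload = convo.add_user("Which model replaces them?")
print(len(first_payload), len(second_payload))  # 2 4
```

Each payload would be passed as `messages=` on the next request, so the second call carries all four turns rather than just the latest question.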

A minimal Python call using the OpenAI SDK:

```python
from openai import OpenAI

# The endpoint is OpenAI-compatible, so the stock SDK works with a custom base_url.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Refactor this migration plan."}],
    reasoning_effort="high",
    # Thinking mode is a request parameter, not a separate model ID.
    extra_body={"thinking": {"type": "enabled"}},
    max_tokens=8000,
)

print(resp.choices[0].message.content)
```

When thinking is enabled, the response returns `reasoning_content` alongside the final `content`. JSON mode, tool calling, streaming, context caching, FIM completion (Beta; requires `thinking: {"type": "disabled"}`), and Chat Prefix Completion (Beta) are all carried forward. DeepSeek’s official guidance for temperature is 0.0 for code/math, 1.0 for data tasks, 1.3 for general conversation and translation, and 1.5 for creative writing. Full walkthrough on the DeepSeek API documentation page.
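Since `reasoning_content` only appears when thinking is enabled, it is safer to read it defensively. The sketch below assumes the field sits on the message object next to `content`, as described above; `split_reply` is a hypothetical helper, and the `SimpleNamespace` objects are stand-ins for a real SDK response so the example runs offline.

```python
from types import SimpleNamespace
from typing import Optional, Tuple


def split_reply(response) -> Tuple[Optional[str], str]:
    """Return (reasoning, answer) from a chat-completions style response object."""
    message = response.choices[0].message
    # The field is absent in non-thinking mode, so fall back to None.
    reasoning = getattr(message, "reasoning_content", None)
    return reasoning, message.content or ""


# Stand-in responses for illustration; real ones come from the API call.
thinking_resp = SimpleNamespace(choices=[SimpleNamespace(
    message=SimpleNamespace(content="Done.", reasoning_content="Step 1 ..."))])
plain_resp = SimpleNamespace(choices=[SimpleNamespace(
    message=SimpleNamespace(content="Done."))])

print(split_reply(thinking_resp))  # ('Step 1 ...', 'Done.')
print(split_reply(plain_resp))     # (None, 'Done.')
```

Returning the reasoning separately keeps it out of whatever you show to end users or log as the final answer.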

JSON mode is designed to return valid JSON, but validity is not guaranteed: include the word “json” and a small example schema in the prompt, set max_tokens high enough to avoid truncation, and handle occasional empty responses. Detail on the DeepSeek API JSON mode page.
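One way to handle empty or truncated JSON replies is to validate every response and retry a bounded number of times. This is a sketch under that assumption, not DeepSeek's recommended pattern; `parse_json_reply` and `call_with_retry` are hypothetical helpers, and `ask` stands for whatever function performs your actual API call and returns the reply text.

```python
import json
from typing import Any, Callable, Optional


def parse_json_reply(text: Optional[str]) -> Optional[Any]:
    """Return parsed JSON, or None if the reply is empty, truncated, or invalid."""
    if not text or not text.strip():
        return None
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None


def call_with_retry(ask: Callable[[], Optional[str]], attempts: int = 3) -> Any:
    """Call `ask` until it yields valid JSON; raise after `attempts` failures."""
    for _ in range(attempts):
        parsed = parse_json_reply(ask())
        # A literal JSON null also parses to None and is treated as a failure here.
        if parsed is not None:
            return parsed
    raise ValueError(f"no valid JSON after {attempts} attempts")


# Simulated replies: one empty, one truncated, one valid.
replies = iter(["", '{"status": "ok"', '{"status": "ok"}'])
result = call_with_retry(lambda: next(replies))
print(result)  # {'status': 'ok'}
```

A truncated reply usually means `max_tokens` was too low, so it is worth logging which failure mode triggered the retry rather than retrying blindly.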

The legacy-ID retirement clock

If you are still calling `deepseek-chat` or `deepseek-reasoner`, you have a migration window, not indefinite compatibility. Both IDs will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Until then, `deepseek-chat` routes to `deepseek-v4-flash` in non-thinking mode and `deepseek-reasoner` routes to `deepseek-v4-flash` in thinking mode.

The migration itself is a one-line change — swap the model field. base_url does not change. What does change is your pricing forecast (V4-Flash undercuts V3.2 rates) and your thinking-mode plumbing (now a parameter on either model). Plan to re-run your evals before the cutoff rather than discovering behaviour differences in production on July 25.
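If request parameters are built in one place, the swap can be sketched as a small shim that rewrites legacy IDs and sets the thinking parameter to match the routing described above. `LEGACY_MODELS` and `migrate_request` are hypothetical names, and defaulting to `reasoning_effort="high"` for the old reasoner ID is an assumption based on the thinking-mode settings quoted earlier.

```python
from typing import Any, Dict

# legacy id -> (replacement id, thinking enabled?), per the routing described above
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_request(kwargs: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of chat-completions kwargs with legacy model IDs replaced."""
    model = kwargs.get("model")
    if model not in LEGACY_MODELS:
        return dict(kwargs)  # already on a current ID; leave untouched
    new_model, thinking = LEGACY_MODELS[model]
    migrated = dict(kwargs, model=new_model)
    extra_body = dict(migrated.get("extra_body") or {})
    extra_body["thinking"] = {"type": "enabled" if thinking else "disabled"}
    migrated["extra_body"] = extra_body
    if thinking:
        # Assumption: "high" is the closest match to the old reasoner behaviour.
        migrated.setdefault("reasoning_effort", "high")
    return migrated


old = {"model": "deepseek-reasoner", "messages": [{"role": "user", "content": "hi"}]}
new = migrate_request(old)
print(new["model"], new["extra_body"], new["reasoning_effort"])
```

The function returns a new dict and leaves the caller's kwargs untouched, which makes it easy to diff old and new requests while re-running evals.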

Distribution, hardware and geopolitics

Two context points from launch-day reporting that matter for procurement decisions:

- DeepSeek partnered with Huawei, which said it supports DeepSeek with “Supernode” technology combining clusters of Ascend 950 chips, and Counterpoint’s Wei Sun highlighted that V4 is run on domestic chips from Huawei and Cambricon, in comparison to R1 which was trained on Nvidia hardware. That shifts the political calculus for enterprises tracking US export-control exposure.

- Multiple US states, Australia, Taiwan, South Korea, Denmark and Italy introduced bans or other restrictions on DeepSeek-R1 shortly after its release, citing privacy and national security concerns. Check current status against your own jurisdiction before committing a regulated workload — see DeepSeek US restrictions and DeepSeek availability by country.

What I am doing in production this week

- Point all non-agentic traffic at `deepseek-v4-flash` non-thinking and diff outputs against V3.2 on our regression set.

- Re-run the coding-agent bench on `deepseek-v4-pro` with `reasoning_effort="high"` before deciding whether the roughly 12× list-price step is worth it for that workload.

- Add a CI check that fails the build if any service still hardcodes `deepseek-chat` or `deepseek-reasoner`. July 24 is closer than it reads.

- Re-cost the monthly API bill using the three-bucket template above. Cache-hit rates on long system prompts move the number more than any other single variable.
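The CI check from the list above could look something like the following sketch. It is one possible shape, not the check we actually run: the function names, the scanned file extensions, and the exit-code convention are all assumptions to adapt to your repository.

```python
import re
from pathlib import Path
from typing import List, Tuple

# Matches the two legacy model IDs as whole tokens.
LEGACY_ID = re.compile(r"\bdeepseek-(?:chat|reasoner)\b")
# Assumed set of file types worth scanning; extend for your stack.
SCANNED_SUFFIXES = {".py", ".ts", ".js", ".go", ".yaml", ".yml", ".json", ".toml"}


def find_legacy_ids(root: Path) -> List[Tuple[Path, int, str]]:
    """Return (file, line number, line) for every hardcoded legacy model ID."""
    hits = []
    for path in sorted(root.rglob("*")):
        if not path.is_file() or path.suffix not in SCANNED_SUFFIXES:
            continue
        text = path.read_text(encoding="utf-8", errors="ignore")
        for number, line in enumerate(text.splitlines(), start=1):
            if LEGACY_ID.search(line):
                hits.append((path, number, line.strip()))
    return hits


def main(argv: List[str]) -> int:
    """Print offending lines and return 1 if any were found, else 0."""
    root = Path(argv[1]) if len(argv) > 1 else Path(".")
    hits = find_legacy_ids(root)
    for path, number, line in hits:
        print(f"{path}:{number}: {line}")
    return 1 if hits else 0
```

Saved as a script, it would be wired into CI by ending the file with `sys.exit(main(sys.argv))` (after `import sys`), so a non-zero exit code fails the build.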

For the broader picture of where DeepSeek sits against its peers after this release, cross-reference our DeepSeek vs ChatGPT and DeepSeek vs Claude breakdowns, and the running DeepSeek news feed.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is the latest DeepSeek model as of April 2026?

The current generation is DeepSeek V4 Preview, released April 24, 2026. It ships as two open-weight MoE models: deepseek-v4-pro (1.6T total / 49B active) at the frontier tier and deepseek-v4-flash (284B / 13B active) at the cost-efficient tier. Both support a default 1M-token context under the MIT license. See the full DeepSeek V4 release date rundown for timeline details.

How much does the DeepSeek V4 API cost?

V4-Flash lists $0.0028 cache-hit / $0.14 cache-miss input and $0.28 output per 1M tokens. V4-Pro lists $0.0145 / $1.74 / $3.48 (currently 75% off through 2026-05-31, making the effective rates $0.003625 / $0.435 / $0.87) on the same buckets. Off-peak discounts remain discontinued since September 5, 2025. Run a real forecast on our DeepSeek cost estimator or check the full DeepSeek API pricing table before committing.

When do deepseek-chat and deepseek-reasoner stop working?

Both legacy IDs are fully retired after July 24, 2026, 15:59 UTC. Until then they route to deepseek-v4-flash in non-thinking and thinking mode respectively. Migration is a one-line change to the model field — base_url does not change. Our DeepSeek API getting started tutorial shows a before/after example.

Does DeepSeek V4 have a visible reasoning trace?

Thinking mode returns reasoning_content alongside the final content. Enable it by setting reasoning_effort="high" (or "max" for Think-Max) together with extra_body={"thinking": {"type": "enabled"}} on either V4 model. It is not a separate model ID in V4. Stream handling is covered in DeepSeek API streaming.

Can I run DeepSeek V4 locally?

The weights are on Hugging Face under MIT, so yes — but hardware matters. V4-Pro is 865 GB and V4-Flash is 160 GB at full precision, meaning Flash is the realistic option for a single high-end workstation with aggressive quantization. Start with the install DeepSeek locally guide and cross-check against the DeepSeek hardware calculator.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026.

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026.

- Model card: Hugging Face: DeepSeek-V4-Pro model card. V4-Pro parameter count, weights size, MIT license. Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026.

Context sources

- Analysis: Simon Willison: DeepSeek V4 launch-day write-up. Independent confirmation of V4 sizes and pricing. Last checked: April 30, 2026.

- Analysis: Counterpoint Research (Wei Sun) on DeepSeek V4 chip mix. V4 trained on Huawei Ascend / Cambricon vs R1's Nvidia. Last checked: April 30, 2026.

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.