Build a Reliable DeepSeek Cost Estimator for V4-Flash and V4-Pro

“How much will this actually cost in production?” is the first question any engineering manager asks before greenlighting a DeepSeek migration. A good DeepSeek cost estimator answers that with three numbers, not one: cache-hit input, cache-miss input, and output tokens, each priced separately on every request. Get the buckets wrong and your forecast can be off by an order of magnitude, especially when you mix up the V4-Flash and V4-Pro tiers, which differ by roughly 12× on output at list (3× during the V4-Pro 75% promo through 2026-05-31). This article walks through the rates as of late April 2026 (including the 2026-04-26 cache-hit price cut), the math behind a per-call estimate, a worked monthly forecast for both tiers, and the assumptions you should pressure-test before you sign off on a budget.

What a DeepSeek cost estimator actually needs to compute

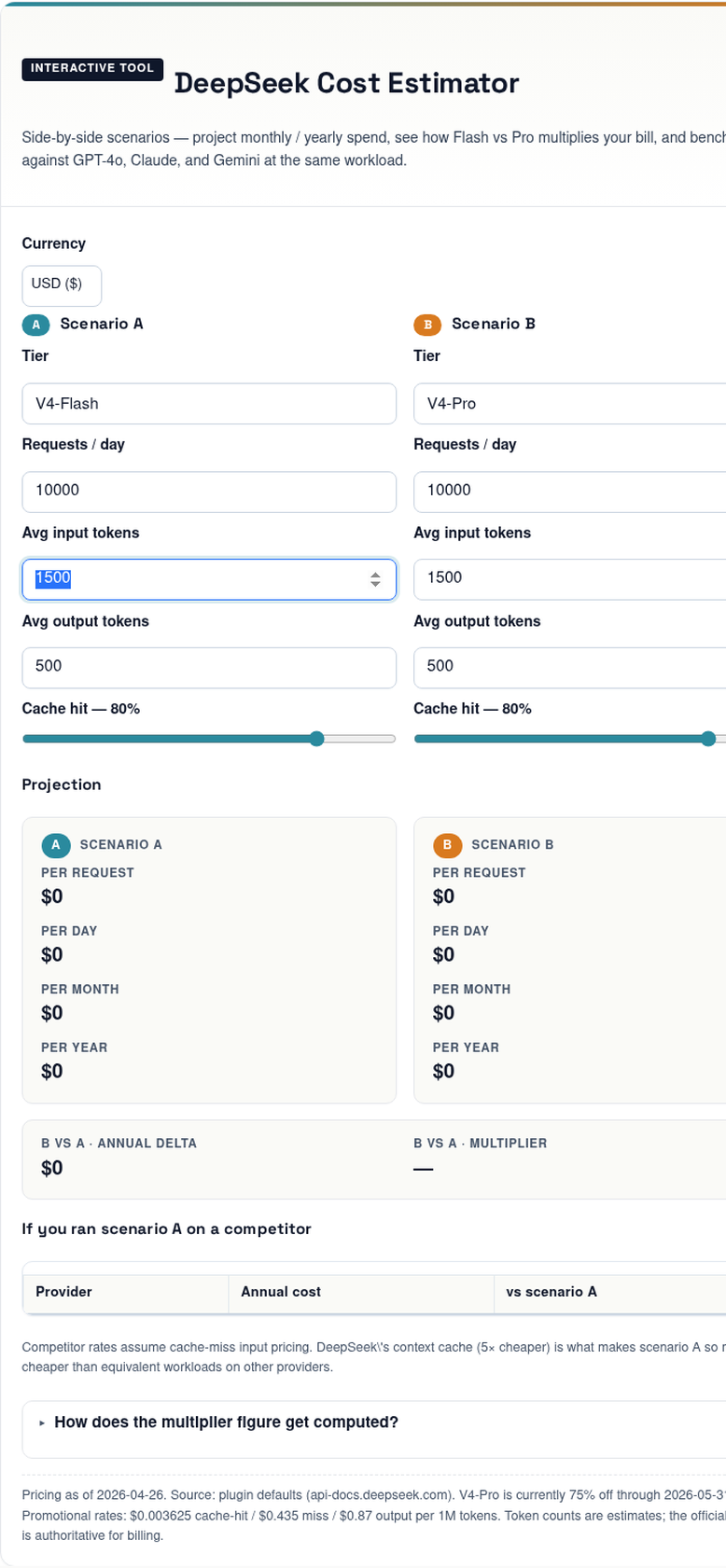


Pricing as of 2026-04-29. Source: official pricing page (auto-fetched). Token counts are estimates; the official tokeniser is authoritative for billing.

DeepSeek bills the API per million tokens, but a single request consumes tokens in three distinct buckets, each at its own rate. Skipping any of the three is the most common mistake in back-of-envelope forecasts.

- Cache-hit input tokens — the portion of your prompt prefix that DeepSeek’s context cache has already seen. Cheapest of the three.

- Cache-miss input tokens — fresh input the model has to process from scratch. This includes new user messages even when the system prompt is cached.

- Output tokens — everything the model generates, including any reasoning_content emitted in thinking mode.

The current generation is DeepSeek V4, released as a preview on April 24, 2026, in two open-source variants: DeepSeek-V4-Pro, a 1.6-trillion-parameter model, and DeepSeek-V4-Flash, a leaner 284-billion-parameter sibling. Both expose the same feature set, including a 1M-token context window and up to 384K output tokens. Pricing — and therefore your estimate — depends entirely on which tier you call.

DeepSeek V4 pricing as of April 2026

These rates were verified against the official DeepSeek pricing documentation and corroborated by independent trackers. deepseek-v4-flash costs $0.0028 per 1M cache-hit input tokens (cut 10× from $0.028 on 2026-04-26), $0.14 per 1M cache-miss input tokens, and $0.28 per 1M output tokens. deepseek-v4-pro lists at $0.0145 / $1.74 / $3.48 per 1M tokens; a 75% promotional discount through 2026-05-31 15:59 UTC brings those down to $0.003625 / $0.435 / $0.87.

| Model | Cache-hit input | Cache-miss input | Output | Best for |
|---|---|---|---|---|
| deepseek-v4-flash | $0.0028 / 1M | $0.14 / 1M | $0.28 / 1M | High-volume chat, classification, RAG |
| deepseek-v4-pro (75% promo through 2026-05-31) | $0.003625 / 1M (list $0.0145) | $0.435 / 1M (list $1.74) | $0.87 / 1M (list $3.48) | Frontier agents, complex coding |
| Legacy deepseek-chat / deepseek-reasoner | Routes to V4-Flash; retires 2026-07-24 15:59 UTC | | | Migration only |

One nuance worth flagging in any estimator: prices are the same whether you are in thinking mode or non-thinking mode. The model ID sets the rate; the reasoning mode just changes how many tokens you burn at that rate. A thinking-mode call simply produces more output tokens because the reasoning trace is billed alongside the final answer.
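Because the model ID alone sets the rate, a rate card can be a plain lookup keyed by model ID and date. The sketch below is one way to encode the table above, including the V4-Pro promo cutoff. The `Rates` class and the `rates_for` helper are hypothetical names introduced for illustration, not part of any DeepSeek SDK.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens for each of the three billing buckets."""
    hit: float
    miss: float
    output: float


# Values copied from the pricing table above.
FLASH = Rates(hit=0.0028, miss=0.14, output=0.28)
PRO_LIST = Rates(hit=0.0145, miss=1.74, output=3.48)
PRO_PROMO = Rates(hit=0.003625, miss=0.435, output=0.87)
PROMO_END = datetime(2026, 5, 31, 15, 59, tzinfo=timezone.utc)

# Legacy IDs currently route to V4-Flash, so they share its rates.
FLASH_IDS = {"deepseek-v4-flash", "deepseek-chat", "deepseek-reasoner"}


def rates_for(model: str, when: datetime) -> Rates:
    """Hypothetical helper: pick the rate card for a model ID at a UTC time."""
    if model in FLASH_IDS:
        return FLASH
    if model == "deepseek-v4-pro":
        return PRO_PROMO if when <= PROMO_END else PRO_LIST
    raise ValueError(f"unknown model ID: {model}")


now = datetime.now(timezone.utc)
print(rates_for("deepseek-v4-pro", now))
```

Passing the timestamp explicitly keeps forecasts that straddle the promo end date honest: a budget for June should be computed with a June date, not with today's rates.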

If you’re maintaining an integration that still points at the legacy IDs, you have a deadline. Per DeepSeek’s notice, deepseek-chat and deepseek-reasoner will be fully retired and inaccessible after July 24, 2026, 15:59 UTC; until then they route to deepseek-v4-flash in non-thinking and thinking mode respectively. The fix is a one-line model= swap; the base_url stays the same.

The estimator formula

Every per-call estimate is the same three lines. Pick one tier, plug in token counts, sum.

```text
Cost_call = (cache_hit_tokens  × hit_rate    / 1_000_000)
          + (cache_miss_tokens × miss_rate   / 1_000_000)
          + (output_tokens     × output_rate / 1_000_000)
```

For monthly spend, multiply Cost_call by your call volume. The hard part isn’t the arithmetic; it’s predicting the cache-hit ratio. DeepSeek’s cache is automatic: every request with a repeated prefix against the same account benefits, and you do not need to opt in or wire anything up. Prefixes must be at least 1,024 tokens long and must match byte-for-byte. If your prompts share a long, stable prefix, your hits dominate. If every call is unique, you’ll pay the miss rate on essentially everything.
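The formula translates directly into a small function. This is a minimal sketch: `cost_per_call` and `split_input` are illustrative names, the token counts are example values, and the hit ratio is an assumption you supply rather than something the function can know.

```python
def cost_per_call(
    cache_hit_tokens: int,
    cache_miss_tokens: int,
    output_tokens: int,
    hit_rate: float,
    miss_rate: float,
    output_rate: float,
) -> float:
    """Return the USD cost of one request; rates are USD per 1M tokens."""
    return (
        cache_hit_tokens * hit_rate
        + cache_miss_tokens * miss_rate
        + output_tokens * output_rate
    ) / 1_000_000


def split_input(input_tokens: int, hit_ratio: float) -> tuple[int, int]:
    """Split total input tokens into (hit, miss) for an assumed hit ratio."""
    hit = round(input_tokens * hit_ratio)
    return hit, input_tokens - hit


# Illustrative V4-Flash call: 2,200 input tokens, 2,000 of them cached.
hit, miss = split_input(2_200, hit_ratio=2_000 / 2_200)
flash_call = cost_per_call(hit, miss, 300, hit_rate=0.0028, miss_rate=0.14, output_rate=0.28)
print(f"${flash_call:.7f} per call")
```

Keeping the hit ratio as an explicit argument makes it easy to rerun the estimate once real traffic tells you what the ratio actually is.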

A minimal Python sketch

The DeepSeek API is OpenAI-compatible: chat requests go to POST /chat/completions against https://api.deepseek.com. DeepSeek also documents an Anthropic-compatible endpoint for use with the Anthropic SDK; confirm its base URL in the official docs. Here is a minimal Python call against V4-Flash:

```python
from openai import OpenAI

# Placeholder prompt and message; substitute your own.
SYSTEM_PROMPT = "You are a helpful assistant."
user_msg = "Summarise our refund policy in two sentences."

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        # Stable prefix: cached after the first call if it meets the minimum prefix length.
        {"role": "system", "content": SYSTEM_PROMPT},
        # Changes on every call, so it is billed as a cache miss.
        {"role": "user", "content": user_msg},
    ],
    temperature=1.3,  # general conversation
    max_tokens=1024,
)

usage = resp.usage  # has prompt_tokens, completion_tokens, prompt_cache_hit_tokens, etc.
```

The usage object on every response is the source of truth for an estimator: it reports cache-hit and cache-miss splits separately, so you can calibrate forecasts against real traffic instead of guessing. The API is stateless (you must resend the conversation history with each request), which is also why caching matters so much: that resent history is what hits the cache on the next turn.
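To turn one response's usage into a cost, a sketch along the following lines may help. It works on a plain dict so it can run without network access. Converting the SDK's usage object to a dict (for example with `resp.usage.model_dump()`) is an assumption about your SDK version, and `cost_from_usage` is a hypothetical helper. Miss tokens are derived as `prompt_tokens` minus `prompt_cache_hit_tokens`, using only the fields named above.

```python
FLASH_RATES = {"hit": 0.0028, "miss": 0.14, "output": 0.28}  # USD per 1M tokens


def cost_from_usage(usage: dict, rates: dict) -> dict:
    """Price a single response from its usage fields (hypothetical helper)."""
    prompt = usage["prompt_tokens"]
    hit = usage.get("prompt_cache_hit_tokens", 0)
    miss = prompt - hit  # anything not served from cache is a miss
    out = usage["completion_tokens"]
    cost = (hit * rates["hit"] + miss * rates["miss"] + out * rates["output"]) / 1_000_000
    return {
        "hit_tokens": hit,
        "miss_tokens": miss,
        "hit_ratio": hit / prompt if prompt else 0.0,
        "cost_usd": cost,
    }


# Sample usage values (made up for illustration, not real API output).
sample = {"prompt_tokens": 2200, "prompt_cache_hit_tokens": 2000, "completion_tokens": 300}
result = cost_from_usage(sample, FLASH_RATES)
print(result)
```

The `hit_ratio` field is the number to compare against whatever ratio your forecast assumed.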

Worked example: 1 million calls per month

Assume an agent with a stable 2,000-token system prompt (cached across calls), a 200-token user message (uncached, since it changes every time), and a 300-token response. Run that workload one million times in a month.

V4-Flash — the default recommendation

| Bucket | Tokens | Rate | Cost |
|---|---|---|---|
| Cache-hit input | 2,000,000,000 | $0.0028 / 1M | $5.60 |
| Cache-miss input | 200,000,000 | $0.14 / 1M | $28.00 |
| Output | 300,000,000 | $0.28 / 1M | $84.00 |
| Monthly total | | | $117.60 |

V4-Pro — frontier-tier

| Bucket | Tokens | Rate | Cost |
|---|---|---|---|
| Cache-hit input | 2,000,000,000 | $0.003625 / 1M (promo) | $7.25 |
| Cache-miss input | 200,000,000 | $0.435 / 1M (promo) | $87.00 |
| Output | 300,000,000 | $0.87 / 1M (promo) | $261.00 |
| Monthly total | | | $355.25 (promo; list $1,421.00) |

Same workload, ~3× spend gap during the 75% V4-Pro promo through 2026-05-31 (and roughly 12× once the list rates resume: $1,421.00 versus $117.60). That gap is the single most important number any estimator should surface, because it directly informs the routing question: do you actually need V4-Pro for this task, or will V4-Flash do? DeepSeek’s own framing is helpful here. For agent use, Pro is the right model. For chat and reasoning workloads, Flash is close enough to Pro that the price-performance trade often favors it.
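The two tables can be reproduced in a few lines, which is a useful check before swapping in your own token counts. This is a sketch: the rate-card labels and the `monthly_cost` function are illustrative names, and the rates are the ones quoted in this article.

```python
CALLS_PER_MONTH = 1_000_000
TOKENS_PER_CALL = {"hit": 2_000, "miss": 200, "output": 300}

# USD per 1M tokens, as quoted in the pricing section.
RATE_CARDS = {
    "v4-flash": {"hit": 0.0028, "miss": 0.14, "output": 0.28},
    "v4-pro (promo)": {"hit": 0.003625, "miss": 0.435, "output": 0.87},
    "v4-pro (list)": {"hit": 0.0145, "miss": 1.74, "output": 3.48},
}


def monthly_cost(rates: dict, tokens_per_call: dict, calls: int) -> float:
    """Sum the three buckets over a month of identical calls."""
    return sum(
        tokens_per_call[bucket] * calls * rates[bucket] / 1_000_000
        for bucket in ("hit", "miss", "output")
    )


totals = {
    name: round(monthly_cost(rates, TOKENS_PER_CALL, CALLS_PER_MONTH), 2)
    for name, rates in RATE_CARDS.items()
}

for name, total in totals.items():
    ratio = total / totals["v4-flash"]
    print(f"{name}: ${total:,.2f} ({ratio:.1f}x Flash)")
```

The totals match the tables: $117.60 for Flash, $355.25 for Pro on promo, and $1,421.00 for Pro at list.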

Hidden line items most estimators miss

Three categories of token consumption regularly blow up forecasts.

- Thinking-mode output bloat. When you set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, the API returns reasoning_content alongside the final content. Both count toward billed output. reasoning_effort="max" requires max_model_len >= 393216 and can balloon output dramatically.

- Conversation history. Because the API is stateless, every multi-turn message has to resend prior turns. By turn ten, the input bucket can be five to ten times the size of the user’s actual message. The cache softens this, but only on byte-for-byte repeats.

- JSON mode retries. JSON mode is designed to return valid JSON, but validity is not guaranteed. Plan for occasional empty responses and re-prompts; include the word “json” plus a small example schema, and set max_tokens high enough to avoid truncation. Retries are billed at full rate.
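The conversation-history effect is easy to underestimate, so here is a rough sketch of how input size grows per turn. It assumes every prior user message and assistant reply is resent at a fixed size; the token sizes and the `input_tokens_at_turn` helper are illustrative assumptions, not measurements.

```python
def input_tokens_at_turn(turn: int, system: int, user: int, assistant: int) -> int:
    """Input tokens sent on a given 1-indexed turn when all prior turns are resent."""
    prior_turns = turn - 1
    return system + prior_turns * (user + assistant) + user


# Illustrative sizes (assumptions): 2,000-token system prompt,
# 200-token user messages, 300-token assistant replies.
SYSTEM, USER, ASSISTANT = 2_000, 200, 300

per_turn = [input_tokens_at_turn(t, SYSTEM, USER, ASSISTANT) for t in range(1, 11)]
total_input = sum(per_turn)
naive_input = 10 * (SYSTEM + USER)  # forecast that ignores resent history

print(per_turn[0], per_turn[-1], total_input, naive_input)
```

Under these assumptions a ten-turn conversation sends 44,500 input tokens, about double the 22,000 that a forecast treating each turn as a fresh single-turn call would predict. How much of that lands in the cache-hit bucket depends on the prefix rules described earlier.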

Recommended temperature settings to match DeepSeek’s docs

| Workload | Temperature |
|---|---|
| Code generation, mathematics | 0.0 |
| Data analysis, data cleaning | 1.0 |
| General conversation, translation | 1.3 |
| Creative writing, poetry | 1.5 |

Temperature itself doesn’t change your bill, but it shifts output length distributions in ways that do.

Building your own estimator vs using ours

You have three reasonable paths. A spreadsheet works if your traffic is predictable: one row per workload type, columns for the three buckets, multiplied by call volume. A small Python script tied to your usage log is more accurate because it reads real cache-hit ratios instead of guessing them. And our hosted DeepSeek pricing calculator handles the V4-Flash/V4-Pro split and the cached-prefix discount in a UI form. Pair any of them with the DeepSeek token counter to get accurate input sizes before you call the API.
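For the script route, a minimal sketch might aggregate logged usage records and project a month of spend from them. The log format here (one JSON object per line holding the usage fields) is an assumption about how you store responses, the sample records are made up, and `summarize` is a hypothetical helper.

```python
import json

FLASH = {"hit": 0.0028, "miss": 0.14, "output": 0.28}  # USD per 1M tokens


def summarize(log_lines, rates, calls_per_month):
    """Aggregate usage records and project monthly spend from the observed mix."""
    hit = prompt = out = calls = 0
    for line in log_lines:
        usage = json.loads(line)
        hit += usage.get("prompt_cache_hit_tokens", 0)
        prompt += usage["prompt_tokens"]
        out += usage["completion_tokens"]
        calls += 1
    if calls == 0 or prompt == 0:
        raise ValueError("usage log is empty")
    miss = prompt - hit
    sample_cost = (hit * rates["hit"] + miss * rates["miss"] + out * rates["output"]) / 1_000_000
    return {
        "calls": calls,
        "hit_ratio": hit / prompt,
        "avg_output_tokens": out / calls,
        "projected_monthly_usd": sample_cost / calls * calls_per_month,
    }


# Made-up sample: the first call is cold, the next two hit the cached prefix.
LOG = [
    '{"prompt_tokens": 2200, "prompt_cache_hit_tokens": 0, "completion_tokens": 280}',
    '{"prompt_tokens": 2200, "prompt_cache_hit_tokens": 2000, "completion_tokens": 320}',
    '{"prompt_tokens": 2200, "prompt_cache_hit_tokens": 2000, "completion_tokens": 300}',
]
summary = summarize(LOG, FLASH, calls_per_month=1_000_000)
print(summary)
```

A three-record sample overweights the cold first call, which is why the projection comes out well above the steady-state figure; a real calibration run needs enough calls for the hit ratio to settle.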

Off-peak discounts are worth flagging because they used to be a major optimisation lever. They are no longer active — DeepSeek discontinued the night-time 50%/75% discount on 2025-09-05 and did not reinstate it for V4. Don’t model them.

Sanity-checking your forecast

- Did you state the tier? “DeepSeek will cost $X” without naming Flash or Pro is a red flag.

- Did you enumerate three buckets? A single “input × rate” line is wrong unless your cache-hit rate is genuinely 0%.

- Did you account for the user message on each call? Even with a fully cached system prompt, the new user content is a miss.

- Did you stress-test with a 2× output multiplier? Thinking-mode reasoning traces routinely double or triple output token counts.

- Did you verify rates against the official pricing page on the day you committed to the budget? V4 is in Preview and DeepSeek reserves the right to adjust.
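Two of the checks above, the cache-hit assumption and the output multiplier, can be stress-tested together with a small sweep. The sketch below uses the V4-Flash rates from this article and an example workload of 2,200 input and 300 output tokens per call. The grid values and the `monthly_cost` function are illustrative choices.

```python
FLASH = {"hit": 0.0028, "miss": 0.14, "output": 0.28}  # USD per 1M tokens


def monthly_cost(input_tokens, output_tokens, calls, hit_ratio, output_multiplier, rates):
    """Monthly USD spend for one workload under an assumed hit ratio and output multiplier."""
    hit = input_tokens * hit_ratio
    miss = input_tokens - hit
    out = output_tokens * output_multiplier
    per_call = (hit * rates["hit"] + miss * rates["miss"] + out * rates["output"]) / 1_000_000
    return per_call * calls


# Sweep the two assumptions the checklist asks you to challenge.
grid = {
    (hit_ratio, mult): monthly_cost(2_200, 300, 1_000_000, hit_ratio, mult, FLASH)
    for hit_ratio in (0.0, 0.5, 0.9)
    for mult in (1, 2, 3)
}

for (hit_ratio, mult), usd in grid.items():
    print(f"hit ratio {hit_ratio:.0%}, output x{mult}: ${usd:,.2f}")
```

If the best and worst cells of the grid differ by more than your budget can absorb, that is a signal to measure the real hit ratio and output lengths before committing.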

Where this fits in your DeepSeek toolchain

Cost estimation is one node in a larger workflow. Before you forecast, you need accurate token counts; after you forecast, you need to validate against real usage logs. Useful adjacent reading: the DeepSeek API pricing reference for the canonical rate table, the DeepSeek context caching guide for the prefix rules that make or break your hit ratio, and DeepSeek API best practices for the structural changes (static content first, deterministic prefixes) that maximise cache hits. If you’re choosing between tiers, the DeepSeek V4-Flash and DeepSeek V4-Pro model pages cover capability differences in detail. For a complete inventory of the available DeepSeek tools and utilities, the hub lists everything from token counters to status checkers.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How accurate is a DeepSeek cost estimator before you start sending real traffic?

Within roughly 10–20% if you’ve correctly counted tokens for a representative prompt and assumed a sensible cache-hit ratio. The biggest source of error is output length variability — thinking mode in particular can double output tokens. Calibrate by running 1,000 real calls, reading the usage object, and comparing actual cache splits against your assumption. See DeepSeek API best practices.

What is the cheapest way to test DeepSeek V4 before committing to a budget?

Run a small batch on V4-Flash with a stable system prompt so the cache kicks in by the second call. At $0.0028/1M for cache hits (since 2026-04-26) and $0.14/1M for misses, a few hundred test calls cost cents. The DeepSeek API getting started tutorial walks through authentication and the first request; the get a DeepSeek API key guide covers credential setup.

Does context caching change the math on a DeepSeek cost estimator?

Substantially. On repeated prefixes, the cache-hit rate is roughly 98% below the miss rate on V4-Flash and over 99% below it on V4-Pro (since the 2026-04-26 cache-hit rate drop). If your workload has a stable prefix above 1,024 tokens, such as system prompts, tool schemas, or RAG chunks, most of your input bill collapses. If every call is unique, caching saves nothing. The DeepSeek context caching guide covers the prefix-matching rules.

Can you estimate costs for legacy deepseek-chat or deepseek-reasoner?

Yes, but treat them as V4-Flash for billing purposes. deepseek-chat currently routes to deepseek-v4-flash non-thinking mode, and deepseek-reasoner currently routes to deepseek-v4-flash thinking mode. Both retire on 2026-07-24 15:59 UTC, so any forecast longer than that horizon should assume the V4 model IDs directly. See the DeepSeek API documentation.

Why does V4-Pro cost so much more than V4-Flash if they have the same context window?

Because they’re different models under the hood. DeepSeek-V4-Pro has 1.6T parameters (49B activated) and DeepSeek-V4-Flash has 284B parameters (13B activated), so Pro activates almost four times as many parameters per token, with correspondingly higher inference cost. Pro earns its premium on agentic coding and long-horizon reasoning; Flash is close enough on most chat workloads. The DeepSeek vs ChatGPT comparison covers competitor pricing for context.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Pricing: DeepSeek pricing page. Cost-estimator three-bucket V4-Flash and V4-Pro rate cards. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.