Is DeepSeek Safe? A Practical Guide to DeepSeek Safety in 2026

Can you use DeepSeek without handing sensitive data to the Chinese government, without triggering a compliance incident at work, and without relying on a model that researchers have jailbroken more easily than its Western rivals? That is the real DeepSeek safety question, and the honest answer is: it depends on which surface you use, what you feed it, and what your regulator says. The web app and the hosted API do not carry the same risk profile as running the open weights on your own hardware. Italy has blocked the app. Several governments have blocked it on official devices. And the models themselves, though technically capable, open-weight, and MIT-licensed, have documented security weaknesses. This guide lays out what is actually verified, what is speculation, and how to make a sensible call.

Short answer: is DeepSeek safe to use?

There are three DeepSeek surfaces, and they carry different risks. The web chat and mobile app send your prompts to servers in mainland China, where they are subject to Chinese law. The hosted API (api.deepseek.com) routes through the same infrastructure. The open-weight models — V4-Pro, V4-Flash, V3.2, R1 — can be run on your own hardware or a Western cloud, and when you do that the cross-border data-flow concern disappears, though the model’s own safety weaknesses do not.

For personal, non-sensitive use, the hosted DeepSeek is comparable in risk to any free foreign chatbot. For regulated data (health, legal, financial, government, or anything covered by GDPR), the hosted service is not an appropriate tool — and several regulators have said so on the record. The rest of this article explains why, with sources.

Where your data goes

DeepSeek’s privacy position is unusually candid about where data lives. The current privacy policy, last updated February 10, 2026, states plainly that the personal data collected from users may be stored on servers located outside their country, and that DeepSeek directly collects, processes and stores personal data in the People’s Republic of China. A European supplemental clause added after regulatory pressure spells this out further for EEA, Swiss, and UK users.

The data itself is broad. According to analyses of the policy, DeepSeek collects account details (email, phone, date of birth, username, password), prompt content, uploaded files, IP address, device identifiers, operating system, browser type, and keystroke patterns. Keystroke-pattern collection is worth calling out — typing rhythm can be biometric-like and used to re-identify users across sessions.

Chinese law is the core legal issue

Under China’s 2017 National Intelligence Law, Chinese companies can be compelled to cooperate with state intelligence agencies. Proton, in its assessment, describes the practical effect bluntly: the statute compels all Chinese companies to assist the government with national security matters, meaning any Chinese company can be forced to share user data with Chinese authorities even if that data is from users elsewhere. Western AI companies face government data requests too, but — as Proton and others note — companies in the US and EU can challenge those requests in independent courts, appeal, or refuse requests that violate laws like GDPR. That legal recourse is the difference.

Countries that have restricted DeepSeek

The regulatory response has been unusually fast and broad. Here is the verified picture as of early 2026.

| Jurisdiction | Action | Scope | Date |
|---|---|---|---|
| Italy | Emergency processing ban (Garante) | Nationwide (app removed from stores; web still reachable) | 2025-01-30 |
| Czech Republic | Government ban | Public administration | 2025-07 |
| Australia | Government device ban | All federal government systems | 2025-02 |
| South Korea | Government device ban | Government agencies | 2025-02 |
| Taiwan | Government device ban | Government sector | 2025 |
| United States | State-level bans (TX, NY, VA and others); pending federal bills | Government devices | 2025 |
| Netherlands, Ireland, Belgium, France, Spain, Portugal | Active investigations | Civilian users | 2025 |

The Italian action is the most consequential one for EU users. On 30 January 2025, the Garante imposed an immediate and definitive limitation on the processing of Italian users’ personal data by Hangzhou DeepSeek Artificial Intelligence and Beijing DeepSeek Artificial Intelligence. DeepSeek’s initial defence — that it did not operate in Italy and was therefore outside GDPR — was rejected. The Garante concluded that the companies offered services to Italian data subjects and were therefore subject to GDPR, and noted that the privacy policy expressly informs users that personal data is stored in China, in violation of GDPR safeguards including Article 32 on security of processing. Practically, the order targeted distribution of the mobile app via official channels — DeepSeek was removed from the Italian Apple App Store and Google Play Store, though users who had installed it before the ban have reported the app still functions.

More broadly, an IAPP analysis published February 2026 captured the scale: Italy’s Garante imposed a ban within 72 hours, investigations followed in 13 European jurisdictions, the European Data Protection Board created a dedicated AI Enforcement Task Force, and government device bans proliferated from Washington to Canberra.

Security findings researchers have published

Separately from privacy law, independent researchers have tested DeepSeek’s apps and models. The findings are not flattering.

App and infrastructure weaknesses

- Unencrypted transmission and weak crypto. NowSecure’s analysis reported sensitive data sent over the internet without encryption, outdated 3DES encryption with hardcoded keys, iOS App Transport Security deliberately disabled, and high susceptibility to man-in-the-middle attacks.

- Third-party data flow. NowSecure found that DeepSeek transmits data to Volcengine, ByteDance’s cloud platform, raising questions about how broadly data may be shared within China’s tech ecosystem.

- Exposed database. In January 2025, security researchers from Wiz discovered a publicly accessible database belonging to DeepSeek that contained over one million log entries, including plaintext chat histories, API keys, backend system details, and operational metadata.

Model-level weaknesses

On the models themselves, the jailbreak picture is the most concerning. DeepSeek’s large language models have been found to be susceptible to jailbreak techniques like Crescendo, Bad Likert Judge, Deceptive Delight, Do Anything Now (DAN), and EvilBOT, and in Palo Alto Networks Unit 42 testing the team elicited detailed instructions for creating dangerous items and generating malicious code for attacks like SQL injection and lateral movement. Researchers also noted that methods ChatGPT and similar models patched long ago still work on DeepSeek, enabling it to produce disallowed or dangerous content. These are weaknesses in the model weights themselves — they persist whether you use the hosted API or download and self-host.

Censorship is built in

Independent testing has repeatedly shown that DeepSeek’s hosted chatbot refuses or redirects on topics sensitive to the Chinese government. The IAPP summary puts it well: “Open source distribution and local hosting can mitigate some security and privacy concerns, but censorship features remain intrinsic.” If you care about unrestricted output on geopolitical topics, this is a functional safety issue, not just a policy one.

DeepSeek API safety for developers

The API is a separate surface, and developers need to understand how it differs from the consumer app. The current generation is DeepSeek V4, released April 24, 2026, shipping as two model IDs — deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B / 13B active). Both are open-weight MoE models under the MIT licence, and thinking mode is a request parameter on either, not a separate model ID. Legacy IDs deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash until they retire at 2026-07-24 15:59 UTC.
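Client code that still carries a legacy ID can guard against the retirement date. The following is a minimal sketch, assuming the routing and timestamp stated above are accurate; `resolve_model_id` is a hypothetical helper for illustration and is not part of any DeepSeek SDK.

```python
from datetime import datetime, timezone
from typing import Optional

# Retirement time and routing target as stated in this article (assumed accurate).
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)
LEGACY_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def resolve_model_id(model_id: str, now: Optional[datetime] = None) -> str:
    """Map a legacy model ID to the ID it routes to (hypothetical helper).

    Current IDs pass through unchanged. A legacy ID is rewritten before the
    retirement time and raises ValueError at or after it, so stale
    configuration fails loudly instead of silently.
    """
    now = now or datetime.now(timezone.utc)
    if model_id not in LEGACY_IDS:
        return model_id
    if now >= LEGACY_RETIREMENT:
        raise ValueError(
            f"{model_id} was retired on {LEGACY_RETIREMENT:%Y-%m-%d %H:%M} UTC"
        )
    return LEGACY_IDS[model_id]
```

Failing with an exception after the cutoff is a design choice for this sketch; logging a warning and substituting the new ID would be an equally reasonable policy.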

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. A minimal Python call looks like this:

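```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",
)

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this."}],
)

# The final answer is on the first choice.
print(resp.choices[0].message.content)
```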

Enable thinking with reasoning_effort="high" plus extra_body={"thinking": {"type": "enabled"}}; the response returns reasoning_content alongside the final content. The API is stateless, so conversation history must be resent on every call — a different behaviour from the web chat, which keeps session history. DeepSeek also exposes an Anthropic-compatible surface against the same base URL.
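Because the API keeps no session state, the client has to carry the history itself. Below is a minimal sketch of one way to do that, using the thinking parameters named above. The `Conversation` class is a hypothetical helper, and storing only the final answer (not `reasoning_content`) in the history is an assumption that should be checked against the official documentation.

```python
from typing import Any


class Conversation:
    """Keeps chat history client-side, since the API itself stores none."""

    def __init__(self, model: str = "deepseek-v4-flash", thinking: bool = False) -> None:
        self.model = model
        self.thinking = thinking
        self.messages: list[dict[str, str]] = []

    def request_kwargs(self, user_text: str) -> dict[str, Any]:
        """Record the user turn and return kwargs for chat.completions.create."""
        self.messages.append({"role": "user", "content": user_text})
        kwargs: dict[str, Any] = {
            "model": self.model,
            "messages": list(self.messages),  # copy, so later turns do not mutate it
        }
        if self.thinking:
            # Parameter names as given in the text above.
            kwargs["reasoning_effort"] = "high"
            kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
        return kwargs

    def record_reply(self, content: str) -> None:
        """Store the assistant's final answer so it is resent on the next call."""
        self.messages.append({"role": "assistant", "content": content})
```

In use, each turn would look roughly like `resp = client.chat.completions.create(**conv.request_kwargs(text))` followed by `conv.record_reply(resp.choices[0].message.content)`, so the full history travels with every request.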

From a safety standpoint, the API inherits the same data-residency facts as the app: requests are processed on infrastructure in China. For EU-regulated workloads, that is typically a blocker unless you have a GDPR-valid transfer mechanism, which DeepSeek does not currently document in detail. If you need the model family but not the hosted service, the open weights are the way out — see how to install DeepSeek locally or run via Ollama.
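To illustrate what moving off the hosted service looks like in code, here is a rough sketch that builds the same style of chat request against a local server instead. It assumes Ollama is running on its default port with its OpenAI-compatible API enabled, and that a DeepSeek model has been pulled under the example tag shown; both the tag and the helper name `build_local_request` are illustrative assumptions.

```python
import json
import urllib.request

# Assumptions: a local Ollama server on its default port, exposing the
# OpenAI-compatible API, with a DeepSeek model pulled under this example tag.
LOCAL_BASE_URL = "http://localhost:11434/v1"
LOCAL_MODEL = "deepseek-r1:8b"


def build_local_request(prompt: str) -> urllib.request.Request:
    """Build (but do not send) a chat request aimed at the local server."""
    payload = {
        "model": LOCAL_MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{LOCAL_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# To send it: urllib.request.urlopen(build_local_request("Summarise this."))
```

The request shape is unchanged from the hosted call; only the base URL and model name differ, which is why the prompt never leaves the machine.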

How DeepSeek safety compares to Western alternatives

This is worth being specific about, because “all AI has privacy risks” is true but lazy. There are real differences in the legal environment and the audit picture.

| Factor | DeepSeek (hosted) | OpenAI / Anthropic / Google (hosted) |
|---|---|---|
| Data residency | Mainland China | US / EU (region-selectable on enterprise tiers) |
| Government-access law | China’s 2017 National Intelligence Law applies | US legal process (CLOUD Act, subpoenas), challengeable in court |
| GDPR posture | Disputed; Italy blocked the app | EU reps appointed; DPIAs and SCCs published |
| Third-party audits (SOC 2, ISO 27001) | No public record | SOC 2 / ISO 27001 certifications published |
| Jailbreak resistance | Multiple documented bypasses still work | Patched most historical bypasses |
| Self-hosting option | Yes (MIT-licensed weights) | Partial (OpenAI/Anthropic frontier models are closed) |

The honest read: on audit posture and jailbreak resistance, the Western hosted services are currently stronger. On openness, DeepSeek is stronger — you can actually run the weights. A University of Alberta security assessment noted that there is no public record of DeepSeek undergoing regular third-party security audits or certifying compliance with international privacy standards such as GDPR or ISO/IEC 27001. That may change, but today it is a fair point.

For more detail on how the products compare outside of safety, see DeepSeek vs ChatGPT and DeepSeek vs Claude.

How to use DeepSeek more safely

If you have decided the trade-offs are acceptable for your situation, these are the practical steps that meaningfully reduce risk.

- Do not submit regulated data. No health records, no client names, no tax IDs, no unredacted contracts, no source code under NDA. This is the single highest-impact rule.

- Use a disposable account. Register with an email you would not mind getting leaked. Avoid Apple or Google sign-in — that pulls in linked-account data.

- Turn off model training where offered. EU users have an opt-out for training in the privacy policy; use it.

- Self-host for anything sensitive. Run DeepSeek V4-Flash or a distilled model on your own hardware. This eliminates cross-border data flow; it does not fix censorship built into the weights.

- Prefer the web version over the mobile app if you are using the hosted service. The web surface has fewer documented client-side vulnerabilities than the app.

- Verify the app before installing. Fake “DeepSeek” apps are common. See our note on how to verify the official DeepSeek app.

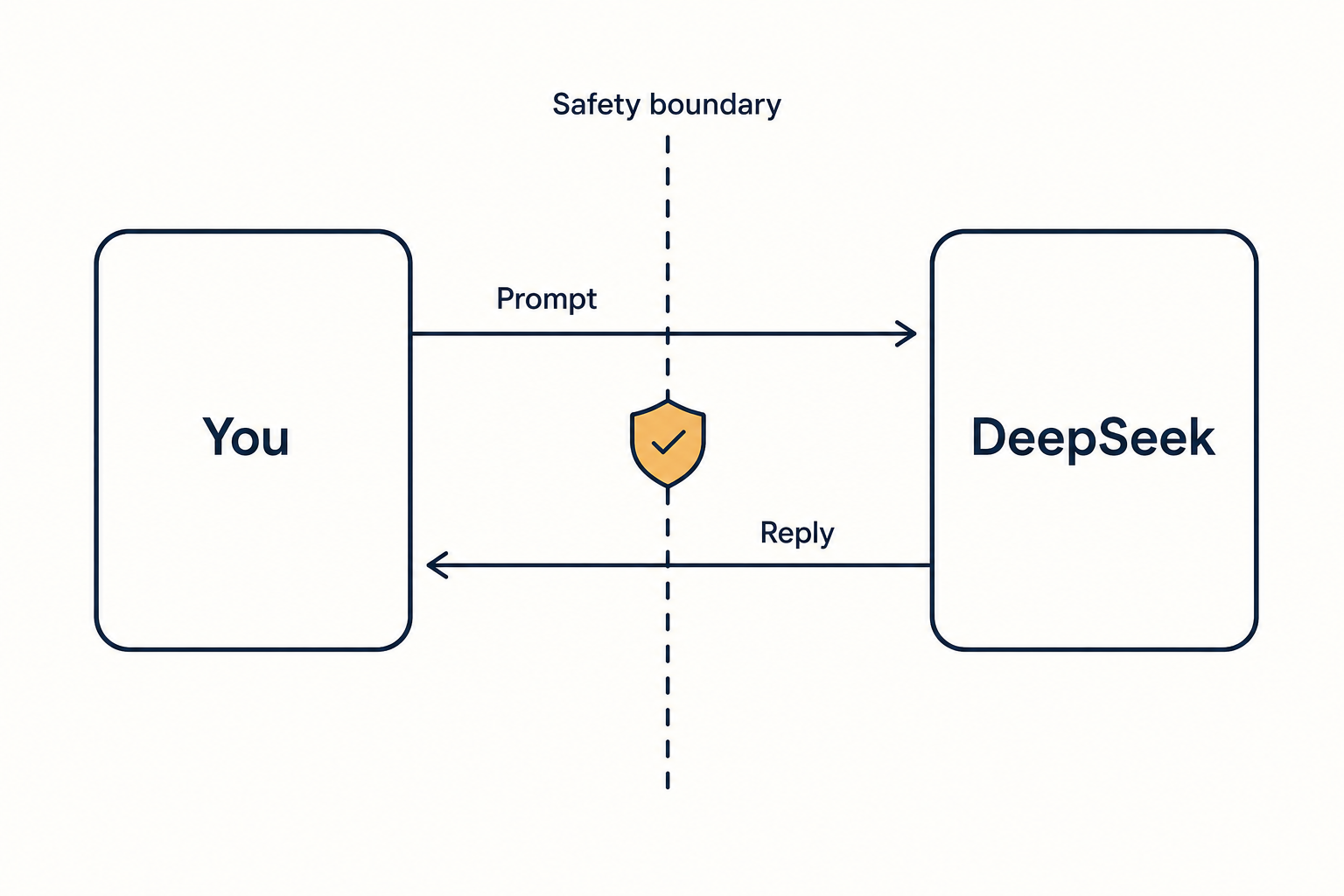

- For enterprises, route through a Western inference provider. Several providers host DeepSeek weights in US or EU regions; that trades one set of risks for a more familiar set.
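The first rule in the list above can be partly enforced in code by scrubbing obvious identifiers before a prompt leaves your machine. What follows is a minimal sketch with illustrative regular expressions; the patterns and the `redact` helper are assumptions for demonstration, will miss many kinds of personal data, and are no substitute for a dedicated PII tool or human review.

```python
import re

# Illustrative patterns only. API keys are checked first so that long digit
# runs inside a key are not mistaken for a phone number.
PATTERNS = {
    "API_KEY": re.compile(r"\bsk-[A-Za-z0-9]{16,}\b"),
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}


def redact(text: str) -> str:
    """Replace each pattern match with a bracketed placeholder."""
    for label, pattern in PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text


print(redact("Mail jane.doe@example.com or call +1 (555) 123-4567"))
```

The phone pattern is deliberately loose and will also catch some dates and other long number sequences; for this purpose, over-redaction is the safer failure mode.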

When you should not use DeepSeek at all

Some situations are clear-cut. Do not use the hosted DeepSeek service if:

- You work for a government that has restricted it (check your department’s policy — several have).

- Your data is covered by HIPAA, GDPR special categories, PCI-DSS, FERPA, or equivalent regulated regimes, and you have no signed DPA with an approved processor.

- You are handling client-confidential legal, financial, or M&A material.

- You are in Italy and need the mobile app — it is still not available through official stores.
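Teams that want these rules applied consistently could encode them as a simple pre-flight check. The sketch below is one possible shape, not a compliance tool: the surface and category labels are hypothetical names invented for this example, and it ignores the signed-DPA exception mentioned above for simplicity.

```python
# Surface and category names are hypothetical labels chosen for this sketch.
REGULATED = {"hipaa", "gdpr_special", "pci_dss", "ferpa", "client_confidential"}
HOSTED_SURFACES = {"web", "mobile_app", "hosted_api"}


def may_send(
    surface: str,
    data_categories: set[str],
    employer_restricts: bool = False,
) -> bool:
    """Return True if the hosted-service rules above do not rule out this use."""
    if surface not in HOSTED_SURFACES:
        # Self-hosted weights: the hosted-service rules do not apply here,
        # though internal policy may still need a separate check.
        return True
    if employer_restricts:
        return False
    # Any overlap with a regulated category blocks the request.
    return not (data_categories & REGULATED)
```

For example, `may_send("hosted_api", {"hipaa"})` is rejected, while the same data on a self-hosted deployment passes this particular check.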

For those cases, alternatives to DeepSeek or self-hosting are the honest options.

Bottom line on DeepSeek safety

DeepSeek is a capable open-weight model family with a genuine and well-documented privacy problem when used as a hosted service, and a separate, technical safety problem — jailbreak resistance — that follows the weights themselves. The open-source release is a real contribution; the hosted service is not appropriate for regulated workloads as of April 2026. If you want the model without the hosting risk, run it yourself, and treat censorship and jailbreakability as real engineering constraints rather than marketing footnotes.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek safe to use for personal, non-sensitive questions?

For casual, non-sensitive use — brainstorming, general knowledge, code snippets that are not proprietary — the hosted DeepSeek service is broadly comparable to any free foreign chatbot, with the added caveat that data is stored in China. Do not type anything you would not be comfortable seeing on a public forum. For more context on the free tier, see our write-up on whether DeepSeek is free.

Does DeepSeek really store my data in China?

Yes. DeepSeek’s own privacy policy, last updated February 10, 2026, states that personal data is collected, processed and stored in the People’s Republic of China. That is not speculation — it is in the policy and was cited by Italy’s data protection authority in the 2025 ban decision. Our DeepSeek privacy guide walks through what this means in practice.

Can I use DeepSeek at work?

Check your employer’s AI policy first. Many large companies, universities, and public sector bodies have added DeepSeek to their restricted-tools list alongside other foreign AI services. If your workplace allows it, treat it like any untrusted external service — no client data, no unreleased product information, no personnel matters. See our DeepSeek limitations guide for other caveats.

How does DeepSeek compare to ChatGPT on safety?

On audit posture, jailbreak resistance, and Western legal recourse, ChatGPT is currently stronger. On openness — the ability to download weights and run them yourself under MIT — DeepSeek is stronger. Neither is “safe” in an absolute sense; they fail in different ways. Our DeepSeek vs ChatGPT comparison covers the wider feature picture.

Why did Italy ban DeepSeek?

On January 30, 2025, Italy’s Garante ordered an immediate, definitive limitation on processing Italian users’ personal data by DeepSeek after finding DeepSeek’s responses to a GDPR questionnaire insufficient. The core issues were storage of data in China without adequate GDPR safeguards and an initial claim by DeepSeek that it was not subject to EU law. For the latest regulatory picture, see DeepSeek US restrictions and country-level availability in our availability by country guide.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Official: DeepSeek official API documentation. Canonical reference for model IDs and endpoints. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.