The Best DeepSeek Alternatives for Writing in 2026

You like DeepSeek’s price tag, but the prose comes back flat. Or your editor wants a different voice. Or you simply want to A/B-test against another model before committing a publishing pipeline to one provider. Whatever the reason, picking the right DeepSeek alternatives for writing matters more than chasing benchmark scores — writing quality is subjective, and the model that nails your tone is the one worth paying for.

This guide compares seven options I’ve used in production over the last six months: Claude Sonnet 4.5, GPT-5.4, Gemini 3.1 Pro, Mistral Large, Llama 3.3 70B, Kimi K2.6, and DeepSeek V4 itself as the baseline. You’ll get pricing, head-to-head verdicts, and clear guidance on which one to pick for which writing job.

Why writers look past DeepSeek in the first place

DeepSeek V4 is genuinely strong for cost-sensitive workflows. DeepSeek-V4-Pro runs 1.6T total / 49B active params with performance rivaling top closed-source models, while DeepSeek-V4-Flash at 284B total / 13B active is positioned as the fast, efficient, economical choice. Both ship under MIT, both expose a 1M-token context, and the API undercuts most Western frontier providers.

But writing is not coding. Code has objective standards — does it run? Writing is different. Across my last 60 long-form drafts (newsletters, technical explainers, brand voice work), three patterns kept pushing me toward alternatives:

- Voice mimicry — DeepSeek’s default register is competent but neutral. Claude and GPT-5 hold a specific voice across longer drafts more reliably.

- Editorial judgment — alternatives like Claude Sonnet 4.5 will push back on weak phrasing rather than rewriting around it.

- Tooling depth — for browser-native drafting, ChatGPT’s and Claude’s app surfaces are simply more mature than DeepSeek’s chat UI.

If those gaps matter to your workflow, here are the alternatives worth testing. For a broader category overview, see DeepSeek alternatives.

The seven alternatives at a glance

Pricing in the table below is per million tokens, current as of April 2026. Verify on each provider’s pricing page before committing — these change.

| Model | Best for | Input $/M | Output $/M | Context | License |
|---|---|---|---|---|---|
| DeepSeek V4-Flash (baseline) | High-volume drafting | $0.14 | $0.28 | 1M | MIT |
| Claude Sonnet 4.5 | Editing, voice mimicry | $3.00 | $15.00 | 200K (1M beta) | Proprietary |
| GPT-5.4 | Style transfer, citations | $1.25 | $10.00 | ~1M | Proprietary |
| Gemini 3.1 Pro | Research-grounded writing | Varies | Varies | 1M+ | Proprietary |
| Mistral Large | EU-hosted writing | Varies | Varies | 128K | Apache 2.0 (some) |
| Llama 3.3 70B | Self-hosted writing | Self-host | Self-host | 128K | Llama Community |
| Kimi K2.6 | Chinese-language writing | Varies | Varies | 200K+ | Modified MIT |

Sources: Anthropic lists Sonnet 4.5 at $3 per million input tokens and $15 per million output tokens for prompts up to 200K, with $6/$22.50 above that. GPT-5 sits at $1.25/$10 per million tokens. DeepSeek V4 rates are from DeepSeek’s pricing page: $0.14 input and $0.28 output per million for Flash; $0.435 and $0.87 for Pro during the 75% promo through 2026-05-31 (list $1.74 and $3.48).
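The two-tier Sonnet 4.5 pricing quoted above is easy to misjudge when prompts get long, so here is a minimal sketch of the arithmetic. The function name is hypothetical, and it assumes that once a prompt exceeds 200K input tokens the whole request is billed at the higher tier; check Anthropic's pricing page before relying on that.

```python
LONG_PROMPT_THRESHOLD = 200_000  # input tokens; the tier boundary quoted above


def sonnet_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one Sonnet 4.5 request at the quoted rates.

    Assumption: a prompt above the threshold moves the entire request
    (input and output) to the higher per-million rates.
    """
    if input_tokens > LONG_PROMPT_THRESHOLD:
        input_rate, output_rate = 6.00, 22.50   # USD per million tokens
    else:
        input_rate, output_rate = 3.00, 15.00   # USD per million tokens
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000


if __name__ == "__main__":
    # A typical article call versus a long-context call with the same output.
    print(f"short prompt: ${sonnet_request_cost(2_200, 1_500):.4f}")
    print(f"long prompt:  ${sonnet_request_cost(300_000, 1_500):.4f}")
```

The point of the sketch is that the output rate rises along with the input rate, so a long style guide plus source pile makes every generated word more expensive, not just the prompt.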

1. Claude Sonnet 4.5 — the editor’s choice

If you’re going to replace DeepSeek with one model for writing, this is the one to test first. Anthropic released it in late September 2025 and it has held its position as the default writing model for most of the editors I work with.

Strengths. Anthropic positions Sonnet 4.5 as their most intelligent model for real-world work, available on Claude.ai for documents, data analysis, and complex research. In side-by-side fiction tests, when asked to write dialogue-only conversation between three characters, Sonnet 4.5 produced clear scenes where each speaker felt distinct through speech patterns alone, with minimal confusion about who was talking.

Weaknesses. Wordiness is the biggest issue — when rewriting a scene, output often comes back at double the word count, requiring edits for conciseness. Style mimicry is also imperfect for distinctive voices: GPT-5 was the clear winner in pure style mimicry, nailing fragmented rhythm and dry humour better than Sonnet.

Verdict. Best for editing, document polish, and any writing that has to read as professional prose. Worst for matching a strong, idiosyncratic personal style. See DeepSeek vs Claude for a deeper head-to-head.

2. GPT-5.4 — the style-transfer specialist

GPT-5.4 (and the newer GPT-5.5 released April 23, 2026) replaced the old “use ChatGPT for drafts, Claude for edits” workflow that many writers ran in 2025.

Strengths. Style mimicry is the standout. In a 400-word continuation test against a fragmented, urgent prose excerpt, GPT-5 nailed the fragmented rhythm and dry humour perfectly. OpenAI claims hallucinations are down roughly 18 to 33 percent compared to GPT-5.2, which matters when you’re drafting anything fact-bearing. In an internal test of 250 complex tasks, GPT-5.4 achieved the same accuracy while using 47 percent fewer tokens by better integrating tool calls.

Weaknesses. The model picker labels move around — GPT-5.4 is available via the ChatGPT interface as the new default on paid tiers and via the API, but specific variant names (Instant, Thinking, Pro) shift between plans. Free-tier users hit dynamic message caps, after which heavier sessions fall back to a smaller model.

Verdict. The pick if you need to mimic a specific writer’s voice or generate research-grounded prose with inline citations. See DeepSeek vs ChatGPT for the broader comparison.

3. Gemini 3.1 Pro — research-heavy long-form

Google’s Gemini 3.1 Pro is where I send anything that needs to digest source material before drafting. DeepSeek itself acknowledges that V4-Pro leads all current open models on world knowledge, trailing only Gemini-3.1-Pro.

Strengths. Long-context grounding. The model genuinely uses a million-token window for synthesis rather than treating context as a dump. Useful for writing literature reviews, long memos that summarise dozens of inputs, or branded content that has to absorb a style guide plus product docs.

Weaknesses. The default voice is the most “AI-sounding” of the three frontier options. Without aggressive prompting toward a specific tone, drafts read like a competent intern.

Verdict. Pick it for research-led writing where the source pile is large. See DeepSeek vs Gemini for an extended look.

4. Mistral Large — the EU-hosted option

If your team needs a writing model with European data residency, Mistral remains the cleanest answer. The Paris-based lab keeps inference in EU regions and ships open-weight variants you can self-host.

Strengths. French and other Romance-language writing is noticeably more idiomatic than the US-trained frontier models. Decent at structured business prose. Apache 2.0 licensing on several releases means you can deploy weights commercially without licence-fee anxiety.

Weaknesses. English creative writing trails the frontier by a clear margin. Reasoning depth on multi-step prompts is also weaker than V4-Pro or Sonnet 4.5.

Verdict. Use for compliance-driven workflows in the EU, or for French/German/Spanish content where its training shows. See DeepSeek vs Mistral for the technical breakdown.

5. Llama 3.3 70B — the self-hosted writing model

If you want to keep manuscripts entirely on your own infrastructure — for legal, contract, or NDA reasons — Llama 3.3 70B is the realistic ceiling for self-hosted writing on a single high-end node.

Strengths. Runs on a 2× H100 box at sensible token rates. Decent stylistic flexibility once fine-tuned on your house style. Free to use commercially within Meta’s licence terms.

Weaknesses. Out of the box, prose is duller than any hosted frontier model. Fine-tuning is essentially mandatory for serious editorial use, which means data, infrastructure, and time.

Verdict. Pick it when “your text leaves the building” is a hard no. See DeepSeek vs Llama for deployment specifics.

6. Kimi K2.6 — strong Chinese-language writing

Moonshot AI’s Kimi handles Chinese-language writing better than any Western model I’ve tested, and its English capability has caught up enough to use for bilingual content.

Strengths. Native idiomatic Chinese. Long context handling for legal and academic Chinese-language documents.

Weaknesses. Smaller than V4-Pro by total parameters — Kimi K2.6 is 1.1T compared to V4-Pro’s 1.6T. English creative writing is competent but unremarkable.

Verdict. The default for any writing pipeline whose primary output language is Chinese. See DeepSeek vs Kimi for benchmarks.

7. DeepSeek V4 itself — when to stay

For completeness: don’t switch away from DeepSeek if your workflow is high-volume draft generation where unit economics matter more than the last 5 percent of prose quality. The DeepSeek-V4 Preview is live and open-sourced, and it brings a cost-effective 1M-token context. Both V4 tiers, `deepseek-v4-pro` and `deepseek-v4-flash`, are open-weight MoE models under MIT, are served from `https://api.deepseek.com`, and accept either the OpenAI or Anthropic SDK against that base URL.

Thinking mode is a request parameter on either V4 model, not a separate model ID. The response returns `reasoning_content` alongside the final `content` when you enable it. Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint. A minimal Python call:

```python
from openai import OpenAI

# Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[
        {"role": "system", "content": "You are a senior copy editor. Tighten prose without changing voice."},
        {"role": "user", "content": "Edit this draft: ..."},
    ],
    temperature=1.5,  # creative writing
    max_tokens=2000,
)

# The edited draft is in the first choice's message.
print(resp.choices[0].message.content)
```

Note the temperature: DeepSeek’s official guidance recommends 1.5 for creative writing and poetry, 1.3 for general conversation, 1.0 for analysis, and 0.0 for code or maths. The API is stateless: you must resend the full conversation history with each request, unlike the web and app clients, which maintain session history. The legacy `deepseek-chat` and `deepseek-reasoner` IDs currently route to `deepseek-v4-flash` in non-thinking and thinking mode respectively, and will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migrating is a one-line `model=` swap; the `base_url` does not change.
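Because the API is stateless, a multi-round editing session has to carry its own history. The sketch below is one way to do that, not an official pattern: `EditingSession`, the task labels, and the `send` callable are all hypothetical names, and `send` stands in for whatever wrapper you put around `client.chat.completions.create`.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]

# Temperatures quoted in the guidance above; the task labels are made up here.
TEMPERATURE_BY_TASK = {
    "creative": 1.5,
    "conversation": 1.3,
    "analysis": 1.0,
    "code": 0.0,
}


class EditingSession:
    """Keeps the running conversation so every request carries full history."""

    def __init__(self, system_prompt: str, task: str = "creative") -> None:
        self.messages: List[Message] = [
            {"role": "system", "content": system_prompt}
        ]
        self.temperature = TEMPERATURE_BY_TASK[task]

    def ask(
        self,
        user_text: str,
        send: Callable[[List[Message], float], str],
    ) -> str:
        # Append the new turn, then send the WHOLE history, not just this turn.
        self.messages.append({"role": "user", "content": user_text})
        reply = send(list(self.messages), self.temperature)
        # Store the reply so the next request includes it as context.
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# A real `send` might look like this (assumes the `client` from the call above):
#
# def send(messages, temperature):
#     resp = client.chat.completions.create(
#         model="deepseek-v4-flash", messages=messages, temperature=temperature
#     )
#     return resp.choices[0].message.content
```

Passing `send` in as a parameter keeps the history logic testable without network access, and it means the same session object works if you swap the provider behind it.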

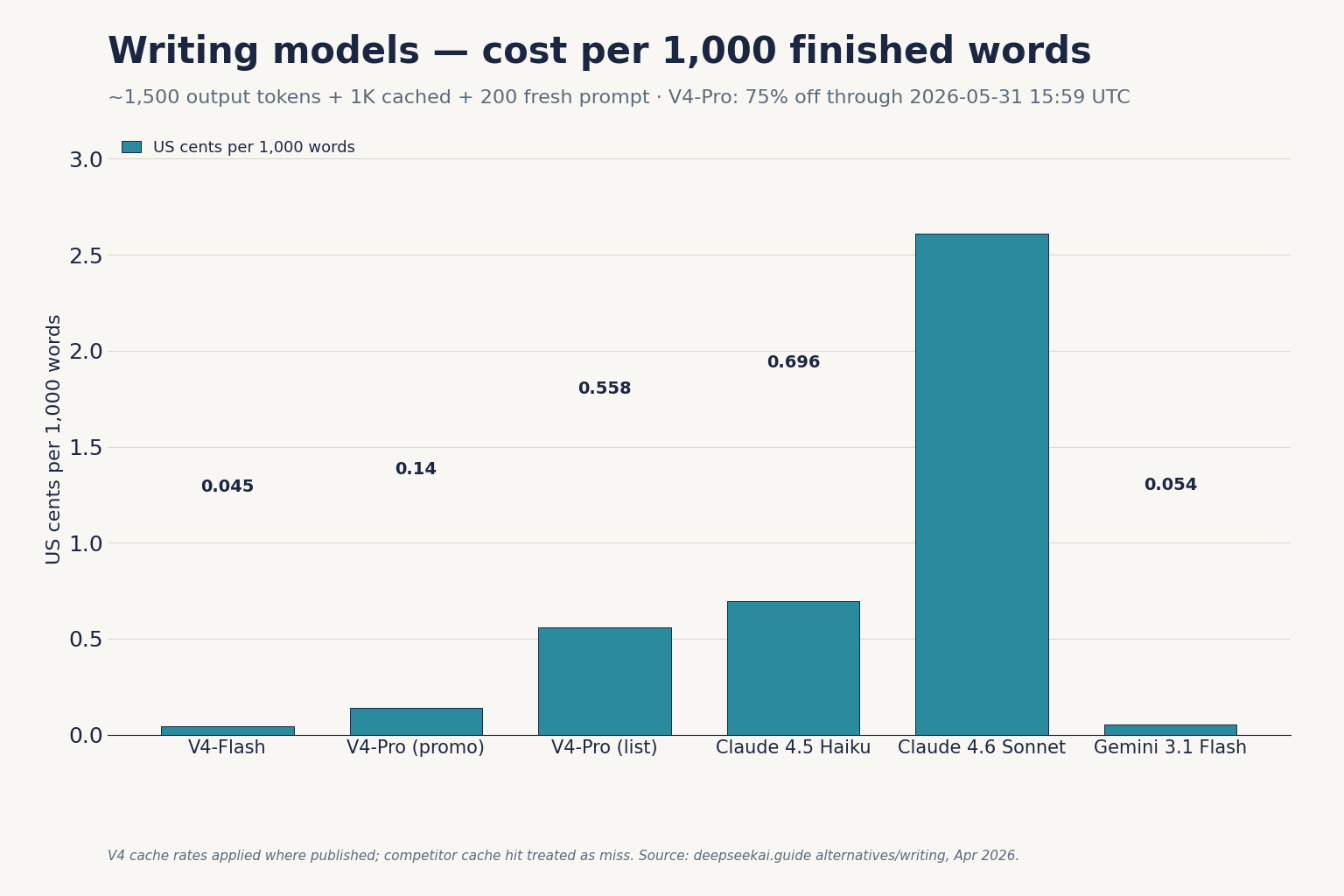

Cost comparison: a real writing workload

Consider a content team drafting 1,000 long articles a month. Each call uses a 2,000-token style-guide system prompt (cached across calls), a 200-token brief (uncached), and a 1,500-token draft response.

DeepSeek V4-Flash at the rates above:

- Cached input: 2,000 × 1,000 = 2,000,000 tokens × $0.028/M = $0.056

- Uncached input: 200 × 1,000 = 200,000 tokens × $0.14/M = $0.028

- Output: 1,500 × 1,000 = 1,500,000 tokens × $0.28/M = $0.42

- Total: $0.50

Claude Sonnet 4.5 at $3 input / $15 output (ignoring cache for a like-for-like floor):

- Input: 2,200,000 tokens × $3/M = $6.60

- Output: 1,500,000 tokens × $15/M = $22.50

- Total: $29.10

That’s roughly 58× more expensive than V4-Flash for the same workload. The Sonnet output may well be better — but it has to be 58× better to break even. For high-volume drafting, that maths usually loses. For the 50 articles a month where editorial quality matters most, it usually wins.
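To rerun this comparison with your own volumes, the arithmetic can be sketched as below. The function and constant names are hypothetical. The cache-hit rate of $0.028 per million tokens is an assumption: it is the value that reproduces the $0.50 V4-Flash total, so confirm it on DeepSeek's pricing page. The 950/50 split at the end is likewise only an illustration of routing the highest-stakes articles to Sonnet.

```python
ARTICLES_PER_MONTH = 1_000
STYLE_GUIDE_TOKENS = 2_000   # system prompt, cached across calls
BRIEF_TOKENS = 200           # per-article brief, never cached
DRAFT_TOKENS = 1_500         # generated output


def per_article_cost(cached_in_rate: float, in_rate: float, out_rate: float) -> float:
    """USD cost of one article; all rates are USD per million tokens."""
    return (
        STYLE_GUIDE_TOKENS * cached_in_rate
        + BRIEF_TOKENS * in_rate
        + DRAFT_TOKENS * out_rate
    ) / 1_000_000


# V4-Flash: the 0.028 cache-hit rate is an assumption (see the note above).
flash = per_article_cost(cached_in_rate=0.028, in_rate=0.14, out_rate=0.28)
# Sonnet 4.5 with caching ignored, so all input is billed at $3/M.
sonnet = per_article_cost(cached_in_rate=3.00, in_rate=3.00, out_rate=15.00)

flash_monthly = flash * ARTICLES_PER_MONTH
sonnet_monthly = sonnet * ARTICLES_PER_MONTH
ratio = sonnet_monthly / flash_monthly

# Hypothetical split: 950 articles on V4-Flash, 50 on Sonnet 4.5.
blended_monthly = 950 * flash + 50 * sonnet

if __name__ == "__main__":
    print(f"V4-Flash:   ${flash_monthly:.2f}/month")
    print(f"Sonnet 4.5: ${sonnet_monthly:.2f}/month ({ratio:.0f}x)")
    print(f"Blended:    ${blended_monthly:.2f}/month")
```

At these volumes every option is cheap in absolute terms. The ratio only starts to matter when the article count or the draft length grows by a couple of orders of magnitude.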

How to choose

- Default to Claude Sonnet 4.5 if writing quality is the top priority and per-call cost is secondary.

- Pick GPT-5.4 if you need to mimic a specific voice or produce research-grounded prose with citations.

- Pick Gemini 3.1 Pro for long, source-heavy synthesis writing.

- Pick Mistral or Llama if data residency or self-hosting is non-negotiable.

- Pick Kimi for Chinese-language work.

- Stay on DeepSeek V4 for high-volume drafting where unit economics dominate.
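If you want these rules inside a routing layer, they can be sketched as a small function. The function name, the flag names, and the order in which the checks run are my assumptions, not part of the guidance above: hard constraints (self-hosting, residency, output language) are checked first, then cost, then quality preferences.

```python
def pick_model(
    *,
    must_self_host: bool = False,
    eu_residency: bool = False,
    chinese_output: bool = False,
    high_volume: bool = False,
    large_source_pile: bool = False,
    voice_mimicry: bool = False,
) -> str:
    """Return a model name from the list above for a given set of needs."""
    # Hard constraints first: these rule out most hosted options outright.
    if must_self_host:
        return "Llama 3.3 70B"
    if eu_residency:
        return "Mistral Large"
    if chinese_output:
        return "Kimi K2.6"
    # Cost pressure next: unit economics dominate high-volume drafting.
    if high_volume:
        return "DeepSeek V4"
    # Quality preferences last.
    if large_source_pile:
        return "Gemini 3.1 Pro"
    if voice_mimicry:
        return "GPT-5.4"
    # Default when writing quality is the top priority.
    return "Claude Sonnet 4.5"


if __name__ == "__main__":
    print(pick_model(voice_mimicry=True))
    print(pick_model(high_volume=True, voice_mimicry=True))
```

A different priority order is just as defensible. A team that cares more about voice than cost would move the `voice_mimicry` check above `high_volume`.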

For role-specific guidance on how to structure your prompts once you’ve picked, see DeepSeek for writing and the DeepSeek prompt engineering guide. For an honest scorecard of DeepSeek’s own writing capability, the DeepSeek performance review covers ground I’ve left out here.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What is the best DeepSeek alternative for creative writing?

Claude Sonnet 4.5 is the default recommendation for most writers. It produces cleaner prose, follows style instructions reliably, and handles dialogue well. GPT-5.4 wins on pure style mimicry of a distinctive voice. Both are far more expensive than DeepSeek V4-Flash, so the right pick depends on whether quality or cost dominates your use case. See the DeepSeek vs Claude comparison.

Is DeepSeek good for writing at all?

DeepSeek V4 is competent for high-volume drafting where editorial polish is added later by humans. Its prose tends toward neutral, generic register without aggressive prompting. For workflows that need a specific voice or strong editorial judgment, alternatives like Claude or GPT-5 outperform it. See DeepSeek for writing for prompt patterns that improve V4 output.

How does DeepSeek pricing compare to ChatGPT and Claude for writing?

DeepSeek V4-Flash lists $0.14 input (cache miss) and $0.28 output per million tokens. Claude Sonnet 4.5 lists $3 input and $15 output. GPT-5 sits at $1.25 input and $10 output. For a typical 1,000-article-per-month workload, V4-Flash runs roughly 58× cheaper than Sonnet 4.5. See the DeepSeek API pricing page for current rates.

Can I run an open-source DeepSeek alternative locally for writing?

Yes. Llama 3.3 70B and several Mistral releases ship open weights you can self-host. DeepSeek V4 itself is also open-weight under MIT, though Pro at 865GB and Flash at 160GB on Hugging Face require serious hardware. For most writers wanting a private setup, a Llama-class model on a single H100 node is the realistic option. See open-source AI like DeepSeek.

Why would a writer choose Gemini over DeepSeek?

Gemini 3.1 Pro is stronger on world knowledge and long-context synthesis. DeepSeek itself acknowledges that V4-Pro trails only Gemini-3.1-Pro on world-knowledge benchmarks. For research-led writing — literature reviews, briefings that synthesise many sources, content that has to absorb a long style guide alongside source documents — Gemini’s long-context handling is a meaningful advantage. See DeepSeek vs Gemini.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.