DeepSeek vs GLM: Which Chinese Open Model Wins in 2026?

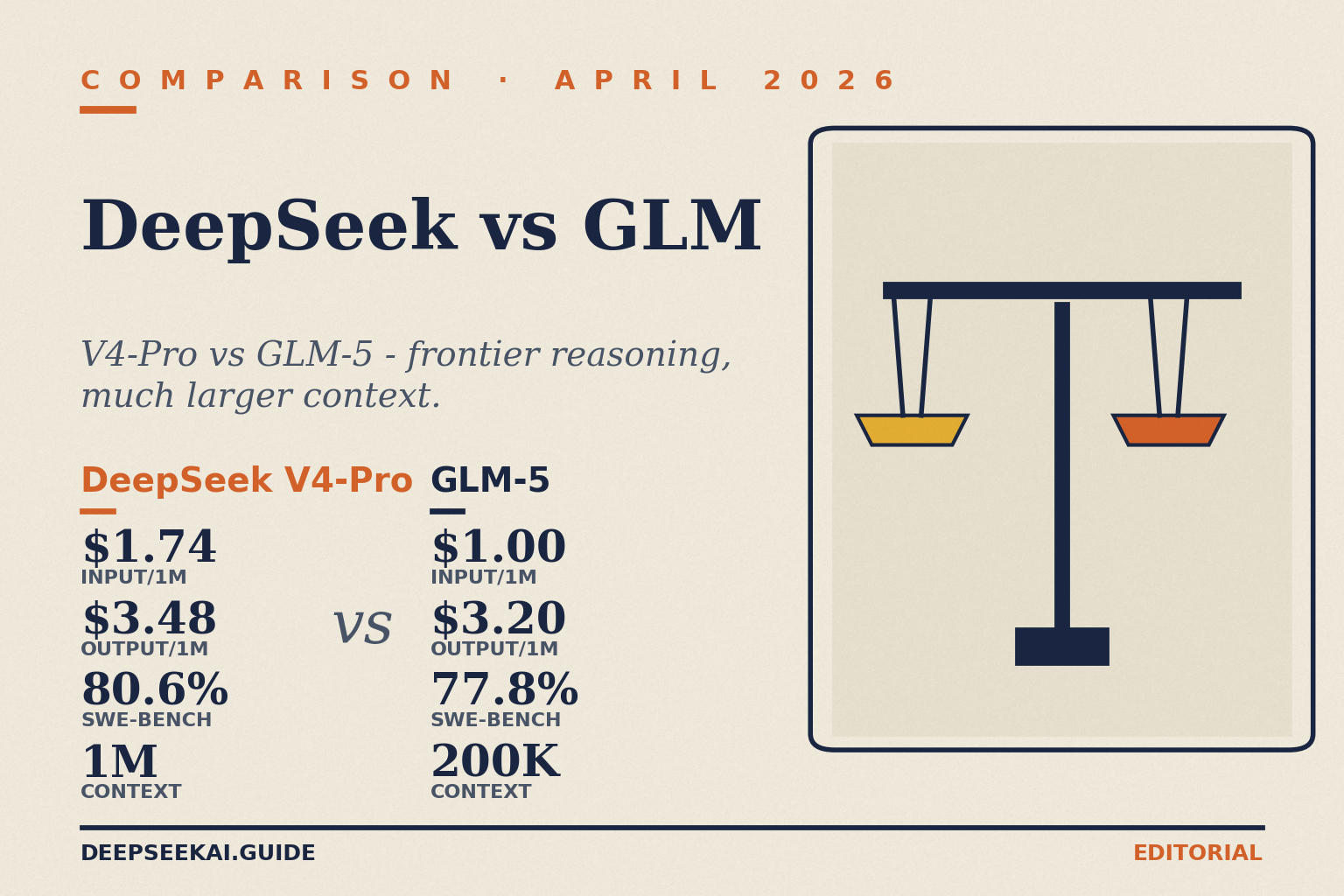

If you are choosing between two Chinese open-weight model families for a production workload in 2026, the DeepSeek vs GLM decision usually comes down to three numbers: price per million tokens, SWE-Bench Verified score, and how much agentic work you actually need the model to do. Both labs ship MIT-licensed weights. Both undercut Claude and GPT-5 on cost. But they make different trade-offs: DeepSeek V4 leans on extreme efficiency and a 1M-token context window, while Zhipu’s GLM-5 family pushes harder on agentic engineering and Claude-style coding behaviour. This guide gives you the verdict up front, the head-to-head detail underneath, and a worked cost example you can plug into your own workload.

Verdict: who wins the DeepSeek vs GLM matchup

For the majority of teams, DeepSeek V4-Flash is the better default. It is cheaper than every paid GLM tier, ships a 1M-token context window, and the legacy deepseek-chat / deepseek-reasoner IDs route to it until they retire on July 24, 2026 at 15:59 UTC. Pick GLM-5 instead if you need a Claude-style agentic coding partner with strong long-horizon tool use and you can absorb the price premium — it scores 77.8% on SWE-bench Verified, 92.7% on AIME 2026, and 86.0% on GPQA-Diamond, and Z.ai pitches it explicitly as a Claude Opus alternative.

Pick DeepSeek V4-Pro only when you need frontier-tier reasoning and a much larger context window than GLM-5 offers. On output tokens it undercuts GLM-5 at the promo rate ($0.87 vs $3.20 per 1M) but is slightly more expensive at list ($3.48). Avoid GLM-4.5 / 4.6 for new builds; they are previous-generation. Avoid the legacy DeepSeek model IDs beyond the retirement date.

At-a-glance comparison table

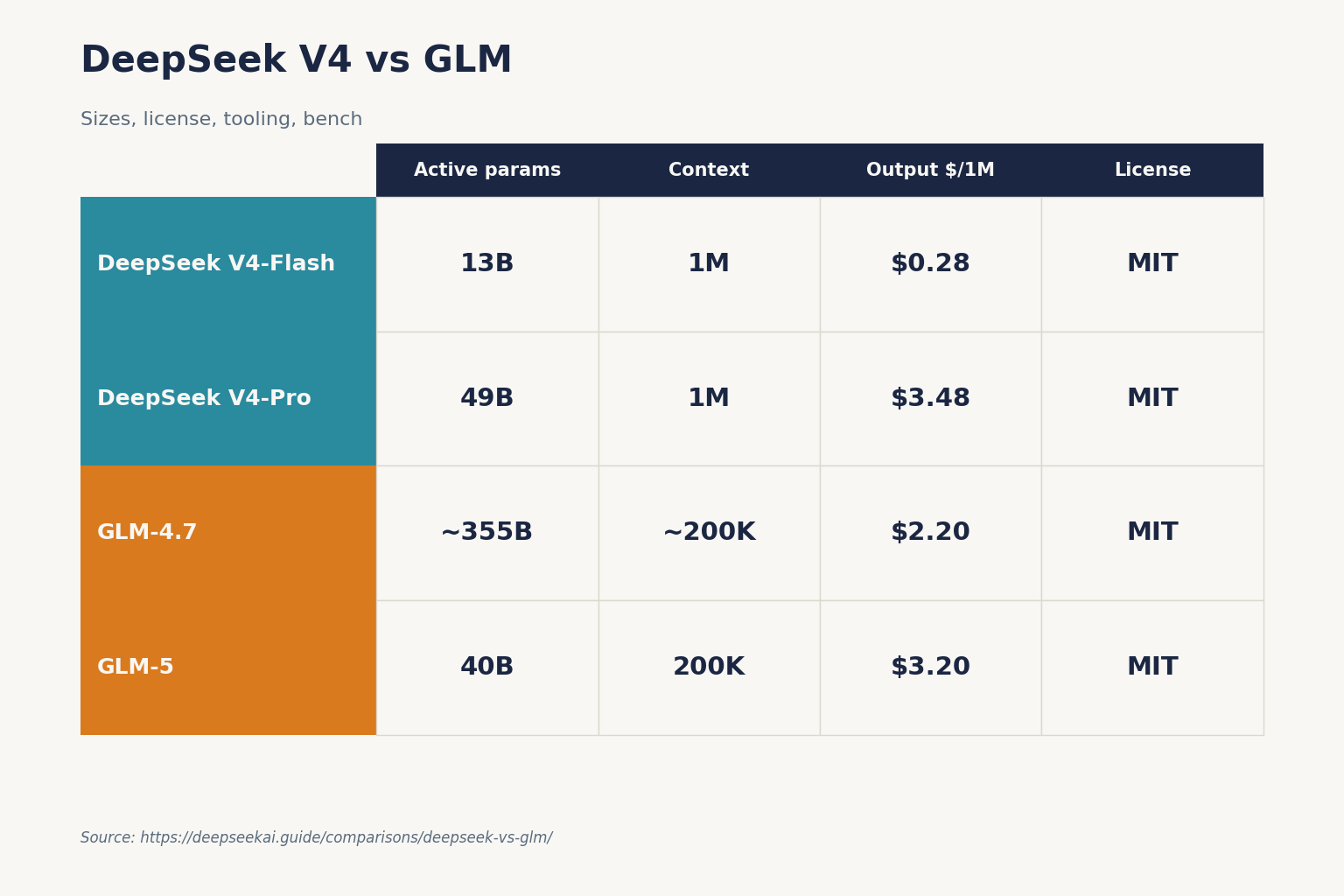

| Feature | DeepSeek V4-Flash | DeepSeek V4-Pro | GLM-4.7 | GLM-5 |

|---|---|---|---|---|

| Released | 2026-04-24 | 2026-04-24 | December 2025 | 2026-02-11 |

| Total params (MoE) | 284B / 13B active | 1.6T / 49B active | ~355B | 744B / 40B active |

| Context window | 1,000,000 tokens | 1,000,000 tokens | ~200K | 200K tokens |

| Max output | 384K tokens | 384K tokens | ~131K | ~131K |

| Input $ /1M (cache miss) | $0.14 | $0.435 promo / $1.74 list | $0.60 | $1.00 |

| Output $ /1M | $0.28 | $0.87 promo / $3.48 list | $2.20 | $3.20 |

| Weights license | MIT | MIT | MIT | MIT |

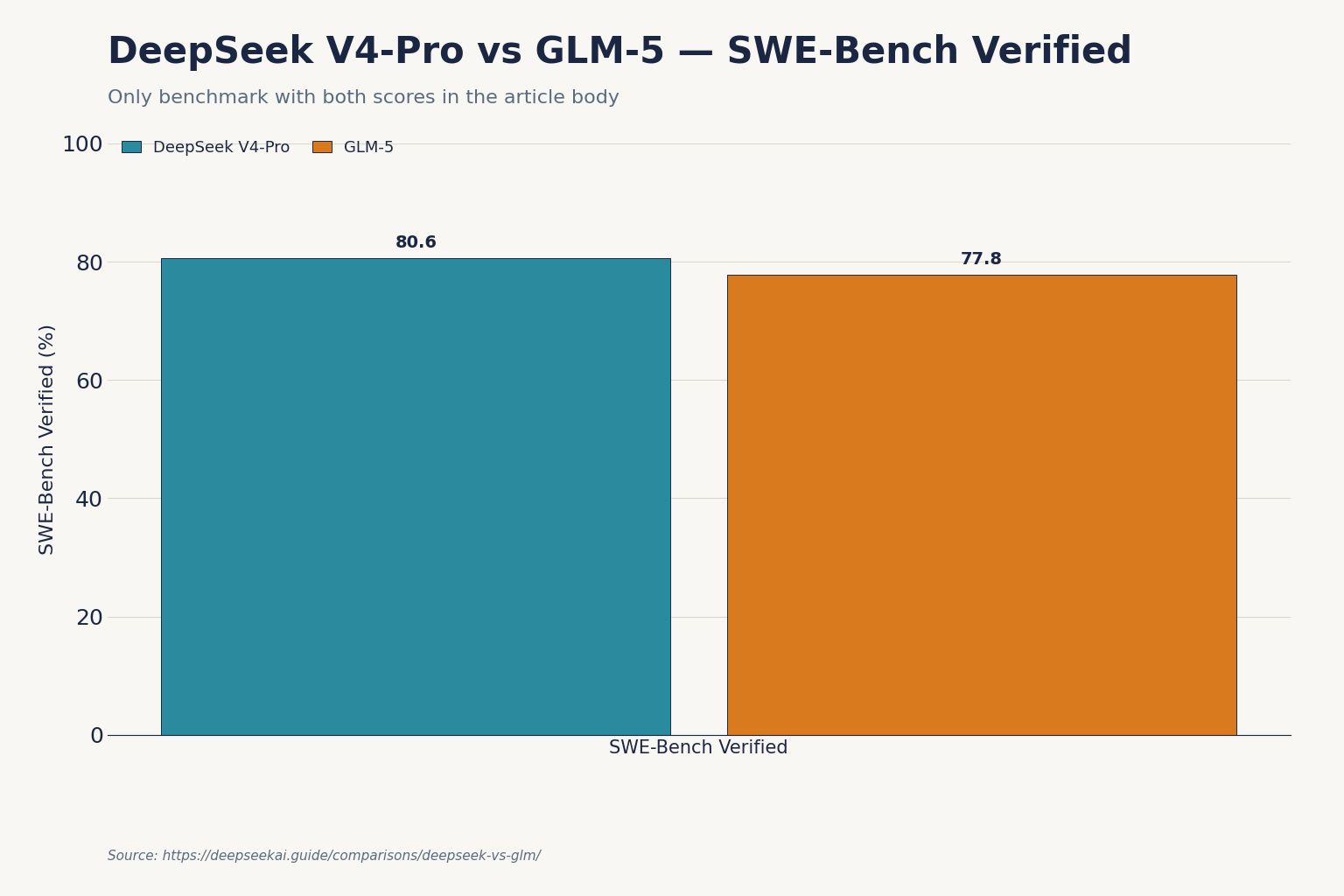

| SWE-Bench Verified | see V4 report | 80.6% | 73.8% | 77.8% |

Sources: DeepSeek V4 announcement (April 24, 2026); GLM-4.7 scores 73.8% on SWE-bench and 85.2% on HumanEval; GLM-5 API priced at $1.00 per million input tokens and $3.20 per million output tokens; GLM-4 family ranges from free Flash variants to $2.20 per million output tokens for GLM-4.7.

Coding: the GLM home turf, narrowly

Coding is where this comparison gets interesting. Z.ai has invested heavily in agentic engineering: GLM-5 is designed for complex system engineering and long-range agent tasks, and Z.ai reports strong deep-reasoning performance in backend architecture, complex algorithms, and stubborn bug fixing. It also integrates DeepSeek Sparse Attention for higher token efficiency while preserving long-context quality. The borrowing of DeepSeek’s own attention mechanism is telling: it suggests DeepSeek still leads on architecture even as GLM closes the application gap.

For DeepSeek, the V4-Pro number to know is its SWE-Bench Verified score of 80.6% from the April 24, 2026 announcement, which edges out GLM-5’s 77.8%. But raw scores are only half the story. In practice:

- Greenfield code generation: both are excellent. V4-Flash at $0.28 per million output tokens is roughly 11× cheaper than GLM-5 ($3.20) on output, which matters when an agent loop emits hundreds of thousands of tokens.

- Long-horizon agent runs: GLM-5.1 can work independently for up to 8 hours in a single run, enabling a full loop from planning and execution to iterative refinement and final delivery, with comprehensive capability alignment with Claude Opus 4.6. This is GLM’s strongest pitch.

- Massive codebases: DeepSeek V4’s 1M-token default context dwarfs GLM-5’s 200K. If you need to feed an entire monorepo into a single prompt, DeepSeek wins by default.
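To make the context-window point concrete, here is a minimal sketch that checks which model windows could hold a prompt of a given size. The window sizes come from the comparison table; the 4-characters-per-token heuristic, the reserved-output default, and the dictionary keys are assumptions for illustration, not tokenizer-accurate figures or confirmed model IDs.

```python
# Context windows quoted in this article, in tokens.
# The keys are labels for this sketch; "glm-5" is an assumed identifier.
CONTEXT_WINDOWS = {
    "deepseek-v4-flash": 1_000_000,
    "deepseek-v4-pro": 1_000_000,
    "glm-5": 200_000,
}

# ASSUMPTION: ~4 characters per token is a rough heuristic for English text
# and code. Real tokenizers vary, so treat the result as an estimate only.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate from character count (heuristic, not a tokenizer)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def models_that_fit(prompt_tokens: int, reserved_output: int = 8_000) -> list:
    """Return the models whose window holds the prompt plus reserved output."""
    needed = prompt_tokens + reserved_output
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]
```

For example, `models_that_fit(600_000)` leaves only the two DeepSeek entries, which is the monorepo case described above. For real sizing, count tokens with the provider's own tokenizer.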

For day-to-day coding tasks, our DeepSeek for coding guide walks through prompt patterns that work well on V4-Flash, and our DeepSeek Coder vs Copilot piece covers the IDE-integration angle separately.

Reasoning: V4-Pro thinking mode vs GLM-5

Both families ship dedicated reasoning behaviour. On DeepSeek V4, thinking mode is a request parameter — not a separate model ID. You set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} on either V4-Pro or V4-Flash and the API returns reasoning_content alongside the final content. For maximum effort, use reasoning_effort="max", which requires max_model_len >= 393216 (384K tokens) to avoid truncation.
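A minimal sketch of that request shape, assuming the OpenAI Python SDK is the client. The helper names `build_thinking_request` and `split_reply` are hypothetical; the parameter names and the `reasoning_content` field are the ones described above, so verify them against the current DeepSeek docs before relying on them.

```python
def build_thinking_request(prompt, effort="high", model="deepseek-v4-pro"):
    """Build keyword arguments for a chat completion with thinking mode enabled.

    `effort` is passed through unchanged; this article mentions "high" and "max".
    """
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "reasoning_effort": effort,
        # extra_body carries fields the SDK does not model explicitly.
        "extra_body": {"thinking": {"type": "enabled"}},
    }


def split_reply(message):
    """Return (reasoning, answer) from a response message.

    reasoning_content may be absent (e.g. thinking disabled), so read it defensively.
    """
    return getattr(message, "reasoning_content", None), message.content


# Usage (requires the `openai` package and a real API key):
# from openai import OpenAI
# client = OpenAI(base_url="https://api.deepseek.com", api_key="...")
# resp = client.chat.completions.create(**build_thinking_request("Prove it."))
# reasoning, answer = split_reply(resp.choices[0].message)
```

Keeping the request builder separate from the network call makes it easy to log or unit-test the exact payload you send.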

GLM-5 takes a different approach: separate model variants (GLM-5, GLM-5-Turbo, GLM-5.1) for different latency/quality trade-offs. Z.ai released GLM-5.1 on March 27, 2026, claiming a coding evaluation score of 45.3 compared to Claude Opus 4.6’s 47.9, a 28% improvement over the GLM-5 baseline score of 35.4. Worth noting: these benchmarks are self-reported by Z.ai and have not yet been independently verified.

On math and science, GLM-5 scores 92.7% on AIME 2026 and 86.0% on GPQA-Diamond. Cross-check the DeepSeek V4 technical report for V4-Pro’s matching numbers before you commit to either for a math-heavy workload — DeepSeek for math covers practical setup.

Writing and general chat

For non-coding chat — translation, drafting, summarisation, customer-facing copy — V4-Flash is the obvious pick on cost alone. The GLM family carries a quirk worth flagging: in December 2025, MIT researchers documented that GLM-series models identified themselves as Claude roughly 50% of the time when queried through non-standard methods. That doesn’t make GLM unusable, but if your product cares about model identity disclosure, it’s a real consideration.

Both providers ship in English and Chinese first; for everything else, our DeepSeek languages reference covers the supported set.

Pricing: the headline gap

Here are the rates you should plug into a spreadsheet today, all per 1M tokens, USD:

| Provider / Model | Input (cache hit) | Input (cache miss) | Output | Source |
|---|---|---|---|---|
| DeepSeek V4-Flash | $0.0028 | $0.14 | $0.28 | DeepSeek pricing page, April 2026 |
| DeepSeek V4-Pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list | DeepSeek pricing page, April 2026 |
| GLM-4.7 | n/a published | $0.60 | $2.20 | Z.ai / pricepertoken.com, 2026 |
| GLM-5 | n/a published | $1.00 | $3.20 | Z.ai pricing, Feb 2026 |
| GLM-5.1 | n/a published | ~$1.40 | ~$4.40 | SCMP, March 2026 |

Zhipu charges US$1.40 per million input tokens and about US$4.40 per million output tokens for GLM-5.1, while Anthropic’s Claude Opus 4.6 cost US$5 per million input tokens and US$25 per million output tokens as of February 2026. So GLM is cheaper than Western frontier models — but DeepSeek V4-Flash undercuts every paid GLM tier on both input and output. For an end-to-end pricing tour, see DeepSeek API pricing.

Two important caveats:

- Off-peak discounts on the DeepSeek API ended on September 5, 2025 and were not reintroduced with V4. Don’t budget on the assumption of a night-time discount.

- Z.ai removed its $3/month promotional pricing on February 11, 2026, so if you’ve seen that number floating around it no longer applies — current pricing starts at ~$10/month.

Worked cost example: 1M API calls a month

Concrete math beats hand-waving. Take a typical workload: 1,000,000 API calls a month, with a 2,000-token system prompt (cached across calls), a 200-token user message (uncached on each call), and a 300-token response. We’ll cost it on deepseek-v4-flash, then on GLM-5 for comparison.

```
DeepSeek V4-Flash
Cached input   : 2,000 × 1,000,000 = 2.0B tokens × $0.0028/M =     $5.60
Uncached input :   200 × 1,000,000 = 200M tokens × $0.14/M   =    $28.00
Output         :   300 × 1,000,000 = 300M tokens × $0.28/M   =    $84.00
                                                                 -------
Total                                                            $117.60

GLM-5 (no published cache-hit tier)
Input          : 2,200 × 1,000,000 = 2.2B tokens × $1.00/M   = $2,200.00
Output         :   300 × 1,000,000 = 300M tokens × $3.20/M   =   $960.00
                                                               ---------
Total                                                          $3,160.00
```

Same workload, ~27× cost difference, driven mostly by DeepSeek’s context-caching tier, which now charges $0.0028 per million tokens on repeated prefixes (since the 2026-04-26 drop). DeepSeek context caching explains how the cache-hit detection works in practice. If you’d rather model your own numbers, the DeepSeek pricing calculator covers V4-Flash and V4-Pro.

Note that running the same example on V4-Pro at list ($0.0145 / $1.74 / $3.48) lands at $1,421.00 — still cheaper than GLM-5 by more than half, but the gap narrows sharply at the frontier tier (and during the 75% V4-Pro promo through 2026-05-31, the same workload is $355.25 — about 9× cheaper than GLM-5).
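If you want to rerun this arithmetic with your own volumes, the worked example reduces to a few lines of Python. This is a sketch: the `Rates` class and `monthly_cost` function are hypothetical names, the rates are the ones quoted in this article, and GLM-5's cache-hit rate is set equal to its cache-miss rate because no cache-hit tier is published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates as quoted in this article; re-check the vendors' pricing pages.
V4_FLASH = Rates(0.0028, 0.14, 0.28)
V4_PRO_LIST = Rates(0.0145, 1.74, 3.48)
V4_PRO_PROMO = Rates(0.003625, 0.435, 0.87)
GLM_5 = Rates(1.00, 1.00, 3.20)  # no published cache-hit tier


def monthly_cost(rates, calls, cached_in, uncached_in, out_tokens):
    """Monthly USD cost for `calls` requests with the given per-call token counts."""
    millions_of_calls = calls / 1_000_000
    per_million_calls = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out_tokens * rates.output
    )
    return round(millions_of_calls * per_million_calls, 2)


if __name__ == "__main__":
    for name, rates in [("V4-Flash", V4_FLASH), ("V4-Pro list", V4_PRO_LIST),
                        ("V4-Pro promo", V4_PRO_PROMO), ("GLM-5", GLM_5)]:
        print(name, monthly_cost(rates, 1_000_000, 2_000, 200, 300))
```

The sketch assumes every call hits the cache for the full system prompt, as the worked example does. In production the hit rate will be lower, so treat the result as a best case for DeepSeek.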

API ergonomics

Both providers expose OpenAI-compatible endpoints. On DeepSeek, chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also ships an Anthropic-compatible surface against the same base URL, so the Anthropic SDK works by swapping base_url and api_key. Minimal Python:

```python
from openai import OpenAI

client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

resp = client.chat.completions.create(
    model="deepseek-v4-flash",
    messages=[{"role": "user", "content": "Summarise this PR."}],
    temperature=0.0,
    max_tokens=2000,
)
```

The DeepSeek API is stateless: you must resend the conversation history with each request. Web and app surfaces maintain session history; the API does not. Useful parameters worth knowing: temperature (0.0 for code, 1.3 for general chat, 1.5 for creative writing), top_p, max_tokens, reasoning_effort, JSON mode, tool calling, streaming, context caching, FIM completion (Beta; requires thinking: {"type": "disabled"}), and Chat Prefix Completion (Beta).
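Because the API keeps no session state, the client has to carry the transcript. A minimal sketch of that pattern follows; the `Conversation` class is hypothetical, and `send` stands in for whatever function wraps your actual chat-completions call.

```python
from typing import Callable, Dict, List


class Conversation:
    """Keeps the running transcript and resends all of it on every turn."""

    def __init__(self, send: Callable[[List[Dict[str, str]]], str],
                 system_prompt: str = ""):
        self._send = send
        self.messages: List[Dict[str, str]] = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        # Pass a copy of the full history: the server remembers nothing.
        reply = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Usage with the OpenAI SDK client from the snippet above (not executed here):
# def send(messages):
#     resp = client.chat.completions.create(model="deepseek-v4-flash",
#                                           messages=messages)
#     return resp.choices[0].message.content
# chat = Conversation(send, system_prompt="You are a code reviewer.")
```

A stable system prompt at the start of every request is also what makes the repeated prefix eligible for the cache-hit rate discussed in the pricing section.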

JSON mode is designed to return valid JSON, not guaranteed. The model may occasionally return empty content; include the word “json” plus a small example schema in your prompt and set max_tokens high enough to avoid truncation. See DeepSeek API JSON mode for the full handling pattern.
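One way to handle those failure modes is to treat empty or unparseable content as a retryable miss. The sketch below assumes that approach: `parse_json_reply` and `ask_for_json` are hypothetical helpers, and `call` is any zero-argument function that performs the request and returns the message content.

```python
import json


def parse_json_reply(content):
    """Return the parsed JSON object, or None for empty or invalid content."""
    if not content or not content.strip():
        return None  # the model occasionally returns empty content
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError:
        return None  # e.g. output truncated by a low max_tokens
    return parsed if isinstance(parsed, dict) else None


def ask_for_json(call, max_attempts=3):
    """Call the model up to max_attempts times until it yields a JSON object."""
    for _ in range(max_attempts):
        parsed = parse_json_reply(call())
        if parsed is not None:
            return parsed
    raise RuntimeError("no valid JSON object after %d attempts" % max_attempts)


# Usage (not executed here): wrap a request that sets
# response_format={"type": "json_object"} and mentions "json" in the prompt.
```

Retrying blindly costs tokens, so cap `max_attempts` low and log the raw content of each miss to see whether truncation or an empty reply is the usual cause.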

GLM ships its own quirks: configure your coding tool to point at the GLM Coding endpoint at https://api.z.ai/api/coding/paas/v4 and select the compatibility mode — GLM supports both OpenAI and Anthropic API formats. Registration works with international email, but billing prioritizes AliPay and WeChat Pay, rate limits on new accounts are conservative, and support documentation is primarily in Chinese, though English docs exist for the core API endpoints. For non-Chinese teams, this is real friction.
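Since both providers accept OpenAI-style requests, switching can be reduced to a small lookup table. This is a sketch under assumptions: the base URLs are the ones given in this article, but the `glm-5` model string and both environment-variable names are hypothetical placeholders, so check each provider's documentation for the real identifiers.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class Provider:
    base_url: str
    model: str
    api_key_env: str  # name of the environment variable holding the key


PROVIDERS = {
    "deepseek": Provider("https://api.deepseek.com",
                         "deepseek-v4-flash", "DEEPSEEK_API_KEY"),
    # ASSUMPTION: "glm-5" and ZAI_API_KEY are illustrative, not confirmed names.
    "glm": Provider("https://api.z.ai/api/coding/paas/v4",
                    "glm-5", "ZAI_API_KEY"),
}


def client_settings(name, env=None):
    """Return (client kwargs, model string) for the named provider."""
    env = os.environ if env is None else env
    provider = PROVIDERS[name]
    kwargs = {"base_url": provider.base_url, "api_key": env[provider.api_key_env]}
    return kwargs, provider.model


# Usage (requires the `openai` package):
# from openai import OpenAI
# kwargs, model = client_settings("glm")
# client = OpenAI(**kwargs)
```

Keeping the provider choice in configuration makes it cheap to A/B the two families on your own prompts before committing.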

If you maintain integrations against the legacy deepseek-chat or deepseek-reasoner IDs, both currently route to deepseek-v4-flash and will be retired on July 24, 2026 at 15:59 UTC. Migration is a one-line model= swap; base_url does not change. DeepSeek OpenAI SDK compatibility covers the swap in detail.
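A small shim can make that migration explicit and tell you how much runway is left. The sketch below uses the retirement timestamp and routing described above; `migrate_model_id` and `days_until_retirement` are hypothetical helper names. Whether thinking mode must be enabled explicitly to match the old deepseek-reasoner behaviour is not covered here, so check the official docs.

```python
from datetime import datetime, timezone

# Retirement of the legacy IDs as stated in this article.
RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Both legacy IDs currently route to deepseek-v4-flash.
LEGACY_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def migrate_model_id(model: str) -> str:
    """Map a legacy model ID to its replacement; pass other IDs through."""
    return LEGACY_IDS.get(model, model)


def days_until_retirement(now=None) -> int:
    """Whole days left before the legacy IDs retire (negative once passed)."""
    now = now or datetime.now(timezone.utc)
    return (RETIREMENT - now).days
```

Running `migrate_model_id` over the model strings in your configuration is a quick way to find integrations that still depend on the legacy names.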

Privacy, ecosystem and licensing

Both labs publish open weights under MIT for current-generation releases — V4-Pro, V4-Flash, V3.2, R1 on the DeepSeek side; GLM-5, GLM-4.7 and the Flash variants on the GLM side. GLM-5 is released under the MIT license on Hugging Face, available via the Z.ai API, and on OpenRouter.

Hardware story: Zhipu AI trained the full GLM-5 family exclusively on Huawei Ascend 910B accelerators, making it one of the most prominent demonstrations that competitive frontier models can be built without access to Nvidia GPUs, particularly significant given Zhipu’s placement on the US Entity List since January 2025. DeepSeek’s training infrastructure is well-documented in its V3 and V4 technical reports. Both labs process API requests on servers subject to Chinese law; if that is a hard constraint for your industry, self-hosting open weights is the answer for either family.

When to pick which

- Pick DeepSeek V4-Flash for high-volume chat, RAG, classification, and general-purpose coding where price-per-task dominates.

- Pick DeepSeek V4-Pro for frontier-tier coding agents and reasoning workloads where you need 1M-token context and the SWE-Bench Verified lift over Flash.

- Pick GLM-5 / GLM-5.1 when you specifically want a Claude-style long-horizon agentic engineering partner and you’ve validated that GLM’s tool-calling stack fits your framework.

- Pick GLM-4.7-Flash only as a free fallback for non-critical sub-tasks; it is genuinely free but capability-limited.

- Self-host instead if your data residency rules prohibit either Chinese cloud — both families publish open weights under MIT.

For a wider field of view, see DeepSeek vs Qwen and DeepSeek vs Kimi, or browse the full AI comparison hub.

Bottom line

DeepSeek V4-Flash is the value pick: cheaper than every paid GLM tier, with a 1M-token context window and the SWE-Bench Verified numbers from V4-Pro to fall back on when you need frontier-tier behaviour. GLM-5 is the better choice if you specifically want Claude-style agentic engineering, are willing to absorb roughly 27× the per-call cost of V4-Flash on the worked example above, and don’t need the 1M-token window. For most teams reading this, the DeepSeek vs GLM decision ends with model="deepseek-v4-flash" and a calendar reminder to migrate off legacy IDs before July 24, 2026.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

Is DeepSeek cheaper than GLM?

Yes, on most workloads. DeepSeek V4-Flash lists $0.14 input (cache miss) and $0.28 output per 1M tokens; with cache hits the input rate drops to $0.0028. GLM-4.7 lists $0.60 input and $2.20 output, GLM-5 lists $1.00 / $3.20. On the worked example in this article, V4-Flash came out roughly 27× cheaper than GLM-5. See the full DeepSeek API pricing breakdown for current rates.

How does GLM-5 compare to DeepSeek V4 on coding?

GLM-5 scores 77.8% on SWE-Bench Verified per Z.ai’s announcement, while DeepSeek V4-Pro posted 80.6% on the same benchmark in its April 24, 2026 release. Both are strong; V4-Pro edges ahead on the headline number while GLM-5 leans harder into long-horizon agent loops. For day-to-day work, see DeepSeek for coding.

What is the context window for DeepSeek V4 vs GLM-5?

DeepSeek V4-Pro and V4-Flash both ship a 1,000,000-token default context window with output up to 384,000 tokens. GLM-5 supports a 200K-token context window with output around 131K. If you need to feed entire monorepos or long document sets in a single prompt, DeepSeek’s window is 5× larger. The DeepSeek context length checker can help you size requests.

Are both DeepSeek and GLM open source?

Both publish open weights under MIT for current-generation models — DeepSeek V4-Pro, V4-Flash, V3.2 and R1; GLM-5, GLM-4.7 and the Flash variants. Some older releases (the original DeepSeek V3 base, Coder-V2, VL2) split MIT code from a separate DeepSeek Model License for weights, so check the specific Hugging Face repo if licensing matters. More background in our is DeepSeek open source guide.

Can I use the same code to call both DeepSeek and GLM?

Largely, yes. Both expose OpenAI-compatible chat completions endpoints and both also offer an Anthropic-compatible surface. To switch between them you change base_url, api_key, and the model string. DeepSeek’s base URL is https://api.deepseek.com; GLM’s coding endpoint is https://api.z.ai/api/coding/paas/v4. See DeepSeek API getting started for a working Python quickstart.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.