DeepSeek vs Mistral in 2026: Which AI Should You Actually Use?

If you are choosing between a Chinese-built and a French-built open-weight model in April 2026, the DeepSeek vs Mistral question usually comes down to four things: price per million tokens, context window, coding quality and where your data is allowed to live. Both labs publish open weights under permissive licenses (MIT for DeepSeek V4, Apache 2.0 for Mistral's open-weight family), both expose an OpenAI-compatible API, and both undercut the US frontier labs on cost. They diverge sharply on context length, model-lineup philosophy and EU data-residency posture. I have run DeepSeek V4-Pro and V4-Flash in production since the V4 Preview dropped on April 24, 2026, and I have used Mistral Large 3, Medium 3 and Codestral against the same workloads. Here is the honest breakdown: verdict first, evidence after.

Verdict: which one should you pick?

Pick DeepSeek if you need the cheapest serious frontier-tier API, a 1,000,000-token context window, or a single open-weight model that handles long-context coding and reasoning in one place. Pick Mistral if you need EU data residency, GDPR-aligned hosting, a dedicated coding-completion model with native fill-in-the-middle support (Codestral), or a smaller European-language-tuned model like Ministral.

For most US, UK, Canadian and Australian developers who do not have an EU-only data clause, DeepSeek V4-Flash is the better cost-per-quality default in 2026. For European teams under strict data-residency rules, Mistral is the only one of the two that solves the compliance problem out of the box.

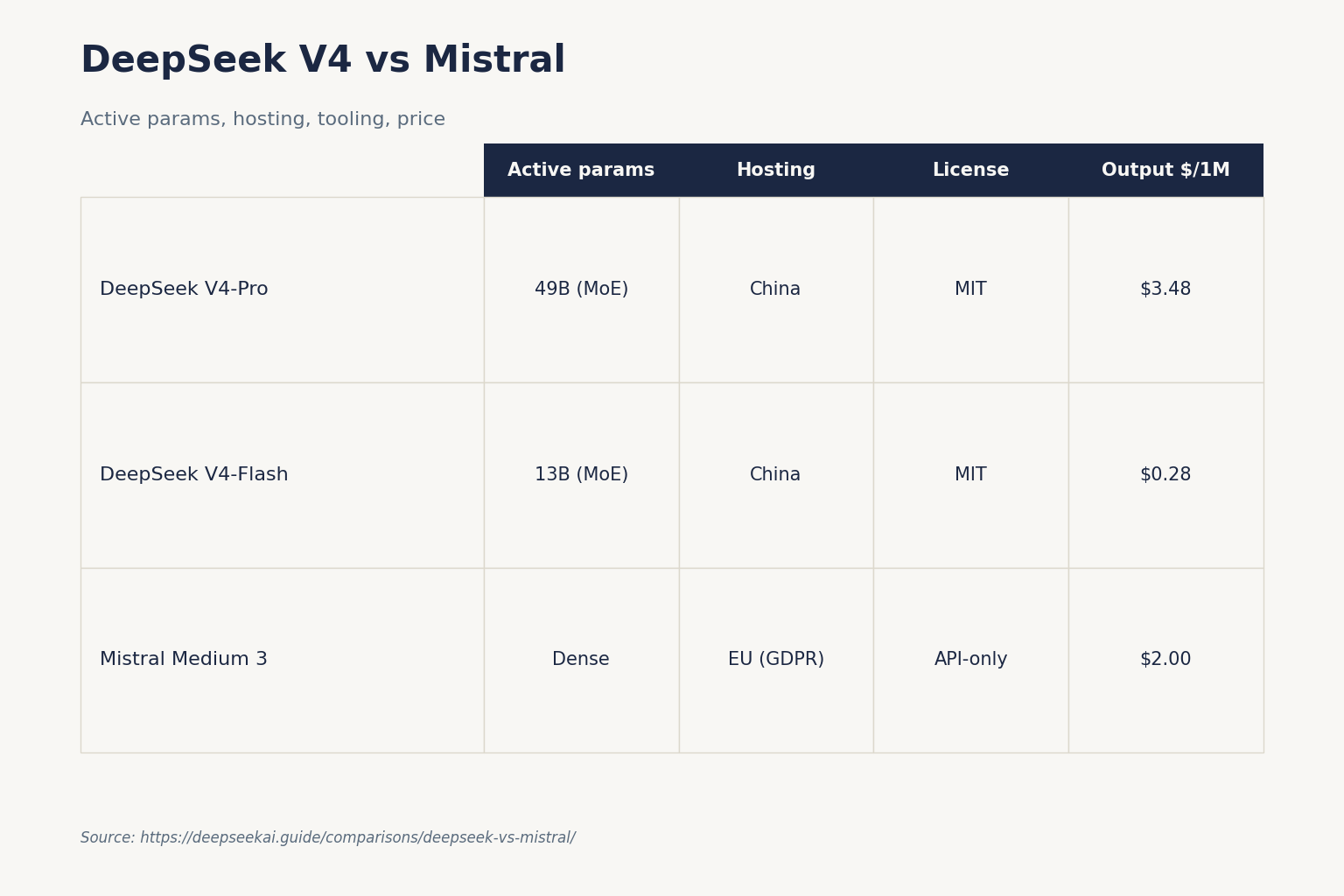

At-a-glance comparison

Prices are USD per 1,000,000 tokens, checked in April 2026. DeepSeek figures were verified against DeepSeek's own pricing page and match the V4 baseline. Mistral figures come from third-party trackers that mirror Mistral's console (see Sources below), so confirm them on the official pages before contracting.

| Feature | DeepSeek V4-Flash | DeepSeek V4-Pro | Mistral Medium 3 | Mistral Large 3 |
|---|---|---|---|---|
| Released | 2026-04-24 | 2026-04-24 | 2025-05 | 2025-12 |
| Architecture | MoE 284B / 13B active | MoE 1.6T / 49B active | Dense (undisclosed) | Dense (undisclosed) |
| Context window | 1,000,000 tokens | 1,000,000 tokens | ~131K tokens | ~128K tokens |
| Input, cache miss (USD/1M) | $0.14 | $0.435 promo / $1.74 list | $0.40 | $0.50–$2.00* |
| Input, cache hit (USD/1M) | $0.0028 | $0.0145 | n/a | n/a |
| Output (USD/1M) | $0.28 | $0.87 promo / $3.48 list | $2.00 | $1.50–$6.00* |
| Weights license | MIT | MIT | API-only | API-only |
| Hosting region | China | China | EU (GDPR) | EU (GDPR) |

*Mistral Large 3 pricing varies by tracker and date. Price Per Token’s April 23, 2026 snapshot lists Mistral Large 3 2512 at $0.50 / $1.50; other April 2026 trackers list Large 3 at $2/$6 per million tokens. Confirm against Mistral’s console.

Coding

This is where the comparison gets interesting, because Mistral splits the problem in a way DeepSeek does not.

DeepSeek’s approach

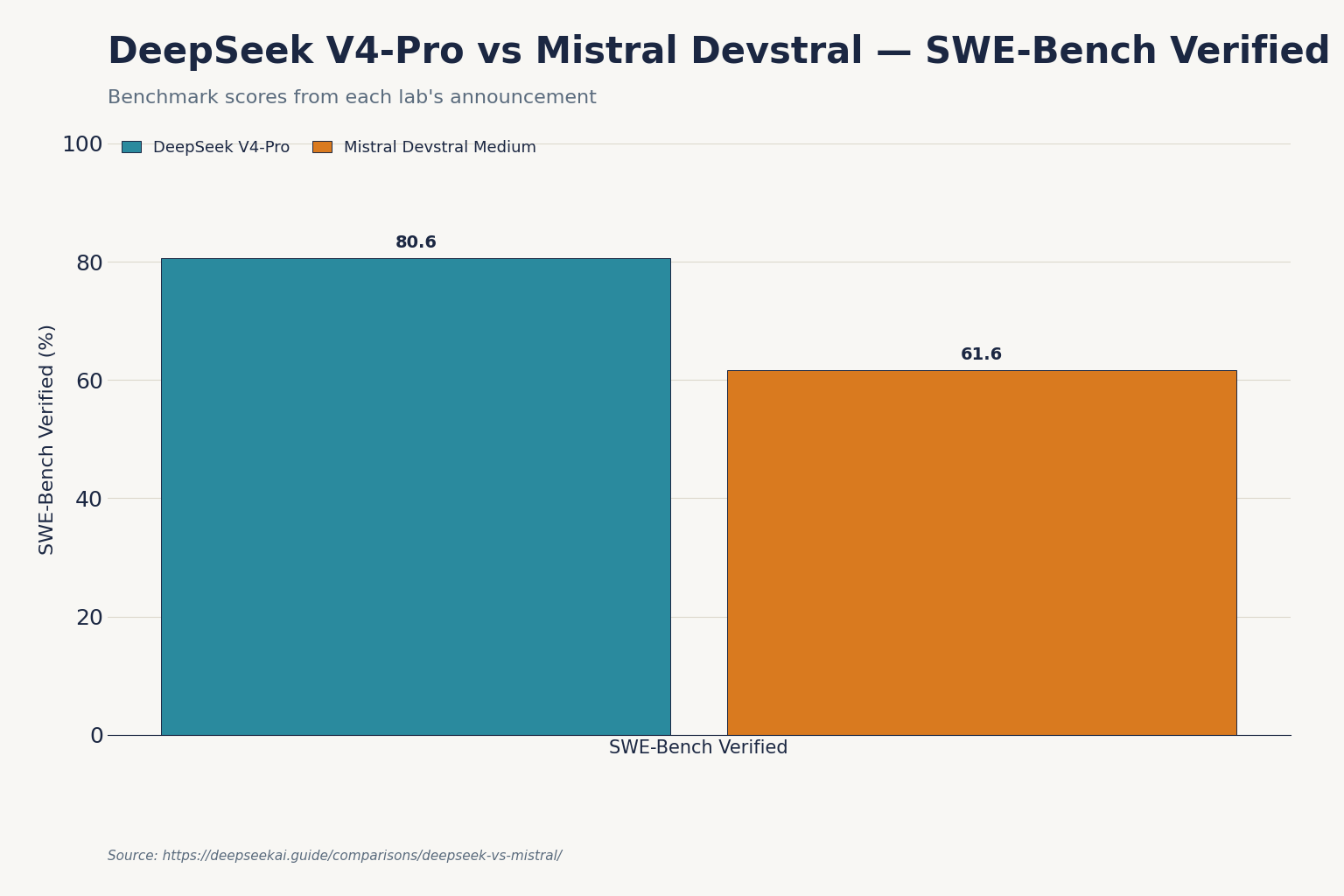

One model handles everything. Both V4-Flash and V4-Pro do agentic coding, repo-scale reasoning and FIM completion (Beta, non-thinking mode only). DeepSeek’s announcement put V4-Pro at 80.6% on SWE-Bench Verified, and the company also reported V4-Pro outperforming Claude on Terminal-Bench. Verify both numbers against the V4 technical report before quoting them in production decisions.

Mistral’s approach

Mistral keeps coding in three lanes: Codestral for autocomplete, Devstral for agentic coding, and Large 3 for general-purpose work that happens to include code. Codestral 2508 ships with a 256K context at $0.30/M input and $0.90/M output. Devstral Medium scores 61.6% on SWE-Bench Verified, which Mistral says places it ahead of Gemini 2.5 Pro and GPT-4.1 on code-related tasks at a fraction of their cost. Devstral Medium is API-only, and Mistral also supports enterprise deployment of it on private infrastructure.

For pure IDE autocomplete, Codestral is genuinely strong. The August 2025 release delivered a 30% increase in accepted completions and 50% fewer runaway generations, and its dedicated FIM endpoint is more polished than DeepSeek's Beta FIM. For agentic coding (repo-scale changes, tool use, multi-file refactors), DeepSeek V4-Pro's reported 80.6% on SWE-Bench Verified is the higher score, while Devstral Medium, at 61.6%, costs less per request. If you want hands-on detail, see our DeepSeek for coding guide.
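Fill-in-the-middle (FIM) means the model sees the code before and after the cursor and generates only the gap. Below is a minimal sketch of the client-side half. It assumes a request shaped as `prompt` (text before the cursor) plus `suffix` (text after it), which is the common FIM convention. The helper names, the `max_tokens` default and the `example-fim-model` ID are hypothetical placeholders. Check DeepSeek's Beta FIM docs or Mistral's Codestral docs for the exact endpoint and field names before using this.

```python
from dataclasses import dataclass


@dataclass
class FimRequest:
    """Hypothetical FIM payload: code before the cursor plus code after it."""

    model: str
    prompt: str  # text before the cursor
    suffix: str  # text after the cursor
    max_tokens: int = 128

    def to_payload(self) -> dict:
        # Field names follow the common prompt/suffix FIM convention (assumed).
        return {
            "model": self.model,
            "prompt": self.prompt,
            "suffix": self.suffix,
            "max_tokens": self.max_tokens,
        }


def split_at_cursor(source: str, cursor: int) -> tuple:
    """Split a file at a character offset into (prefix, suffix)."""
    if not 0 <= cursor <= len(source):
        raise ValueError("cursor is outside the source text")
    return source[:cursor], source[cursor:]


def build_fim_request(source: str, cursor: int, model: str) -> FimRequest:
    prefix, suffix = split_at_cursor(source, cursor)
    return FimRequest(model=model, prompt=prefix, suffix=suffix)


def splice_completion(source: str, cursor: int, middle: str) -> str:
    """Insert the generated middle back at the cursor position."""
    prefix, suffix = split_at_cursor(source, cursor)
    return prefix + middle + suffix


if __name__ == "__main__":
    src = "def add(a, b):\n    \n"
    cursor = len("def add(a, b):\n    ")  # cursor sits on the empty body line
    req = build_fim_request(src, cursor, model="example-fim-model")  # placeholder ID
    print(req.to_payload())
    # Pretend the model returned "return a + b" for the gap.
    print(splice_completion(src, cursor, "return a + b"))
```

The editor-side work (split, send, splice) is the same whichever provider you pick. Only the endpoint and model ID differ, which is why a dedicated FIM endpoint mostly matters for latency and completion quality rather than integration effort.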

Reasoning

DeepSeek V4 unifies reasoning and chat into a single family: thinking mode is a request parameter, not a separate model ID. You set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}} on either V4 model, and the response returns reasoning_content alongside the final content. Mistral splits this differently: Magistral is the dedicated reasoning line, separate from Large 3. Magistral Medium costs $2.00/$5.00 per million input/output tokens, and Magistral Small 1.2 costs $0.50/$1.50. Because reasoning generates extra output tokens on every request, actual per-request costs run higher than an estimate based on the length of the visible answer alone.

Practically: if you flip thinking on for a long-context task, DeepSeek V4-Flash at $0.28/M output is dramatically cheaper than Magistral Medium at $5/M output. Quality at the very top end is closer than the price gap suggests, but DeepSeek’s 1M context is the deciding factor for most agentic and document-grounded reasoning tasks.
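A back-of-the-envelope sketch of why hidden reasoning tokens matter follows. It assumes reasoning tokens are billed at the output rate, so check each provider's billing page. The token counts in the example are made-up illustrations, not measurements. The rates are the per-million figures quoted in this article, and the dictionary keys are labels, not API model IDs.

```python
def request_cost(input_tokens, answer_tokens, reasoning_tokens, input_rate, output_rate):
    """USD cost of one request; rates are USD per 1,000,000 tokens.

    Assumes hidden reasoning tokens are billed as ordinary output tokens.
    """
    billed_output = answer_tokens + reasoning_tokens
    return (input_tokens * input_rate + billed_output * output_rate) / 1_000_000


# (input, output) USD per 1M tokens as quoted above; keys are labels only.
RATES = {
    "DeepSeek V4-Flash": (0.14, 0.28),
    "Magistral Medium": (2.00, 5.00),
}

if __name__ == "__main__":
    # Illustrative request: 5,000 input tokens, a 500-token answer,
    # and an assumed 4,000 tokens of hidden reasoning.
    for name, (inp, out) in RATES.items():
        naive = request_cost(5_000, 500, 0, inp, out)
        actual = request_cost(5_000, 500, 4_000, inp, out)
        print(f"{name}: ${naive:.5f} ignoring reasoning, ${actual:.5f} with it")
```

On these assumed counts, the reasoning tokens more than double the per-request cost on both providers. The absolute gap between V4-Flash and Magistral Medium widens accordingly.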

Writing and general chat

Both are competent for general chat. Mistral's coverage of European languages (French, German, Italian, Spanish, Portuguese) and of Arabic is genuinely better than DeepSeek's. Mistral Large 2 supports dozens of languages, including French, German, Spanish, Italian, Portuguese, Arabic, Hindi, Russian, Chinese, Japanese and Korean, along with 80+ coding languages, and Large 3 inherits that breadth.

DeepSeek wins for English long-form work where you want to feed in 200,000+ tokens of source material — the 1M context window is simply not available on the Mistral side. If you write in English and your inputs are short, the difference is small. If you write in French, German or Arabic and care about idiom and register, Mistral has the edge.

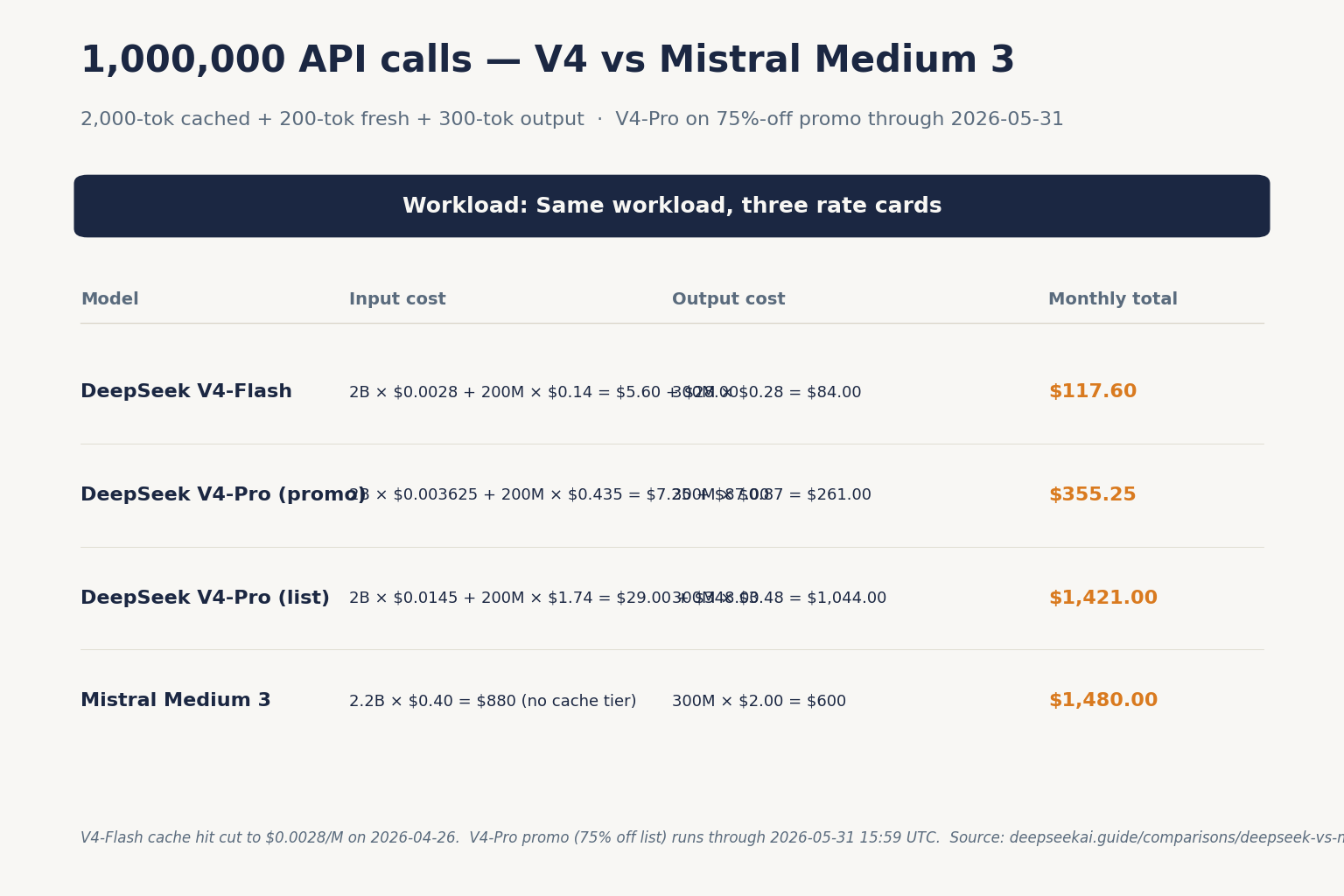

Pricing — a worked example

Let’s cost a realistic agentic workload: 1,000,000 API calls, each with a 2,000-token cached system prompt, a 200-token uncached user message, and a 300-token response. I am using DeepSeek V4-Flash rates; do not mix tiers.

- Cached input: 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200,000,000 tokens × $0.14/M = $28.00

- Output: 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Same workload on DeepSeek V4-Pro at list prices, which is the upper bound for DeepSeek on this profile:

- Cached input: 2,000,000,000 tokens × $0.0145/M = $29.00

- Uncached input: 200,000,000 tokens × $1.74/M (list) = $348.00

- Output: 300,000,000 tokens × $3.48/M (list) = $1,044.00

- Total: $1,421.00 at list prices, or $355.25 during the 75% V4-Pro promo through 2026-05-31 (assuming the discount applies to all three rates)

Same workload on Mistral Medium 3 at $0.40 / $2.00 per million tokens. Mistral Medium 3 has no cache tier, so the 2,000-token prefix is billed as ordinary input:

- Input (all uncached): 2,200,000,000 tokens × $0.40/M = $880.00

- Output: 300,000,000 tokens × $2.00/M = $600.00

- Total: $1,480.00

For high-volume workloads with cacheable prefixes, DeepSeek V4-Flash is roughly 13× cheaper than Mistral Medium 3 on this profile ($117.60 vs $1,480.00). Even DeepSeek V4-Pro at list price lands slightly below Mistral Medium 3 ($1,421.00 vs $1,480.00), and you get frontier-tier output rather than mid-tier. Build your own numbers using the DeepSeek pricing calculator before committing.
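To rerun the worked example with your own traffic profile, here is a small sketch that reproduces the three totals above. The rates are the list figures quoted in this article, and the V4-Pro promo is not modelled. `Rates`, `workload_cost` and the profile labels are hypothetical names for illustration. A provider with no cache-hit tier bills the cached prefix at the ordinary input rate, which is how the Mistral Medium 3 line was computed.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rates:
    """USD per 1,000,000 tokens."""

    cache_hit: Optional[float]  # None = provider has no cache-hit tier
    cache_miss: float
    output: float


def workload_cost(rates, calls, cached_prompt, uncached_prompt, output):
    """Total USD for `calls` identical requests; token counts are per call."""
    cached_total = calls * cached_prompt
    uncached_total = calls * uncached_prompt
    output_total = calls * output
    if rates.cache_hit is None:
        # No cache tier: the cached prefix is billed as ordinary input.
        input_cost = (cached_total + uncached_total) * rates.cache_miss
    else:
        input_cost = cached_total * rates.cache_hit + uncached_total * rates.cache_miss
    return round((input_cost + output_total * rates.output) / 1_000_000, 2)


# The workload from the worked example above.
WORKLOAD = dict(calls=1_000_000, cached_prompt=2_000, uncached_prompt=200, output=300)

PROFILES = {
    "DeepSeek V4-Flash": Rates(0.0028, 0.14, 0.28),
    "DeepSeek V4-Pro (list)": Rates(0.0145, 1.74, 3.48),
    "Mistral Medium 3": Rates(None, 0.40, 2.00),
}

if __name__ == "__main__":
    for name, rates in PROFILES.items():
        print(f"{name}: ${workload_cost(rates, **WORKLOAD):,.2f}")
```

Changing `WORKLOAD` shows how sensitive the ranking is to the cached-prefix share. With no cacheable prefix, DeepSeek's cache-hit advantage disappears, and only the cache-miss and output rates matter.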

API ergonomics

Both providers expose OpenAI-compatible endpoints. With DeepSeek, chat requests go to POST /chat/completions on the OpenAI-compatible base URL https://api.deepseek.com, and the Anthropic SDK also works against the same base URL. Mistral's API also follows the OpenAI request format, so if you already use the OpenAI SDK, switching is straightforward.
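Because both APIs follow the OpenAI Chat Completions shape, switching providers is mostly a matter of changing the base URL and model ID. The minimal sketch below builds the raw request without sending it. The DeepSeek base URL and `deepseek-v4-flash` ID come from this article. The Mistral base URL (`https://api.mistral.ai/v1`) and the `mistral-medium-latest` model ID are assumptions to verify against Mistral's documentation. `build_chat_request` is a hypothetical helper, not part of either SDK.

```python
import json

# Base URL and default model per provider.
# The Mistral entries are assumptions; confirm them in Mistral's docs.
PROVIDERS = {
    "deepseek": {"base_url": "https://api.deepseek.com", "model": "deepseek-v4-flash"},
    "mistral": {"base_url": "https://api.mistral.ai/v1", "model": "mistral-medium-latest"},
}


def build_chat_request(provider, api_key, messages, model=None):
    """Return (url, headers, body) for an OpenAI-style chat completion call.

    Nothing is sent; hand the result to whichever HTTP client you use.
    """
    cfg = PROVIDERS[provider]
    url = cfg["base_url"].rstrip("/") + "/chat/completions"
    headers = {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }
    body = json.dumps({"model": model or cfg["model"], "messages": messages})
    return url, headers, body


if __name__ == "__main__":
    msgs = [{"role": "user", "content": "Summarise this changelog."}]
    for name in PROVIDERS:
        url, _, body = build_chat_request(name, "YOUR_KEY", msgs)
        print(url, body)
```

The message list and headers are identical for both providers, so the provider choice can live in configuration rather than in application code.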

Minimal Python against DeepSeek V4 with thinking enabled, using the OpenAI SDK:

```python
from openai import OpenAI

# DeepSeek's endpoint is OpenAI-compatible: only base_url and the key change.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",  # placeholder: load your real key from an env var or secret store
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan the migration."}],
    reasoning_effort="high",
    # DeepSeek-specific field, passed through the SDK's extra_body escape hatch.
    extra_body={"thinking": {"type": "enabled"}},
)

message = resp.choices[0].message
# With thinking enabled, the reasoning trace is returned in reasoning_content.
print(getattr(message, "reasoning_content", None))
print(message.content)
```

Three points are worth flagging on the DeepSeek side. First, the API is stateless, so you must resend the conversation history with each request: the web chat keeps session state, the API does not. Second, the legacy IDs deepseek-chat and deepseek-reasoner still work but route to deepseek-v4-flash and will be retired on 2026-07-24 at 15:59 UTC; migrating is a one-line model= swap. Third, JSON mode is designed to return valid JSON but does not guarantee it. Include the word “json” in the prompt with a small example schema, and set max_tokens high enough to avoid truncation. Full reference in our DeepSeek API documentation and DeepSeek OpenAI SDK compatibility notes.
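Two of those points, resending history and treating JSON-mode output as untrusted, can be handled with a small client-side wrapper. The sketch below is transport-agnostic: `send` is any function you supply that takes a model ID and a message list and returns the reply text, for example a thin wrapper around `client.chat.completions.create`. `ChatSession` and `parse_json_reply` are hypothetical helpers. The check for `finish_reason == "length"` assumes the OpenAI convention for replies cut off by `max_tokens`.

```python
import json
from typing import Callable, Optional


class ChatSession:
    """Keeps the whole conversation client-side, because the API stores none."""

    def __init__(self, send: Callable[[str, list], str], model: str,
                 system: Optional[str] = None):
        self.send = send
        self.model = model
        self.messages = [{"role": "system", "content": system}] if system else []

    def ask(self, text: str) -> str:
        self.messages.append({"role": "user", "content": text})
        # Every call resends the full history accumulated so far.
        reply = self.send(self.model, list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def parse_json_reply(text: str, finish_reason: str) -> Optional[dict]:
    """Return the parsed object, or None if the reply is truncated or invalid."""
    if finish_reason == "length":
        return None  # hit max_tokens, so the JSON is probably cut off
    try:
        data = json.loads(text)
    except json.JSONDecodeError:
        return None
    return data if isinstance(data, dict) else None


if __name__ == "__main__":
    # Fake transport so the sketch runs offline; swap in a real API call.
    def fake_send(model, messages):
        return f"{model} saw {len(messages)} messages"

    chat = ChatSession(fake_send, "deepseek-v4-flash", system="Be brief.")
    print(chat.ask("Hello"))
    print(chat.ask("And again"))
    print(parse_json_reply('{"ok": true}', "stop"))
```

Keeping history in the client also means you control truncation. When a long conversation approaches the context limit, you decide which turns to drop or summarise.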

Privacy and hosting

This is the cleanest decision criterion. DeepSeek hosts in China; conversations sent to api.deepseek.com are processed and may be stored on servers subject to Chinese law. Mistral hosts in the EU and offers GDPR-compliant EU hosting for its models, which makes it the go-to choice for European data-residency requirements.

If you are bound by GDPR, HIPAA-equivalent rules, or sector-specific data-residency requirements that exclude Chinese hosting, the cost comparison stops mattering: Mistral is the only one of the two you can deploy without a long compliance review. For everyone else, the privacy posture is a trade-off you cost into the decision; see our DeepSeek privacy notes for the full picture. Either way, both providers' open weights let you self-host under MIT (DeepSeek V4-Pro and V4-Flash) or Apache 2.0 (Mistral's open-weight family) if you want full control.

Ecosystem and lineup

Mistral's lineup is broader and more specialised. The Mistral changelog shows Codestral 2508 (August 2025), Magistral Medium 1.1 and Magistral Small 1.1 (July 2025), Voxtral Small and Mini for audio, Devstral Small 1.1 and Devstral Medium, and Mistral Small 3.2. Mistral Small 4, the next major release in the Small family, unifies several flagship capabilities in one model: reasoning from Magistral, multimodal understanding from Pixtral and agentic coding from Devstral.

DeepSeek’s lineup is narrower but deeper: DeepSeek V4-Pro and DeepSeek V4-Flash at the top, DeepSeek R1 and the older DeepSeek V3.2 in the back catalogue, plus DeepSeek Coder V2 for coding. The full catalogue is on the DeepSeek models hub.

When to pick DeepSeek

- You need a 1,000,000-token context window for long-document workflows.

- You are price-sensitive and your workload is output-heavy.

- You want one model that does coding, reasoning and chat without juggling endpoints.

- You are happy to host outside the EU or self-host the open weights.

When to pick Mistral

- EU data residency or GDPR compliance is non-negotiable.

- You need a dedicated FIM autocomplete model (Codestral) for IDE integrations.

- Your inputs fit comfortably under 128K tokens.

- You write or generate content in French, German, Italian, Spanish or Arabic at scale.

Alternatives worth considering

If neither lab quite fits, look at DeepSeek vs Llama for self-hosted options under permissive licensing, or DeepSeek vs Claude if frontier-tier quality matters more than cost. For a wider sweep, the AI comparison hub has the rest of the field broken down model by model, and our open-source AI like DeepSeek roundup covers the open-weight landscape.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek cheaper than Mistral?

For most workloads, yes. DeepSeek V4-Flash lists $0.14 input (cache miss) and $0.28 output per million tokens; Mistral Medium 3 lists $0.40 input and $2.00 output. On output-heavy traffic the gap is roughly 7x in DeepSeek’s favour. Mistral Large 3 is more competitive on output but still trails V4-Flash. Run a real estimate with the DeepSeek cost estimator.

Which has a longer context window, DeepSeek or Mistral?

DeepSeek, by a wide margin. Both DeepSeek V4-Pro and V4-Flash support a 1,000,000-token context with up to 384,000-token output. Mistral’s Large 3 and Medium 3 cap out around 128–131K tokens; Codestral reaches 256K. If you need to feed an entire codebase or large document corpus into a single request, see the DeepSeek context length checker.
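Before sending a large corpus, a rough pre-flight check can tell you which window it plausibly fits. This sketch uses the common rule of thumb of about four characters per token for English text. That is only an approximation: code and non-English text tokenize differently, and exact counts need the provider's tokenizer. The window sizes are the figures quoted in this article (the Mistral ones are approximate). The 8,000-token output reserve and the helper names are hypothetical.

```python
import math

# Context windows quoted in this article, in tokens (Mistral figures approximate).
CONTEXT_WINDOWS = {
    "DeepSeek V4 (Pro/Flash)": 1_000_000,
    "Codestral 2508": 256_000,
    "Mistral Medium 3": 131_000,
    "Mistral Large 3": 128_000,
}


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate from character count (heuristic, not a tokenizer)."""
    return math.ceil(len(text) / chars_per_token)


def fits_in_window(text: str, window: int, reserve_for_output: int = 8_000) -> bool:
    """True if the estimated prompt plus an output reserve fits within the window."""
    return estimate_tokens(text) + reserve_for_output <= window


if __name__ == "__main__":
    corpus = "x" * 2_000_000  # stand-in for ~2M characters of source material
    for name, window in CONTEXT_WINDOWS.items():
        verdict = "fits" if fits_in_window(corpus, window) else "too big"
        print(f"{name}: {verdict}")
```

On this heuristic, about 2 million characters (roughly 500K tokens) fits comfortably in DeepSeek's 1M window but overflows every Mistral model listed here, including Codestral's 256K.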

Are both DeepSeek and Mistral open source?

Both publish open weights, but with different scope. DeepSeek V4-Pro, V4-Flash, V3.2, V3.1 and R1 ship under MIT for both code and weights. Mistral's open-weight family (Mistral 7B, Mixtral 8x7B/8x22B) is Apache 2.0, but its frontier API models (Large 3, Magistral Medium, Devstral Medium) are API-only. Details in our "Is DeepSeek open source?" guide.

Can I use the OpenAI SDK with both DeepSeek and Mistral?

Yes. Both providers expose OpenAI-compatible Chat Completions endpoints. For DeepSeek, set base_url="https://api.deepseek.com" and your API key; the model field accepts deepseek-v4-pro or deepseek-v4-flash. Legacy IDs deepseek-chat and deepseek-reasoner work until 2026-07-24 15:59 UTC. Step-by-step in our DeepSeek API getting started tutorial.

Why would a European company pick Mistral over DeepSeek?

Data residency. Mistral hosts in the EU and offers GDPR-compliant deployments out of the box, which removes weeks of compliance review for regulated industries. DeepSeek processes requests on servers subject to Chinese law, which is a non-starter for many EU public-sector and financial customers. For more on the compliance trade-off, see DeepSeek availability by country.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation (endpoints, request/response shape, model IDs). Last checked: April 30, 2026.

- Pricing: DeepSeek API pricing page (per-1M-token rates, billing units, cache-hit pricing). Last checked: April 30, 2026.

- Pricing: Anthropic Claude pricing (Claude API rates for cross-vendor comparisons). Last checked: April 30, 2026.

- Pricing: OpenAI API pricing (OpenAI API rates for cross-vendor comparisons). Last checked: April 30, 2026.

- Pricing: Google Gemini API pricing (Gemini API rates for cross-vendor comparisons). Last checked: April 30, 2026.

Technical references

- Technical report: DeepSeek-V3 technical report, arXiv:2412.19437 (V3 architecture and training methodology). Last checked: April 30, 2026.

- Technical report: DeepSeek-R1 paper, arXiv:2501.12948 (R1 reasoning approach, distillation results). Last checked: April 30, 2026.

- Benchmark: SWE-Bench Verified leaderboard (software-engineering benchmark scores). Last checked: April 30, 2026.

Context sources

- Analysis: Price Per Token, Mistral AI pricing snapshot, Apr 23, 2026 (lists Mistral Large 3 2512 at $0.50 / $1.50). Last checked: April 30, 2026.

- Analysis: TokenMix, Mistral API pricing tracker (alternate Mistral Large 3 pricing of $2/$6). Last checked: April 30, 2026.

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.