DeepSeek vs Perplexity: Which One Should You Actually Use in 2026?

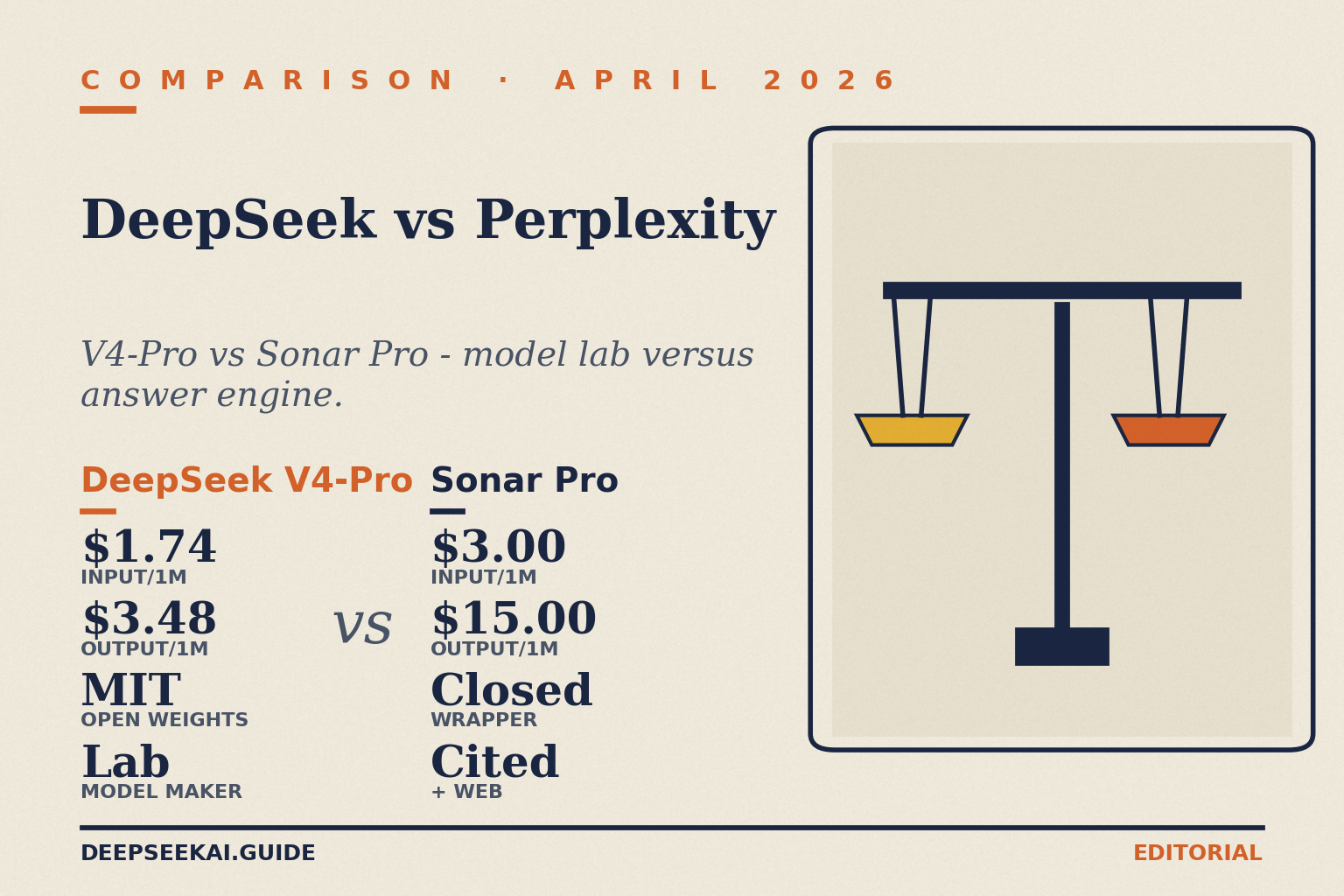

You want a straight answer: should you build on DeepSeek or Perplexity, and which one should you open when you have a question? The DeepSeek vs Perplexity choice trips people up because the two products solve different problems. DeepSeek is a model lab: it ships open-weight large language models and an OpenAI-compatible API for developers. Perplexity is an answer engine: it wraps third-party models (and its own Sonar variants) in a retrieval pipeline that cites the web. Both have a chat UI, both have an API, and both have a consumer tier (DeepSeek’s is free, Perplexity Pro is $20/month), which is where the confusion starts. This article gives you a verdict up front, an at-a-glance table, head-to-head sections on coding, reasoning, writing, pricing and privacy, plus a worked cost example so you can choose with numbers, not vibes.

Verdict: who wins, for whom

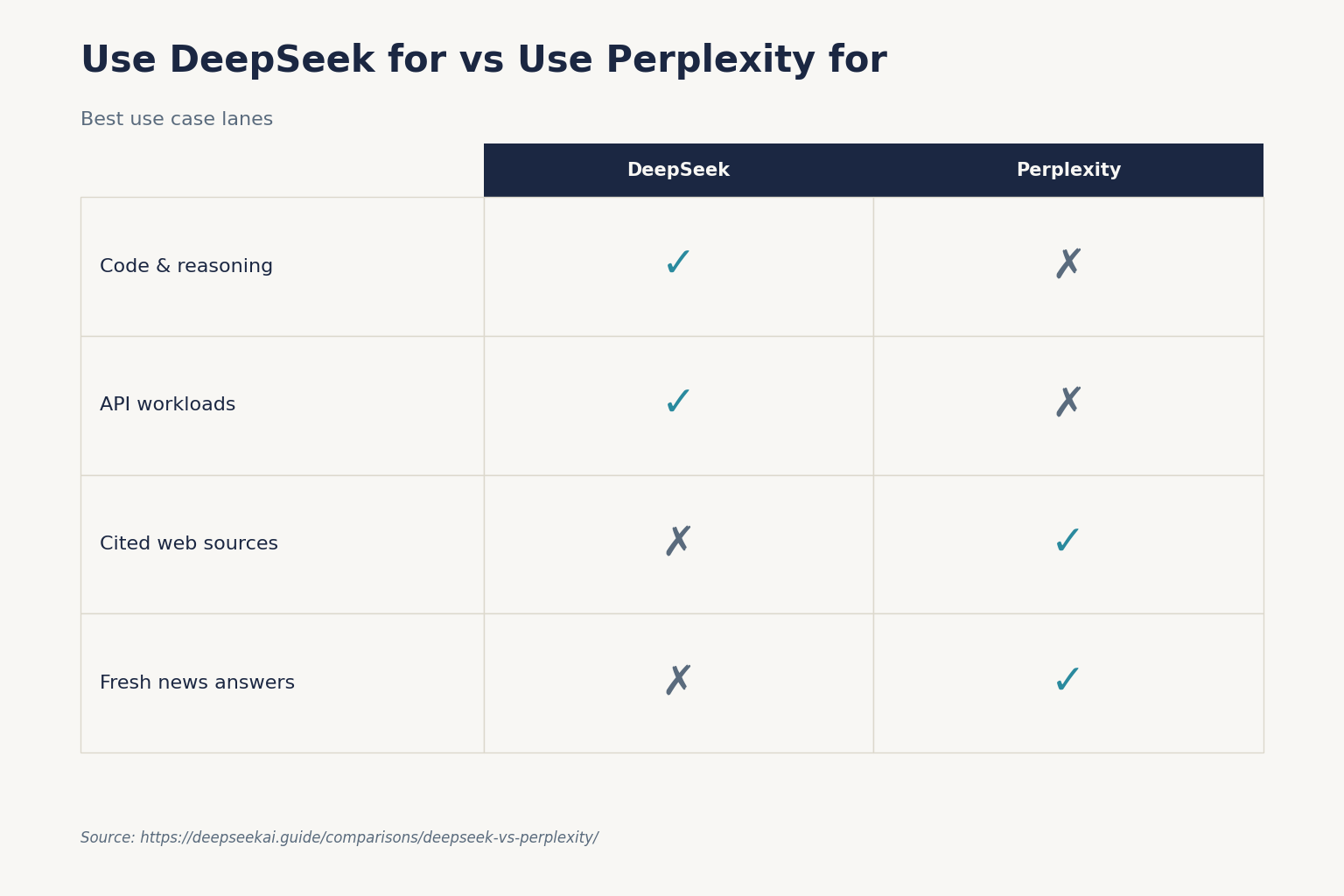

For developers building a product: DeepSeek wins on raw price and on output quality per dollar. V4-Flash undercuts every Sonar tier on token cost, and V4-Pro competes with frontier models at a fraction of the spend. Pick DeepSeek if you can supply your own retrieval, or if your workload is generation-heavy (code, long-form writing, structured extraction).

For researchers, analysts and journalists who live in a chat UI: Perplexity wins. The product is purpose-built around web-grounded answers with citations, and the Sonar API bakes search into every response — no retrieval pipeline to build. Pick Perplexity if you need fresh web data with footnotes more than you need the cheapest tokens.

For the budget-conscious individual who wants one chatbot: DeepSeek’s chat is free with no published daily message cap, while Perplexity’s free tier limits Pro searches. If you mostly write, code, and reason, DeepSeek. If you mostly research current events, Perplexity Pro at $20/month.

At-a-glance: DeepSeek vs Perplexity

The table below covers the surface most readers actually compare. Where a number is competitor-side, it carries a citation date.

| Feature | DeepSeek | Perplexity |

|---|---|---|

| Primary product | Open-weight models + OpenAI-compatible API | Answer engine with retrieval + Sonar API |

| Current flagship (chat) | DeepSeek V4 (Pro and Flash, released 2026-04-24) | Routes to GPT-5 / Claude / Gemini frontier models depending on plan |

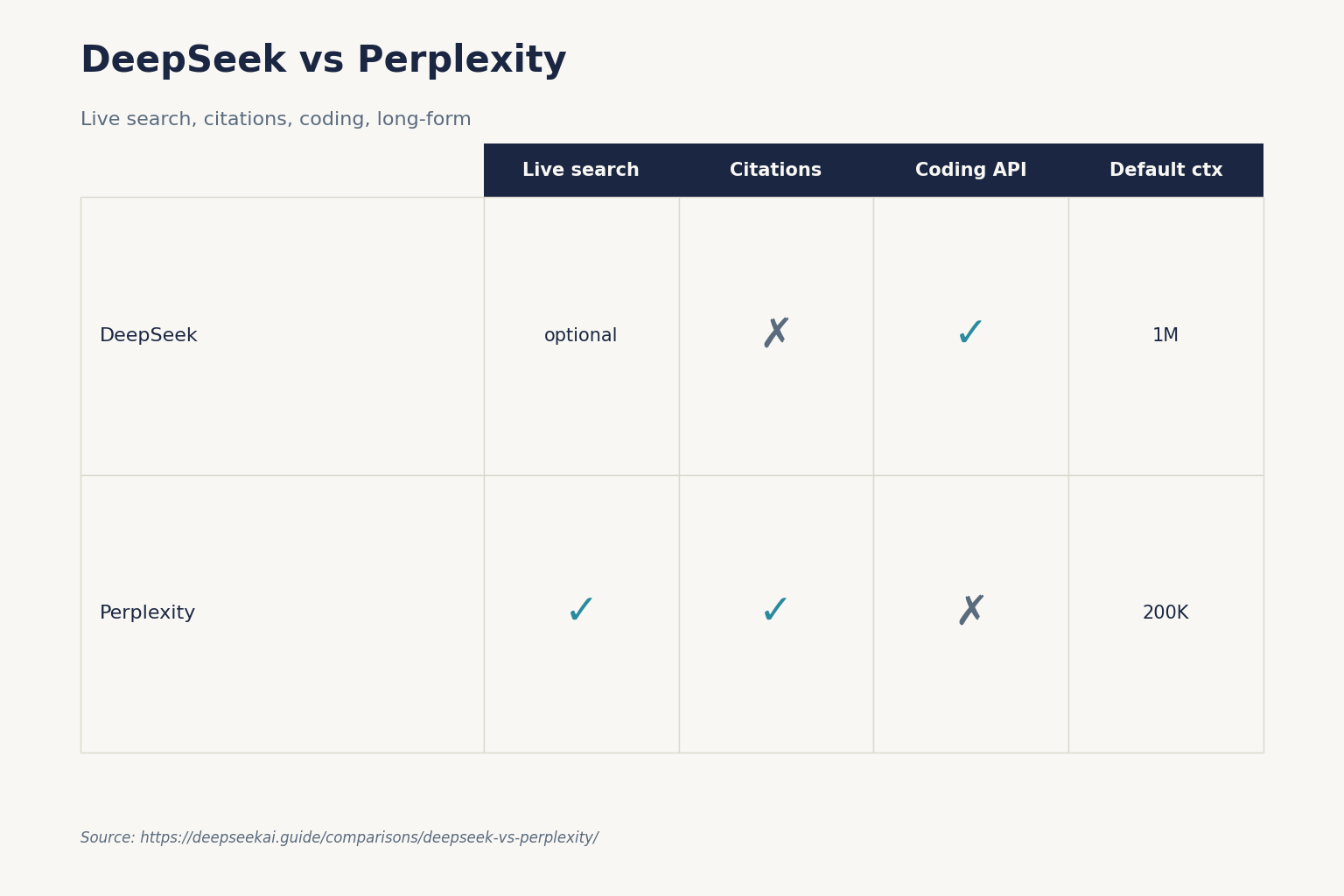

| Web search built in? | Optional, via tool calling — you supply the retriever | Yes, on every Sonar request, with citations |

| Default chat context window | 1,000,000 tokens (output up to 384,000) | Up to 200K tokens on Sonar Pro |

| Cheapest API tier (input miss / output, per 1M) | V4-Flash: $0.14 / $0.28 | Sonar: $1 / $1 |

| Frontier API tier (input miss / output, per 1M) | V4-Pro: $0.435 / $0.87 promo (list $1.74 / $3.48) | Sonar Pro: $3.00 / $15.00 |

| Consumer subscription | Free tier; no published cap | $20/month Pro (or $200/year) |

| Open weights | Yes — V4-Pro, V4-Flash, V3.2, R1 all MIT-licensed | No — proprietary stack |

| Reasoning mode | Thinking via reasoning_effort, returns reasoning_content alongside the final content |

Reasoning tokens used for step-by-step problem-solving on Sonar Deep Research |

| Best at | Coding, long-form generation, low-cost agents | Web research, fact-checking, current events |

Architecture and what each one actually is

This is the fork in the road. The two companies ship products that look similar from the outside but solve different problems internally.

DeepSeek: a model lab with an OpenAI-compatible API

DeepSeek trains its own models, publishes their weights to Hugging Face, and exposes them through a stateless chat API. The current generation is DeepSeek V4, released April 24, 2026, in two open-weight Mixture-of-Experts tiers: deepseek-v4-pro (1.6T total / 49B active parameters) and deepseek-v4-flash (284B / 13B active). Both ship under the MIT license. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint at https://api.deepseek.com. DeepSeek also exposes an Anthropic-compatible surface at the same base URL, so either SDK works by swapping base_url and api_key.

Thinking mode is a request parameter — not a separate model — controlled by reasoning_effort with an extra_body={"thinking": {"type": "enabled"}} flag. Legacy IDs deepseek-chat and deepseek-reasoner still work but route to deepseek-v4-flash and retire on 2026-07-24 at 15:59 UTC. For the full model lineage, see the DeepSeek V4 page.
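As a rough sketch of how that parameter-based switch might look from client code, the helper below builds the keyword arguments for a chat call with thinking on or off. The helper name build_chat_kwargs is hypothetical. The reasoning_effort and extra_body values are the ones described above and should be checked against the current DeepSeek documentation before use.

```python
def build_chat_kwargs(model, messages, thinking=False, effort="high"):
    """Build keyword arguments for a chat completion request.

    Thinking is toggled per request, not by choosing a different model ID.
    """
    kwargs = {"model": model, "messages": messages}
    if thinking:
        # Parameter names follow the description in this article (assumption:
        # they match the live API at the time you read this).
        kwargs["reasoning_effort"] = effort
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


question = [{"role": "user", "content": "Is 9.11 larger than 9.9?"}]
fast = build_chat_kwargs("deepseek-v4-flash", question)
deep = build_chat_kwargs("deepseek-v4-flash", question, thinking=True, effort="max")
print(sorted(deep))
```

With the OpenAI SDK, the resulting dict could be passed as client.chat.completions.create(**deep), so the same model ID serves both the fast and the thinking path.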

Perplexity: an answer engine that orchestrates other people’s models

Perplexity does not train frontier LLMs. It runs a proprietary retrieval pipeline and routes generation to a mix of third-party models (GPT-5, Claude, Gemini) plus its own Sonar family, which is built on fine-tuned Llama derivatives. The product’s hook is that every Sonar Online and Sonar Pro request runs live web retrieval before generation and returns citations alongside the answer. There’s no separate per-search charge on those requests; search is baked into the per-token price.

The consumer subscription and the API are different products. The “unlimited” usage included in Pro and Max subscriptions applies only to the chat interface — not the API. If you want programmatic access, you buy Sonar tokens separately.

Coding

This is where the gap is widest. Perplexity is not built for code. Its Sonar models are tuned for grounded question answering; they do not match dedicated coding models on benchmarks like SWE-Bench Verified or Terminal-Bench, and the product UI does not offer the streaming, FIM completion, or chat prefix completion that developers want.

DeepSeek leans the other way. V4-Pro shipped with strong agentic-coding numbers on its 2026-04-24 announcement, and V4-Flash gives you a credible coder for pennies. Both support tool calling, JSON mode, streaming, and FIM completion (Beta — requires thinking: {"type": "disabled"}). For a deeper read on how DeepSeek stacks up against the dedicated coding incumbent, see the DeepSeek vs GitHub Copilot piece, or the DeepSeek for coding use-case page.

Reasoning

Both products expose a reasoning mode, but they expose it differently.

- DeepSeek: enable thinking on either V4 tier with reasoning_effort="high" (or "max" for maximum effort). The API returns reasoning_content alongside the final content, so you can log, audit, or hide the trace as you like. Same endpoint, same model, one extra parameter.

- Perplexity: uses a separate Sonar Reasoning Pro SKU for deep analytical tasks. Reasoning tokens, used for step-by-step logical reasoning and problem-solving, apply only to the Sonar Deep Research model. Reasoning here is fused with retrieval rather than offered as a clean parameter on every model.
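To make the "log, audit, or hide" point concrete, here is a minimal sketch of separating the trace from the answer on the DeepSeek side. It assumes the response message exposes reasoning_content and content as attributes, as described above. The split_reasoning helper and the stand-in message object are hypothetical, so no network call is needed to try it.

```python
from types import SimpleNamespace


def split_reasoning(message):
    """Return (trace, answer) from a chat message.

    The trace is None when the request ran without thinking enabled
    or the field is absent.
    """
    trace = getattr(message, "reasoning_content", None)
    return trace, message.content


# Stand-in for resp.choices[0].message, so the sketch runs offline.
fake_message = SimpleNamespace(
    reasoning_content="6 * 7 is 42, so the answer is 42.",
    content="42",
)

trace, answer = split_reasoning(fake_message)
print(answer)          # show the user only the final answer
audit_log = [trace]    # keep the trace for later review
```

Keeping the trace out of the user-facing string and in a separate log is one possible design; you could equally drop it or display it.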

If your reasoning needs to chew on a corpus you already control (a codebase, a knowledge base, a long document), DeepSeek’s 1M-token context plus thinking mode is the cleaner build. If your reasoning needs fresh web evidence at every step, Perplexity’s reasoning tier saves you the retrieval engineering.

Writing

For long-form writing without web grounding, DeepSeek is the better tool. The 1M-token context tolerates long source documents, the recommended temperature settings are well-documented (1.5 for creative writing, 1.3 for general conversation), and V4-Flash output at $0.28 per million tokens lets you regenerate freely. See DeepSeek for writing for prompts and workflows.

For citation-backed writing (articles where you need footnotes the editor can verify), Perplexity is purpose-built. You ask, it cites, you keep the sources in the response metadata. The trade-off is cost: Sonar Pro pricing starts at $3.00 per million input tokens and $15.00 per million output tokens. Against V4-Flash at $0.14 and $0.28, that is roughly 21× on input miss and over 50× on output.

Pricing

The headline numbers tell most of the story. DeepSeek V4 pricing (as of April 2026, per 1M tokens):

- V4-Flash: $0.0028 cache hit / $0.14 cache miss / $0.28 output

- V4-Pro: $0.0145 / $1.74 / $3.48 (list); $0.003625 / $0.435 / $0.87 (75% promo through 2026-05-31)

Perplexity Sonar pricing (per 1M tokens):

- Sonar: $1 input / $1 output

- Sonar Pro: $3.00 input / $15.00 output with a 200K token context window

- Search tool fee: if a model makes 3 web searches during a request, you pay model tokens + (3 × $0.005) for searches — but only on tool-style search calls; standard Sonar bakes search into the token price.
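The search-fee rule in the last bullet is simple arithmetic, and a short sketch shows how it combines with token charges. The request_cost function is hypothetical. The $0.005 fee comes from the list above, and the Sonar Pro token rates are used purely for illustration, since this article does not specify which models bill the per-search fee.

```python
SEARCH_FEE_USD = 0.005  # per tool-style search call, as quoted in the list above


def request_cost(input_tokens, output_tokens,
                 input_price_per_m, output_price_per_m, searches=0):
    """Cost of one request in USD: token charges plus per-search fees."""
    token_cost = (input_tokens * input_price_per_m
                  + output_tokens * output_price_per_m) / 1_000_000
    return token_cost + searches * SEARCH_FEE_USD


# Illustration only: 2,200 input and 300 output tokens at $3.00 / $15.00
# per 1M tokens, with 3 tool-style searches.
cost = request_cost(2_200, 300, 3.00, 15.00, searches=3)
print(f"${cost:.4f}")  # $0.0261
```

In this example the three searches ($0.015) cost more than the tokens ($0.0111), so for short requests the search count can matter more than the token rate.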

For consumer plans: DeepSeek’s chat is free, while Perplexity Pro is $20/month (or $200/year) with unlimited searches, full model selection, unlimited file uploads, and $5/month in API credits. Perplexity also sells a Max plan at $200/user/month. For a current pricing reference on the DeepSeek side, see DeepSeek API pricing.

Worked example: 1M API calls per month

Here is the same workload — 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response — costed against deepseek-v4-flash. Always enumerate the three buckets so you do not double-count cache hits.

    Cached input   : 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60
    Uncached input :   200 × 1,000,000 =   200,000,000 tokens × $0.14/M   = $28.00
    Output         :   300 × 1,000,000 =   300,000,000 tokens × $0.28/M   = $84.00
                                                                            --------
    Total                                                                   $117.60

Now the same workload on Perplexity Sonar (no caching tier, search bundled). Sonar lists $1 input and $1 output per 1M tokens, so:

    Input  : 2,200 × 1,000,000 = 2,200,000,000 tokens × $1.00/M = $2,200.00
    Output :   300 × 1,000,000 =   300,000,000 tokens × $1.00/M = $300.00
                                                                  ----------
    Total                                                         $2,500.00

That is roughly 21× the DeepSeek bill, but Sonar runs live web retrieval inside that price. If your workload genuinely needs fresh web data on every call, Perplexity may still come out ahead once you price in a separate search provider on the DeepSeek side. Perplexity’s pitch is integrated retrieval at a lower total cost than stitching together GPT-5.4 with a search tool: a GPT-5.4 agent call with Bing web search typically costs $2.50 / $15.00 per 1M tokens plus roughly $10 per 1,000 search queries.
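If you want to rerun the comparison with your own traffic shape, the same three-bucket arithmetic can be sketched in a few lines. The PRICES table copies the per-1M-token rates quoted in this article. The monthly_cost function and the dictionary layout are hypothetical, and Sonar is modelled as billing cached input at its normal input rate because no cache tier is listed for it.

```python
# USD per 1M tokens, as quoted in this article (re-check before relying on them).
PRICES = {
    "deepseek-v4-flash": {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28},
    # Assumption: no cache tier, so cached input is billed at the input rate.
    "sonar": {"cache_hit": 1.00, "cache_miss": 1.00, "output": 1.00},
}


def monthly_cost(model, calls, cached_in, uncached_in, out):
    """Total USD for `calls` requests with the given per-call token counts."""
    p = PRICES[model]
    per_call = (cached_in * p["cache_hit"]
                + uncached_in * p["cache_miss"]
                + out * p["output"])
    return calls * per_call / 1_000_000


deepseek = monthly_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
sonar = monthly_cost("sonar", 1_000_000, 2_000, 200, 300)
print(f"{deepseek:,.2f}")        # 117.60
print(f"{sonar:,.2f}")           # 2,500.00
print(f"{sonar / deepseek:.1f}") # 21.3
```

Changing the cached, uncached and output token counts shows how quickly the ratio moves: the cheaper the cached prefix, the more the output bucket dominates the DeepSeek bill.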

A minimal DeepSeek call

Here is a minimal Python example using the OpenAI SDK against DeepSeek V4-Flash:

    from openai import OpenAI

    # Point the OpenAI SDK at DeepSeek's OpenAI-compatible endpoint.
    client = OpenAI(base_url="https://api.deepseek.com", api_key="...")

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[
            {"role": "system", "content": "You are a research assistant."},
            {"role": "user", "content": "Summarise the V4 release."},
        ],
        temperature=1.3,  # the value suggested for general conversation
        max_tokens=1024,  # cap on the length of the reply
    )

    # The reply text lives on the first choice's message.
    print(resp.choices[0].message.content)

For the full developer reference, see the DeepSeek API documentation.

Privacy and data handling

Both products process data on remote servers. The differences matter:

- DeepSeek: API is stateless — clients must resend conversation history with every request. Web/app keeps session history server-side. Data is processed under Chinese law, which several jurisdictions have flagged. Italy’s Garante ordered the app blocked in January 2025; several US states have restricted government-device use. There is no documented federal US ban as of this article’s date. The mitigation, for sensitive workloads, is to run the open-weight V4 models on your own hardware. See DeepSeek privacy for a deeper read.

- Perplexity: US-based, with enterprise plans that add audit logs, SCIM and configurable retention, though those three features are only available to organizations with 50+ members or one Enterprise Max user. Generation is delegated to whichever model the route lands on, which means your data can also flow to OpenAI, Anthropic or Google.
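Because the DeepSeek API is stateless, the client owns the conversation history, and that has a practical privacy upside: you decide what gets stored and what gets resent. A minimal sketch, assuming the standard role/content message format used elsewhere in this article (the Conversation class is hypothetical):

```python
class Conversation:
    """Client-side history for a stateless chat API."""

    def __init__(self, system_prompt):
        self.messages = [{"role": "system", "content": system_prompt}]

    def add_user(self, text):
        """Record a user turn and return the full payload to send."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)  # copy, so callers cannot mutate history

    def add_assistant(self, text):
        """Record the model's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": text})


chat = Conversation("You are a research assistant.")
first_payload = chat.add_user("What is a Mixture-of-Experts model?")
chat.add_assistant("A model that routes each token to a subset of experts.")
second_payload = chat.add_user("Why does that lower inference cost?")
print(len(first_payload), len(second_payload))  # 2 4
```

Each payload would go in the messages field of the next request. Trimming or redacting the list before sending is one way to limit what leaves your machine.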

Ecosystem and integrations

Perplexity has the broader consumer surface: a desktop app, iOS and Android apps, the Comet browser, and (as of February 2026) Perplexity Computer, an agentic tool that unifies AI capabilities into a single system using 19 different AI models. DeepSeek’s surface is leaner — a web chat and mobile app — but the developer ecosystem is wider because the weights are open. You can run V4 in Ollama, deploy it under Docker, integrate it with LangChain, or wire it into VS Code. Perplexity offers nothing comparable; you cannot self-host Sonar.

When to pick which

Decision rules, written in plain language:

- Pick DeepSeek if you are a developer optimising for cost-per-token, you need a frontier model with long context, you want open weights for self-hosting or compliance, or you mostly do code, math and long-form writing.

- Pick Perplexity if your work depends on cited web sources, you are a researcher or journalist using a chat UI eight hours a day, or you are building an answer engine and do not want to maintain a retrieval pipeline.

- Use both if you can: DeepSeek for code, drafting and bulk reasoning; Perplexity for fact-finding and current-events queries. The combined cost — DeepSeek free tier plus Perplexity Pro — is $20/month.

Alternatives worth considering

Neither product is the only option. If web-grounded search is the priority, look at DeepSeek vs You.com and DeepSeek vs Bing Chat. For broader context across the comparison space, browse the AI comparison hub or our list of DeepSeek alternatives. Researchers may also want our DeepSeek for research guide for prompts that close the citation gap on the DeepSeek side.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek better than Perplexity?

“Better” depends on the job. DeepSeek is better for developers who want cheap, capable tokens and open weights — V4-Flash is roughly 21× cheaper than Sonar on a like-for-like API workload before retrieval is priced in. Perplexity is better for researchers who need cited web answers without engineering a retrieval pipeline. For a deeper feature breakdown, see our DeepSeek vs competitors review.

Does DeepSeek search the web like Perplexity?

Not by default. The DeepSeek API is a generation surface — it does not retrieve from the open web on its own. You can build search into a DeepSeek workflow with tool calling and a search provider, or use the DeepSeek web chat’s optional search toggle. Perplexity bakes search into every Sonar request. See DeepSeek for research for grounded-research patterns on the DeepSeek side.
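A rough sketch of the tool-calling route: you describe a search function to the model in the OpenAI-style tools format, then run it yourself when the model asks for it. The tool name web_search, its fields, and the stub retriever are all hypothetical. A real build would call whichever search provider you choose.

```python
import json

# OpenAI-style function tool definition (name and fields are illustrative).
SEARCH_TOOL = {
    "type": "function",
    "function": {
        "name": "web_search",
        "description": "Search the web and return the top results.",
        "parameters": {
            "type": "object",
            "properties": {
                "query": {"type": "string", "description": "Search query"},
            },
            "required": ["query"],
        },
    },
}


def web_search(query):
    """Stub retriever: replace with a call to your own search provider."""
    return [{"title": "Example result",
             "url": "https://example.com",
             "snippet": f"Stub hit for: {query}"}]


def run_tool_call(name, arguments_json):
    """Execute one tool call requested by the model.

    Returns a JSON string suitable for the content of a tool message.
    """
    args = json.loads(arguments_json)
    if name != "web_search":
        raise ValueError(f"unknown tool: {name}")
    return json.dumps(web_search(args["query"]))


result = run_tool_call("web_search", '{"query": "DeepSeek V4 release"}')
print(json.loads(result)[0]["url"])  # https://example.com
```

In an OpenAI-compatible flow you would pass tools=[SEARCH_TOOL] on the request, and when the reply contains tool calls, send each run_tool_call result back as a message with role "tool" and the matching tool_call_id. Citations then come from whatever your retriever returns, not from the model.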

Can I use DeepSeek and Perplexity together?

Yes, and many practitioners do. A common split: Perplexity Pro for live web research and citation gathering in the browser, DeepSeek V4 for drafting, coding and bulk reasoning through the API. Total monthly cost can stay under $25 for a single user. Our DeepSeek beginners guide covers the chat side; the DeepSeek API documentation covers programmatic access.

How much does the Perplexity API cost vs the DeepSeek API?

As of April 2026, DeepSeek V4-Flash lists $0.14 input miss / $0.28 output per 1M tokens, and V4-Pro lists $1.74 / $3.48 (currently $0.435 / $0.87 during the 75% promo through 2026-05-31). Perplexity Sonar lists $1.00 / $1.00 and Sonar Pro lists $3.00 / $15.00 per 1M tokens, with web search bundled into the token price. Always re-check before integrating — see the live DeepSeek API pricing page.

Why is Perplexity more expensive than DeepSeek?

Two reasons. First, Perplexity does not train its own frontier models; it pays OpenAI, Anthropic and others for inference, and that cost flows through to its rate card. Second, every Sonar request runs live web retrieval, which has real infrastructure cost. DeepSeek owns its models and ships them open-weight under MIT, which gives it room to price aggressively. Read “Is DeepSeek open source?” for context.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.