What DeepSeek Features Actually Do: A V4-Era Walkthrough

If you have landed here trying to work out which DeepSeek features matter, which are marketing gloss, and which actually change how you build, this guide is for you. The DeepSeek lineup shifted on April 24, 2026 with the V4 Preview, so a lot of older write-ups are now wrong about model IDs, context length, thinking modes and pricing. I run V4-Pro and V4-Flash in production, ran V3, V3.2 and R1 before that, and have spent enough hours alongside ChatGPT, Claude and Gemini to know where DeepSeek wins and where it does not. What follows is a feature-by-feature walkthrough — chat interface, model tiers, API surface, reasoning modes, long context, tool use, JSON mode and pricing — with the specifics you need to make a build decision today.

The two surfaces: chat app and API

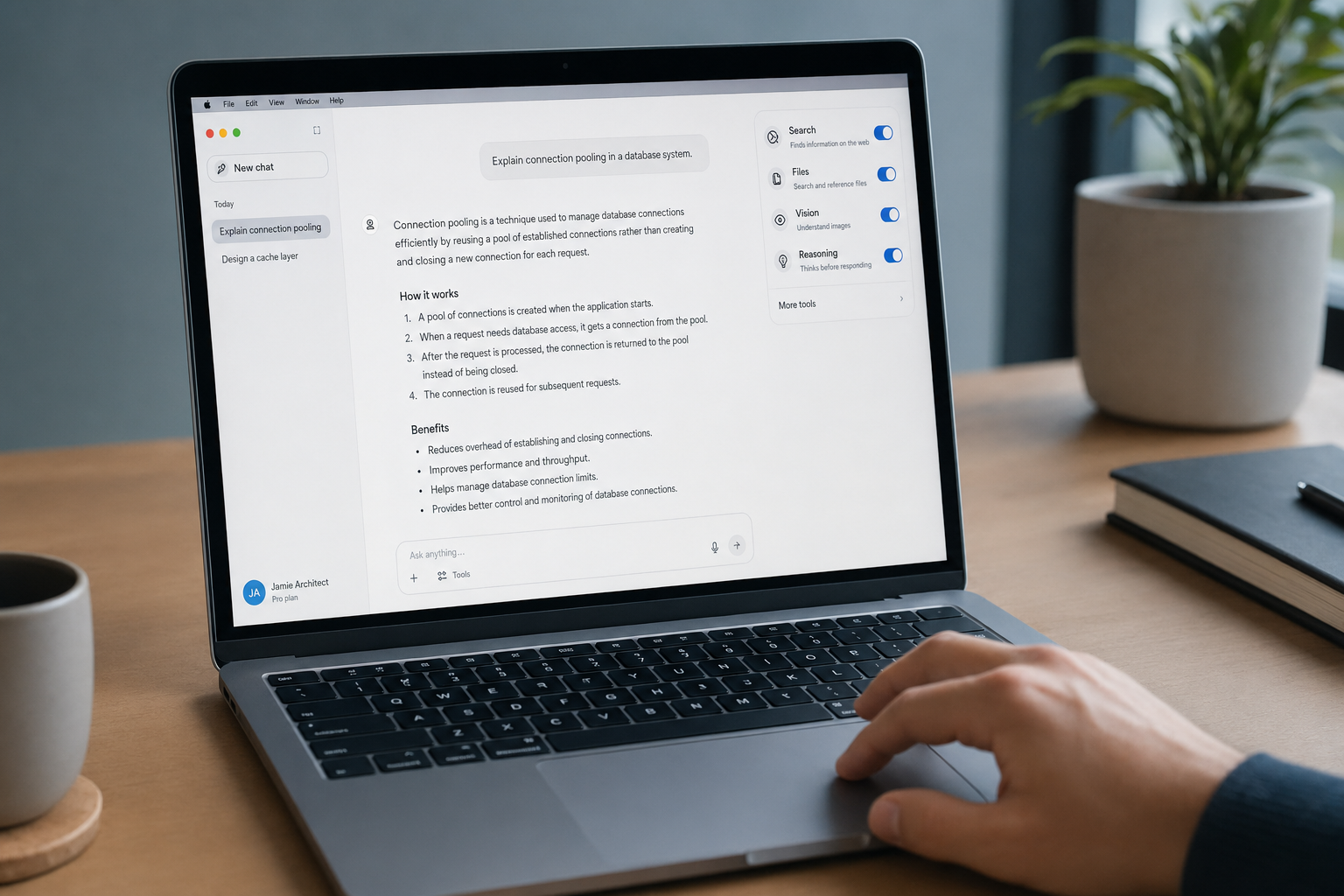

DeepSeek ships in two very different shapes, and conflating them is the most common mistake I see in tutorials. The web chat at chat.deepseek.com and the mobile apps are a consumer-style product: you sign in, conversations are saved server-side, and a DeepThink toggle switches the assistant between fast and reasoning modes. The API is a separate, stateless surface — you send messages, you get a response, and your client must resend the full conversation history on the next call. Both surfaces are now powered by V4 by default.

This split matters when you read older guides. Many describe behaviour you only get in the chat app (saved threads, file uploads, image attachments) as if it were an API feature. It is not. If you are building, you are working against the API and you own the state.
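Owning the state mostly means keeping the transcript on the client and resending it on every turn. The sketch below shows one way to do that bookkeeping. `Conversation` is a hypothetical helper, not part of any SDK, and the network call is left as a comment so the block runs offline.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    """Client-side transcript for a stateless chat API (hypothetical helper)."""

    system_prompt: str
    turns: list[dict[str, str]] = field(default_factory=list)

    def add_user(self, text: str) -> None:
        self.turns.append({"role": "user", "content": text})

    def add_assistant(self, text: str) -> None:
        self.turns.append({"role": "assistant", "content": text})

    def as_messages(self) -> list[dict[str, str]]:
        # The full history is sent on every request; nothing is kept server-side.
        return [{"role": "system", "content": self.system_prompt}, *self.turns]


convo = Conversation(system_prompt="You are a concise assistant.")
convo.add_user("What is a Mixture-of-Experts model?")
# reply = client.chat.completions.create(model=..., messages=convo.as_messages())
convo.add_assistant("A model that routes each token to a few expert sub-networks.")
convo.add_user("Why does that cut inference cost?")

payload = convo.as_messages()
print([m["role"] for m in payload])
```

The second user turn goes out with the system prompt and both earlier turns attached, which is exactly what the chat app does for you behind the scenes.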

The V4 model family

V4 ships as two open-weight Mixture-of-Experts models, both released as a Preview on April 24, 2026. DeepSeek-V4-Pro has 1.6T parameters (49B activated) and DeepSeek-V4-Flash has 284B parameters (13B activated), and each supports a context length of one million tokens. Code and weights are released under the MIT license, so you can self-host if your hardware budget allows it. For the deeper architecture write-up, see our dedicated DeepSeek V4 page; the tier-specific pages cover DeepSeek V4-Pro and DeepSeek V4-Flash in more detail.

Pro vs Flash — when each one earns its keep

| Feature | V4-Flash | V4-Pro |
|---|---|---|
| Total / active params | 284B / 13B | 1.6T / 49B |
| Default context | 1,000,000 tokens | 1,000,000 tokens |
| Max output | 384,000 tokens | 384,000 tokens |
| Thinking modes | non-thinking, high, max | non-thinking, high, max |
| Input price (cache hit / miss, per 1M tokens) | $0.0028 / $0.14 | $0.003625 / $0.435 promo (list $0.0145 / $1.74) |
| Output price (per 1M tokens) | $0.28 | $0.87 promo / $3.48 list |
| SWE-bench Verified | 79.0% | 80.6% |
| License (code + weights) | MIT | MIT |
| Best for | Chat, high-volume agents, RAG | Frontier coding, complex agentic work |

The Flash tier is my default. At $0.28 per 1M output tokens it is about 12.4× cheaper than Pro at the $3.48 list price, and about 3.1× cheaper than Pro at the $0.87 promotional rate. On SWE-bench Verified, Flash scores 79.0% versus Pro’s 80.6%. The main practical gaps appear on Terminal-Bench 2.0 (56.9% vs 67.9%) and SimpleQA-Verified (34.1% vs 57.9%), which suggests Flash struggles more with complex multi-step tool use and factual recall. If you are building a chat product or running batch jobs, Flash is the right starting point. Pro earns its output premium when you are running long agentic coding sessions.

Three reasoning-effort modes

This is the cleanest change in V4: thinking is a request parameter, not a separate model. Both deepseek-v4-pro and deepseek-v4-flash support three settings:

- Non-thinking (default): no step-by-step reasoning. Fastest and cheapest. Use for chat, classification, summarisation, FIM completion.

- Thinking (high): set reasoning_effort="high" plus extra_body={"thinking": {"type": "enabled"}}. The model emits a reasoning trace before the answer.

- Thinking-max: set reasoning_effort="max". Maximum compute on the problem. For this mode, DeepSeek recommends setting the context window to at least 384K tokens.

When thinking is enabled, the response returns reasoning_content alongside the final content. You can show the trace in your UI, log it for debugging, or discard it. There is no separate “reasoner” model ID to choose any more — the choice is one parameter on either tier.
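As a rough sketch, the three modes can be expressed as a small mapping from mode name to request keyword arguments, plus a reader that separates the trace from the answer. Both `thinking_kwargs` and `split_reply` are hypothetical helper names. Sending the `thinking` switch for "max" as well as "high" is an assumption here, so check the official docs before relying on it. The stand-in message object at the end replaces a real API response so the block runs offline.

```python
from types import SimpleNamespace
from typing import Any, Optional


def thinking_kwargs(mode: str) -> dict[str, Any]:
    """Map a mode name to request kwargs, following the settings listed above."""
    if mode == "non-thinking":
        return {}  # omit reasoning_effort entirely
    if mode in ("high", "max"):
        return {
            "reasoning_effort": mode,
            # Assumption: the thinking switch accompanies "max" as well as "high".
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    raise ValueError(f"unknown mode: {mode!r}")


def split_reply(message: Any) -> tuple[Optional[str], str]:
    """Return (reasoning trace or None, final answer) from a response message."""
    trace = getattr(message, "reasoning_content", None)
    return trace, message.content or ""


# Stand-in for resp.choices[0].message from a thinking-mode call.
fake_message = SimpleNamespace(reasoning_content="Compare both plans...", content="Plan B.")
trace, answer = split_reply(fake_message)
print(thinking_kwargs("high"))
print(answer)
```

In a real call the kwargs would be unpacked into `client.chat.completions.create(..., **thinking_kwargs("high"))`. Using `getattr` keeps `split_reply` safe for non-thinking responses that carry no trace.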

Legacy IDs and the migration window

If your code still calls deepseek-chat or deepseek-reasoner, you have until July 24, 2026 at 15:59 UTC to migrate; after that both IDs will be fully retired and inaccessible. Until then they route to deepseek-v4-flash in non-thinking and thinking mode respectively. The migration is a one-line model= swap; base_url does not change. Our DeepSeek API documentation notes cover the change in detail.
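A minimal sketch of a migration shim, assuming you want each legacy ID to keep the behaviour it currently routes to. `migrate_model` and `legacy_ids_available` are hypothetical helpers. The cutoff and the routing come from the paragraph above.

```python
from datetime import datetime, timezone
from typing import Optional

# Retirement time for the legacy IDs, as stated above.
LEGACY_CUTOFF = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy ID -> (V4 model ID, thinking enabled), mirroring the current routing.
LEGACY_ROUTES = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_model(model: str) -> tuple[str, Optional[bool]]:
    """Return (V4 model ID, thinking flag); None means the caller decides."""
    return LEGACY_ROUTES.get(model, (model, None))


def legacy_ids_available(now: datetime) -> bool:
    """True while the legacy IDs are still served."""
    return now < LEGACY_CUTOFF


print(migrate_model("deepseek-reasoner"))
print(legacy_ids_available(datetime(2026, 7, 1, tzinfo=timezone.utc)))
```

Note that a deepseek-reasoner caller who wants to keep the reasoning trace needs the thinking parameters as well as the new model ID, which is why the shim returns a flag alongside the name.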

The 1M-token context window — and why this one is different

A million-token context is not new in 2026: Gemini and Claude have shipped it, and earlier Chinese labs advertised it. What is genuinely different about V4 is the architecture underneath. In DeepSeek's own description, the V4 series uses a hybrid attention mechanism that combines Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to dramatically improve long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of the single-token inference FLOPs and 10% of the KV cache of DeepSeek-V3.2.

V4-Flash drops these numbers even further, to 10% of the FLOPs and 7% of the KV cache. In plain terms, V4 was designed for long context as a default rather than bolted on as a stretch goal, which is why the per-token cost stayed low even as the window grew. If you want to confirm a payload fits, our DeepSeek context length checker handles the maths.
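The fit check itself is simple arithmetic once you have token counts. The sketch below assumes the 1M window is shared by the prompt and the reserved output, which this guide does not state explicitly, and it takes the prompt token count as an input (from your tokenizer or a previous response's usage figures) rather than estimating it. `fits_context` is a hypothetical helper.

```python
CONTEXT_WINDOW = 1_000_000  # tokens, both V4 tiers
MAX_OUTPUT = 384_000        # tokens, per the comparison table


def fits_context(prompt_tokens: int, max_tokens: int) -> bool:
    """True if the prompt plus the reserved output stays inside the window.

    Assumption: input and output tokens share the same 1M window.
    """
    if not 0 < max_tokens <= MAX_OUTPUT:
        raise ValueError("max_tokens must be between 1 and 384,000")
    return prompt_tokens + max_tokens <= CONTEXT_WINDOW


print(fits_context(prompt_tokens=600_000, max_tokens=384_000))  # True
print(fits_context(prompt_tokens=700_000, max_tokens=384_000))  # False
```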

API features that matter for building

All chat requests hit POST /chat/completions, the OpenAI-compatible endpoint, at base URL https://api.deepseek.com. The API supports both the OpenAI ChatCompletions and the Anthropic API formats. That means the official OpenAI Python or Node SDKs work against DeepSeek by changing only base_url and api_key; the Anthropic SDK works the same way against the Anthropic-compatible surface. No call-site rewrites.

Minimal Python example

```python
from openai import OpenAI

# Only base_url and api_key differ from a stock OpenAI client.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[
        {"role": "system", "content": "You are a senior reviewer."},
        {"role": "user", "content": "Audit this migration plan."},
    ],
    # Thinking (high): both settings are sent together.
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
    temperature=0.0,
    max_tokens=4096,
)

# Final answer only; the trace is in message.reasoning_content.
print(resp.choices[0].message.content)
```

For the full setup walkthrough, see DeepSeek API getting started and get a DeepSeek API key.

Parameters worth knowing

- temperature: 0.0 for code/maths, 1.0 for data analysis, 1.3 for chat/translation, 1.5 for creative writing. These are DeepSeek’s own recommended defaults.

- top_p: nucleus sampling. Pair with temperature, do not stack aggressively.

- max_tokens: output cap. Can go up to 384,000 in V4. Always set high enough that JSON cannot truncate.

- reasoning_effort: V4 only. "high" or "max"; omit for non-thinking mode.

- response_format={"type": "json_object"}: JSON mode. Designed to return valid JSON, not guaranteed; the prompt should include the word “json” plus a small example schema, and the API may occasionally return empty content. Treat both as failure cases your client must handle.

- tools / tool_choice: function calling in OpenAI-compatible format, supported in both thinking and non-thinking modes.

- stream=true: server-sent events. With thinking enabled, reasoning content streams alongside final content.
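Since JSON mode can return empty or invalid content, the client needs a validation and retry path. The following is a minimal sketch of that handling. `parse_json_reply` and `call_with_retry` are hypothetical helpers, and `fetch` stands in for whatever function makes the API call and returns the message content, so the block runs without a network connection.

```python
import json
from typing import Any, Callable, Optional


def parse_json_reply(content: Optional[str]) -> Optional[dict[str, Any]]:
    """Return the parsed object, or None for empty, truncated, or non-object replies."""
    if not content or not content.strip():
        return None
    try:
        parsed = json.loads(content)
    except json.JSONDecodeError:
        return None
    return parsed if isinstance(parsed, dict) else None


def call_with_retry(fetch: Callable[[], Optional[str]], attempts: int = 3) -> dict[str, Any]:
    """Call `fetch` until it yields a valid JSON object or attempts run out."""
    for _ in range(attempts):
        result = parse_json_reply(fetch())
        if result is not None:
            return result
    raise RuntimeError(f"no valid JSON after {attempts} attempts")


# Simulated replies: empty content, truncated JSON, then a valid object.
replies = iter(["", '{"status": "ok"', '{"status": "ok"}'])
result = call_with_retry(lambda: next(replies))
print(result)  # {'status': 'ok'}
```

Treating a top-level array or string as a failure is a design choice for this sketch, on the assumption that json_object mode is meant to return an object. Relax the `isinstance` check if your schema differs.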

FIM (fill-in-the-middle) completion and Chat Prefix Completion both ship as Beta features; FIM is non-thinking mode only.
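For the tools / tool_choice parameter listed above, the client side has two jobs: declare the tool in the OpenAI function-calling format, and run whatever calls the model requests. The sketch below shows both. The tool `get_row_count`, its stand-in data, and the `run_tool_calls` helper are all hypothetical, and a fake tool call replaces a real API response so the block runs offline.

```python
import json
from types import SimpleNamespace
from typing import Any, Callable

# Tool declaration in the OpenAI function-calling format (passed as tools=TOOLS).
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_row_count",  # hypothetical tool
        "description": "Return the number of rows in a named table.",
        "parameters": {
            "type": "object",
            "properties": {"table": {"type": "string"}},
            "required": ["table"],
        },
    },
}]


def get_row_count(table: str) -> int:
    return {"users": 1200, "orders": 5400}.get(table, 0)  # stand-in data


HANDLERS: dict[str, Callable[..., Any]] = {"get_row_count": get_row_count}


def run_tool_calls(tool_calls: list[Any]) -> list[dict[str, str]]:
    """Execute each requested call and build the `tool` messages to send back."""
    results = []
    for call in tool_calls:
        args = json.loads(call.function.arguments)  # arguments arrive as a JSON string
        output = HANDLERS[call.function.name](**args)
        results.append({
            "role": "tool",
            "tool_call_id": call.id,
            "content": json.dumps(output),
        })
    return results


# Stand-in for resp.choices[0].message.tool_calls[0].
fake_call = SimpleNamespace(
    id="call_1",
    function=SimpleNamespace(name="get_row_count", arguments='{"table": "users"}'),
)
tool_messages = run_tool_calls([fake_call])
print(tool_messages)
```

In a real loop, the assistant message that carried the tool calls is appended to the history first, followed by these `tool` messages, and the whole history is sent again so the model can write its final answer.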

Context caching is automatic — but you still pay for the miss

Repeated prefixes (a long system prompt, a fixed knowledge block) are detected by the provider and billed at the cache-hit tier. The savings are large, but there is a footgun: the tokens in each new user message are not part of the cached prefix, so they are still billed at the cache-miss rate. Worked example for V4-Flash, 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message and a 300-token response:

| Component | Tokens | Rate (per 1M) | Cost |
|---|---|---|---|
| Cached input | 2,000 × 1,000,000 = 2,000,000,000 | $0.0028 | $5.60 |
| Uncached input | 200 × 1,000,000 = 200,000,000 | $0.14 | $28.00 |
| Output | 300 × 1,000,000 = 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

The same workload on V4-Pro lands at $1,421.00 at list prices, roughly 12× the Flash bill, or at $355.25 at the promotional rates, roughly 3×. DeepSeek context caching walks through the cache-key rules; the DeepSeek pricing calculator automates the maths. Pricing is as of April 2026; confirm on the official pricing page before committing to production volumes.
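The worked example above can be reproduced in a few lines, which makes it easy to rerun for your own traffic shape. This is a sketch under the same simplification as the example: the whole system prompt is billed at the cache-hit rate on every call. `Rates` and `workload_cost` are hypothetical names, and the rates are copied from the comparison table.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens."""

    cache_hit: float
    cache_miss: float
    output: float


# Rates copied from the comparison table (April 2026).
FLASH = Rates(0.0028, 0.14, 0.28)
PRO_LIST = Rates(0.0145, 1.74, 3.48)
PRO_PROMO = Rates(0.003625, 0.435, 0.87)


def workload_cost(rates: Rates, calls: int, cached: int, uncached: int, output: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached * rates.cache_hit
        + uncached * rates.cache_miss
        + output * rates.output
    ) / 1_000_000
    return round(per_call * calls, 2)


shape = dict(calls=1_000_000, cached=2_000, uncached=200, output=300)
for name, rates in [("Flash", FLASH), ("Pro list", PRO_LIST), ("Pro promo", PRO_PROMO)]:
    print(f"{name}: ${workload_cost(rates, **shape):,.2f}")
```

Output tokens account for $84.00 of the $117.60 Flash total in this shape, so trimming response length usually saves more than tuning the cached prefix.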

Performance: what V4 actually does well

The Hugging Face model card reports the headline numbers from DeepSeek’s own evaluation. For V4-Pro: SWE-bench Verified 80.6, MMLU-Pro 87.5, GSM8K 92.6, GPQA Diamond 90.1 and HLE 37.7. Independent reviewers confirm the agentic-tool numbers: on coding benchmarks, DeepSeek V4-Pro leads Claude Opus 4.6 on Terminal-Bench 2.0 (67.9% vs 65.4%), LiveCodeBench (93.5% vs 88.8%) and Codeforces rating (3206 vs no reported score). Claude Opus 4.6 holds a marginal lead on SWE-bench Verified (80.8% vs 80.6%), and a meaningful lead on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%).

The honest read: V4-Pro is competitive with the frontier on coding and agentic work, and behind on broad world-knowledge tasks like HLE and SimpleQA. In an announcement on social media, DeepSeek said V4-Pro beats all rival open models for maths and coding and trails only Google’s Gemini 3.1-Pro, a closed model, for world knowledge. It added that the Pro version’s performance falls only “marginally short” of OpenAI’s GPT-5.4 and Gemini 3.1-Pro. If your workload is software engineering or autonomous agents, the value is exceptional. If it is broad factual recall, Gemini still leads on the benchmarks DeepSeek itself reports.

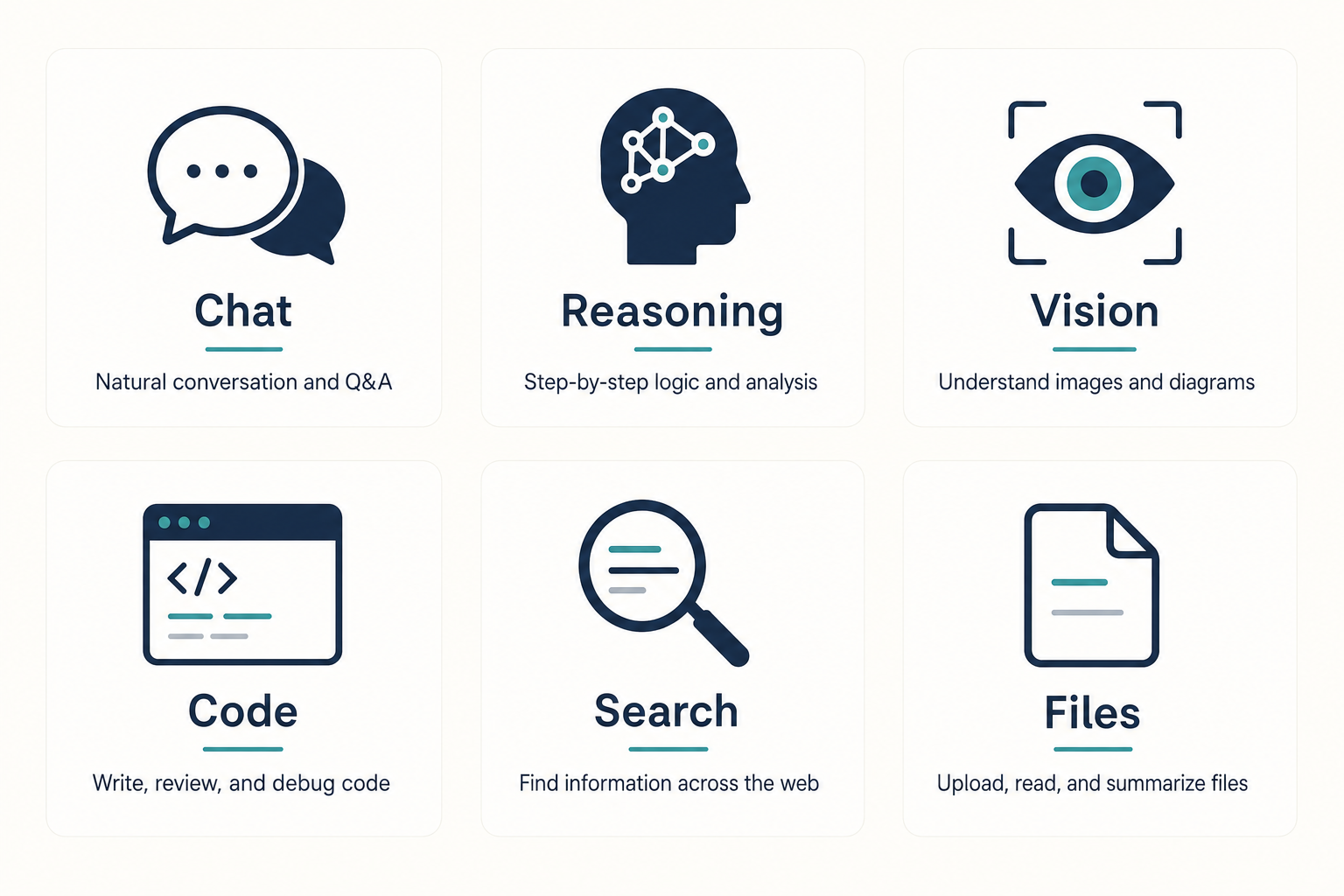

How to access DeepSeek

- Web chat — chat.deepseek.com, with Instant Mode (Flash) and Expert Mode (Pro). See our DeepSeek chat walkthrough.

- Mobile apps — official iOS and Android apps with the same V4 backend.

- API — covered above; stateless, OpenAI- and Anthropic-compatible.

- Open weights — both V4 models are published on Hugging Face under MIT for code and weights.

- Local install — feasible for Flash on serious hardware; see our guide on whether DeepSeek is open source for the licensing detail.

What DeepSeek does not do well

Three honest weaknesses you should plan around:

- Broad world knowledge. V4-Pro’s HLE score of 37.7 trails GPT-5.4 and Gemini 3.1-Pro by a few points. For research-heavy assistants where breadth matters more than code, the gap is real.

- Multimodal. V4 is a text-only release. DeepSeek said the current version focuses on text, but it is preparing a multimodal AI roadmap. If you need image input today, look elsewhere.

- Regulatory exposure. Several jurisdictions have restricted DeepSeek on government devices over the past year, and data is processed in China. Read our DeepSeek privacy note before deploying in regulated industries.

How V4 compares to alternatives

If you are weighing options, the most useful head-to-heads are DeepSeek vs Claude for coding and agent work, DeepSeek vs ChatGPT for general assistant use and DeepSeek vs Gemini when you need strong factual recall. The wider DeepSeek beginner guides hub collects the rest.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

What are the main DeepSeek features in V4?

The headline DeepSeek features in V4 are a 1M-token default context, three reasoning-effort modes (non-thinking, high, max) on both V4-Pro and V4-Flash, JSON mode, tool calling, streaming, automatic context caching, and Anthropic-compatible plus OpenAI-compatible API surfaces. Both V4 models are open-weight under MIT. See our DeepSeek capabilities overview for the full list.

How does DeepSeek’s thinking mode work?

Thinking is a request parameter on either deepseek-v4-pro or deepseek-v4-flash, not a separate model ID. Set reasoning_effort="high" plus extra_body={"thinking": {"type": "enabled"}} to enable it; use "max" for maximum effort. The response returns reasoning_content alongside the final content. Our DeepSeek prompt engineering guide covers when each mode is worth the extra latency.

Is DeepSeek free to use?

The web chat at chat.deepseek.com is free with a sign-in, and you can try both models there via Instant Mode or Expert Mode. The API is paid per token. DeepSeek may offer a granted balance, a small promotional credit that can expire, so check the billing console for current offers. See is DeepSeek free for the full breakdown.

Does the DeepSeek API remember conversations?

No. The API is stateless — every request to POST /chat/completions must include the full message history, with roles system, user, and assistant. The web chat and mobile apps maintain session history for you, but that is a feature of the app surface, not the API. Our DeepSeek API best practices notes cover how to manage conversation state cleanly on the client.

What is the difference between DeepSeek V4-Pro and V4-Flash?

V4-Pro has 1.6T total parameters (49B active) and is the frontier-tier model; V4-Flash has 284B total (13B active) and is the cost-efficient default. Both share the 1M-token context, the three thinking modes, and the same feature set. At list price, Pro charges roughly 12× more per output token (about 3× at the current promotional rate). For most workloads Flash is the right pick; see our AI comparison hub for tier-by-tier breakdowns.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.