What DeepSeek Can Actually Do: V4 Capabilities Tested

If you’re trying to work out whether DeepSeek can replace Claude or GPT-5 in your stack, the answer changed on April 24, 2026. That is when DeepSeek shipped V4 Preview as two open-weight models — `deepseek-v4-pro` and `deepseek-v4-flash` — and the DeepSeek capabilities reset that came with them is the biggest shift since R1. Million-token context became the default, thinking mode became a parameter rather than a separate model, and prices stayed at a fraction of frontier closed-source rates. This guide is what I wish I’d had on launch day: every capability that matters in production, with the benchmark numbers, pricing math, and limitations stated plainly so you can decide what to build on.

The short answer: what DeepSeek can do in April 2026

DeepSeek today is a family of two frontier-adjacent open-weight models with a chat app, a developer API, and a Hugging Face release. DeepSeek-V4-Pro has 1.6T parameters (49B activated) and DeepSeek-V4-Flash has 284B parameters (13B activated) — both supporting a context length of one million tokens. Both are released under the MIT license, both support a three-way thinking-mode switch, and both expose the same OpenAI- and Anthropic-compatible API surface.

In practical terms, here is what that buys you:

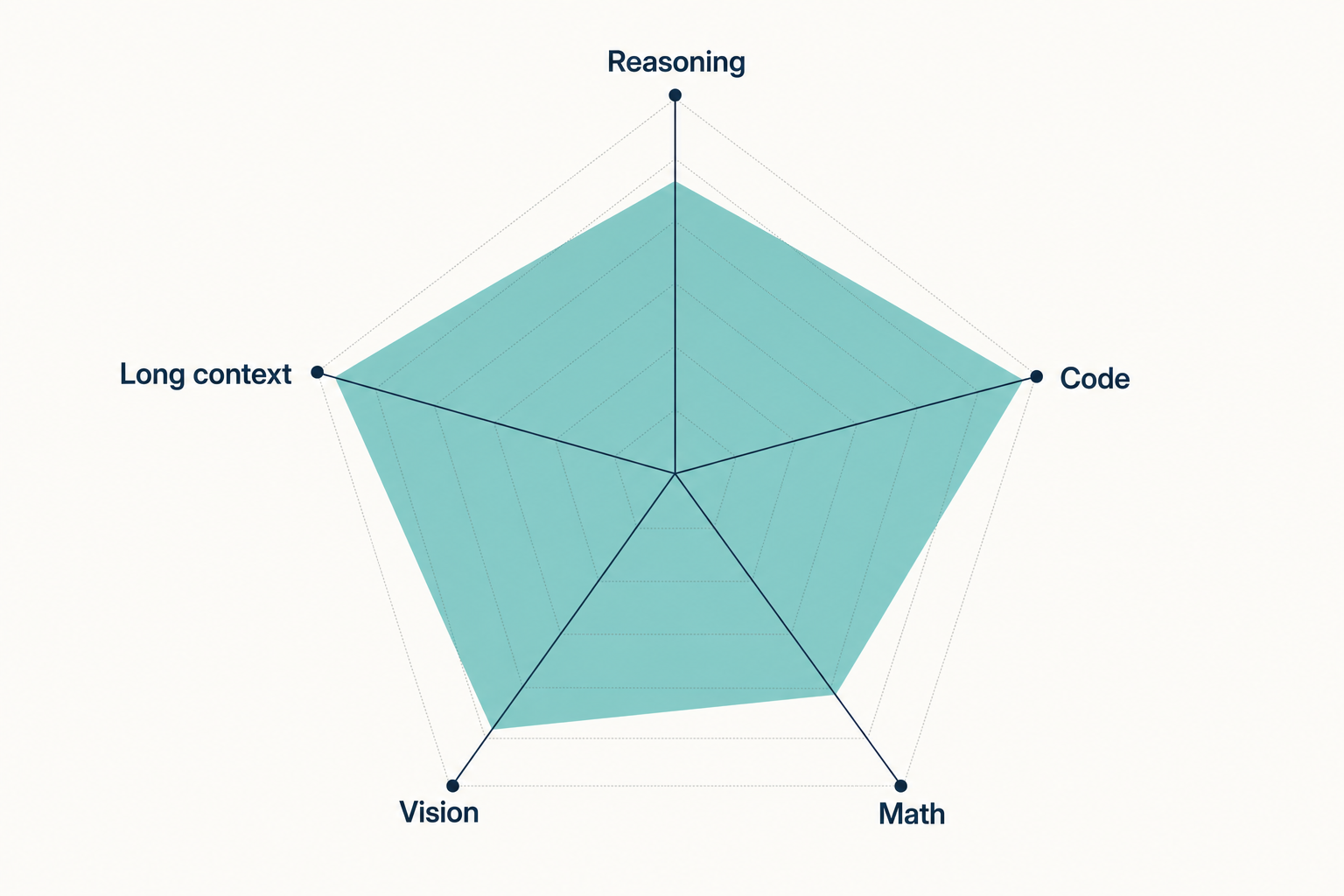

- Long-document reasoning. 1,000,000-token input window with up to 384,000 tokens of output.

- Frontier-tier coding. V4-Pro achieves 80.6% on SWE-bench Verified — within 0.2 points of Claude Opus 4.6.

- Agentic workflows. V4 has special optimizations for mainstream Agent frameworks such as Claude Code, OpenClaw, and CodeBuddy.

- Three reasoning effort levels. Non-thinking, thinking (high), and thinking-max — selected per request, not per model.

- Open weights. Both V4 tiers ship on Hugging Face under MIT, so you can self-host if compliance demands it.

The two-model family, in plain English

Treat V4 as a tier choice, not a model choice. Picking between Pro and Flash is the most consequential decision a new DeepSeek developer makes, because it dictates both cost and ceiling.

V4-Pro: the frontier-tier model

DeepSeek-V4-Pro is the flagship: 1.6 trillion total parameters, 49 billion active per token, pre-trained on 33 trillion tokens. This is the model DeepSeek calls ‘the best open-source model available today.’ Use Pro when you need agentic coding work, hard reasoning over long contexts, or competition-grade math.

V4-Flash: the cost-efficient sibling

DeepSeek-V4-Flash is the efficiency play: 284 billion total parameters, 13 billion active per token, trained on 32 trillion tokens. The 13B active parameter count is in the same ballpark as many mid-range models — but Flash has access to 284B worth of specialized expert knowledge. Flash is what I run for chat, customer-facing assistants, and high-volume retrieval pipelines.

For a deeper dive on each tier, see the DeepSeek V4-Pro and DeepSeek V4-Flash model pages, or the broader DeepSeek V4 overview.

Benchmark capabilities: where V4-Pro lands

I treat self-reported benchmarks as a starting point, not a verdict. Here are DeepSeek’s published numbers from the V4 technical report, cross-checked against third-party reporting from launch day. Frontier numbers shift monthly — verify before you procure.

| Benchmark | DeepSeek V4-Pro (reasoning_effort=max) | Closest closed-source comparator | Source |
|---|---|---|---|
| SWE-bench Verified | 80.6% | Claude Opus 4.6: 80.8% | HF blog, 2026-04-24 |
| Terminal-Bench 2.0 | 67.9% | GPT-5.4-xHigh: 75.1% | HF blog, 2026-04-24 |
| LiveCodeBench | 93.5% | Claude Opus 4.6: 88.8% | buildfastwithai review, 2026-04-24 |
| Codeforces rating | 3,206 | GPT-5.4-xHigh: 3,168 | ofox launch guide, 2026-04-24 |
| BrowseComp | 83.4% | GPT-5.5: 84.4% | VentureBeat, 2026-04-24 |
| GPQA Diamond | 90.1% | Claude Opus 4.7: 94.2% | VentureBeat, 2026-04-24 |
| HMMT 2026 (math) | 95.2% | Claude Opus 4.6: 96.2% | buildfastwithai review, 2026-04-24 |

The honest read: V4-Pro does not appear to dethrone GPT-5.5 or Claude Opus 4.7 on the benchmarks that can be directly compared, but it gets close enough on several of them — especially BrowseComp, Terminal-Bench 2.0 and MCP Atlas — that its much lower API pricing becomes the headline. Where V4-Pro clearly leads is in coding-arena results: Vals AI noted that DeepSeek V4 is “now the #1 open-weight model on our Vibe Code Benchmark, and it’s not close.”

The architecture that makes 1M context affordable

A million-token window is meaningless if every forward pass costs ten times what it did at 128K. The reason V4 ships 1M as the default is an attention rewrite. DeepSeek-V4 designs a hybrid attention mechanism combining Compressed Sparse Attention (CSA) and Heavily Compressed Attention (HCA) to dramatically improve long-context efficiency. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2.

V4-Flash drops further to 10% of FLOPs and 7% of KV cache. That is what makes long agent trajectories — SWE-bench tasks, multi-hour browse sessions, terminal sessions with hundreds of commands — economically viable on an open model. For a refresher on what changed between generations, the DeepSeek V3.2 page covers the previous-generation baseline.

Thinking modes: a parameter, not a model

In V4, you no longer pick between `deepseek-chat` and `deepseek-reasoner`. You pick a tier (`deepseek-v4-pro` or `deepseek-v4-flash`) and set the reasoning effort:

- Non-thinking (default). No step-by-step reasoning; fastest and cheapest.

- Thinking (high). Set `reasoning_effort="high"` and pass `extra_body={"thinking": {"type": "enabled"}}`. The response returns `reasoning_content` alongside the final `content`.

- Thinking-max. Set `reasoning_effort="max"` for the heaviest reasoning budget; for the Think Max reasoning mode, set the context window to at least 384K tokens.

A minimal Python call against V4-Pro in thinking mode, using the OpenAI SDK:

```python
from openai import OpenAI

client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan the migration from V3.2 to V4."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},
)

print(resp.choices[0].message.content)
```

Chat requests go to `POST /chat/completions`, the OpenAI-compatible endpoint at https://api.deepseek.com. Both models support the OpenAI ChatCompletions format and the Anthropic API format, so you can swap the SDK and reuse Anthropic-flavoured client code against the same base URL. For full request/response details, see the DeepSeek API documentation.
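The three reasoning modes described above map onto a small helper that builds the extra keyword arguments for a chat request. This is a sketch under stated assumptions: the helper name `thinking_kwargs` and the labels `"off"`, `"high"` and `"max"` are hypothetical, and sending the `thinking` flag together with `reasoning_effort="max"` is an assumption, since the text only spells that pairing out for the high setting.

```python
from typing import Any, Dict


def thinking_kwargs(mode: str = "off") -> Dict[str, Any]:
    """Return extra keyword arguments for one of the three reasoning modes.

    Hypothetical helper; the mode labels are chosen for this sketch only.
    """
    if mode == "off":
        # Non-thinking is the default, so nothing extra is sent.
        return {}
    if mode in ("high", "max"):
        return {
            "reasoning_effort": mode,
            # Assumption: the thinking flag is also needed for "max".
            "extra_body": {"thinking": {"type": "enabled"}},
        }
    raise ValueError(f"unknown thinking mode: {mode!r}")


print(thinking_kwargs("high"))
```

The result can be splatted into the call, for example `client.chat.completions.create(model="deepseek-v4-pro", messages=messages, **thinking_kwargs("high"))`, which keeps the mode switch in one place instead of scattered across call sites.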

Important: the API is stateless

The web chat at chat.deepseek.com keeps your session history. The API does not. Every POST /chat/completions request must include the entire conversation in the messages array — DeepSeek’s servers do not remember prior turns on your behalf. That is a contrast with the app, and it is the single most common source of confusion for first-time integrators.
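Because the server keeps no history, the client has to carry it. One way to do that, sketched below, is a small wrapper that accumulates turns and hands back the full list for each request. The class name `Conversation` and its methods are hypothetical, and the assistant reply is a hard-coded stand-in rather than real API output.

```python
from typing import Dict, List

Message = Dict[str, str]


class Conversation:
    """Client-side history for a stateless chat API (hypothetical helper)."""

    def __init__(self, system_prompt: str) -> None:
        self.messages: List[Message] = [{"role": "system", "content": system_prompt}]

    def add_user(self, text: str) -> List[Message]:
        """Append a user turn and return the full list to send as `messages`."""
        self.messages.append({"role": "user", "content": text})
        return list(self.messages)

    def add_assistant(self, text: str) -> None:
        """Store the model's reply so the next request includes it."""
        self.messages.append({"role": "assistant", "content": text})


convo = Conversation("You are a concise assistant.")
first_payload = convo.add_user("What is V4-Flash?")
convo.add_assistant("The cost-efficient V4 tier.")  # stand-in for a real reply
second_payload = convo.add_user("And V4-Pro?")
print(len(first_payload), len(second_payload))  # prints "2 4"
```

Note that the second payload is twice the size of the first: every turn is re-sent and re-billed as input, which is why cache-hit pricing matters for long conversations.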

Developer features worth knowing

| Feature | What it does | Notes |
|---|---|---|
| Streaming | SSE chunks via `stream=true` | Reasoning content streams alongside final content in thinking mode |
| Tool calling | OpenAI-format function declarations | Works in both thinking and non-thinking modes |
| JSON mode | `response_format={"type": "json_object"}` | Designed to return valid JSON, not guaranteed; include the word “json” plus a sample schema and set `max_tokens` high |
| Context caching | Cache-hit pricing tier applied automatically | Charges still apply to uncached tokens; see costing below |
| FIM completion (Beta) | Fill-in-the-middle code completion | Non-thinking mode only |
| Chat Prefix Completion (Beta) | Continuation-style prompts | Useful for templated outputs |
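Since JSON mode is designed to return valid JSON but does not guarantee it, it seems prudent to parse replies defensively rather than call `json.loads` directly on the response. A minimal sketch follows; `parse_json_reply` is a hypothetical helper, and what to do on failure (retry, fall back, log) is left to the caller.

```python
import json
from typing import Any, Optional


def parse_json_reply(text: Optional[str]) -> Optional[Any]:
    """Return the parsed JSON value, or None if the reply is empty or invalid."""
    if not text or not text.strip():
        return None  # empty or whitespace-only content
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        return None  # malformed or truncated JSON; caller can retry


print(parse_json_reply('{"tier": "flash"}'))  # {'tier': 'flash'}
print(parse_json_reply('{"tier": "fla'))      # None
```

One limitation of this sketch: a reply that is literally `null` also comes back as `None`, so use a sentinel value if that distinction matters to you.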

Temperature guidance from DeepSeek’s own docs: 0.0 for code and math, 1.0 for data analysis, 1.3 for general chat and translation, 1.5 for creative writing. Default top_p is 1.0.
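That guidance is easy to encode as a lookup so the values live in one place. The sketch below uses hypothetical task labels and a hypothetical `temperature_for` helper; the fallback of 1.0 for unlisted tasks is an assumption of this example, not something the docs are quoted as saying.

```python
from typing import Dict

# Values taken from the guidance quoted above; the keys are labels for this sketch.
TEMPERATURE_BY_TASK: Dict[str, float] = {
    "code": 0.0,
    "math": 0.0,
    "data_analysis": 1.0,
    "general_chat": 1.3,
    "translation": 1.3,
    "creative_writing": 1.5,
}


def temperature_for(task: str, fallback: float = 1.0) -> float:
    """Look up a temperature by task label; the fallback is an assumption."""
    return TEMPERATURE_BY_TASK.get(task, fallback)


print(temperature_for("code"), temperature_for("creative_writing"))  # prints "0.0 1.5"
```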

Pricing and a worked cost example

As of April 2026, DeepSeek’s published rates per 1M tokens are:

| Tier | Input (cache hit) | Input (cache miss) | Output |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

A V4-Flash worked example — 1,000,000 calls per month, with a 2,000-token system prompt cached across calls, a 200-token user message (uncached), and a 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

The same workload on V4-Pro costs about $1,421.00 at list prices, roughly 12× more, because every Pro rate is higher; output alone is $3.48/M list versus Flash’s $0.28/M. During the 75% promo through 2026-05-31 (output at $0.87/M), the same workload comes to $355.25, roughly 3× the Flash bill. Default to Flash unless your workload demonstrably needs the Pro lift on agentic coding or hard reasoning. Off-peak discounts are not active; DeepSeek discontinued them on 2025-09-05 and did not reintroduce them with V4. Build a more detailed model with the DeepSeek pricing calculator or read the dedicated DeepSeek API pricing breakdown.
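The arithmetic in the worked example can be reproduced with a few lines of Python, which makes it easy to plug in your own token counts. The rates are copied from the pricing table (Pro at list price); the `RATES` layout and the `monthly_cost` function are hypothetical names for this sketch.

```python
from typing import Dict

# USD per 1M tokens, copied from the pricing table (Pro at list price).
RATES: Dict[str, Dict[str, float]] = {
    "deepseek-v4-flash": {"hit": 0.0028, "miss": 0.14, "out": 0.28},
    "deepseek-v4-pro": {"hit": 0.0145, "miss": 1.74, "out": 3.48},
}


def monthly_cost(model: str, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Monthly USD cost given the number of calls and per-call token counts."""
    r = RATES[model]
    # Cost of one call, still scaled by 1M because rates are per 1M tokens.
    per_call = cached_in * r["hit"] + uncached_in * r["miss"] + out * r["out"]
    return round(per_call * calls / 1_000_000, 2)


flash = monthly_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
pro = monthly_cost("deepseek-v4-pro", 1_000_000, 2_000, 200, 300)
print(flash, pro, round(pro / flash, 1))
```

With the inputs from the example this gives $117.60 for Flash and $1,421.00 for Pro. Swapping in the promo rates only requires editing the `deepseek-v4-pro` entry.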

Legacy IDs and the migration window

If you have older integrations using `deepseek-chat` or `deepseek-reasoner`, they still work for now. Both IDs currently route to `deepseek-v4-flash` (non-thinking and thinking, respectively) and will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migration is a one-line change to your `model=` parameter; the `base_url` stays the same. I’d schedule the swap in May rather than June, because the Pro tier is worth testing before the deadline pressure sets in.
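If many call sites still hard-code the legacy IDs, a thin shim can rewrite request parameters in one place while you migrate. The sketch below mirrors the routing described above; `LEGACY_MODELS` and `migrate_params` are hypothetical names, and mapping the reasoner's thinking mode to `reasoning_effort="high"` is an assumption.

```python
import copy
from typing import Any, Dict

# Routing as described above: both legacy IDs map to V4-Flash.
LEGACY_MODELS: Dict[str, Dict[str, Any]] = {
    "deepseek-chat": {"model": "deepseek-v4-flash"},
    "deepseek-reasoner": {
        "model": "deepseek-v4-flash",
        "reasoning_effort": "high",  # assumption: "thinking" means the high setting
        "extra_body": {"thinking": {"type": "enabled"}},
    },
}


def migrate_params(params: Dict[str, Any]) -> Dict[str, Any]:
    """Return a copy of request params with any legacy model ID replaced."""
    updated = dict(params)
    replacement = LEGACY_MODELS.get(updated.get("model", ""))
    if replacement:
        # Deep copy so callers cannot mutate the shared mapping.
        updated.update(copy.deepcopy(replacement))
    return updated


print(migrate_params({"model": "deepseek-reasoner", "messages": []}))
```

Parameters that already name a V4 model pass through unchanged, so the shim is safe to leave in place until the last legacy call site is gone.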

What DeepSeek still cannot do well

Open-weight does not mean omnipotent. Honest limitations as of April 2026:

- Multimodal input. DeepSeek said the current version focuses on text, but it is preparing a multimodal AI roadmap. If you need vision, look at the older DeepSeek VL2 family or a different provider.

- Closed-model parity on the hardest reasoning. On Humanity’s Last Exam without tools, DeepSeek-V4-Pro at maximum reasoning effort scores 37.7% versus 46.9% for Claude Opus 4.7 (VentureBeat, April 2026). It is competitive, not dominant.

- Self-hosting cost. Pro is 865GB on Hugging Face, Flash is 160GB. Pro needs tens of GPUs for any reasonable throughput; Flash is more realistic on a well-equipped single server.

- Privacy posture. Hosted requests are processed on servers subject to Chinese law. If that matters for your data, self-host the weights or pick another provider. Read the DeepSeek privacy guide before committing.

- Regional availability. Italy’s Garante restricted the consumer app in January 2025; some US states limit DeepSeek on government devices. The availability-by-country guide tracks the current status.

For a fuller list, the DeepSeek limitations page is the deeper companion to this section.

Where DeepSeek capabilities fit best

After three weeks of running V4 in production alongside Claude and GPT-5, my heuristics:

- High-volume chat or RAG? V4-Flash. The output rate is the cheapest of any frontier-adjacent API I’ve tested in April 2026 — pair it with the DeepSeek context caching guide to push effective costs lower.

- Agentic coding loops? V4-Pro with thinking mode enabled. The Codeforces and LiveCodeBench numbers translate well to real PR-fixing work.

- Long-document analysis? Either tier with the 1M context. Flash is fine for summarisation; Pro earns its premium when you need cross-document reasoning.

- Anything requiring vision or audio? Use a different model.
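These heuristics can be written down as a small routing function, which is handy if one service fronts several workloads. This is a sketch of my rules of thumb, not an official recommendation: the workload labels and `pick_tier` are hypothetical, and leaving thinking mode off for everything except agentic coding is an assumption.

```python
from typing import Optional, Tuple


def pick_tier(workload: str, cross_document: bool = False) -> Optional[Tuple[str, bool]]:
    """Return (model_id, thinking_enabled), or None when V4 is a poor fit."""
    if workload in ("chat", "rag"):
        return ("deepseek-v4-flash", False)
    if workload == "agentic_coding":
        return ("deepseek-v4-pro", True)
    if workload == "long_document":
        # Flash for summarisation; Pro when reasoning across documents.
        model = "deepseek-v4-pro" if cross_document else "deepseek-v4-flash"
        return (model, False)
    if workload in ("vision", "audio"):
        return None  # text-only models; use a different provider
    raise ValueError(f"unknown workload: {workload!r}")


print(pick_tier("rag"))
print(pick_tier("long_document", cross_document=True))
```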

For role-specific recipes, see DeepSeek for coding and DeepSeek for developers, or browse the wider DeepSeek beginner guides hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

What can DeepSeek V4 actually do that V3.2 could not?

V4’s core capability gain is making 1M-token context economical. In the 1M-token context setting, DeepSeek-V4-Pro requires only 27% of single-token inference FLOPs and 10% of KV cache compared with DeepSeek-V3.2. That changes which agentic and long-document workloads are viable on an open model. V4 also folds thinking mode into a request parameter rather than a separate model ID. See the DeepSeek V4 overview for the full feature delta.

How does DeepSeek V4-Pro compare to GPT-5 and Claude Opus on coding?

It’s competitive but not dominant on the hardest agent benchmarks, and ahead on several coding-specific ones. On coding benchmarks, DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%), LiveCodeBench (93.5% vs 88.8%), and Codeforces rating (3206 vs no reported score). SWE-Bench Pro and HLE still favour the closed models. The DeepSeek vs Claude comparison breaks the gaps down task by task.

Is DeepSeek’s million-token context real, or marketing?

It’s real and economically usable, which is the harder claim. The hybrid CSA + HCA attention is what brings the inference cost down at long depth, and DeepSeek’s published retrieval numbers hold up reasonably well at depth — MRCR 8-needle accuracy stays above 0.82 through 256K tokens and around 0.59 at 1M. Verify against your own corpus before you commit. The DeepSeek context length checker helps with prompt sizing.

Does DeepSeek remember my conversation between API calls?

No — the API is stateless. The chat app at chat.deepseek.com keeps session history for you, but the developer surface does not. Every POST /chat/completions call must include the full messages array (system, user, assistant turns) for DeepSeek to see the history. The DeepSeek API getting started tutorial walks through the multi-turn pattern with concrete code.

Can I run DeepSeek V4 on my own hardware?

Flash is realistic; Pro is hard. Pro is 865GB on Hugging Face, Flash is 160GB. A lightly quantised Flash can run on a high-end workstation; Pro needs tens of GPUs for sensible throughput. Both ship under MIT, so commercial self-hosting is allowed. The install DeepSeek locally tutorial covers quantisation choices and GPU sizing.
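A quick back-of-the-envelope check shows why. The sketch below computes the minimum number of GPUs needed just to hold the weights, assuming 80 GB of memory per GPU (an assumption of this example) and ignoring KV cache, activations and any quantisation. Real deployments need headroom beyond this floor.

```python
import math


def min_gpus_for_weights(weights_gb: float, gpu_memory_gb: float = 80.0) -> int:
    """Lower bound on GPU count: weights only, no KV cache or activations."""
    return math.ceil(weights_gb / gpu_memory_gb)


print(min_gpus_for_weights(865))  # Pro: 11
print(min_gpus_for_weights(160))  # Flash: 2
```

Eleven GPUs is the floor for Pro before serving a single token, which is consistent with needing tens of GPUs for sensible throughput, while Flash fits within a single multi-GPU server.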

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Official: DeepSeek news, V4 Preview release notes. V4 launch-day announcement referenced for benchmark numbers. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.