DeepSeek Beginners Guide: V4, Chat, and Your First API Call

You opened DeepSeek, saw a chat box that looks like every other AI assistant, and then noticed model names like `deepseek-v4-pro`, a “DeepThink” toggle, and an API page full of token-cost tables. Where do you actually start? This DeepSeek beginners guide cuts through the menus. I run V4-Pro and V4-Flash in production, used V3.2 and R1 before that, and I will show you what each surface (web chat, mobile app, API) actually does, what it costs, and which model to pick for your first task. By the end you will have sent a real message in the chat, sized up the pricing, and — if you want — made your first API call in Python. One coffee. Let’s go!

What DeepSeek is, in one paragraph

DeepSeek is a Chinese AI lab (founded 2023, based in Hangzhou, linked to the hedge fund High-Flyer) that builds large language models and ships them as open weights on Hugging Face plus a hosted chat and API. The current generation is DeepSeek V4, released on April 24, 2026, available as two open-weight Mixture-of-Experts models: deepseek-v4-pro (1.6T total / 49B active parameters, frontier tier) and deepseek-v4-flash (284B / 13B active, cost-efficient tier). Both ship under the MIT license and share a 1,000,000-token context window with output up to 384,000 tokens. If you want a longer primer on the company itself, see what is DeepSeek.

The three ways you’ll actually use DeepSeek

Most beginners conflate “DeepSeek” with the chat website. There are really three distinct surfaces, and they behave differently:

| Surface | Who it’s for | State | Cost |
|---|---|---|---|
| Web chat (chat.deepseek.com) | Anyone with a browser | Keeps history per conversation | Free at time of writing |
| Mobile app (iOS, Android) | Phone users | Keeps history per conversation | Free at time of writing |
| API (api.deepseek.com) | Developers | Stateless: you resend history every call | Pay per token |

Important detail beginners miss: the API is stateless. The web chat and app remember what you said earlier in the conversation; the API does not. If you build something on the API, your code must resend the full message history with every request. More on that below.
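A minimal sketch of what "resend the full history" means in practice. The helper names `with_user_turn` and `record_reply` are hypothetical, and the assistant text is a placeholder rather than real model output; the point is only that the payload grows with every turn.

```python
from typing import Dict, List

Message = Dict[str, str]


def with_user_turn(history: List[Message], user_text: str) -> List[Message]:
    """Return the full payload to send: all prior turns plus the new user message."""
    return history + [{"role": "user", "content": user_text}]


def record_reply(sent: List[Message], assistant_text: str) -> List[Message]:
    """Return the history to keep for the next call, including the model's answer."""
    return sent + [{"role": "assistant", "content": assistant_text}]


history: List[Message] = [{"role": "system", "content": "You are a concise assistant."}]

# Turn 1: the payload carries the system prompt and the first question.
payload_1 = with_user_turn(history, "What is a Mixture-of-Experts model?")
history = record_reply(payload_1, "(assistant answer would go here)")

# Turn 2: the payload must carry everything so far, or the model has no context.
payload_2 = with_user_turn(history, "Give me one downside of it.")

print(len(payload_1), len(payload_2))
```

Each payload is what you would pass as `messages` on that call. Because the list keeps growing, so does the input-token count you are billed for.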

Step 1: Try the chat (5 minutes, free)

The simplest way to feel out V4 is the hosted chat. Go to chat.deepseek.com, sign up with an email or Google account, and you land on a chat page that defaults to DeepSeek V4. There is one toggle worth knowing about: DeepThink. Switching it on tells V4 to reason step-by-step before answering — slower, but better on maths, code, and multi-step problems. Off by default. Walk-through and screenshots: how to use DeepSeek.

A first prompt I recommend, because it stresses both modes:

“I have a CSV with 12 months of revenue and cost data per region. Suggest three exploratory analyses and the Python (pandas) code for each. Then list two pitfalls in interpreting the results.”

Run it once with DeepThink off, then again with it on. You’ll see the on-mode produce a longer, more structured plan with caveats. That is the same trade-off you’ll make in the API later via the reasoning_effort parameter.

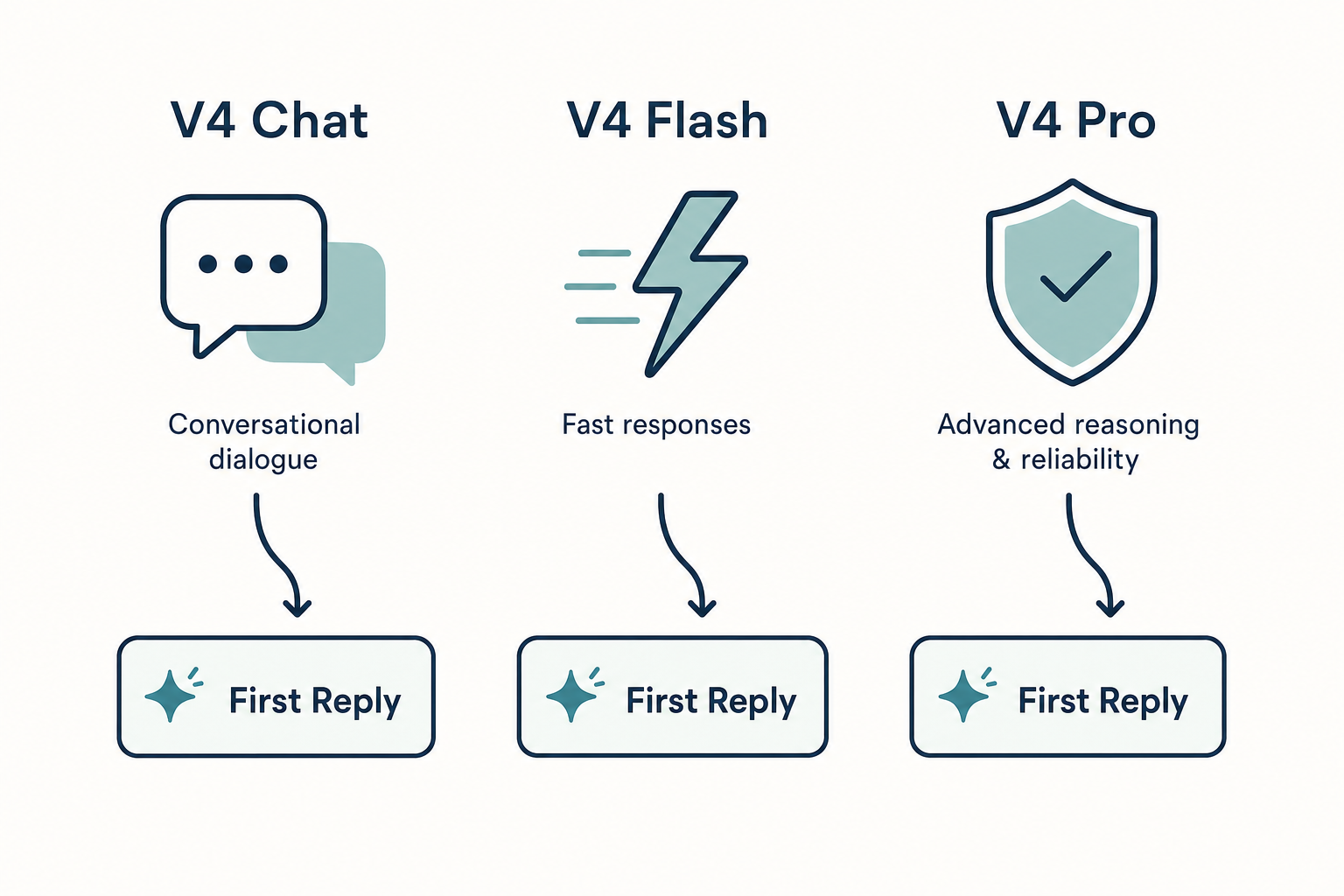

Step 2: Pick a model (V4-Pro vs V4-Flash)

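For chat users this happens automatically. For API users it’s the most important decision you’ll make, because output costs differ by roughly 12× at list prices ($3.48 vs $0.28 per 1M tokens) and about 3× at the V4-Pro promo price ($0.87).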

| Model | Total / active params | Best for | Output $/1M tokens |
|---|---|---|---|
| deepseek-v4-flash | 284B / 13B | Default chat, summarisation, classification, RAG | $0.28 |
| deepseek-v4-pro | 1.6T / 49B | Frontier coding, complex agents, hardest reasoning tasks | $0.87 promo / $3.48 list |

Start with V4-Flash. Move to V4-Pro only if a specific task fails on Flash and the benchmark lift justifies the spend. For a deeper pros/cons breakdown, see the dedicated pages for DeepSeek V4-Flash and DeepSeek V4-Pro, or browse the DeepSeek models hub for the full lineup.
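One way to apply the "Flash first, Pro only on failure" rule in code is a small escalation wrapper. This is a sketch under assumptions: `answer_with_escalation`, `ask`, and `is_good_enough` are hypothetical names, and `fake_ask` stands in for a real API call so the example runs offline. What counts as "good enough" is up to your task.

```python
from typing import Callable, Tuple

FLASH = "deepseek-v4-flash"
PRO = "deepseek-v4-pro"


def answer_with_escalation(
    ask: Callable[[str, str], str],
    is_good_enough: Callable[[str], bool],
    prompt: str,
) -> Tuple[str, str]:
    """Try Flash first; retry on Pro only if the Flash answer fails the check.

    Returns (model_used, answer).
    """
    answer = ask(FLASH, prompt)
    if is_good_enough(answer):
        return FLASH, answer
    return PRO, ask(PRO, prompt)


# Hypothetical stand-in for a real API call: Flash "fails" with an empty reply.
def fake_ask(model: str, prompt: str) -> str:
    return "" if model == FLASH else "a non-empty answer"


model_used, answer = answer_with_escalation(
    fake_ask, lambda text: bool(text.strip()), "Summarise this ticket."
)
print(model_used)
```

In real use, `ask` would wrap `client.chat.completions.create`, and the check might be a unit test, a schema validation, or a length threshold.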

What about the old deepseek-chat and deepseek-reasoner IDs?

If you’ve seen tutorials referencing deepseek-chat or deepseek-reasoner, those are legacy V3-era model IDs. They still work — both currently route to deepseek-v4-flash (non-thinking and thinking respectively) — but they will be retired on 2026-07-24 at 15:59 UTC. Migration is a one-line change: keep base_url, just update model. Do this now if you maintain anything in production.
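If you have many call sites, the migration can be sketched as a lookup that follows the routing described above. The names `LEGACY_IDS` and `migrate_model` are hypothetical, and returning `False` for thinking on non-legacy IDs is an assumption of this sketch, not documented behaviour.

```python
from typing import Dict, Tuple

# Routing described above: both legacy IDs map to deepseek-v4-flash,
# with deepseek-reasoner corresponding to thinking mode.
LEGACY_IDS: Dict[str, Tuple[str, bool]] = {
    "deepseek-chat": ("deepseek-v4-flash", False),
    "deepseek-reasoner": ("deepseek-v4-flash", True),
}


def migrate_model(model_id: str) -> Tuple[str, bool]:
    """Return (current_model_id, thinking_enabled) for a possibly legacy ID.

    IDs that are not in the legacy table pass through unchanged, with
    thinking left off (an assumption; the caller can still opt in).
    """
    return LEGACY_IDS.get(model_id, (model_id, False))


print(migrate_model("deepseek-reasoner"))
```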

Step 3: Understand the pricing before you spend a dollar

DeepSeek bills three buckets per request. Forgetting any one of them is the most common cost-estimate mistake I see beginners make.

| Bucket | V4-Flash $/1M | V4-Pro $/1M |
|---|---|---|
| Input, cache hit | $0.0028 | $0.0145 |
| Input, cache miss | $0.14 | $0.435 promo / $1.74 list |
| Output | $0.28 | $0.87 promo / $3.48 list |

Rates as of April 2026; verify on the live DeepSeek API pricing page before committing. Note that the night-time off-peak discount that some older blog posts reference was discontinued on September 5, 2025 and has not returned.

Worked example — V4-Flash, 1 million calls

2,000-token system prompt (cached across calls), 200-token user message (uncached on each call), 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

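The same workload on V4-Pro comes to $1,421.00 at list rates ($29.00 cached input + $348.00 uncached input + $1,044.00 output), about 12× more. At the promo rates it comes to $377.00, about 3× more. That’s the trade you’re making when you pick the frontier tier. For interactive what-if estimates, the DeepSeek pricing calculator handles the three buckets for you.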
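The three-bucket arithmetic can be sketched as a small estimator. `Rates` and `estimate_cost` are hypothetical names, and the rates are copied from the table in this section, so re-check them against the live pricing page. With the worked-example inputs it reproduces the $117.60 and $1,421.00 totals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens for the three billing buckets."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates copied from the pricing table in this section (April 2026).
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)
PRO_PROMO = Rates(cache_hit=0.0145, cache_miss=0.435, output=0.87)


def estimate_cost(rates: Rates, calls: int, cached_in: int, uncached_in: int, out: int) -> float:
    """Estimate total USD for `calls` requests with the given per-call token counts."""
    per_call_micro_usd = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out * rates.output
    )
    # Rates are per 1M tokens, so divide by 1,000,000 after scaling by call count.
    return round(per_call_micro_usd * calls / 1_000_000, 2)


for name, rates in [("flash", FLASH), ("pro list", PRO_LIST), ("pro promo", PRO_PROMO)]:
    total = estimate_cost(rates, calls=1_000_000, cached_in=2_000, uncached_in=200, out=300)
    print(f"{name}: ${total:,.2f}")
```

The estimator assumes every call gets a cache hit on the full system prompt, which is the best case; real hit rates are usually lower.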

Step 4: Your first API call (Python)

The DeepSeek API is OpenAI-compatible. That means the official OpenAI Python SDK works against DeepSeek by changing only base_url and api_key. Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. (DeepSeek also exposes an Anthropic-compatible surface against the same base URL if you prefer that SDK.)

First, get a key — see get a DeepSeek API key for the click-by-click. Then install the SDK:

    pip install openai

The minimal Python script:

    from openai import OpenAI

    # The OpenAI SDK talks to DeepSeek once base_url and api_key are swapped.
    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key="YOUR_DEEPSEEK_API_KEY",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-flash",
        messages=[
            {"role": "system", "content": "You are a concise assistant."},
            {"role": "user", "content": "Explain MoE in two sentences."},
        ],
        temperature=1.3,  # general-conversation setting
        max_tokens=256,   # cap on response length
    )

    # Print the assistant's reply text.
    print(resp.choices[0].message.content)

That’s it. To turn on thinking mode (V4 returns reasoning_content alongside the final content), add two arguments:

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[{"role": "user", "content": "Plan a 3-step database migration."}],
        reasoning_effort="high",
        extra_body={"thinking": {"type": "enabled"}},  # extra fields sent in the request body
    )

    print(resp.choices[0].message.reasoning_content)  # the reasoning trace
    print(resp.choices[0].message.content)            # the final answer

Use reasoning_effort="max" for the hardest problems; you’ll need max_tokens headroom (up to 384K) for long traces. Full parameter reference and more samples in the DeepSeek API documentation.
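To avoid repeating the two thinking-mode arguments at every call site, you could build the keyword arguments in one place. This is a sketch: `build_request` is a hypothetical helper, and it only uses the parameter names shown in this section (`reasoning_effort`, and `thinking` via `extra_body`).

```python
from typing import Any, Dict, List


def build_request(
    model: str,
    messages: List[Dict[str, str]],
    thinking: bool = False,
    effort: str = "high",
) -> Dict[str, Any]:
    """Build keyword arguments for client.chat.completions.create()."""
    kwargs: Dict[str, Any] = {"model": model, "messages": messages}
    if thinking:
        # Both arguments are added together, as in the thinking-mode call above.
        kwargs["reasoning_effort"] = effort
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


question = [{"role": "user", "content": "Plan a 3-step database migration."}]
plain = build_request("deepseek-v4-flash", question)
deep = build_request("deepseek-v4-pro", question, thinking=True, effort="max")
print(sorted(deep))
```

You would then call `client.chat.completions.create(**deep)`. The helper does not validate `effort`; this section only names `"high"` and `"max"`.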

Temperature defaults worth memorising

- 0.0 — code generation, mathematics

- 1.0 — data analysis, data cleaning

- 1.3 — general conversation, translation

- 1.5 — creative writing, poetry
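If you would rather not memorise them, the list above fits in a small lookup table. The task labels and the `temperature_for` helper are hypothetical, and the fallback of 1.0 for unknown tasks is an assumption of this sketch.

```python
from typing import Dict

# Values taken from the list above; the task labels are made up for this sketch.
TEMPERATURE_BY_TASK: Dict[str, float] = {
    "code": 0.0,
    "math": 0.0,
    "data_analysis": 1.0,
    "data_cleaning": 1.0,
    "conversation": 1.3,
    "translation": 1.3,
    "creative_writing": 1.5,
    "poetry": 1.5,
}


def temperature_for(task: str, default: float = 1.0) -> float:
    """Look up a temperature by task label, falling back to `default` (assumed 1.0)."""
    return TEMPERATURE_BY_TASK.get(task, default)


print(temperature_for("code"), temperature_for("translation"))
```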

Step 5: Know what V4 can and can’t do

V4 is strong at coding (DeepSeek reported 80.6% on SWE-Bench Verified for V4-Pro at launch — see the official DeepSeek API docs for the current technical report link), multilingual reasoning, long-context analysis, and tool calling. It is weaker on real-time information (no built-in web browsing in the API), and image generation is not part of the V4 chat model — that lives in separate releases like DeepSeek Janus. Honest list of rough edges: DeepSeek limitations.

Beginner pitfalls — and the fixes

| Pitfall | Fix |
|---|---|
| “My API costs are 5× what I expected” | You forgot the uncached-input bucket. Each new user message is billed at the cache-miss rate, even when the prefix before it is cached. |
| “My multi-turn chatbot forgot the last message” | The API is stateless. Resend the full messages array every call. |
| “JSON mode returned empty content” | Include the word “json” plus a small example schema in your prompt and raise max_tokens. JSON mode is designed to return valid JSON, but it does not guarantee it. |
| “Tutorials use deepseek-chat. Should I?” | Use deepseek-v4-flash for new code. Legacy IDs retire 2026-07-24 15:59 UTC. |
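Because JSON mode can still return empty or invalid content, it helps to parse defensively. A minimal sketch, where `parse_json_reply` is a hypothetical helper that takes the reply text from `resp.choices[0].message.content`:

```python
import json
from typing import Any, Optional


def parse_json_reply(content: Optional[str]) -> Optional[Any]:
    """Return the parsed JSON value, or None if the reply is empty or invalid.

    Note: a reply of literal `null` also comes back as None.
    """
    if content is None or not content.strip():
        return None
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return None


print(parse_json_reply('{"region": "EMEA", "revenue": 1200}'))
print(parse_json_reply(""))
print(parse_json_reply("{truncated"))
```

A `None` result is the signal to retry, typically with a larger max_tokens or a clearer example schema in the prompt.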

Where to go next

You now know the surfaces, the two V4 tiers, the three pricing buckets, and how to send a first request. Pick the path that matches what you’re building:

- Building a chatbot or content tool? → DeepSeek prompt engineering

- Comparing against tools you already use? → DeepSeek vs ChatGPT

- Want to run weights on your own GPU? → install DeepSeek locally

- Ready for the deep end? → DeepSeek advanced guide or browse all DeepSeek beginner guides

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Is DeepSeek free for beginners?

The DeepSeek web chat at chat.deepseek.com and the official mobile apps are free to use at the time of writing, with sign-up via email or Google account. The API is paid, billed per token, with the cheapest tier (V4-Flash) starting at $0.14 per million input tokens (cache miss) and $0.28 per million output tokens. DeepSeek may offer a granted balance — a small promotional credit that can expire — so check the billing console for current offers. More detail: is DeepSeek free.

What’s the difference between DeepSeek V4-Pro and V4-Flash?

V4-Pro is the frontier tier (1.6T total / 49B active parameters), aimed at the hardest coding, agentic, and reasoning tasks. V4-Flash is the cost-efficient tier (284B / 13B active) and is the right default for chat, summarisation, classification, and RAG. Both share the same 1M-token context window and feature set; the gap is roughly 12× on output cost at list prices, or about 3× at the V4-Pro promo price. Side-by-side: DeepSeek V4.

How do I make my first DeepSeek API call?

Install the OpenAI Python SDK with pip install openai, then point it at base_url="https://api.deepseek.com" with your DeepSeek API key. Call client.chat.completions.create(model="deepseek-v4-flash", messages=[...]) against POST /chat/completions. That is the entire integration; OpenAI SDK code paths work unchanged. Step-by-step: DeepSeek API getting started.

Does DeepSeek remember my conversation history?

The web chat and mobile app keep conversation history per session — you can scroll back through past chats. The API does not. The API is stateless, which means your client code must resend the full messages array (system, user, assistant turns) on every request to maintain context. This is the single biggest difference between the chat surface and the developer surface. Pricing implications: DeepSeek context caching.

Can I run DeepSeek offline on my own machine?

Yes. DeepSeek publishes weights for V4-Pro, V4-Flash, V3.2, V3.1 and R1 under the MIT license on Hugging Face, so you can self-host. Practically, V4-Pro and V4-Flash are large MoE models that require serious GPU memory; most beginners start with smaller distilled options like DeepSeek R1 Distill or run inference via Ollama. Hardware sizing and a walkthrough: running DeepSeek on Ollama.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Official: DeepSeek official API documentation. Canonical reference for endpoints, model IDs and behaviour. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.