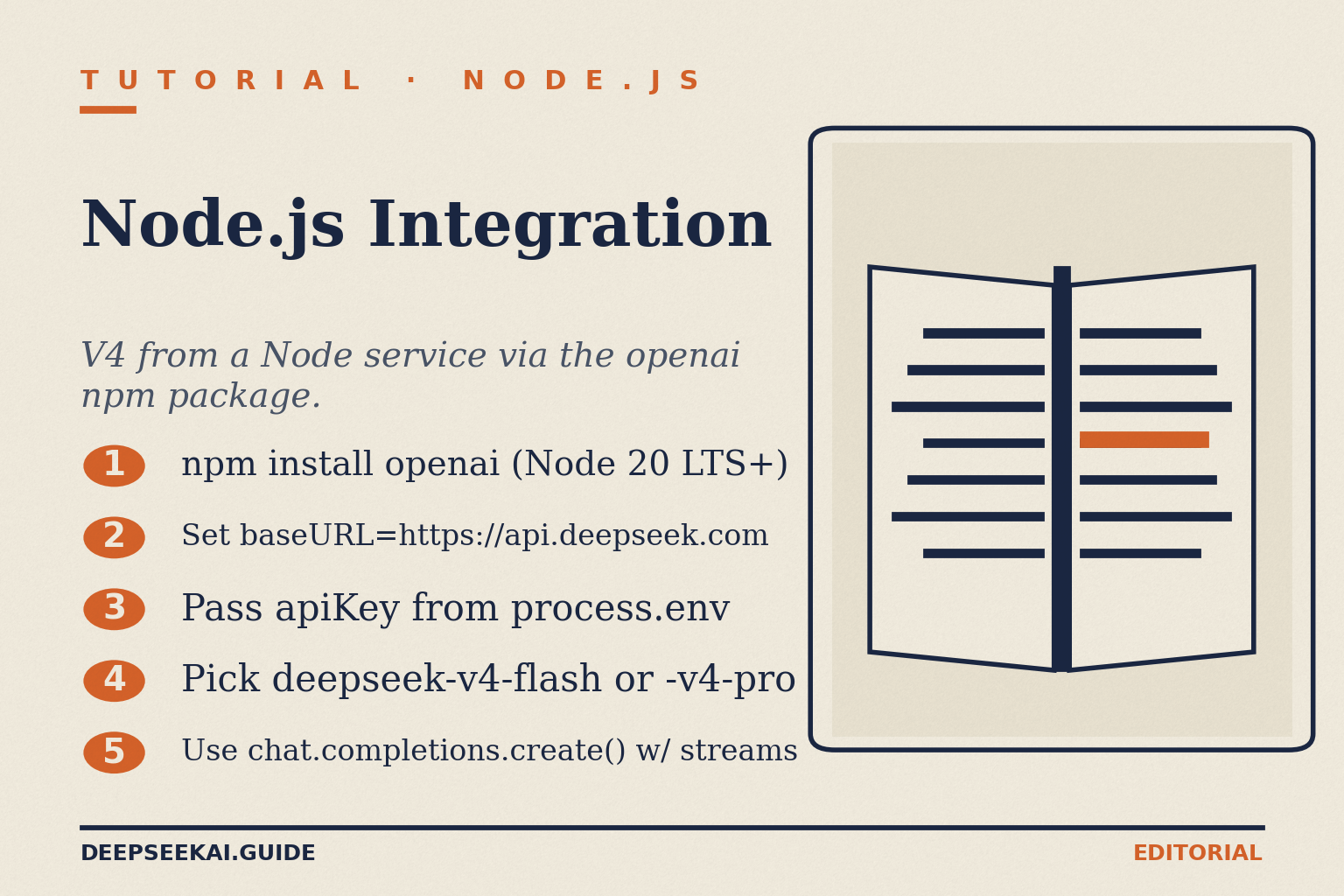

DeepSeek Node.js Integration: A Production Quickstart for V4

You want to call DeepSeek from a Node.js service today, and you don’t want to babysit a custom HTTP client to do it. The good news: a working DeepSeek Node.js integration is roughly fifteen lines of code, because the API speaks the same wire format as OpenAI’s Chat Completions. Drop in the official `openai` package, swap the `baseURL`, change the `model`, and the rest of your code keeps working. The friction is in the details — the new V4 model IDs, thinking mode as a parameter, JSON mode caveats, retry logic, and getting your cost math right. This guide walks through all of that with code you can paste into a fresh project, then hardens it for production. By the end you’ll have a typed client, streaming, tool calls and a cost-aware logger.

What you’ll build

You’ll set up a Node.js 20+ project, install the OpenAI SDK, configure it for DeepSeek’s POST /chat/completions endpoint, and progressively add streaming, JSON mode, tool calling and a cost logger. The final client targets DeepSeek V4, the current generation, which DeepSeek released and open-sourced on April 24, 2026. V4 ships as two open-weight MoE tiers — DeepSeek-V4-Pro at 1.6T total / 49B active parameters, and DeepSeek-V4-Flash at 284B total / 13B active parameters. Both are MIT-licensed and share a 1,000,000-token context with up to 384,000 tokens of output.

If you’d rather work in Python first, the patterns map one-to-one — see our DeepSeek Python integration guide. The wire format is identical.

Prerequisites

- Node.js 20 LTS or newer. The OpenAI SDK uses native `fetch` and ES modules.
- A DeepSeek API key. If you don’t have one, follow our walkthrough to get a DeepSeek API key.
- The official OpenAI Node SDK (`openai` 4.x or later). No DeepSeek-specific package is needed.
- A funded balance. DeepSeek may show a granted promotional balance in the billing console, but plan for paid usage from day one.
- Comfort with async/await and environment variables.

Step 1: Scaffold the project

Create a new directory and initialise it as an ES module project so you can use import syntax cleanly.

```bash
mkdir deepseek-node && cd deepseek-node
npm init -y
npm pkg set type="module"
npm install openai dotenv
echo "DEEPSEEK_API_KEY=sk-..." > .env
echo ".env" >> .gitignore
```

Confirm the SDK is pinned to a recent version (`npm ls openai` prints what was installed). The 4.x line is what `npm install openai` resolves to today, and it works against DeepSeek without modification.

Step 2: Configure the client

The whole integration hinges on two values: the base URL and the model ID. Create client.js:

```js
// client.js
import "dotenv/config"; // load .env before the key is read
import OpenAI from "openai";

export const deepseek = new OpenAI({
  apiKey: process.env.DEEPSEEK_API_KEY,
  baseURL: "https://api.deepseek.com",
  timeout: 60_000, // milliseconds
  maxRetries: 2,
});
```

That’s the entire configuration. The OpenAI-format base URL is `https://api.deepseek.com`; the Anthropic-format base URL is `https://api.deepseek.com/anthropic`, so if your codebase already uses the Anthropic SDK you can point that at DeepSeek instead. We’re sticking with the OpenAI shape for this guide because it has the broader ecosystem.

Choose the right model ID

V4 introduces two explicit IDs. Use them in code, not the legacy aliases.

| Model ID | Tier | Best for | Context / output |
|---|---|---|---|
| `deepseek-v4-flash` | Cost-efficient | Chat, classification, RAG, high-volume backends | 1M / 384K |
| `deepseek-v4-pro` | Frontier | Agentic coding, hard reasoning, long-context analysis | 1M / 384K |
| `deepseek-chat` (legacy) | Alias | Migration only; routes to V4-Flash non-thinking | 1M / 384K |
| `deepseek-reasoner` (legacy) | Alias | Migration only; routes to V4-Flash thinking | 1M / 384K |

The legacy aliases still work, but plan around the deadline. `deepseek-chat` and `deepseek-reasoner` currently route to `deepseek-v4-flash` in non-thinking and thinking mode respectively, and both will be fully retired and inaccessible after July 24, 2026, 15:59 UTC. Migrating is a one-line change to the `model` field; the `baseURL` does not change. If you are moving off `deepseek-reasoner`, also enable thinking as a request parameter (Step 6) to keep the same behaviour.
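One way to make the alias migration explicit is a small translation helper at the edge of your service. This is a minimal sketch, not an official mapping: `resolveModel` and `LEGACY_ALIASES` are hypothetical names, the routing mirrors the table above, and the `thinking` shape is the one used in Step 6.

```javascript
// Hypothetical helper: translate legacy aliases into explicit V4 request fields.
// The routing below mirrors the model table; re-check it against the official docs.
const LEGACY_ALIASES = {
  "deepseek-chat": { model: "deepseek-v4-flash" },
  "deepseek-reasoner": {
    model: "deepseek-v4-flash",
    thinking: { type: "enabled" },
  },
};

function resolveModel(requested) {
  // Object.hasOwn avoids matching inherited keys such as "constructor".
  if (Object.hasOwn(LEGACY_ALIASES, requested)) {
    return { ...LEGACY_ALIASES[requested] };
  }
  // Explicit V4 IDs (and anything unknown) pass through unchanged.
  return { model: requested };
}

console.log(resolveModel("deepseek-reasoner"));
console.log(resolveModel("deepseek-v4-pro"));
```

Spreading the result into the request body (`{ ...resolveModel(name), messages }`) keeps old configuration values working while the code itself only ever sends explicit V4 IDs.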

Step 3: Make your first call

Create hello.js:

```js
// hello.js
import { deepseek } from "./client.js";

const resp = await deepseek.chat.completions.create({
  model: "deepseek-v4-flash",
  messages: [
    { role: "system", content: "You are a precise assistant." },
    { role: "user", content: "Summarise REST in one sentence." },
  ],
  temperature: 1.3,
  max_tokens: 200,
});

console.log(resp.choices[0].message.content);
console.log("Tokens:", resp.usage);
```

Run it: `node hello.js`. You should see a one-sentence summary plus a usage block. Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint at the configured base URL.

Picking temperature

DeepSeek publishes opinionated defaults. Match them unless you have a reason not to:

- 0.0 — code generation, mathematics, deterministic extraction

- 1.0 — data analysis and data cleaning

- 1.3 — general conversation and translation

- 1.5 — creative writing and poetry
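The defaults above are easy to encode once so call sites name a task instead of a magic number. A minimal sketch: the task keys and the `temperatureFor` helper are hypothetical names chosen for illustration, and the values are copied from the list.

```javascript
// Hypothetical preset table built from the published defaults listed above.
const TEMPERATURE = Object.freeze({
  code: 0.0,
  math: 0.0,
  extraction: 0.0,
  analysis: 1.0,
  conversation: 1.3,
  translation: 1.3,
  creative: 1.5,
});

function temperatureFor(task) {
  // Fail loudly on typos rather than silently falling back to a default.
  if (!Object.hasOwn(TEMPERATURE, task)) {
    throw new Error(`Unknown task type: ${task}`);
  }
  return TEMPERATURE[task];
}

console.log(temperatureFor("translation")); // prints 1.3
```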

Step 4: Multi-turn conversation (state lives on the client)

Here’s the trap newcomers fall into. The DeepSeek web chat at chat.deepseek.com remembers your previous messages; the API does not. The API is stateless — you must resend the full message history with every request to sustain a multi-turn conversation. Build a small array and append to it:

```js
// chat.js
import { deepseek } from "./client.js";

// The full history is resent on every call; the API keeps no state.
const history = [
  { role: "system", content: "You are a concise senior engineer." },
];

async function ask(userText) {
  history.push({ role: "user", content: userText });
  const resp = await deepseek.chat.completions.create({
    model: "deepseek-v4-flash",
    messages: history,
    max_tokens: 500,
  });
  const reply = resp.choices[0].message;
  history.push({ role: "assistant", content: reply.content });
  return reply.content;
}

console.log(await ask("What is a circuit breaker?"));
console.log(await ask("Sketch one in TypeScript."));
```

For background on storing this server-side, see the DeepSeek API best practices reference.
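In a service with more than one user, the single `history` array becomes one array per conversation. The sketch below keeps histories in an in-memory `Map` keyed by a session ID. The names `sessions`, `historyFor` and the injected `send` function are hypothetical; in a real service `send` would wrap `deepseek.chat.completions.create` and return the reply text, and the `Map` would likely be replaced by a database or cache.

```javascript
// In-memory session store: one message array per conversation.
const sessions = new Map();
const SYSTEM = { role: "system", content: "You are a concise senior engineer." };

function historyFor(sessionId) {
  if (!sessions.has(sessionId)) sessions.set(sessionId, [SYSTEM]);
  return sessions.get(sessionId);
}

// `send(messages)` is injected so the sketch runs without a network call.
async function ask(sessionId, userText, send) {
  const history = historyFor(sessionId);
  history.push({ role: "user", content: userText });
  const reply = await send(history); // the full history goes out every time
  history.push({ role: "assistant", content: reply });
  return reply;
}

// Stand-in for the real API call, for demonstration only.
const fakeSend = async (messages) => `reply to ${messages.length} messages`;

ask("user-1", "What is a circuit breaker?", fakeSend)
  .then(() => ask("user-1", "Sketch one in TypeScript.", fakeSend))
  .then((reply) => console.log(reply));
```

Injecting `send` also makes the conversation logic unit-testable without spending tokens.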

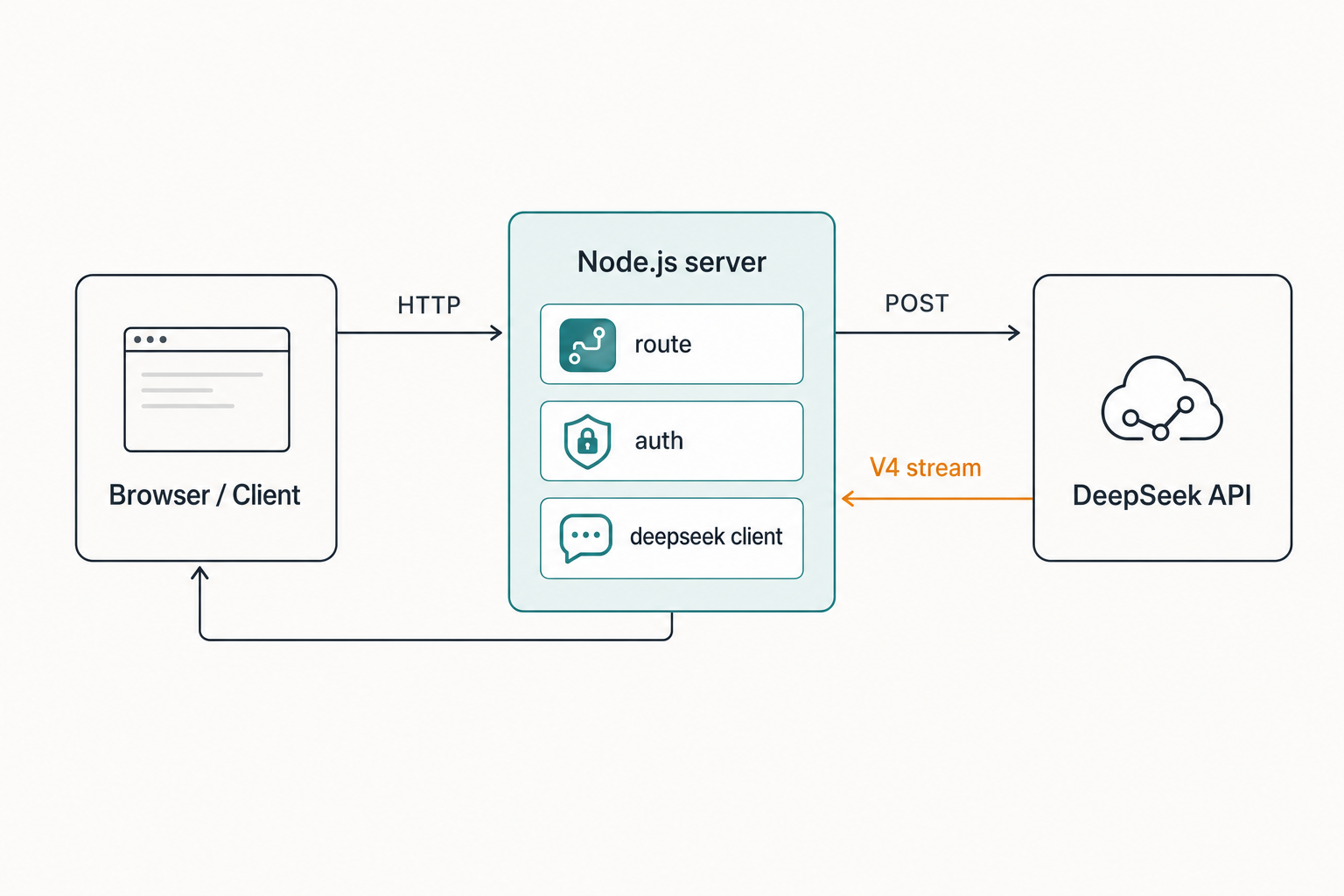

Step 5: Streaming responses

For chat UIs you want tokens as they arrive. Set stream: true and iterate the async iterable:

```js
// stream.js
import { deepseek } from "./client.js";

const stream = await deepseek.chat.completions.create({
  model: "deepseek-v4-flash",
  messages: [{ role: "user", content: "Write a haiku about caches." }],
  stream: true,
});

for await (const chunk of stream) {
  // Some chunks carry no text, so fall back to an empty string.
  process.stdout.write(chunk.choices[0]?.delta?.content ?? "");
}
process.stdout.write("\n");
```

Each chunk is a server-sent-event delta. For deeper detail on backpressure and Express/Fastify wiring, see DeepSeek API streaming.
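Most services need the complete reply as well as the live tokens, for logging or for appending to history. A minimal sketch of that pattern follows; `collectStream` is a hypothetical helper, and `fakeStream` is a stand-in that mimics the chunk shape used above so the example runs offline. Treating a chunk with an empty `choices` array as possible is an assumption carried over from the OpenAI wire format.

```javascript
// Accumulate streamed deltas into the full reply while forwarding each token.
async function collectStream(stream, onToken = () => {}) {
  let text = "";
  for await (const chunk of stream) {
    const piece = chunk.choices[0]?.delta?.content ?? "";
    if (piece) {
      text += piece;
      onToken(piece);
    }
  }
  return text;
}

// Stand-in for the SDK stream (same chunk shape, no network).
async function* fakeStream() {
  for (const piece of ["Cold ", "cache ", "warms."]) {
    yield { choices: [{ delta: { content: piece } }] };
  }
  yield { choices: [] }; // a chunk without choices must not throw
}

collectStream(fakeStream(), (token) => process.stdout.write(token)).then((full) => {
  process.stdout.write(`\n[collected ${full.length} characters]\n`);
});
```

With the real client, pass the object returned by `chat.completions.create({ ..., stream: true })` in place of `fakeStream()`.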

Step 6: Thinking mode

V4 makes thinking a request parameter, not a separate model ID. Both V4-Pro and V4-Flash accept it, and the response returns reasoning_content alongside the final content.

```js
// thinking.js
import { deepseek } from "./client.js";

const resp = await deepseek.chat.completions.create({
  model: "deepseek-v4-pro",
  messages: [
    { role: "user", content: "Plan a zero-downtime Postgres major-version upgrade." },
  ],
  reasoning_effort: "high",
  // The OpenAI SDK forwards unknown fields, so 'thinking' goes through verbatim.
  thinking: { type: "enabled" },
});

const msg = resp.choices[0].message;
console.log("--- reasoning ---");
console.log(msg.reasoning_content);
console.log("--- answer ---");
console.log(msg.content);
```

Use `reasoning_effort: "max"` when you need the longest reasoning budget; that mode requires `max_tokens` headroom up to 384K to avoid truncation. Do not feed `reasoning_content` back into the next turn’s `messages` array; only the final `content` belongs in conversation history.
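The rule about keeping `reasoning_content` out of history is easy to enforce with one function that every assistant message passes through before it is stored. A minimal sketch, where `toHistoryMessage` is a hypothetical helper and the sample reply is made-up data:

```javascript
// Return a copy of an assistant message that is safe to append to history.
function toHistoryMessage(message) {
  // Drop reasoning_content; keep role, content and any other fields (e.g. tool_calls).
  const { reasoning_content: _dropped, ...rest } = message;
  return rest;
}

// Made-up reply in the shape returned when thinking is enabled.
const reply = {
  role: "assistant",
  content: "Use logical replication, then cut over.",
  reasoning_content: "Long chain of thought...",
};

const history = [{ role: "user", content: "Plan the upgrade." }];
history.push(toHistoryMessage(reply));
console.log(history);
```

The original message object is left untouched, so the reasoning can still be logged separately.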

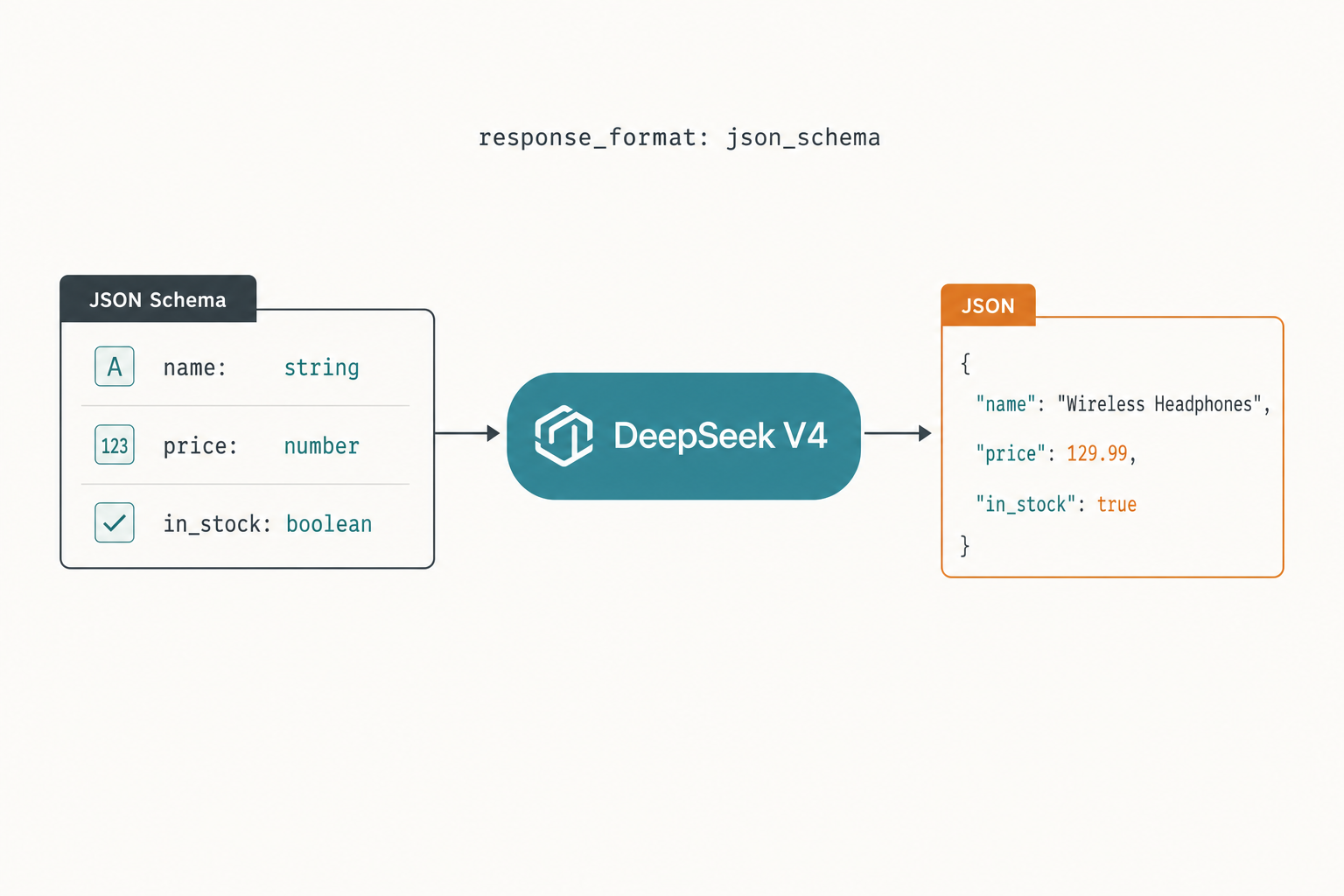

Step 7: JSON mode

JSON mode is designed to return valid JSON, not guaranteed. The DeepSeek docs are explicit that you must (1) include the word “json” in the prompt, (2) provide a small example schema in the prompt, and (3) set max_tokens high enough that the response cannot be truncated mid-object. The model may also occasionally return empty content — handle that.

```js
// json.js
import { deepseek } from "./client.js";

const resp = await deepseek.chat.completions.create({
  model: "deepseek-v4-flash",
  response_format: { type: "json_object" },
  messages: [
    {
      role: "system",
      content:
        'Reply as json. Schema example: {"sentiment":"pos|neg|neu","score":0.0}',
    },
    { role: "user", content: "The deploy went fine but the dashboards are down." },
  ],
  max_tokens: 500,
  temperature: 0,
});

// Content can be empty or truncated, so never parse it unguarded.
const raw = resp.choices[0].message.content ?? "";
let parsed;
try {
  parsed = JSON.parse(raw);
} catch {
  parsed = { error: "invalid_or_empty", raw };
}
console.log(parsed);
```

For schema validation, layer Zod or Ajv on top of `JSON.parse`. The DeepSeek API JSON mode reference covers retry strategies in detail.
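To show what the validation layer needs to check, here is a dependency-free sketch for the sentiment schema used in the prompt above. It is a hand-rolled stand-in for Zod or Ajv, not a replacement for them; `parseSentiment` and its `reason` codes are hypothetical names.

```javascript
const SENTIMENTS = new Set(["pos", "neg", "neu"]);

// Parse and validate a JSON-mode reply against {"sentiment": ..., "score": ...}.
function parseSentiment(raw) {
  if (typeof raw !== "string" || raw.trim() === "") {
    return { ok: false, reason: "empty" };
  }
  let data;
  try {
    data = JSON.parse(raw);
  } catch {
    return { ok: false, reason: "invalid_json" }; // e.g. truncated mid-object
  }
  if (data === null || typeof data !== "object" || Array.isArray(data)) {
    return { ok: false, reason: "not_object" };
  }
  if (!SENTIMENTS.has(data.sentiment)) {
    return { ok: false, reason: "bad_sentiment" };
  }
  if (typeof data.score !== "number" || !Number.isFinite(data.score)) {
    return { ok: false, reason: "bad_score" };
  }
  return { ok: true, value: { sentiment: data.sentiment, score: data.score } };
}

console.log(parseSentiment('{"sentiment":"neg","score":-0.4}'));
console.log(parseSentiment(""));
```

Returning a `reason` instead of throwing lets the caller decide which failures are worth a retry (empty or truncated output) and which indicate a prompt problem (wrong fields).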

Step 8: Tool calling

Tool calling uses the OpenAI-compatible tools array. Declare functions, run the model, then execute the tool and feed the result back.

```js
// tools.js
import { deepseek } from "./client.js";

const tools = [
  {
    type: "function",
    function: {
      name: "get_weather",
      description: "Get current weather for a city",
      parameters: {
        type: "object",
        properties: { city: { type: "string" } },
        required: ["city"],
      },
    },
  },
];

const messages = [{ role: "user", content: "Weather in Dublin?" }];

const first = await deepseek.chat.completions.create({
  model: "deepseek-v4-flash",
  messages,
  tools,
});

const call = first.choices[0].message.tool_calls?.[0];
if (call?.function.name === "get_weather") {
  const { city } = JSON.parse(call.function.arguments);
  // Hard-coded result for the demo; call your real weather source here.
  const toolResult = { city, tempC: 11, conditions: "drizzle" };

  // Append the assistant's tool request, then the tool's answer.
  messages.push(first.choices[0].message);
  messages.push({
    role: "tool",
    tool_call_id: call.id,
    content: JSON.stringify(toolResult),
  });

  const second = await deepseek.chat.completions.create({
    model: "deepseek-v4-flash",
    messages,
  });
  console.log(second.choices[0].message.content);
}
```

Tool calling works in both thinking and non-thinking modes. For agent-style loops, see DeepSeek API function calling.
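The example above handles a single, known tool call. Once there are several tools, a small dispatcher keeps the branching out of the request code. The sketch below is one possible shape, under the assumption (taken from the OpenAI wire format) that each entry in `tool_calls` should be answered with its own `role: "tool"` message; `handlers` and `runToolCalls` are hypothetical names, and the weather handler returns canned data.

```javascript
// Hypothetical registry: tool name -> async handler taking parsed arguments.
const handlers = {
  get_weather: async ({ city }) => ({ city, tempC: 11, conditions: "drizzle" }),
};

// Turn the model's tool_calls into the tool messages to append to `messages`.
async function runToolCalls(toolCalls = [], registry = handlers) {
  const results = [];
  for (const call of toolCalls) {
    const name = call.function.name;
    let payload;
    try {
      if (!Object.hasOwn(registry, name)) throw new Error(`Unknown tool: ${name}`);
      const args = JSON.parse(call.function.arguments || "{}");
      payload = await registry[name](args);
    } catch (err) {
      // Report the failure to the model instead of crashing the loop.
      payload = { error: String(err?.message ?? err) };
    }
    results.push({
      role: "tool",
      tool_call_id: call.id,
      content: JSON.stringify(payload),
    });
  }
  return results;
}

// Canned tool call in the shape the API returns, for demonstration only.
runToolCalls([
  { id: "call_1", function: { name: "get_weather", arguments: '{"city":"Dublin"}' } },
]).then((toolMessages) => console.log(toolMessages));
```

In the request flow you would push the assistant message first, then `messages.push(...(await runToolCalls(message.tool_calls)))` before the follow-up call.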

Step 9: Cost logging

Wrap the client so every call writes a cost line. Costs depend on three token buckets — cache hit, cache miss, and output — and on the tier you chose. As of April 2026, V4-Flash lists $0.0028 / $0.14 / $0.28 per million tokens (cache hit / cache miss / output), and V4-Pro lists $0.0145 / $1.74 / $3.48 (currently 75% off through 2026-05-31, making the effective rates $0.003625 / $0.435 / $0.87). Confirm current numbers on the DeepSeek API pricing page before locking in a budget.

```js
// cost.js
// List prices in USD per million tokens (cache hit / cache miss / output).
const FLASH = { hit: 0.0028, miss: 0.14, out: 0.28 };
const PRO = { hit: 0.0145, miss: 1.74, out: 3.48 };

export function priceUSD(usage, model) {
  const r = model.startsWith("deepseek-v4-pro") ? PRO : FLASH;
  // Cached prompt tokens are billed at the hit rate, the rest at the miss rate.
  const hit = usage.prompt_tokens_details?.cached_tokens ?? 0;
  const miss = usage.prompt_tokens - hit;
  const out = usage.completion_tokens;
  return (hit * r.hit + miss * r.miss + out * r.out) / 1_000_000;
}
```

Worked example: V4-Flash at scale

1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message (uncached against that prefix), and a 300-token reply:

- Cached input: 2,000,000,000 × $0.0028/M = $5.60

- Uncached input: 200,000,000 × $0.14/M = $28.00

- Output: 300,000,000 × $0.28/M = $84.00

- Total: $117.60

The same workload on V4-Pro at list price costs $29.00 + $348.00 + $1,044.00 = $1,421.00, roughly twelve times the spend. Default to Flash; promote individual call sites to Pro only when measurements justify it.
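The arithmetic above can be reproduced in a few lines, which is handy when you want to plug in your own traffic shape. This sketch uses the list prices quoted in this section (confirm them before budgeting); `workloadCostUSD` and the field names of `workload` are hypothetical.

```javascript
// List prices in USD per million tokens, as quoted in the text above.
const RATES = {
  "deepseek-v4-flash": { hit: 0.0028, miss: 0.14, out: 0.28 },
  "deepseek-v4-pro": { hit: 0.0145, miss: 1.74, out: 3.48 },
};

// Total cost of `calls` identical requests with the given per-call token counts.
function workloadCostUSD(model, { calls, cachedIn, uncachedIn, outTokens }) {
  const r = RATES[model];
  if (!r) throw new Error(`No rates for model: ${model}`);
  const perCall =
    (cachedIn * r.hit + uncachedIn * r.miss + outTokens * r.out) / 1_000_000;
  return perCall * calls;
}

const workload = { calls: 1_000_000, cachedIn: 2000, uncachedIn: 200, outTokens: 300 };
const flash = workloadCostUSD("deepseek-v4-flash", workload);
const pro = workloadCostUSD("deepseek-v4-pro", workload);

console.log(`Flash: $${flash.toFixed(2)}`); // Flash: $117.60
console.log(`Pro:   $${pro.toFixed(2)}`); // Pro:   $1421.00
console.log(`Ratio: ${(pro / flash).toFixed(1)}x`); // Ratio: 12.1x
```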

Step 10: Verify it worked

Three quick checks before you call this done:

- Run `node hello.js` and confirm a 200 OK response with a non-empty `content` field.
- Hit `GET /models` with `fetch` and confirm both `deepseek-v4-pro` and `deepseek-v4-flash` appear in the list.
- Make a deliberate 401 (bad key) and a deliberate 400 (empty messages) call, and confirm your error handler logs both clearly.
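The model-list check can be scripted. The sketch below assumes the endpoint lives at `https://api.deepseek.com/models`, accepts a `Bearer` token, and returns the OpenAI-style shape `{ data: [{ id }] }`; those details are assumptions based on the OpenAI-compatible format, so verify them against the official documentation. `listModelIds` and `missingModels` are hypothetical helper names.

```javascript
// Fetch the model list and return just the IDs.
// `fetchImpl` is injectable so the function can be tested without a network.
async function listModelIds(apiKey, fetchImpl = fetch) {
  const res = await fetchImpl("https://api.deepseek.com/models", {
    headers: { Authorization: `Bearer ${apiKey}` },
  });
  if (!res.ok) {
    throw new Error(`GET /models failed with HTTP ${res.status}`);
  }
  const body = await res.json();
  return (body.data ?? []).map((model) => model.id);
}

// Report which of the expected V4 IDs are absent from the list.
function missingModels(ids, expected = ["deepseek-v4-pro", "deepseek-v4-flash"]) {
  return expected.filter((id) => !ids.includes(id));
}

// Usage (needs a real key and network access):
// listModelIds(process.env.DEEPSEEK_API_KEY)
//   .then((ids) => console.log("missing:", missingModels(ids)));
```

An empty `missing` array means both V4 IDs are visible to your key.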

Common errors and fixes

| Symptom | Cause | Fix |
|---|---|---|
| 401 Unauthorized | Bad or missing key | Check `.env` loads before the client module runs; print `process.env.DEEPSEEK_API_KEY?.slice(0,5)` |
| 404 Not Found | Wrong base URL or model ID typo | Use `https://api.deepseek.com` and explicit V4 IDs |
| 429 Too Many Requests | Rate limit hit | Exponential backoff; SDK `maxRetries` covers transient cases |
| Empty `content` in JSON mode | Truncation or model edge case | Raise `max_tokens`; include “json” + example in the prompt; retry once |
| HTTP 400 on second turn | `reasoning_content` sent back in `messages` | Strip it before appending; only keep `content` |
| Hangs at ~30s | Default fetch timeout on long thinking calls | Set `timeout: 120_000` on the client |

For a fuller mapping, see DeepSeek API error codes.
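For the 429 row, the SDK’s `maxRetries` handles brief spikes; if you need control over the schedule, a wrapper like the one below is one option. It is a sketch under stated assumptions: that thrown errors expose the HTTP status on `err.status`, and that 429 and 5xx responses are the ones worth retrying. `withBackoff`, `isRetryable` and `defaultSleep` are hypothetical names.

```javascript
// Sleep helper; injectable so tests can skip real waiting.
function defaultSleep(ms) {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Assumption: errors carry an HTTP status on `err.status`.
function isRetryable(err) {
  const status = err?.status;
  return status === 429 || (typeof status === "number" && status >= 500);
}

// Retry `fn` with exponential backoff plus jitter; rethrow anything else.
async function withBackoff(fn, { retries = 3, baseMs = 500, sleep = defaultSleep } = {}) {
  for (let attempt = 0; ; attempt += 1) {
    try {
      return await fn();
    } catch (err) {
      if (!isRetryable(err) || attempt >= retries) throw err;
      // Delay grows as baseMs, 2x, 4x, ... with up to baseMs of random jitter.
      const delay = baseMs * 2 ** attempt + Math.random() * baseMs;
      await sleep(delay);
    }
  }
}

// Usage with the client from Step 2 (not executed here):
// const resp = await withBackoff(() =>
//   deepseek.chat.completions.create({ model: "deepseek-v4-flash", messages }));
```

If you adopt a wrapper like this, consider setting `maxRetries: 0` on the client so the two retry layers do not multiply.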

Next steps

- Add prefix caching deliberately — see DeepSeek context caching.

- Wire it into a chat UI — the DeepSeek Streamlit app tutorial covers the streaming UX patterns even if you port them to React.

- Compare the SDK ergonomics across languages in our DeepSeek OpenAI SDK compatibility notes.

- Browse more DeepSeek tutorials for adjacent integrations like Discord bots and RAG pipelines.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How do I integrate DeepSeek with Node.js?

Install the official OpenAI SDK with npm install openai, instantiate it with baseURL: "https://api.deepseek.com" and your DeepSeek API key, then call chat.completions.create with model: "deepseek-v4-flash" or "deepseek-v4-pro". The wire format is identical to OpenAI’s, so existing code paths transfer with a one-line config change. Full walkthrough in our DeepSeek API getting started guide.

What model ID should I use in 2026?

Use deepseek-v4-flash for most workloads and deepseek-v4-pro when you need frontier-tier reasoning or agentic coding. The older deepseek-chat and deepseek-reasoner aliases still work but retire on July 24, 2026 at 15:59 UTC, after which requests using them will fail. Background on the lineage in our DeepSeek V4 overview.

Does DeepSeek’s API remember previous messages?

No. The API is stateless — you must resend the full conversation history on every request. The web chat at chat.deepseek.com keeps session history for users, but the developer API does not store anything between calls. Maintain a messages array in your Node service and append each user and assistant turn before the next request. See DeepSeek API documentation.

Can I stream responses to a Node.js client?

Yes. Pass stream: true to chat.completions.create and iterate the returned async iterable. Each chunk is a server-sent-event delta with the next text fragment in chunk.choices[0].delta.content. When thinking mode is enabled the reasoning content streams alongside the final content. See DeepSeek API streaming for SSE plumbing across Express and Fastify.

How much does a typical Node.js app cost on DeepSeek?

It depends on tier and caching. As a worked example on `deepseek-v4-flash`, one million calls with a 2,000-token cached system prompt, 200-token uncached user input and 300-token reply cost about $117.60 in total: $5.60 cached input, $28 uncached input, $84 output. The same workload on V4-Pro at list price is roughly twelve times that ($1,421). Confirm current rates on the DeepSeek API pricing page.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.