How to Build a DeepSeek Streamlit App in Python

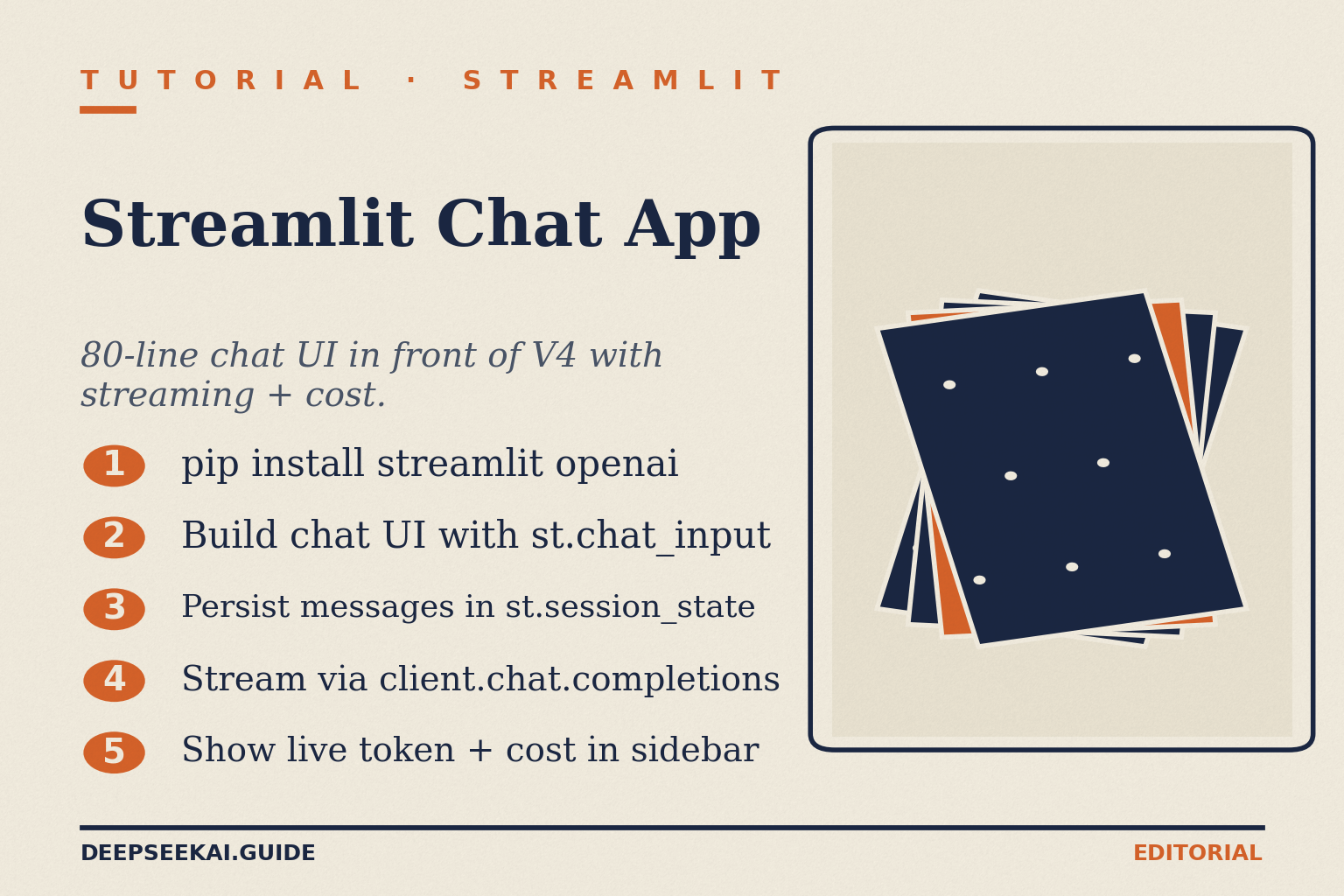

You want a working chat UI in front of DeepSeek without spending a weekend wiring up React, websockets and a backend. A DeepSeek Streamlit app gets you there in roughly 90 lines of Python: a text input, streamed responses, a model picker for the V4 tiers, and a running cost meter. That is the project this tutorial walks through end to end.

By the end you will have a single `app.py` that talks to `deepseek-v4-flash` for cheap default chat, switches to `deepseek-v4-pro` when you need frontier reasoning, toggles thinking mode, streams tokens as they arrive, and shows the live cost per conversation. The code runs locally and deploys to Streamlit Community Cloud unchanged.

What you’ll build

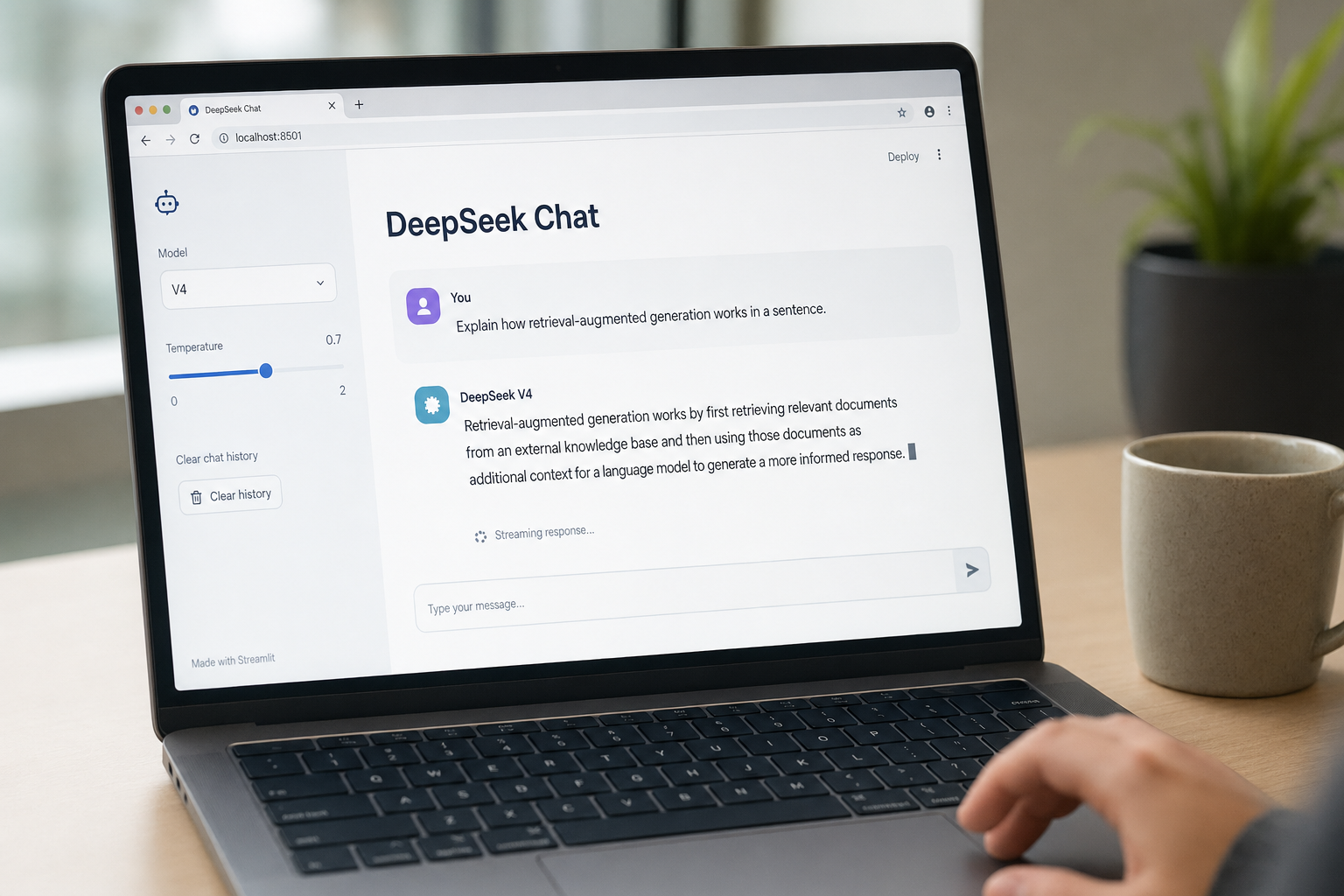

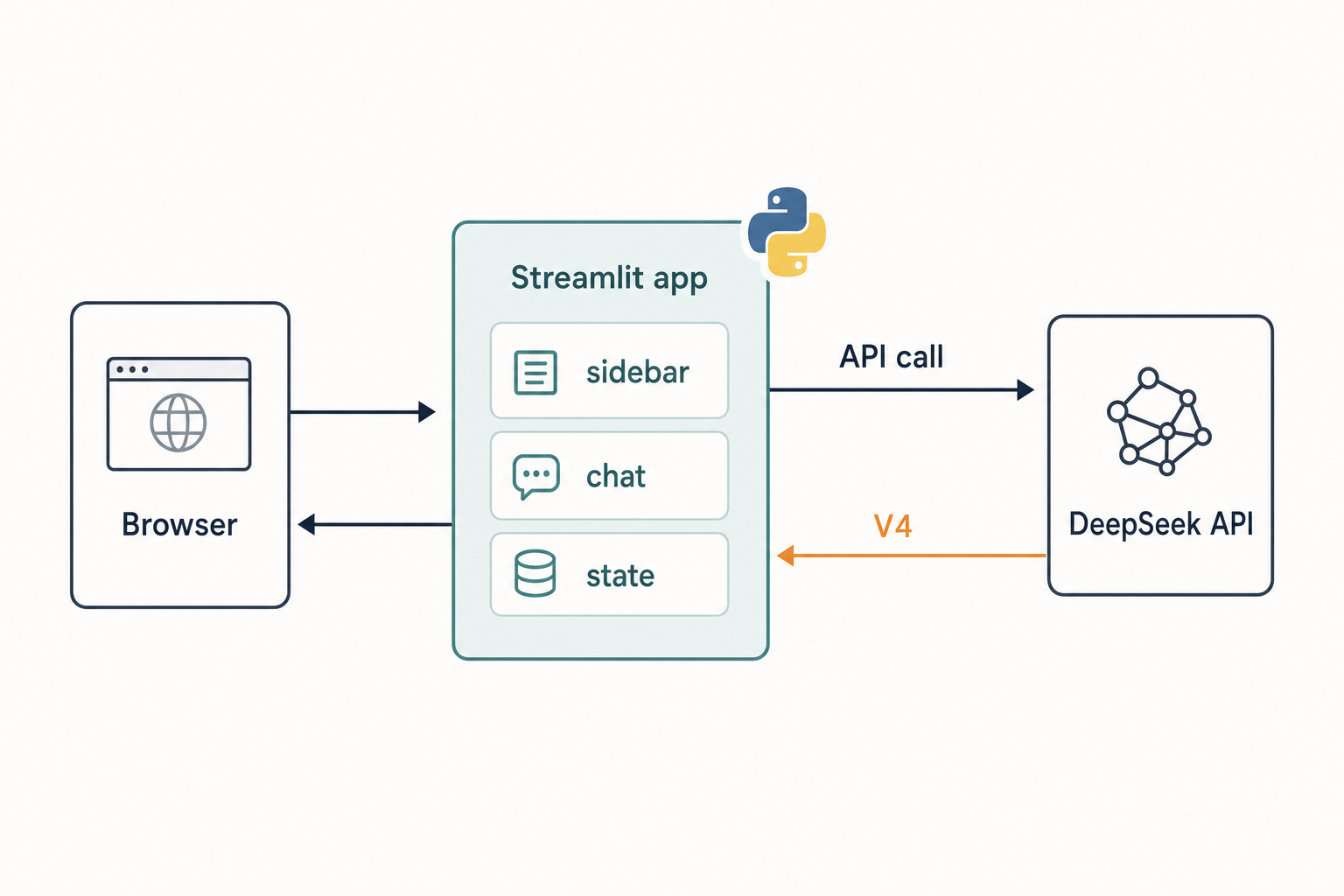

The finished app is a single-file Streamlit chat that talks to DeepSeek’s OpenAI-compatible API. It supports both V4 tiers, a thinking-mode toggle, server-sent-events streaming, conversation persistence within the session, and a sidebar that totals the session cost in dollars as you chat. Chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint at `https://api.deepseek.com`, so the official OpenAI Python SDK works unchanged once you swap `base_url` and `api_key`.

Two design choices matter up front. First, the DeepSeek API is stateless — the server does not remember prior turns, so the client has to resend the full messages array every request. Streamlit’s st.session_state is exactly the right place to keep that history. Second, V4 ships as a family of two open-weight MoE models with shared features — deepseek-v4-pro (1.6T total / 49B active) for frontier work and deepseek-v4-flash (284B / 13B active) for cost-efficient chat — and thinking is a request parameter, not a separate model ID. We will expose both choices in the sidebar.
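To make the stateless point concrete, here is a minimal sketch of the "resend everything" pattern. A plain list stands in for `st.session_state.messages`, and `add_turn` and `build_request` are hypothetical helper names used only for illustration; they are not part of Streamlit or the OpenAI SDK.

```python
from typing import Dict, List

Message = Dict[str, str]


def add_turn(history: List[Message], role: str, content: str) -> None:
    """Append one chat turn to the running history (mutates the list)."""
    history.append({"role": role, "content": content})


def build_request(history: List[Message], model: str) -> dict:
    """Build a request payload; the full history is included every time."""
    return {"model": model, "messages": list(history)}


history: List[Message] = []
add_turn(history, "user", "What is Streamlit?")
add_turn(history, "assistant", "A Python framework for data apps.")
add_turn(history, "user", "Does it support chat UIs?")

# The third request carries all three turns, not just the newest one.
request = build_request(history, "deepseek-v4-flash")
print(len(request["messages"]))  # 3
```

The practical consequence is that input tokens grow with every turn, which is why the cost meter matters more in long conversations.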

Prerequisites

- Python 3.10 or newer with `pip` available on your PATH.
- A DeepSeek API key. If you do not have one yet, follow the get a DeepSeek API key walkthrough before continuing.
- A virtual environment tool: `venv`, `uv` or `poetry`. We will use `venv`.
- About 5 minutes and roughly $0.01 of API credit to test end to end on V4-Flash.
- Optional but recommended: a free Streamlit Community Cloud account for deployment.

If this is your first time hitting the API at all, run through DeepSeek API getting started first — it covers authentication and a minimal curl call before you wrap anything in a UI.

Step 1: Create the project and install dependencies

Open a terminal and create a clean project folder. The commands below are bash; PowerShell users can swap the activation line.

    mkdir deepseek-streamlit && cd deepseek-streamlit
    python -m venv .venv
    source .venv/bin/activate   # Windows: .venv\Scripts\activate
    pip install streamlit openai tiktoken

Three packages, nothing else. `streamlit` is the UI framework, `openai` is the official SDK we point at DeepSeek’s base URL, and `tiktoken` gives a reasonable client-side token estimate when you want to size a prompt before sending it (the cost meter in Step 3 relies on the usage counts the API returns). DeepSeek’s tokenizer is not byte-identical to OpenAI’s, so treat a `tiktoken` count as an estimate within a few percent, or use the DeepSeek token counter for a sanity check before you commit pricing to a customer.

Step 2: Store the API key safely

Streamlit reads secrets from .streamlit/secrets.toml locally and from the dashboard in production. Create the file:

    mkdir -p .streamlit
    cat > .streamlit/secrets.toml <<'EOF'
    DEEPSEEK_API_KEY = "sk-your-key-here"
    EOF
    echo ".venv/" > .gitignore
    echo ".streamlit/secrets.toml" >> .gitignore

The `.gitignore` entry matters. Committing `secrets.toml` to GitHub is the single most common way readers leak a key. If you prefer environment variables, export `DEEPSEEK_API_KEY` and the code below will read either source.
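The "either source" lookup can be sketched as a small pure function. `resolve_api_key` is a hypothetical helper, not a Streamlit API; it takes any mapping for the secrets so it can be exercised with plain dictionaries, and it strips stray whitespace, which is a common cause of authentication failures after copy-pasting a key.

```python
import os
from typing import Mapping, Optional


def resolve_api_key(secrets: Mapping[str, str],
                    environ: Mapping[str, str] = os.environ,
                    name: str = "DEEPSEEK_API_KEY") -> Optional[str]:
    """Return the key from secrets first, then the environment, else None."""
    value = secrets.get(name) or environ.get(name)
    return value.strip() if value else None


# Plain dicts stand in for st.secrets and os.environ here.
from_secrets = resolve_api_key({"DEEPSEEK_API_KEY": "sk-from-secrets "}, {})
from_env = resolve_api_key({}, {"DEEPSEEK_API_KEY": "sk-from-env"})
missing = resolve_api_key({}, {})
print(from_secrets, from_env, missing)  # sk-from-secrets sk-from-env None
```

Secrets win over the environment in this sketch, so a value set in the deployment dashboard overrides a stale shell export.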

Step 3: Write the chat app

Create app.py with the following Python. It is roughly 90 lines and covers everything: model selection, thinking toggle, streaming, history, and live cost.

import os

import streamlit as st

from openai import OpenAI

# --- Pricing (USD per 1M tokens) — update from the official pricing page ---

PRICING = {

"deepseek-v4-flash": {"in_miss": 0.14, "in_hit": 0.028, "out": 0.28},

"deepseek-v4-pro": {"in_miss": 1.74, "in_hit": 0.145, "out": 3.48},

}

st.set_page_config(page_title="DeepSeek Chat", page_icon="·", layout="centered")

st.title("DeepSeek Streamlit App")

# --- Sidebar: model, thinking, temperature, cost ---

with st.sidebar:

model = st.selectbox("Model", list(PRICING.keys()), index=0)

thinking = st.toggle("Thinking mode", value=False,

help="Enables reasoning_effort=high")

temperature = st.slider("Temperature", 0.0, 2.0, 1.3, 0.1,

help="0.0 code/math, 1.0 analysis, 1.3 chat, 1.5 creative")

max_tokens = st.number_input("Max output tokens", 256, 8192, 1024, 256)

if st.button("Clear conversation"):

st.session_state.messages = []

st.session_state.cost = 0.0

st.rerun()

st.metric("Session cost (USD)", f"${st.session_state.get('cost', 0.0):.4f}")

# --- Client (OpenAI SDK pointed at DeepSeek) ---

api_key = st.secrets.get("DEEPSEEK_API_KEY") or os.getenv("DEEPSEEK_API_KEY")

if not api_key:

st.error("Set DEEPSEEK_API_KEY in .streamlit/secrets.toml or your environment.")

st.stop()

client = OpenAI(base_url="https://api.deepseek.com", api_key=api_key)

# --- State ---

if "messages" not in st.session_state:

st.session_state.messages = []

if "cost" not in st.session_state:

st.session_state.cost = 0.0

# --- Replay prior turns ---

for m in st.session_state.messages:

with st.chat_message(m["role"]):

st.markdown(m["content"])

# --- Handle new input ---

if prompt := st.chat_input("Ask DeepSeek anything"):

st.session_state.messages.append({"role": "user", "content": prompt})

with st.chat_message("user"):

st.markdown(prompt)

# Build the request — stateless, so we resend full history every time.

kwargs = {

"model": model,

"messages": st.session_state.messages,

"temperature": temperature,

"max_tokens": max_tokens,

"stream": True,

"stream_options": {"include_usage": True},

}

if thinking:

kwargs["reasoning_effort"] = "high"

kwargs["extra_body"] = {"thinking": {"type": "enabled"}}

with st.chat_message("assistant"):

placeholder = st.empty()

thinking_box = st.expander("Reasoning", expanded=False) if thinking else None

full_text, reasoning_text, usage = "", "", None

stream = client.chat.completions.create(**kwargs)

for chunk in stream:

if chunk.usage:

usage = chunk.usage

if not chunk.choices:

continue

delta = chunk.choices[0].delta

if getattr(delta, "reasoning_content", None) and thinking_box:

reasoning_text += delta.reasoning_content

thinking_box.markdown(reasoning_text)

if delta.content:

full_text += delta.content

placeholder.markdown(full_text + " ")

placeholder.markdown(full_text)

st.session_state.messages.append({"role": "assistant", "content": full_text})

# --- Cost calculation: enumerate all three buckets ---

if usage:

rates = PRICING[model]

cached = getattr(usage, "prompt_cache_hit_tokens", 0) or 0

miss = usage.prompt_tokens - cached

cost = (cached * rates["in_hit"]

+ miss * rates["in_miss"]

+ usage.completion_tokens * rates["out"]) / 1_000_000

st.session_state.cost += cost

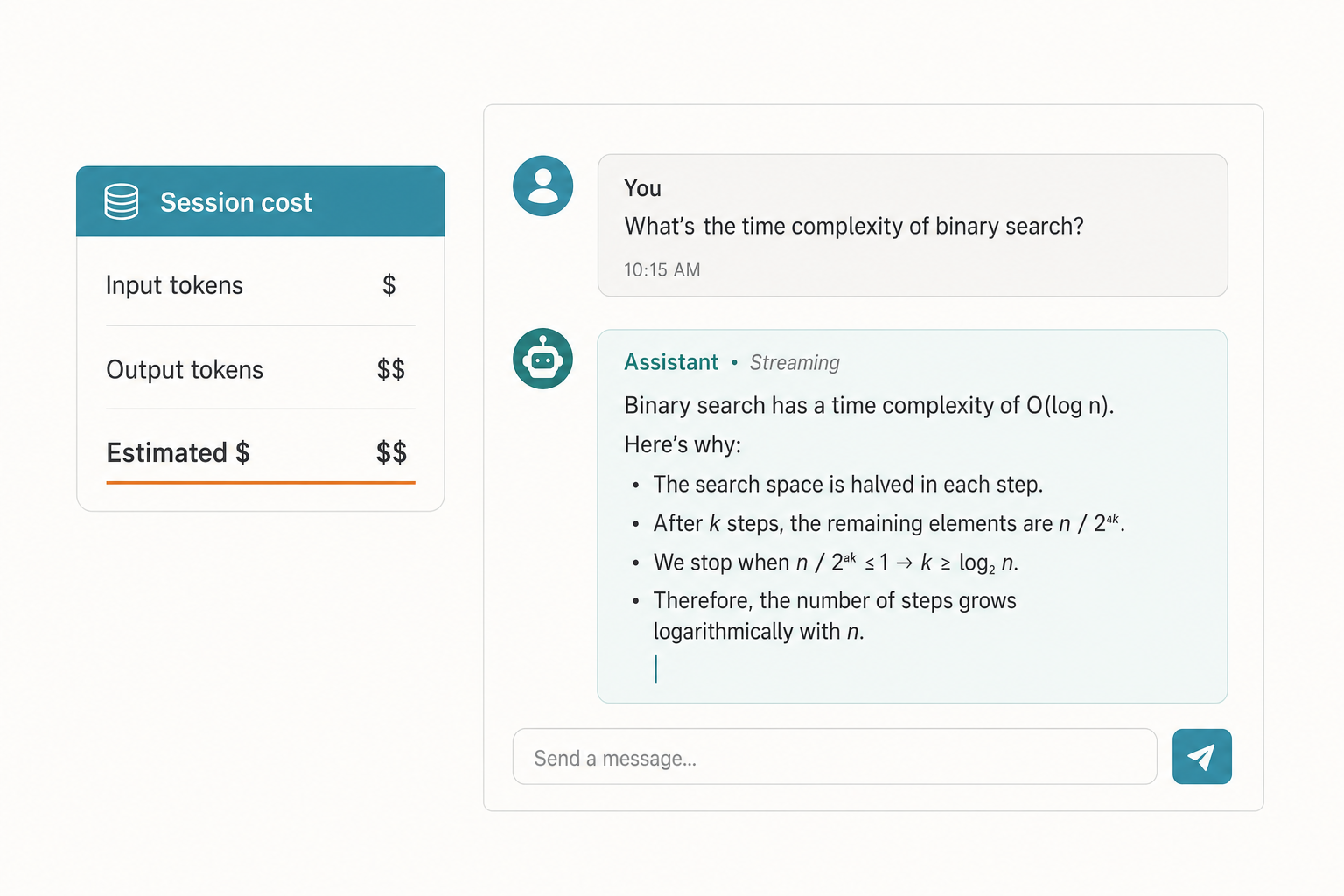

st.rerun()A few things worth flagging in that code. We pass stream_options={"include_usage": True} so DeepSeek returns a final usage chunk with prompt and completion token counts — that is what makes the live cost meter accurate rather than an estimate. The reasoning_content handling is the V4 thinking-mode contract: when thinking is enabled, the stream returns reasoning_content alongside the final content, and we render the trace in a collapsible expander so it does not dominate the UI.
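The streaming loop is easier to reason about once it is separated from the UI. The sketch below runs the same accumulation logic over stand-in chunk objects; `consume_stream` and `fake_chunk` are hypothetical names, and the fake chunks only mimic the attribute layout the app reads (`choices[0].delta.content`, `delta.reasoning_content`, `usage`), so treat it as an illustration rather than the SDK's own types.

```python
from types import SimpleNamespace as NS


def consume_stream(chunks):
    """Accumulate answer text, reasoning text, and the usage record."""
    full_text, reasoning_text, usage = "", "", None
    for chunk in chunks:
        if getattr(chunk, "usage", None):
            usage = chunk.usage      # expected on the final chunk
        if not chunk.choices:
            continue                 # a usage-only chunk carries no choices
        delta = chunk.choices[0].delta
        reasoning_text += getattr(delta, "reasoning_content", None) or ""
        full_text += getattr(delta, "content", None) or ""
    return full_text, reasoning_text, usage


def fake_chunk(content=None, reasoning=None, usage=None, with_choice=True):
    """Build a stand-in for one streamed chunk (test fixture, not SDK code)."""
    delta = NS(content=content, reasoning_content=reasoning)
    choices = [NS(delta=delta)] if with_choice else []
    return NS(choices=choices, usage=usage)


stream = [
    fake_chunk(reasoning="Think: "),
    fake_chunk(reasoning="say pong."),
    fake_chunk(content="po"),
    fake_chunk(content="ng"),
    fake_chunk(usage=NS(prompt_tokens=12, completion_tokens=5), with_choice=False),
]
text, reasoning, usage = consume_stream(stream)
print(text)  # pong
```

Keeping the accumulation in a plain function like this also makes it testable without a browser or an API key.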

Step 4: Run it locally

From the project folder:

    streamlit run app.py

Streamlit prints a local URL (usually http://localhost:8501) and opens it in your browser. Type a question, watch the response stream in token by token, and check that the sidebar cost ticks up. With V4-Flash, a short conversation should cost a fraction of a cent.

Verify it worked

- Send “Reply with the single word: pong.” You should see `pong` stream in.
- Switch the sidebar model to `deepseek-v4-pro` and ask a multi-step reasoning question. Confirm the response arrives and the session cost jumps by roughly a factor of twelve compared to Flash for similar token counts (the list rates differ by about 12.4× on both input and output).
- Toggle Thinking mode on and ask a planning question. The “Reasoning” expander should populate with the trace, and the final answer should follow in the main message body.
- Click Clear conversation and confirm both history and the cost meter reset.

Step 5: Cost worked example

Suppose your Streamlit app handles 1,000,000 chat calls per month against deepseek-v4-flash, with a 2,000-token system prompt that DeepSeek’s context cache picks up after the first call, a 200-token user message each turn, and a 300-token reply. The math, with all three buckets enumerated:

| Bucket | Tokens | Rate (per 1M) | Cost |
|---|---|---|---|
| Input, cache hit | 2,000,000,000 | $0.0028 | $5.60 |
| Input, cache miss | 200,000,000 | $0.14 | $28.00 |
| Output | 300,000,000 | $0.28 | $84.00 |
| Total | | | $117.60 |

Run the same workload on `deepseek-v4-pro` and the total climbs to roughly $1,421, about twelve times more, because Pro lists $0.0145 cache-hit, $1.74 cache-miss and $3.48 output per 1M tokens (currently $0.003625 / $0.435 / $0.87 during the 75% promo through 2026-05-31). Pricing rates are as of April 2026; verify against the DeepSeek API pricing page before quoting them in production. For larger sweeps the DeepSeek pricing calculator is faster than rebuilding the table by hand.

Two things to note. Each new user message is a cache miss against the cached prefix, even when the system prompt is fully cached — that is why the uncached-input line is non-zero. And the off-peak discount that older tutorials reference ended on September 5, 2025; do not budget around it.
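The arithmetic in this step can be reproduced in a few lines. This is a sketch using the per-1M list rates quoted above; `monthly_cost` is a hypothetical helper, and the token arguments are per-call counts.

```python
RATES = {  # USD per 1M tokens, list prices as quoted in this step
    "deepseek-v4-flash": {"in_hit": 0.0028, "in_miss": 0.14, "out": 0.28},
    "deepseek-v4-pro": {"in_hit": 0.0145, "in_miss": 1.74, "out": 3.48},
}


def monthly_cost(model, calls, cached_in, uncached_in, out_tokens):
    """Return the monthly USD cost, enumerating all three token buckets."""
    r = RATES[model]
    per_call = (cached_in * r["in_hit"]
                + uncached_in * r["in_miss"]
                + out_tokens * r["out"]) / 1_000_000
    return round(per_call * calls, 2)


# 1,000,000 calls; 2,000 cached + 200 uncached input tokens, 300 output tokens.
flash = monthly_cost("deepseek-v4-flash", 1_000_000, 2_000, 200, 300)
pro = monthly_cost("deepseek-v4-pro", 1_000_000, 2_000, 200, 300)
print(f"${flash:,.2f}  ${pro:,.2f}")  # $117.60  $1,421.00
```

Note that this model, like the worked example, ignores the growing conversation history that a real multi-turn chat resends, so treat it as a floor rather than a forecast.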

Step 6: Add useful extras

JSON mode for structured output

If you want the assistant to return structured data, such as a form extraction or a classification result, set `response_format={"type": "json_object"}` in the request kwargs. DeepSeek’s JSON mode is designed to return valid JSON but does not guarantee it: it can occasionally return empty content, the prompt must include the word “json” plus a small example schema, and you should set `max_tokens` high enough to avoid truncation. Always wrap `json.loads` in a try/except and fall back gracefully.
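A defensive parser for JSON-mode replies might look like the sketch below. `parse_json_reply` is a hypothetical helper name; it covers the two failure modes mentioned above, empty content and truncated or otherwise invalid JSON.

```python
import json
from typing import Any, Optional


def parse_json_reply(content: Optional[str], fallback: Any = None) -> Any:
    """Parse a JSON-mode reply, returning `fallback` on empty or invalid text."""
    if not content or not content.strip():
        return fallback              # the model returned empty content
    try:
        return json.loads(content)
    except json.JSONDecodeError:
        return fallback              # truncated or malformed JSON


good = parse_json_reply('{"label": "spam", "score": 0.93}')
empty = parse_json_reply("", fallback={})
truncated = parse_json_reply('{"label": "sp', fallback={})
print(good["label"], empty, truncated)  # spam {} {}
```

In the app, a fallback result is a reasonable trigger for one retry with a higher `max_tokens` before showing the user an error.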

Tool calling

The app above is text-only, but DeepSeek supports OpenAI-format function calling on both V4 tiers in either thinking or non-thinking mode. Pass a tools=[...] list and handle tool_calls deltas in the stream. For a fuller pattern, the DeepSeek Python integration tutorial covers tool dispatch end to end.
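When streaming, tool calls arrive as fragments that have to be stitched together before the arguments can be parsed. The sketch below assumes the OpenAI-format delta layout (`index`, `id`, `function.name`, `function.arguments`) and uses stand-in objects instead of real SDK chunks; `get_weather` and `merge_tool_call_deltas` are hypothetical names for illustration.

```python
import json
from types import SimpleNamespace as NS

# An OpenAI-format tool definition; get_weather is a made-up example tool.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "get_weather",
        "description": "Look up the current weather for a city.",
        "parameters": {
            "type": "object",
            "properties": {"city": {"type": "string"}},
            "required": ["city"],
        },
    },
}]


def merge_tool_call_deltas(deltas):
    """Merge streamed tool_call fragments into complete calls, keyed by index."""
    calls = {}
    for tc in deltas:
        slot = calls.setdefault(tc.index, {"id": None, "name": "", "arguments": ""})
        if getattr(tc, "id", None):
            slot["id"] = tc.id
        fn = getattr(tc, "function", None)
        if fn is not None:
            slot["name"] += getattr(fn, "name", None) or ""
            slot["arguments"] += getattr(fn, "arguments", None) or ""
    return [calls[i] for i in sorted(calls)]


# Three fragments of one call, as they might arrive across chunks.
deltas = [
    NS(index=0, id="call_1", function=NS(name="get_weather", arguments="")),
    NS(index=0, id=None, function=NS(name=None, arguments='{"city": ')),
    NS(index=0, id=None, function=NS(name=None, arguments='"Paris"}')),
]
calls = merge_tool_call_deltas(deltas)
args = json.loads(calls[0]["arguments"])
print(calls[0]["name"], args)  # get_weather {'city': 'Paris'}
```

In the app you would collect `delta.tool_calls` inside the existing stream loop, merge them after the loop ends, run the matching Python function, and append the result as a `tool` message before calling the API again.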

RAG over your own documents

To let users chat with their own files, layer a retrieval step before the chat call: embed documents, query a vector store, and inject the top chunks into the system message. The DeepSeek RAG tutorial walks through that pattern with the same OpenAI-SDK pointer at DeepSeek’s base URL.
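The "inject the top chunks" step can be sketched without any vector database. In the example below a toy keyword-overlap score stands in for the embedding and vector-store lookup, which is an assumption made purely to keep the sketch self-contained; `top_chunks` and `build_rag_messages` are hypothetical helpers.

```python
def top_chunks(question, documents, k=2):
    """Toy retrieval: rank documents by shared lowercase words with the question."""
    q_words = set(question.lower().split())
    ranked = sorted(documents,
                    key=lambda d: len(q_words & set(d.lower().split())),
                    reverse=True)
    return ranked[:k]


def build_rag_messages(question, chunks,
                       base_prompt="You are a helpful assistant."):
    """Put the retrieved chunks into the system message, then ask the question."""
    context = "\n\n".join(f"[{i}] {c}" for i, c in enumerate(chunks, start=1))
    system = f"{base_prompt}\n\nAnswer using only the context below.\n\n{context}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": question},
    ]


documents = [
    "Refunds are issued within 14 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]
question = "How many days do refunds take?"
messages = build_rag_messages(question, top_chunks(question, documents, k=1))
print(messages[0]["content"].splitlines()[-1])  # [1] Refunds are issued within 14 days of purchase.
```

A real pipeline would replace `top_chunks` with an embedding query; the message-building half stays the same.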

Common errors and fixes

| Symptom | Likely cause | Fix |
|---|---|---|
| `AuthenticationError: 401` | Missing or wrong API key | Re-check `.streamlit/secrets.toml`; confirm the key starts with `sk-` and was copied without trailing whitespace. |
| `Model not found` for `deepseek-chat` | Using a legacy ID after migration | Switch to `deepseek-v4-flash`. Legacy IDs `deepseek-chat` and `deepseek-reasoner` currently route to V4-Flash but retire on 2026-07-24 at 15:59 UTC. |
| Streaming hangs, no tokens render | Streamlit `placeholder.markdown` not flushing inside the loop | Confirm you are calling `placeholder.markdown(full_text + " ")` inside the for-loop, not after it. |
| JSON mode returns empty content | Prompt lacks the word “json” or `max_tokens` too low | Add an example schema in the prompt; raise `max_tokens`; wrap parsing in try/except. |
| Cost meter stays at $0.00 | Usage chunk not requested | Pass `stream_options={"include_usage": True}`; check the final chunk has `chunk.usage` populated. |
| 429 rate-limit errors under load | Concurrent users on a low quota | Add exponential backoff; review DeepSeek API rate limits. |
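For the 429 row, exponential backoff can be sketched as a small wrapper. `call_with_backoff` is a hypothetical helper; the delays, retry count and jitter range are illustrative defaults rather than values recommended by DeepSeek, and the `sleep` parameter exists so the logic can be tested without waiting.

```python
import random
import time


def call_with_backoff(fn, retries=5, base_delay=1.0, max_delay=30.0,
                      retry_on=(Exception,), sleep=time.sleep):
    """Call fn(), retrying on failure with exponentially growing delays."""
    for attempt in range(retries):
        try:
            return fn()
        except retry_on:
            if attempt == retries - 1:
                raise                            # out of attempts: surface the error
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))  # jitter spreads out retries


# Demo: a function that fails twice, then succeeds. Sleeps are recorded, not waited.
attempts = {"n": 0}
sleeps = []


def flaky():
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RuntimeError("simulated 429")
    return "ok"


result = call_with_backoff(flaky, sleep=sleeps.append)
print(result, len(sleeps))  # ok 2
```

In the app you would narrow `retry_on` to the SDK's rate-limit exception (`openai.RateLimitError` in the v1 Python SDK) so that authentication errors fail immediately instead of being retried.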

Deploying to Streamlit Community Cloud

Push the project to a public or private GitHub repository (without secrets.toml), connect the repo on share.streamlit.io, point it at app.py, and paste your DEEPSEEK_API_KEY into the app’s Secrets panel in the same TOML format. The deploy takes about 90 seconds. Because the API is stateless and history lives in st.session_state, each user gets their own conversation without any backend work on your side — but note that session state evaporates on container restart. For persistent history you would add a database; for most internal tools that is overkill.

Why these defaults

The temperature slider defaults to 1.3 because that is DeepSeek’s documented setting for general conversation and translation. Drop it to 0.0 for code generation or maths, leave it at 1.0 for data analysis, and push to 1.5 for creative writing. The default model is V4-Flash because the cost gap between the tiers is roughly 12× on output at list prices ($3.48 versus $0.28 per 1M tokens), and Flash is plenty for the bulk of chat workloads. Reach for DeepSeek V4-Pro when a benchmark or task quality genuinely demands the lift.

The 1,000,000-token context window is the same on both tiers, with output up to 384,000 tokens, so you can paste a whole codebase into a turn without architecture changes. Most chat UIs never come close to that ceiling; if yours does, lean on context caching and check token counts before sending.

Next steps

- Wire your app to Discord with the DeepSeek Discord bot tutorial — same SDK, different transport.

- Add memory and document retrieval with DeepSeek with LangChain.

- Swap the API for a local model using running DeepSeek on Ollama if you need offline inference.

- Browse other walkthroughs in the DeepSeek tutorials hub.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I connect Streamlit to the DeepSeek API?

Use the official OpenAI Python SDK and point it at DeepSeek’s base URL: OpenAI(base_url="https://api.deepseek.com", api_key=...). Chat requests hit POST /chat/completions with the same wire format as OpenAI, so no call sites change. Store the key in .streamlit/secrets.toml and read it with st.secrets. The full setup is covered in the DeepSeek OpenAI SDK compatibility guide.

What does a DeepSeek Streamlit app cost to run?

On `deepseek-v4-flash`, expect roughly $0.14 per million cache-miss input tokens, $0.0028 per million cache-hit input tokens, and $0.28 per million output tokens as of April 2026. A typical short chat costs a fraction of a cent. The V4-Pro tier is roughly 12× more on output at list prices. Always enumerate all three token buckets when budgeting; the DeepSeek API pricing page is the source of truth.

Can I enable thinking mode in a Streamlit chat?

Yes. In V4, thinking is a request parameter on either tier, not a separate model. Pass reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}. The stream then returns reasoning_content alongside the final content, which you can render in a Streamlit expander. See DeepSeek V4 for the full parameter contract.

Why does my app forget previous messages between requests?

The DeepSeek API is stateless — the server does not remember prior turns, so the client must resend the full conversation in the messages array on every request. Streamlit’s st.session_state is the right place to hold history during a session. The web chat behaves differently because its UI persists state for you. More on the underlying API in the DeepSeek API documentation.

Is it safe to deploy a DeepSeek Streamlit app publicly?

Yes, with the usual caveats: keep the API key in Streamlit secrets and never in the repo, add per-user rate limiting if the URL is public, and review what data your users will send before deploying. DeepSeek processes requests on servers subject to Chinese law, so route sensitive data accordingly. The DeepSeek privacy guide covers the trade-offs.
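As a starting point for rate limiting, here is a minimal sliding-window limiter. `SlidingWindowLimiter` is a hypothetical class, and the limits shown are arbitrary. Stored in `st.session_state`, one instance would throttle a single browser session; that is weaker than true per-user limiting, which needs some form of user identity.

```python
import time
from collections import deque


class SlidingWindowLimiter:
    """Allow at most `max_calls` within any `window_seconds` span."""

    def __init__(self, max_calls, window_seconds, clock=time.monotonic):
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock
        self.calls = deque()  # timestamps of accepted calls, oldest first

    def allow(self):
        now = self.clock()
        # Drop timestamps that have aged out of the window.
        while self.calls and now - self.calls[0] >= self.window:
            self.calls.popleft()
        if len(self.calls) >= self.max_calls:
            return False
        self.calls.append(now)
        return True


# Demo with a fake clock so no real time passes.
fake_now = [0.0]
limiter = SlidingWindowLimiter(max_calls=2, window_seconds=60,
                               clock=lambda: fake_now[0])
first, second, third = limiter.allow(), limiter.allow(), limiter.allow()
fake_now[0] = 61.0
after_wait = limiter.allow()
print(first, second, third, after_wait)  # True True False True
```

In the app, you would check `limiter.allow()` before calling the API and show a short notice when it returns `False`.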

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026
- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026
- Repository: DeepSeek-AI on GitHub. Open-weight release details, training/inference notes. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.
