DeepSeek Docker Deployment: A Tested Setup for V4 Workloads

You want a containerised application that talks to DeepSeek V4, runs the same on your laptop and on a production VM, and does not leak your API key into a Git history. That is what this DeepSeek Docker deployment guide covers — concrete Dockerfiles, a working `docker-compose.yml`, and the bits people get wrong (GPU passthrough for local distills, secrets handling, health checks, and proxy patterns for cost control).

I run V4-Flash and V4-Pro in containers daily, alongside an internal LiteLLM proxy. The patterns below are what survived contact with real traffic, not toy examples. By the end you will have a reproducible setup for two scenarios: a Python service calling DeepSeek’s hosted API, and a self-hosted distill model behind an OpenAI-compatible endpoint.

What you will build

Two deployments, both production-shaped:

- Scenario A: hosted API client. A small FastAPI service in a container that calls DeepSeek’s hosted `POST /chat/completions` endpoint using the OpenAI SDK. No GPU required. Suitable for V4-Flash and V4-Pro.

- Scenario B: self-hosted distill. A vLLM container serving a DeepSeek R1 Distill model behind an OpenAI-compatible API on your own GPU box, with NVIDIA Container Toolkit. Useful when you need offline inference or strict data residency.

Both scenarios share the same compose file with profiles, so you can bring them up independently.

Prerequisites

- Docker Engine 24.0+ and the Compose v2 plugin (`docker compose`, not `docker-compose`).

- A DeepSeek API key from the developer console; see the get a DeepSeek API key guide if you have not generated one.

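- For Scenario B: an NVIDIA GPU with enough VRAM for the distill you pick, and the NVIDIA Container Toolkit installed on the host. A 14B model at 16-bit precision needs roughly 28 GB for weights alone, so a 24 GB card calls for a smaller or quantised distill.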

- Linux, macOS, or Windows with WSL2. Most of this is identical across them; GPU passthrough is Linux-first.

- Familiarity with environment variables and a basic Python or Node service. If you are new to the API surface itself, skim the DeepSeek API getting started guide first.
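As a rough sanity check on the VRAM prerequisite, weights-only memory is the parameter count times the bytes per parameter. The helper below is a back-of-envelope sketch (the function name is illustrative, not part of any library), and it deliberately ignores KV cache, activations, and runtime overhead, so real usage will be higher.

```python
def weights_gb(params_billion: float, bytes_per_param: float) -> float:
    """Weights-only memory in GB (10^9 bytes); excludes KV cache and overhead."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * bytes_per_param


# 16-bit formats (fp16, bfloat16) store 2 bytes per parameter.
print(weights_gb(14, 2))    # 14B distill at 16-bit
print(weights_gb(7, 2))     # 7B distill at 16-bit
# Assumption: 4-bit quantisation at about 0.5 bytes per parameter.
print(weights_gb(14, 0.5))
```

By this estimate a 14B model at 16-bit precision needs about 28 GB before any context is cached, so compare the figure against your card before choosing a distill.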

The model IDs you will use

DeepSeek V4 (released April 24, 2026) ships as two open-weight MoE models under the MIT license: deepseek-v4-pro (1.6T total / 49B active parameters, frontier tier) and deepseek-v4-flash (284B / 13B active, cost-efficient tier). Both expose a 1,000,000-token context with output up to 384,000 tokens.

Thinking mode is a request parameter, not a separate model ID — set reasoning_effort="high" with extra_body={"thinking": {"type": "enabled"}}, or "max" for maximum effort. Legacy IDs deepseek-chat and deepseek-reasoner still work but route to deepseek-v4-flash; they retire on 2026-07-24 at 15:59 UTC. Migrating is a one-line model= swap; base_url does not change. Full background on the family lives on the DeepSeek V4 page.
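A small sketch of that migration, assuming you want one place that maps legacy IDs and toggles thinking mode. The helper names (`resolve_model_id`, `build_chat_kwargs`) are hypothetical; the mapping and the request parameters are the ones described above.

```python
# Both legacy IDs currently route to V4-Flash, per the text above.
LEGACY_MODEL_IDS = {
    "deepseek-chat": "deepseek-v4-flash",
    "deepseek-reasoner": "deepseek-v4-flash",
}


def resolve_model_id(model: str) -> str:
    """Return the current model ID for a legacy one; pass others through."""
    return LEGACY_MODEL_IDS.get(model, model)


def build_chat_kwargs(model: str, prompt: str, thinking: bool = False,
                      effort: str = "high") -> dict:
    """Build keyword arguments for client.chat.completions.create()."""
    kwargs = {
        "model": resolve_model_id(model),
        "messages": [{"role": "user", "content": prompt}],
    }
    # Thinking is a request parameter, so set it explicitly rather than
    # inferring it from a legacy model name.
    if thinking:
        kwargs["reasoning_effort"] = effort  # "high" or "max"
        kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
    return kwargs


print(build_chat_kwargs("deepseek-chat", "hello"))
print(build_chat_kwargs("deepseek-v4-pro", "hello", thinking=True, effort="max"))
```

Keeping the mapping in one function means the retirement date only requires deleting the dictionary, not hunting for string literals across services.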

Project layout

Here is the directory we will build:

    deepseek-stack/
    ├── .env                      # never committed
    ├── .env.example
    ├── docker-compose.yml
    ├── api-client/
    │   ├── Dockerfile
    │   ├── requirements.txt
    │   └── app.py
    └── proxy/
        └── litellm.config.yaml   # optional, for Scenario A+

Step 1 — Write the API client image

Scenario A is a Python 3.12 service. Create `api-client/requirements.txt` with three pinned dependencies:

    openai==1.54.0
    fastapi==0.115.4
    uvicorn[standard]==0.32.0

Then `api-client/app.py`: a minimal endpoint that proxies a prompt to DeepSeek. Note the explicit `base_url`; chat requests hit `POST /chat/completions`, the OpenAI-compatible endpoint:

    import os

    from fastapi import FastAPI, HTTPException
    from pydantic import BaseModel
    from openai import OpenAI

    client = OpenAI(
        base_url="https://api.deepseek.com",
        api_key=os.environ["DEEPSEEK_API_KEY"],
    )

    app = FastAPI()


    class Ask(BaseModel):
        prompt: str
        thinking: bool = False


    @app.get("/healthz")
    def healthz():
        return {"ok": True}


    @app.post("/ask")
    def ask(body: Ask):
        kwargs = {
            "model": "deepseek-v4-flash",
            "messages": [{"role": "user", "content": body.prompt}],
            "temperature": 1.3,  # general conversation
            "max_tokens": 1024,
        }
        if body.thinking:
            kwargs["reasoning_effort"] = "high"
            kwargs["extra_body"] = {"thinking": {"type": "enabled"}}
        try:
            resp = client.chat.completions.create(**kwargs)
        except Exception as e:
            raise HTTPException(status_code=502, detail=str(e))
        msg = resp.choices[0].message
        return {
            "content": msg.content,
            "reasoning_content": getattr(msg, "reasoning_content", None),
        }

The API is stateless — each request must carry the full messages array if you want multi-turn context. The web chat keeps session history; the API does not. When thinking is enabled, the response returns reasoning_content alongside the final content.
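Because the API keeps no session, multi-turn context has to be carried by the caller. A minimal sketch of that bookkeeping follows, using a hypothetical `Conversation` class (not part of the OpenAI SDK) that accumulates turns and produces the payload to send on every request.

```python
class Conversation:
    """Accumulates chat turns so each request can carry the full history."""

    def __init__(self, system_prompt=None):
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def add_user(self, text):
        self.messages.append({"role": "user", "content": text})

    def add_assistant(self, text):
        self.messages.append({"role": "assistant", "content": text})

    def payload(self, model="deepseek-v4-flash"):
        # Copy the list so later turns do not mutate a request already built.
        return {"model": model, "messages": list(self.messages)}


convo = Conversation(system_prompt="You are a concise assistant.")
convo.add_user("What is a Docker layer?")
# In the service you would call client.chat.completions.create(**convo.payload())
# and store the reply; a canned reply stands in for the API response here.
convo.add_assistant("A cached filesystem diff produced by one build step.")
convo.add_user("How does that affect rebuild time?")
print(len(convo.payload()["messages"]))
```

The second request therefore contains all four messages, which is also why long conversations grow input token counts on every turn.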

Now api-client/Dockerfile. Use a slim base, run as non-root, and copy requirements.txt before the source so layer caching survives code edits:

    FROM python:3.12-slim AS base

    ENV PYTHONDONTWRITEBYTECODE=1 \
        PYTHONUNBUFFERED=1 \
        PIP_NO_CACHE_DIR=1

    RUN useradd --create-home --uid 1000 app

    WORKDIR /app

    COPY requirements.txt .
    RUN pip install --no-cache-dir -r requirements.txt

    COPY app.py .
    RUN chown -R app:app /app

    USER app

    EXPOSE 8000

    HEALTHCHECK --interval=30s --timeout=5s --retries=3 \
      CMD python -c "import urllib.request; urllib.request.urlopen('http://localhost:8000/healthz')" || exit 1

    CMD ["uvicorn", "app:app", "--host", "0.0.0.0", "--port", "8000"]

Step 2 — Handle secrets properly

Never bake API keys into images. Two patterns work; pick one:

- Environment variables via `.env` (development). Create `.env.example` committed to Git, and a real `.env` listed in `.gitignore`:

      # .env.example
      DEEPSEEK_API_KEY=sk-replace-me
      DEEPSEEK_MODEL=deepseek-v4-flash

- Docker secrets (production / Swarm). Mount the key as a file at `/run/secrets/deepseek_api_key` and read it at startup. Compose v2 supports this on a single host without Swarm by using `secrets:` with a `file:` source.

For Kubernetes, use a Secret mounted as an env var or projected file. Whatever you do, audit your image with docker history <image> before pushing — keys in build args are visible there.
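Both patterns can be served by one startup helper. The sketch below assumes the secret file path mentioned above and falls back to the `DEEPSEEK_API_KEY` environment variable; `load_api_key` is a hypothetical name, and failing fast on an empty key is a design choice rather than anything the SDK requires.

```python
import os
from pathlib import Path


def load_api_key(secret_path="/run/secrets/deepseek_api_key",
                 env_var="DEEPSEEK_API_KEY"):
    """Prefer a mounted secret file; fall back to an environment variable."""
    path = Path(secret_path)
    if path.is_file():
        # Secret files often end with a newline, so strip whitespace.
        key = path.read_text(encoding="utf-8").strip()
    else:
        key = os.environ.get(env_var, "").strip()
    if not key:
        # Crash at startup instead of sending unauthenticated requests later.
        raise RuntimeError(
            f"No API key found in {secret_path} or ${env_var}"
        )
    return key
```

In `app.py` this would replace the direct `os.environ["DEEPSEEK_API_KEY"]` lookup, so the same image runs unchanged under either pattern.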

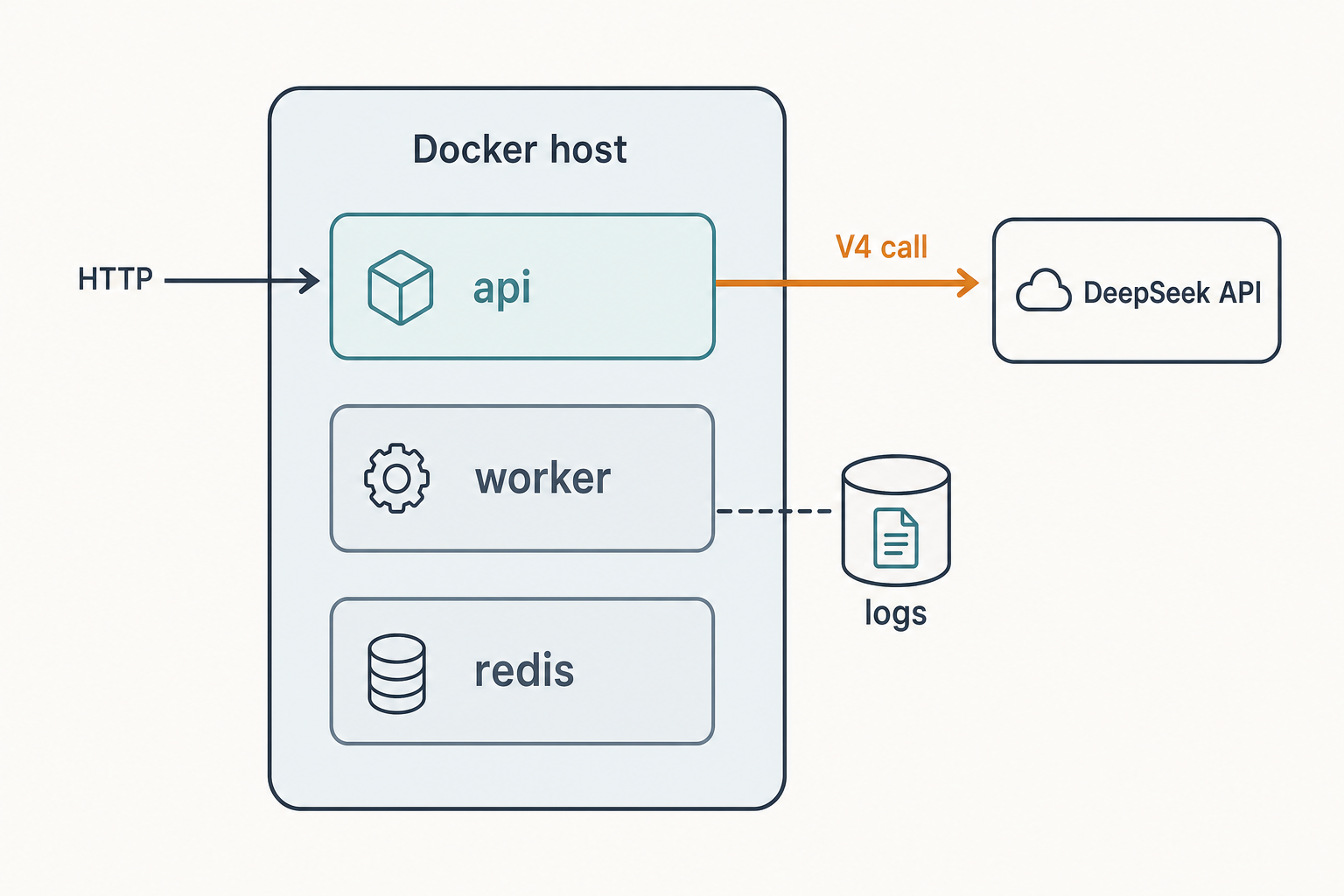

Step 3 — Compose the stack

Here is the docker-compose.yml that ties everything together. Profiles let you bring up only the API client by default and opt in to the local GPU service when needed:

    name: deepseek-stack

    services:
      api-client:
        build: ./api-client
        image: deepseek-api-client:latest
        env_file: .env
        ports:
          - "8000:8000"
        restart: unless-stopped
        read_only: true
        tmpfs:
          - /tmp
        security_opt:
          - no-new-privileges:true
        healthcheck:
          test: ["CMD", "python", "-c", "import urllib.request; urllib.request.urlopen('http://localhost:8000/healthz')"]
          interval: 30s
          timeout: 5s
          retries: 3

      litellm:
        image: ghcr.io/berriai/litellm:main-stable
        profiles: ["proxy"]
        env_file: .env
        command: ["--config", "/app/config.yaml", "--port", "4000"]
        ports:
          - "4000:4000"
        volumes:
          - ./proxy/litellm.config.yaml:/app/config.yaml:ro
        restart: unless-stopped

      vllm-distill:
        image: vllm/vllm-openai:latest
        profiles: ["gpu"]
        ipc: host
        ports:
          - "8001:8000"
        volumes:
          - hf-cache:/root/.cache/huggingface
        environment:
          - HUGGING_FACE_HUB_TOKEN=${HF_TOKEN:-}
        command: >
          --model deepseek-ai/DeepSeek-R1-Distill-Qwen-14B
          --dtype bfloat16
          --max-model-len 32768
          --gpu-memory-utilization 0.90
        deploy:
          resources:
            reservations:
              devices:
                - driver: nvidia
                  count: 1
                  capabilities: [gpu]
        restart: unless-stopped

    volumes:
      hf-cache:

Bring it up with docker compose up -d --build for Scenario A, or docker compose --profile gpu up -d to add the local distill (Scenario B). The read_only root filesystem and no-new-privileges flag are cheap hardening wins; few applications need to write outside /tmp.

Step 4 — Verify it worked

Probe the health endpoint and run a real call:

    # Health
    curl -s http://localhost:8000/healthz

    # Round-trip a prompt
    curl -s -X POST http://localhost:8000/ask \
      -H "Content-Type: application/json" \
      -d '{"prompt": "Summarise Docker layer caching in two sentences.", "thinking": false}'

For the GPU service, hit it directly; it speaks OpenAI-compatible JSON on port 8001:

    curl -s http://localhost:8001/v1/chat/completions \
      -H "Content-Type: application/json" \
      -d '{"model":"deepseek-ai/DeepSeek-R1-Distill-Qwen-14B","messages":[{"role":"user","content":"hi"}]}'

If you want a deeper local-only path without Docker, the running DeepSeek on Ollama walkthrough covers the same R1 Distill family on a different runtime.
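If you want the health probe in a deploy script rather than typed by hand, a polling helper is one option. This is a sketch using only the standard library; `wait_for_health` is a hypothetical name, and the attempt count and delay are arbitrary defaults you would tune. The `opener` and `sleep` parameters exist only so the function can be exercised without a running container.

```python
import time
import urllib.request


def wait_for_health(url, attempts=10, delay=2.0,
                    opener=urllib.request.urlopen, sleep=time.sleep):
    """Poll a health endpoint until it returns HTTP 200 or attempts run out."""
    for attempt in range(1, attempts + 1):
        try:
            with opener(url, timeout=5) as resp:
                if resp.status == 200:
                    return True
        except OSError:
            # URLError and HTTPError subclass OSError; covers refused
            # connections while the container is still starting.
            pass
        if attempt < attempts:
            sleep(delay)
    return False


# Example call after `docker compose up -d --build`:
#   wait_for_health("http://localhost:8000/healthz")
```

Returning a boolean instead of raising keeps the helper easy to wire into a CI step that decides for itself whether to roll back.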

Step 5 — Add a proxy for cost control (optional)

Once you have more than one service calling DeepSeek, route them through an internal proxy. LiteLLM is a small Python service that exposes an OpenAI-compatible API and forwards to multiple backends. A minimal proxy/litellm.config.yaml:

    model_list:
      - model_name: chat-default
        litellm_params:
          model: deepseek/deepseek-v4-flash
          api_base: https://api.deepseek.com
          api_key: os.environ/DEEPSEEK_API_KEY
      - model_name: chat-frontier
        litellm_params:
          model: deepseek/deepseek-v4-pro
          api_base: https://api.deepseek.com
          api_key: os.environ/DEEPSEEK_API_KEY

    litellm_settings:
      drop_params: true
      cache: true

Bring it up with docker compose --profile proxy up -d. Now your other services point at http://litellm:4000 instead of https://api.deepseek.com, and you get centralised logging, retries, and per-team budgets without changing call sites.
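From a calling service's point of view, the only things that change are the base URL and the model name, which becomes one of the aliases from the config. The sketch below builds such a request with the standard library so the shape is visible; `build_chat_request` is a hypothetical helper, and it assumes the proxy has no master key configured (add an `Authorization` header if yours does).

```python
import json
import urllib.request

# Compose service name and port from the stack above.
PROXY_BASE_URL = "http://litellm:4000"


def build_chat_request(prompt, model_alias="chat-default",
                       base_url=PROXY_BASE_URL):
    """Build (but do not send) an OpenAI-style chat request for the proxy."""
    body = {
        "model": model_alias,  # alias from litellm.config.yaml, not a DeepSeek ID
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("Summarise this ticket.", model_alias="chat-frontier")
print(req.get_method(), req.full_url)
# Inside the compose network you would send it with urllib.request.urlopen(req).
```

Because services ask for `chat-default` or `chat-frontier`, you can repoint an alias at a different backend in the proxy config without redeploying callers.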

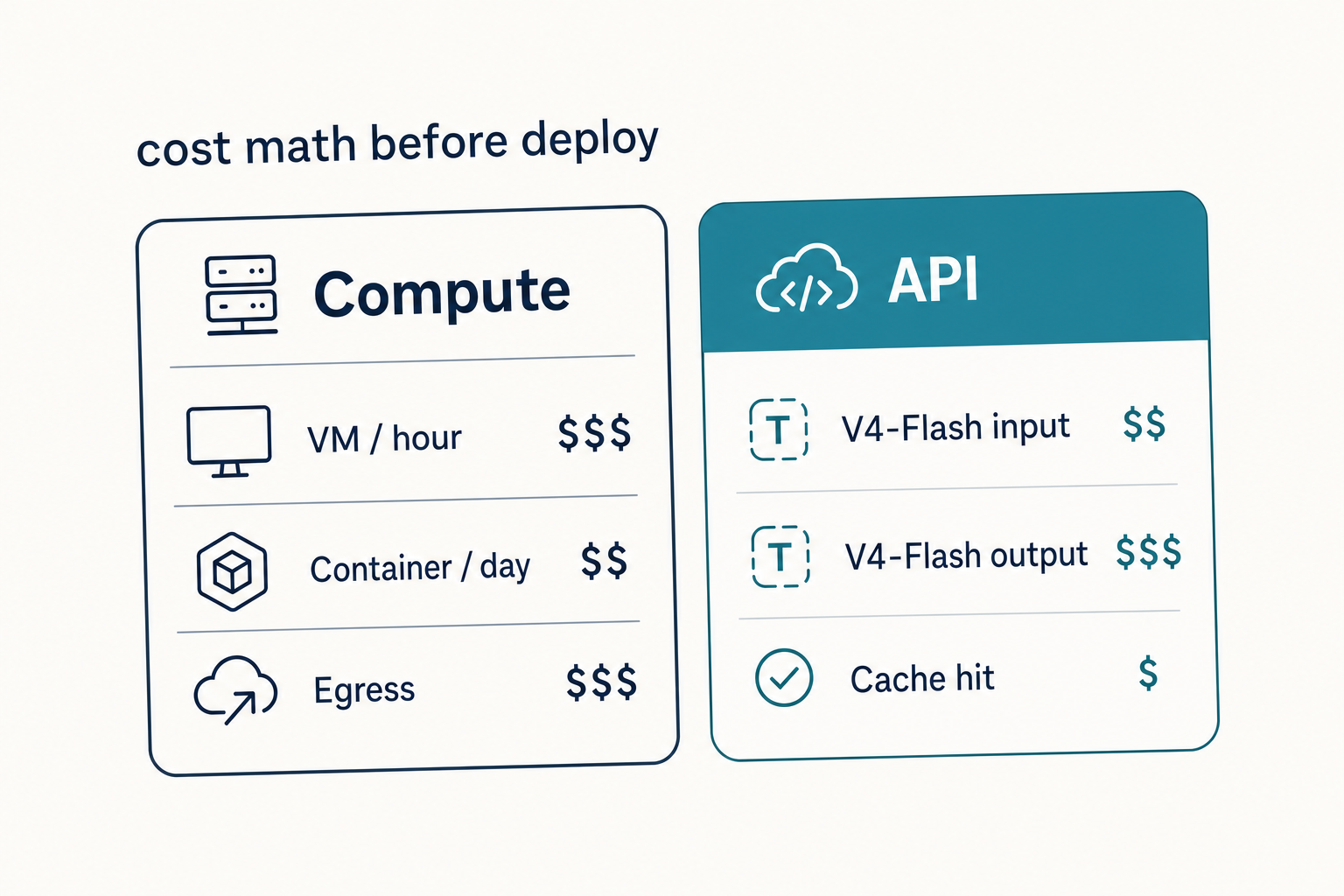

Cost math you should run before deploying

Container orchestration does not change pricing — it changes how often you make calls. Run the numbers before you ship. The example below is for deepseek-v4-flash: 1,000,000 calls per month with a 2,000-token cached system prompt, a 200-token user message, and a 300-token response.

| Bucket | Tokens | Rate (per 1M) | Cost |
|---|---|---|---|
| Input, cache hit | 2,000,000,000 | $0.0028 | $5.60 |
| Input, cache miss | 200,000,000 | $0.140 | $28.00 |
| Output | 300,000,000 | $0.280 | $84.00 |
| Total | | | $117.60 |

For the same workload on deepseek-v4-pro the totals become $29.00 + $348.00 + $1,044.00 = $1,421.00. On these figures Pro's output cost is roughly 12× Flash's ($1,044.00 against $84.00), so reserve it for agentic or coding work where the benchmark lift earns it. Pricing is current as of April 2026; verify against the official pricing page before committing. The DeepSeek API pricing reference and DeepSeek context caching guide go deeper on rate tiers and cache-hit detection.
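The arithmetic is easy to re-run for your own traffic. The sketch below recomputes the three buckets; the Flash rates are the ones in the table, while the Pro rates are an assumption back-derived from the Pro totals quoted above rather than taken from the pricing page. `monthly_cost` is a hypothetical helper.

```python
# USD per 1M tokens. Flash rates come from the table above.
FLASH_RATES = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}
# Assumption: derived by dividing the quoted Pro totals by the token volumes.
PRO_RATES = {"cache_hit": 0.0145, "cache_miss": 1.74, "output": 3.48}


def monthly_cost(calls, cached_in, uncached_in, out, rates):
    """Per-bucket monthly cost in USD for per-call token counts."""
    million_calls = calls / 1_000_000
    buckets = {
        "cache_hit": cached_in * million_calls * rates["cache_hit"],
        "cache_miss": uncached_in * million_calls * rates["cache_miss"],
        "output": out * million_calls * rates["output"],
    }
    buckets["total"] = sum(buckets.values())
    return {name: round(value, 2) for name, value in buckets.items()}


# 1,000,000 calls; 2,000 cached + 200 uncached input tokens; 300 output tokens.
print(monthly_cost(1_000_000, 2_000, 200, 300, FLASH_RATES))
print(monthly_cost(1_000_000, 2_000, 200, 300, PRO_RATES))
```

For the example workload this reproduces the $117.60 Flash total and the $1,421.00 Pro total; changing the cached share shows quickly how much a stable system prompt saves.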

Common errors and fixes

| Symptom | Likely cause | Fix |
|---|---|---|
| `401 Unauthorized` from DeepSeek | Empty `DEEPSEEK_API_KEY` at runtime | Run `docker compose config` to confirm the variable is interpolated; check `.env` sits next to `docker-compose.yml` |
| Container exits immediately | Healthcheck or app crash on import | `docker compose logs api-client`; pin SDK versions; confirm Python version |
| `could not select device driver "nvidia"` | NVIDIA Container Toolkit not installed | Install `nvidia-container-toolkit` on the host and restart Docker |
| Empty content from JSON mode | Truncation or missing schema hint | Set `max_tokens` high; include the word “json” plus a short example schema in the prompt (see DeepSeek API JSON mode) |
| Streaming stalls behind a load balancer | Proxy buffering server-sent events | Disable response buffering on the proxy; for nginx, set `proxy_buffering off` |
| Out-of-memory on `vllm-distill` | Context length too high for VRAM | Lower `--max-model-len` or pick a smaller distill |

Production hardening checklist

- Pin image digests (`image: vllm/vllm-openai@sha256:...`) for reproducible deploys.

- Set resource limits with `deploy.resources.limits` so a runaway container cannot starve the host.

- Log structured JSON from your application; ship logs with the Docker JSON-file driver or a sidecar.

- Rotate API keys regularly and use separate keys per environment.

- Monitor token spend with the LiteLLM proxy or your own middleware — surprise bills almost always come from a retry loop, not from real users.

- Back up the Hugging Face cache volume if you self-host; redownloading a 14B model takes time you do not want during an incident.
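Since runaway retry loops are the usual source of surprise spend, it helps to cap retries explicitly. The sketch below shows one common pattern, a bounded retry with exponential backoff and jitter; `call_with_retries` is a hypothetical helper and the limits are illustrative defaults, not recommendations from DeepSeek.

```python
import random
import time


def call_with_retries(fn, max_attempts=3, base_delay=0.5, max_delay=8.0,
                      sleep=time.sleep):
    """Call fn(); retry on failure a bounded number of times, then give up."""
    last_error = None
    for attempt in range(max_attempts):
        try:
            return fn()
        except Exception as exc:  # in real code, catch only transient errors
            last_error = exc
            if attempt == max_attempts - 1:
                break
            # Exponential backoff (0.5s, 1s, 2s, ...) capped at max_delay,
            # plus jitter so many clients do not retry in lockstep.
            delay = min(max_delay, base_delay * 2 ** attempt)
            sleep(delay + random.uniform(0, delay / 2))
    raise RuntimeError(f"gave up after {max_attempts} attempts") from last_error


# Usage in the service:
#   resp = call_with_retries(lambda: client.chat.completions.create(**kwargs))
```

The hard cap is the important part: every retry is a billed request, so the worst case per user action is `max_attempts` calls rather than an unbounded loop.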

Where this fits in your stack

If you are building a chatbot, the same image pattern wraps cleanly into a DeepSeek Discord bot service. For retrieval-augmented workflows, point the container at a vector store and follow the DeepSeek RAG tutorial. Teams shipping internal tools should also read the DeepSeek API best practices notes on retries, idempotency, and timeouts before going live.

Next steps

- Browse other DeepSeek tutorials for adjacent integrations (LangChain, LlamaIndex, VS Code).

- If you would rather skip Docker for local development, the install DeepSeek locally guide covers bare-metal options.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I pass my DeepSeek API key into a Docker container safely?

Use an .env file referenced by env_file: in your compose file for development, and Docker secrets or a Kubernetes Secret in production. Never bake the key into the image with ENV or build args — it stays visible in docker history. For full setup steps see the get a DeepSeek API key guide and the DeepSeek API authentication reference.

What model ID should I use in my Dockerised app today?

Use deepseek-v4-flash for general workloads and deepseek-v4-pro for frontier-tier coding or agentic tasks. Legacy IDs deepseek-chat and deepseek-reasoner still resolve to V4-Flash but retire on 2026-07-24 at 15:59 UTC, so migrate the model= field now. The DeepSeek V4-Flash page covers the trade-offs in detail.

Can I run DeepSeek V4 itself inside a Docker container on my own GPU?

Not realistically — V4-Pro is 1.6T total parameters and V4-Flash is 284B, both well beyond a single workstation. For self-hosted Docker deployments, use the smaller DeepSeek R1 Distill family (1.5B to 70B) served by vLLM or TGI. Use the hosted API for V4. The DeepSeek system requirements page lists VRAM needs by model size.

Does Docker change how DeepSeek’s API behaves at runtime?

No. The container is just a network client. The API is still stateless — your code must resend the full messages array on every POST /chat/completions call to maintain multi-turn context, regardless of where the client runs. For request-shape details see the DeepSeek API documentation and DeepSeek OpenAI SDK compatibility notes.

Why use a LiteLLM proxy container instead of calling DeepSeek directly?

A proxy gives you one place for retries, caching, key rotation, per-team budgets, and structured logs — without editing every service that calls the API. It also makes it trivial to fail over to a self-hosted distill or another provider during incidents. For more on retry and timeout patterns, see the DeepSeek API best practices guide alongside other step-by-step DeepSeek guides.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.