DeepSeek Comparison Tool: Match the Right Model to Your Workload

You opened a DeepSeek comparison tool because you have a real decision to make: do you ship `deepseek-v4-pro` and pay frontier-tier rates, fall back to `deepseek-v4-flash` for the cost savings, or keep your existing `deepseek-chat` integration running until the legacy IDs retire? The wrong call can multiply a monthly bill roughly twelvefold at list rates, or quietly cap quality on a workload that needs more reasoning headroom. This guide walks through how to compare DeepSeek’s current models against each other and against the GPT-5 and Claude 4 families, using the numbers DeepSeek published on April 24, 2026 alongside third-party verification. By the end you will have a repeatable framework (pricing, context, benchmarks, thinking modes) for picking a model with confidence.

What a DeepSeek comparison tool actually needs to compare

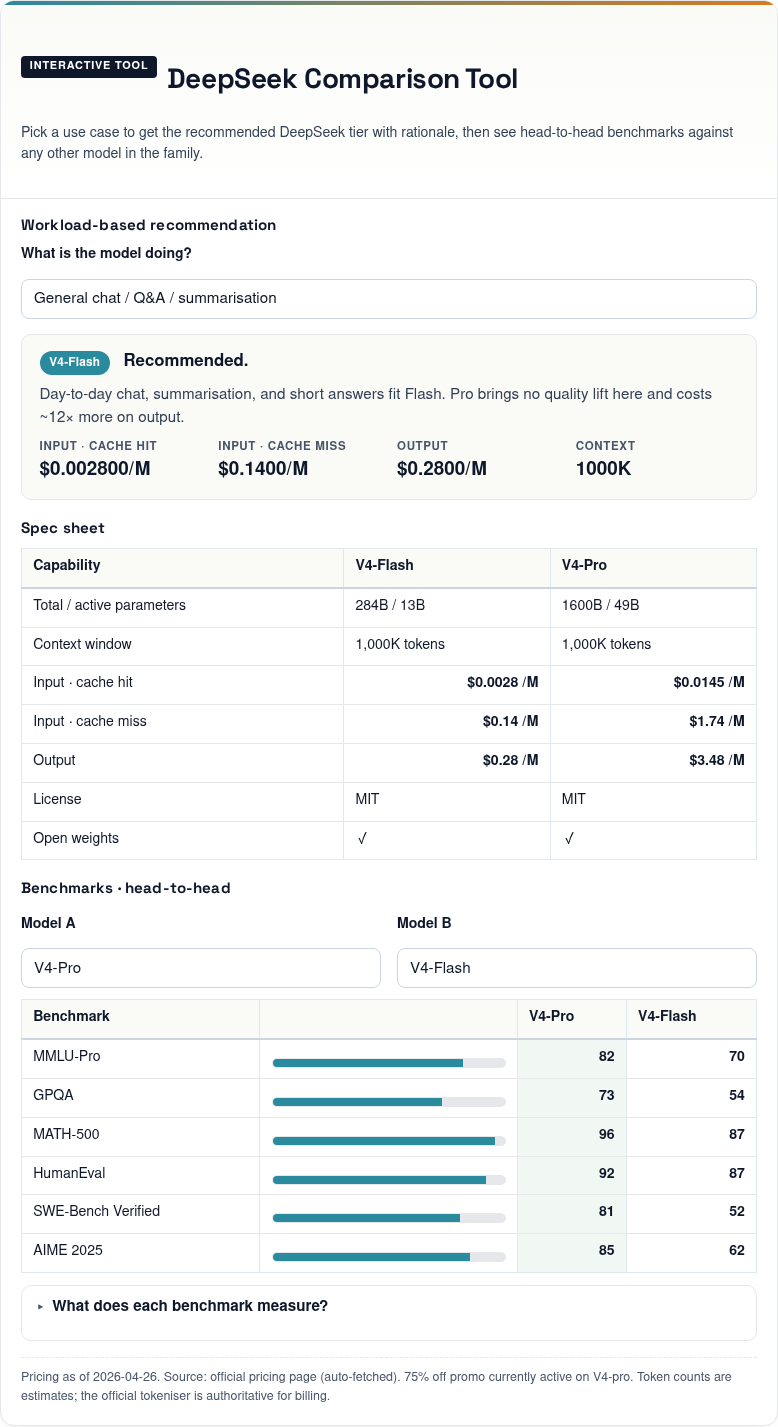


Spec sheet

| Capability | V4-Flash | V4-Pro |
|---|---|---|
| Total / active parameters | 284B / 13B | 1.6T / 49B |
| Context window | 1,000,000 tokens | 1,000,000 tokens |
| Input · cache hit | $0.0028 /M | $0.0145 /M |
| Input · cache miss | $0.14 /M | $1.74 /M |
| Output | $0.28 /M | $3.48 /M |
| License | MIT | MIT |
| Open weights | ✓ | ✓ |

Benchmarks · head-to-head

What does each benchmark measure?

- MMLU-Pro: roughly 12,000 questions across 14 subject areas, testing broad knowledge and reasoning.

- GPQA: graduate-level science questions written by domain PhDs.

- MATH-500: competition math.

- HumanEval: 164 hand-written Python problems.

- SWE-Bench Verified: 500 real issues from real OSS projects; the model has to ship a passing patch.

- AIME 2025: the 2025 edition of the American Invitational Mathematics Examination.

Pricing as of 2026-04-29. Source: official pricing page (auto-fetched). Token counts are estimates; the official tokeniser is authoritative for billing.

A useful comparison tool is not a feature checklist. It is a decision aid that surfaces the four variables that change your bill or your output quality: the model tier, the thinking-mode setting, the token rates across cache-hit, cache-miss and output buckets, and the benchmark relevant to your workload. Anything else is decoration.

The current DeepSeek line-up is shorter than it used to be. The official model list returns just two IDs: deepseek-v4-flash and deepseek-v4-pro. Legacy aliases still resolve, but the migration window is closing.

The current generation at a glance

DeepSeek V4 launched on April 24, 2026 as a preview release with two models, DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both are Mixture-of-Experts models with a 1-million-token context window: Pro has 1.6T total parameters with 49B active, and Flash has 284B total with 13B active. Both ship as open weights under the MIT license, are served through OpenAI ChatCompletions-compatible and Anthropic-compatible APIs, and support Thinking and Non-Thinking modes.

Side-by-side: DeepSeek V4-Pro vs V4-Flash vs legacy IDs

This is the table to start every comparison from. Pricing is sourced from DeepSeek’s official pricing page and OpenRouter listings as of April 25, 2026.

| Feature | deepseek-v4-pro | deepseek-v4-flash | deepseek-chat / deepseek-reasoner (legacy) |
|---|---|---|---|
| Tier | Frontier | Cost-efficient | Alias to V4-Flash |
| Total / active params | 1.6T / 49B | 284B / 13B | Routes to V4-Flash |
| Context window | 1,000,000 tokens | 1,000,000 tokens | 1,000,000 tokens (post-routing) |
| Max output | 384,000 tokens | 384,000 tokens | 384,000 tokens |
| Input, cache hit ($/M) | $0.0145 | $0.0028 | V4-Flash rates |
| Input, cache miss ($/M) | $0.435 promo / $1.74 list | $0.14 | V4-Flash rates |
| Output ($/M) | $0.87 promo / $3.48 list | $0.28 | V4-Flash rates |
| License | MIT (code + weights) | MIT (code + weights) | n/a |
| Status | Active | Active | Retires 2026-07-24 15:59 UTC |

DeepSeek’s pricing page states that the model names `deepseek-chat` and `deepseek-reasoner` will be deprecated, and that they correspond to the non-thinking and thinking modes of `deepseek-v4-flash` respectively. If you maintain an older integration, the migration is a one-line `model=` swap. DeepSeek’s own guidance is to keep `base_url` unchanged and update `model` to `deepseek-v4-pro` or `deepseek-v4-flash`.
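The alias mapping described above can be sketched as a small request rewriter. This is a minimal illustration, not DeepSeek tooling: `LEGACY_TO_V4` and `migrate_request` are hypothetical names, and the way thinking mode is switched on is an assumption that follows the request parameters shown in the thinking-modes section of this guide.

```python
# Legacy alias -> (V4 model ID, thinking enabled?), per the pricing-page note above.
LEGACY_TO_V4 = {
    "deepseek-chat": ("deepseek-v4-flash", False),     # non-thinking mode
    "deepseek-reasoner": ("deepseek-v4-flash", True),  # thinking mode
}


def migrate_request(params: dict) -> dict:
    """Return a copy of chat-completion kwargs with a legacy model ID replaced.

    Hypothetical helper: the input dict is never mutated.
    """
    model = params.get("model")
    if model not in LEGACY_TO_V4:
        return dict(params)  # already a V4 ID (or unknown): leave untouched

    new_model, thinking = LEGACY_TO_V4[model]
    migrated = {**params, "model": new_model}
    if thinking:
        # Assumption: thinking is enabled the same way as in this guide's example.
        extra = dict(migrated.get("extra_body") or {})
        extra["thinking"] = {"type": "enabled"}
        migrated["extra_body"] = extra
        migrated.setdefault("reasoning_effort", "high")
    return migrated


print(migrate_request({"model": "deepseek-reasoner", "messages": []}))
```

Running old call sites through a shim like this lets you migrate incrementally, but the end state should still be code that names the V4 IDs directly.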

How to compare benchmarks honestly

Benchmark tables are where comparison tools mislead readers. Versions drift, runs differ, and a single number rarely captures real-world behaviour. Use this rule: cite the benchmark, name the version of every competitor, and prefer the model’s own report cross-checked against an independent source.

Coding

This is where V4-Pro looks strongest right now. On LiveCodeBench it scores 93.5, ahead of Gemini 3.1 Pro (91.7) and Claude Opus 4.6 (88.8). Its Codeforces rating of 3206 also tops GPT-5.4 (3168). Third-party reporting adds a Terminal-Bench score of 67.9% for V4-Pro versus 65.4% for Claude, and a SWE-bench score of 80.6%. That last figure is reported as SWE-Bench Verified, but cross-check the exact split (Verified / Full / Lite) in DeepSeek’s V4 technical report before quoting a single SWE-Bench number in production decisions.

Reasoning, math and knowledge

On math benchmarks, V4-Pro is strong. IMOAnswerBench (89.8) is its best result relative to peers: well ahead of Claude (75.3) and Gemini (81.0), though GPT-5.4 edges ahead at 91.4. On HMMT 2026, V4-Pro (95.2) trails both Claude (96.2) and GPT-5.4 (97.7), by 1.0 and 2.5 points respectively. On general knowledge, DeepSeek acknowledges directly that V4-Pro leads all open models but trails Gemini-3.1-Pro on rich world knowledge.
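One way to apply the "name the version of every competitor" rule is to store each quoted score next to its benchmark and the exact competitor label, then compute margins instead of eyeballing them. The sketch below uses only figures quoted in this section, and only rows where the competitor version is named in the text; `QUOTED` and `margins` are illustrative names.

```python
# Each row: (benchmark, competitor label, V4-Pro score, competitor score),
# copied from the Coding and Reasoning paragraphs above.
QUOTED = [
    ("LiveCodeBench", "Gemini 3.1 Pro", 93.5, 91.7),
    ("LiveCodeBench", "Claude Opus 4.6", 93.5, 88.8),
    ("Codeforces rating", "GPT-5.4", 3206, 3168),
    ("IMOAnswerBench", "GPT-5.4", 89.8, 91.4),
    ("HMMT 2026", "GPT-5.4", 95.2, 97.7),
]


def margins(rows):
    """Return {(benchmark, competitor): V4-Pro score minus competitor score}."""
    return {(b, c): round(ours - theirs, 1) for b, c, ours, theirs in rows}


for (bench, rival), delta in margins(QUOTED).items():
    verdict = "ahead of" if delta > 0 else "behind"
    print(f"{bench}: V4-Pro is {abs(delta)} {verdict} {rival}")
```

Keeping the competitor label in the key makes it obvious when a score was quoted without a version, because that row cannot be written down at all.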

Independent intelligence indices

Artificial Analysis, an independent benchmark aggregator, scores V4-Pro in the upper tier of open-weight models. DeepSeek V4 Pro (Reasoning, Max Effort) scores 52 on the Artificial Analysis Intelligence Index, placing it well above average among other open weight models of similar size (median: 28). Throughput is unremarkable — it generates output at 33.5 tokens per second, at the lower end compared to other open weight models of similar size (median: 55.1 t/s) — so latency-sensitive products should test before committing.
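To see what the throughput gap means for a user waiting on a reply, a rough estimate helps. The sketch below divides output length by the tokens-per-second figures quoted above; the 300-token reply length is an assumption, and the estimate ignores time-to-first-token, network latency and any extra reasoning tokens produced in thinking mode.

```python
def generation_seconds(output_tokens: int, tokens_per_second: float) -> float:
    """Time spent generating the output, ignoring time-to-first-token."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


V4_PRO_TPS = 33.5  # Artificial Analysis figure quoted above
MEDIAN_TPS = 55.1  # median for similar-size open-weight models, quoted above

reply_tokens = 300  # assumption: a short chat-style reply
print(f"V4-Pro: {generation_seconds(reply_tokens, V4_PRO_TPS):.1f} s")
print(f"Median: {generation_seconds(reply_tokens, MEDIAN_TPS):.1f} s")
```

For this reply length the estimate is about 9 seconds against about 5.4 seconds, which is the kind of gap worth measuring on your own traffic before committing.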

Thinking modes: a parameter, not a model

One of the biggest changes from V3.x is that thinking mode in V4 is no longer a separate model ID. Both V4 tiers expose three reasoning settings via a single request parameter:

- Non-thinking (default): fastest, cheapest. Supports FIM completion (Beta).

- Thinking (high): set `reasoning_effort="high"` with `extra_body={"thinking": {"type": "enabled"}}`. The response returns `reasoning_content` alongside the final `content`.

- Thinking (max): set `reasoning_effort="max"`. Requires enough output budget; set `max_tokens` high to avoid truncation.

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Here is a minimal Python example using the OpenAI SDK against V4-Pro in thinking mode:

```python
from openai import OpenAI

# OpenAI SDK pointed at DeepSeek's OpenAI-compatible endpoint.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="YOUR_KEY",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Plan the migration."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},  # turns thinking mode on
    max_tokens=8000,  # leave room for reasoning plus the final answer
)

print(resp.choices[0].message.reasoning_content)  # the model's reasoning
print(resp.choices[0].message.content)            # the final answer
```

The API is stateless. You must resend the conversation history with every request — unlike the web chat, which maintains your session. DeepSeek also exposes an Anthropic-compatible surface against the same base URL, so the Anthropic SDK works by swapping base_url and api_key.
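Because the API is stateless, the client owns the conversation history. A minimal sketch of that bookkeeping follows; `next_request_messages` and `record_reply` are hypothetical helper names, the API call itself is left as a comment so the block runs without a key, and storing only the final `content` of each reply is an assumption of this sketch.

```python
from typing import Dict, List

Message = Dict[str, str]


def next_request_messages(history: List[Message], user_text: str) -> List[Message]:
    """Return the full list to send: every prior turn plus the new user turn."""
    return history + [{"role": "user", "content": user_text}]


def record_reply(messages: List[Message], assistant_text: str) -> List[Message]:
    """Append the assistant's final content so the next call can resend it."""
    return messages + [{"role": "assistant", "content": assistant_text}]


history = [{"role": "system", "content": "You are a migration planner."}]

turn1 = next_request_messages(history, "Plan the migration.")
# resp = client.chat.completions.create(model="deepseek-v4-flash", messages=turn1)
history = record_reply(turn1, "placeholder for resp.choices[0].message.content")

turn2 = next_request_messages(history, "Now estimate the cost.")
print([m["role"] for m in turn2])
```

Every request carries the whole list, so input tokens grow with each turn; that growth is what the cache-hit and cache-miss buckets in the pricing table are billing.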

Worked cost comparison: same job, two models

Comparison tools fail when they quote a per-token rate without showing the math. Here is the same workload — 1,000,000 calls with a 2,000-token cached system prompt, a 200-token user message and a 300-token response — costed against both V4 tiers.

deepseek-v4-flash

- Cached input: 2,000 × 1,000,000 × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 × $0.14/M = $28.00

- Output: 300 × 1,000,000 × $0.28/M = $84.00

- Total: $117.60

deepseek-v4-pro

- Cached input: 2,000,000,000 × $0.0145/M = $29.00

- Uncached input: 200,000,000 × $1.74/M (list) = $348.00

- Output: 300,000,000 × $3.48/M (list) = $1,044.00

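- Total: $1,421.00 at list. During the 75% V4-Pro promo through 2026-05-31 this falls to $355.25 if the discount applies to all three buckets, or $377.00 if cache hits stay at the $0.0145 rate shown in the table above, which lists promo prices only for cache-miss input and output.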

Pro is roughly 12× the spend of Flash on this shape of workload at list rates. For competitive context, OpenAI’s GPT-5.4 costs $2.50 per 1M input tokens and $15.00 per 1M output tokens, while Claude Opus 4.6 costs $5 per 1M input tokens and $25 per 1M output tokens. Against those figures, V4-Pro’s list output rate of $3.48 is about 77% below GPT-5.4 and 86% below Claude Opus 4.6, and its cache-miss input rate of $1.74 is about 30% and 65% lower respectively. Verify each provider’s pricing page before committing, because rates change.
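The three-bucket arithmetic in this section can be sketched as a small function so the same workload is easy to re-cost when rates change. The rates are the list prices from the table earlier in this guide; `Rates` and `job_cost` are illustrative names, and the function assumes every call has the same token shape.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens for each billing bucket."""
    cache_hit: float
    cache_miss: float
    output: float


FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)


def job_cost(rates: Rates, calls: int, cached: int, uncached: int, output: int) -> float:
    """Total USD for `calls` requests with the given per-call token counts."""
    per_call = (
        cached * rates.cache_hit
        + uncached * rates.cache_miss
        + output * rates.output
    )
    return per_call * calls / 1_000_000  # rates are quoted per million tokens


shape = dict(calls=1_000_000, cached=2_000, uncached=200, output=300)
flash = job_cost(FLASH, **shape)
pro = job_cost(PRO_LIST, **shape)
print(f"Flash: ${flash:,.2f}")       # matches the $117.60 total above
print(f"Pro (list): ${pro:,.2f}")    # matches the $1,421.00 total above
print(f"Ratio: {pro / flash:.1f}x")
```

Changing only `shape` shows how sensitive the ratio is to output length: the longer the responses, the closer the Pro-to-Flash ratio moves toward the 12.4× gap in the output rates.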

Decision framework: which model when

Use this checklist. It is the framework I run on every new DeepSeek deployment.

- Default to V4-Flash. For chat, summarisation, extraction, classification and most coding assistance, Flash hits the price-quality knee. Move up only when you have measured a quality gap on your own evaluations.

- Choose V4-Pro when your workload is agentic coding, multi-step automation across long contexts, or knowledge-intensive analysis where benchmark deltas (LiveCodeBench, Terminal-Bench, IMOAnswerBench) translate to fewer wrong outputs. Expect roughly 12× the per-call spend at list rates, or about 3× during the promo, on a workload shaped like the worked example above.

- Enable thinking mode when answers must be correct on the first attempt — math, planning, multi-file refactors. Skip it for streaming chat and short user replies.

- Migrate off legacy IDs before 2026-07-24 15:59 UTC. They route to V4-Flash today, but requests will fail after the cutoff.

- Compare against GPT-5 and Claude 4 only with current pricing pages open. The competitor numbers above are accurate as of April 25, 2026 but move month to month.
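The tier and thinking-mode parts of this checklist can be expressed as a small rule function. This is one possible reading of the checklist, not an official selector: the workload labels, the `Workload` fields and the `recommend` helper are all hypothetical, and the rule deliberately requires a measured quality gap before moving to Pro.

```python
from dataclasses import dataclass

# Workload kinds the checklist associates with V4-Pro (labels are illustrative).
PRO_KINDS = {"agentic_coding", "long_context_automation", "knowledge_analysis"}


@dataclass(frozen=True)
class Workload:
    kind: str
    measured_quality_gap: bool = False      # your own evals show Flash falling short
    must_be_right_first_time: bool = False  # math, planning, multi-file refactors
    streaming_chat: bool = False


def recommend(w: Workload) -> dict:
    """Return a model ID and thinking flag following the checklist above."""
    use_pro = w.kind in PRO_KINDS and w.measured_quality_gap
    use_thinking = w.must_be_right_first_time and not w.streaming_chat
    return {
        "model": "deepseek-v4-pro" if use_pro else "deepseek-v4-flash",
        "thinking": use_thinking,
    }


print(recommend(Workload(kind="classification")))
print(recommend(Workload(kind="agentic_coding", measured_quality_gap=True,
                         must_be_right_first_time=True)))
```

Encoding the rule this way forces the "measure first" step into the decision: a workload that merely sounds hard stays on Flash until an evaluation says otherwise.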

Comparison-tool gotchas that quietly break your math

- Mixing tiers in one cost calc. Pick V4-Flash or V4-Pro — never blend rates.

- Forgetting the uncached input bucket. A cached system prompt does not cover the new user message on each call. Always include three buckets in any estimate.

- Quoting V3.2 prices as current. V4 superseded V3.2 on April 24, 2026 — the V3.2 rates ($0.28 miss / $0.42 output) are historical now.

- Believing “no daily message cap” on the web chat. DeepSeek does not publicly document a cap, but absence of documentation is not the same as a guarantee.

- Treating off-peak discounts as active. They ended on September 5, 2025 and have not returned with V4.

For follow-up reading, the DeepSeek pricing calculator turns the math above into per-day and per-month estimates, and the DeepSeek cost estimator compares scenarios across both tiers. If you want benchmark depth rather than pricing, the DeepSeek benchmarks 2026 page tracks third-party verification as new runs land.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek pricing page and the V4 technical report before committing to a production decision.

How does a DeepSeek comparison tool decide between V4-Pro and V4-Flash?

It compares price-per-token, benchmark scores on workloads that match yours, and context budgets. Both V4 models share a 1M-token context, so the real question is whether your evaluation shows a quality gap large enough to justify a per-call cost roughly 12× that of Flash at list rates. Start on Flash, measure, then move up if needed. The DeepSeek V4-Flash page covers the cost-efficient tier in detail.

What is the difference between deepseek-chat and deepseek-v4-flash?

They are currently the same underlying model. The legacy ID `deepseek-chat` routes to V4-Flash in non-thinking mode, while `deepseek-reasoner` routes to V4-Flash in thinking mode. Both legacy aliases will be retired on July 24, 2026 at 15:59 UTC, so new code should call the V4 IDs directly. The DeepSeek API documentation covers the migration steps.

Can I compare DeepSeek V4 against GPT-5 and Claude 4 fairly?

Yes, but version-match everything. Quote the exact competitor labels (Claude Opus 4.6, GPT-5.4) and pull benchmark numbers and pricing from the same week. Independent aggregators like Artificial Analysis help, but always cross-check with the lab’s own technical report. See the DeepSeek vs ChatGPT and DeepSeek vs Claude breakdowns for current head-to-heads.

Is the DeepSeek model comparison tool useful for self-hosting decisions?

It is, because the licence and weight sizes change the calculation. V4-Pro and V4-Flash both ship MIT-licensed weights on Hugging Face, so self-hosting is allowed. Pro is roughly 865GB and Flash is roughly 160GB on disk, which dictates GPU memory and quantisation strategy. Use the DeepSeek hardware calculator to estimate the rig you need.

Why does my cost estimate differ from the comparison tool’s number?

Almost always because of cache assumptions or output length. A cached system prompt is billed at the cache-hit rate, but each new user message hits the uncached-input bucket, and output tokens dominate when responses are long. Re-run the math with three buckets — cache hit, cache miss, output — and pick a single tier. The DeepSeek context caching guide explains how the cache actually triggers.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: DeepSeek pricing page. Live V4-Flash and V4-Pro pricing for the comparison tool. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report PDF (Hugging Face). Source for V4-Pro benchmark numbers cited in comparisons. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Benchmark: Artificial Analysis intelligence index. Independent V4-Pro reasoning index score (52) and throughput. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.

Editorial note

AI tooling moves quickly. If you spot an outdated number or a broken link, the contact link in the footer is the fastest way to flag it for the editorial team.