How to Use a DeepSeek API Tester to Validate Your Setup

You generated a key, pasted the base URL into your code, and the first call returned a 401. Was it the header format, the model ID, or did your balance hit zero? A good DeepSeek API tester answers that question in under a minute, before you waste an afternoon debugging production code. This guide walks through the practical tools — curl, Postman, the OpenAI SDK, and lightweight web playgrounds — that let you confirm authentication, model routing, thinking-mode behaviour, and token accounting against the live `POST /chat/completions` endpoint. You will get copy-paste examples for V4-Pro and V4-Flash, a checklist for verifying cache-hit billing, and a short list of common errors with fixes. By the end, you will have a repeatable smoke test you can run before every deployment.

What a DeepSeek API tester actually does

DeepSeek API Tester

Configure a request — chat, streaming, JSON mode, function calling, reasoning — and the tool generates working code in six languages. Your key never leaves the page.


Why doesn’t this fire the request from my browser?

Two reasons. First, the DeepSeek API doesn’t allow CORS from arbitrary origins, so a browser request from deepseekai.guide would be blocked. Second, even if it did, putting a real API key into JavaScript loaded from a third-party site is unsafe: anyone reading the network tab on your machine sees the key. Generating code that you run locally is the right way to test an API key.

The OpenAI SDKs work as-is once you set base_url to https://api.deepseek.com. DeepSeek’s API is OpenAI-compatible, so existing tooling (LangChain, LlamaIndex, instructor, etc.) generally works without changes.

Run the generated snippet in your terminal or editor. The OpenAI SDK is the recommended client for Python and Node.

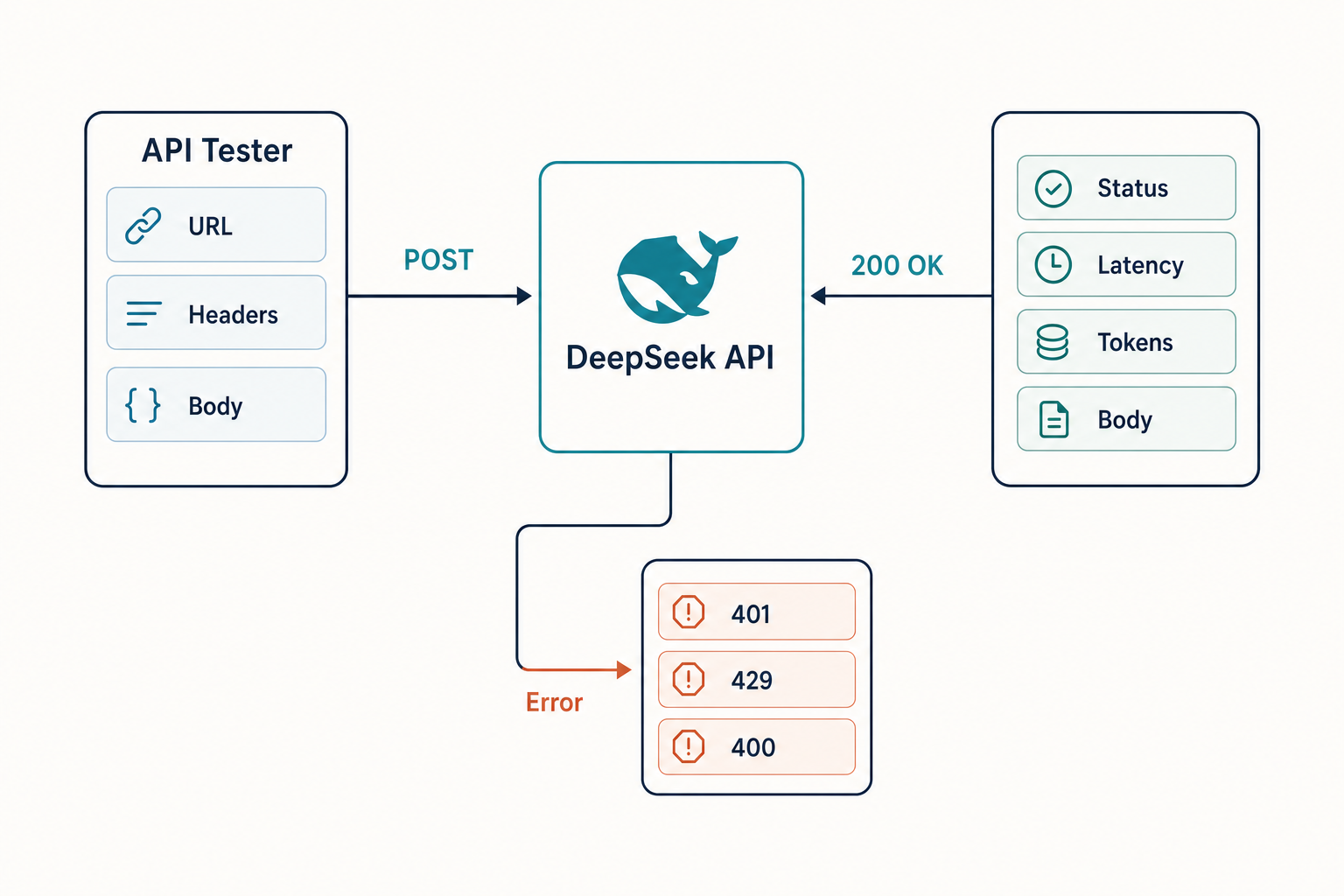

A DeepSeek API tester is any tool — script, GUI, or browser playground — that sends a request to the DeepSeek API and shows you the raw response, status code, latency, and token usage. The point is not to write your application; it is to isolate variables. When something breaks in production, you want to know whether the fault is your code, your key, the model ID, or the service itself. Testing tools strip the call down to its smallest reproducible form.

Five things you should be able to verify with a tester:

- Authentication: does your bearer token work against `https://api.deepseek.com`?

- Model routing: does `deepseek-v4-flash` return what you expect, and do legacy IDs still resolve?

- Parameter behaviour: what changes when you flip `reasoning_effort` or enable JSON mode?

- Token accounting: do `prompt_cache_hit_tokens` and `prompt_cache_miss_tokens` match your billing assumptions?

- Error shape: when you send a deliberately broken request, what does the error envelope look like?

The endpoint and model IDs you are testing against

Every test in this article hits the same path: POST /chat/completions, the OpenAI-compatible chat endpoint at base URL https://api.deepseek.com. DeepSeek’s API is compatible with OpenAI ChatCompletions and Anthropic-style endpoints, enabling drop-in integration for existing toolchains, so the OpenAI Python SDK and the Anthropic SDK both work by swapping base_url and api_key.

As of April 25, 2026, the current generation is DeepSeek V4, released April 24, 2026. The DeepSeek API supports the new deepseek-v4-pro and deepseek-v4-flash models with 1M context windows and dual Thinking and Non-Thinking modes, while maintaining the same base_url for quick migration. V4-Pro is the frontier tier (1.6T total / 49B active parameters); V4-Flash is the cost-efficient tier (284B total / 13B active). Both ship under the MIT license.

If you are migrating an older integration, the legacy IDs still work for now. The older IDs deepseek-chat and deepseek-reasoner currently route to deepseek-v4-flash equivalents and are scheduled for retirement on July 24, 2026, at 15:59 UTC. Your migration is a one-line model= swap; the base_url does not change.
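To make the migration deadline harder to miss, a smoke test can flag legacy IDs before any request is sent. The sketch below is a minimal illustration: `check_model_id` is a hypothetical helper, and the ID set and deadline are copied from the paragraph above rather than fetched from DeepSeek.

```python
from datetime import datetime, timezone
from typing import Optional

# Retirement deadline quoted in this article: July 24, 2026, 15:59 UTC.
LEGACY_RETIREMENT = datetime(2026, 7, 24, 15, 59, tzinfo=timezone.utc)

# Legacy IDs named in this article (assumption: no others are in use).
LEGACY_MODEL_IDS = {"deepseek-chat", "deepseek-reasoner"}


def check_model_id(model: str, now: Optional[datetime] = None) -> str:
    """Return a short migration note for a model ID (hypothetical helper)."""
    now = now or datetime.now(timezone.utc)
    if model not in LEGACY_MODEL_IDS:
        return f"{model}: not a legacy ID, nothing to migrate"
    days_left = (LEGACY_RETIREMENT - now).days
    if days_left < 0:
        return f"{model}: legacy ID past its retirement date, switch now"
    return f"{model}: legacy ID, about {days_left} days until retirement"


print(check_model_id("deepseek-v4-flash"))
print(check_model_id("deepseek-chat"))
```

Running this in CI against whatever `model=` string your config holds gives an early warning without spending any tokens.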

Method 1: curl — the fastest sanity check

If you cannot get a curl call to work from your terminal, no SDK will save you. Start here. The shell snippet below is the simplest non-streaming test against V4-Flash:

    # Minimal non-streaming smoke test; expects DEEPSEEK_API_KEY in the environment.
    curl https://api.deepseek.com/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer $DEEPSEEK_API_KEY" \
      -d '{
        "model": "deepseek-v4-flash",
        "messages": [
          {"role": "system", "content": "You are a helpful assistant."},
          {"role": "user", "content": "Reply with the word OK."}
        ],
        "stream": false
      }'

You are looking for three things in the response: a 200 status, a choices[0].message.content string, and a usage object with prompt_tokens, completion_tokens, and the cache-hit/miss breakdown. prompt_tokens should equal prompt_cache_hit_tokens plus prompt_cache_miss_tokens, and the total token count should equal prompt tokens plus completion tokens. If you see those fields populated correctly, your authentication, network path, and model ID are all good.
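The two accounting identities described above are easy to check mechanically. The following sketch assumes the usage object has been parsed into a plain dict and that the total is reported under the OpenAI-style name `total_tokens`; `check_usage` is a hypothetical helper and the sample numbers are illustrative, not real API output.

```python
def check_usage(usage: dict) -> list:
    """Return a list of problems in a usage dict; an empty list means consistent."""
    required = (
        "prompt_tokens",
        "completion_tokens",
        "total_tokens",  # assumption: OpenAI-style field name for the total
        "prompt_cache_hit_tokens",
        "prompt_cache_miss_tokens",
    )
    missing = [name for name in required if name not in usage]
    if missing:
        return [f"missing field: {name}" for name in missing]

    problems = []
    hit_plus_miss = usage["prompt_cache_hit_tokens"] + usage["prompt_cache_miss_tokens"]
    if usage["prompt_tokens"] != hit_plus_miss:
        problems.append("prompt_tokens != cache hit + cache miss")
    if usage["total_tokens"] != usage["prompt_tokens"] + usage["completion_tokens"]:
        problems.append("total_tokens != prompt_tokens + completion_tokens")
    return problems


# Illustrative numbers only.
sample_usage = {
    "prompt_tokens": 2200,
    "completion_tokens": 300,
    "total_tokens": 2500,
    "prompt_cache_hit_tokens": 2000,
    "prompt_cache_miss_tokens": 200,
}
print(check_usage(sample_usage))
```

With curl output you could feed it `json.loads(body)["usage"]`; with the OpenAI SDK, `resp.usage.model_dump()` should give a comparable dict.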

For a full reference of accepted parameters and response fields, see the DeepSeek API documentation on this site.

Method 2: the OpenAI Python SDK

The next step up is the official OpenAI SDK pointed at DeepSeek’s base URL. This is the closest test to what your production app actually does. Here is a minimal Python script that exercises V4-Pro in thinking mode:

    import os

    from openai import OpenAI

    # Point the official OpenAI client at DeepSeek's OpenAI-compatible endpoint.
    client = OpenAI(
        api_key=os.environ["DEEPSEEK_API_KEY"],
        base_url="https://api.deepseek.com",
    )

    resp = client.chat.completions.create(
        model="deepseek-v4-pro",
        messages=[{"role": "user", "content": "Outline a migration plan in three steps."}],
        reasoning_effort="high",
        # "thinking" is not a standard OpenAI parameter, so it travels in extra_body.
        extra_body={"thinking": {"type": "enabled"}},
        max_tokens=2048,
    )

    message = resp.choices[0].message
    # getattr avoids an AttributeError if the field is absent from the response.
    print("REASONING:", getattr(message, "reasoning_content", None))
    print("FINAL:", message.content)
    print("USAGE:", resp.usage)

When thinking is enabled, the response returns reasoning_content alongside the final content, and that is the signal the parameter actually took effect. In thinking mode only, the assistant message’s reasoning content is returned before the final answer. If reasoning_content is empty or missing, your extra_body flag most likely did not pass through correctly.
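If you want the smoke test to fail loudly rather than rely on someone reading the printed output, the same check can be a small predicate. This is a sketch under the assumption that the message object exposes `reasoning_content` as an attribute, as the script above reads it; `thinking_took_effect` is a hypothetical name, and the `SimpleNamespace` objects stand in for real responses.

```python
from types import SimpleNamespace


def thinking_took_effect(message) -> bool:
    """True when the message carries non-empty reasoning_content."""
    reasoning = getattr(message, "reasoning_content", None)
    return bool(reasoning and reasoning.strip())


# Stand-in messages; a real one would come from resp.choices[0].message.
with_thinking = SimpleNamespace(reasoning_content="Step 1: list services.", content="Plan")
without_thinking = SimpleNamespace(content="Plan")

print(thinking_took_effect(with_thinking))
print(thinking_took_effect(without_thinking))
```

Using `getattr` with a default keeps the check safe when the field is missing entirely, which is the failure case you are trying to detect.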

For a deeper walkthrough of getting the first request working, see DeepSeek API getting started and the dedicated guide to DeepSeek OpenAI SDK compatibility.

Method 3: Postman, Insomnia, and Bruno

GUI clients are useful when you want to share a test collection with teammates or non-engineers. Set up:

- Create a new request: `POST https://api.deepseek.com/chat/completions`.

- Add the header `Authorization: Bearer {{DEEPSEEK_API_KEY}}` and store the key as an environment variable, not in plain text.

- Set the body type to `raw / JSON` and paste the same payload from the curl example.

- Save as a collection so you can fork it for V4-Pro, JSON mode, function calling, and streaming variants.

Postman’s response viewer shows latency and headers, which matters when you want to compare cold-start versus warm cache-hit calls. Bruno is the open-source alternative if you want the collection to live in Git alongside your code.
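The "fork one base request into variants" idea carries over to code if you prefer to keep the collection as a script. The sketch below derives variants from the curl payload used earlier; `fork_payload` and the variant names are hypothetical, and the JSON bodies it prints can be pasted into any of the GUI clients above.

```python
import copy
import json

# Same payload as the curl example earlier in this article.
BASE_PAYLOAD = {
    "model": "deepseek-v4-flash",
    "messages": [
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Reply with the word OK."},
    ],
    "stream": False,
}


def fork_payload(**overrides) -> dict:
    """Copy the base payload and apply top-level overrides (hypothetical helper)."""
    payload = copy.deepcopy(BASE_PAYLOAD)
    payload.update(overrides)
    return payload


VARIANTS = {
    "flash-basic": fork_payload(),
    "pro-basic": fork_payload(model="deepseek-v4-pro"),
    "flash-stream": fork_payload(stream=True),
    "flash-json": fork_payload(
        response_format={"type": "json_object"},
        messages=[{"role": "user", "content": 'Reply in json, for example {"status": "OK"}.'}],
    ),
}

for name, payload in VARIANTS.items():
    print(name, json.dumps(payload))
```

The deep copy matters: without it, editing one variant's messages would silently change the base request and every other variant.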

Method 4: web-based playgrounds

Several third-party sites let you paste a key and test calls in the browser. They are convenient for quick checks on a borrowed machine, but they share a drawback: your API key passes through their server. For anything beyond a throwaway test, prefer a local tool. Use a playground to:

- Compare V4-Flash and V4-Pro outputs side by side on the same prompt.

- See token counts before you commit to a longer prompt — a separate DeepSeek token counter is more accurate.

- Estimate spend with a DeepSeek pricing calculator before you scale a workload up.

A repeatable smoke-test checklist

Whatever tool you use, run the same five tests every time you change keys, deploy a new model ID, or chase a regression:

| Test | What to send | What to confirm |
|---|---|---|
| Auth | Any valid request with the new key | 200 status, no authentication_error |
| Model routing | `model: "deepseek-v4-flash"` and a trivial prompt | Response `model` field echoes `deepseek-v4-flash` |
| Thinking mode | `reasoning_effort: "high"` + `thinking: {"type": "enabled"}` | `reasoning_content` is non-empty |
| JSON mode | `response_format: {"type": "json_object"}` with the word “json” in the prompt | Output parses as JSON; not truncated |
| Cache accounting | Two identical calls 30 seconds apart | Second call shows non-zero `prompt_cache_hit_tokens` |

The JSON mode test deserves a footnote. JSON mode is designed to return valid JSON, but it does not guarantee it. Setting response_format to json_object enables JSON Output, and you must also instruct the model to produce JSON yourself via a system or user message. Without that instruction, the model may generate an unending stream of whitespace until it reaches the token limit, which looks like a long-running, stuck request. Always include a small example schema in your prompt and set max_tokens high enough to avoid truncation. The dedicated DeepSeek API JSON mode page has fuller patterns.
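The JSON mode row can be turned into a defensive check that covers the failure modes just described: a prompt that never mentions JSON, a truncated response, empty content, and output that does not parse. The sketch below is one way to do it; `check_json_mode` is a hypothetical helper that takes values you have already pulled out of the response.

```python
import json


def check_json_mode(prompt: str, content: str, finish_reason: str) -> list:
    """Return a list of problems with a JSON-mode call; empty means it passed."""
    problems = []
    if "json" not in prompt.lower():
        problems.append('prompt never mentions "json"')
    if finish_reason == "length":
        problems.append("output truncated (finish_reason is length)")
    if not content or not content.strip():
        problems.append("content is empty")
        return problems  # nothing to parse
    try:
        json.loads(content)
    except json.JSONDecodeError as exc:
        problems.append(f"content is not valid JSON: {exc.msg}")
    return problems


print(check_json_mode('Return json like {"ok": true}', '{"ok": true}', "stop"))
print(check_json_mode("Say hi", "", "length"))
```

Checking the prompt as well as the output means the test catches a misconfigured request even on a run where the model happens to return valid JSON anyway.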

Worked example: costing out a smoke test on V4-Flash

If your smoke test runs nightly across a CI pipeline, costs add up. Here is a transparent calculation for 1,000 daily smoke-test calls on deepseek-v4-flash, with a 2,000-token cached system prompt, a 200-token user message (uncached miss), and a 300-token response. V4-Flash rates are $0.0028 cache-hit / $0.14 cache-miss / $0.28 output per 1M tokens (cache-hit dropped 10× on 2026-04-26).

- Cached input: 2,000 × 1,000 = 2,000,000 tokens × $0.0028/M = $0.0056

- Uncached input: 200 × 1,000 = 200,000 tokens × $0.14/M = $0.028

- Output: 300 × 1,000 = 300,000 tokens × $0.28/M = $0.084

- Daily total: $0.1176 — roughly $3.53 a month.

Note the uncached-input line: even when the system prompt hits cache, each new user message is a miss against that prefix. Skipping that line is the most common costing error I see in spec docs. Cross-check current rates against the DeepSeek API pricing page and the official DeepSeek pricing page before committing to a budget, and review the broader DeepSeek API best practices for cache-friendly prompt design.
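The same arithmetic is worth keeping as a script so you can rerun it when rates or prompt sizes change. This sketch hard-codes the per-million rates and token counts from the worked example above; they are this article's figures, not values read from DeepSeek, and `daily_cost` is a hypothetical helper.

```python
CALLS_PER_DAY = 1_000

# USD per 1M tokens, as quoted in the worked example (verify before budgeting).
RATES_PER_MILLION = {"cache_hit": 0.0028, "cache_miss": 0.14, "output": 0.28}

# Tokens per call: cached system prompt, uncached user message, response.
TOKENS_PER_CALL = {"cache_hit": 2_000, "cache_miss": 200, "output": 300}


def daily_cost(calls, tokens_per_call, rates_per_million):
    """Return (per-kind cost breakdown, total) in USD for one day of calls."""
    breakdown = {
        kind: tokens_per_call[kind] * calls / 1_000_000 * rates_per_million[kind]
        for kind in tokens_per_call
    }
    return breakdown, sum(breakdown.values())


breakdown, total = daily_cost(CALLS_PER_DAY, TOKENS_PER_CALL, RATES_PER_MILLION)
for kind, cost in breakdown.items():
    print(f"{kind:>10}: ${cost:.4f}")
print(f"daily total: ${total:.4f}, 30-day month: ${total * 30:.2f}")
```

Keeping the cache-miss line as its own entry makes it hard to drop by accident, which is the costing error called out above.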

Common errors and how to read them

| Status or signal | Likely cause | Fix |
|---|---|---|
| 401 | Bearer token missing or wrong | Check the header format; regenerate the key in the console |
| 402 | Account balance is zero | Top up; DeepSeek may show a granted balance, so check the billing console |
| 404 | Wrong path or unrecognised model ID | Confirm /chat/completions and a current V4 ID |
| 429 | Rate limit hit | Implement exponential backoff; review concurrency |
| finish_reason: length | max_tokens too low | Raise the cap; with thinking-max set max_model_len ≥ 393216 |
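For the 429 row, exponential backoff can be sketched in a few lines. The example below is transport-agnostic: `RateLimited` is a hypothetical stand-in for whatever your client raises on HTTP 429 (with the OpenAI SDK that would likely be `openai.RateLimitError`), and the delay values are illustrative defaults rather than DeepSeek recommendations.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical stand-in for the error your client raises on HTTP 429."""


def call_with_backoff(send, max_attempts=5, base_delay=1.0, sleep=time.sleep):
    """Call send() and retry on RateLimited with exponential backoff plus jitter."""
    for attempt in range(max_attempts):
        try:
            return send()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts, surface the error
            # Delay doubles each attempt; jitter spreads out concurrent retries.
            delay = base_delay * (2 ** attempt) + random.uniform(0, base_delay)
            sleep(delay)


# Demo with a fake sender that is rate limited twice, then succeeds.
attempts = {"count": 0}


def flaky_send():
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited()
    return "ok"


recorded_delays = []
print(call_with_backoff(flaky_send, sleep=recorded_delays.append))
```

Passing `sleep` as a parameter lets the demo and your tests record delays instead of actually waiting.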

finish_reason can take other values too, one of them DeepSeek-specific. It is stop if the model hit a natural stop point or a provided stop sequence, length if the maximum number of tokens specified in the request was reached, content_filter if content was omitted due to a flag from DeepSeek’s content filters, tool_calls if the model called a tool, or insufficient_system_resource if the request was interrupted because the inference system ran short of resources. The last one is the giveaway that the issue is on DeepSeek’s side rather than yours. For a fuller catalogue, see DeepSeek API error codes.

Streaming, tool calls, and FIM — extending the test

Once the basic call works, test the surfaces you actually use in production:

- Streaming: set `stream: true` and confirm chunks arrive incrementally. With thinking enabled, reasoning content streams alongside final content. Details: DeepSeek API streaming.

- Tool calling: declare a function and verify the model returns a `tool_calls` object. See DeepSeek API function calling.

- Context caching: fire two identical-prefix requests and compare cache-hit counts. The DeepSeek context caching page covers prefix design.

- FIM completion (Beta): non-thinking mode only; useful for code-fill scenarios.

- Chat Prefix Completion (Beta): pin the assistant’s opening tokens against `https://api.deepseek.com/beta`.
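For the streaming item in the list above, the useful assertion is that several chunks arrived and that reasoning and final text can be reassembled separately. The sketch below assumes OpenAI-style chunks where each delta may carry `reasoning_content` or `content`; `collect_stream` and `fake_chunk` are hypothetical helpers, and the fake chunks stand in for the iterator the SDK returns when `stream=True`.

```python
from types import SimpleNamespace


def collect_stream(chunks):
    """Concatenate streamed deltas into (reasoning, content, chunk_count)."""
    reasoning_parts, content_parts, count = [], [], 0
    for chunk in chunks:
        count += 1
        if not chunk.choices:
            continue  # some chunks may carry no choices at all
        delta = chunk.choices[0].delta
        reasoning_parts.append(getattr(delta, "reasoning_content", None) or "")
        content_parts.append(getattr(delta, "content", None) or "")
    return "".join(reasoning_parts), "".join(content_parts), count


def fake_chunk(reasoning=None, content=None):
    """Build a stand-in chunk shaped like an OpenAI-style streaming chunk."""
    delta = SimpleNamespace(reasoning_content=reasoning, content=content)
    return SimpleNamespace(choices=[SimpleNamespace(delta=delta)])


demo_chunks = [
    fake_chunk(reasoning="Think"),
    fake_chunk(reasoning="ing"),
    fake_chunk(content="O"),
    fake_chunk(content="K"),
]
reasoning, content, count = collect_stream(demo_chunks)
print(repr(reasoning), repr(content), count)
```

A chunk count above one is a cheap signal that the response really was delivered incrementally rather than buffered somewhere along the path.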

Remember the API is stateless: every request must resend the full conversation history. The web chat keeps session state for you; the API does not. If you want to compare chat behaviour to API behaviour, see the broader collection of DeepSeek tools and utilities.
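Statelessness is easy to get wrong in a multi-turn test, so here is a minimal sketch of the client-side bookkeeping. `Conversation` is a hypothetical wrapper, and `send` is any callable that takes the full messages list and returns the assistant's reply text; the fake sender below simply reports how many messages it received.

```python
class Conversation:
    """Keeps the history client-side and resends all of it on every turn."""

    def __init__(self, send, system_prompt=None):
        self._send = send  # callable: list of messages -> assistant reply text
        self.messages = []
        if system_prompt:
            self.messages.append({"role": "system", "content": system_prompt})

    def ask(self, user_text):
        self.messages.append({"role": "user", "content": user_text})
        # Pass a copy so the transport cannot mutate the stored history.
        reply = self._send(list(self.messages))
        self.messages.append({"role": "assistant", "content": reply})
        return reply


def fake_send(messages):
    """Stand-in for a real API call; echoes how much history it was given."""
    return f"seen {len(messages)} messages"


chat = Conversation(fake_send, system_prompt="You are a helpful assistant.")
first_reply = chat.ask("First question")
second_reply = chat.ask("Follow-up question")
print(first_reply)
print(second_reply)
```

In a real test, `send` would wrap `client.chat.completions.create(model=..., messages=messages)` and return `choices[0].message.content`.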

Verdict

You do not need a dedicated DeepSeek API tester product. You need a one-page checklist and three small scripts: a curl smoke test, a Python SDK script that flips thinking on and off, and a Postman collection your team can clone. Run all three against a fresh key after every model migration — especially the V3.x → V4 swap and the legacy-ID retirement on July 24, 2026. The cost of running the suite is pennies; the cost of shipping a bad model= string to production is not.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

Frequently asked questions

How do I test if my DeepSeek API key works?

The fastest check is a single curl call to POST /chat/completions with your bearer token and a trivial prompt. A 200 response confirms the key, your network path, and a valid model ID all work; a 401 means the token is wrong, and a 402 means your balance is zero. Walk through the full flow in our get a DeepSeek API key guide before testing.

What model ID should I use when testing the DeepSeek API?

For new integrations, use deepseek-v4-flash for routine tests and deepseek-v4-pro when you need to verify frontier-tier behaviour. The legacy IDs deepseek-chat and deepseek-reasoner still work but retire on July 24, 2026 at 15:59 UTC. See the DeepSeek V4-Flash model page for the full feature set.

Can I use Postman as a DeepSeek API tester?

Yes. Create a POST request to https://api.deepseek.com/chat/completions, add an Authorization: Bearer header, and paste a JSON body with model and messages fields. Save it as a collection so teammates can fork variants for streaming, JSON mode and function calling. The same JSON payload format works in Insomnia and Bruno — see DeepSeek API code examples.

Does the DeepSeek API behave the same as the chatbot?

No. The web chat and mobile app keep your conversation history server-side; the API is stateless and forgets prior turns the moment a request finishes. To sustain a multi-turn conversation through the API, your client must resend the full messages array on every request. The differences are covered in DeepSeek browser vs app.

Why does my JSON mode test return empty content?

JSON mode is designed to return valid JSON, but it does not guarantee it. The model may return empty content if the prompt does not include the literal word “json” plus an example schema, or if max_tokens is too low and the output is truncated mid-object. Set max_tokens high, prompt explicitly, and handle empty responses defensively. The DeepSeek API JSON mode page has working examples.

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Pricing: DeepSeek pricing page. Pre-deploy smoke test cost reference rates. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

Methodology

Information in this article was checked against the official DeepSeek documentation, the article's cited primary sources, and the deepseekai.guide pricing dataset on the date shown above. Where third-party numbers are quoted, the originating source is linked inline so the reader can verify and re-check.

Data confidence

High for facts cited inline with primary-source links; medium for context narrative.
