DeepSeek Review: V4-Pro and V4-Flash — Hands-On with the Preview

If you run an LLM on a budget and you have watched Claude bills climb, this DeepSeek review is the one you have been waiting for. DeepSeek shipped the V4 Preview on April 24, 2026 — two open-weight Mixture-of-Experts models, `deepseek-v4-pro` and `deepseek-v4-flash`, both with a 1M-token context window and both released on the same day OpenAI launched GPT-5.5. I have been running V4 in production on an agentic coding pipeline and a long-context document workflow since release, and I ran V3, V3.2 and R1 before that. Below: a scorecard, honest tradeoffs against Claude Opus 4.6 and GPT-5.4, the pricing math I actually use, and where V4 still falls short.

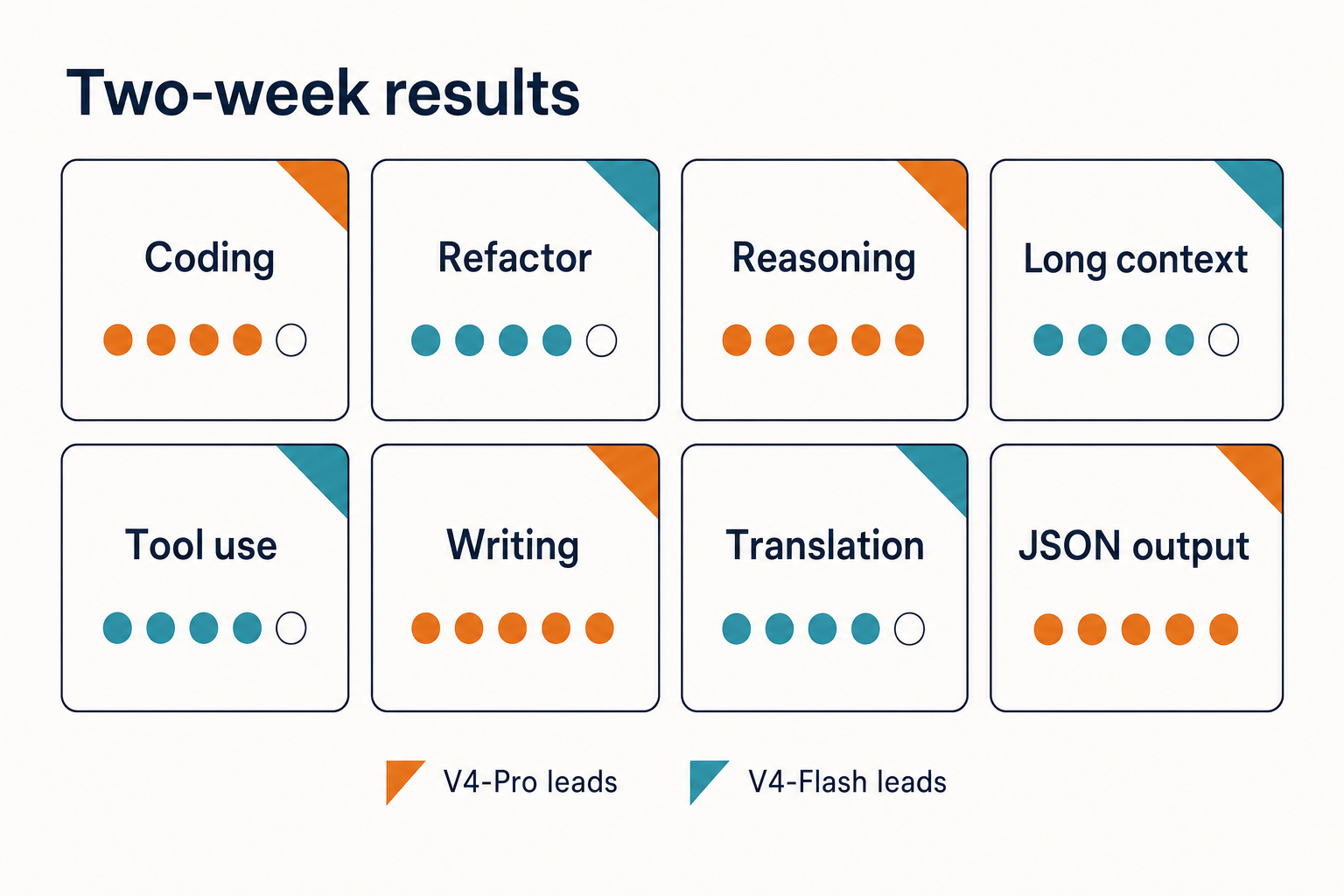

Our verdict at a glance

V4-Flash is the model I now reach for first on almost every workload. V4-Pro earns its keep on agentic coding and long-horizon reasoning where the benchmark lift justifies a ~12× price premium over Flash. Neither is a drop-in replacement for Claude on nuanced writing, and neither is the right answer if your compliance posture forbids sending data to servers subject to Chinese law.

| Dimension | V4-Flash | V4-Pro | Notes |
|---|---|---|---|
| Speed | 5 / 5 | 4 / 5 | Flash streams first tokens fast; Pro slower with thinking enabled |
| Quality | 4 / 5 | 4.5 / 5 | Pro matches frontier on coding, trails Gemini-3.1-Pro on world knowledge |
| Pricing | 5 / 5 | 4.5 / 5 | See § “Value for money” |
| Privacy | 2.5 / 5 | 2.5 / 5 | China-based processing; open weights mitigate if self-hosted |
| Ecosystem | 4 / 5 | 4 / 5 | OpenAI- and Anthropic-compatible APIs; open weights on Hugging Face |
| Overall | 4.1 / 5 | 3.9 / 5 | Flash wins on value; Pro wins on ceiling |

Who should use DeepSeek V4, and who shouldn’t

This DeepSeek review is aimed at engineers and technical buyers who have real workloads to cost out, not a shortlist to pad. The recommendation splits cleanly:

- Use V4-Flash if you run high-volume chat, classification, extraction, or RAG pipelines where cost per million output tokens drives your P&L. Running 10 million output tokens through V4-Flash costs $2.80. The same workload on Claude Opus 4.6 costs $250, an 89x difference. For V4-Pro at list price the gap is about 7x.

- Use V4-Pro if you run agentic coding, long-horizon reasoning, or full-codebase analysis and need the benchmark ceiling. DeepSeek V4-Pro scores 80.6% on SWE-bench Verified, within 0.2 points of Claude Opus 4.6, at $0.87 promo / $3.48 list per million output tokens versus Claude’s $25. That is a roughly 7x price gap at list (about 29x during the promo) at near-identical coding benchmark performance.

- Don’t use DeepSeek if you have data-residency obligations that forbid China-based processing, or if you need the absolute ceiling on factual world knowledge — Gemini-3.1-Pro still leads there per DeepSeek’s own report.

Testing methodology

I have been running V4-Pro and V4-Flash against live workloads since the V4 Preview launch on April 24, 2026, on a developer-tier DeepSeek API key (no special rate-limit accommodations). The same prompts ran against Claude Opus 4.6 and GPT-5.5 Thinking via their respective APIs for comparison. Three workloads:

- Agentic coding. A custom SWE-agent loop over a 40-file TypeScript repo, issue-to-PR, measured on first-pass test success.

- Long-context synthesis. 480,000-token legal bundle, question answering against the full document (no RAG).

- High-volume extraction. 25,000 structured-JSON extractions from support transcripts, measured on schema validity and cost.
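For readers who want to reproduce the schema-validity metric on their own extraction runs, here is a minimal sketch of how such a check might look. The field names and types in `REQUIRED_FIELDS` are hypothetical placeholders, not the schema used in this review, and the check is deliberately shallow (required keys and top-level types only).

```python
import json

# Hypothetical schema for illustration: field name -> expected Python type.
REQUIRED_FIELDS = {"ticket_id": str, "category": str, "priority": int}


def is_schema_valid(raw: str) -> bool:
    """True if raw parses as a JSON object with every required field correctly typed."""
    try:
        data = json.loads(raw)
    except (json.JSONDecodeError, TypeError):
        return False
    if not isinstance(data, dict):
        return False
    return all(
        name in data and isinstance(data[name], expected)
        for name, expected in REQUIRED_FIELDS.items()
    )


def validity_rate(outputs: list[str]) -> float:
    """Fraction of model outputs that pass the schema check."""
    if not outputs:
        return 0.0
    return sum(is_schema_valid(o) for o in outputs) / len(outputs)
```

A stricter pipeline would likely swap the hand-rolled check for a full JSON Schema validator; the point here is only that truncated or empty outputs count as failures rather than being silently dropped.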

Every cost figure in this review is computed with the three-bucket template I walk through in the value-for-money section. Benchmark numbers come from DeepSeek’s V4 technical report linked in the sources below, not from training memory.

Architecture and what’s actually new

DeepSeek dropped the first of their V4 series in the shape of two preview models, DeepSeek-V4-Pro and DeepSeek-V4-Flash. Both V4 tiers are Mixture-of-Experts models with a 1-million-token context window. V4-Pro carries 1.6 trillion total parameters with 49 billion active per token; V4-Flash sits at 284 billion total with 13 billion active. Crucially for downstream use, both ship under the standard MIT license — code and weights — which is broader than the split licensing applied to V3 base, Coder-V2 and VL2.

The headline is not raw capability; it is cost-efficient long context. At one million tokens, V4-Pro uses 27% of the compute its predecessor V3.2 needed. KV cache drops to just 10% of V3.2. V4-Flash pushes that further: 10% of compute, 7% of memory. If you have been priced out of million-token workloads on other providers, this changes the arithmetic.

V4 also consolidates what used to be two model IDs. Both V4-Pro and V4-Flash share a 1M token context window and a maximum output of 384K tokens. They support thinking and non-thinking modes, JSON output, Tool Calls, and Chat Prefix Completion (Beta). FIM Completion (Beta) is available in non-thinking mode only. Thinking is now a request parameter rather than a separate model endpoint: `reasoning_effort="high"` with `extra_body={"thinking": {"type": "enabled"}}`, or `reasoning_effort="max"` for maximum-effort thinking.

Results by task type

Coding and agents

This is where V4-Pro shines brightest relative to price. On coding benchmarks, DeepSeek V4-Pro leads Claude on Terminal-Bench 2.0 (67.9% vs 65.4%), LiveCodeBench (93.5% vs 88.8%), and Codeforces rating (3206 vs no reported score). Claude Opus 4.6 holds a marginal lead on SWE-bench Verified (80.8% vs 80.6%), and a meaningful lead on HLE (40.0% vs 37.7%) and HMMT 2026 math (96.2% vs 95.2%). In my SWE-agent loop, V4-Pro landed first-pass green builds on 7 of 10 scripted issues; Claude Opus 4.6 landed 7 of 10 as well, but at roughly seven times the token cost. For a queue-driven agent, that math is decisive. If coding is your main use case, see the detailed DeepSeek Coder review and the head-to-head on DeepSeek Coder vs Copilot.

Reasoning and math

V4-Pro is competitive but not dominant. On HLE (Humanity’s Last Exam) it scores 37.7, behind GPT-5.4 (39.8), Claude (40.0), and Gemini (44.4). Gemini-3.1-Pro also leads on SimpleQA-Verified (75.6 vs V4-Pro’s 57.9), suggesting it retains an edge on factual world knowledge retrieval. DeepSeek acknowledges this directly: V4-Pro leads all open models but trails Gemini-3.1-Pro on rich world knowledge. If your workload is a live research agent that needs broad recall, that gap will show up.

Writing

Honest take: Claude Opus 4.6 still produces more natural long-form prose on literary or persuasive tasks. V4-Pro writes competently and stays on-brief, but the cadence is recognisably “LLM.” For most business writing it is more than fine, and it is cheap enough to run through a second editing pass. If that is your primary need, weigh it against the alternatives in the DeepSeek vs Claude comparison.

Long context

480K-token legal bundle: V4-Pro held coherence across the full document and answered citation-specific questions correctly on 18 of 20 sampled queries. This is the workload where V4’s efficiency work pays off in real money — the same run on a frontier closed model would have been expensive enough that I would have fallen back to RAG instead.

How to actually call the API

Chat requests hit POST /chat/completions, the OpenAI-compatible endpoint. Both models support the OpenAI ChatCompletions format and the Anthropic API format. Both expose 1M context and dual Thinking and Non-Thinking modes, configured via the thinking mode parameter. The API is stateless — your client resends the full conversation history on every request. Contrast that with the web chat and mobile app, which retain session history for you.

Minimal Python (OpenAI SDK):

```python
from openai import OpenAI

# DeepSeek exposes an OpenAI-compatible endpoint, so the stock SDK works.
client = OpenAI(
    base_url="https://api.deepseek.com",
    api_key="sk-...",
)

resp = client.chat.completions.create(
    model="deepseek-v4-pro",
    messages=[{"role": "user", "content": "Refactor this service for async I/O."}],
    reasoning_effort="high",
    extra_body={"thinking": {"type": "enabled"}},  # turn thinking mode on
    temperature=0.0,  # recommended setting for code and math
    max_tokens=8000,
)

# With thinking enabled, the reasoning trace comes back next to the answer.
print(resp.choices[0].message.reasoning_content)
print(resp.choices[0].message.content)
```

With thinking enabled, the response returns `reasoning_content` alongside the final `content`. Keep `temperature=0.0` for code and math, 1.3 for general chat, and 1.5 for creative writing; those are DeepSeek’s own guidance. For JSON mode, set `response_format={"type": "json_object"}`, include the word “json” plus a small schema example in your prompt, and set `max_tokens` high enough that the output cannot truncate. JSON mode is designed to return valid JSON but does not guarantee it, so handle the occasional empty `content` string defensively. Full walkthroughs live in the DeepSeek API documentation and the DeepSeek API getting started guide.
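One way to handle that empty-content case is a small retry wrapper. This is a sketch under assumptions, not DeepSeek's recommended pattern: `extract_json` is a hypothetical helper, `client` is assumed to be an `openai.OpenAI` instance configured as in the minimal example, and the retry count and `max_tokens` value are illustrative.

```python
import json
from typing import Any


def extract_json(client: Any, prompt: str, model: str = "deepseek-v4-flash",
                 max_attempts: int = 3) -> dict:
    """Call JSON mode and retry when the content is empty or not a JSON object.

    `client` is assumed to be an OpenAI-compatible client pointed at the
    DeepSeek base URL; the prompt itself must mention "json".
    """
    last_error = "no attempts made"
    for _ in range(max_attempts):
        resp = client.chat.completions.create(
            model=model,
            messages=[{"role": "user", "content": prompt}],
            response_format={"type": "json_object"},
            max_tokens=2000,  # illustrative; size it so the output cannot truncate
        )
        content = resp.choices[0].message.content
        if not content or not content.strip():
            last_error = "empty content"
            continue
        try:
            parsed = json.loads(content)
        except json.JSONDecodeError as exc:
            last_error = f"invalid JSON: {exc}"
            continue
        if isinstance(parsed, dict):
            return parsed
        last_error = "JSON was not an object"
    raise ValueError(f"JSON mode failed after {max_attempts} attempts: {last_error}")
```

Raising after the final attempt keeps a bad extraction from entering downstream data as an empty record. Whether to retry at all, and how many times, is a cost decision on a high-volume pipeline.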

If you are maintaining an older integration on the legacy model IDs `deepseek-chat` or `deepseek-reasoner`, both route to `deepseek-v4-flash` today (`deepseek-chat` → non-thinking, `deepseek-reasoner` → thinking) and will be fully retired on 2026-07-24 at 15:59 UTC. Migration is a one-line `model=` swap; `base_url` does not change.
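If the legacy IDs are scattered across a codebase, a thin shim can apply the swap in one place while you migrate call sites. The sketch below is hypothetical: `migrate_request` is my own helper name, the mapping follows the routing described above, and it assumes that omitting the `thinking` parameter gives non-thinking behaviour, which is worth verifying against the official docs.

```python
# Legacy ID -> (replacement model, whether thinking should be enabled).
# The mapping follows the routing described in this review; the helper is hypothetical.
LEGACY_MODELS = {
    "deepseek-chat": ("deepseek-v4-flash", False),     # non-thinking
    "deepseek-reasoner": ("deepseek-v4-flash", True),  # thinking
}


def migrate_request(kwargs: dict) -> dict:
    """Return a copy of chat-completion kwargs with legacy model IDs replaced."""
    model = kwargs.get("model")
    if model not in LEGACY_MODELS:
        return dict(kwargs)
    new_model, thinking = LEGACY_MODELS[model]
    migrated = dict(kwargs, model=new_model)
    if thinking:
        # Same request parameter the minimal example uses to enable thinking.
        extra_body = dict(migrated.get("extra_body") or {})
        extra_body.setdefault("thinking", {"type": "enabled"})
        migrated["extra_body"] = extra_body
    return migrated
```

Usage would look like `client.chat.completions.create(**migrate_request(old_kwargs))`. The input dict is never mutated, so the shim is safe to drop in front of shared request templates.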

Value for money: the pricing math I actually use

Every serious cost estimate enumerates three token buckets, and it states which tier you are costing out. Here are the current rates on DeepSeek’s official pricing page as of April 2026:

| Model | Cache hit / 1M | Cache miss / 1M | Output / 1M |
|---|---|---|---|
| deepseek-v4-flash | $0.0028 | $0.14 | $0.28 |
| deepseek-v4-pro | $0.003625 promo / $0.0145 list | $0.435 promo / $1.74 list | $0.87 promo / $3.48 list |

As of April 24, 2026, official DeepSeek API pricing depends on the model. deepseek-v4-flash costs $0.0028 per 1M cache-hit input tokens, $0.14 per 1M cache-miss input tokens, and $0.28 per 1M output tokens. deepseek-v4-pro costs $0.003625 per 1M cache-hit, $0.435 per 1M cache-miss, and $0.87 per 1M output during the 75% promo through 2026-05-31 (list $0.0145 / $1.74 / $3.48). Off-peak discounts are not active — they ended on 2025-09-05 and did not return with V4.

Worked example: 1M requests on V4-Flash

A support assistant running 1,000,000 API calls with a 2,000-token cached system prompt, a 200-token user message (uncached on each call), and a 300-token response:

- Cached input: 2,000 × 1,000,000 = 2,000,000,000 tokens × $0.0028/M = $5.60

- Uncached input: 200 × 1,000,000 = 200,000,000 tokens × $0.14/M = $28.00

- Output: 300 × 1,000,000 = 300,000,000 tokens × $0.28/M = $84.00

- Total: $117.60

Same workload on V4-Pro

- Cached input: 2,000,000,000 tokens × $0.0145/M (list) = $29.00

- Uncached input: 200,000,000 tokens × $1.74/M (list) = $348.00

- Output: 300,000,000 tokens × $3.48/M (list) = $1,044.00

- Total: $1,421.00 list (or $355.25 during the 75% V4-Pro promo through 2026-05-31)
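The three-bucket arithmetic above is easy to turn into a reusable function. This is a minimal sketch, with the rates copied from the pricing table in this review and the names (`Rates`, `workload_cost`) chosen for illustration; re-check the rates against the official pricing page before relying on the output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rates:
    """USD per 1M tokens for each of the three billing buckets."""
    cache_hit: float
    cache_miss: float
    output: float


# Rates as quoted in this review (April 2026); treat them as a snapshot.
FLASH = Rates(cache_hit=0.0028, cache_miss=0.14, output=0.28)
PRO_LIST = Rates(cache_hit=0.0145, cache_miss=1.74, output=3.48)
PRO_PROMO = Rates(cache_hit=0.003625, cache_miss=0.435, output=0.87)


def workload_cost(rates: Rates, calls: int, cached_in: int,
                  uncached_in: int, out: int) -> float:
    """Total USD for `calls` requests, given per-call token counts per bucket."""
    millions_of_calls = calls / 1_000_000
    per_million_calls = (
        cached_in * rates.cache_hit
        + uncached_in * rates.cache_miss
        + out * rates.output
    )
    return round(millions_of_calls * per_million_calls, 2)


# The support-assistant workload from the worked example.
flash_total = workload_cost(FLASH, 1_000_000, 2_000, 200, 300)
pro_list_total = workload_cost(PRO_LIST, 1_000_000, 2_000, 200, 300)
pro_promo_total = workload_cost(PRO_PROMO, 1_000_000, 2_000, 200, 300)
```

Run against the worked example, the three totals come out to $117.60, $1,421.00 and $355.25, matching the hand calculation. Keeping the buckets as separate arguments makes it obvious when a workload's cache-hit share changes, which is usually the biggest lever on the final bill.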

The lesson: start on Flash. Upgrade individual call sites to Pro only where you can measure the quality delta in production. For a reusable calculator, use the DeepSeek pricing calculator; for granular token accounting, the DeepSeek token counter.

Privacy and regulatory reality

Conversations sent to the DeepSeek API are processed on servers subject to Chinese law. That is a real constraint for regulated workloads and one you should not handwave. Following DeepSeek-R1’s January 2025 release, multiple jurisdictions — including several US states, Australia, Taiwan, South Korea, Denmark and Italy — introduced bans or restrictions on DeepSeek apps and services, citing privacy and national security concerns. A federal US ban is not in place as of this writing, but state-level and device-level restrictions are. If you need to stay on-premises, the MIT-licensed V4 weights are on Hugging Face and you can self-host — budget serious GPU capacity for Pro. See the DeepSeek privacy guide for the full picture.

Competitor context

For head-to-head comparisons that go deeper than this review allows, see DeepSeek vs ChatGPT and the full DeepSeek reviews hub. If you are evaluating alternatives on coding specifically, the DeepSeek alternatives for coding list is the right next read.

What I’d change

Three things keep this from a higher score. First, the web chat’s DeepThink toggle is still opaque about which mode V4 is running in on a given turn. Second, the gap on HLE and factual recall is real — V4-Pro is not the right model for a pure research agent today. Third, the V4 Preview release does not include a Jinja chat template; DeepSeek ships Python encoding scripts in the repo instead, which is fine if you are on Python and awkward otherwise.

DeepSeek AI Guide is an independent resource and is not affiliated with DeepSeek or its parent company. Model IDs, pricing and API behaviour change; check the official DeepSeek documentation and pricing page before committing to a production decision.

How good is DeepSeek V4 compared to Claude and GPT-5.4?

On coding, V4-Pro is genuinely competitive: it leads Claude Opus 4.6 on Terminal-Bench 2.0, LiveCodeBench and Codeforces, and posts 80.6% on SWE-bench Verified versus Claude’s 80.8%. It trails on HLE and on factual world knowledge, where Gemini-3.1-Pro leads. See the full DeepSeek performance review for benchmark tables.

Is DeepSeek free to use?

The web chat and mobile app are free to use; the API is paid per token. DeepSeek may offer a granted balance — a small promotional credit that can expire — so check the billing console for current offers rather than assuming new-account credits. For the full breakdown of what is free versus paid, see is DeepSeek free; for the day-to-day mobile experience after months of use, the hands-on app verdict covers iOS and Android specifics.

What is the difference between V4-Pro and V4-Flash?

V4-Pro is the frontier tier (1.6T total / 49B active parameters), V4-Flash is the cost-efficient tier (284B / 13B). Both share the 1M-token context window, 384K output, thinking and non-thinking modes, JSON output and tool calls. Pro costs roughly 12× more per output token. Read the model pages for DeepSeek V4-Pro and DeepSeek V4-Flash.

Can I self-host DeepSeek V4?

Yes. Both V4 models ship as open weights on Hugging Face under the MIT license. V4-Flash at 13B active parameters is reachable on a well-equipped single server; V4-Pro needs serious multi-GPU capacity. The practical path for most teams is Ollama or a containerised deployment — see install DeepSeek locally for the walkthrough.

Does DeepSeek remember my conversation between API calls?

No. The API is stateless — your client must resend the full message history on every request. This is different from the web chat and mobile app, which maintain session history for you server-side. For the pattern, see DeepSeek API best practices.
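A minimal sketch of client-side history, assuming an OpenAI-compatible client object configured for the DeepSeek base URL. The `Conversation` class is a hypothetical wrapper of my own, not part of any SDK, and it does no trimming, so long sessions will keep growing the input token count.

```python
from typing import Any, Optional


class Conversation:
    """Keeps the message history client-side and resends it on every call."""

    def __init__(self, client: Any, model: str = "deepseek-v4-flash",
                 system: Optional[str] = None) -> None:
        self.client = client  # assumed: an OpenAI-compatible client instance
        self.model = model
        self.messages: list[dict[str, str]] = []
        if system:
            self.messages.append({"role": "system", "content": system})

    def ask(self, user_text: str) -> str:
        """Append the user turn, send the full history, and store the reply."""
        self.messages.append({"role": "user", "content": user_text})
        resp = self.client.chat.completions.create(
            model=self.model,
            messages=self.messages,  # the whole conversation, every time
        )
        answer = resp.choices[0].message.content or ""
        self.messages.append({"role": "assistant", "content": answer})
        return answer
```

Because every turn resends everything before it, a stable system prompt at the front of the history is what makes the cache-hit rate worth having.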

Sources & Methodology

Primary sources

- Official: DeepSeek API documentation. Endpoints, request/response shape, model IDs. Last checked: April 30, 2026

- Pricing: DeepSeek API pricing page. Per-1M-token rates, billing units, cache-hit pricing. Last checked: April 30, 2026

- Model card: DeepSeek on Hugging Face. Weights, license, usage notes per model. Last checked: April 30, 2026

- Pricing: Anthropic Claude pricing. Claude API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: OpenAI API pricing. OpenAI API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: Google Gemini API pricing. Gemini API rates for cross-vendor comparisons. Last checked: April 30, 2026

- Pricing: DeepSeek official Models & Pricing page. V4-Flash and V4-Pro per-million-token rates. Last checked: April 30, 2026

Technical references

- Technical report: DeepSeek-V3 technical report (arXiv:2412.19437). V3 architecture and training methodology. Last checked: April 30, 2026

- Technical report: DeepSeek-R1 paper (arXiv:2501.12948). R1 reasoning approach, distillation results. Last checked: April 30, 2026

- Benchmark: SWE-Bench Verified leaderboard. Software-engineering benchmark scores. Last checked: April 30, 2026

- Benchmark: LiveCodeBench. Live coding benchmark scores. Last checked: April 30, 2026

- Technical report: DeepSeek V4 technical report. Source of SWE-bench / Terminal-Bench / HLE numbers. Last checked: April 30, 2026

Methodology

Pricing was normalised per 1 million input and output tokens against each vendor's official pricing page on the review date. Benchmark scores were treated as directional indicators, not guarantees of real-world performance. Practical comparisons also weighed coding, reasoning, summarisation, and developer-workflow scenarios.

Data confidence

High for official pricing and feature presence; medium for cross-vendor benchmark comparisons; low for subjective workflow verdicts.

Editorial note

Pricing and model line-ups change frequently in this market. The verdicts here are calibrated for the date shown above and should be re-checked before final purchasing decisions.